一、依赖

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>org.example</groupId>

<artifactId>TestHadoop3</artifactId>

<version>1.0-SNAPSHOT</version>

<properties>

<maven.compiler.source>8</maven.compiler.source>

<maven.compiler.target>8</maven.compiler.target>

</properties>

<dependencies>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>3.2.1</version>

</dependency>

<dependency>

<groupId>ch.qos.logback</groupId>

<artifactId>logback-classic</artifactId>

<version>1.2.3</version>

</dependency>

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>5.1.47</version>

</dependency>

</dependencies>

</project>

二、数据库中建表

create table word_count(id int auto_increment primary key,word varchar(8192), count int);

三、定义实体类,实现DBWritable接口和Writable接口

package cn.edu.tju;

import org.apache.hadoop.io.Writable;

import org.apache.hadoop.mapred.lib.db.DBWritable;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

import java.sql.PreparedStatement;

import java.sql.ResultSet;

import java.sql.SQLException;

public class MyDBWritable implements DBWritable, Writable {

private String word;

private int count;

public String getWord() {

return word;

}

public void setWord(String word) {

this.word = word;

}

public int getCount() {

return count;

}

public void setCount(int count) {

this.count = count;

}

@Override

public void write(PreparedStatement statement) throws SQLException {

statement.setString(1, word);

statement.setInt(2, count);

}

@Override

public void readFields(ResultSet resultSet) throws SQLException {

word = resultSet.getString(1);

count = resultSet.getInt(2);

}

@Override

public void write(DataOutput out) throws IOException {

out.writeUTF(word);

out.writeInt(count);

}

@Override

public void readFields(DataInput in) throws IOException {

word = in.readUTF();

count = in.readInt();

}

}

四、定义mapper

package cn.edu.tju;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class WordCountMapper extends Mapper<LongWritable, Text, Text, IntWritable> {

private IntWritable one = new IntWritable(1);

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

String valueString = value.toString();

String[] values = valueString.split(" ");

for (String val : values) {

context.write(new Text(val), one);

}

}

}

五、定义reducer

package cn.edu.tju;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapred.lib.db.DBWritable;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

import java.util.Iterator;

public class WordCountReducer extends Reducer<Text, IntWritable, DBWritable, NullWritable> {

@Override

protected void reduce(Text key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {

Iterator<IntWritable> iterator = values.iterator();

int count = 0;

while (iterator.hasNext()) {

count += iterator.next().get();

}

MyDBWritable myDBWritable = new MyDBWritable();

myDBWritable.setCount(count);

myDBWritable.setWord(key.toString());

context.write(myDBWritable, NullWritable.get());

}

}

其中使用了上面定义的MyDBWritable类

六、定义主类,启动hadoop job

package cn.edu.tju;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.db.DBConfiguration;

import org.apache.hadoop.mapreduce.lib.db.DBOutputFormat;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

public class MyWordCount5 {

public static void main(String[] args) throws Exception {

Configuration configuration = new Configuration(true);

//hdfs路径

configuration.set("fs.defaultFS", "hdfs://xx.xx.xx.xx:9000/");

//yarn 运行 还是local 运行

configuration.set("mapreduce.framework.name", "local");

//configuration.set("yarn.resourcemanager.hostname", "xx.xx.xx.xx");

//configuration.set("mapreduce.job.jar","target\\TestHadoop3-1.0-SNAPSHOT.jar");

//job 创建

Job job = Job.getInstance(configuration);

//

job.setJarByClass(MyWordCount5.class);

//job name

job.setJobName("wordcount-" + System.currentTimeMillis());

//输入数据路径

FileInputFormat.setInputPaths(job, new Path("/user/root/tju/"));

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(IntWritable.class);

job.setMapperClass(WordCountMapper.class);

//job.setCombinerClass(MyCombiner.class);

job.setReducerClass(WordCountReducer.class);

//job.setOutputFormatClass(MyOutputFormat.class);

String driverClassName = "com.mysql.jdbc.Driver";

String url = "jdbc:mysql://xx.xx.xx.xx:3306/test?serverTimezone=UTC&useUnicode=true&characterEncoding=utf-8";

String username = "root";

String password = "xxxxxx";

DBConfiguration.configureDB(job.getConfiguration(), driverClassName, url,

username, password);

// 设置输出的表

DBOutputFormat.setOutput(job, "word_count", "word", "count");

//等待任务执行完成

job.waitForCompletion(true);

}

}

其中DBOutputFormat.setOutput(job, “word_count”, “word”, “count”);这句设置往数据库写数据。任务的输入数据来自hdfs.

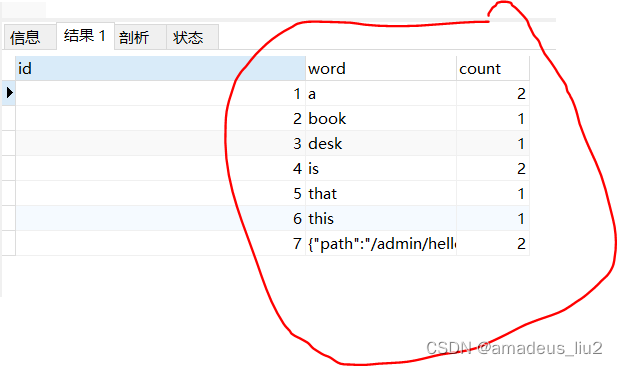

七、任务结束后在数据库中查询结果

231

231

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?