获取NBA中国官方网站中的新闻—解读:骑士三连败的原因何在?

作业要求

选一个自己感兴趣的主题。

网络上爬取相关的数据。

进行文本分析,生成词云。

对文本分析结果解释说明。

写一篇完整的博客,附上源代码、数据爬取及分析结果,形成一个可展示的成果。

1、使用360极速浏览器打开网页“http://china.nba.com/a/20171030/030246.htm”,在空白地方点击鼠标右键调出查看源代码选项。

可以通过网页源代码查看标题的代码,可以看出每条消息的标题与链接

2.直接获取新闻所有的内容

爬取到数据之后就对数据进行分析和统计,代码如下

|

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

|

import

jieba

output

=

open

(

'D:\\dd.txt'

,

'a'

,encoding

=

'utf-8'

)

txt

=

open

(

'D:\\bb.txt'

,

"r"

,encoding

=

'utf-8'

).read()

#去除一些无意义的词汇后

ex

=

{

'无非'

,

'他的'

,

'汗水'

,

'没有'

}

ls

=

[]

words

= reb

.dict(txt)

counts

=

{}

for

word

in

words:

ls.append(word)

if

len

(word)

=

=

1

or

word

in

ex:

continue

else

:

counts[word]

=

counts.get(word,

0

)

+

1

#for word in ex:

# del(word)

items

=

list

(counts.items())

items.sort(key

=

lambda

x:x[

1

], reverse

=

True

)

for

i

in

range

(

100

):

word , count

=

items[i]

print

(

"{:<10}{:>5}"

.

format

(word,count))

output.write(

"{:<10}{:>5}"

.

format

(word,count)

+

'\n'

)

output.close()

|

import requests

from bs4 import BeautifulSoup

import reb

def getHTMLText(url):

try:

r = requests.get(url, timeout = 30)

r.raise_for_status()

r.encoding = 'bg2312'

return r.text

except:

return ""

def getContent(url):#读取信息

html = getHTMLText(url)

soup = BeautifulSoup(html, "html.parser")

title = soup.select("div.hd > h1")#标题

print(title[0].get_text())

time = soup.select("div.a_Info > span.a_time")#时间

print(time[0].string)

paras = soup.select("div.Cnt-Main-Article-QQ > p.text")#内容

article = {

'Title' : title[0].get_text(),

'Time' : time[0].get_text(),

'Paragraph' : paras,

'Author' : author[0].get_text()

}

print(article)

def main():

url = "http://china.nba.com/a/20171030/030246.htm"

getContent(url);

main()

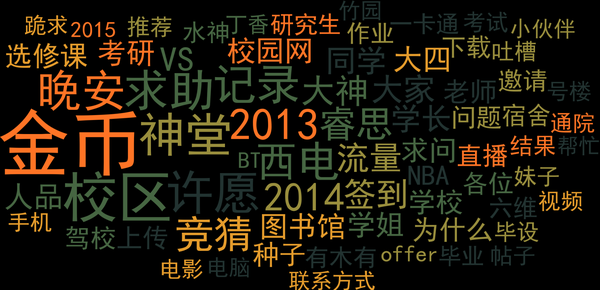

数据做成词云

|

1

2

3

4

5

6

7

8

9

10

11

12

13

14

|

#coding:utf-8

import

jieba

from

wordcloud

import

WordCloud

import

matplotlib.pyplot as plt

text

=

open

(

"D:\\cc.txt"

,

'r'

,encoding

=

'utf-8'

).read()

print

(text)

wordlist

=

jieba.cut(text,cut_all

=

True

)

wl_split

=

"/"

.join(wordlist)

mywc

=

WordCloud().generate(text)

plt.imshow(mywc)

plt.axis(

"off"

)

plt.show()

|

280

280

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?