Video Surveillance H.265 Study Notes (Part 1)


Stream pushing example

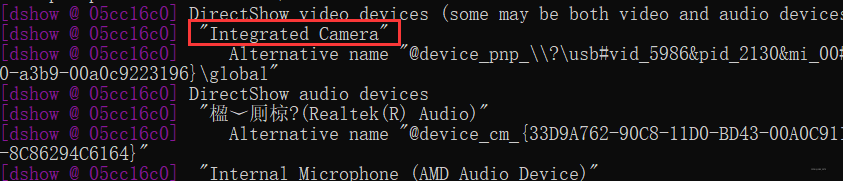

- List the capture devices:

ffmpeg -list_devices true -f dshow -i dummy

- Play the camera feed:

ffplay -f dshow -i video="Integrated Camera"

- Push the stream (the Nginx service must already be running):

ffmpeg -f dshow -i video="Integrated Camera" -vcodec libx264 -acodec aac -preset:v ultrafast -tune:v zerolatency -f flv -r 30 -g 30 rtmp://localhost:1935/live/test1

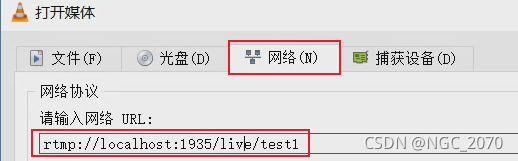

- View the stream in VLC:

- RTMP: Internet streaming

- RTSP: video surveillance (live555, easy-darwin)
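The push command above targets `rtmp://localhost:1935/live/test1`, and a later listing reads from `rtsp://127.0.0.1:8554/aabb`. Both URLs follow the same `scheme://host:port/path` shape. The sketch below splits such a URL into its parts, which can help when checking which server, port, and stream name a player such as VLC should be pointed at. `StreamUrl` and `parseStreamUrl` are hypothetical names introduced only for this illustration; they are not part of FFmpeg, Nginx, or VLC, and the parser deliberately ignores user info, IPv6 literals, and query strings.

```cpp
#include <cassert>
#include <cstddef>
#include <string>

// Hypothetical helper type for illustration; not part of FFmpeg, Nginx, or VLC.
struct StreamUrl {
    std::string scheme;  // e.g. "rtmp" or "rtsp"
    std::string host;    // e.g. "localhost"
    int port = -1;       // e.g. 1935; -1 when the URL has no explicit port
    std::string path;    // e.g. "live/test1"
};

// Splits "scheme://host[:port]/path". Returns false when the text does not
// have that shape. User info, IPv6 literals, and query strings are not handled.
bool parseStreamUrl(const std::string& url, StreamUrl& out) {
    const std::string sep = "://";
    const std::size_t schemeEnd = url.find(sep);
    if (schemeEnd == std::string::npos || schemeEnd == 0) return false;

    const std::size_t hostStart = schemeEnd + sep.size();
    const std::size_t pathStart = url.find('/', hostStart);
    if (pathStart == std::string::npos || pathStart == hostStart) return false;

    const std::string authority = url.substr(hostStart, pathStart - hostStart);
    const std::size_t colon = authority.find(':');
    int port = -1;
    if (colon != std::string::npos) {
        const std::string digits = authority.substr(colon + 1);
        if (colon == 0 || digits.empty() || digits.size() > 5) return false;
        port = 0;
        for (char c : digits) {
            if (c < '0' || c > '9') return false;
            port = port * 10 + (c - '0');
        }
        if (port > 65535) return false;
    }
    assert(port >= -1 && port <= 65535);

    out.scheme = url.substr(0, schemeEnd);
    out.host = authority.substr(0, colon);  // npos means "up to the end"
    out.port = port;
    out.path = url.substr(pathStart + 1);
    return true;
}
```

For the push URL, the parts come out as scheme `rtmp`, host `localhost`, port `1935`, and path `live/test1`.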

Summary and analysis of the key technologies in a video surveillance system

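- FFmpeg

- Encoding/decoding, muxing/demuxing, SDK, color space conversion (YUV, RGB)

- Stream pushing and pulling

- Nginx, SRS, Darwin, Live555

- Video servers

- Audio and video

- Audio, video

- Codecs

- Software encoding: x264, x265

- Hardware encoding/decoding: GPU, CUDA, DXVA2

- Streaming media

- RTSP, RTMP, HLS, HTTP-FLV; RTP/RTCP, FLV

- Demuxing

- Video surveillance

- Front end: cameras (local, IPC), encoding/decoding

- Back end: video servers, transcoding, distribution, intelligent analysis (OpenCV)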
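The list above names color space conversion between YUV and RGB as one of the FFmpeg-related topics. As a rough illustration of what that conversion involves per pixel, here is a minimal sketch that assumes limited-range BT.601 input and uses the widely quoted fixed-point coefficients scaled by 256. It is not how libswscale is implemented, and the names `Rgb`, `yuvToRgb`, and `yuv444ToRgb24` are made up for this example. Real camera frames are usually subsampled (for example YUV420P), which this sketch does not handle.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

struct Rgb {
    std::uint8_t r, g, b;
};

// Clamp an intermediate result into the 0..255 range of one byte.
static std::uint8_t clampToByte(int v) {
    return static_cast<std::uint8_t>(v < 0 ? 0 : (v > 255 ? 255 : v));
}

// Converts one limited-range BT.601 YUV sample to 8-bit RGB using
// integer coefficients scaled by 256 (assumption: Y in 16..235, U/V in 16..240).
Rgb yuvToRgb(std::uint8_t y, std::uint8_t u, std::uint8_t v) {
    const int c = static_cast<int>(y) - 16;
    const int d = static_cast<int>(u) - 128;
    const int e = static_cast<int>(v) - 128;
    Rgb out;
    out.r = clampToByte((298 * c + 409 * e + 128) / 256);
    out.g = clampToByte((298 * c - 100 * d - 208 * e + 128) / 256);
    out.b = clampToByte((298 * c + 516 * d + 128) / 256);
    return out;
}

// Converts a packed YUV444 buffer (Y, U, V bytes per pixel) to packed RGB24.
std::vector<std::uint8_t> yuv444ToRgb24(const std::vector<std::uint8_t>& yuv) {
    assert(yuv.size() % 3 == 0);  // three bytes per pixel expected
    std::vector<std::uint8_t> rgb;
    rgb.reserve(yuv.size());
    for (std::size_t i = 0; i + 2 < yuv.size(); i += 3) {
        const Rgb px = yuvToRgb(yuv[i], yuv[i + 1], yuv[i + 2]);
        rgb.push_back(px.r);
        rgb.push_back(px.g);
        rgb.push_back(px.b);
    }
    return rgb;
}
```

In an FFmpeg-based program the same job is normally delegated to libswscale, which also deals with subsampling, strides, and other matrices such as BT.709.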

Setting up the Qt + FFmpeg + SDL 2.0 environment

    INCLUDEPATH += $$PWD/../FFmpeg431dev/include
    INCLUDEPATH += $$PWD/../SDL2/include

    LIBS += $$PWD/../FFmpeg431dev/lib/avcodec.lib \
            $$PWD/../FFmpeg431dev/lib/avdevice.lib \
            $$PWD/../FFmpeg431dev/lib/avfilter.lib \
            $$PWD/../FFmpeg431dev/lib/avformat.lib \
            $$PWD/../FFmpeg431dev/lib/avutil.lib \
            $$PWD/../FFmpeg431dev/lib/postproc.lib \
            $$PWD/../FFmpeg431dev/lib/swresample.lib \
            $$PWD/../FFmpeg431dev/lib/swscale.lib

    LIBS += $$PWD/../SDL2/lib/x86/SDL2.lib
    LIBS += $$PWD/../SDL2/lib/x86/SDL2main.lib

Example:

    // Define this before any FFmpeg header; otherwise some toolchains fail with
    // "error: missing -D__STDC_CONSTANT_MACROS".
    #ifndef __STDC_CONSTANT_MACROS
    #define __STDC_CONSTANT_MACROS
    #endif

    #include <cstdio>
    #include <cstdint>
    #include <QCoreApplication>

    extern "C" {
    #include <libavcodec/avcodec.h>
    #include <libavformat/avformat.h>
    #include <libswscale/swscale.h>
    #include <libavdevice/avdevice.h>
    #include <libavformat/version.h>
    #include <libavutil/time.h>
    #include <libavutil/mathematics.h>
    #include <SDL.h>
    }

    // SDL.h redefines main as SDL_main; undo that so our own main() is used.
    #undef main

    int main(int argc, char *argv[])
    {
        QCoreApplication a(argc, argv);

        // FFmpeg demo test
        printf("hello,ffmpeg\n");
        printf("configuration:%s\n", avcodec_configuration());
        printf("version:%u\n", avcodec_version());

        // SDL demo test
        //
        // 1. Structures and API
        //    SDL_Window, SDL_Surface,
        //    SDL_CreateWindow, SDL_DestroyWindow,
        //    SDL_GetWindowSurface, SDL_UpdateWindowSurface
        //
        // 2. Workflow
        //    Init
        //    Window
        //    Surface: the canvas
        //    Draw: circle, rectangle, triangle, ellipse, ..., bmp
        //    ... business logic
        //    Destroy: release, delete, ...

        // 1. Structures: window and surface
        SDL_Window *gWindow = nullptr;
        SDL_Surface *gScreenSurface = nullptr;

        // 2. Initialize the library
        if (SDL_Init(SDL_INIT_VIDEO) < 0) {
            printf("SDL could not initialize! SDL_Error: %s\n", SDL_GetError());
            return 1;
        }

        // 3. Create the window
        gWindow = SDL_CreateWindow("SHOW BMP",
                                   SDL_WINDOWPOS_UNDEFINED, SDL_WINDOWPOS_UNDEFINED,
                                   640, 480, SDL_WINDOW_SHOWN);
        if (gWindow == nullptr) {
            printf("Window could not be created! SDL_Error: %s\n", SDL_GetError());
            SDL_Quit();
            return 1;
        }

        // 4. Get the window surface (the canvas)
        gScreenSurface = SDL_GetWindowSurface(gWindow);

        // 5. Draw: fill the whole surface with green
        SDL_FillRect(gScreenSurface, nullptr,
                     SDL_MapRGB(gScreenSurface->format, 0x00, 0xFF, 0x00));

        // 6. Update the window and keep it visible for two seconds
        SDL_UpdateWindowSurface(gWindow);
        SDL_Delay(2000);

        // 7. Destroy. The window surface is owned by the window and is released
        //    by SDL_DestroyWindow, so it must not be passed to SDL_FreeSurface.
        gScreenSurface = nullptr;
        SDL_DestroyWindow(gWindow);
        gWindow = nullptr;
        SDL_Quit();

        // Enters the Qt event loop; the process keeps running until it is quit.
        return a.exec();
    }

Printing media file information

    #include <stdio.h>

    extern "C" {
    #include <libavformat/avformat.h>
    }

    /**
     * @brief Convert an AVRational fraction to a double.
     * @param r  An AVRational: num is the numerator and den the denominator, so
     *           its value is (double)r.num / (double)r.den. Storing time bases
     *           as fractions avoids losing precision.
     * @return 0 if r.den is 0 (guards against division by zero); otherwise
     *         (double)r.num / (double)r.den.
     */
    static double r2d(AVRational r)
    {
        return r.den == 0 ? 0 : (double)r.num / (double)r.den;
    }

    int main()
    {
        // Local file alternative. The file sits in the project directory, so a
        // relative path is enough:
        // const char *path = "ande_302.mp4";
        // const char *path = "audio1.mp3";

        // av_register_all() is deprecated since FFmpeg 4.0 and no longer needed.
        // Only older versions required it before avformat_open_input could work.

        // Read a network stream over RTSP.
        const char *path = "rtsp://127.0.0.1:8554/aabb";

        // Initialize the network layer. Not needed when only local files are read.
        avformat_network_init();

        AVDictionary *opts = NULL; // key/value options for the demuxer

        // AVFormatContext describes the structure and basic information of a
        // media file or stream.
        AVFormatContext *ic = NULL;

        // Open the input. On success the function allocates the context and
        // makes ic point to it.
        int re = avformat_open_input(&ic, path, NULL, &opts);
        if (re != 0) {
            // Opening failed: print the reason.
            char buf[1024] = { 0 };
            av_strerror(re, buf, sizeof(buf) - 1);
            printf("open %s failed!:%s\n", path, buf);
        } else {
            printf("Opened %s successfully.\n", path);

            // avformat_open_input alone does not yield complete stream parameters.
            // avformat_find_stream_info reads some data and fills in the rest of ic.
            if (avformat_find_stream_info(ic, NULL) < 0) {
                printf("avformat_find_stream_info failed; some fields may be missing\n");
            }

            // Path or URL that was passed to avformat_open_input.
            printf("media name: %s\n", ic->url);

            // Number of streams: 2 for a file with one audio and one video
            // stream, 1 for an audio-only file.
            printf("number of streams: %u\n", ic->nb_streams);

            // Average bitrate of the whole file, in bps.
            printf("average bitrate: %lld bps\n", (long long)ic->bit_rate);

            // ic->duration is expressed in AV_TIME_BASE units (1000000 per
            // second, i.e. microseconds). It is AV_NOPTS_VALUE when unknown,
            // which is common for live streams.
            printf("duration: %lld\n", (long long)ic->duration);
            if (ic->duration != AV_NOPTS_VALUE) {
                int tns, thh, tmm, tss;
                tns = (int)(ic->duration / AV_TIME_BASE);
                thh = tns / 3600;
                tmm = (tns % 3600) / 60;
                tss = tns % 60;
                printf("total duration: %d h %d min %d s\n", thh, tmm, tss);
            }
            printf("\n");

            // Walk over all streams and print audio and video information.
            // av_find_best_stream achieves the same result; the loop is kept
            // here for compatibility with older FFmpeg versions.
            for (unsigned int i = 0; i < ic->nb_streams; i++) {
                AVStream *as = ic->streams[i];

                if (AVMEDIA_TYPE_AUDIO == as->codecpar->codec_type) {
                    printf("Audio info:\n");
                    // In a file with both audio and video the audio index is
                    // usually 1, but that is not guaranteed, so always check
                    // codec_type.
                    printf("index: %d\n", as->index);
                    printf("sample rate: %d Hz\n", as->codecpar->sample_rate);

                    // Sample format
                    if (AV_SAMPLE_FMT_FLTP == as->codecpar->format) {
                        printf("sample format: AV_SAMPLE_FMT_FLTP\n");
                    } else if (AV_SAMPLE_FMT_S16P == as->codecpar->format) {
                        printf("sample format: AV_SAMPLE_FMT_S16P\n");
                    }

                    printf("channels: %d\n", as->codecpar->channels);

                    // Compression format
                    if (AV_CODEC_ID_AAC == as->codecpar->codec_id) {
                        printf("audio codec: AAC\n");
                    } else if (AV_CODEC_ID_MP3 == as->codecpar->codec_id) {
                        printf("audio codec: MP3\n");
                    }

                    // Audio duration in seconds. At millisecond or microsecond
                    // resolution the audio and video durations may differ.
                    if (as->duration != AV_NOPTS_VALUE) {
                        int DurationAudio = (int)(as->duration * r2d(as->time_base));
                        printf("audio duration: %d h %d min %d s\n",
                               DurationAudio / 3600,
                               (DurationAudio % 3600) / 60,
                               DurationAudio % 60);
                    }
                    printf("\n");
                } else if (AVMEDIA_TYPE_VIDEO == as->codecpar->codec_type) {
                    printf("Video info:\n");
                    // The video index is usually 0, but again this is not guaranteed.
                    printf("index: %d\n", as->index);

                    // Average frame rate in frames per second.
                    printf("frame rate: %lf fps\n", r2d(as->avg_frame_rate));

                    // Compression format
                    if (AV_CODEC_ID_MPEG4 == as->codecpar->codec_id) {
                        printf("video codec: MPEG4\n");
                    }

                    printf("width: %d height: %d\n",
                           as->codecpar->width, as->codecpar->height);

                    // Video duration in seconds.
                    if (as->duration != AV_NOPTS_VALUE) {
                        int DurationVideo = (int)(as->duration * r2d(as->time_base));
                        printf("video duration: %d h %d min %d s\n",
                               DurationVideo / 3600,
                               (DurationVideo % 3600) / 60,
                               DurationVideo % 60);
                    }
                    printf("\n");
                }
            }

            // av_dump_format(ic, 0, path, 0);
        }

        if (ic) {
            // Closes the AVFormatContext; pairs with avformat_open_input().
            avformat_close_input(&ic);
        }
        av_dict_free(&opts);
        avformat_network_deinit();

        getchar(); // keep the console open after the output has been printed
        return 0;
    }
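The listing above turns the container duration, which FFmpeg reports in `AV_TIME_BASE` units (1000000 per second), into hours, minutes, and seconds. The same arithmetic can be tried without FFmpeg. The sketch below is only an illustration: `kMicrosPerSecond` mirrors the value of `AV_TIME_BASE`, `formatDuration` is a hypothetical helper name, and treating every negative value as "unknown" is an assumption made here to cover the case where the duration is not available.

```cpp
#include <cassert>
#include <cstdint>
#include <cstdio>
#include <string>

// Same value as FFmpeg's AV_TIME_BASE: durations are counted in microseconds.
const std::int64_t kMicrosPerSecond = 1000000;

// Formats a duration given in microseconds as "HH:MM:SS".
// Negative input is reported as "unknown" (assumption for this sketch).
std::string formatDuration(std::int64_t durationUs) {
    if (durationUs < 0) return "unknown";

    const long long totalSeconds = durationUs / kMicrosPerSecond;
    const long long hours = totalSeconds / 3600;
    const int minutes = static_cast<int>((totalSeconds % 3600) / 60);
    const int seconds = static_cast<int>(totalSeconds % 60);

    char buf[32];
    const int written = std::snprintf(buf, sizeof(buf), "%02lld:%02d:%02d",
                                      hours, minutes, seconds);
    assert(written > 0 && written < static_cast<int>(sizeof(buf)));
    (void)written;  // silence the unused warning when asserts are disabled
    return std::string(buf);
}
```

A duration of 3723000000 microseconds is 3723 seconds, which this helper renders as `01:02:03`.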

    #include <stdio.h>

    extern "C" {
    #include <libavformat/avformat.h>
    }

    int main()
    {
        // Workflow:
        // 1. avformat_open_input
        // 2. avformat_find_stream_info
        // 3. av_find_best_stream: returns the stream index for audio, video, or subtitles
        // 4. read packets: while (1) { av_read_frame(...) }
        // 5. decoding: ...

        // 1. Open the file
        const char *ifilename = "ande.mp4";
        printf("in_filename = %s\n", ifilename);

        avformat_network_init();

        // AVFormatContext describes the structure and basic information of a
        // media file or stream. This one is the demuxer context for the input.
        AVFormatContext *ifmt_ctx = NULL;

        // Open the input. This mainly probes the protocol and format; for a
        // network source it also creates the connection.
        int ret = avformat_open_input(&ifmt_ctx, ifilename, NULL, NULL);
        if (ret < 0) {
            char buf[1024] = { 0 };
            av_strerror(ret, buf, sizeof(buf) - 1);
            printf("open %s failed: %s\n", ifilename, buf);
            return -1;
        }

        // 2. Read stream information
        ret = avformat_find_stream_info(ifmt_ctx, NULL);
        if (ret < 0) {
            char buf[1024] = { 0 };
            av_strerror(ret, buf, sizeof(buf) - 1);
            printf("avformat_find_stream_info %s failed:%s\n", ifilename, buf);
            avformat_close_input(&ifmt_ctx);
            return -1;
        }

        // 3. Print overall information
        printf("\n==== av_dump_format in_filename:%s ===\n", ifilename);
        av_dump_format(ifmt_ctx, 0, ifilename, 0);
        printf("\n==== av_dump_format finish =======\n\n");

        printf("media name:%s\n", ifmt_ctx->url);
        printf("stream number:%u\n", ifmt_ctx->nb_streams); // number of streams
        // bit_rate is in bps; divide by 1000 to get kbps.
        printf("media average bitrate:%lldkbps\n", (long long)(ifmt_ctx->bit_rate / 1000));

        // duration is in microseconds (AV_TIME_BASE units):
        // 1000 us = 1 ms, 1000 ms = 1 s.
        int total_seconds = (int)(ifmt_ctx->duration / AV_TIME_BASE);
        printf("total duration: %02d:%02d:%02d\n",
               total_seconds / 3600, (total_seconds % 3600) / 60, total_seconds % 60);
        printf("\n");

        // 4. Read per-stream information
        // Audio
        int audioindex = av_find_best_stream(ifmt_ctx, AVMEDIA_TYPE_AUDIO, -1, -1, NULL, 0);
        if (audioindex < 0) {
            printf("av_find_best_stream %s error.\n",
                   av_get_media_type_string(AVMEDIA_TYPE_AUDIO));
            avformat_close_input(&ifmt_ctx);
            return -1;
        }
        AVStream *audio_stream = ifmt_ctx->streams[audioindex];
        printf("----- Audio info:\n");
        printf("index: %d\n", audio_stream->index);
        printf("samplerate: %d Hz\n", audio_stream->codecpar->sample_rate);
        // Sample format, e.g. AV_SAMPLE_FMT_FLTP: 8
        printf("sampleformat: %d\n", audio_stream->codecpar->format);
        // Codec, e.g. AV_CODEC_ID_MP3: 86017, AV_CODEC_ID_AAC: 86018
        printf("audio codec: %d\n", audio_stream->codecpar->codec_id);
        if (audio_stream->duration != AV_NOPTS_VALUE) {
            int audio_duration = (int)(audio_stream->duration * av_q2d(audio_stream->time_base));
            printf("audio duration: %02d:%02d:%02d\n",
                   audio_duration / 3600, (audio_duration % 3600) / 60, audio_duration % 60);
        }

        // Video
        int videoindex = av_find_best_stream(ifmt_ctx, AVMEDIA_TYPE_VIDEO, -1, -1, NULL, 0);
        if (videoindex < 0) {
            printf("av_find_best_stream %s error.\n",
                   av_get_media_type_string(AVMEDIA_TYPE_VIDEO));
            avformat_close_input(&ifmt_ctx);
            return -1;
        }
        AVStream *video_stream = ifmt_ctx->streams[videoindex];
        printf("----- Video info:\n");
        printf("index: %d\n", video_stream->index);
        printf("fps: %lf\n", av_q2d(video_stream->avg_frame_rate));
        printf("width: %d, height:%d \n",
               video_stream->codecpar->width, video_stream->codecpar->height);
        // Codec, e.g. AV_CODEC_ID_H264: 27
        printf("video codec: %d\n", video_stream->codecpar->codec_id);
        if (video_stream->duration != AV_NOPTS_VALUE) {
            int video_duration = (int)(video_stream->duration * av_q2d(video_stream->time_base));
            printf("video duration: %02d:%02d:%02d\n",
                   video_duration / 3600, (video_duration % 3600) / 60, video_duration % 60);
        }

        // 5. Extract packets
        AVPacket *pkt = av_packet_alloc();
        if (!pkt) {
            avformat_close_input(&ifmt_ctx);
            return -1;
        }
        int pkt_count = 0;
        int print_max_count = 100; // only print the first 100 packets
        printf("\n-----av_read_frame start\n");
        while (1) {
            ret = av_read_frame(ifmt_ctx, pkt);
            if (ret < 0) {
                printf("av_read_frame end\n");
                break;
            }

            if (pkt_count++ < print_max_count) {
                if (pkt->stream_index == audioindex) {
                    printf("audio pts: %lld\n", (long long)pkt->pts);
                    printf("audio dts: %lld\n", (long long)pkt->dts);
                    printf("audio size: %d\n", pkt->size);
                    printf("audio pos: %lld\n", (long long)pkt->pos);
                    printf("audio duration: %lf\n\n",
                           pkt->duration * av_q2d(ifmt_ctx->streams[audioindex]->time_base));
                } else if (pkt->stream_index == videoindex) {
                    printf("video pts: %lld\n", (long long)pkt->pts);
                    printf("video dts: %lld\n", (long long)pkt->dts);
                    printf("video size: %d\n", pkt->size);
                    printf("video pos: %lld\n", (long long)pkt->pos);
                    printf("video duration: %lf\n\n",
                           pkt->duration * av_q2d(ifmt_ctx->streams[videoindex]->time_base));
                } else {
                    printf("unknown stream_index: %d\n", pkt->stream_index);
                }
            }

            av_packet_unref(pkt);
        }

        // 6. Clean up
        if (pkt)
            av_packet_free(&pkt);
        if (ifmt_ctx)
            avformat_close_input(&ifmt_ctx);
        avformat_network_deinit();

        getchar(); // keep the console open after the output has been printed
        return 0;
    }
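The packet loop above prints raw `pts` and `dts` values and multiplies `pkt->duration` by the stream time base to get seconds. Those timestamps only have meaning relative to that time base. A common choice is 1/90000, but that figure is an assumption here, not a value taken from the listing. The sketch below reproduces the arithmetic without FFmpeg: `Rational` is a hypothetical stand-in for `AVRational`, `ptsToSeconds` plays the role of multiplying by `av_q2d(time_base)`, and `rescaleTimestamp` is a simplified illustration of moving a timestamp between two time bases. It rounds to nearest and does not guard against overflow the way FFmpeg's own `av_rescale_q` does.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical stand-in for FFmpeg's AVRational (numerator / denominator).
struct Rational {
    int num;
    int den;
};

// Converts a timestamp counted in timeBase units into seconds.
// Returns 0 for a zero denominator instead of dividing by zero.
double ptsToSeconds(std::int64_t pts, Rational timeBase) {
    if (timeBase.den == 0) return 0.0;
    return static_cast<double>(pts) * timeBase.num / timeBase.den;
}

// Re-expresses a timestamp from one time base in another, rounding to the
// nearest integer. Simplified: no overflow protection for very large inputs.
std::int64_t rescaleTimestamp(std::int64_t ts, Rational from, Rational to) {
    assert(from.num > 0 && from.den > 0 && to.num > 0 && to.den > 0);
    const std::int64_t numerator = ts * from.num * to.den;
    const std::int64_t denominator = static_cast<std::int64_t>(from.den) * to.num;
    const std::int64_t half = denominator / 2;
    return numerator >= 0 ? (numerator + half) / denominator
                          : (numerator - half) / denominator;
}
```

With a 1/90000 time base, a `pts` of 180000 corresponds to 2 seconds, and rescaling it to a 1/1000 (millisecond) time base gives 2000.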

Decoding

    #include <stdio.h>
    #include <stdlib.h>

    #include <libavutil/imgutils.h>
    #include <libavutil/samplefmt.h>
    #include <libavutil/timestamp.h>
    #include <libavcodec/avcodec.h>
    #include <libavformat/avformat.h>
    #include "libavutil/pixfmt.h"
    #include "libavutil/rational.h"
    #include "ffmpeg_dxva2.h"

    static AVFormatContext *fmt_ctx = NULL;
    static AVCodecContext *video_dec_ctx = NULL, *audio_dec_ctx = NULL;
    static int width, height;
    static enum AVPixelFormat pix_fmt;
    static AVStream *video_stream = NULL, *audio_stream = NULL;
    static const char *src_filename = NULL;
    static const char *video_dst_filename = NULL;
    static const char *audio_dst_filename = NULL;
    static FILE *video_dst_file = NULL;
    static FILE *audio_dst_file = NULL;

    static uint8_t *video_dst_data[4] = {NULL};
    static int video_dst_linesize[4];
    static int video_dst_bufsize;

    static int video_stream_idx = -1, audio_stream_idx = -1;
    static AVFrame *frame = NULL;
    static AVPacket pkt;
    static int video_frame_count = 0;
    static int audio_frame_count = 0;

    static int output_video_frame(AVFrame *frame)
    {
        /* Note: when DXVA2 decoding is active, decoded frames typically arrive
         * as AV_PIX_FMT_DXVA2_VLD surfaces and have to be copied back to system
         * memory before they can be written out. That step is not shown here. */
        if (frame->width != width || frame->height != height ||
            frame->format != pix_fmt) {
            /* To handle this change, one could call av_image_alloc again and
             * decode the following frames into another rawvideo file. */
            fprintf(stderr, "Error: Width, height and pixel format have to be "
                    "constant in a rawvideo file, but the width, height or "
                    "pixel format of the input video changed:\n"
                    "old: width = %d, height = %d, format = %s\n"
                    "new: width = %d, height = %d, format = %s\n",
                    width, height, av_get_pix_fmt_name(pix_fmt),
                    frame->width, frame->height,
                    av_get_pix_fmt_name(frame->format));
            return -1;
        }

        printf("video_frame n:%d coded_n:%d\n",
               video_frame_count++, frame->coded_picture_number);

        /* copy decoded frame to destination buffer:
         * this is required since rawvideo expects non aligned data */
        av_image_copy(video_dst_data, video_dst_linesize,
                      (const uint8_t **)(frame->data), frame->linesize,
                      pix_fmt, width, height);

        /* write to rawvideo file */
        fwrite(video_dst_data[0], 1, video_dst_bufsize, video_dst_file);
        return 0;
    }

    static int output_audio_frame(AVFrame *frame)
    {
        size_t unpadded_linesize = frame->nb_samples * av_get_bytes_per_sample(frame->format);
        printf("audio_frame n:%d nb_samples:%d pts:%s\n",
               audio_frame_count++, frame->nb_samples,
               av_ts2timestr(frame->pts, &audio_dec_ctx->time_base));

        /* Write the raw audio data samples of the first plane. This works
         * fine for packed formats (e.g. AV_SAMPLE_FMT_S16). However,
         * most audio decoders output planar audio, which uses a separate
         * plane of audio samples for each channel (e.g. AV_SAMPLE_FMT_S16P).
         * In other words, this code will write only the first audio channel
         * in these cases.
         * You should use libswresample or libavfilter to convert the frame
         * to packed data. */
        fwrite(frame->extended_data[0], 1, unpadded_linesize, audio_dst_file);

        return 0;
    }

    static int decode_packet(AVCodecContext *dec, const AVPacket *pkt)
    {
        int ret = 0;

        // submit the packet to the decoder
        ret = avcodec_send_packet(dec, pkt);
        if (ret < 0) {
            fprintf(stderr, "Error submitting a packet for decoding (%s)\n", av_err2str(ret));
            return ret;
        }

        // get all the available frames from the decoder
        while (ret >= 0) {
            ret = avcodec_receive_frame(dec, frame);
            if (ret < 0) {
                // those two return values are special and mean there is no output
                // frame available, but there were no errors during decoding
                if (ret == AVERROR_EOF || ret == AVERROR(EAGAIN))
                    return 0;

                fprintf(stderr, "Error during decoding (%s)\n", av_err2str(ret));
                return ret;
            }

            // write the frame data to output file
            if (dec->codec->type == AVMEDIA_TYPE_VIDEO)
                ret = output_video_frame(frame);
            else
                ret = output_audio_frame(frame);

            av_frame_unref(frame);
            if (ret < 0)
                return ret;
        }

        return 0;
    }

    /* get_format callback: always select the DXVA2 hardware pixel format. */
    enum AVPixelFormat GetHwFormat(AVCodecContext *s, const enum AVPixelFormat *pix_fmts)
    {
        InputStream *ist = (InputStream *)s->opaque;
        ist->active_hwaccel_id = HWACCEL_DXVA2;
        ist->hwaccel_pix_fmt = AV_PIX_FMT_DXVA2_VLD;
        return ist->hwaccel_pix_fmt;
    }

    static int open_codec_context(int *stream_idx,
                                  AVCodecContext **dec_ctx, AVFormatContext *fmt_ctx,
                                  enum AVMediaType type)
    {
        int ret, stream_index;
        AVStream *st;
        AVCodec *dec = NULL;
        AVDictionary *opts = NULL;

        ret = av_find_best_stream(fmt_ctx, type, -1, -1, NULL, 0);
        if (ret < 0) {
            fprintf(stderr, "Could not find %s stream in input file '%s'\n",
                    av_get_media_type_string(type), src_filename);
            return ret;
        } else {
            stream_index = ret;
            st = fmt_ctx->streams[stream_index];

            /* find decoder for the stream */
            dec = avcodec_find_decoder(st->codecpar->codec_id);
            if (!dec) {
                fprintf(stderr, "Failed to find %s codec\n",
                        av_get_media_type_string(type));
                return AVERROR(EINVAL);
            }

            /* Allocate a codec context for the decoder */
            *dec_ctx = avcodec_alloc_context3(dec);
            if (!*dec_ctx) {
                fprintf(stderr, "Failed to allocate the %s codec context\n",
                        av_get_media_type_string(type));
                return AVERROR(ENOMEM);
            }

            /* Copy codec parameters from input stream to output codec context */
            if ((ret = avcodec_parameters_to_context(*dec_ctx, st->codecpar)) < 0) {
                fprintf(stderr, "Failed to copy %s codec parameters to decoder context\n",
                        av_get_media_type_string(type));
                return ret;
            }

            /* Try DXVA2 hardware decoding for the codecs that support it */
            if ((*dec_ctx)->codec_type == AVMEDIA_TYPE_VIDEO) {
                switch (dec->id) {
                case AV_CODEC_ID_MPEG2VIDEO:
                case AV_CODEC_ID_H264:
                case AV_CODEC_ID_VC1:
                case AV_CODEC_ID_WMV3:
                case AV_CODEC_ID_HEVC:
                case AV_CODEC_ID_VP9: {
                    // Multithreading is apparently not compatible with hardware decoding
                    (*dec_ctx)->thread_count = 1;

                    /* zero-initialized so that unset fields are not garbage */
                    InputStream *ist = calloc(1, sizeof(*ist));
                    if (!ist)
                        return AVERROR(ENOMEM);
                    ist->hwaccel_id = HWACCEL_AUTO;
                    ist->active_hwaccel_id = HWACCEL_AUTO;
                    ist->hwaccel_device = "dxva2";
                    ist->dec = dec;
                    ist->dec_ctx = (*dec_ctx);

                    (*dec_ctx)->opaque = ist;
                    if (dxva2_init((*dec_ctx)) == 0) {
                        (*dec_ctx)->get_buffer2 = ist->hwaccel_get_buffer;
                        (*dec_ctx)->get_format = GetHwFormat;
                        (*dec_ctx)->thread_safe_callbacks = 1;
                    }
                    break;
                }
                default:
                    break;
                }
            }

            /* Init the decoders */
            if ((ret = avcodec_open2(*dec_ctx, dec, &opts)) < 0) {
                fprintf(stderr, "Failed to open %s codec\n",
                        av_get_media_type_string(type));
                return ret;
            }
            *stream_idx = stream_index;
        }

        return 0;
    }

    static int get_format_from_sample_fmt(const char **fmt,
                                          enum AVSampleFormat sample_fmt)
    {
        int i;
        struct sample_fmt_entry {
            enum AVSampleFormat sample_fmt; const char *fmt_be, *fmt_le;
        } sample_fmt_entries[] = {
            { AV_SAMPLE_FMT_U8,  "u8",    "u8"    },
            { AV_SAMPLE_FMT_S16, "s16be", "s16le" },
            { AV_SAMPLE_FMT_S32, "s32be", "s32le" },
            { AV_SAMPLE_FMT_FLT, "f32be", "f32le" },
            { AV_SAMPLE_FMT_DBL, "f64be", "f64le" },
        };
        *fmt = NULL;

        for (i = 0; i < FF_ARRAY_ELEMS(sample_fmt_entries); i++) {
            struct sample_fmt_entry *entry = &sample_fmt_entries[i];
            if (sample_fmt == entry->sample_fmt) {
                *fmt = AV_NE(entry->fmt_be, entry->fmt_le);
                return 0;
            }
        }

        fprintf(stderr,
                "sample format %s is not supported as output format\n",
                av_get_sample_fmt_name(sample_fmt));
        return -1;
    }

    int main(int argc, char **argv)
    {
        int ret = 0;

        if (argc != 4) {
            fprintf(stderr, "usage: %s input_file video_output_file audio_output_file\n"
                    "API example program to show how to read frames from an input file.\n"
                    "This program reads frames from a file, decodes them, and writes decoded\n"
                    "video frames to a rawvideo file named video_output_file, and decoded\n"
                    "audio frames to a rawaudio file named audio_output_file.\n",
                    argv[0]);
            exit(1);
        }
        src_filename = argv[1];
        video_dst_filename = argv[2];
        audio_dst_filename = argv[3];

        /* open input file, and allocate format context */
        if (avformat_open_input(&fmt_ctx, src_filename, NULL, NULL) < 0) {
            fprintf(stderr, "Could not open source file %s\n", src_filename);
            exit(1);
        }

        /* retrieve stream information */
        if (avformat_find_stream_info(fmt_ctx, NULL) < 0) {
            fprintf(stderr, "Could not find stream information\n");
            exit(1);
        }

        if (open_codec_context(&video_stream_idx, &video_dec_ctx, fmt_ctx, AVMEDIA_TYPE_VIDEO) >= 0) {
            video_stream = fmt_ctx->streams[video_stream_idx];

            video_dst_file = fopen(video_dst_filename, "wb");
            if (!video_dst_file) {
                fprintf(stderr, "Could not open destination file %s\n", video_dst_filename);
                ret = 1;
                goto end;
            }

            /* allocate image where the decoded image will be put */
            width = video_dec_ctx->width;
            height = video_dec_ctx->height;
            pix_fmt = video_dec_ctx->pix_fmt;
            ret = av_image_alloc(video_dst_data, video_dst_linesize,
                                 width, height, pix_fmt, 1);
            if (ret < 0) {
                fprintf(stderr, "Could not allocate raw video buffer\n");
                goto end;
            }
            video_dst_bufsize = ret;
        }

        if (open_codec_context(&audio_stream_idx, &audio_dec_ctx, fmt_ctx, AVMEDIA_TYPE_AUDIO) >= 0) {
            audio_stream = fmt_ctx->streams[audio_stream_idx];
            audio_dst_file = fopen(audio_dst_filename, "wb");
            if (!audio_dst_file) {
                fprintf(stderr, "Could not open destination file %s\n", audio_dst_filename);
                ret = 1;
                goto end;
            }
        }

        /* dump input information to stderr */
        av_dump_format(fmt_ctx, 0, src_filename, 0);

        if (!audio_stream && !video_stream) {
            fprintf(stderr, "Could not find audio or video stream in the input, aborting\n");
            ret = 1;
            goto end;
        }

        frame = av_frame_alloc();
        if (!frame) {
            fprintf(stderr, "Could not allocate frame\n");
            ret = AVERROR(ENOMEM);
            goto end;
        }

        /* initialize packet, set data to NULL, let the demuxer fill it */
        av_init_packet(&pkt);
        pkt.data = NULL;
        pkt.size = 0;

        if (video_stream)
            printf("Demuxing video from file '%s' into '%s'\n", src_filename, video_dst_filename);
        if (audio_stream)
            printf("Demuxing audio from file '%s' into '%s'\n", src_filename, audio_dst_filename);

        /* read frames from the file */
        while (av_read_frame(fmt_ctx, &pkt) >= 0) {
            // check if the packet belongs to a stream we are interested in, otherwise
            // skip it
            if (pkt.stream_index == video_stream_idx)
                ret = decode_packet(video_dec_ctx, &pkt);
            else if (pkt.stream_index == audio_stream_idx)
                ret = decode_packet(audio_dec_ctx, &pkt);
            av_packet_unref(&pkt);
            if (ret < 0)
                break;
        }

        /* flush the decoders */
        if (video_dec_ctx)
            decode_packet(video_dec_ctx, NULL);
        if (audio_dec_ctx)
            decode_packet(audio_dec_ctx, NULL);

        printf("Demuxing succeeded.\n");

        if (video_stream) {
            printf("Play the output video file with the command:\n"
                   "ffplay -f rawvideo -pix_fmt %s -video_size %dx%d %s\n",
                   av_get_pix_fmt_name(pix_fmt), width, height,
                   video_dst_filename);
        }

        if (audio_stream) {
            enum AVSampleFormat sfmt = audio_dec_ctx->sample_fmt;
            int n_channels = audio_dec_ctx->channels;
            const char *fmt;

            if (av_sample_fmt_is_planar(sfmt)) {
                const char *packed = av_get_sample_fmt_name(sfmt);
                printf("Warning: the sample format the decoder produced is planar "
                       "(%s). This example will output the first channel only.\n",
                       packed ? packed : "?");
                sfmt = av_get_packed_sample_fmt(sfmt);
                n_channels = 1;
            }

            if ((ret = get_format_from_sample_fmt(&fmt, sfmt)) < 0)
                goto end;

            printf("Play the output audio file with the command:\n"
                   "ffplay -f %s -ac %d -ar %d %s\n",
                   fmt, n_channels, audio_dec_ctx->sample_rate,
                   audio_dst_filename);
        }

    end:
        avcodec_free_context(&video_dec_ctx);
        avcodec_free_context(&audio_dec_ctx);
        avformat_close_input(&fmt_ctx);
        if (video_dst_file)
            fclose(video_dst_file);
        if (audio_dst_file)
            fclose(audio_dst_file);
        av_frame_free(&frame);
        av_free(video_dst_data[0]);

        return ret < 0;
    }
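The comments in `output_audio_frame` point out that planar audio keeps one plane per channel, so writing only the first plane drops every other channel, and that the frame should be converted to packed data. To make the difference concrete, the sketch below interleaves planar 16-bit samples into packed order by hand. It only illustrates the memory layout and is not a replacement for libswresample; `interleavePlanar` is a hypothetical helper, and the planes are plain `std::vector`s rather than an `AVFrame`.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Interleaves planar samples (one vector per channel, as in AV_SAMPLE_FMT_S16P)
// into packed order (as in AV_SAMPLE_FMT_S16): L0 R0 L1 R1 ...
// All planes must hold the same number of samples.
std::vector<std::int16_t> interleavePlanar(
        const std::vector<std::vector<std::int16_t>>& planes) {
    if (planes.empty()) return {};

    const std::size_t samples = planes[0].size();
    for (const auto& plane : planes) {
        assert(plane.size() == samples);
        (void)plane;  // silence the unused warning when asserts are disabled
    }

    std::vector<std::int16_t> packed;
    packed.reserve(samples * planes.size());
    for (std::size_t i = 0; i < samples; ++i) {
        for (std::size_t ch = 0; ch < planes.size(); ++ch) {
            packed.push_back(planes[ch][i]);
        }
    }
    return packed;
}
```

For two channels holding `{1, 2, 3}` and `{10, 20, 30}`, the packed result is `{1, 10, 2, 20, 3, 30}`. A file written this way can be played as raw `s16le` audio with the real channel count instead of `-ac 1`.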

- Reference: the course by instructor Mei Huidong (梅会东): https://edu.csdn.net/learn/31691
