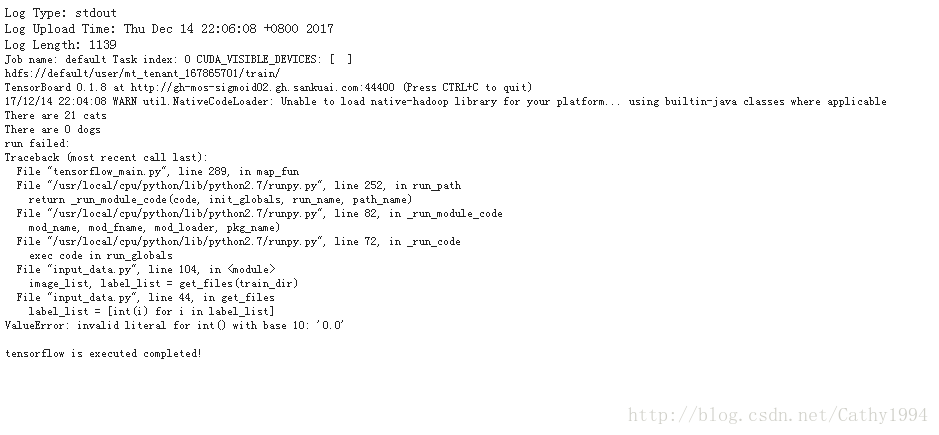

Error: "invalid literal for int() with base 10: '0.0'", as shown in the figure below.

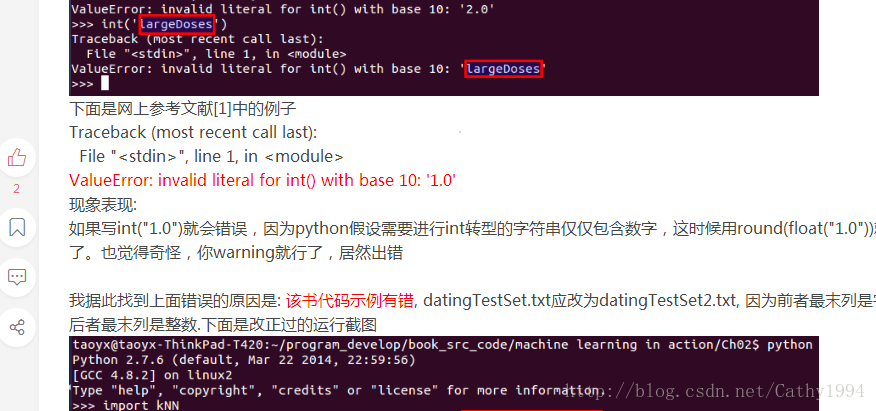

The fix I found by searching online is shown in the figure below:
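
A commonly suggested workaround for this error is to parse the string as a float before converting it to an integer, since `int()` rejects any string that contains a decimal point. A minimal sketch, assuming the labels arrive as strings such as `'0.0'`; the helper name `to_int_label` is hypothetical and not part of the tutorial code:

```python
def to_int_label(raw):
    """Convert a label such as '0', '1.0' or 0.0 to a Python int."""
    # int('0.0') raises ValueError, so go through float first.
    return int(float(raw))


# Reproduce the original failure outside TensorFlow.
try:
    int('0.0')
except ValueError as err:
    print('int() fails:', err)

# The two-step conversion accepts the same input.
print(to_int_label('0.0'), to_int_label('1.0'))
```

This only works cleanly because the labels are whole numbers; a value such as `'0.7'` would be silently truncated to 0.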

Below is the complete code (written by following a video series on Youku; playlist URL: http://list.youku.com/albumlist/show/id_49381710.html?spm=a2h0j.8191423.Drama.5~5~H3~A):

input_data.py

#!/usr/bin/python
# -*- coding: utf-8 -*-

import tensorflow as tf
import numpy as np
import os
import argparse

#%%
img_width = 208
img_height = 208

#%%

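def get_files(file_dir):
    '''
    Args:
        file_dir: file directory
    Returns:
        list of images and labels
    '''
    cats = []
    label_cats = []
    dogs = []
    label_dogs = []
    for file in tf.gfile.ListDirectory(file_dir):
        # The part of the file name before the first '.' is used as the class.
        name = file.split('.')
        if name[0] == 'cat':
            # os.path.join works whether or not file_dir ends with a separator.
            cats.append(os.path.join(file_dir, file))
            label_cats.append(0)
        else:
            dogs.append(os.path.join(file_dir, file))
            label_dogs.append(1)
    print('There are %d cats\nThere are %d dogs' % (len(cats), len(dogs)))

    image_list = np.hstack((cats, dogs))
    label_list = np.hstack((label_cats, label_dogs))

    # Paths and labels share one array here, so the labels are stored as strings.
    temp = np.array([image_list, label_list])
    temp = temp.transpose()
    # Shuffle once here so the batches do not need to be shuffled again later.
    np.random.shuffle(temp)

    image_list = list(temp[:, 0])
    label_list = list(temp[:, 1])
    # Labels can come back as '0.0' / '1.0'; int('0.0') raises ValueError,
    # so parse as float first and then convert to int.
    label_list = [int(round(float(i))) for i in label_list]
    return image_list, label_list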

#%%

def get_batch(image, label, image_W, image_H, batch_size, capacity):
    '''
    Args:
        image: list type
        label: list type
        image_W: image width
        image_H: image height
        batch_size: batch size
        capacity: the maximum number of elements in the queue
    Returns:
        image_batch: 4D tensor [batch_size, height, width, 3], dtype=tf.float32
        label_batch: 1D tensor [batch_size], dtype=tf.int32
    '''
    image = tf.cast(image, tf.string)
    label = tf.cast(label, tf.int32)

    # make an input queue
    input_queue = tf.train.slice_input_producer([image, label])

    label = input_queue[1]
    image_contents = tf.read_file(input_queue[0])
    image = tf.image.decode_jpeg(image_contents, channels=3)

    # data augmentation should go here

    # resize_image_with_crop_or_pad takes (image, target_height, target_width)
    image = tf.image.resize_image_with_crop_or_pad(image, image_H, image_W)
    image = tf.image.per_image_standardization(image)

    image_batch, label_batch = tf.train.batch([image, label],
                                              batch_size=batch_size,
                                              num_threads=64,
                                              capacity=capacity)

    # image_batch, label_batch = tf.train.shuffle_batch([image, label],
    #                                                   batch_size=BATCH_SIZE,
    #                                                   num_threads=64,
    #                                                   capacity=CAPACITY,
    #                                                   min_after_dequeue=CAPACITY-1)

    label_batch = tf.reshape(label_batch, [batch_size])
    return image_batch, label_batch

### TEST
# import matplotlib.pyplot as plt

BATCH_SIZE = 10
CAPACITY = 256
IMG_W = 208
IMG_H = 208

# The dataset path variable was changed following the instructions on the Meituan official site.
parser = argparse.ArgumentParser()
parser.add_argument('--data_dir', type=str, default='', help='input data path')
parser.add_argument('--model_dir', type=str, default='', help='output model path')
FLAGS, _ = parser.parse_known_args()

train_dir = FLAGS.data_dir
print(train_dir)

image_list, label_list = get_files(train_dir)
image_batch, label_batch = get_batch(image_list, label_list, IMG_W, IMG_H, BATCH_SIZE, CAPACITY)

with tf.Session() as sess:
    i = 0
    coord = tf.train.Coordinator()
    threads = tf.train.start_queue_runners(coord=coord)

    try:
        while not coord.should_stop() and i < 1:
            img, label = sess.run([image_batch, label_batch])

            # just test one batch
            for j in np.arange(BATCH_SIZE):
                print('label: %d' % label[j])
                # plt.imshow(img[j, :, :, :])
                # plt.show()
            i += 1

    except tf.errors.OutOfRangeError:
        print('done!')
    finally:
        coord.request_stop()
    coord.join(threads)
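
One possible origin of the `'0.0'` strings, offered as an assumption rather than a diagnosis: if either the cat list or the dog list is empty, `np.hstack` promotes the integer labels to float, and packing them into the same array as the file names then stores them as text. A minimal sketch of an alternative shuffle that avoids the string round trip by permuting indices; the function name `shuffle_together` and the sample file names are hypothetical:

```python
import numpy as np


def shuffle_together(images, labels, seed=None):
    """Shuffle two equal-length lists with one shared permutation."""
    if len(images) != len(labels):
        raise ValueError('images and labels must have the same length')
    rng = np.random.RandomState(seed)
    order = rng.permutation(len(images))
    # Index each list separately so the labels stay ints and never become strings.
    return [images[i] for i in order], [labels[i] for i in order]


# An empty list makes hstack promote integer labels to float.
print(np.hstack(([0, 0], [])).dtype)  # float64

imgs, lbls = shuffle_together(['cat.0.jpg', 'cat.1.jpg', 'dog.0.jpg'], [0, 0, 1], seed=0)
print(imgs, lbls)
```

With this approach the labels can be passed to `tf.cast(label, tf.int32)` directly, without any string parsing.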
