文本主要讲述windows系统下如何利用ffmpeg获取摄像机流并推送到rtmp服务,命令的用法前文

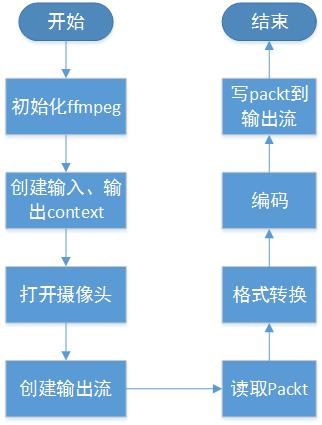

中有讲到过,这次是通过代码来实现。实现该项功能的基本流程如下:

图1 ffmpeg推流流程图

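This flowchart is somewhat more complex than the ones in the earlier articles, so a brief explanation is in order.

FFmpeg opens a camera the same way it opens an ordinary video stream, except that the input URL is the camera's name. The actual work of opening the camera is done by dshow (DirectShow); FFmpeg only calls the corresponding dshow interfaces and collects what they return. Packets are still read with av_read_frame, but each returned packet holds a raw picture frame rather than an encoded video packet. On my test machine the frame format is YUV422 (the packed YUYV422 layout, which is what the code in this article assumes). The frame cannot be encoded directly, because libx264 does not accept this packed layout as input, hence the format-conversion step in the figure above. After conversion the picture is handed to the encoder, and only the encoded packets can be written to the output stream.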
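The format-conversion step described above can be sketched independently of FFmpeg. The following is a minimal illustration, not the article's own routine (which appears in the code later on). It assumes the camera delivers packed YUYV422 with byte order Y0 U Y1 V, even width and height, and no row padding. The function name `yuyv422ToYuv420p` is made up for this sketch, and it averages the chroma of each pair of rows, which is one possible choice among several.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Converts one packed YUYV422 frame (byte order Y0 U Y1 V) to planar YUV420P.
// Assumes even width and height and no row padding in the source buffer.
std::vector<uint8_t> yuyv422ToYuv420p(const uint8_t* src, int width, int height)
{
    const std::size_t lumaSize = static_cast<std::size_t>(width) * height;
    const std::size_t chromaSize = lumaSize / 4;
    std::vector<uint8_t> dst(lumaSize + 2 * chromaSize);

    uint8_t* yPlane = dst.data();
    uint8_t* uPlane = yPlane + lumaSize;
    uint8_t* vPlane = uPlane + chromaSize;
    const std::size_t srcStride = static_cast<std::size_t>(width) * 2;

    // Luma: every even byte of the packed frame is a Y sample.
    for (std::size_t i = 0; i < lumaSize; ++i) {
        yPlane[i] = src[2 * i];
    }

    // Chroma: 4:2:2 stores one U/V pair per two pixels on every row, while
    // 4:2:0 keeps one pair per 2x2 block, so average two adjacent rows.
    for (int row = 0; row < height; row += 2) {
        const uint8_t* top = src + row * srcStride;
        const uint8_t* bottom = top + srcStride;
        for (int col = 0; col < width / 2; ++col) {
            const std::size_t out =
                static_cast<std::size_t>(row / 2) * (width / 2) + col;
            uPlane[out] = static_cast<uint8_t>(
                (top[4 * col + 1] + bottom[4 * col + 1] + 1) / 2);
            vPlane[out] = static_cast<uint8_t>(
                (top[4 * col + 3] + bottom[4 * col + 3] + 1) / 2);
        }
    }
    return dst;
}
```

For a 2x2 frame the input is 8 bytes and the output is 6 bytes: four luma samples followed by one U and one V sample. In production code, libswscale's `sws_scale` is the usual way to perform this conversion.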

The code follows.

1. Initialize FFmpeg

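void Init()
{
    // av_register_all() and avfilter_register_all() are only needed on
    // FFmpeg releases before 4.0; later releases deprecate them.
    av_register_all();
    avfilter_register_all();
    avformat_network_init();   // needed for network protocols such as RTMP
    avdevice_register_all();   // registers capture devices, including dshow
    av_log_set_level(AV_LOG_ERROR);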

}

2. Create the input context and open the camera
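The listing for this step passes 18432000 as the `rtbufsize` option, which is a byte count for the real-time capture buffer. The article does not say how the number was chosen. One plausible reading, offered here purely as an assumption, is ten 1280x720 frames at 2 bytes per pixel. The helper name `rawFrameBufferBytes` and its parameters are hypothetical and not part of FFmpeg.

```cpp
#include <cstdint>

// Bytes needed to hold `frames` raw frames of packed 4:2:2 video, which
// stores 2 bytes per pixel. Hypothetical helper, not an FFmpeg API.
constexpr std::int64_t rawFrameBufferBytes(int width, int height, int frames)
{
    return static_cast<std::int64_t>(width) * height * 2 * frames;
}

// Ten 1280x720 YUYV422 frames give exactly the value used in the listing.
static_assert(rawFrameBufferBytes(1280, 720, 10) == 18432000,
              "ten 720p frames at 2 bytes per pixel");
```

If the camera runs at a different resolution, the same arithmetic gives a starting point for sizing the buffer.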

int OpenInput(char *fileName)
{
    context = avformat_alloc_context();
    context->interrupt_callback.callback = interrupt_cb;
    // Select the DirectShow capture device explicitly.
    AVInputFormat *ifmt = av_find_input_format("dshow");
    AVDictionary *format_opts = nullptr;
    // Real-time buffer size in bytes; 18432000 equals ten 1280x720 frames
    // at 2 bytes per pixel.
    av_dict_set_int(&format_opts, "rtbufsize", 18432000, 0);
    int ret = avformat_open_input(&context, fileName, ifmt, &format_opts);
    av_dict_free(&format_opts);   // release options the demuxer did not consume
    if (ret < 0)
    {
        return ret;
    }
    ret = avformat_find_stream_info(context, nullptr);
    if (ret >= 0)
    {
        av_dump_format(context, 0, fileName, 0);
        cout << "open input stream successfully" << endl;
    }
    return ret;

}

3. Initialize the encoder

int InitOutputCodec(AVCodecContext** pOutPutEncContext, int iWidth, int iHeight)
{
    AVCodec *pH264Codec = avcodec_find_encoder(AV_CODEC_ID_H264);
    if (NULL == pH264Codec)
    {
        printf("%s", "avcodec_find_encoder failed");
        return AVERROR_ENCODER_NOT_FOUND;
    }
    *pOutPutEncContext = avcodec_alloc_context3(pH264Codec);
    (*pOutPutEncContext)->codec_id = pH264Codec->id;
    // The time base must not be zero. 1/25 assumes a 25 fps camera;
    // adjust it to the real frame rate.
    (*pOutPutEncContext)->time_base.num = 1;
    (*pOutPutEncContext)->time_base.den = 25;
    (*pOutPutEncContext)->pix_fmt = AV_PIX_FMT_YUV420P;
    (*pOutPutEncContext)->width = iWidth;
    (*pOutPutEncContext)->height = iHeight;
    // No B-frames, to keep latency low for live streaming.
    (*pOutPutEncContext)->has_b_frames = 0;
    (*pOutPutEncContext)->max_b_frames = 0;
    // FLV expects the H.264 headers in extradata rather than in-band.
    (*pOutPutEncContext)->flags |= AV_CODEC_FLAG_GLOBAL_HEADER;
    AVDictionary *options = nullptr;
    int ret = avcodec_open2(*pOutPutEncContext, pH264Codec, &options);
    if (ret < 0)
    {
        printf("%s", "open codec failed");
        return ret;
    }
    return 0;   // negative values signal failure

}

4. Create the output context and the output stream

int OpenOutput(char *fileName)
{
    int ret = 0;
    // RTMP carries FLV, so the "flv" muxer is forced here.
    ret = avformat_alloc_output_context2(&outputContext, nullptr, "flv", fileName);
    if (ret < 0)
    {
        goto Error;
    }
    ret = avio_open2(&outputContext->pb, fileName, AVIO_FLAG_READ_WRITE, nullptr, nullptr);
    if (ret < 0)
    {
        goto Error;
    }
    for (unsigned int i = 0; i < context->nb_streams; i++)
    {
        AVStream *stream = avformat_new_stream(outputContext, pOutPutEncContext->codec);
        if (!stream)
        {
            ret = AVERROR(ENOMEM);
            goto Error;
        }
        // Legacy API: the stream shares the encoder context
        // (AVStream::codec is deprecated since FFmpeg 3.1).
        stream->codec = pOutPutEncContext;
    }
    av_dump_format(outputContext, 0, fileName, 1);
    ret = avformat_write_header(outputContext, nullptr);
    if (ret < 0)
    {
        goto Error;
    }
    cout << "open output stream successfully" << endl;
    return ret;
Error:
    if (outputContext)
    {
        for (unsigned int i = 0; i < outputContext->nb_streams; i++)
        {
            avcodec_close(outputContext->streams[i]->codec);
        }
        // An output context is released with avio_closep() and
        // avformat_free_context(), not avformat_close_input().
        avio_closep(&outputContext->pb);
        avformat_free_context(outputContext);
        outputContext = nullptr;
    }
    return ret;

}

5. Read video packets from the input stream

std::shared_ptr<AVPacket> ReadPacketFromSource()
{
    // av_packet_alloc() returns an initialized packet; av_packet_free()
    // unreferences the payload and frees the packet itself.
    std::shared_ptr<AVPacket> packet(av_packet_alloc(), [](AVPacket *p) { av_packet_free(&p); });
    if (!packet)
    {
        return nullptr;
    }
    lastFrameRealtime = av_gettime();
    int ret = av_read_frame(context, packet.get());
    if (ret >= 0)
    {
        return packet;
    }
    return nullptr;
}

6. Format conversion

// Converts packed YUYV422 (Y0 U Y1 V ...) to planar YUV420P.
// Assumes even width and height and tightly packed buffers.
int YUV422To420(uint8_t *yuv422, uint8_t *yuv420, int width, int height)
{
    int s = width * height;
    int i, j, k = 0;
    // Y plane: every even byte of the packed input is a luma sample.
    for (i = 0; i < s; i++)
    {
        yuv420[i] = yuv422[i * 2];
    }
    // U plane: taken from the even rows only.
    for (i = 0; i < height; i += 2)
    {
        for (j = 0; j < width / 2; j++)
        {
            yuv420[s + k * (width / 2) + j] = yuv422[i * 2 * width + 4 * j + 1];
        }
        k++;
    }
    // V plane: taken from the odd rows only.
    k = 0;
    for (i = 1; i < height; i += 2)
    {
        for (j = 0; j < width / 2; j++)
        {
            yuv420[s + s / 4 + k * (width / 2) + j] = yuv422[i * 2 * width + 4 * j + 3];
        }
        k++;
    }
    return 1;
}

7. A simple example
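Frames handed to the encoder and packets handed to the FLV muxer are stamped in different units: the encoder counts in its own time base, while FLV (and therefore RTMP) timestamps are in milliseconds. The article does not discuss this, so the following is only a sketch of the arithmetic under stated assumptions: a 25 fps encoder with a time base of 1/25, and a hypothetical `Rational` type and `rescaleTs` helper that stand in for FFmpeg's `AVRational` and `av_rescale_q`.

```cpp
#include <cstdint>

// Stand-in for FFmpeg's AVRational (hypothetical, for illustration only).
struct Rational {
    int num;
    int den;
};

// Converts a non-negative timestamp from one time base to another,
// rounding to the nearest integer.
inline std::int64_t rescaleTs(std::int64_t ts, Rational from, Rational to)
{
    const std::int64_t numerator = ts * from.num * to.den;
    const std::int64_t denominator = static_cast<std::int64_t>(from.den) * to.num;
    return (numerator + denominator / 2) / denominator;
}

// Assumed time bases: 1/25 for a 25 fps encoder, 1/1000 for FLV milliseconds.
const Rational kEncoderTimeBase = {1, 25};
const Rational kFlvTimeBase = {1, 1000};
```

Under these assumptions frame 1 maps to 40 ms and frame 25 to 1000 ms. In real code the FFmpeg functions `av_rescale_q` or `av_packet_rescale_ts` perform this conversion.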

string fileInput = "video=Integrated Webcam";
string fileOutput = "rtmp://127.0.0.1/live/mystream";
Init();
if (OpenInput((char *)fileInput.c_str()) < 0)
{
    cout << "Open file Input failed!" << endl;
    this_thread::sleep_for(chrono::seconds(10));
    return 0;
}
// DstWidth and DstHeight must match the frame size the camera delivers.
if (InitOutputCodec(&pOutPutEncContext, DstWidth, DstHeight) < 0)
{
    cout << "Init output codec failed!" << endl;
    this_thread::sleep_for(chrono::seconds(10));
    return 0;
}
if (OpenOutput((char *)fileOutput.c_str()) < 0)
{
    cout << "Open file Output failed!" << endl;
    this_thread::sleep_for(chrono::seconds(10));
    return 0;
}
int64_t count = 0;   // frame counter, used as pts in encoder time-base units
auto out_stream = outputContext->streams[0];
int iGetPic = 0;
uint8_t *yuv420Buffer = (uint8_t *)malloc(DstWidth * DstHeight * 3 / 2);
// Size in bytes of one packed YUYV422 frame.
int numBytes = av_image_get_buffer_size(AV_PIX_FMT_YUYV422, DstWidth, DstHeight, 1);
while (true)
{
    auto packet = ReadPacketFromSource();
    if (!packet)
    {
        break;      // reading failed or the device went away
    }
    if (packet->size < numBytes)
    {
        continue;   // not a full frame; skip it rather than read past the buffer
    }
    auto pSwsFrame = av_frame_alloc();
    YUV422To420(packet->data, yuv420Buffer, DstWidth, DstHeight);
    av_image_fill_arrays(pSwsFrame->data, pSwsFrame->linesize, yuv420Buffer, AV_PIX_FMT_YUV420P, DstWidth, DstHeight, 1);
    pSwsFrame->format = AV_PIX_FMT_YUV420P;
    pSwsFrame->width = DstWidth;
    pSwsFrame->height = DstHeight;
    pSwsFrame->pts = count++;
    // A fresh packet has no data, so the encoder allocates the payload.
    AVPacket *pTmpPkt = av_packet_alloc();
    int iRet = avcodec_encode_video2(pOutPutEncContext, pTmpPkt, pSwsFrame, &iGetPic);
    if (iRet >= 0 && iGetPic)
    {
        // Convert timestamps from the encoder time base to the output stream's.
        av_packet_rescale_ts(pTmpPkt, pOutPutEncContext->time_base, out_stream->time_base);
        int ret = av_write_frame(outputContext, pTmpPkt);
        cout << "ret:" << ret << endl;
        //this_thread::sleep_for(std::chrono::milliseconds(40));
    }
    av_frame_free(&pSwsFrame);
    av_packet_free(&pTmpPkt);
}
free(yuv420Buffer);
CloseInput();
CloseOutput();
cout << "Transcode file end!" << endl;
this_thread::sleep_for(chrono::hours(10));

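return 0;

For the full example source or technical discussion, join the group 流媒体/Ffmpeg/音视频 (streaming media / FFmpeg / audio and video), 127903734.

Source code: http://pan.baidu.com/s/1o8Lkozw

Video (netdisk link): http://pan.baidu.com/s/1jH4dYN8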

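Summary: this article describes how to capture a video stream from a camera with FFmpeg on Windows and push it to an RTMP server. It gives step-by-step explanations and the core code, including initializing FFmpeg, opening the camera, and configuring the encoder.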
