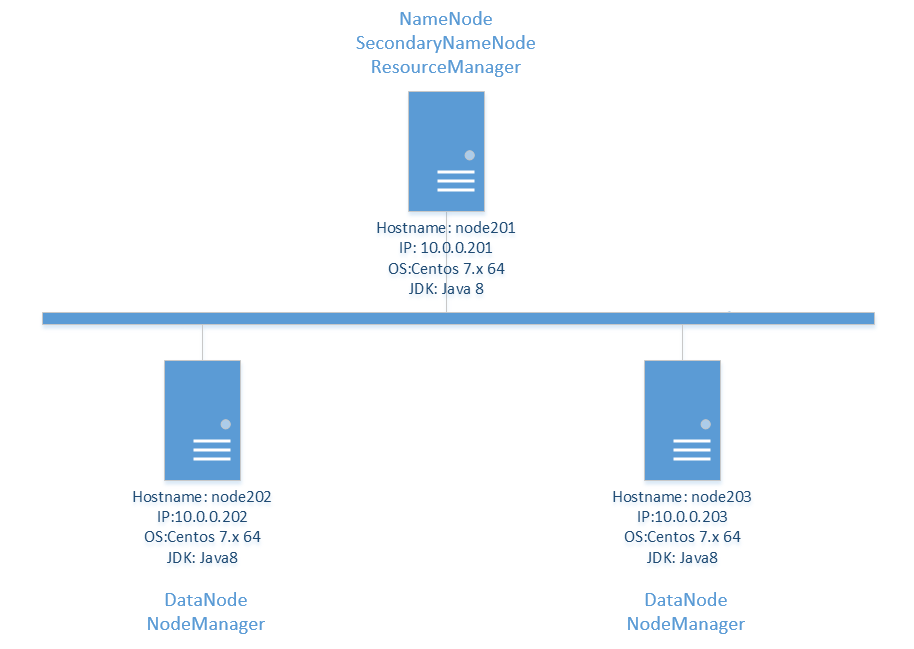

System topology

| Role | IP address | HDFS | YARN |
|---|---|---|---|
| Master | 10.0.0.201 | NameNode | ResourceManager |
| Slave | 10.0.0.202 | DataNode | NodeManager |
| Slave | 10.0.0.203 | DataNode | NodeManager |

Prerequisites

Install the JDK

Disable SELinux

Disable the firewall, or open the ports Hadoop uses
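Below is a minimal sketch of the last two steps as a shell helper. It assumes a RHEL/CentOS-style system: SELinux is configured in /etc/selinux/config, and the firewall is managed either by firewalld (CentOS 7) or by the iptables service (CentOS 6). The function name `prepare_node` is hypothetical. Source the snippet, then run `prepare_node` as root on every node.

```shell
# Hypothetical helper: disable SELinux and the firewall on one node.
# Assumes a RHEL/CentOS-style layout; each step is skipped if its tool or file is missing.
prepare_node() {
    # Switch SELinux to permissive mode right away...
    if command -v setenforce >/dev/null 2>&1; then
        setenforce 0 || true
    fi
    # ...and disable it permanently (takes effect after a reboot).
    if [ -w /etc/selinux/config ]; then
        sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config
    fi

    # Stop the firewall now and keep it from starting at boot.
    if command -v firewall-cmd >/dev/null 2>&1; then
        systemctl stop firewalld
        systemctl disable firewalld
    elif command -v chkconfig >/dev/null 2>&1; then
        service iptables stop
        chkconfig iptables off
    fi
}
```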

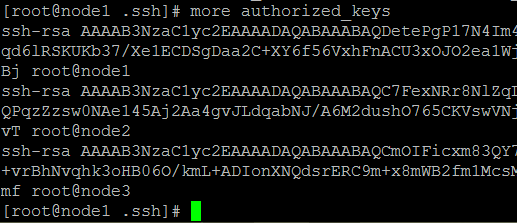

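Set up passwordless SSH login

Generate an RSA key pair on every node, and leave the private-key passphrase empty.

ssh-keygen

On the master, append every node's public key to the authorized_keys file. Here id_rsa.pub.202 and id_rsa.pub.203 are the public keys copied over from 10.0.0.202 and 10.0.0.203. Then copy the combined file to the slaves.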

cat id_rsa.pub >> authorized_keys

cat id_rsa.pub.202 >> authorized_keys

cat id_rsa.pub.203 >> authorized_keys

scp authorized_keys root@10.0.0.202:/root/.ssh/authorized_keys

scp authorized_keys root@10.0.0.203:/root/.ssh/authorized_keys

Verify that each node can be logged into without a password:

ssh node1

ssh node2

ssh node3
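The manual key exchange above can also be done with `ssh-copy-id` (shipped with OpenSSH), which appends the local public key to the remote user's authorized_keys. This is a minimal sketch that assumes root logins and that node1 to node3 resolve via /etc/hosts. `setup_ssh_trust` is a hypothetical name. Running it once on every node, typing each host's password once, gives the same all-to-all trust as the steps above.

```shell
# Hypothetical helper: create a passphrase-less RSA key (if missing) and
# install its public key on every node, including this one.
setup_ssh_trust() {
    if [ ! -f "$HOME/.ssh/id_rsa" ]; then
        mkdir -p "$HOME/.ssh" && chmod 700 "$HOME/.ssh"
        # -N '' sets an empty passphrase, as the step above requires.
        ssh-keygen -t rsa -N '' -f "$HOME/.ssh/id_rsa"
    fi
    for host in node1 node2 node3; do
        ssh-copy-id "root@$host"
    done
}
```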

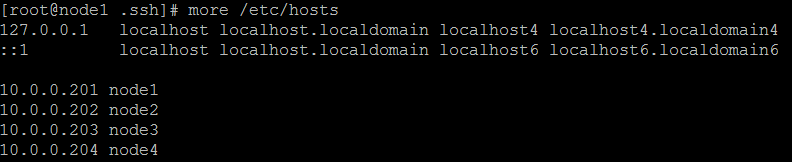

Configure /etc/hosts on the master, then copy it to the slaves:

scp /etc/hosts root@10.0.0.202:/etc/hosts

scp /etc/hosts root@10.0.0.203:/etc/hosts
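The actual entries appear in the screenshot. As a sketch, the mapping below assumes node1 to node3 correspond in order to the three IP addresses in the topology table. That mapping is an assumption. The entries are staged in a local file (hosts.hadoop, a hypothetical name) and can then be appended to /etc/hosts on the master before it is copied out.

```shell
# Hypothetical hostname mapping: node1 = Master, node2/node3 = slaves.
cat > hosts.hadoop <<'EOF'
10.0.0.201 node1
10.0.0.202 node2
10.0.0.203 node3
EOF
# Then, as root on the master:  cat hosts.hadoop >> /etc/hosts
```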

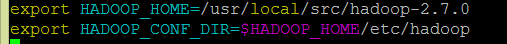

Set environment variables

/etc/profile

Configure Hadoop

Extract Hadoop and create the HDFS directories:

mkdir -p dfs/name

mkdir -p dfs/data

vim /etc/profile

export HADOOP_HOME=/usr/local/src/hadoop-2.7.0
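Below is a sketch of a complete set of lines for /etc/profile, using the JDK and Hadoop paths from this guide. Adding `$HADOOP_HOME/bin` and `$HADOOP_HOME/sbin` to `PATH` is an addition here. It lets `hdfs`, `hadoop` and the start/stop scripts run from any directory. Reload the file with `source /etc/profile` afterwards.

```shell
# Lines for /etc/profile (paths as used in this guide).
export JAVA_HOME=/usr/local/src/jdk1.7.0_60
export HADOOP_HOME=/usr/local/src/hadoop-2.7.0
# Make the java, hdfs/hadoop and start-*/stop-* commands available everywhere.
export PATH="$PATH:$JAVA_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin"
```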

vim ./etc/hadoop/hadoop-env.sh

export JAVA_HOME=/usr/local/src/jdk1.7.0_60

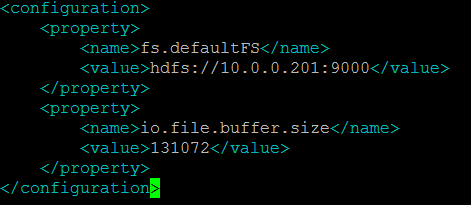

core-site.xml

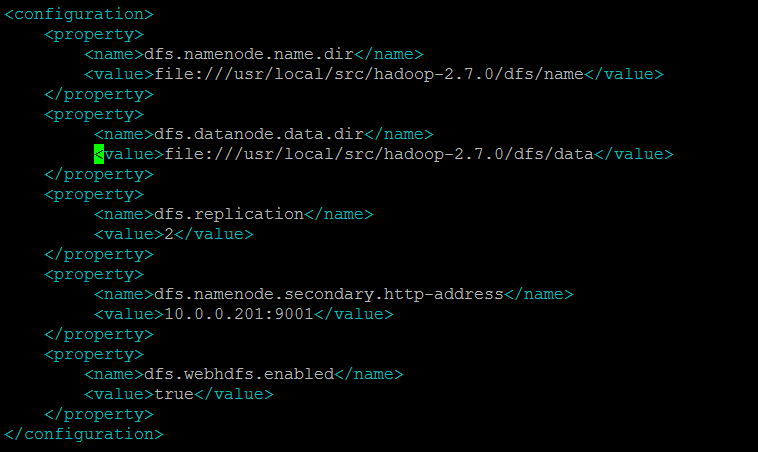
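The actual values are in the screenshot. Below is a minimal sketch of an equivalent core-site.xml, written with a heredoc from the Hadoop home directory. The NameNode URI `hdfs://node1:9000` (hostname and port) is an assumption and was not read from the image.

```shell
# Run from the Hadoop home directory (e.g. /usr/local/src/hadoop-2.7.0).
CONF_DIR=./etc/hadoop
mkdir -p "$CONF_DIR"
cat > "$CONF_DIR/core-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <!-- Default file system: the NameNode on the master (host and port assumed) -->
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://node1:9000</value>
  </property>
</configuration>
EOF
```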

hdfs-site.xml

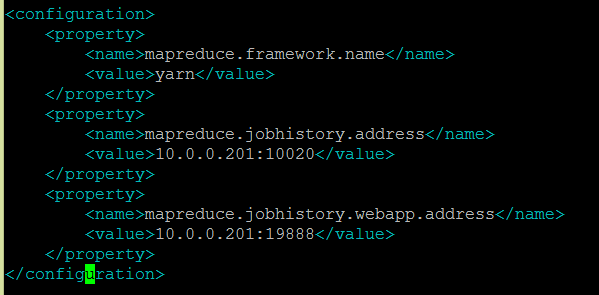
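Below is a sketch of an equivalent hdfs-site.xml. It points the NameNode and DataNode storage at the dfs/name and dfs/data directories created earlier, assuming they live under /usr/local/src/hadoop-2.7.0. It also sets a replication factor of 2 to match the two DataNodes; the default is 3. All of these values are assumptions, not read from the image.

```shell
# Run from the Hadoop home directory.
CONF_DIR=./etc/hadoop
mkdir -p "$CONF_DIR"
cat > "$CONF_DIR/hdfs-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <!-- Only two DataNodes, so keep two replicas per block (default is 3) -->
  <property>
    <name>dfs.replication</name>
    <value>2</value>
  </property>
  <!-- The dfs/name and dfs/data directories (absolute location assumed) -->
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>file:/usr/local/src/hadoop-2.7.0/dfs/name</value>
  </property>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>file:/usr/local/src/hadoop-2.7.0/dfs/data</value>
  </property>
</configuration>
EOF
```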

mapred-site.xml

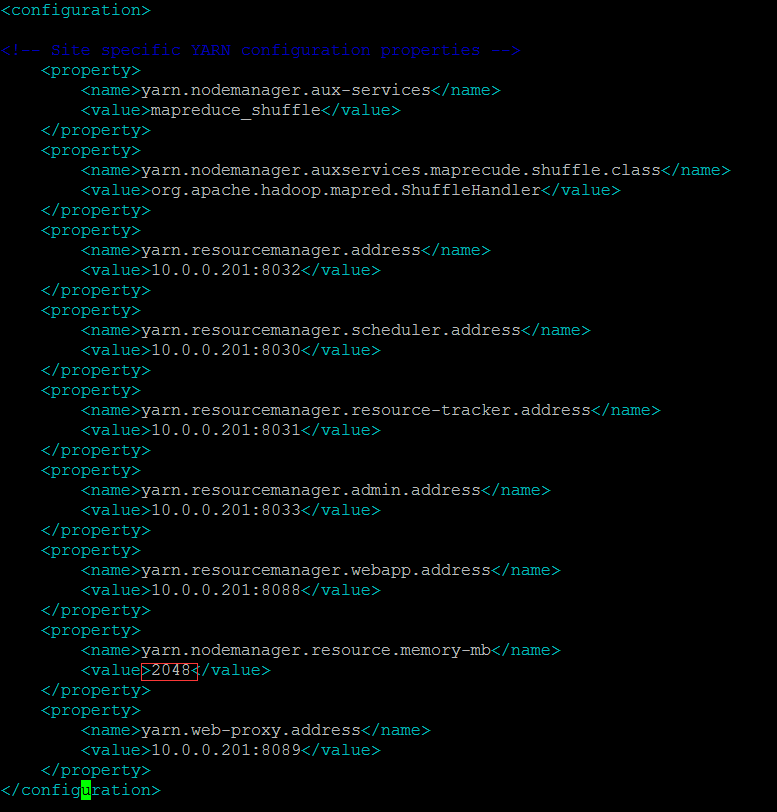
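Hadoop 2.7.0 ships only etc/hadoop/mapred-site.xml.template, so mapred-site.xml has to be created. In the sketch below, `mapreduce.framework.name=yarn` makes jobs run on YARN. The JobHistory Server addresses use the default ports 10020 and 19888, with the host node1 assumed.

```shell
# Run from the Hadoop home directory.
CONF_DIR=./etc/hadoop
mkdir -p "$CONF_DIR"
cat > "$CONF_DIR/mapred-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <!-- Submit MapReduce jobs to YARN instead of the local runner -->
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
  <!-- JobHistory Server on the master, default ports (host assumed) -->
  <property>
    <name>mapreduce.jobhistory.address</name>
    <value>node1:10020</value>
  </property>
  <property>
    <name>mapreduce.jobhistory.webapp.address</name>
    <value>node1:19888</value>
  </property>
</configuration>
EOF
```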

yarn-site.xml

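yarn.nodemanager.resource.memory-mb must be at least 2048. With default settings, the MapReduce ApplicationMaster requests 1536 MB (yarn.app.mapreduce.am.resource.mb). The scheduler then rounds every request up to a multiple of yarn.scheduler.minimum-allocation-mb (1024 MB by default), so the ApplicationMaster container needs 2048 MB. With less, the job stays in the ACCEPTED state.

The figure contains an error: the property name should be yarn.nodemanager.aux-services.mapreduce_shuffle.class.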

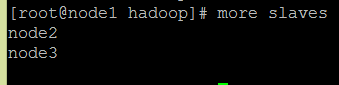
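Below is a sketch of an equivalent yarn-site.xml, including the corrected shuffle property name and the 2048 MB NodeManager memory. The ResourceManager host node1 is an assumption. So is the `yarn.web-proxy.address` value `node1:8089`: a standalone proxy server, started below with `yarn-daemon.sh ... start proxyserver`, refuses to start unless that property is set, but the port chosen here is hypothetical.

```shell
# Run from the Hadoop home directory.
CONF_DIR=./etc/hadoop
mkdir -p "$CONF_DIR"
cat > "$CONF_DIR/yarn-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <!-- ResourceManager on the master (hostname assumed) -->
  <property>
    <name>yarn.resourcemanager.hostname</name>
    <value>node1</value>
  </property>
  <!-- Shuffle service for MapReduce, with the full class property name -->
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <property>
    <name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>
    <value>org.apache.hadoop.mapred.ShuffleHandler</value>
  </property>
  <!-- Memory each NodeManager offers to containers (at least 2048) -->
  <property>
    <name>yarn.nodemanager.resource.memory-mb</name>
    <value>2048</value>
  </property>
  <!-- Bind address of the standalone WebAppProxy (port assumed) -->
  <property>
    <name>yarn.web-proxy.address</name>
    <value>node1:8089</value>
  </property>
</configuration>
EOF
```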

Edit etc/hadoop/slaves
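The slaves file lists one worker hostname per line. start-dfs.sh and start-yarn.sh start a DataNode and a NodeManager on each host listed there. Below is a sketch that assumes the slaves are called node2 and node3.

```shell
# Run from the Hadoop home directory; one worker hostname per line (names assumed).
CONF_DIR=./etc/hadoop
mkdir -p "$CONF_DIR"
printf '%s\n' node2 node3 > "$CONF_DIR/slaves"
```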

Distribute Hadoop to the slaves

scp -r ./hadoop-2.7.0 root@10.0.0.202:/usr/local/src

scp -r ./hadoop-2.7.0 root@10.0.0.203:/usr/local/src

运行hadoop

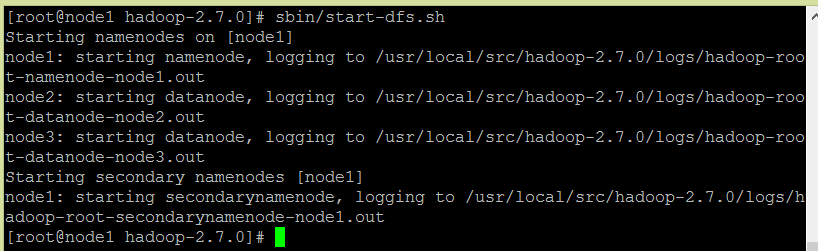

运行HDFS

bin/hdfs namenode –format (运行一次)

sbin/start-dfs.sh

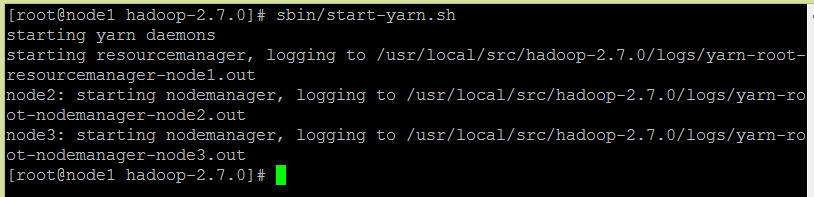

Start YARN

sbin/yarn-daemon.sh --config ./etc/hadoop start proxyserver

sbin/start-yarn.sh

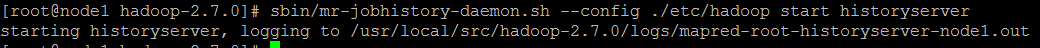

Start the MapReduce JobHistory Server

sbin/mr-jobhistory-daemon.sh --config ./etc/hadoop start historyserver

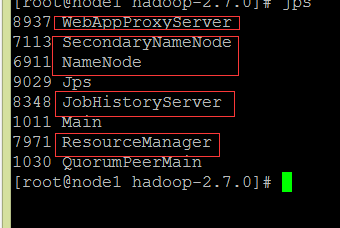

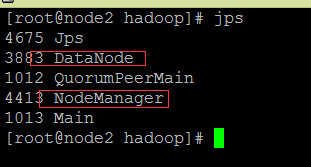

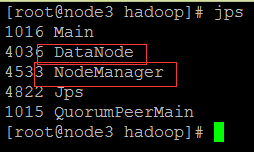

Check the processes on 10.0.0.201, 10.0.0.202 and 10.0.0.203:

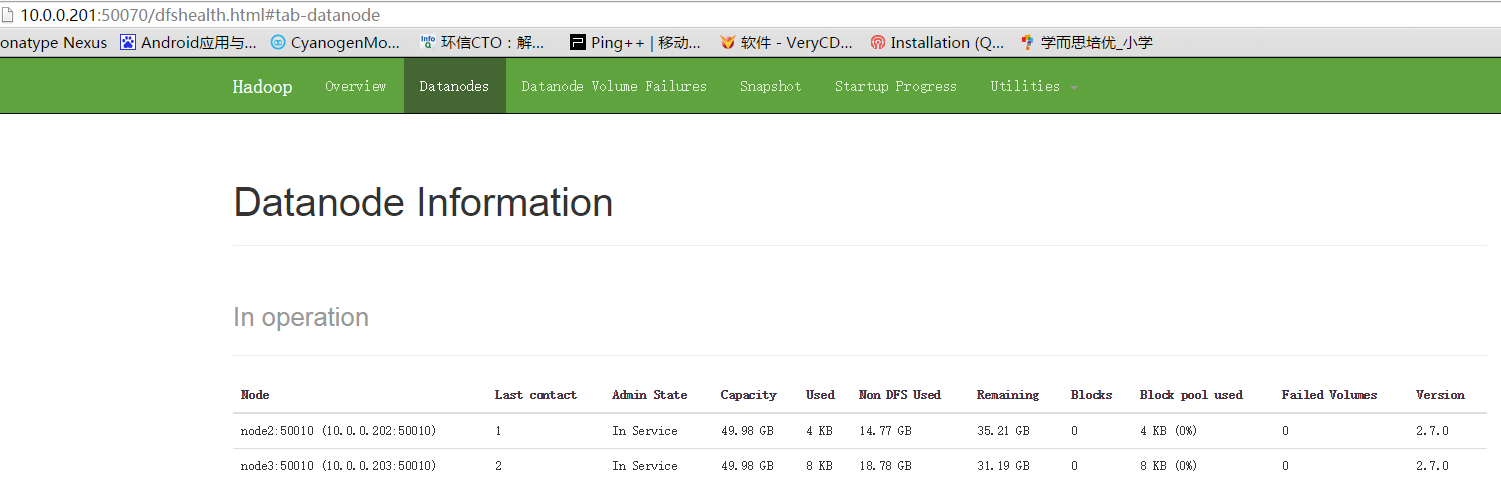

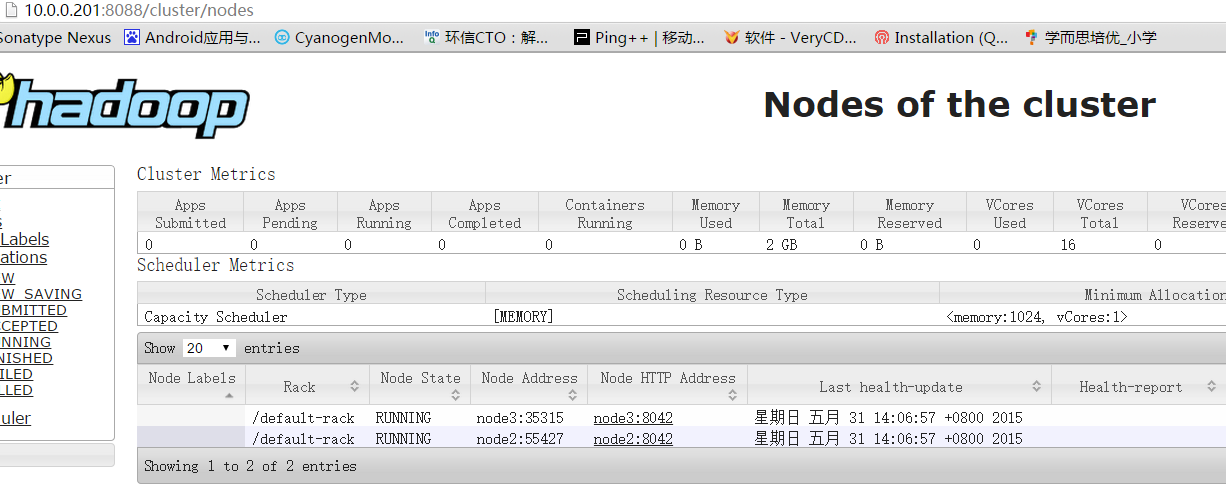

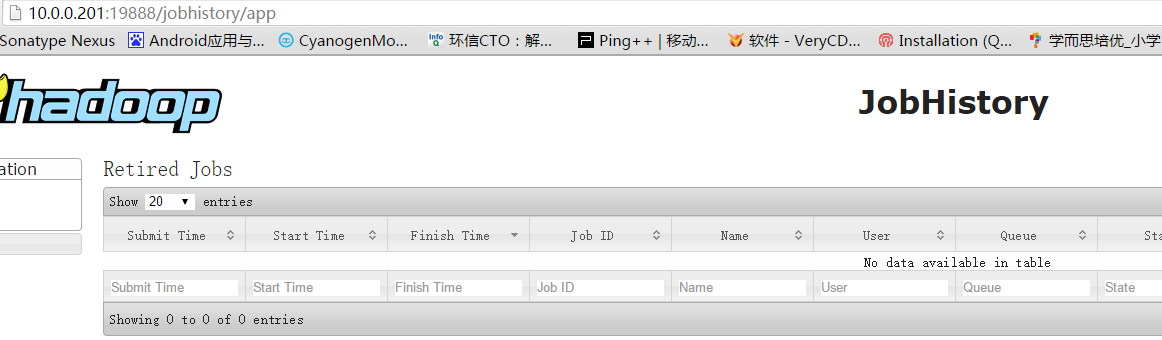

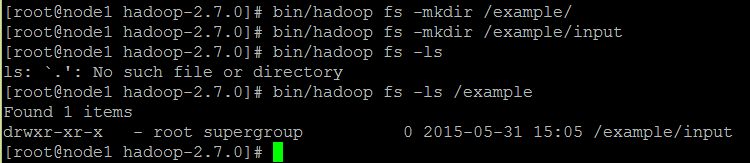
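The JDK's `jps` tool lists running Java processes, so this check can also be run from the master over SSH. Below is a sketch that assumes passwordless root SSH and the JDK path from hadoop-env.sh; a non-interactive SSH shell does not read /etc/profile, so the full path is used. `check_daemons` is a hypothetical name.

```shell
# Hypothetical helper: run jps on each node and print its Java daemons.
JPS=/usr/local/src/jdk1.7.0_60/bin/jps
check_daemons() {
    for host in node1 node2 node3; do
        echo "== $host"
        ssh "root@$host" "$JPS"
    done
}
# Expected with the topology above: node1 runs NameNode, SecondaryNameNode,
# ResourceManager, WebAppProxyServer and JobHistoryServer;
# node2 and node3 run DataNode and NodeManager.
```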

Test Hadoop

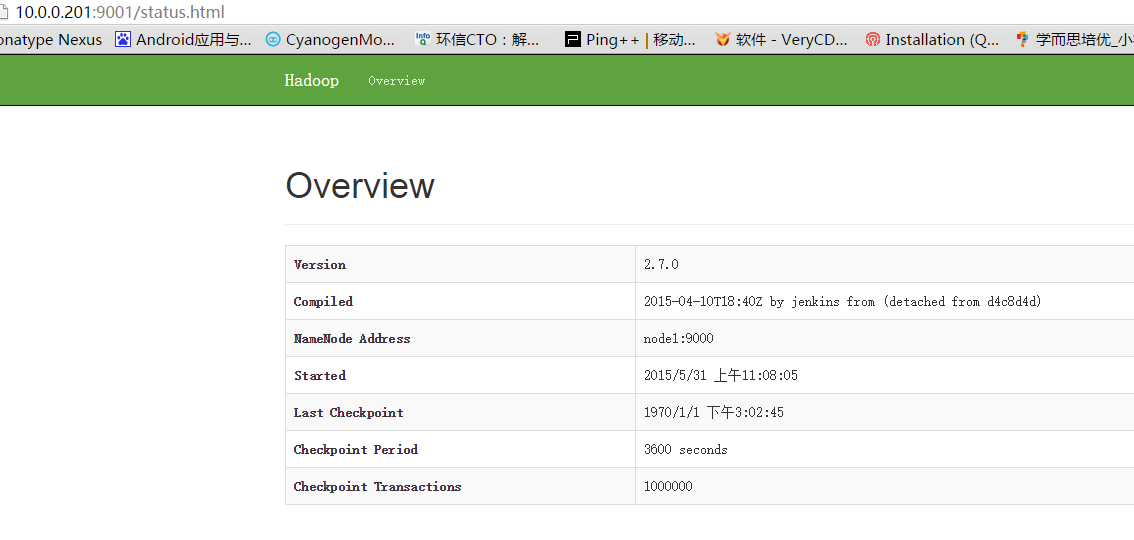

HDFS NameNode

Secondary NameNode

YARN ResourceManager

MapReduce JobHistory Server

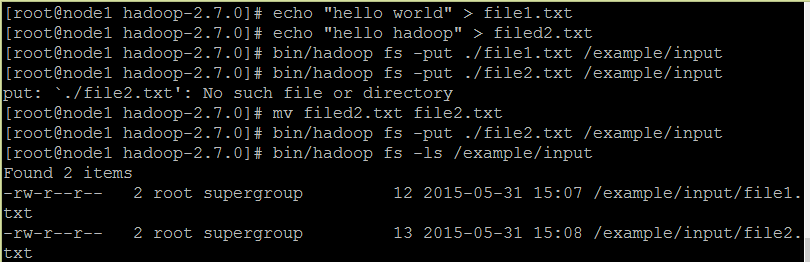

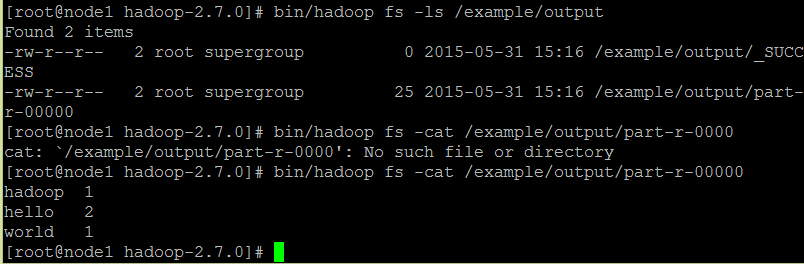

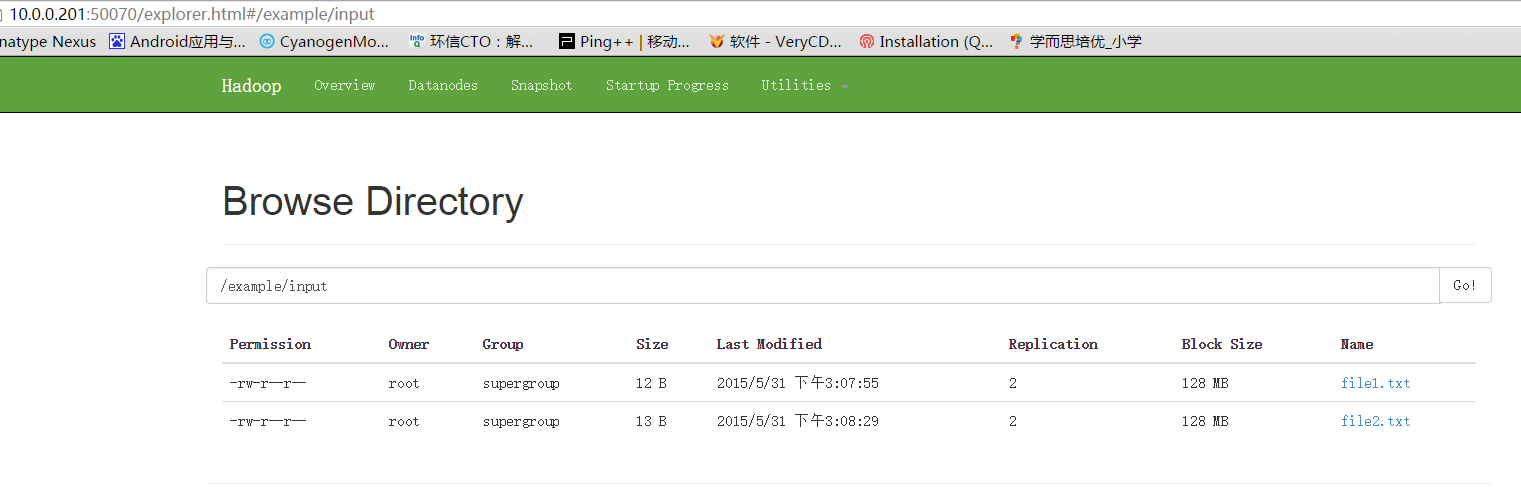

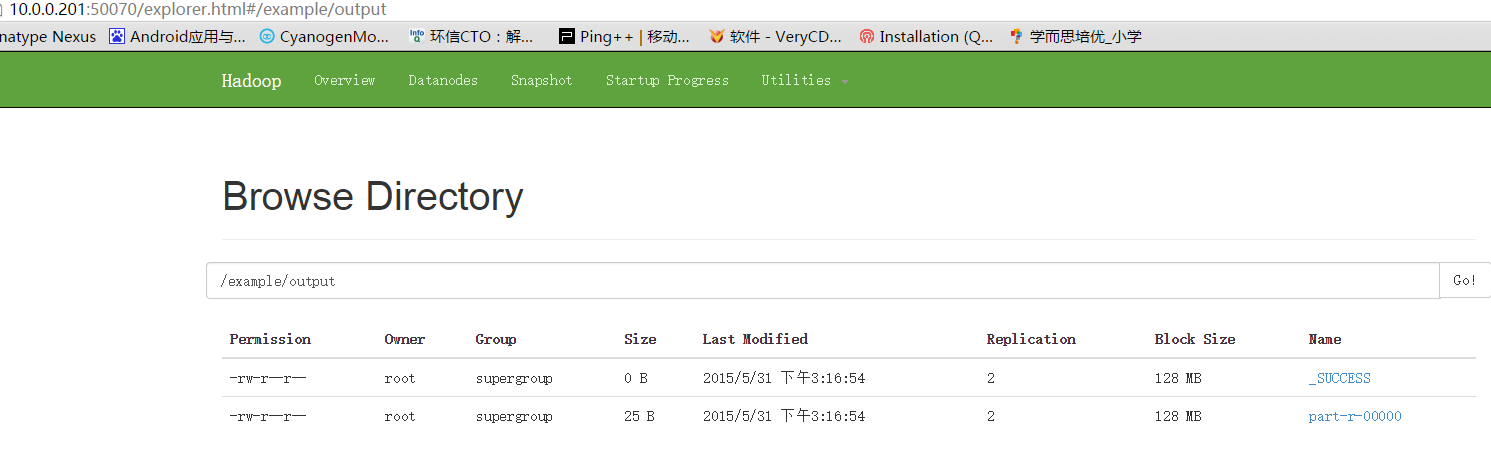

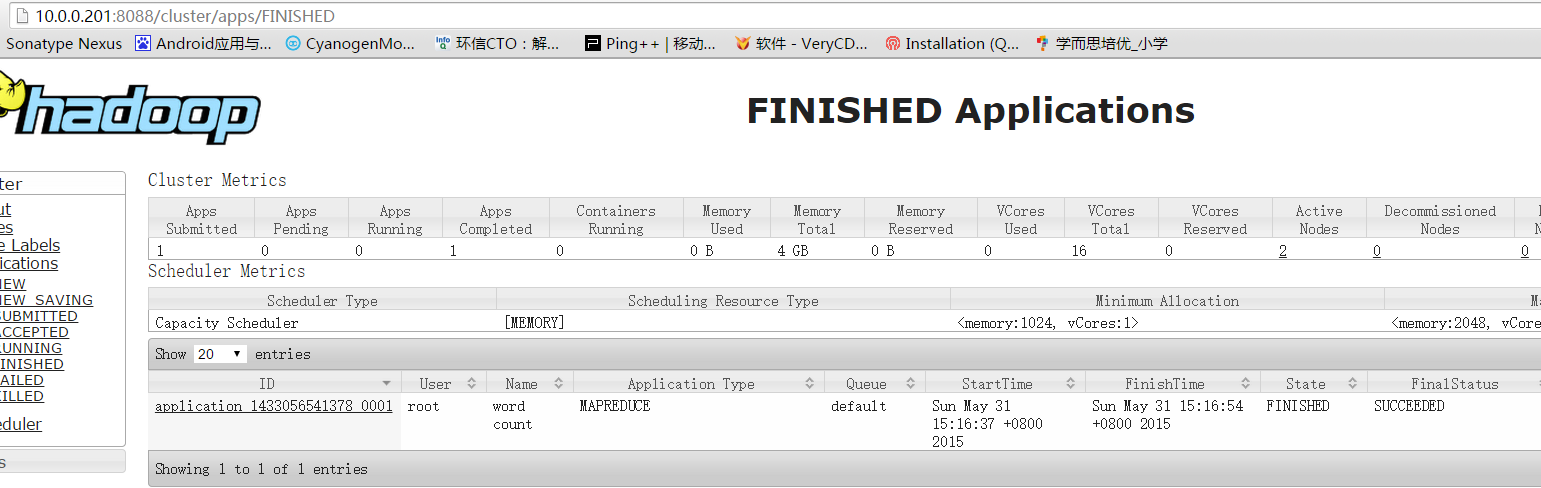
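These daemons serve web UIs on Hadoop 2.7's default ports: NameNode 50070, Secondary NameNode 50090, ResourceManager 8088 and JobHistory Server 19888. Below is a sketch that probes each one with curl from any machine that resolves node1. The hostname and the name `check_web_uis` are assumptions.

```shell
# Hypothetical helper: print the HTTP status of each web UI (default ports).
check_web_uis() {
    for url in \
        http://node1:50070/ \
        http://node1:50090/ \
        http://node1:8088/ \
        http://node1:19888/; do
        # -s: silent, -o: discard the body, -w: print only the status code
        # (000 means no HTTP response, e.g. the daemon is not running).
        code=$(curl -s -o /dev/null -w '%{http_code}' "$url")
        echo "$code $url"
    done
}
```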

Run the MapReduce wordcount example

bin/hadoop jar ./share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.0.jar wordcount /example/input /example/output
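The command above expects /example/input to already exist in HDFS and /example/output not to exist; the job fails if the output directory is already there. Below is a sketch of the full round trip, run from the Hadoop home directory. Using the etc/hadoop/*.xml files as sample input is an assumption, and `run_wordcount` is a hypothetical name.

```shell
# Hypothetical helper: upload sample input, run wordcount, print the result.
run_wordcount() {
    bin/hdfs dfs -mkdir -p /example/input
    bin/hdfs dfs -put -f etc/hadoop/*.xml /example/input
    # The output directory must not exist before the job starts.
    bin/hdfs dfs -rm -r -f /example/output
    bin/hadoop jar ./share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.0.jar \
        wordcount /example/input /example/output
    # With the default single reducer, all counts land in part-r-00000.
    bin/hdfs dfs -cat /example/output/part-r-00000
}
```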

Stop Hadoop

Stop HDFS

sbin/stop-dfs.sh

Stop YARN

sbin/stop-yarn.sh

Stop the WebAppProxy

sbin/yarn-daemon.sh --config ./etc/hadoop stop proxyserver

Stop the MapReduce JobHistory Server

sbin/mr-jobhistory-daemon.sh --config ./etc/hadoop stop historyserver
