We install ELK with Docker, following the official instructions:

Elasticsearch

docker pull docker.elastic.co/elasticsearch/elasticsearch:5.5.3

Start the Elasticsearch container (settings suited to a machine with little memory):

docker run -d --name ecs2 -p 9200:9200 -p 9300:9300 -e "discovery.type=single-node" -e ES_JAVA_OPTS="-Xms1g -Xmx1g" -e "http.host=0.0.0.0" -e "transport.host=127.0.0.1" docker.elastic.co/elasticsearch/elasticsearch:5.5.3

Kibana

docker pull docker.elastic.co/kibana/kibana:5.5.3

Start the Kibana container:

docker run -p 5601:5601 -e "ELASTICSEARCH_URL=http://localhost:9200" --name my-kibana \
--network host -d docker.elastic.co/kibana/kibana:5.5.3

Logstash

docker pull docker.elastic.co/logstash/logstash:5.5.3

Create a logstash directory under /home.

Create logstash/logstash.yml to configure X-Pack monitoring for Logstash:

http.host: "0.0.0.0"
path.config: /usr/share/logstash/pipeline
xpack.monitoring.elasticsearch.url: http://localhost:9200
xpack.monitoring.elasticsearch.username: elastic
xpack.monitoring.elasticsearch.password: changeme

Create logstash/conf.d/logstash.conf to configure the Logstash input and output:

input {
  file {
    path => "/tmp/access.log"
    start_position => "beginning"
  }
}

output {
  elasticsearch {
    hosts => ["localhost:9200"]
    user => "elastic"
    password => "changeme"
  }
}

Start the Logstash container:

docker run -v /home/logstash/conf.d:/usr/share/logstash/pipeline/:ro -v /tmp:/tmp:ro -v /home/logstash/logstash.yml:/usr/share/logstash/config/logstash.yml:ro --name my-logstash --network host -d docker.elastic.co/logstash/logstash:5.5.3

Building an image with a Dockerfile

FROM docker.elastic.co/logstash/logstash:5.5.3

RUN rm -f /usr/share/logstash/pipeline/logstash.conf

ADD pipeline/ /usr/share/logstash/pipeline/

ADD config/ /usr/share/logstash/config/

docker run -v /tmp:/tmp:ro --name=<container-name> --network host <image-name>

To test the setup, append some log entries to /tmp/access.log:

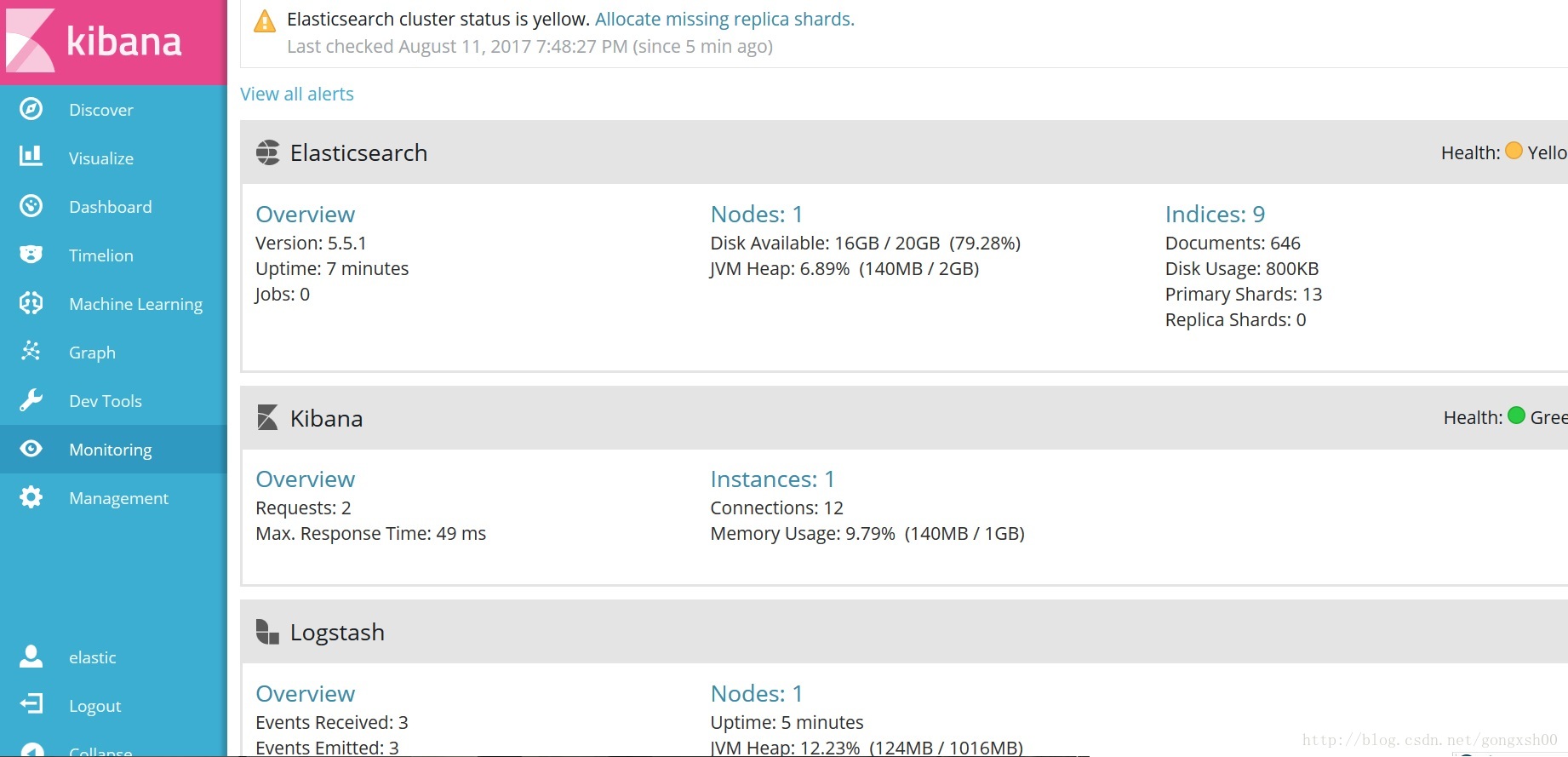

Open Kibana at http://yourhost:5601 and log in with the username/password elastic/changeme. On the "Configure an index pattern" page, click the Create button. Click the Monitoring menu to view the status of the ELK nodes.

Click the Discover menu in Kibana to see the collected log entries:

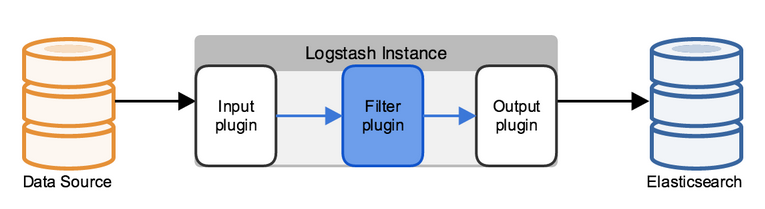

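How Logstash works:

Logstash collects, processes and outputs logs through a pipeline. This is somewhat like a *NIX shell pipeline xxx | ccc | ddd, where the output of xxx is passed to ccc and then on to ddd.

A Logstash pipeline has three stages:

input --> filter (optional) --> output

Each stage is implemented by plugins, such as file, elasticsearch and redis.

Each stage can also use several plugins at once. For example, the output stage can write to Elasticsearch and also print to the console through stdout.

This plugin-based organization makes Logstash easy to extend and customize.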
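To make the three stages concrete, here is a minimal Java sketch of the same idea. It is only an illustration and not how Logstash is implemented: the class PipelineSketch, the method run, and the sample log lines are all hypothetical names made up for this example.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Optional;
import java.util.function.Consumer;
import java.util.function.Function;
import java.util.function.Supplier;

// Hypothetical model of a Logstash-style pipeline: input -> filter (optional) -> outputs.
class PipelineSketch {

    // Passes every event from the input through the filter,
    // then hands each surviving event to all configured outputs.
    static void run(Supplier<List<String>> input,
                    Function<String, Optional<String>> filter,
                    List<Consumer<String>> outputs) {
        for (String event : input.get()) {
            Optional<String> filtered = filter.apply(event);
            if (!filtered.isPresent()) {
                continue; // the filter dropped this event
            }
            for (Consumer<String> output : outputs) {
                output.accept(filtered.get());
            }
        }
    }

    public static void main(String[] args) {
        List<String> stored = new ArrayList<>();
        List<Consumer<String>> outputs = new ArrayList<>();
        outputs.add(stored::add);         // stands in for the elasticsearch output
        outputs.add(System.out::println); // stands in for the stdout output

        run(() -> Arrays.asList("GET /index.html 200", "", "GET /missing 404"),
            line -> line.isEmpty() ? Optional.empty() : Optional.of(line.trim()),
            outputs);

        System.out.println(stored.size() + " events stored");
    }
}
```

The point of the sketch is that one event can fan out to several outputs, and that the filter stage can be a pass-through when no processing is needed.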

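Changing the default password

The default account/password of the Elastic Docker images is elastic/changeme, and keeping the default password is insecure. Suppose we want to change the password to elastic0. Run the following command on the server hosting Docker to change the password of the user elastic: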

curl -XPUT -u elastic 'localhost:9200/_xpack/security/user/elastic/_password' -H "Content-Type: application/json" \
-d '{
"password" : "elastic0"
}'

After setting the password, restart Kibana:

docker stop my-kibana && docker rm my-kibana

docker run -p 5601:5601 -e "ELASTICSEARCH_URL=http://localhost:9200" -e "ELASTICSEARCH_PASSWORD=elastic0" \
--name my-kibana --network host -d docker.elastic.co/kibana/kibana:5.5.3

Update the password in logstash/logstash.yml and logstash/conf.d/logstash.conf, then restart the Logstash service:

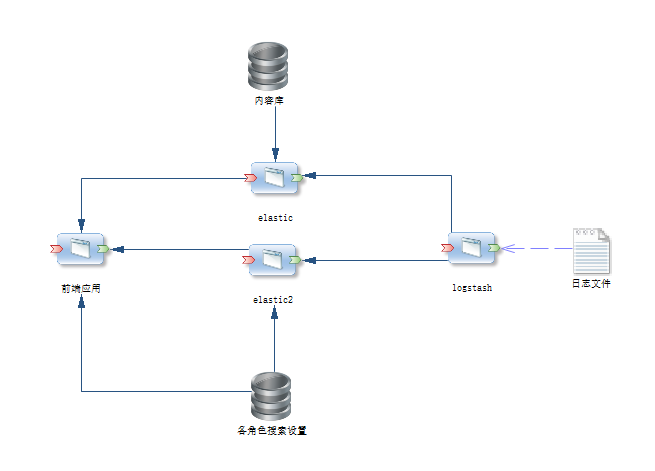

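docker restart my-logstash

Current architecture:

The current idea is that Logstash collects the logs and stores them in Elasticsearch, while the base data of the medical library is synchronized into an Elasticsearch index. Query results for the front-end application come from the content index, ordered first by the relevance score that Elasticsearch computes automatically, in descending order. The back end then adjusts the weighting of the search results using per-role sort settings and analysis of the log index.

The role sort settings designed so far are as follows (descending by click count):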

role    field_order     order
admin   comments_num    desc
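As a rough illustration of how such a role table could drive the sort part of a query, here is a small Java sketch. Everything in it is an assumption for the sake of the example: the class RoleSortSketch, the in-memory RULES map, and the choice to emit the field name exactly as it appears in the table (a real index may name the field differently).

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical lookup from a role to its configured sort rule.
class RoleSortSketch {

    // One row of the role table: which field to sort by, and in which direction.
    static final class SortRule {
        final String field;
        final String order;

        SortRule(String field, String order) {
            this.field = field;
            this.order = order;
        }
    }

    static final Map<String, SortRule> RULES = new HashMap<>();

    static {
        RULES.put("admin", new SortRule("comments_num", "desc"));
    }

    // Builds a "sort" clause: _score descending first,
    // then the field configured for the role, if the role has a rule.
    static String sortClause(String role) {
        StringBuilder sb = new StringBuilder("\"sort\": [{\"_score\": {\"order\": \"desc\"}}");
        SortRule rule = RULES.get(role);
        if (rule != null) {
            sb.append(", {\"").append(rule.field)
              .append("\": {\"order\": \"").append(rule.order).append("\"}}");
        }
        return sb.append("]").toString();
    }

    public static void main(String[] args) {
        System.out.println(sortClause("admin"));
        System.out.println(sortClause("guest")); // no rule configured: score only
    }
}
```

A role without an entry simply falls back to sorting by score alone, which keeps the default Elasticsearch ordering.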

Query DSL approach:

GET /content/about_test/_search
{
  "query": {
    "function_score": {
      "query": {
        "bool": {
          "should": [
            { "match": { "title": { "query": "springboot", "type": "boolean" } } },
            { "match": { "author": { "query": "springboot", "type": "boolean" } } }
          ]
        }
      },
      "functions": [
        {
          "filter": { "match": { "title": { "query": "springboot", "type": "boolean" } } },
          "weight": 100
        },
        {
          "filter": { "match": { "author": { "query": "springboot", "type": "boolean" } } },
          "weight": 20
        }
      ]
    }
  },
  "sort": {
    "_score": { "order": "desc" },
    "commentsNum": { "order": "desc" }
  }
}

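Here content is the index name, about_test is the type, and the search keyword is springboot. The hits are sorted by score in descending order, followed by the manually configured role sort field commentsNum, also in descending order. The result is as follows: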

{
  "took": 319,
  "timed_out": false,
  "_shards": {
    "total": 5,
    "successful": 5,
    "failed": 0
  },
  "hits": {
    "total": 3,
    "max_score": null,
    "hits": [
      {
        "_index": "content",
        "_type": "about_test",
        "_id": "2",
        "_score": 9.775897,
        "_source": {
          "id": "2",
          "cid": 100,
          "title": "springboot thymeleaf和shiro 整合——按钮可见性",
          "slug": 0,
          "created": null,
          "modified": null,
          "type": "article",
          "content": "springboot thymeleaf和shiro 整合——按钮可见性后台管理系统",
          "tags": "",
          "categories": "",
          "hits": null,
          "commentsNum": 3,
          "allowComment": true,
          "allowPing": false,
          "allowFeed": false,
          "status": 1,
          "gtmcreated": "2017-09-22T05:24:30.000Z",
          "author": "bootdo",
          "gtmModified": "2017-09-22T05:24:30.000Z"
        },
        "sort": [
          9.775897,
          3
        ]
      },
      {
        "_index": "content",
        "_type": "about_test",
        "_id": "7",
        "_score": 6.721332,
        "_source": {
          "id": "7",
          "cid": 112,
          "title": " SpringBoot 在启动时运行代码",
          "slug": 0,
          "created": null,
          "modified": null,
          "type": "article",
          "content": "热热",
          "tags": "",
          "categories": "",
          "hits": null,
          "commentsNum": 1,
          "allowComment": true,
          "allowPing": false,
          "allowFeed": true,
          "status": 1,
          "gtmcreated": "2017-09-26T07:18:15.000Z",
          "author": "bootdo",
          "gtmModified": "2017-09-26T07:18:15.000Z"
        },
        "sort": [
          6.721332,
          1
        ]
      },
      {
        "_index": "content",
        "_type": "about_test",
        "_id": "1",
        "_score": 5.3770657,
        "_source": {
          "id": "1",
          "cid": 75,
          "title": "基于 springboot 和 Mybatis 的后台管理系统 BootDo",
          "slug": 0,
          "created": null,
          "modified": null,
          "type": "article",
          "content": "基于 springboot 和 Mybatis 的后台管理系统 BootDo",
          "tags": "",
          "categories": "",
          "hits": null,
          "commentsNum": 2,
          "allowComment": false,
          "allowPing": false,
          "allowFeed": true,
          "status": 1,
          "gtmcreated": "2017-09-22T06:44:44.000Z",
          "author": "bootdo",
          "gtmModified": "2017-09-22T06:44:44.000Z"
        },
        "sort": [
          5.3770657,
          2
        ]
      }
    ]
  }
}

The hits are sorted by _score (descending) as the primary key and by commentsNum (descending) as the secondary key.
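The two-level ordering can be reproduced outside Elasticsearch with a plain comparator. The sketch below is only an illustration: the Hit class and SortOrderSketch are hypothetical names, and the three hits reuse the _id, _score and commentsNum values from the response above.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;

// Hypothetical re-creation of the sort order used in the search response.
class SortOrderSketch {

    // Minimal stand-in for a search hit; only the two sort keys are modeled.
    static final class Hit {
        final String id;
        final double score;
        final int commentsNum;

        Hit(String id, double score, int commentsNum) {
            this.id = id;
            this.score = score;
            this.commentsNum = commentsNum;
        }
    }

    // _score descending first; commentsNum descending only breaks ties between equal scores.
    static final Comparator<Hit> BY_SCORE_THEN_COMMENTS =
            Comparator.comparingDouble((Hit h) -> h.score).reversed()
                      .thenComparing(Comparator.comparingInt((Hit h) -> h.commentsNum).reversed());

    // Returns the hit ids in sorted order without modifying the input list.
    static List<String> sortedIds(List<Hit> hits) {
        List<Hit> copy = new ArrayList<>(hits);
        copy.sort(BY_SCORE_THEN_COMMENTS);
        List<String> ids = new ArrayList<>();
        for (Hit h : copy) {
            ids.add(h.id);
        }
        return ids;
    }

    public static void main(String[] args) {
        List<Hit> hits = Arrays.asList(
                new Hit("1", 5.3770657, 2),
                new Hit("7", 6.721332, 1),
                new Hit("2", 9.775897, 3));
        System.out.println(sortedIds(hits)); // prints [2, 7, 1]
    }
}
```

Note that document 1 has more comments than document 7 but still ranks below it, because commentsNum is only consulted when two scores are equal.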

The implementation is as follows:

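// Note: addHighlightedField and FunctionScoreQueryBuilder.add belong to the Elasticsearch 2.x Java API,
// so this fragment appears to target a 2.x client.
// It assumes client (a connected Client), indexId (the index name) and
// log (a logger with SLF4J-style placeholders) are defined elsewhere.
import org.elasticsearch.action.search.SearchRequestBuilder;
import org.elasticsearch.action.search.SearchResponse;
import org.elasticsearch.index.query.BoolQueryBuilder;
import org.elasticsearch.index.query.QueryBuilders;
import org.elasticsearch.index.query.QueryStringQueryBuilder;
import org.elasticsearch.index.query.functionscore.FunctionScoreQueryBuilder;
import org.elasticsearch.index.query.functionscore.ScoreFunctionBuilders;
import org.elasticsearch.search.sort.SortOrder;

SearchRequestBuilder searchRequestBuilder = client.prepareSearch(indexId);
String key = "springboot";

// Initial query_string query; it is replaced by the function_score query set further down
QueryStringQueryBuilder queryBuilder = new QueryStringQueryBuilder(key);
queryBuilder.field("title").field("content");
searchRequestBuilder.setQuery(queryBuilder);

// Pagination
searchRequestBuilder.setFrom(0).setSize(3000);
// Return an explanation of how each hit's score was computed
searchRequestBuilder.setExplain(true);
// Sort by score first, then by commentsNum, both descending
searchRequestBuilder.addSort("_score", SortOrder.DESC);
searchRequestBuilder.addSort("commentsNum", SortOrder.DESC);

BoolQueryBuilder boolQuery = QueryBuilders.boolQuery();
boolQuery.should(QueryBuilders.matchQuery("title", key));
boolQuery.should(QueryBuilders.matchQuery("content", key));
/* QueryBuilder qb = QueryBuilders.multiMatchQuery(
    "kimchy elasticsearch", // Text you are looking for
    "user", "message"       // Fields you query on
);*/

// Highlighting
searchRequestBuilder.addHighlightedField("title");
searchRequestBuilder.addHighlightedField("content");
searchRequestBuilder.setHighlighterPreTags("<span color:=''>");
// The closing tag was empty in the original listing; </span> is assumed here to match the opening tag
searchRequestBuilder.setHighlighterPostTags("</span>");

// Boost documents whose title or content matches the keyword
FunctionScoreQueryBuilder functionScoreQueryBuilder = QueryBuilders.functionScoreQuery(boolQuery);
functionScoreQueryBuilder.add(QueryBuilders.matchQuery("title", key),
        ScoreFunctionBuilders.weightFactorFunction(1000));
functionScoreQueryBuilder.add(QueryBuilders.matchQuery("content", key),
        ScoreFunctionBuilders.weightFactorFunction(500));
searchRequestBuilder.setQuery(functionScoreQueryBuilder);

// The printed request can be run as a query in Elasticsearch head or Kibana
log.info("\n{}", searchRequestBuilder);

SearchResponse searchResponse = searchRequestBuilder.execute().actionGet();
long totalHits = searchResponse.getHits().totalHits();
long length = searchResponse.getHits().getHits().length;
log.info("Found [{}] hits in total, processed [{}]", totalHits, length);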
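To get a feel for what the two weights do to the final score, here is a small stand-alone Java sketch. It assumes the function_score query is left at its documented defaults, where the weights of all matching functions are multiplied together (score_mode) and the product is then multiplied with the query score (boost_mode). The class FunctionScoreSketch and its method are hypothetical and model only this arithmetic, not the real scoring code.

```java
// Hypothetical model of function_score with weight functions,
// assuming the default score_mode and boost_mode (both "multiply").
class FunctionScoreSketch {

    static final double TITLE_WEIGHT = 1000;  // weight used for the title filter above
    static final double CONTENT_WEIGHT = 500; // weight used for the content filter above

    // Multiplies the query score by the weight of every function whose filter matched.
    static double finalScore(double queryScore, boolean titleMatches, boolean contentMatches) {
        double functionScore = 1.0; // neutral factor when no filter matches
        if (titleMatches) {
            functionScore *= TITLE_WEIGHT;
        }
        if (contentMatches) {
            functionScore *= CONTENT_WEIGHT;
        }
        return queryScore * functionScore;
    }

    public static void main(String[] args) {
        System.out.println(finalScore(0.5, true, false));  // title only
        System.out.println(finalScore(0.5, true, true));   // title and content
        System.out.println(finalScore(0.5, false, false)); // neither filter matches
    }
}
```

Under these assumptions a document matching both filters is boosted by the product of the two weights, so the gap between single-field and two-field matches grows very quickly. If that is too aggressive, a different score_mode such as sum may be worth trying.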

My own abilities are limited. How to use log analysis to change the search results will be explored further in later posts.
