BeeGFS File Read Analysis

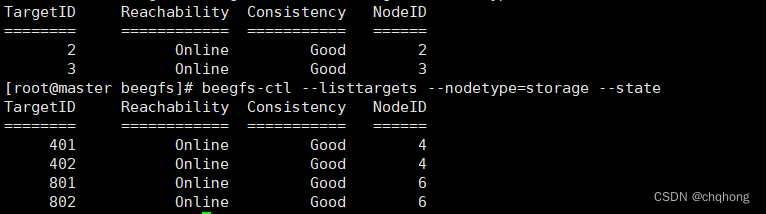

Cluster deployment roles

| Node | IP | Roles | Software versions |
|---|---|---|---|
| master | 192.168.10.100 | mgmtd, meta, storage, client | OS: CentOS 7.6; BeeGFS 7.2.4 |
| node1 | 192.168.10.101 | meta, storage, client | OS: CentOS 7.6; BeeGFS 7.2.4 |
| node2 | 192.168.10.102 | client | OS: CentOS 7.6; BeeGFS 7.2.4 |

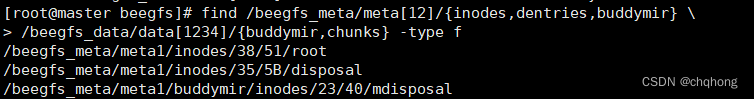

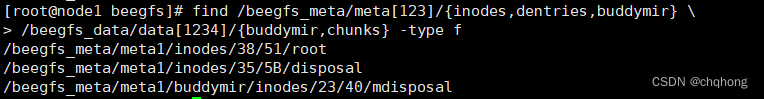

Metadata directory with no files

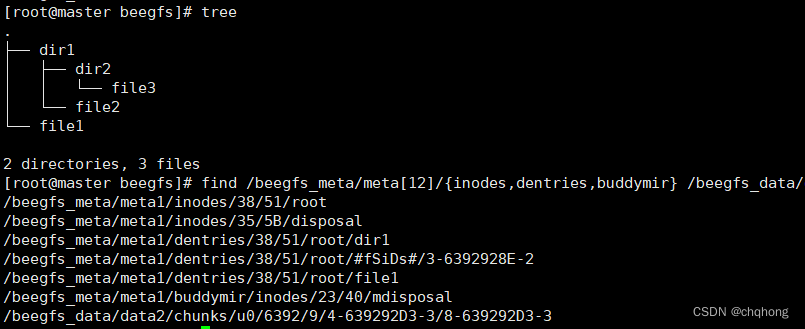

Metadata directory after creating files

Metadata directory structure

Meta source code analysis

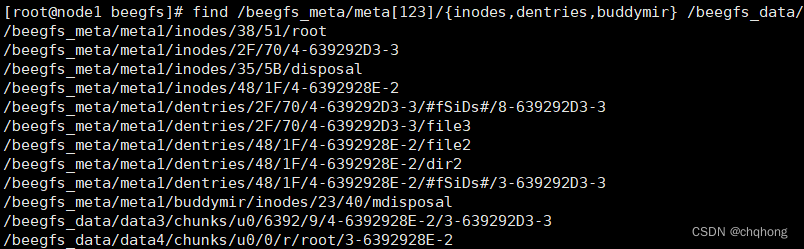

First, I worked backwards from the directory where file1's metadata is stored, **/beegfs_meta/meta1/dentries/38/51/root/#fSiDs#/3-6392928E-2**, to find out why root ends up under 38/51.

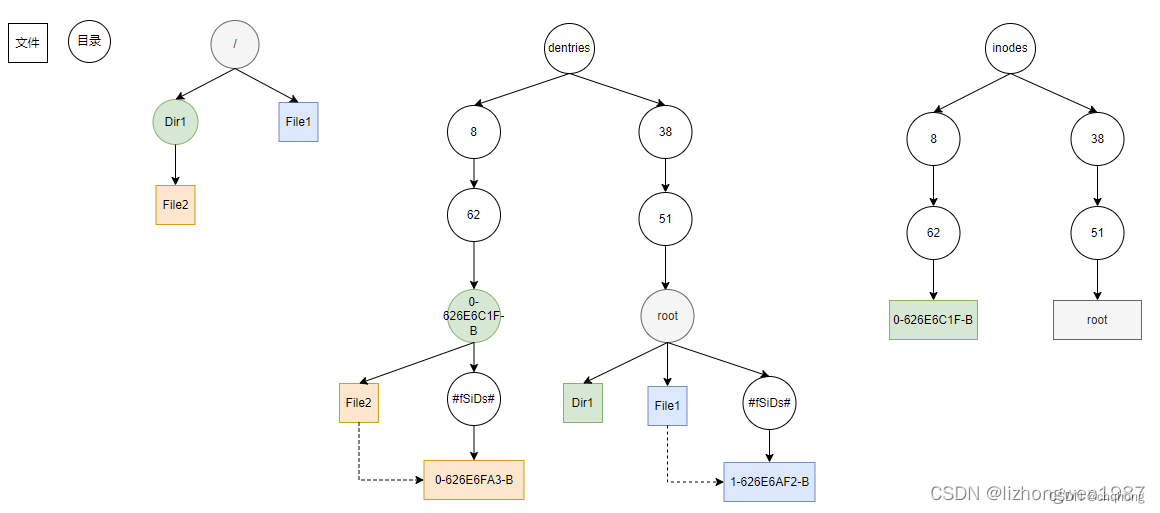

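The dentries and inodes directories each contain 128 × 128 hash directories with hexadecimal names: 128 level-1 directories, each holding 128 level-2 directories, for 16384 directories in total.

According to the reference material, the name of the FileID file 3-6392928E-2 has the structure **`<counterPart>-<timestampPart>-<localNodeID>`**.

counterPart is a counter (each new inode increments idCounter by 1), timestampPart is a timestamp, and, judging from the results, localNodeID probably identifies the metadata node on which the entry is stored.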
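To make the three-part structure concrete, here is a minimal sketch that splits an ID such as 3-6392928E-2 into its fields. The struct `EntryIdParts` and the function `parseEntryId` are hypothetical names, not taken from the BeeGFS source, and the sketch assumes all three fields are hexadecimal (the timestamp field clearly is; the other two are a guess).

```cpp
#include <cstddef>
#include <cstdint>
#include <sstream>
#include <stdexcept>
#include <string>

// Hypothetical helper type for illustration; not part of the BeeGFS source.
struct EntryIdParts
{
   uint64_t counterPart;   // counter, incremented for each new inode
   uint64_t timestampPart; // presumably seconds since the Unix epoch
   uint64_t localNodeID;   // presumably the numeric ID of a metadata node
};

// Splits "<counterPart>-<timestampPart>-<localNodeID>" on '-' and parses each
// field as hexadecimal (assumption). Returns false for anything else, e.g. "root".
bool parseEntryId(const std::string& entryID, EntryIdParts& out)
{
   std::istringstream in(entryID);
   std::string field;
   uint64_t values[3] = {0, 0, 0};
   int count = 0;

   while (std::getline(in, field, '-'))
   {
      if (count == 3 || field.empty())
         return false;

      try
      {
         std::size_t used = 0;
         values[count] = std::stoull(field, &used, 16);
         if (used != field.size())
            return false; // trailing characters that are not hex digits
      }
      catch (const std::exception&)
      {
         return false; // not a number at all, or out of range
      }

      ++count;
   }

   if (count != 3)
      return false;

   out.counterPart = values[0];
   out.timestampPart = values[1];
   out.localNodeID = values[2];
   return true;
}
```

For 3-6392928E-2 this yields counter 3, timestamp 0x6392928E (1670550158 seconds, which falls in December 2022 if it is read as a Unix timestamp) and node ID 2.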

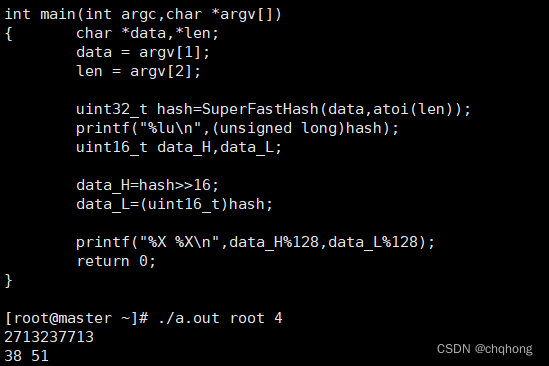

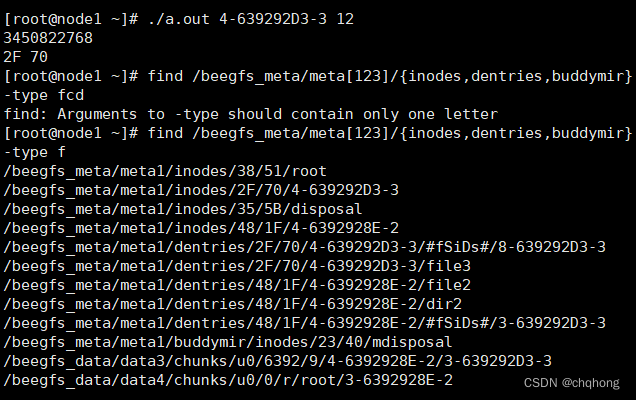

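The documentation mentions a 32-bit hash of the FileID: the upper 16 bits modulo 128 give the level-1 directory, and the lower 16 bits modulo 128 give the level-2 directory. The hash algorithm is **Paul Hsieh's hash**. It does not say which string is hashed, however, so I ran the Paul Hsieh algorithm on several candidate inputs to find out.

The results show that the hash is computed over the name of the directory entry itself, which is the literal string root for the root directory. That is why root always ends up under /38/51. The directory that holds file3, dentries/2F/70/4-639292D3-3/, is derived the same way from its own name. New entries under inodes and dentries appear only when a directory is created; the metadata of a newly created file is stored in the metadata directory of its parent, here dentries/2F/70/4-639292D3-3/.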
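The split of the 32-bit hash into two directory levels can be sketched as follows. The hash function itself is deliberately not reproduced here; the sketch takes an already computed 32-bit value as input. `hashToDirs` and `toHex` are hypothetical names, and the unpadded uppercase hex formatting is an assumption based on directory names such as 38 and 2F seen on disk.

```cpp
#include <cstdint>
#include <cstdio>
#include <string>

// Formats a 16-bit value as uppercase hex without zero padding.
// Assumption: this matches on-disk directory names such as "38" and "2F".
static std::string toHex(uint16_t value)
{
   char buf[8];
   std::snprintf(buf, sizeof(buf), "%X", static_cast<unsigned>(value));
   return std::string(buf);
}

// Maps a 32-bit hash value to "level1/level2":
//   level1 = (upper 16 bits) % numLevel1
//   level2 = (lower 16 bits) % numLevel2
// Both counts default to 128, the number of directories observed per level.
std::string hashToDirs(uint32_t hash, unsigned numLevel1 = 128, unsigned numLevel2 = 128)
{
   uint16_t high = static_cast<uint16_t>(hash >> 16);
   uint16_t low = static_cast<uint16_t>(hash & 0xFFFFu);

   return toHex(static_cast<uint16_t>(high % numLevel1)) + "/" +
      toHex(static_cast<uint16_t>(low % numLevel2));
}
```

Any hash whose upper half is congruent to 0x38 and whose lower half is congruent to 0x51 modulo 128 lands in 38/51; the made-up value 0x00380051 is one such input.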

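Based on these results, here is my guess at how a file is read, using file2 as the example. The client issues the read request. On receiving it, the metadata server uses the file path to find dentries/38/51/root/dir1 in root's metadata directory. The dir1 entry presumably stores the metadata node that owns dir1 together with its FileID, that is, node1 and 4-6392928E-2. The localNodeID of 2 fits the earlier guess: it is the ID of the metadata node master. Following that information to node1 and hashing the FileID leads to inodes/48/1F/4-6392928E-2 and dentries/48/1F/4-6392928E-2/file2.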
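The on-disk layout that this guess relies on can be written down as two small path builders. This is only a sketch of the layout observed in the listings above; the function names are hypothetical, and the hash directories are passed in as a ready-made string rather than computed.

```cpp
#include <string>

// By-name dentry: <dentriesRoot>/<level1>/<level2>/<parentDirID>/<name>
// hashDirs is the "<level1>/<level2>" pair derived from parentDirID.
std::string dentryByNamePath(const std::string& dentriesRoot, const std::string& hashDirs,
   const std::string& parentDirID, const std::string& name)
{
   return dentriesRoot + "/" + hashDirs + "/" + parentDirID + "/" + name;
}

// By-ID dentry: <dentriesRoot>/<level1>/<level2>/<parentDirID>/#fSiDs#/<entryID>
std::string dentryByIdPath(const std::string& dentriesRoot, const std::string& hashDirs,
   const std::string& parentDirID, const std::string& entryID)
{
   return dentriesRoot + "/" + hashDirs + "/" + parentDirID + "/#fSiDs#/" + entryID;
}
```

With dentriesRoot set to /beegfs_meta/meta1/dentries, hashDirs 38/51 and parentDirID root, the second function reproduces the path of file1's FileID file quoted at the start of this analysis.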

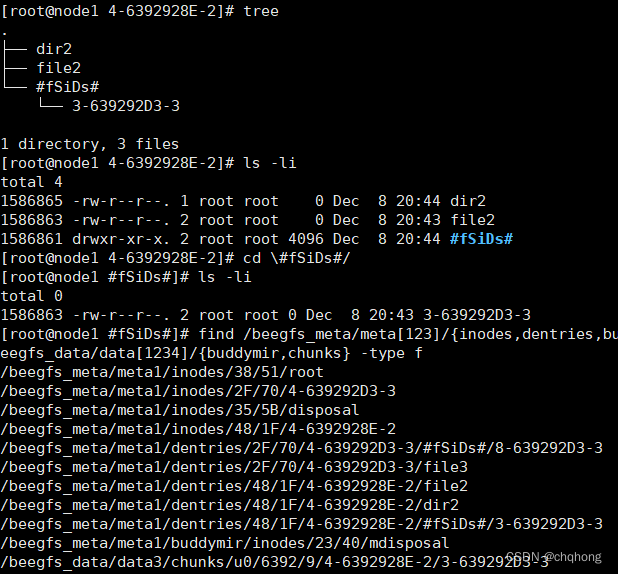

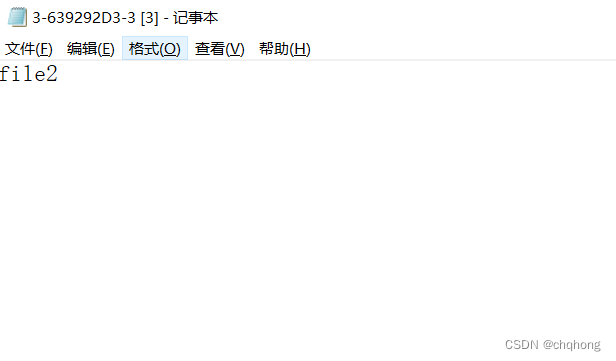

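The file2 entry in this directory and 3-639292D3-3 under the **#fSiDs#** directory have the same inode number, so they are hard links to the same file. At this point we have the FileID file that belongs to file2. My guess is that this file holds the storage information, that is, which storage node and which directory hold the data. The storage directory of data3 also shows that the FileID and the storage path are related: opening the file on the storage node that corresponds to the FileID shows the contents of file2.

Everything above is guesswork about the read path based on observed results; the next step is to verify it against the source code. Since both reads and writes depend on the hash, the hash is the place to start.

The following functions can be found in **beeGFS\v7-master\common\source\common\toolkit\StorageTk.h**.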

```cpp
/**
 * @return path/hashDir1/hashDir2/fileName
 */
static std::string getHashPath(const std::string path, const std::string entryID,
   size_t numHashesLevel1, size_t numHashesLevel2)
{
   return path + "/" + getBaseHashPath(entryID, numHashesLevel1, numHashesLevel2);
}

/**
 * @return (hashDir1, hashDir2)
 */
static std::pair<unsigned, unsigned> getHash(const std::string& entryID,
   size_t numHashesLevel1, size_t numHashesLevel2)
{
   uint16_t hashLevel1;
   uint16_t hashLevel2;

   getHashes(entryID, numHashesLevel1, numHashesLevel2, hashLevel1, hashLevel2);

   return {hashLevel1, hashLevel2};
}

/**
 * @return hashDir1/hashDir2/entryID
 */
static std::string getBaseHashPath(const std::string entryID,
   size_t numHashesLevel1, size_t numHashesLevel2)
{
   uint16_t hashLevel1;
   uint16_t hashLevel2;

   getHashes(entryID, numHashesLevel1, numHashesLevel2, hashLevel1, hashLevel2);

   return StringTk::uint16ToHexStr(hashLevel1) + "/" + StringTk::uint16ToHexStr(hashLevel2) +
      "/" + entryID;
}
```

In **beeGFS\v7-master\common\source\common\toolkit\MetaStorageTk.h**, the getMetaDirEntryPath function calls getHashPath.

```cpp
/**
 * Get path to a contents directory (i.e. the dir containing the dentries by name).
 *
 * @param dirEntryID entryID of the directory for which the caller wants the contents path
 */
static std::string getMetaDirEntryPath(const std::string dentriesPath,
   const std::string dirEntryID)
{
   return StorageTk::getHashPath(dentriesPath, dirEntryID,
      META_DENTRIES_LEVEL1_SUBDIR_NUM, META_DENTRIES_LEVEL2_SUBDIR_NUM);
}
```

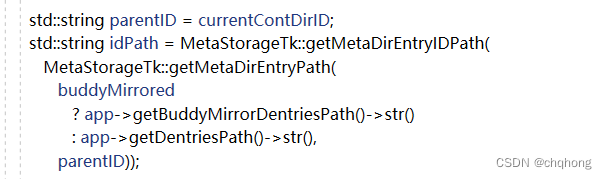

In **beeGFS\v7-master\meta\source\net\message\fsck\RetrieveFsIDsMsgEx.cpp**, RetrieveFsIDsMsgEx::processIncoming calls getMetaDirEntryPath.

```cpp
bool RetrieveFsIDsMsgEx::processIncoming(ResponseContext& ctx)
{
   LogContext log("Incoming RetrieveFsIDsMsg");
   log.log(Log_NOTICE, "Registration and management info download complete.");

   App* app = Program::getApp();
   MetaStore* metaStore = app->getMetaStore();

   unsigned hashDirNum = getHashDirNum();
   bool buddyMirrored = getBuddyMirrored();
   std::string currentContDirID = getCurrentContDirID();
   unsigned maxOutIDs = getMaxOutIDs();
   int64_t lastContDirOffset = getLastContDirOffset();
   int64_t lastHashDirOffset = getLastHashDirOffset();
   int64_t newHashDirOffset;

   FsckFsIDList fsIDsOutgoing;

   unsigned readOutIDs = 0;

   NumNodeID localNodeNumID = buddyMirrored
      ? NumNodeID(Program::getApp()->getMetaBuddyGroupMapper()->getLocalGroupID())
      : Program::getApp()->getLocalNode().getNumID();

   MirrorBuddyGroupMapper* bgm = Program::getApp()->getMetaBuddyGroupMapper();

   if (buddyMirrored &&
      (bgm->getLocalBuddyGroup().secondTargetID == app->getLocalNode().getNumID().val()
         || bgm->getLocalGroupID() == 0))
   {
      ctx.sendResponse(
         RetrieveFsIDsRespMsg(&fsIDsOutgoing, currentContDirID, lastHashDirOffset,
            lastContDirOffset));
      return true;
   }

   bool hasNext;

   if ( currentContDirID.empty() )
   {
      hasNext = StorageTkEx::getNextContDirID(hashDirNum, buddyMirrored, lastHashDirOffset,
         &currentContDirID, &newHashDirOffset);
      if ( hasNext )
         lastHashDirOffset = newHashDirOffset;
   }
   else
      hasNext = true;

   while ( hasNext )
   {
      std::string parentID = currentContDirID;

      std::string idPath = MetaStorageTk::getMetaDirEntryIDPath(
         MetaStorageTk::getMetaDirEntryPath(
            buddyMirrored
               ? app->getBuddyMirrorDentriesPath()->str()
               : app->getDentriesPath()->str(),
            parentID));

      bool parentDirInodeIsTemp = false;
      StringList outNames;
      int64_t outNewServerOffset;
      ListIncExOutArgs outArgs(&outNames, NULL, NULL, NULL, &outNewServerOffset);

      FhgfsOpsErr listRes;

      unsigned remainingOutIDs = maxOutIDs - readOutIDs;

      DirInode* parentDirInode = metaStore->referenceDir(parentID, buddyMirrored, true);

      // it could be, that parentDirInode does not exist
      // in fsck we create a temporary inode for this case, so that we can modify the dentry
      // hopefully, the inode itself will get fixed later
      if ( unlikely(!parentDirInode) )
      {
         log.log(
            Log_NOTICE,
            "Could not reference directory. EntryID: " + parentID
               + " => using temporary directory inode ");

         // create temporary inode
         int mode = S_IFDIR | S_IRWXU;
         UInt16Vector stripeTargets;
         Raid0Pattern stripePattern(0, stripeTargets, 0);
         parentDirInode = new DirInode(parentID, mode, 0, 0,
            Program::getApp()->getLocalNode().getNumID(), stripePattern, buddyMirrored);

         parentDirInodeIsTemp = true;
      }

      listRes = parentDirInode->listIDFilesIncremental(lastContDirOffset, 0, remainingOutIDs,
         outArgs);

      lastContDirOffset = outNewServerOffset;

      if ( parentDirInodeIsTemp )
         SAFE_DELETE(parentDirInode);
      else
         metaStore->releaseDir(parentID);

      if (listRes != FhgfsOpsErr_SUCCESS)
      {
         log.logErr("Could not read dentry-by-ID files; parentID: " + parentID);
      }

      // process entries
      readOutIDs += outNames.size();

      for ( StringListIter iter = outNames.begin(); iter != outNames.end(); iter++ )
      {
         std::string id = *iter;
         std::string filename = idPath + "/" + id;

         // stat the file to get device and inode information
         struct stat statBuf;

         int statRes = stat(filename.c_str(), &statBuf);

         int saveDevice;
         uint64_t saveInode;
         if ( likely(!statRes) )
         {
            saveDevice = statBuf.st_dev;
            saveInode = statBuf.st_ino;
         }
         else
         {
            saveDevice = 0;
            saveInode = 0;
            log.log(Log_CRITICAL,
               "Could not stat ID file; ID: " + id + ";filename: " + filename);
         }

         FsckFsID fsID(id, parentID, localNodeNumID, saveDevice, saveInode, buddyMirrored);
         fsIDsOutgoing.push_back(fsID);
      }

      if ( readOutIDs < maxOutIDs )
      {
         // directory is at the end => proceed with next
         hasNext = StorageTkEx::getNextContDirID(hashDirNum, buddyMirrored, lastHashDirOffset,
            &currentContDirID, &newHashDirOffset);

         if ( hasNext )
         {
            lastHashDirOffset = newHashDirOffset;
            lastContDirOffset = 0;
         }
      }
      else
      {
         // there are more to come, but we need to exit the loop now, because maxCount is reached
         hasNext = false;
      }
   }

   ctx.sendResponse(
      RetrieveFsIDsRespMsg(&fsIDsOutgoing, currentContDirID, lastHashDirOffset,
         lastContDirOffset) );

   return true;
}
```

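Finally, RetrieveFsIDsMsgEx turns up in the metadata server's network message handling, in meta/source/net/message/NetMessageFactory.cpp.

Judging from the function's inputs, the metadata server does seem to look entries up step by step until it reaches the corresponding FileID. Note that this handler sits under the fsck message directory, so whether the normal read path goes through the same lookup still has to be confirmed. Using the same approach I also found the source code that looks up inodes. However, I have not yet managed to get my build of the meta service running, so none of this has been verified further; much of it is guesswork and my understanding is still partial. The plan is to get the build working, verify the guesses above, and then analyze what the FileID file actually stores.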

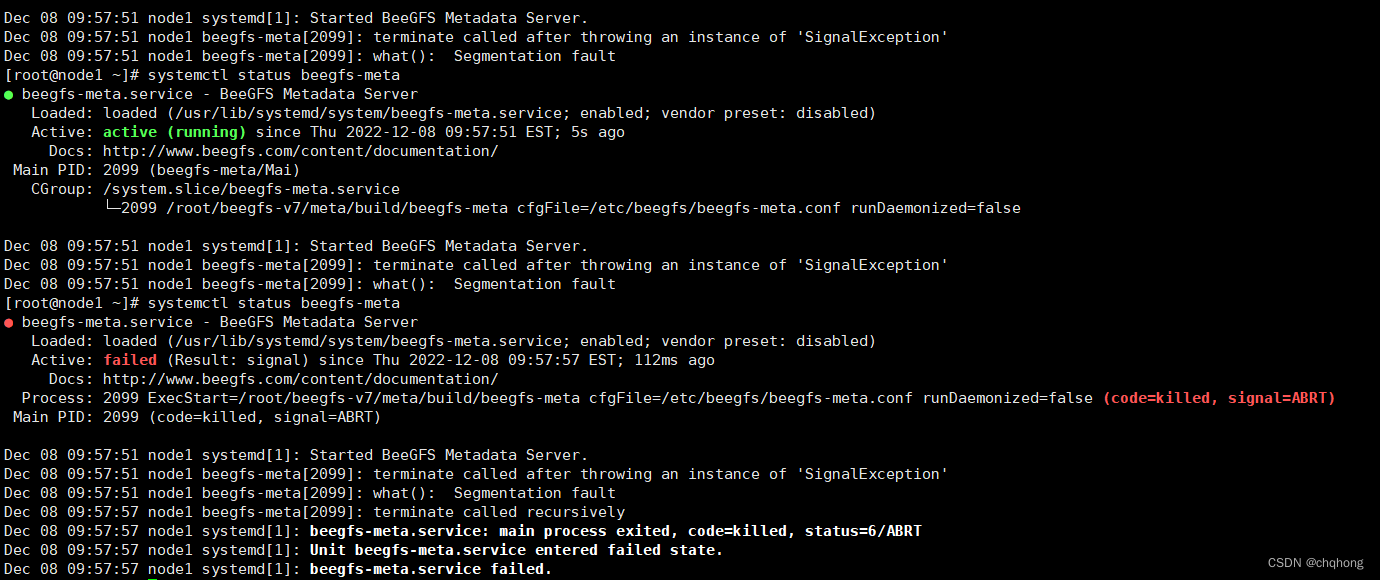

Debugging problems

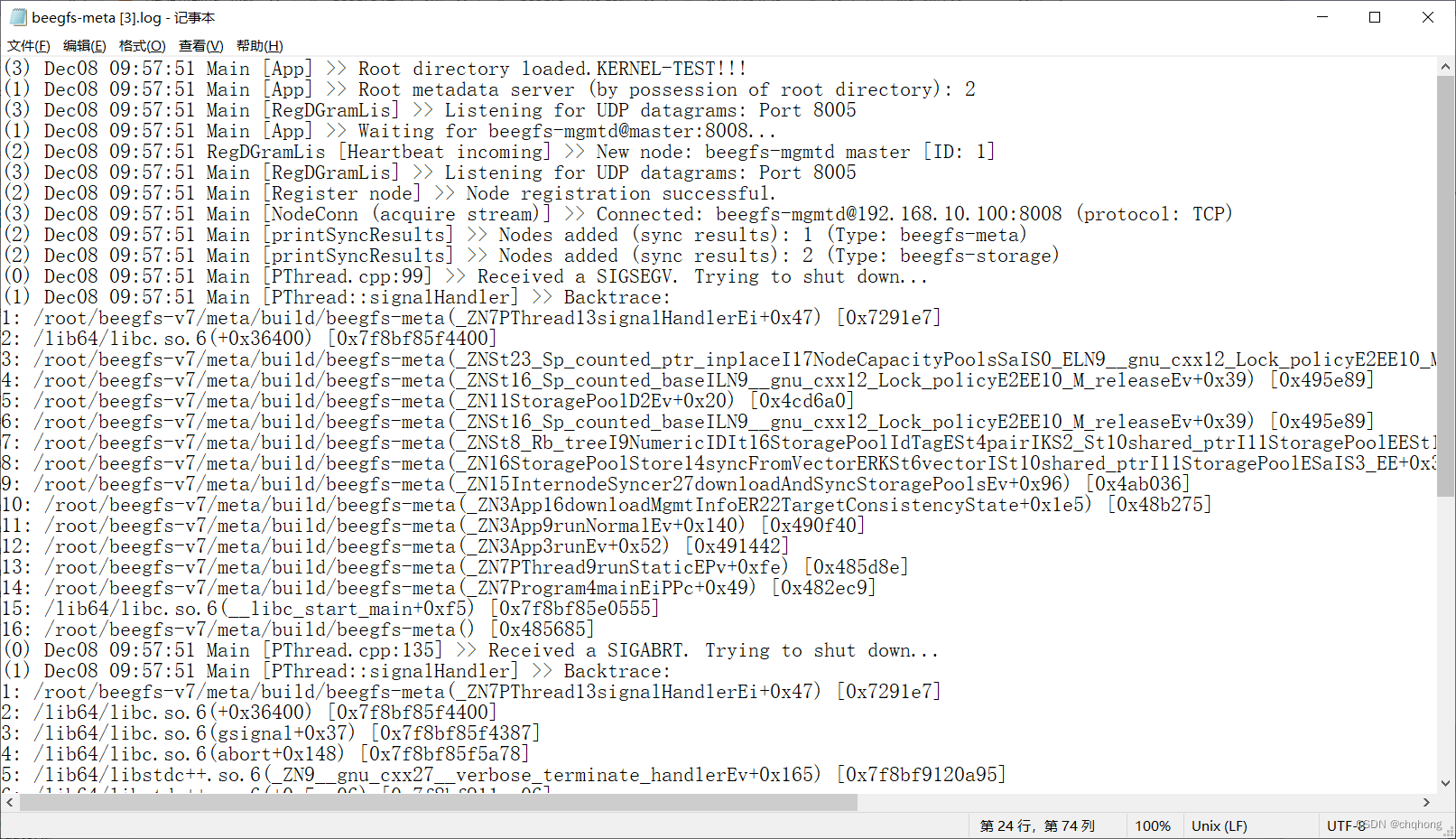

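After compiling, I changed the path the service is started from. The **KERNEL-TEST!!!** on the first line of the log shows that the modified build is the one running, but the service fails with an error after about 5 seconds. If the path or the build were wrong, I would expect it not to start at all. I was stuck here for a long time, and a single-node test gave the same result.

```
$ vi /etc/init.d/beegfs-meta
#APP_BIN=/opt/beegfs/sbin/${SERVICE_NAME}
APP_BIN=/root/beegfs-v7/beegfs_meta/build/${SERVICE_NAME}

$ vi /usr/lib/systemd/system/beegfs-meta.service
#ExecStart=/opt/beegfs/sbin/beegfs-meta cfgFile=/etc/beegfs/beegfs-meta.conf runDaemonized=false
ExecStart=/root/beegfs-v7/beegfs-meta cfgFile=/etc/beegfs/beegfs-meta.conf runDaemonized=false
```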
