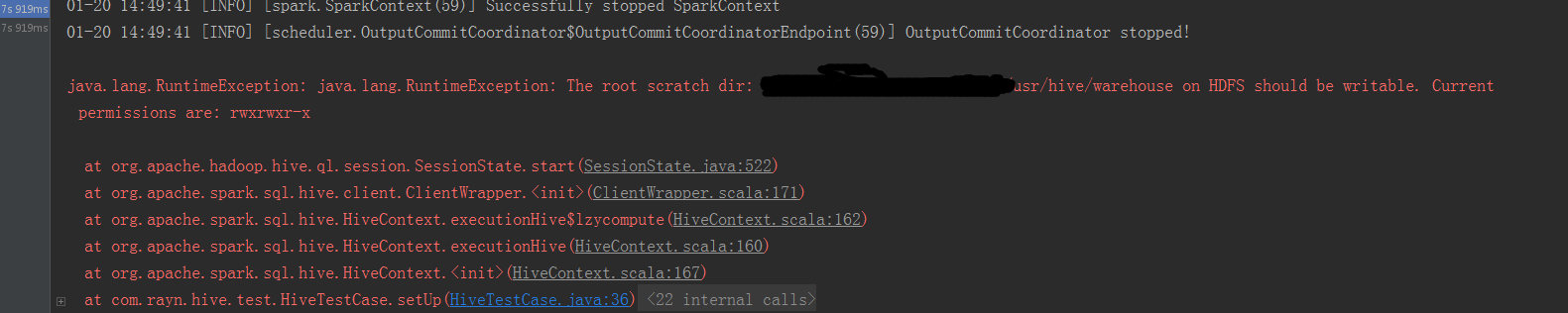

I have recently been using Spark together with Hive to run queries. Running a demo produced the following error:

01-20 14:49:41 [INFO] [storage.BlockManagerMaster(59)] BlockManagerMaster stopped

01-20 14:49:41 [INFO] [spark.SparkContext(59)] Successfully stopped SparkContext

01-20 14:49:41 [INFO] [scheduler.OutputCommitCoordinator$OutputCommitCoordinatorEndpoint(59)] OutputCommitCoordinator stopped!

java.lang.RuntimeException: java.lang.RuntimeException: The root scratch dir: hdfs://testserver:9000/usr/hive/warehouse on HDFS should be writable. Current permissions are: rwxrwxr-x

at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:522)

at org.apache.spark.sql.hive.client.ClientWrapper.<init>(ClientWrapper.scala:171)

at org.apache.spark.sql.hive.HiveContext.executionHive$lzycompute(HiveContext.scala:162)

at org.apache.spark.sql.hive.HiveContext.executionHive(HiveContext.scala:160)

at org.apache.spark.sql.hive.HiveContext.<init>(HiveContext.scala:167)

at com.rayn.hive.test.HiveTestCase.setUp(HiveTestCase.java:36)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:497)

at org.junit.runners.model.FrameworkMethod$1.runReflectiveCall(FrameworkMethod.java:47)

at org.junit.internal.runners.model.ReflectiveCallable.run(ReflectiveCallable.java:12)

at org.junit.runners.model.FrameworkMethod.invokeExplosively(FrameworkMethod.java:44)

at org.junit.internal.runners.statements.RunBefores.evaluate(RunBefores.java:24)

at org.junit.internal.runners.statements.RunAfters.evaluate(RunAfters.java:27)

at org.junit.runners.ParentRunner.runLeaf(ParentRunner.java:271)

at org.junit.runners.BlockJUnit4ClassRunner.runChild(BlockJUnit4ClassRunner.java:70)

at org.junit.runners.BlockJUnit4ClassRunner.runChild(BlockJUnit4ClassRunner.java:50)

at org.junit.runners.ParentRunner$3.run(ParentRunner.java:238)

at org.junit.runners.ParentRunner$1.schedule(ParentRunner.java:63)

at org.junit.runners.ParentRunner.runChildren(ParentRunner.java:236)

at org.junit.runners.ParentRunner.access$000(ParentRunner.java:53)

at org.junit.runners.ParentRunner$2.evaluate(ParentRunner.java:229)

at org.junit.runners.ParentRunner.run(ParentRunner.java:309)

at org.junit.runner.JUnitCore.run(JUnitCore.java:160)

at com.intellij.junit4.JUnit4IdeaTestRunner.startRunnerWithArgs(JUnit4IdeaTestRunner.java:117)

at com.intellij.rt.execution.junit.JUnitStarter.prepareStreamsAndStart(JUnitStarter.java:234)

at com.intellij.rt.execution.junit.JUnitStarter.main(JUnitStarter.java:74)

After reading and debugging the source, I traced the problem to the following method of the SessionState.java class in hive-exec 1.2.1:

    private Path createRootHDFSDir(HiveConf conf) throws IOException {
      Path rootHDFSDirPath = new Path(HiveConf.getVar(conf, HiveConf.ConfVars.SCRATCHDIR));
      FsPermission writableHDFSDirPermission = new FsPermission((short)00733);
      FileSystem fs = rootHDFSDirPath.getFileSystem(conf);
      if (!fs.exists(rootHDFSDirPath)) {
        Utilities.createDirsWithPermission(conf, rootHDFSDirPath, writableHDFSDirPermission, true);
      }
      FsPermission currentHDFSDirPermission = fs.getFileStatus(rootHDFSDirPath).getPermission();
      if (rootHDFSDirPath != null && rootHDFSDirPath.toUri() != null) {
        String schema = rootHDFSDirPath.toUri().getScheme();
        LOG.debug(
            "HDFS root scratch dir: " + rootHDFSDirPath + " with schema " + schema + ", permission: " +
                currentHDFSDirPermission);
      } else {
        LOG.debug(
            "HDFS root scratch dir: " + rootHDFSDirPath + ", permission: " + currentHDFSDirPermission);
      }
      // If the root HDFS scratch dir already exists, make sure it is writeable.
      if (!((currentHDFSDirPermission.toShort() & writableHDFSDirPermission
          .toShort()) == writableHDFSDirPermission.toShort())) {
        throw new RuntimeException("The root scratch dir: " + rootHDFSDirPath
            + " on HDFS should be writable. Current permissions are: " + currentHDFSDirPermission);
      }
      return rootHDFSDirPath;
    }

FsPermission writableHDFSDirPermission = new FsPermission((short)00733); initializes the permission mask (octal 0733, i.e. rwx-wx-wx) that Hive uses to verify that its directory on HDFS is writable.
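To see why the reported permission rwxrwxr-x fails this check, here is a minimal standalone sketch of the same bit test. It uses plain Java integers instead of Hadoop's FsPermission, and the class and method names (ScratchDirPermissionCheck, isWritable, missingBits) are hypothetical, introduced only for illustration.

```java
public class ScratchDirPermissionCheck {
    // Mask used in SessionState.createRootHDFSDir: octal 0733, i.e. rwx-wx-wx.
    static final int REQUIRED = 0733;

    // Same test as the Hive source: every bit set in REQUIRED must also be set in current.
    static boolean isWritable(int current) {
        return (current & REQUIRED) == REQUIRED;
    }

    // Bits that REQUIRED demands but current does not have (hypothetical helper).
    static int missingBits(int current) {
        return REQUIRED & ~current;
    }

    public static void main(String[] args) {
        int current = 0775; // rwxrwxr-x, the permission reported in the exception
        System.out.println("writable: " + isWritable(current));
        System.out.println("missing bits (octal): " + Integer.toOctalString(missingBits(current)));
    }
}
```

For 0775 the only missing bit is octal 2, the write bit for "others", so the directory is rejected even though its owner and group can write to it.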

The exception is thrown by this code:

    /* If the root HDFS scratch dir already exists, make sure it is writeable. */
    if (!((currentHDFSDirPermission.toShort() & writableHDFSDirPermission
        .toShort()) == writableHDFSDirPermission.toShort())) {
      throw new RuntimeException("The root scratch dir: " + rootHDFSDirPath
          + " on HDFS should be writable. Current permissions are: " + currentHDFSDirPermission);
    }

Based on this, I wrote a test method. The test code and its output are as follows:

    /**
     * Permission test: logs every permission value that passes the 0733 (decimal 475) mask.
     *
     * @throws Exception
     */
    @Test
    public void testPermission() throws Exception
    {
        List<String> s475 = new ArrayList<String>();
        FsPermission writableHDFSDirPermission = new FsPermission((short) 00733);
        for (int key = 0; key < 512; key++)
        {
            writableHDFSDirPermission.fromShort((short) key);
            String permissionStr = writableHDFSDirPermission.toString();
            //log.info(key + " -- " + permissionStr);
            if ((key & 475) == 475)
            {
                log.info("key = {}, permission = {}", key, writableHDFSDirPermission.toString());
                s475.add(String.valueOf(key));
            }
            if (StringUtils.equalsIgnoreCase("rwxrwxrwx", permissionStr))
            {
                break;
            }
        }
    }

01-20 14:57:12 [INFO] [test.OtherTestCase(59)] key = 475, permission = rwx-wx-wx

01-20 14:57:12 [INFO] [test.OtherTestCase(59)] key = 479, permission = rwx-wxrwx

01-20 14:57:12 [INFO] [test.OtherTestCase(59)] key = 507, permission = rwxrwx-wx

01-20 14:57:12 [INFO] [test.OtherTestCase(59)] key = 511, permission = rwxrwxrwx

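Process finished with exit code 0

All I was running was a statement like Select column1 from tablename, which writes nothing. Yet, as the stack trace shows, the check runs in SessionState.start while the HiveContext is being constructed, before any query is executed.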

Very frustrating.

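The fix I ended up with:

Download the Hive source and comment out this check,

go into the $HIVE_HOME/sq directory,

and run the command: mvn clean install -Phadoop-2 -DskipTests to build and install the package. After that, everything works normally!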
