ssis 映射列 使用变量

The Data Flow Task is an essential component in SQL Server Integration Services (SSIS) as it provides SSIS ETL developers with an ability to conveniently extract data from various data sources; perform basic, fuzzy to advance data transformations; and migrate data into all kinds of data repository systems. Yet, with all its popularity and convenience, there are instances whereby the Data Flow Task is simply not good enough and recently, I got to experience such inefficiencies. To demonstrate some of the limitations of SSIS’s Data Flow Task, I have put together a random list of Premier League’s leading goal scorers for the 2019-2020 season.

数据流任务是SQL Server集成服务(SSIS)的重要组成部分,因为它为SSIS ETL开发人员提供了从各种数据源中方便地提取数据的能力。 执行基本的,模糊的以推进数据转换; 并将数据迁移到各种数据存储系统中。 但是,尽管它非常流行并且很方便,但在某些情况下, 数据流任务根本不够好,最近,我遇到了这种效率低下的情况。 为了证明SSIS 数据流任务的某些局限性,我随机整理了一份2019-2020赛季英超领先进球手的清单。

Pos | Player | Nationality | Club | Goals | Jersey Number |

1 | Jamie Vardy | England | Leicester City | 17 | 9 |

2 | Pierre-Emerick Aubameyang | Gabon | Arsenal | 13 | 14 |

2 | Danny Ings | England | Southampton | 13 | 9 |

4 | Marcus Rashford | England | Manchester United | 12 | 10 |

4 | Tammy Abraham | England | Chelsea | 12 | 9 |

位置 | 播放器 | 国籍 | 俱乐部 | 目标 | 球衣号码 |

1个 | 杰米·瓦迪(Jamie Vardy) | 英国 | 莱斯特城 | 17 | 9 |

2 | 皮埃尔·埃默里克·奥巴梅扬 | 加蓬 | 兵工厂 | 13 | 14 |

2 | 丹尼·英格斯(Danny Ings) | 英国 | 南安普敦 | 13 | 9 |

4 | 马库斯·拉什福德 | 英国 | 曼联 | 12 | 10 |

4 | 塔米·亚伯拉罕(Tammy Abraham) | 英国 | 切尔西 | 12 | 9 |

Script 1 shows a definition of a SQL Server table that will be used to store data imported from Table 1.

脚本1显示了SQL Server表的定义,该表将用于存储从表1导入的数据。

CREATE TABLE [dbo].[PLGoalScorers](

[Pos] [tinyint] NULL,

[Player] [varchar](50) NULL,

[Nationality] [varchar](50) NULL,

[Club] [varchar](50) NULL,

[Goals] [tinyint] NULL,

[Jersey Number] [tinyint] NULL

) ON [PRIMARY]

GO

With my source (Table 1) and destination (SQL Server table in Script 1) defined, I proceed to save the data from Table 1 into a CSV file and successfully import it using a Data Flow Task in SSIS, as shown in Figure 1.

定义好源( 表1 )和目标( 脚本1中的 SQL Server表)后,我继续将表1中的数据保存到CSV文件中,并使用SSIS中的“ 数据流任务”将其成功导入, 如图1所示。

数据流任务限制#1:无法自动刷新列映射 (Data Flow Task Limitation #1: Unable to Automatically Refresh Column Mappings)

Now, suppose our source dataset is later updated to include a player’s Age column, as shown in Figure 2.

现在,假设我们的源数据集后来进行了更新,以包括玩家的年龄列, 如图2所示。

In order to bring in this new column, we refactor the definition of our SQL Server target table to include the “Age” column. However, when we run our SSIS Data Flow Task package – without updating the metadata of its flat file connection, you will notice in Figure 3 that all data under the “Age” column has been appended to the existing “Jersey Number” column. This is because the column mappings in both the flat file connection and the Data Flow Task have not dynamically refreshed to detect the latest structural changes in both source and target datasets.

为了引入此新列,我们重构了SQL Server目标表的定义以包括“年龄”列。 但是,当我们运行SSIS 数据流任务包时-在不更新其平面文件连接的元数据的情况下,您会在图3中注意到,“年龄”列下的所有数据都已附加到现有的“泽西岛编号”列中。 这是因为平面文件连接和数据流任务中的列映射都没有动态刷新以检测源数据集和目标数据集中的最新结构更改。

数据流任务限制2:重新排列列位置会破坏ETL (Data Flow Task Limitation #2: Reshuffling Column Positions Breaks the ETL)

Another scenario that is likely to get your Data Flow Task throwing errors is when columns in the source dataset get reshuffled. For instance, Figure 4 shows the same dataset as Table 1, but this time, columns “Player” and “Pos” have swapped positions.

可能导致数据流任务抛出错误的另一种情况是,源数据集中的列被重新排列时。 例如, 图4显示了与表1相同的数据集,但是这次,“ Player”和“ Pos”列已互换位置。

The first thing you will notice when you try to import the reshuffled data is that whilst the content in rows appear to have been swapped – thus matching the changes in our latest dataset (in Figure 4) – the column headings in the flat file source output remain in their old positions thus creating a mismatch between values and labels, as shown in Figure 5.

当您尝试导入经过改组的数据时,您会注意到的第一件事是,虽然行中的内容似乎已被交换(因此与我们最新数据集中的更改匹配( 图4中 )),但是平面文件源输出中的列标题保持原来的位置,从而在值和标签之间造成不匹配, 如图5所示。

Not surprisingly, when we press “Play” in the data viewer to allow this mismatched input data to continue downstream, we run into an error, as shown in Figure 6.

毫不奇怪,当我们在数据查看器中按“播放”以允许不匹配的输入数据继续向下游传输时,我们会遇到错误, 如图6所示。

If you closely examine the details of the error encountered in Figure 6, you will see that a data conversion error occurred as the first column was expecting a numeric value instead of a string.

如果仔细检查图6中遇到的错误的详细信息,您将看到发生数据转换错误,因为第一列需要数字值而不是字符串。

To resolve this Data Flow Task error, we will have to manually refresh the metadata of the flat file source and then remap input and output columns of the Data Flow Task. Manually remapping input and output columns in a Data Flow Task can be tolerable if you are dealing with one or two sources with limited fields but can easily become a very daunting and frustrating exercise when dealing with multiple data sources with hundreds of columns.

要解决此数据流任务错误,我们将必须手动刷新平面文件源的元数据,然后重新映射数据流任务的输入和输出列。 如果您要处理一个或两个字段有限的源,则可以容忍在数据流任务中手动重新映射输入和输出列,但是当处理具有数百个列的多个数据源时,很容易成为一项令人生畏和令人沮丧的练习。

使用SqlBulkCopy类的动态源到目标列映射 (Dynamic Source-To-Target column mapping using SqlBulkCopy class)

The Data Flow Task limitation that was demonstrated above can easily be resolved by using the SqlBulkCopy class. As you might have guessed, the SqlBulkCopy class involves replacing your Data Flow Task with a .Net script, which can then be executed via a Script Task in SSIS. There are 3 main components to getting your data successfully imported using the SqlBulkCopy class.

上面演示的数据流任务限制可以通过使用 SqlBulkCopy 类。 您可能已经猜到了, SqlBulkCopy类涉及将数据流任务替换为.Net脚本,然后可以通过SSIS中的脚本任务执行该脚本。 使用SqlBulkCopy类成功获取数据有3个主要组件。

Define your connections

定义您的连接

Similarly to configuring a connection manager in the Data Flow Task, the SqlBulkCopy class requires that you specify a source and destination connections. In my case, I have defined two local variables that specify a connection string to a SQL Server database as well as a path to where my CSV source file is stored.

与在数据流任务中配置连接管理器类似, SqlBulkCopy类要求您指定源连接和目标连接。 就我而言,我定义了两个局部变量,它们指定了到SQL Server数据库的连接字符串以及CSV源文件的存储路径。

Figure 8: Declaration of local variables containing details about a SQL Server connection string and location of a CSV flat file document

图8:局部变量声明,包含有关SQL Server连接字符串和CSV平面文件文档位置的详细信息

Prepare your DataTable object

准备您的DataTable对象

Next, we build a DataTable object as shown in Figure 9, which will be used to store data at runtime. In this example, the role of a DataTable is similar to that of a Data Flow Task in SSIS, however, with one significant advantage being the fact that a DataTable is a memory object that is created at runtime and disposed at the end of an execution – it is never persisted like a Data Flow Task. This means that the DataTable’s source to target column mappings is always refreshed every time the DataTable object is created, which is a valuable benefit when dealing with a SQL Server table or flat file with column structure that keeps on changing.

接下来,我们构建如图9所示的DataTable对象,该对象将用于在运行时存储数据。 在此示例中, DataTable的角色与SSIS中的Data Flow Task相似,但是一个重要的优点是, DataTable是在运行时创建并在执行结束时放置的内存对象。 –它永远不会像“ 数据流任务”那样持续存在。 这意味着,每次创建DataTable对象时,始终会刷新DataTable的源到目标列的映射,这对于处理具有不断变化的列结构SQL Server表或平面文件是一个宝贵的好处。

Figure 9: A sample implementation of a DataTable class in C# for extracting data from flat file document

图9:C#中DataTable类的示例实现,用于从平面文件文档中提取数据

Write data into SQL Server Table

将数据写入SQL Server表

Once you have successfully extracted the contents of your source dataset into a DataTable, you can then bring on the SqlBulkCopy class and use it to bulk-copy the DataTable into a SQL Server table. As shown in line 86 in Figure 10, the SqlBulkCopy class method that is responsible for the actual transfer of data into SQL Server is called WriteToServer and it takes a DataTable as an argument.

一旦成功将源数据集的内容提取到DataTable中 ,就可以打开SqlBulkCopy类,并使用它将DataTable批量复制到SQL Server表中。 如图10中的第86行所示 ,负责将数据实际传输到SQL Server中的SqlBulkCopy类方法称为WriteToServer ,它使用DataTable作为参数。

Figure 10: Implementation of SQLBulkCopy class in C# to dynamically map source to target and write output using the WriteToServer method.

图10:C#中SQLBulkCopy类的实现,以将源动态映射到目标并使用WriteToServer方法写入输出。

You would have noticed that the actual source to target column mapping in Figure 10 was implemented in line 84, wherein both source (input) and target (output) columns were mapped to similar column names. Mapping input and output columns to the same column name is just one of several column mapping options available within the SqlBulkCopy class. In the following section, we explore the rest of the column mapping options available within the SqlBulkCopy class.

您可能已经注意到, 图10中的实际源到目标列映射是在第84行中实现的,其中源(输入)列和目标(输出)列都映射到了相似的列名。 将输入和输出列映射到相同的列名只是SqlBulkCopy类中可用的几个列映射选项之一。 在下一节中,我们将探讨SqlBulkCopy类中可用的其余列映射选项。

SqlBulkCopy类:列映射选项 (SqlBulkCopy Class: Column Mapping Options)

Option #1: Column Mapping by Name

选项1:按名称进行列映射

The mapping of a column by name assumes that a given column name (i.e., “Player”) exists in both source and target. One advantage of this kind of mapping is that you don’t necessarily need to maintain the order of columns in both source and target datasets. Figure 11 demonstrates the SqlBulkCopy class mapping of a column by name.

按名称进行列的映射假定源和目标中都存在给定的列名称(即“ Player”)。 这种映射的优点之一是,您不必同时维护源数据集和目标数据集中的列顺序。 图11通过名称演示了列的SqlBulkCopy类映射。

Figure 11: Implementation of SQLBulkCopy class in C# using the column by name mapping option

图11:使用按名称列映射选项的C#中SQLBulkCopy类的实现

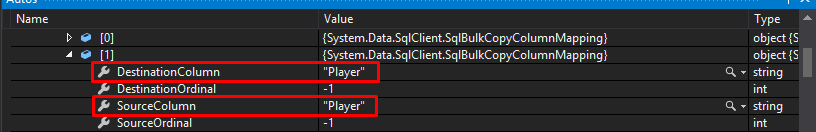

Figure 12 shows how the mapping specified in Figure 11 results in the same column name “Player” being assigned to both destination and source columns.

图12显示了图11中指定的映射如何导致将相同的列名“ Player”分配给目标列和源列。

Figure 12: Runtime demonstration of a column by name mapping between DestinationColumn and SourceColumn properties

图12:DestinationColumn和SourceColumn属性之间按名称映射的列的运行时演示

One biggest drawback of a column by name mapping is that when there is no exact match on the specified column name, you will run into “The given ColumnMapping does not match up with any column in the source or destination.” Error. The rest of the pros and cons of using a column by name mapping within a SqlBulkCopy class are as follows:

按名称映射列的最大缺点是,当指定的列名称不完全匹配时,您将遇到“ 给定的ColumnMapping与源或目标中的任何列都不匹配 。” 错误。 在SqlBulkCopy类中使用按名称进行列映射的其余优缺点如下:

Pros:

优点:

- Column by name mapping is not affected by column positions 列名映射不受列位置的影响

Cons:

缺点:

- The given ColumnMapping does not match up with any column in the source or destination.” error 给定的ColumnMapping与源或目标中的任何列都不匹配 。” 错误

Option #2: Column Mapping by Column Position or Index

选项2:按列位置或索引进行列映射

Another column mapping option within the SqlBulkCopy class involves mapping input and output columns by column position or index. This approach assumes that the source and target columns are positioned correctly. This is actually the default mapping behavior if you don’t implement the ColumnMappings.Add method, as shown in Figure 13.

SqlBulkCopy类中的另一个列映射选项涉及按列位置或索引映射输入和输出列。 此方法假定源列和目标列的位置正确。 如果您未实现ColumnMappings.Add方法,则实际上这是默认的映射行为, 如图13所示。

Figure 13: Implementation of SQLBulkCopy class in C# using the column by column position mapping option – without using ColumnMappings.Add method

图13:使用逐列位置映射选项的C#中SQLBulkCopy类的实现–不使用ColumnMappings.Add方法

The column by column position mapping in the SqlBulkCopy class also has the option for developers to explicitly implement column mappings by specifying column positions. The simplest implementation of this mapping option is shown in Figure 14, whereby a local variable is declared and incremented at the end of every column mapping combination.

SqlBulkCopy类中的逐列位置映射还为开发人员提供了通过指定列位置来显式实现列映射的选项。 此映射选项的最简单实现如图14所示,其中声明了局部变量,并在每个列映射组合的末尾增加了局部变量。

Figure 14: using ColumnMappings.Add method to implement column by column position mapping option of SQLBulkCopy class

图14:使用ColumnMappings.Add方法实现SQLBulkCopy类的逐列位置映射选项

Unlike in the column by name mapping option, the column by column position mapping is not affected by a mismatch in the names of input and output columns instead, data is successfully migrated provided both source and target column data types are compatible. Thus, the responsibility falls with the developer to ensure that the column position and data types of source and target columns are compatible; otherwise, an error similar to that in Figure 15 will be returned.

与逐列名称映射选项不同,逐列位置映射不受输入和输出列名称不匹配的影响,只要源列和目标列数据类型都兼容,就可以成功迁移数据。 因此,开发人员有责任确保列位置以及源列和目标列的数据类型兼容; 否则,将返回类似于图15的错误。

Figure 15: Column by position mapping error relating to a mismatch between source and target data types

图15:与源数据类型和目标数据类型不匹配有关的逐列位置映射错误

The column by column position mapping has its own pros and cons, as shown below:

逐列位置映射有其优点和缺点,如下所示:

Pros:

优点:

- Column by column position mapping is not affected by changes in column names 逐列位置映射不受列名称更改的影响

- ColumnMappings.Add method ColumnMappings.Add方法

Cons:

缺点:

- Source and target columns must be positioned correctly 源列和目标列必须正确放置

- Requires data type compatibility between source and target columns 需要源列和目标列之间的数据类型兼容性

Option #3: Hybrid of Column by Name & Column by Position Options

选项3: 按名称 列和按位置 列的混合

Another column mapping option available to you within the SqlBulkCopy class is to use the best of both worlds as it were – as demonstrated in Figure 16 wherein the input source columns are mapped by their positions against column names in the target. This means that column names in the target can be renamed or moved around without having to reorder column positions in the source. You will find this mapping option very useful when dealing with a source that doesn’t have column headers.

SqlBulkCopy类中可供您使用的另一种列映射选项是利用两全其美的方式– 如图16所示,其中输入源列根据它们在目标中的列名的位置进行映射。 这意味着可以重命名或移动目标中的列名,而不必重新排序源中的列位置。 当处理没有列标题的源时,您会发现此映射选项非常有用。

Figure 16: Hybrid of Column by Name & Column by Position mapping options available within the SQLBulkCopy class

图16:SQLBulkCopy类中可用的按名称列和按位置列映射选项的混合

In conclusion, consider using the SqlBulkCopy class inside a Script Task instead of SSIS’s data flow tasks when you are dealing with a data source whose structure keeps on changing. This will ensure that you can dynamically build column mappings at runtime between source and target without having to manually refresh the input and output column metadata, as is often the case with data flow tasks. Another benefit of using the SqlBulkCopy class is that it offers better data migration speed and performance over the data flow task. However, the SqlBulkCopy class has its own limitations:

总之,在处理结构不断变化的数据源时,请考虑在Script Task中使用SqlBulkCopy类而不是SSIS的数据流任务。 这将确保您可以在运行时在源和目标之间动态构建列映射,而不必像数据流任务那样手动刷新输入和输出列元数据。 使用SqlBulkCopy类的另一个好处是,与数据流任务相比,它提供了更好的数据迁移速度和性能。 但是, SqlBulkCopy类具有其自身的局限性:

- SqlBulkCopy class requires your ETL developers to pick up .Net programming skills SqlBulkCopy类要求您的ETL开发人员掌握.Net编程技能

- SqlBulkCopy class can only write its output into a SQL Server table SqlBulkCopy类只能将其输出写入SQL Server表中

- The given ColumnMapping does not match up with any column in the source or destination.” error while working with 给定的ColumnMapping与源或目标中的任何列都不匹配 。” SqlBulkCopy class, which is actually vague SqlBulkCopy类时出错,实际上是模糊的

资料下载 (Downloads)

- SqlBulkCopy class sample scriptSqlBulkCopy类示例脚本

- Sample data – pl_leading_goalscorers.csv 样本数据– pl_lead_goalscorers.csv

翻译自: https://www.sqlshack.com/dynamic-column-mapping-in-ssis-sqlbulkcopy-class-vs-data-flow/

ssis 映射列 使用变量

1150

1150

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?