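Installing Kafka on Windows and Integrating It with Spring Boot

Installing Kafka on Windows

I. Install ZooKeeper

1. Download the package from the official site

URL: http://zookeeper.apache.org/releases.html#download

Any release will do.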

![Pasted image 20230713223429.png](https://img-blog.csdnimg.cn/435fd70566194beeaf329e072a230e70.png)

2. Extract the archive and go to the conf directory: C:\myWork\dev_env\zookeeper\apache-zookeeper-3.8.0-bin\conf

3. Copy "zoo_sample.cfg" and rename the copy to "zoo.cfg".

4. Open "zoo.cfg" and set:

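dataDir=C:\\myWork\\dev_env\\zookeeper\\apache-zookeeper-3.8.0-bin\\data

dataLogDir=C:\\myWork\\dev_env\\zookeeper\\apache-zookeeper-3.8.0-bin\\log

zoo.cfg is read as a Java properties file, where a single backslash acts as an escape character, so write each separator as \\ (forward slashes also work).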

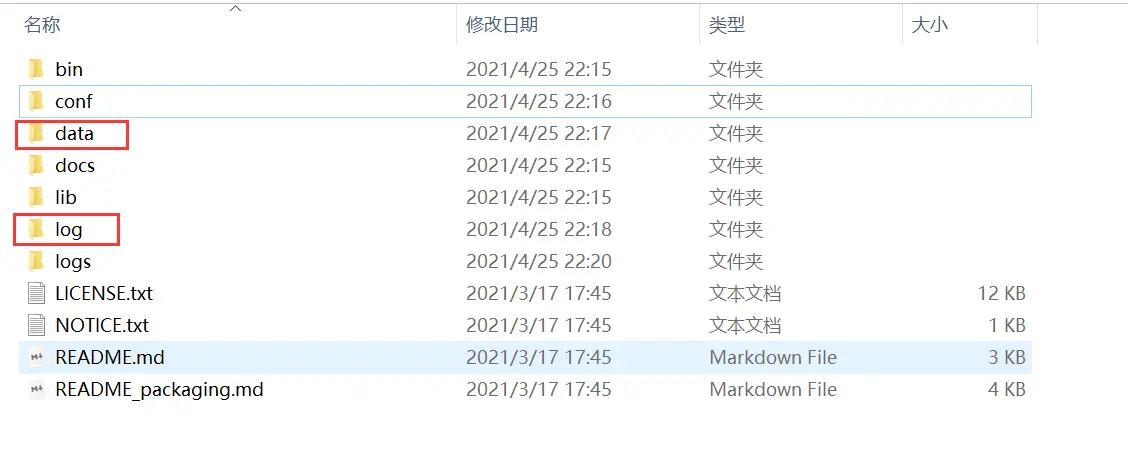

Create the data and log folders under the ZooKeeper directory (here C:\myWork\dev_env\zookeeper\apache-zookeeper-3.8.0-bin).

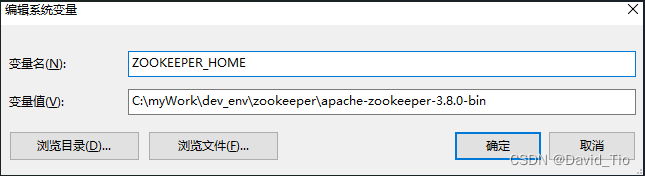

5. Add a system environment variable: ZOOKEEPER_HOME=C:\myWork\dev_env\zookeeper\apache-zookeeper-3.8.0-bin

Then add %ZOOKEEPER_HOME%\bin to the Path variable.

![Pasted image 20230713223538.png](https://img-blog.csdnimg.cn/59695dbda3c84ba49f88e3d9475be87d.png)

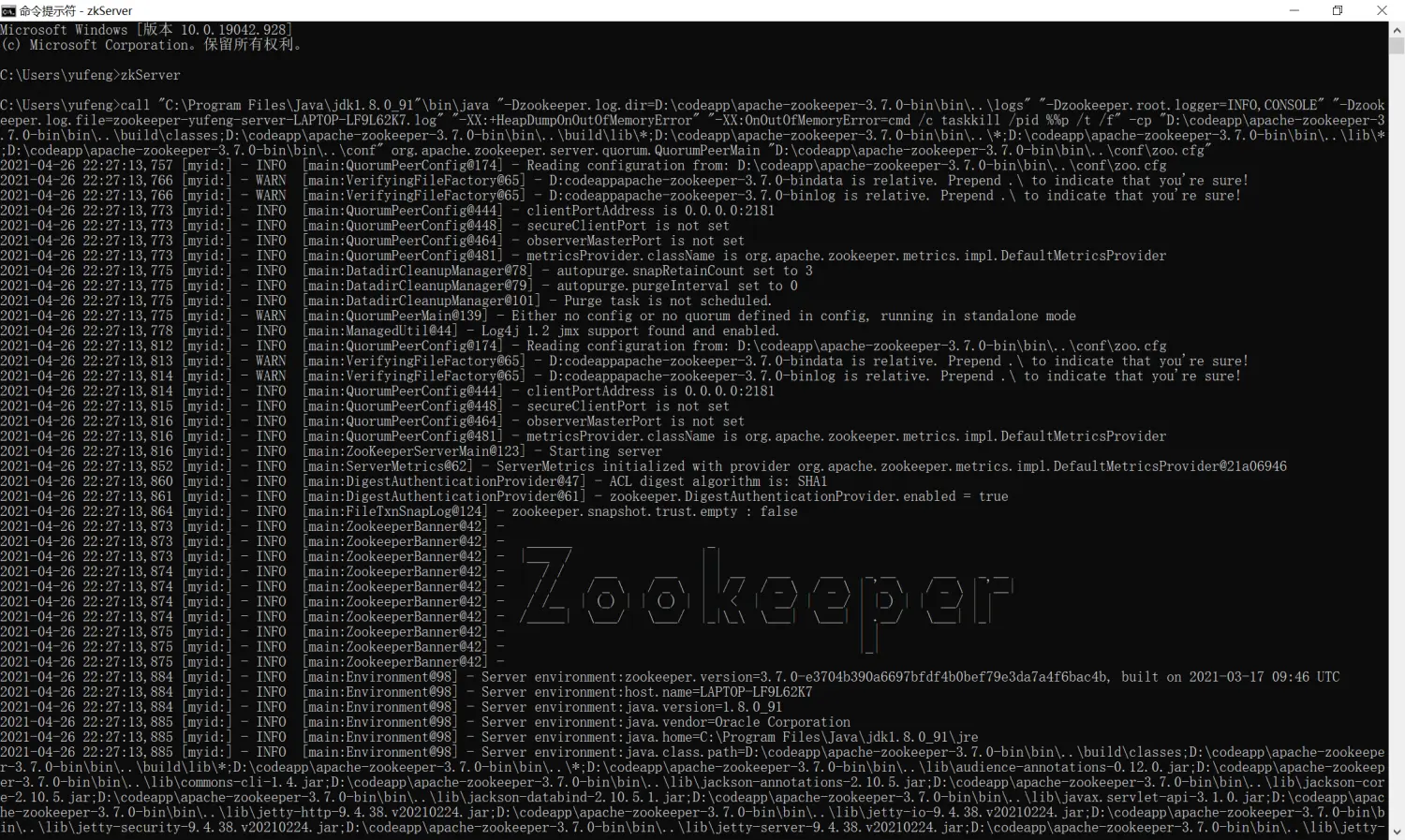

6. Open CMD and run zkServer to start ZooKeeper.

Once it has started successfully, keep this window open.

II. Install Kafka

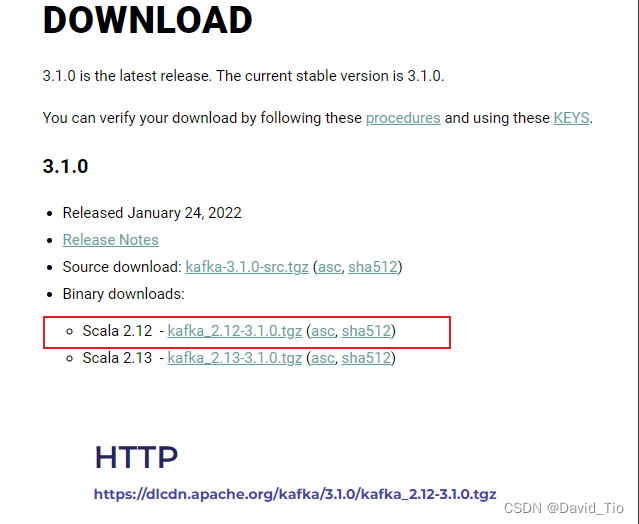

1. Download the package from the official site: http://kafka.apache.org/downloads

2. Extract it and go to the config directory: C:\myWork\dev_env\kafka\kafka_2.12-3.1.0\config

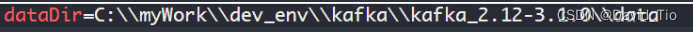

3. Open server.properties and edit the following properties

Note that the path separator must be written as \\

4. Open zookeeper.properties and edit the following properties

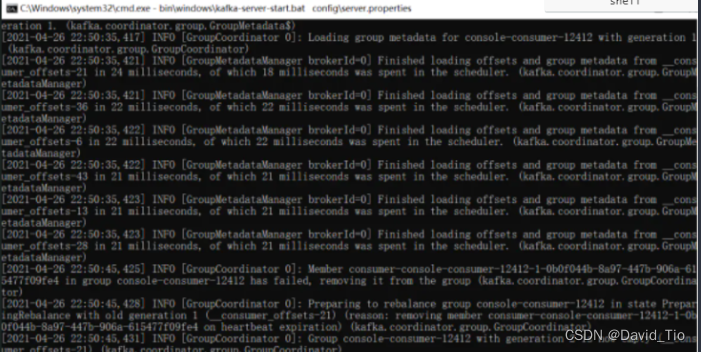

5. Go to the Kafka home directory C:\myWork\dev_env\kafka\kafka_2.12-3.1.0, type cmd in the Explorer address bar and press Enter to open a command prompt there, then run:

start bin\windows\kafka-server-start.bat config\server.properties
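
The note in step 3 about writing the separator as \\ follows from how Java properties files are parsed: a single backslash starts an escape sequence and is dropped from the value. The sketch below shows the effect with java.util.Properties from the JDK. The class name and the log.dirs value are made up for the illustration, and it assumes the broker reads server.properties with this same parser.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

public class PropertiesPathDemo {

    // Parses properties-file text and returns the value stored under log.dirs.
    static String parseLogDirs(String fileText) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(fileText));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return props.getProperty("log.dirs");
    }

    public static void main(String[] args) {
        // File text: log.dirs=C:\myWork\kafka-logs (single backslashes are swallowed)
        System.out.println(parseLogDirs("log.dirs=C:\\myWork\\kafka-logs"));
        // File text: log.dirs=C:\\myWork\\kafka-logs (each doubled backslash survives as one)
        System.out.println(parseLogDirs("log.dirs=C:\\\\myWork\\\\kafka-logs"));
        // File text: log.dirs=C:/myWork/kafka-logs (forward slashes need no escaping)
        System.out.println(parseLogDirs("log.dirs=C:/myWork/kafka-logs"));
    }
}
```

The first line printed has lost its separators entirely, which is why a path written with single backslashes ends up pointing somewhere unexpected.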

III. Notes

2181: the ZooKeeper port, used for communication between Kafka and ZooKeeper

9092: the Kafka broker port used by producers and consumers
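
Before moving on, it can help to confirm that both ports are actually accepting connections. The following is a minimal sketch using only JDK sockets; the class and method names are hypothetical, and it assumes both services run on localhost with the ports listed above.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

public class PortCheck {

    // Returns true if a TCP connection to host:port succeeds within timeoutMillis.
    static boolean isListening(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        System.out.println("ZooKeeper 2181 listening: " + isListening("localhost", 2181, 1000));
        System.out.println("Kafka 9092 listening: " + isListening("localhost", 9092, 1000));
    }
}
```

A successful connection only shows that something is listening on the port; it does not prove the service behind it is healthy.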

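IV. Usage

1. Create a topic

Leave the two command prompt windows above open, and open a new one in the Kafka home directory C:\myWork\dev_env\kafka\kafka_2.12-3.1.0.

Run the following command to create a topic: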

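bin\windows\kafka-topics.bat --create --bootstrap-server localhost:9092 --replication-factor 1 --partitions 1 --topic kafka-test

Kafka 3.0 and later no longer accept the --zookeeper option for this tool, so the broker address is passed with --bootstrap-server.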

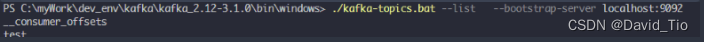

2. List topics

bin\windows\kafka-topics.bat --list --bootstrap-server localhost:9092

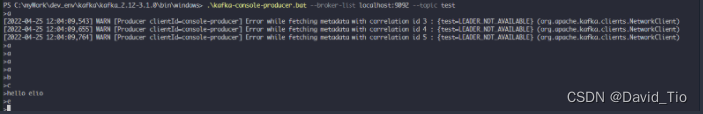

3. Start a console producer

bin\windows\kafka-console-producer.bat --bootstrap-server localhost:9092 --topic test

Type a few messages.

4. Start a console consumer

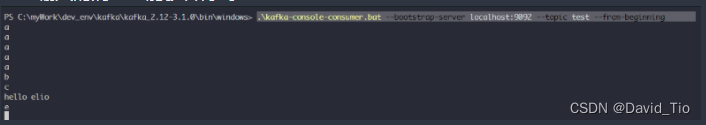

bin\windows\kafka-console-consumer.bat --bootstrap-server localhost:9092 --topic test --from-beginning

The messages typed earlier are displayed as well.
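
The earlier messages appear because --from-beginning makes a console consumer that has no committed offset start from the earliest available message rather than the newest one. The sketch below is a simplified model of that decision, not Kafka's actual implementation; the class, method and parameter names are hypothetical.

```java
public class OffsetResetModel {

    // Simplified model of where a consumer starts reading a partition.
    // committed is null when the consumer group has no committed offset yet.
    static long startPosition(Long committed, long earliest, long latest, String resetPolicy) {
        if (committed != null && committed >= earliest && committed <= latest) {
            return committed; // a valid committed offset always wins
        }
        switch (resetPolicy) {
            case "earliest":
                return earliest;
            case "latest":
                return latest;
            default:
                throw new IllegalStateException("no valid offset and reset policy is " + resetPolicy);
        }
    }

    public static void main(String[] args) {
        // The partition holds offsets 0..4, so the next offset to be written is 5.
        System.out.println(startPosition(null, 0, 5, "earliest")); // prints 0
        System.out.println(startPosition(null, 0, 5, "latest"));   // prints 5
        System.out.println(startPosition(3L, 0, 5, "latest"));     // prints 3
    }
}
```

The same policy shows up later as auto-offset-reset in the Spring Boot configuration.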

Integrating Spring Boot with Kafka

I. Create a Spring Boot project

![[Pasted image 20230713223823.png]]

II. Add the dependencies to pom.xml

<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 https://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>
    <parent>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-starter-parent</artifactId>
        <version>2.6.7</version>
        <relativePath/> <!-- lookup parent from repository -->
    </parent>
    <groupId>com.dt</groupId>
    <artifactId>biz</artifactId>
    <version>0.0.1-SNAPSHOT</version>
    <name>SpringBootKafka</name>
    <description>SpringbootKafka3.1</description>
    <properties>
        <java.version>1.8</java.version>
    </properties>
    <dependencies>
        <dependency>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-starter-web</artifactId>
        </dependency>
        <dependency>
            <groupId>mysql</groupId>
            <artifactId>mysql-connector-java</artifactId>
            <scope>runtime</scope>
        </dependency>
        <dependency>
            <groupId>org.springframework.kafka</groupId>
            <artifactId>spring-kafka</artifactId>
        </dependency>
        <dependency>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-starter-test</artifactId>
            <scope>test</scope>
        </dependency>
        <dependency>
            <groupId>org.springframework.kafka</groupId>
            <artifactId>spring-kafka-test</artifactId>
            <scope>test</scope>
        </dependency>
        <dependency>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-devtools</artifactId>
        </dependency>
    </dependencies>
    <build>
        <plugins>
            <plugin>
                <groupId>org.springframework.boot</groupId>
                <artifactId>spring-boot-maven-plugin</artifactId>
            </plugin>
        </plugins>
    </build>
</project>

III. Configure Kafka in application.yml

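server:
  servlet:
    # Context path of the application
    context-path: /springboot-kafka
spring:
  # Kafka settings
  kafka:
    # Change this to the host and port of your Kafka broker
    bootstrap-servers: 192.168.56.1:9092
    #=============== producer =======================
    producer:
      # A value greater than zero enables retries; it is the number of times a failed send is retried
      retries: 0
      # When several records are sent to the same partition, the producer tries to batch them into fewer requests; the default is 16384 (bytes)
      batch-size: 16384
      # Total bytes of memory the producer may use to buffer records waiting to be sent to the server; the default is 33554432
      buffer-memory: 33554432
      # Serializer class for keys; it must implement org.apache.kafka.common.serialization.Serializer
      key-serializer: org.apache.kafka.common.serialization.StringSerializer
      # Serializer class for values; it must implement org.apache.kafka.common.serialization.Serializer
      value-serializer: org.apache.kafka.common.serialization.StringSerializer
    #=============== consumer =======================
    consumer:
      # Unique string that identifies the consumer group this consumer belongs to
      group-id: dt-consumer-group
      # What to do when Kafka has no initial offset or the current offset no longer exists on the server; the default, latest, resets to the latest offset
      # Allowed values: latest, earliest, none
      auto-offset-reset: earliest
      # Whether the consumer's offsets are committed periodically in the background; the default is true
      enable-auto-commit: true
      # If enable-auto-commit is true, how often (in milliseconds) offsets are committed to Kafka; the default is 5000
      auto-commit-interval: 100
      # Deserializer class for keys; it must implement org.apache.kafka.common.serialization.Deserializer
      key-deserializer: org.apache.kafka.common.serialization.StringDeserializer
      # Deserializer class for values; it must implement org.apache.kafka.common.serialization.Deserializer
      value-deserializer: org.apache.kafka.common.serialization.StringDeserializer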
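
Spring Boot passes these spring.kafka.* entries on to the Kafka Java client under the client's own property names. As a rough illustration, the maps below list the client-side names that correspond to the settings above. The class and method names are hypothetical, and only plain JDK types are used so the sketch runs without Kafka on the classpath.

```java
import java.util.LinkedHashMap;
import java.util.Map;

public class KafkaClientProps {

    // Client property names matching the spring.kafka.producer.* settings.
    static Map<String, Object> producerProps() {
        Map<String, Object> p = new LinkedHashMap<>();
        p.put("bootstrap.servers", "192.168.56.1:9092");
        p.put("retries", 0);
        p.put("batch.size", 16384);
        p.put("buffer.memory", 33554432L);
        p.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        p.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        return p;
    }

    // Client property names matching the spring.kafka.consumer.* settings.
    static Map<String, Object> consumerProps() {
        Map<String, Object> c = new LinkedHashMap<>();
        c.put("bootstrap.servers", "192.168.56.1:9092");
        c.put("group.id", "dt-consumer-group");
        c.put("auto.offset.reset", "earliest");
        c.put("enable.auto.commit", true);
        c.put("auto.commit.interval.ms", 100);
        c.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        c.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        return c;
    }

    public static void main(String[] args) {
        producerProps().forEach((k, v) -> System.out.println("producer " + k + "=" + v));
        consumerProps().forEach((k, v) -> System.out.println("consumer " + k + "=" + v));
    }
}
```

Maps like these are what a hand-written KafkaProducer or KafkaConsumer would take when Spring Boot's auto-configuration is not in use.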

IV. KafkaUtils

package com.dt.biz.util;

import org.apache.kafka.clients.admin.*;
import org.apache.kafka.common.TopicPartitionInfo;
import org.springframework.beans.factory.annotation.Value;
import org.springframework.kafka.core.KafkaTemplate;
import org.springframework.stereotype.Component;

import javax.annotation.PostConstruct;
import javax.annotation.PreDestroy;
import javax.annotation.Resource;
import java.util.*;
import java.util.concurrent.ExecutionException;
import java.util.stream.Collectors;

/**
 * For use inside the web application: the object is created and managed by the Spring container
 */
@Component
public class KafkaUtils {

    @Value("${spring.kafka.bootstrap-servers}")
    private String springKafkaBootstrapServers;

    private AdminClient adminClient;

    @Resource
    private KafkaTemplate<String, Object> kafkaTemplate;

    /**
     * Initialize the AdminClient.
     * '@PostConstruct marks a non-static void method that Spring calls once,
     * after the bean has been constructed and its dependencies (including the
     * '@Value field above) have been injected.
     */
    @PostConstruct
    private void initAdminClient() {
        Map<String, Object> props = new HashMap<>(1);
        props.put(AdminClientConfig.BOOTSTRAP_SERVERS_CONFIG, springKafkaBootstrapServers);
        adminClient = KafkaAdminClient.create(props);
    }

    /**
     * Release the AdminClient when the application shuts down
     */
    @PreDestroy
    private void closeAdminClient() {
        adminClient.close();
    }

    /**
     * Create topics (batch supported); the call is asynchronous and returns before the broker has finished
     */
    public void createTopic(Collection<NewTopic> newTopics) {
        adminClient.createTopics(newTopics);
    }

    /**
     * Delete topics (batch supported); the call is asynchronous as well
     */
    public void deleteTopic(Collection<String> topics) {
        adminClient.deleteTopics(topics);
    }

    /**
     * Get the partition information of the given topics
     */
    public String getTopicInfo(Collection<String> topics) {
        StringBuilder info = new StringBuilder();
        try {
            adminClient.describeTopics(topics).all().get().forEach((topic, description) -> {
                for (TopicPartitionInfo partition : description.partitions()) {
                    info.append(partition).append("\n");
                }
            });
        } catch (InterruptedException | ExecutionException e) {
            e.printStackTrace();
        }
        return info.toString();
    }

    /**
     * Get the names of all topics
     */
    public List<String> getAllTopic() {
        try {
            List<String> list = adminClient.listTopics().listings().get().stream().map(TopicListing::name).collect(Collectors.toList());
            for (String s : list) {
                System.out.println(s);
            }
            return list;
        } catch (InterruptedException | ExecutionException e) {
            e.printStackTrace();
        }
        return new ArrayList<>();
    }

    /**
     * Send a message to a topic
     */
    public void sendMessage(String topic, String message) {
        kafkaTemplate.send(topic, message);
    }
}

V. KafkaDemo (not managed by the Spring container)

package com.dt.biz.demo;

import org.apache.kafka.clients.admin.*;
import org.apache.kafka.clients.producer.KafkaProducer;
import org.apache.kafka.clients.producer.ProducerConfig;
import org.apache.kafka.clients.producer.ProducerRecord;
import org.apache.kafka.common.TopicPartitionInfo;
import org.apache.kafka.common.serialization.StringSerializer;

import java.util.*;
import java.util.concurrent.ExecutionException;
import java.util.stream.Collectors;

/**
 * Standalone demo, not managed by the Spring container
 */
public class KafkaDemo {

    private static final String BOOTSTRAP_SERVERS = "192.168.56.1:9092";

    private AdminClient adminClient;

    /**
     * Create the Kafka admin client
     */
    private void initAdminClient() {
        Map<String, Object> props = new HashMap<>(1);
        props.put(AdminClientConfig.BOOTSTRAP_SERVERS_CONFIG, BOOTSTRAP_SERVERS);
        adminClient = KafkaAdminClient.create(props);
        System.out.println("adminClient " + adminClient);
    }

    /**
     * Create topics (batch supported)
     */
    public void createTopic(Collection<NewTopic> newTopics) {
        adminClient.createTopics(newTopics);
    }

    /**
     * Delete topics (batch supported)
     */
    public void deleteTopic(Collection<String> topics) {
        adminClient.deleteTopics(topics);
    }

    /**
     * Get the partition information of the given topics
     */
    public String getTopicInfo(Collection<String> topics) {
        StringBuilder info = new StringBuilder();
        try {
            adminClient.describeTopics(topics).all().get().forEach((topic, description) -> {
                for (TopicPartitionInfo partition : description.partitions()) {
                    info.append(partition).append("\n");
                }
            });
        } catch (InterruptedException | ExecutionException e) {
            e.printStackTrace();
        }
        return info.toString();
    }

    /**
     * Get the names of all topics
     */
    public List<String> getAllTopic() {
        try {
            return adminClient.listTopics().listings().get().stream().map(TopicListing::name).collect(Collectors.toList());
        } catch (InterruptedException | ExecutionException e) {
            e.printStackTrace();
        }
        return new ArrayList<>();
    }

    /**
     * Send a message to a topic
     * @param topic topic name
     * @param message message content
     */
    public void sendMessage(String topic, String message) {
        // There is no Spring container here, so a KafkaTemplate cannot be injected;
        // a plain KafkaProducer is used instead. Closing it flushes the pending send.
        Map<String, Object> props = new HashMap<>();
        props.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, BOOTSTRAP_SERVERS);
        props.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class);
        props.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG, StringSerializer.class);
        try (KafkaProducer<String, String> producer = new KafkaProducer<>(props)) {
            producer.send(new ProducerRecord<>(topic, message));
        }
    }

    public static void main(String[] args) {
        KafkaDemo kafkaDemo = new KafkaDemo();
        // 1. Create the admin client
        kafkaDemo.initAdminClient();
        // 2. Create a topic
        NewTopic newTopic = new NewTopic("test2", 1, (short) 1);
        List<NewTopic> topics = new ArrayList<>();
        topics.add(newTopic);
        // kafkaDemo.createTopic(topics);
        // 3. Delete a topic
        List<String> deleteTopicList = new ArrayList<>();
        deleteTopicList.add("test");
        // kafkaDemo.deleteTopic(deleteTopicList);
        // 4. Describe the given topic
        String topicInfo = kafkaDemo.getTopicInfo(deleteTopicList);
        System.out.println("Topic info: " + topicInfo);
        // 5. List all topics
        List<String> list = kafkaDemo.getAllTopic();
        for (String ss : list) {
            System.out.println("Topic: " + ss);
        }
        // 6. Send a message to a topic
        // kafkaDemo.sendMessage("test", "kafkaDemo-t1");
        // 7. Release the admin client
        kafkaDemo.adminClient.close();
    }
}

VI. KafkaController

package com.dt.biz.controller;

import com.dt.biz.util.KafkaUtils;
import org.apache.kafka.clients.admin.NewTopic;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.kafka.annotation.KafkaListener;
import org.springframework.kafka.core.KafkaTemplate;
import org.springframework.web.bind.annotation.*;

import javax.annotation.Resource;
import java.util.ArrayList;
import java.util.Date;
import java.util.List;

/**
 * Kafka controller
 */
@RestController
@RequestMapping("/rest")
public class KafkaController {

    @Autowired
    private KafkaUtils kafkaUtils;

    private final static String TOPIC_NAME = "test";

    @Resource
    private KafkaTemplate<String, String> kafkaTemplate;

    @RequestMapping("/kafka/send")
    public void send() {
        Date date = new Date();
        System.out.println("Send time: =================" + date);
        kafkaTemplate.send(TOPIC_NAME, "msg:" + date);
    }

    /**
     * Producer demo: send a message to a topic
     *
     * @param topic topic name
     * @param message message content
     */
    @PostMapping("kafka/message")
    public void sendMessage(String topic, String message) {
        kafkaUtils.sendMessage(topic, message);
    }

    /**
     * Consumer demo
     * <p>
     * Listens to one or more topics through the annotation and consumes their messages
     * (note: if a listened topic does not exist, it is created automatically when the broker allows topic auto-creation)
     */
    @KafkaListener(topics = {"test"})
    public void consume(String message) {
        System.out.println("receive msg: " + message);
    }

    /**
     * List all topics
     */
    @PostMapping("/kafka/all")
    public void getAllTopics() {
        List<String> allTopics = kafkaUtils.getAllTopic();
        for (String s : allTopics) {
            System.out.println("getAllTopics:" + s);
        }
    }

    /**
     * Create a topic (batch is supported; a single topic is used here as a demo)
     *
     * @param topic topic name
     */
    @PostMapping("/newTopic")
    public void add(String topic) {
        NewTopic newTopic = new NewTopic(topic, 1, (short) 1);
        List<NewTopic> newTopics = new ArrayList<>();
        newTopics.add(newTopic);
        kafkaUtils.createTopic(newTopics);
    }

    /**
     * Delete a topic (batch is supported; a single topic is used here as a demo)
     * (note: a topic that is still being listened to may seem impossible to delete; it is in fact deleted and then created again)
     *
     * @param topic topic name
     */
    @DeleteMapping("kafka/{topic}")
    public void delete(@PathVariable String topic) {
        List<String> deleteTopicList = new ArrayList<>();
        deleteTopicList.add(topic);
        kafkaUtils.deleteTopic(deleteTopicList);
    }
}
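
To try the controller, the full URL of each endpoint is the context path from application.yml, followed by the class-level /rest mapping and then the method mapping. The sketch below builds those URLs and calls a few of them with the JDK's HttpURLConnection. It assumes the application runs locally on Spring Boot's default port 8080 (application.yml sets no server.port); the class and helper names are hypothetical.

```java
import java.io.IOException;
import java.net.HttpURLConnection;
import java.net.URL;

public class KafkaEndpointClient {

    // Assumed base address: the local machine on Spring Boot's default port.
    static final String BASE = "http://localhost:8080";

    // Joins context path, controller mapping and method mapping into one URL.
    static String url(String methodPath) {
        return BASE + "/springboot-kafka" + "/rest" + methodPath;
    }

    // Sends a request without a body and returns the HTTP status code.
    static int call(String httpMethod, String url) throws IOException {
        HttpURLConnection conn = (HttpURLConnection) new URL(url).openConnection();
        conn.setRequestMethod(httpMethod);
        try {
            return conn.getResponseCode();
        } finally {
            conn.disconnect();
        }
    }

    public static void main(String[] args) throws IOException {
        // Requires the Spring Boot application to be running.
        System.out.println(call("GET", url("/kafka/send")));
        System.out.println(call("POST", url("/kafka/message?topic=test&message=hello")));
        System.out.println(call("POST", url("/kafka/all")));
    }
}
```

After each call, the application's console should show the corresponding output from the controller and, for sent messages, from the listener.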

VII. Project repository on Gitee

https://gitee.com/DavidTio/springboot-kafka.git
