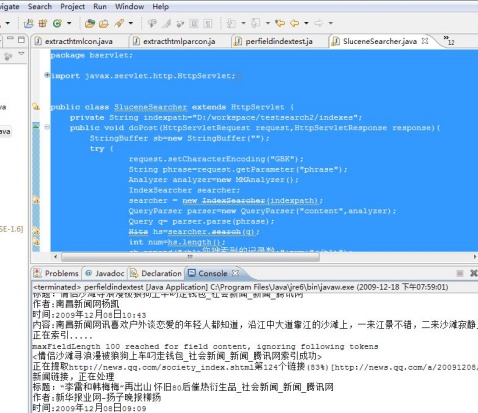

Code (index building):

package bindex;

import java.io.IOException;

import java.io.PrintStream;

import java.net.URL;

import java.util.ArrayList;

import java.util.List;

import jeasy.analysis.MMAnalyzer;

import org.apache.lucene.analysis.PerFieldAnalyzerWrapper;

import org.apache.lucene.analysis.standard.StandardAnalyzer;

import org.apache.lucene.document.Document;

import org.apache.lucene.document.Field;

import org.apache.lucene.index.CorruptIndexException;

import org.apache.lucene.index.IndexWriter;

import org.apache.lucene.store.Directory;

import org.apache.lucene.store.FSDirectory;

import org.apache.lucene.store.LockObtainFailedException;

import org.apache.lucene.store.RAMDirectory;

import org.htmlparser.Node;

import org.htmlparser.NodeFilter;

import org.htmlparser.Parser;

import org.htmlparser.beans.LinkBean;

import org.htmlparser.filters.AndFilter;

import org.htmlparser.filters.HasAttributeFilter;

import org.htmlparser.filters.NotFilter;

import org.htmlparser.filters.OrFilter;

import org.htmlparser.filters.RegexFilter;

import org.htmlparser.filters.TagNameFilter;

import org.htmlparser.util.NodeList;

import org.htmlparser.util.ParserException;

public class perfieldindextest {

/**

* @param args

*/

public static void main(String[] args) {

// TODO Auto-generated method stub

String indexpath="./indexes";

IndexWriter writer;

PerFieldAnalyzerWrapper wr;

Document doc;

try {

RAMDirectory rd=new RAMDirectory();

writer=new IndexWriter(rd,new StandardAnalyzer());

// Limit each field to at most 100 terms

writer.setMaxFieldLength(100);

writer.setInfoStream(System.out); // print indexing diagnostics to the console

wr=new PerFieldAnalyzerWrapper(new StandardAnalyzer());

wr.addAnalyzer("title",new MMAnalyzer());

wr.addAnalyzer("content", new MMAnalyzer());

wr.addAnalyzer("author", new MMAnalyzer());

wr.addAnalyzer("time", new StandardAnalyzer());

// Extract news links from the Tencent (news.qq.com) index pages

LinkBean lb=new LinkBean();

List baseurls=new ArrayList();

baseurls.add("http://news.qq.com/china_index.shtml");

baseurls.add("http://news.qq.com/world_index.shtml");

baseurls.add("http://news.qq.com/society_index.shtml");

for (int j=0;j<baseurls.size();j++){

lb.setURL((String)baseurls.get(j));

URL[] urls=lb.getLinks();

for (int i=0;i<urls.length;i++){

doc=new Document();

String title="";

String content="";

String time="";

String author="";

System.out.println("Fetching link "+i+" from "+(String)baseurls.get(j)+" ("+(int)(100*(i+1)/urls.length)+"%) ["+urls[i].toString()+"] ...");

if (!(urls[i].toString().startsWith("http://news.qq.com/a/"))){

System.out.println("Not a news link, skipping ...");continue;

}

System.out.println("News link, processing");

Parser parser=new Parser(urls[i].toString());

parser.setEncoding("GBK");

String url=urls[i].toString();

NodeFilter filter_title=new TagNameFilter("title");

NodeList nodelist=parser.parse(filter_title);

Node node_title=nodelist.elementAt(0);

// Guard against pages that have no <title> element

if (node_title!=null) title=node_title.toPlainTextString();

System.out.println("Title: "+title);

parser.reset();

NodeFilter filter_auth=new OrFilter(new HasAttributeFilter("class","auth"),new HasAttributeFilter("class","where"));

nodelist=parser.parse(filter_auth);

Node node_auth=nodelist.elementAt(0);

if (node_auth != null) author=node_auth.toPlainTextString();

else author="腾讯网";

node_auth=nodelist.elementAt(1);

if (node_auth != null) author+=node_auth.toPlainTextString();

System.out.println("Author: "+author);

parser.reset();

NodeFilter filter_time=new OrFilter(new HasAttributeFilter("class","info"),new RegexFilter("[0-9]{4}年[0-9]{1,2}月[0-9]{1,2}日[' ']*[0-9]{1,2}:[0-9]{1,2}"));

nodelist=parser.parse(filter_time);

Node node_time=nodelist.elementAt(0);

if (node_time!=null) {

if (node_time.getChildren()!=null) node_time=node_time.getFirstChild();

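time=node_time.toPlainTextString().replaceAll("[ \t\n\f\r\u3000]","");

// Keep only the leading date and time; shorter strings are left as they are

if (time.length()>16) time=time.substring(0,16);

}

System.out.println("Time: "+time);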

parser.reset();

NodeFilter filter_content=new OrFilter(new OrFilter(new HasAttributeFilter("style","TEXT-INDENT: 2em"),new HasAttributeFilter("id","Cnt-Main-Article-QQ")),new HasAttributeFilter("id","ArticleCnt"));

nodelist=parser.parse(filter_content);

Node node_content=nodelist.elementAt(0);

if (node_content!=null){

content=node_content.toPlainTextString().replaceAll("(#.*)|([a-z].*;)|}","").replaceAll(" |\t|\r|\n|\u3000","");

}

System.out.println("Content: "+content);

System.out.println("Indexing ...");

Field field=new Field("title",title,Field.Store.YES,Field.Index.TOKENIZED);

doc.add(field);

field=new Field("content",content,Field.Store.YES,Field.Index.TOKENIZED);

doc.add(field);

field=new Field("author",author,Field.Store.YES,Field.Index.UN_TOKENIZED);

doc.add(field);

field=new Field("time",time,Field.Store.YES,Field.Index.NO);

doc.add(field);

field=new Field("url",url,Field.Store.YES,Field.Index.NO);

doc.add(field);

// Use the per-field wrapper configured above so each field gets its own analyzer

writer.addDocument(doc,wr);

System.out.println("<"+title+" indexed successfully>");

}

}

writer.close();

wr.close();

FSDirectory fd=FSDirectory.getDirectory(indexpath);

IndexWriter wi=new IndexWriter(fd,new MMAnalyzer());

PrintStream ps=new PrintStream("log.txt");

wi.setInfoStream(ps); // write indexing diagnostics to log.txt

wi.addIndexes(new Directory[]{rd});

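// Segments holding more than 80 documents are not merged with other segments

wi.setMaxMergeDocs(80);

// Merge segments in groups of 80

wi.setMergeFactor(80);

System.out.println("<Optimizing index>");

// Optimize the index itself: merge its segments into a single segment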

wi.optimize();

wi.close();

ps.close(); // release the log file

System.out.println("<Index build complete>");

} catch (ParserException e) {

// TODO Auto-generated catch block

e.printStackTrace();

} catch (CorruptIndexException e) {

// TODO Auto-generated catch block

e.printStackTrace();

} catch (IOException e) {

// TODO Auto-generated catch block

e.printStackTrace();

}

}

}
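The indexer above trims the time string with a fixed `substring(0,16)`, which assumes the page always yields a full-width date such as a four-digit year and two-digit month and day. As a rough sketch of an alternative, the helper below pulls the timestamp out with `java.util.regex`, reusing the same date shape as the `RegexFilter` in the listing. The class name `NewsTimeExtractor` and the method `extractTime` are hypothetical and not part of the original code; the characters for year, month, and day are written as Unicode escapes only so the file compiles under any source encoding.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper, not part of the original indexer.
final class NewsTimeExtractor {

    // \u5e74 = year marker, \u6708 = month marker, \u65e5 = day marker.
    // Same shape as the RegexFilter pattern, applied after whitespace is removed.
    private static final Pattern TIME = Pattern.compile(
        "[0-9]{4}\u5e74[0-9]{1,2}\u6708[0-9]{1,2}\u65e5[0-9]{1,2}:[0-9]{1,2}");

    // Returns the first timestamp found in raw, or "" when there is none.
    static String extractTime(String raw) {
        if (raw == null) {
            return "";
        }
        // Strip ASCII whitespace and the full-width space (U+3000).
        String compact = raw.replaceAll("[\\s\\u3000]", "");
        Matcher m = TIME.matcher(compact);
        return m.find() ? m.group() : "";
    }

    public static void main(String[] args) {
        System.out.println(extractTime(" 2009\u5e7403\u670805\u65e5 08:30 source "));
    }
}
```

Under these assumptions, a call such as `time = NewsTimeExtractor.extractTime(node_time.toPlainTextString());` could stand in for the whitespace stripping and truncation, and it returns an empty string instead of throwing when the text is shorter than expected.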

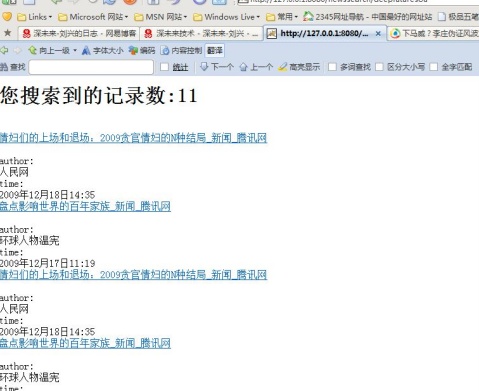

servlet:

package bservlet;

import javax.servlet.http.HttpServlet;

import javax.servlet.http.HttpServletRequest;

import javax.servlet.http.HttpServletResponse;

import org.apache.lucene.analysis.Analyzer;

import org.apache.lucene.document.Document;

import org.apache.lucene.document.Field;

import org.apache.lucene.index.*;

import org.apache.lucene.queryParser.ParseException;

import org.apache.lucene.queryParser.QueryParser;

import org.apache.lucene.search.*;

import java.io.*;

import jeasy.analysis.MMAnalyzer;

public class SluceneSearcher extends HttpServlet {

private String indexpath="D:/workspace/testsearch2/indexes";

public void doPost(HttpServletRequest request,HttpServletResponse response){

StringBuffer sb=new StringBuffer("");

try {

request.setCharacterEncoding("GBK");

String phrase=request.getParameter("phrase");

Analyzer analyzer=new MMAnalyzer();

IndexSearcher searcher;

searcher = new IndexSearcher(indexpath);

QueryParser parser=new QueryParser("content",analyzer);

Query q= parser.parse(phrase);

Hits hs=searcher.search(q);

int num=hs.length();

sb.append("<h1>Records found: "+num+"</h1>");

for (int i=0;i<num;i++){

Document doc=hs.doc(i);

if (doc==null){

continue;

}

Field field_title=doc.getField("title");

String title="<br><a href='"+doc.getField("url").stringValue()+"' target='_blank'>"+field_title.stringValue()+"</a><br>";

Field field_author=doc.getField("author");

String author="<br>author:<br>"+field_author.stringValue();

Field field_time=doc.getField("time");

String time="<br>time:<br>"+field_time.stringValue();

sb.append(title);

sb.append(author);

sb.append(time);

}

searcher.close();

} catch (CorruptIndexException e1) {

// TODO Auto-generated catch block

e1.printStackTrace();

} catch (IOException e1) {

// TODO Auto-generated catch block

e1.printStackTrace();

} catch (ParseException e) {

// TODO Auto-generated catch block

e.printStackTrace();

}

PrintWriter out;

try {

response.setContentType("text/html;charset=GBK");

out = response.getWriter();

out.print(sb.toString());

out.close();

} catch (IOException e) {

// TODO Auto-generated catch block

e.printStackTrace();

}

}

public void doGet(HttpServletRequest request,HttpServletResponse response){

doPost(request,response);

}

}
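The servlet above concatenates stored field values straight into the HTML response, so a title or URL containing `<`, `&`, or a quote character can break the markup. The following is a minimal sketch of an escaping helper, assuming plain string concatenation is kept as in the listing. `HtmlEscaper`, `escapeHtml`, and `renderHit` are hypothetical names and do not appear in the original code.

```java
// Hypothetical helper, not part of the original servlet.
final class HtmlEscaper {

    // Replaces the five characters that are significant in HTML text and attributes.
    static String escapeHtml(String s) {
        if (s == null) {
            return "";
        }
        StringBuilder out = new StringBuilder(s.length() + 16);
        for (int i = 0; i < s.length(); i++) {
            char c = s.charAt(i);
            switch (c) {
                case '&':  out.append("&amp;");  break;
                case '<':  out.append("&lt;");   break;
                case '>':  out.append("&gt;");   break;
                case '"':  out.append("&quot;"); break;
                case '\'': out.append("&#39;");  break;
                default:   out.append(c);
            }
        }
        return out.toString();
    }

    // Builds one result link in the same layout the servlet uses.
    static String renderHit(String url, String title) {
        return "<br><a href='" + escapeHtml(url) + "' target='_blank'>"
             + escapeHtml(title) + "</a><br>";
    }

    public static void main(String[] args) {
        System.out.println(renderHit("http://news.qq.com/a/1.htm", "A & B <update>"));
    }
}
```

In the servlet, the `title`, `author`, and `time` strings could each be passed through `escapeHtml` before being appended to `sb`.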
