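Hands-on crawling:

This project crawls image galleries from Toutiao (今日头条): you pass in a search keyword and it downloads the matching images. This post uses the keyword 街拍 (street snaps) as the example.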

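Approach:

1. Build the request URL dynamically, so the crawler is easy to modify later

2. Analyze the response and extract the URL of each article listed in the search results

3. Request the extracted pages

4. Use BeautifulSoup and a regular expression to extract the gallery title and the image URLs

5. Request each image URL and save the image locally

6. The main function, parallel execution, and moving settings into a config file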
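
Step 1 of the list above, building the request URL from a parameter dictionary, can be sketched in isolation as follows. This is only an illustration under stated assumptions: the helper name `build_search_url` and the constant `SEARCH_ENDPOINT` are hypothetical, and the endpoint and parameter names are copied from the code in this post, so they may no longer match the live site.

```python
from urllib.parse import urlencode, urlsplit, parse_qs

# Endpoint copied from this post; the live site may have changed since.
SEARCH_ENDPOINT = 'https://www.toutiao.com/search_content/?'


def build_search_url(offset, keyword, count=20):
    """Hypothetical helper: assemble the search URL for one page of results."""
    params = {
        'offset': offset,
        'format': 'json',
        'keyword': keyword,
        'autoload': 'true',
        'count': count,
        'cur_tab': '3',
        'from': 'gallery',
    }
    # urlencode() percent-encodes non-ASCII values (UTF-8 by default)
    return SEARCH_ENDPOINT + urlencode(params)


url = build_search_url(20, '街拍')
# Decode the query string again to check that the round trip is lossless
query = parse_qs(urlsplit(url).query)
print(url)
print(query['keyword'])
```

Because every value lives in one dictionary, changing the keyword or the page offset never requires editing the URL string by hand.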

Implementation:

1. Build the request URL dynamically, so the crawler is easy to modify later

    from urllib.parse import urlencode

    import requests
    from requests.exceptions import RequestException

    # Request one page of search results
    def get_one_page(offset, keyword):
        # Query parameters
        data = {
            'offset': offset,
            'format': 'json',
            'keyword': keyword,
            'autoload': 'true',
            'count': '20',
            'cur_tab': '3',
            'from': 'gallery'
        }
        headers = {'User-Agent': 'Mozilla/5.0 (compatible; MSIE 10.0; Windows NT 6.2; Trident/6.0)'}
        # urlencode() turns the dictionary into a URL query string
        url = 'https://www.toutiao.com/search_content/?' + urlencode(data)
        try:
            response = requests.get(url, headers=headers)
            if response.status_code == 200:
                return response.text
            return None
        except RequestException:
            print("Request failed")
            return None

2. Analyze the response and extract the URL of each article listed in the search results

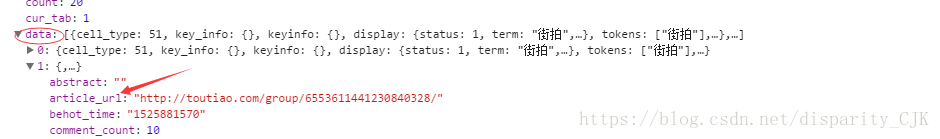
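
To make the shape of the data in this step concrete, here is a small sketch that runs without any network access. The payload is made up: it only assumes, as the code in this post does, that the response is a JSON object with a `data` list whose items may carry an `article_url`. The helper name `iter_article_urls` is hypothetical.

```python
import json

# Made-up payload imitating the assumed response shape
sample = json.dumps({
    'data': [
        {'article_url': 'http://example.com/group/1/'},
        {'title': 'entry without a link'},
        {'article_url': 'http://example.com/group/2/'},
    ]
})


def iter_article_urls(text):
    """Hypothetical helper: yield every non-empty 'article_url' in the payload."""
    try:
        payload = json.loads(text)
    except (json.JSONDecodeError, TypeError):
        # Not valid JSON, or None from a failed request: yield nothing
        return
    if not isinstance(payload, dict):
        return
    for item in payload.get('data') or []:
        if isinstance(item, dict) and item.get('article_url'):
            yield item['article_url']


urls = list(iter_article_urls(sample))
print(urls)
```

Skipping entries without a link here means the later request step never receives `None` as a URL.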

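    import json
    from json.decoder import JSONDecodeError

    # Parse the JSON response and yield the article links from the result list
    def get_page_index(html):
        try:
            data = json.loads(html)
            if data and 'data' in data.keys():
                for item in data.get('data'):
                    yield item.get('article_url')
        except (JSONDecodeError, TypeError):
            # TypeError covers html being None after a failed request
            pass

3. Request the extracted pages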

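    import requests
    from requests.exceptions import RequestException

    # Request a detail page and return its HTML
    def get_page_detail(url):
        headers = {'User-Agent': 'Mozilla/5.0 (compatible; MSIE 10.0; Windows NT 6.2; Trident/6.0)'}
        try:
            response = requests.get(url, headers=headers)
            if response.status_code == 200:
                return response.text
            return None
        except RequestException:
            print("Request failed")
            return None

4. Use BeautifulSoup and a regular expression to extract the gallery title and the image URLs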

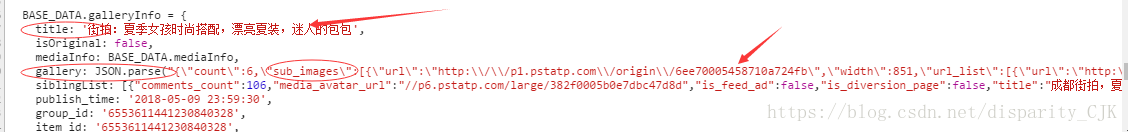
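
The regular-expression part of this step can be tried on its own. The sketch below uses a made-up page fragment that imitates an inline `var gallery = {...};` script; the real page markup may differ, and `extract_image_urls` is a hypothetical helper name.

```python
import json
import re

# Made-up page fragment imitating the inline script this step targets
html = '''
<html><head><title>Street snaps demo</title></head>
<body><script>
var gallery = {"count": 2, "sub_images": [{"url": "//img.example.com/a"}, {"url": "//img.example.com/b"}]};
var other = 1;
</script></body></html>
'''

# Non-greedy match up to the first ';' after the assignment;
# re.S lets '.' match newlines as well
GALLERY_RE = re.compile(r'var gallery = (.*?);', re.S)


def extract_image_urls(page):
    """Hypothetical helper: return the image URLs found in the gallery JSON."""
    match = GALLERY_RE.search(page)
    if not match:
        return []
    try:
        data = json.loads(match.group(1))
    except json.JSONDecodeError:
        return []
    return [item['url'] for item in data.get('sub_images', []) if item.get('url')]


image_urls = extract_image_urls(html)
print(image_urls)
```

One limitation of this pattern: it stops at the first semicolon, so a gallery whose JSON contains a `;` inside a string value would be cut short, fail to parse, and yield an empty list.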

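    import json
    import re

    from bs4 import BeautifulSoup

    # Extract the gallery title and the image URLs from a detail page
    def parse_page_detail(html, url):
        soup = BeautifulSoup(html, 'lxml')  # parse the HTML with the lxml parser
        title = soup.select('title')
        # Fall back to a fixed name so the folder name is always a string
        title = title[0].get_text() if title else 'untitled'
        # Different request headers yield slightly different responses;
        # with my own browser's headers the page uses "gallery: JSON.parse(...)" instead
        images_pattern = re.compile(r'var gallery = (.*?);', re.S)
        # images_pattern = re.compile(r' gallery: JSON.parse\("(.*?)"\),', re.S)  # alternative pattern
        result = re.search(images_pattern, html)
        if result:
            data = json.loads(result.group(1))
            if data and 'sub_images' in data.keys():
                sub_images = data.get('sub_images')
                images = [item.get('url') for item in sub_images]
                for image in images:
                    download_image(image, title)
                return {  # return structured data for the later steps
                    'title': title,
                    'url': url,
                    'images': images
                }

5. Request each image URL and save the image locally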

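    import os
    from hashlib import md5

    import requests
    from requests.exceptions import RequestException

    # Request an image and hand its bytes to save_image()
    def download_image(url, title):
        url = 'http:' + url  # assumes the extracted URLs are protocol-relative (//host/path)
        print("Downloading", url)
        headers = {'User-Agent': 'Mozilla/5.0 (compatible; MSIE 10.0; Windows NT 6.2; Trident/6.0)'}
        try:
            response = requests.get(url, headers=headers)
            if response.status_code == 200:
                save_image(response.content, title)  # .content is the raw bytes, .text the decoded text
            return None
        except RequestException:
            print("Image request failed")
            return None

    # Save the image to disk
    def save_image(content, title):
        # Create the folder if needed; exist_ok avoids a race between worker processes
        os.makedirs(title, exist_ok=True)
        # First argument: the save directory; second: the MD5 of the content, so
        # duplicate images map to the same file name; third: the file extension
        file_path = '{0}/{1}.{2}'.format(title, md5(content).hexdigest(), 'jpg')
        if not os.path.exists(file_path):
            with open(file_path, "wb") as f:
                f.write(content)  # the with block closes the file automatically

6. The main function, parallel execution, and moving settings into a config file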

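    from multiprocessing import Pool

    def main(offset):
        html = get_one_page(offset, KEYWROD)
        for url in get_page_index(html):
            html = get_page_detail(url)
            if html:
                result = parse_page_detail(html, url)
                print(result)
                # save_to_mongo(result)

    if __name__ == '__main__':
        groups = [x * 20 for x in range(GROUP_START, GROUP_END)]
        pool = Pool()  # multiprocessing.Pool: a pool of worker processes, not threads
        pool.map(main, groups)  # run main() once per offset, spread across the workers

In the same directory, create a config.py file that holds the search keyword, the range of pages to crawl, and the MongoDB connection settings: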

    MONGD_URL = 'localhost'
    MONGD_DB = 'toutiao'
    MONGD_TABLE = 'toutiao'

    GROUP_START = 1
    GROUP_END = 20

    KEYWROD = '街拍'
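
To show what these settings expand to, the sketch below computes the offsets produced by `x*20 for x in range(GROUP_START, GROUP_END)` and maps a stand-in function over them. Two things here are assumptions rather than part of the original project: `fake_crawl` replaces `main` so that nothing touches the network, and `multiprocessing.pool.ThreadPool` is swapped in for `Pool` to show a thread-based alternative with the same `map()` interface.

```python
from multiprocessing.pool import ThreadPool

GROUP_START = 1
GROUP_END = 20
PAGE_SIZE = 20  # matches the 'count' request parameter

# range() excludes GROUP_END, so this yields 19 offsets: 20, 40, ..., 380
offsets = [x * PAGE_SIZE for x in range(GROUP_START, GROUP_END)]


def fake_crawl(offset):
    """Stand-in for main(offset): no network access, just describes the work item."""
    return 'would fetch results {0}-{1}'.format(offset, offset + PAGE_SIZE - 1)


# ThreadPool offers the same map() interface as multiprocessing.Pool but uses threads
with ThreadPool(4) as pool:
    results = pool.map(fake_crawl, offsets)

print(len(offsets), offsets[0], offsets[-1])
print(results[0])
```

Note that with `GROUP_START = 1` the first page of results (offset 0) is never requested; setting it to 0 would include that page.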

Complete code

    import os
    import re
    import json
    import requests
    import pymongo
    from urllib.parse import urlencode
    from bs4 import BeautifulSoup
    from requests.exceptions import RequestException
    from hashlib import md5
    from 爬虫.小项目.TouTiao.config import *  # the author's package path; adjust to your own layout
    from multiprocessing import Pool
    from json.decoder import JSONDecodeError

    client = pymongo.MongoClient(MONGD_URL, connect=False)
    db = client[MONGD_DB]

    headers = {'User-Agent': 'Mozilla/5.0 (compatible; MSIE 10.0; Windows NT 6.2; Trident/6.0)'}

    # Request one page of search results
    def get_one_page(offset, keyword):
        # Query parameters
        data = {
            'offset': offset,
            'format': 'json',
            'keyword': keyword,
            'autoload': 'true',
            'count': '20',
            'cur_tab': '3',
            'from': 'gallery'
        }
        # urlencode() turns the dictionary into a URL query string
        url = 'https://www.toutiao.com/search_content/?' + urlencode(data)
        try:
            response = requests.get(url, headers=headers)
            if response.status_code == 200:
                return response.text
            return None
        except RequestException:
            print("Request failed")
            return None

    # Parse the JSON response and yield the article links from the result list
    def get_page_index(html):
        try:
            data = json.loads(html)
            if data and 'data' in data.keys():
                for item in data.get('data'):
                    yield item.get('article_url')
        except (JSONDecodeError, TypeError):
            # TypeError covers html being None after a failed request
            pass

    # Request a detail page and return its HTML
    def get_page_detail(url):
        try:
            response = requests.get(url, headers=headers)
            if response.status_code == 200:
                return response.text
            return None
        except RequestException:
            print("Request failed")
            return None

    # Extract the gallery title and the image URLs from a detail page
    def parse_page_detail(html, url):
        soup = BeautifulSoup(html, 'lxml')  # parse the HTML with the lxml parser
        title = soup.select('title')
        # Fall back to a fixed name so the folder name is always a string
        title = title[0].get_text() if title else 'untitled'
        # Different request headers yield slightly different responses;
        # with my own browser's headers the page uses "gallery: JSON.parse(...)" instead
        images_pattern = re.compile(r'var gallery = (.*?);', re.S)
        result = re.search(images_pattern, html)
        if result:
            data = json.loads(result.group(1))
            if data and 'sub_images' in data.keys():
                sub_images = data.get('sub_images')
                images = [item.get('url') for item in sub_images]
                for image in images:
                    download_image(image, title)
                return {
                    'title': title,
                    'url': url,
                    'images': images
                }

    # Save the result to MongoDB
    def save_to_mongo(result):
        # insert_one() replaces Collection.insert(), which was removed in PyMongo 4
        if db[MONGD_TABLE].insert_one(result):
            print('Saved to MongoDB', result)
            return True
        return False

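    # Request an image and hand its bytes to save_image()
    def download_image(url, title):
        url = 'http:' + url  # assumes the extracted URLs are protocol-relative (//host/path)
        print("Downloading", url)
        try:
            response = requests.get(url, headers=headers)
            if response.status_code == 200:
                save_image(response.content, title)  # .content is the raw bytes, .text the decoded text
            return None
        except RequestException:
            print("Image request failed")
            return None

    # Save the image to disk
    def save_image(content, title):
        # Create the folder if needed; exist_ok avoids a race between worker processes
        os.makedirs(title, exist_ok=True)
        # First argument: the save directory; second: the MD5 of the content, so
        # duplicate images map to the same file name; third: the file extension
        file_path = '{0}/{1}.{2}'.format(title, md5(content).hexdigest(), 'jpg')
        if not os.path.exists(file_path):
            with open(file_path, "wb") as f:
                f.write(content)  # the with block closes the file automatically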

    def main(offset):
        html = get_one_page(offset, KEYWROD)
        for url in get_page_index(html):
            html = get_page_detail(url)
            if html:
                result = parse_page_detail(html, url)
                print(result)
                # save_to_mongo(result)

    if __name__ == '__main__':
        groups = [x * 20 for x in range(GROUP_START, GROUP_END)]
        pool = Pool()  # multiprocessing.Pool: a pool of worker processes, not threads
        pool.map(main, groups)  # run main() once per offset, spread across the workers

Takeaways:

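I became comfortable with returning data in a structured form and with moving settings into a config file so the project is easy to change later, with building GET requests dynamically, and with the error handling that keeps the crawler stable and reliable. I also learned how to work with JSON, how to combine BeautifulSoup with regular expressions to extract information, how to use format() flexibly, and how to use hashlib to detect duplicate files.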
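
The hashlib-based duplicate check mentioned above can be demonstrated without downloading anything. This is a minimal sketch, not the project's own function: `save_unique` is a hypothetical helper that follows the same idea of naming each file after the MD5 of its content, and it writes into a temporary directory so it leaves nothing behind.

```python
import os
import tempfile
from hashlib import md5


def save_unique(content, directory, ext='jpg'):
    """Hypothetical helper: write bytes to <directory>/<md5>.<ext> unless it exists.

    Returns (path, written); written is False when the content was a duplicate.
    """
    os.makedirs(directory, exist_ok=True)
    name = '{0}.{1}'.format(md5(content).hexdigest(), ext)
    path = os.path.join(directory, name)
    if os.path.exists(path):
        return path, False
    with open(path, 'wb') as f:
        f.write(content)
    return path, True


with tempfile.TemporaryDirectory() as tmp:
    first = save_unique(b'same bytes', tmp)
    second = save_unique(b'same bytes', tmp)   # identical content: skipped
    third = save_unique(b'other bytes', tmp)   # different content: new file
    saved_files = sorted(os.listdir(tmp))

print(first[1], second[1], third[1], len(saved_files))
```

Identical bytes always hash to the same name, so a repeated image is detected by a simple existence check rather than by comparing file contents.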
