Preparation

- Install three CentOS 7 servers

- Set the hostnames to hd01, hd02, and hd03 (one per machine), for example on the first one:

hostnamectl set-hostname hd01

Look up the IP address:

ip addr

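Connect with Xshell: enter a name (the hostname), the host (the IP address), and the user authentication details.

To drag and drop files into the Xshell window, install lrzsz: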

yum -y install lrzsz

Map each hostname to its IP address in /etc/hosts:

vi /etc/hosts

192.168.181.131 hd01

192.168.181.132 hd02

192.168.181.133 hd03
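The same three entries have to be added on every node, so it can help to script the edit. The following is a minimal sketch, not part of the original steps: `add_host_entry` is a hypothetical helper that appends a mapping only when the hostname is not already present, so running it twice does not duplicate lines.

```shell
# Append "<ip> <hostname>" to a hosts file unless the hostname is already mapped.
# Arguments: <hosts-file> <ip> <hostname>
add_host_entry() {
    local file ip name
    file="$1"; ip="$2"; name="$3"
    touch "$file"
    # Look for the hostname as a whole field on any non-comment line.
    if ! awk -v n="$name" '
            !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }' "$file"; then
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
}
```

Usage on each node would look like `add_host_entry /etc/hosts 192.168.181.131 hd01`, repeated for hd02 and hd03.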

3. Configure the network

vi /etc/sysconfig/network-scripts/ifcfg-ens33

BOOTPROTO="static"

IPADDR=192.168.181.131

NETMASK=255.255.255.0

GATEWAY=192.168.181.2

DNS1=8.8.8.8

DNS2=114.114.114.114

Restart the network service:

systemctl restart network

4. Disable the firewall

systemctl stop firewalld

systemctl disable firewalld

systemctl status firewalld

Test network connectivity:

ping www.baidu.com

5. Shut down hd01 and create the full clones hd02 and hd03

On hd02, change the hostname and IP address, then connect with Xshell:

hostnamectl set-hostname hd02

vi /etc/hosts

192.168.181.131 hd01

192.168.181.132 hd02

192.168.181.133 hd03

vi /etc/sysconfig/network-scripts/ifcfg-ens33

BOOTPROTO="static"

IPADDR=192.168.181.132

NETMASK=255.255.255.0

GATEWAY=192.168.181.2

DNS1=8.8.8.8

DNS2=114.114.114.114

Restart the network service:

systemctl restart network

On hd03, change the hostname and IP address in the same way, then connect with Xshell:

hostnamectl set-hostname hd03

vi /etc/hosts

192.168.181.131 hd01

192.168.181.132 hd02

192.168.181.133 hd03

vi /etc/sysconfig/network-scripts/ifcfg-ens33

BOOTPROTO="static"

IPADDR=192.168.181.133

NETMASK=255.255.255.0

GATEWAY=192.168.181.2

DNS1=8.8.8.8

DNS2=114.114.114.114

systemctl restart network

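6. Set up passwordless SSH among the three machines

Start all three virtual machines, connect to each in Xshell, then arrange the session windows vertically (Arrange -> Vertical).

Right-click and choose "Send to all sessions" so that each command runs on every machine.

Generate a key pair. Press Enter three times to accept the defaults; the keys are written to the .ssh directory:

ssh-keygen -t rsa

cd ~/.ssh/

Copy the public key to every node. For each command, answer yes at the host-key prompt and then enter the root password:

ssh-copy-id -i id_rsa.pub root@hd01

ssh-copy-id -i id_rsa.pub root@hd02

ssh-copy-id -i id_rsa.pub root@hd03

Note: in this setup the root password happens to be "root"; enter whatever password you set.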
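Before moving on, it is worth confirming that key-based login really works between the nodes, since sshfence depends on it later. The sketch below is one way to check, under some assumptions: `hosts_needing_password` is a hypothetical helper, and the `SSH_CMD` variable is only an override hook added for illustration. `BatchMode=yes` is a standard OpenSSH option that makes ssh fail instead of prompting for a password.

```shell
# SSH_CMD is an illustrative override hook; it defaults to the real ssh client.
SSH_CMD=${SSH_CMD:-ssh}

# Print each host (given as arguments) that does NOT accept key-based root login.
hosts_needing_password() {
    local host
    for host in "$@"; do
        # BatchMode=yes: never prompt, so a missing key shows up as a failure.
        if ! "$SSH_CMD" -o BatchMode=yes -o ConnectTimeout=5 "root@${host}" true < /dev/null; then
            echo "$host"
        fi
    done
}
```

Running `hosts_needing_password hd01 hd02 hd03` on each node should print nothing once all keys are in place.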

7. Synchronize the time on all servers

yum -y install chrony

# Configure chrony

vi /etc/chrony.conf

server ntp1.aliyun.com

server ntp2.aliyun.com

server ntp3.aliyun.com

Comment out the following four lines by prefixing each with #:

#server 0.centos.pool.ntp.org iburst

#server 1.centos.pool.ntp.org iburst

#server 2.centos.pool.ntp.org iburst

#server 3.centos.pool.ntp.org iburst
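Since this edit is repeated on all three servers, it could also be scripted. The following is a rough sketch assuming GNU sed (as shipped with CentOS 7); `use_aliyun_ntp` is a hypothetical helper name. It comments out the active CentOS pool entries and adds the three Aliyun servers once each.

```shell
# Switch a chrony.conf-style file to the Aliyun NTP servers.
# Argument: <path to chrony.conf>
use_aliyun_ntp() {
    local conf n
    conf="$1"
    # Comment out active CentOS pool entries; lines already starting with # do not match.
    sed -i -E 's/^(server[[:space:]]+[0-9]\.centos\.pool\.ntp\.org.*)$/#\1/' "$conf"
    # Add each Aliyun server only if it is not listed yet.
    for n in 1 2 3; do
        grep -q "^server ntp${n}\.aliyun\.com" "$conf" || echo "server ntp${n}.aliyun.com" >> "$conf"
    done
}
```

It would be called as `use_aliyun_ntp /etc/chrony.conf`; once chronyd is running, `chronyc sources` lists the servers actually in use.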

# Start chrony

systemctl start chronyd

8. Install wget

yum install -y wget

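Install psmisc (a Linux utility package that provides fuser, which is needed during NameNode active/standby failover; it only has to be installed on the two NameNode nodes):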

yum install -y psmisc

9. Deploy the cluster

1. Install the JDK

cd /opt/

mkdir soft

yum install -y lrzsz

Drag the archives into the window, extract them, and rename the directories:

tar -zxf hadoop-2.6.0-cdh5.14.2.tar.gz -C /opt/soft/

tar -zxf jdk-8u111-linux-x64.tar.gz -C /opt/soft/

tar -zxf zookeeper-3.4.5-cdh5.14.2.tar.gz -C /opt/soft/

cd /opt/soft/

mv hadoop-2.6.0-cdh5.14.2/ hadoop260

mv jdk1.8.0_111/ jdk180

mv zookeeper-3.4.5-cdh5.14.2/ zk345

Configure the JAVA_HOME environment variable:

vi /etc/profile

#JAVA_HOME

export JAVA_HOME=/opt/soft/jdk180

export PATH=$JAVA_HOME/bin:$PATH

export CLASSPATH=.:$JAVA_HOME/lib/tools.jar:$JAVA_HOME/lib/dt.jar

Apply the environment variables:

source /etc/profile

Verify:

java -version

2. Install the ZooKeeper cluster

Configure /opt/soft/zk345/conf/zoo.cfg (run the following from /opt/soft/zk345/conf):

cp zoo_sample.cfg zoo.cfg

vi zoo.cfg

dataDir=/opt/soft/zk345/data

server.1=hd01:2888:3888

server.2=hd02:2888:3888

server.3=hd03:2888:3888

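# Under zk345, create a data directory, and inside it a myid file (the number differs on each host)

mkdir /opt/soft/zk345/data

cd /opt/soft/zk345/data

vi myid

Write 1, then save and exit. On hd02 and hd03 the file must contain 2 and 3 respectively, matching the server.N entries above.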
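Because the myid value must match the server.N line for the same host in zoo.cfg, it can be derived rather than typed by hand. The following is a small sketch under that assumption; `myid_for_host` is a hypothetical helper, not a ZooKeeper tool.

```shell
# Print the N from the "server.N=<host>:..." line for the given host.
# Arguments: <zoo.cfg path> <hostname>
myid_for_host() {
    local cfg host
    cfg="$1"; host="$2"
    sed -n "s/^server\.\([0-9][0-9]*\)=${host}:.*/\1/p" "$cfg"
}
```

On each node this could be used as `myid_for_host /opt/soft/zk345/conf/zoo.cfg "$(hostname)" > /opt/soft/zk345/data/myid`, which avoids leaving a copied myid of 1 on hd02 or hd03.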

# Configure the environment variables (append to /etc/profile)

#ZK_HOME

export ZK_HOME=/opt/soft/zk345

export PATH=$ZK_HOME/bin:$PATH

Apply:

source /etc/profile

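# Configure hd02 and hd03

With synchronized sessions enabled, create the target directory under /opt on hd02 and hd03:

mkdir -p /opt/soft

Then, from /opt/soft on hd01, copy the JDK and ZooKeeper directories over: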

scp -r jdk180/ zk345/ root@hd02:/opt/soft/

scp -r jdk180/ zk345/ root@hd03:/opt/soft/

# Start the service (on all three nodes)

zkServer.sh start

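- Install the Hadoop cluster

Extract the archive (skip this and the next command if /opt/soft/hadoop260 already exists from the JDK step above):

tar -zxf hadoop-2.6.0-cdh5.14.2.tar.gz

Move it into the installation directory:

mv hadoop-2.6.0-cdh5.14.2 soft/hadoop260

Create the working directories: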

mkdir -p /opt/soft/hadoop260/tmp

mkdir -p /opt/soft/hadoop260/dfs/journalnode_data

mkdir -p /opt/soft/hadoop260/dfs/edits

mkdir -p /opt/soft/hadoop260/dfs/datanode_data

mkdir -p /opt/soft/hadoop260/dfs/namenode_data

- Configure hadoop-env.sh

cd /opt/soft/hadoop260/etc/hadoop

export JAVA_HOME=/opt/soft/jdk180

export HADOOP_CONF_DIR=/opt/soft/hadoop260/etc/hadoop

- Configure core-site.xml

<configuration>

<!-- Default file system URI; hacluster is the HA nameservice defined in hdfs-site.xml -->

<property>

<name>fs.defaultFS</name>

<value>hdfs://hacluster</value>

</property>

<!-- Directory for temporary files Hadoop creates at runtime -->

<property>

<name>hadoop.tmp.dir</name>

<value>file:///opt/soft/hadoop260/tmp</value>

</property>

<!-- I/O buffer size in bytes; the default is 4 KB -->

<property>

<name>io.file.buffer.size</name>

<value>4096</value>

</property>

<!-- Addresses of the ZooKeeper ensemble -->

<property>

<name>ha.zookeeper.quorum</name>

<value>hd01:2181,hd02:2181,hd03:2181</value>

</property>

<!-- Hosts from which root is allowed to act as a proxy user -->

<property>

<name>hadoop.proxyuser.root.hosts</name>

<value>*</value>

</property>

<!-- Groups whose members root is allowed to impersonate -->

<property>

<name>hadoop.proxyuser.root.groups</name>

<value>*</value>

</property>

</configuration>

- Configure hdfs-site.xml

<configuration>

<property>

<!-- Default block size: 128 MB -->

<name>dfs.block.size</name>

<value>134217728</value>

</property>

<property>

<!-- Replication factor; defaults to 3 if not set -->

<name>dfs.replication</name>

<value>3</value>

</property>

<property>

<!-- Where the NameNode stores its metadata -->

<name>dfs.name.dir</name>

<value>file:///opt/soft/hadoop260/dfs/namenode_data</value>

</property>

<property>

<!-- Where the DataNode stores its block data -->

<name>dfs.data.dir</name>

<value>file:///opt/soft/hadoop260/dfs/datanode_data</value>

</property>

<property>

<!-- Enable WebHDFS (the HTTP REST interface to HDFS) -->

<name>dfs.webhdfs.enabled</name>

<value>true</value>

</property>

<property>

<!-- Number of threads a DataNode uses for file transfer operations -->

<name>dfs.datanode.max.transfer.threads</name>

<value>4096</value>

</property>

<property>

<!-- Logical name of the HA nameservice -->

<name>dfs.nameservices</name>

<value>hacluster</value>

</property>

<property>

<!-- The hacluster nameservice has two NameNodes, nn1 and nn2 -->

<name>dfs.ha.namenodes.hacluster</name>

<value>nn1,nn2</value>

</property>

<!-- RPC, service RPC, and HTTP addresses of nn1 -->

<property>

<name>dfs.namenode.rpc-address.hacluster.nn1</name>

<value>hd01:9000</value>

</property>

<property>

<name>dfs.namenode.servicerpc-address.hacluster.nn1</name>

<value>hd01:53310</value>

</property>

<property>

<name>dfs.namenode.http-address.hacluster.nn1</name>

<value>hd01:50070</value>

</property>

<!-- RPC, service RPC, and HTTP addresses of nn2 -->

<property>

<name>dfs.namenode.rpc-address.hacluster.nn2</name>

<value>hd02:9000</value>

</property>

<property>

<name>dfs.namenode.servicerpc-address.hacluster.nn2</name>

<value>hd02:53310</value>

</property>

<property>

<name>dfs.namenode.http-address.hacluster.nn2</name>

<value>hd02:50070</value>

</property>

<property>

<!-- Where the NameNodes' shared edit log is stored on the JournalNodes -->

<name>dfs.namenode.shared.edits.dir</name>

<value>qjournal://hd01:8485;hd02:8485;hd03:8485/hacluster</value>

</property>

<property>

<!-- Local disk directory where each JournalNode stores its data -->

<name>dfs.journalnode.edits.dir</name>

<value>/opt/soft/hadoop260/dfs/journalnode_data</value>

</property>

<property>

<!-- Where the NameNode stores its edit log -->

<name>dfs.namenode.edits.dir</name>

<value>/opt/soft/hadoop260/dfs/edits</value>

</property>

<property>

<!-- Enable automatic NameNode failover -->

<name>dfs.ha.automatic-failover.enabled</name>

<value>true</value>

</property>

<property>

<!-- Class that clients use to find the active NameNode after a failover -->

<name>dfs.client.failover.proxy.provider.hacluster</name>

<value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>

</property>

<property>

<!-- Fencing method -->

<name>dfs.ha.fencing.methods</name>

<value>sshfence</value>

</property>

<property>

<!-- sshfence needs passwordless SSH; this is the private key it uses -->

<name>dfs.ha.fencing.ssh.private-key-files</name>

<value>/root/.ssh/id_rsa</value>

</property>

<property>

<!-- HDFS permission checking; false disables it -->

<name>dfs.permissions.enabled</name>

<value>false</value>

</property>

</configuration>
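As far as I know, Hadoop does not reject unknown property names, so a misspelled key is silently ignored rather than reported. A quick text check for the keys you expect can catch that early. The following is a minimal sketch; `missing_properties` is a hypothetical helper, and the list of keys to check is up to you.

```shell
# Print every expected property name that does not appear as <name>...</name> in the file.
# Arguments: <site.xml path> <property-name>...
missing_properties() {
    local file key
    file="$1"
    shift
    for key in "$@"; do
        # -F: treat the dots in property names literally, not as regex wildcards.
        grep -qF "<name>${key}</name>" "$file" || echo "$key"
    done
}
```

For example, `missing_properties /opt/soft/hadoop260/etc/hadoop/hdfs-site.xml dfs.nameservices dfs.ha.namenodes.hacluster dfs.namenode.shared.edits.dir` prints nothing when all three keys are present. Once the environment variables are set, `hdfs getconf -confKey dfs.nameservices` shows the value Hadoop actually resolves.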

- Configure mapred-site.xml

<configuration>

<property>

<!-- Run MapReduce on YARN -->

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<!-- Job history server address -->

<name>mapreduce.jobhistory.address</name>

<value>hd01:10020</value>

</property>

<property>

<!-- Job history server web UI address -->

<name>mapreduce.jobhistory.webapp.address</name>

<value>hd01:19888</value>

</property>

<property>

<!-- Enable uber mode -->

<name>mapreduce.job.ubertask.enable</name>

<value>true</value>

</property>

</configuration>

- Configure yarn-site.xml

<configuration>

<property>

<!-- Enable YARN high availability -->

<name>yarn.resourcemanager.ha.enabled</name>

<value>true</value>

</property>

<property>

<!-- Name under which the YARN cluster registers in ZooKeeper -->

<name>yarn.resourcemanager.cluster-id</name>

<value>hayarn</value>

</property>

<property>

<!-- IDs of the two ResourceManagers -->

<name>yarn.resourcemanager.ha.rm-ids</name>

<value>rm1,rm2</value>

</property>

<property>

<!-- Host of rm1 -->

<name>yarn.resourcemanager.hostname.rm1</name>

<value>hd02</value>

</property>

<property>

<!-- Host of rm2 -->

<name>yarn.resourcemanager.hostname.rm2</name>

<value>hd03</value>

</property>

<property>

<!-- ZooKeeper addresses -->

<name>yarn.resourcemanager.zk-address</name>

<value>hd01:2181,hd02:2181,hd03:2181</value>

</property>

<property>

<!-- Enable ResourceManager recovery -->

<name>yarn.resourcemanager.recovery.enabled</name>

<value>true</value>

</property>

<property>

<!-- State store class used for ResourceManager recovery -->

<name>yarn.resourcemanager.store.class</name>

<value>org.apache.hadoop.yarn.server.resourcemanager.recovery.ZKRMStateStore</value>

</property>

<property>

<!-- Host of the primary ResourceManager -->

<name>yarn.resourcemanager.hostname</name>

<value>hd03</value>

</property>

<property>

<!-- Auxiliary service through which NodeManagers serve shuffle data -->

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<!-- Enable log aggregation -->

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<property>

<!-- Keep aggregated logs for 7 days -->

<name>yarn.log-aggregation.retain-seconds</name>

<value>604800</value>

</property>

</configuration>

- Configure slaves

hd01

hd02

hd03

- Distribute the installation to hd02 and hd03 (run from /opt/soft on hd01)

scp -r hadoop260/ root@hd02:/opt/soft/

scp -r hadoop260/ root@hd03:/opt/soft/

- Configure the Hadoop environment variables on all three nodes

vi /etc/profile

#hadoop

export HADOOP_HOME=/opt/soft/hadoop260

export HADOOP_MAPRED_HOME=$HADOOP_HOME

export HADOOP_COMMON_HOME=$HADOOP_HOME

export HADOOP_HDFS_HOME=$HADOOP_HOME

export YARN_HOME=$HADOOP_HOME

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

export PATH=$PATH:$HADOOP_HOME/sbin:$HADOOP_HOME/bin

export HADOOP_INSTALL=$HADOOP_HOME

Apply:

source /etc/profile

- Start the cluster

- Start ZooKeeper (on all three nodes)

zkServer.sh start

- Start the JournalNode (on all three nodes)

hadoop-daemon.sh start journalnode

- Format the NameNode (on hd01 only)

hdfs namenode -format

- Copy the NameNode metadata from hd01 to the same location on hd02

scp -r /opt/soft/hadoop260/dfs/namenode_data/current/ root@hd02:/opt/soft/hadoop260/dfs/namenode_data

- Format the failover controller (ZKFC) state in ZooKeeper, on either hd01 or hd02

hdfs zkfc -formatZK

- Start the DFS services on hd01

start-dfs.sh

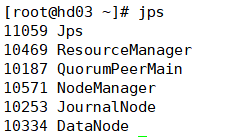

- Start the YARN services on hd03

start-yarn.sh

- Start the history server on hd01

cd /opt/soft/hadoop260/sbin

mr-jobhistory-daemon.sh start historyserver

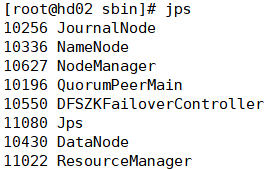

- Start the ResourceManager on hd02, then check the cluster

yarn-daemon.sh start resourcemanager

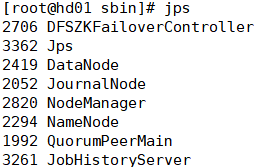

Run jps on each node; none of the services shown above may be missing.
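Comparing jps output by eye across three nodes is error-prone, so a small filter can help. The sketch below is only an illustration: `missing_daemons` is a hypothetical helper, and the list of daemons expected on each node is an assumption you would adapt to the roles configured above.

```shell
# Read jps output ("<pid> <ClassName>" per line) on stdin and print each expected
# daemon name (given as arguments) that is not in the list.
missing_daemons() {
    local running name
    running=$(awk '{ print $2 }')
    for name in "$@"; do
        # -x: whole-line match, so NameNode does not match SecondaryNameNode.
        printf '%s\n' "$running" | grep -qx "$name" || echo "$name"
    done
}
```

On hd01 it might be used as `jps | missing_daemons QuorumPeerMain JournalNode NameNode DataNode DFSZKFailoverController`; empty output means every listed daemon is running.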

- Check the status

On hd01, check the NameNode states:

hdfs haadmin -getServiceState nn1 #active

hdfs haadmin -getServiceState nn2 #standby

On hd03, check the ResourceManager states:

yarn rmadmin -getServiceState rm1 #standby

yarn rmadmin -getServiceState rm2 #active
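A healthy HA pair has exactly one active member. The check above can be turned into a pass/fail test with a small filter; this is a sketch, and `single_active` is a hypothetical helper that works on "<id> <state>" lines however they were collected.

```shell
# Read "<id> <state>" lines on stdin. Print the active ID and succeed only if
# exactly one ID is active; otherwise print nothing and return non-zero.
single_active() {
    awk '$2 == "active" { id = $1; n++ }
         END { if (n == 1) print id; exit (n != 1) }'
}
```

For the NameNodes, the input could be produced with `for nn in nn1 nn2; do echo "$nn $(hdfs haadmin -getServiceState "$nn")"; done | single_active`; the same pattern applies to rm1 and rm2 with `yarn rmadmin`.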

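- Test active/standby failover

Kill the active NameNode, then check the state of the standby NameNode:

kill -9 <PID of the active NameNode process>

When the killed NameNode is restarted, it rejoins the cluster only as a standby: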

hadoop-daemon.sh start namenode
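The kill step in this test needs the NameNode PID, which jps reports. One way to extract it is sketched below; `namenode_pid` is a hypothetical helper name.

```shell
# Read jps output on stdin and print the PID of the NameNode process.
# Matching field 2 exactly avoids picking up JournalNode or SecondaryNameNode.
namenode_pid() {
    awk '$2 == "NameNode" { print $1 }'
}
```

On the node that currently holds the active NameNode, the kill would then be `kill -9 "$(jps | namenode_pid)"`.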

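- Starting the cluster again later

ZooKeeper must already be running on all three nodes. Start DFS on hd01:

start-dfs.sh

Start YARN on hd03:

start-yarn.sh

Start the ResourceManager on hd02: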

yarn-daemon.sh start resourcemanager
