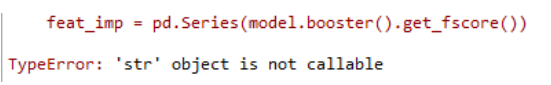

I got an error when trying to obtain feature importances from an XGBoost model. The code and the error are as follows:

    import matplotlib.pyplot as plt
    import pandas as pd
    import xgboost as xgb
    from sklearn import metrics
    from xgboost.sklearn import XGBClassifier


    def modelfit(alg, dtrain, y_train, dtest=None, useTrainCV=True, cv_folds=5, early_stopping_rounds=50):
        # Features are every column except the first one.
        # .values replaces as_matrix(), which was removed in pandas 1.0.
        X = dtrain.values[:, 1:]

        if useTrainCV:
            # Cross-validate with early stopping to choose the number of boosting rounds.
            xgb_param = alg.get_xgb_params()
            xgtrain = xgb.DMatrix(X, label=y_train)
            # xgtest = xgb.DMatrix(dtest.values[:, :])
            cvresult = xgb.cv(xgb_param, xgtrain,
                              num_boost_round=alg.get_params()['n_estimators'],
                              nfold=cv_folds,
                              early_stopping_rounds=early_stopping_rounds)
            alg.set_params(n_estimators=cvresult.shape[0])

        # Fit the model.
        # Note: recent XGBoost releases take eval_metric in the constructor rather than in fit().
        alg.fit(X, y_train, eval_metric='auc')

        # Predict on the training set.
        dtrain_predictions = alg.predict(X)
        dtrain_predprob = alg.predict_proba(X)[:, 1]

        # Print some results for the model.
        # print(dtrain_predictions)
        # print(alg.predict_proba(X))
        # Number of boosting rounds in use (equals cvresult.shape[0] when CV ran).
        print(alg.get_params()['n_estimators'])
        print("\nModel report")
        print("Accuracy : %.4g" % metrics.accuracy_score(y_train, dtrain_predictions))
        print("AUC score (train): %f" % metrics.roc_auc_score(y_train, dtrain_predprob))

        # get_booster() (not booster()) returns the underlying Booster.
        feat_imp = pd.Series(alg.get_booster().get_fscore()).sort_values(ascending=False)
        print(feat_imp.head(25))
        print(feat_imp.shape)
        feat_imp.plot(kind='bar', title='Feature Importances')
        plt.ylabel('Feature Importance Score')


    xgb1 = XGBClassifier(
        learning_rate=0.1,
        n_estimators=50,
        max_depth=4,
        min_child_weight=1,
        gamma=0,
        subsample=0.8,
        colsample_bytree=0.8,
        objective='binary:logistic',
        nthread=4,
        scale_pos_weight=0.8,
        seed=27
    )

    # X_train and y_train are the training data prepared earlier (not shown here).
    modelfit(xgb1, X_train, y_train)

When I first ran into this problem, I was completely confused.

I found the solution after reading https://stackoverflow.com/questions/38212649/feature-importance-with-xgbclassifier.

The fix is simply to change model.booster() to model.get_booster() in the line that reads the feature scores.
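If the same script has to run against both older and newer XGBoost installs, the rename can be hidden behind a small wrapper. The following is only a minimal sketch: `get_booster_compat` is a hypothetical helper name of my own, not part of XGBoost, and it assumes the only difference between versions is the accessor name. In releases where `booster` is a plain string parameter rather than a method (which, as far as I can tell, is why calling it fails), the `callable` check skips it.

```python
def get_booster_compat(model):
    """Return the underlying Booster of an XGBoost sklearn-style model.

    Hypothetical helper (not an XGBoost API): prefers get_booster() and
    falls back to the older booster() method when that is all there is.
    """
    getter = getattr(model, "get_booster", None)
    if callable(getter):
        return getter()

    # Older accessor name; only use it if it is really a method,
    # not a string parameter such as 'gbtree'.
    legacy = getattr(model, "booster", None)
    if callable(legacy):
        return legacy()

    raise AttributeError("model exposes neither get_booster() nor booster()")
```

With this in place, the feature-importance line could be written as `pd.Series(get_booster_compat(alg).get_fscore())`. If only one XGBoost version is in play, calling `get_booster()` directly is simpler.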

Reference: https://blog.csdn.net/u012735708/article/details/84334218
