Environment preparation

| IP | Hostname | Node role | Services |
|---|---|---|---|
| 10.0.0.1 | flink-01 | master, worker | JobManager, TaskManager, Dinky |
| 10.0.0.2 | flink-02 | worker | TaskManager |
| 10.0.0.3 | flink-03 | worker | TaskManager |

Install the JDK

yum install java-11-openjdk.x86_64 java-11-openjdk-devel.x86_64 -y

Configure hosts

vim /etc/hosts

10.0.0.1 flink-01

10.0.0.2 flink-02

10.0.0.3 flink-03
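
Editing /etc/hosts by hand on three machines is easy to get wrong, so the same entries can also be added with a small script. The following is a minimal sketch and not part of the original steps: `add_host_entry` is a hypothetical helper name, and it assumes a POSIX shell with awk and write access to the target file.

```shell
# add_host_entry FILE IP NAME
# Appends "IP NAME" to FILE unless a non-comment line already lists NAME.
add_host_entry() (
    file=$1 ip=$2 name=$3
    # Look for NAME as a whole whitespace-delimited field after the address column.
    if ! awk -v n="$name" '!/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 } END { exit !found }' "$file"; then
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
)
```

For example, running `add_host_entry /etc/hosts 10.0.0.1 flink-01` (and likewise for flink-02 and flink-03) as root on each node leaves existing entries untouched, so it can be run more than once.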

Configure passwordless SSH

- On flink-01, generate a public/private key pair. Press Enter at every prompt until the key pair has been created.

ssh-keygen -t rsa

- Copy the public key generated on flink-01 to flink-01, flink-02, and flink-03

ssh-copy-id root@flink-01

ssh-copy-id root@flink-02

ssh-copy-id root@flink-03
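
Before moving on, it may be worth confirming that the key was actually installed on every node, because start-cluster.sh relies on SSH to reach the workers. The sketch below is one way to check this. `check_ssh` is a hypothetical helper, and `SSH_BIN` is an invented override variable (not a standard one) that only exists so the function can be dry-run without real hosts.

```shell
# check_ssh HOST...
# Prints "ok HOST" or "FAIL HOST" for each host; exit status is non-zero if any host failed.
check_ssh() (
    status=0
    for host in "$@"; do
        # BatchMode=yes makes ssh fail instead of falling back to a password prompt.
        if ${SSH_BIN:-ssh} -o BatchMode=yes -o ConnectTimeout=5 "root@$host" true </dev/null; then
            echo "ok $host"
        else
            echo "FAIL $host"
            status=1
        fi
    done
    exit "$status"
)
```

Run on flink-01 as `check_ssh flink-01 flink-02 flink-03`. Any FAIL line suggests repeating the ssh-copy-id step for that host.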

1. Flink cluster deployment

Steps on the master node

- Download the Flink installation package

cd /opt && wget https://archive.apache.org/dist/flink/flink-1.15.4/flink-1.15.4-bin-scala_2.12.tgz

- Extract the archive and create a symlink

tar xf flink-1.15.4-bin-scala_2.12.tgz

ln -s flink-1.15.4 flink

- Edit the configuration file flink-conf.yaml

vim /opt/flink/conf/flink-conf.yaml

jobmanager.rpc.address: 10.0.0.1

jobmanager.bind-host: 0.0.0.0

taskmanager.bind-host: 0.0.0.0

taskmanager.host: flink-01

taskmanager.memory.process.size: 14400m # total memory available to the TaskManager process

taskmanager.numberOfTaskSlots: 3 # number of task slots per TaskManager, usually equal to the number of CPU cores

rest.address: flink-01

rest.bind-address: 0.0.0.0

- Edit the node files

vim /opt/flink/conf/masters

10.0.0.1:8081

vim /opt/flink/conf/workers

10.0.0.1

10.0.0.2

10.0.0.3

- Copy the Flink directory to flink-02 and flink-03

scp -r flink-1.15.4/ root@flink-02:/opt

scp -r flink-1.15.4/ root@flink-03:/opt

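Steps on the worker nodes (repeat on both flink-02 and flink-03)

- Create the symlink

cd /opt && ln -s flink-1.15.4 flink

- Edit the configuration file /opt/flink/conf/flink-conf.yaml

taskmanager.host: 10.0.0.2 # set to the server's own IP: 10.0.0.2 on flink-02, 10.0.0.3 on flink-03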
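
Because the copied flink-conf.yaml differs between nodes only in `taskmanager.host`, that single key can be changed with a script instead of an editor. The following is a rough sketch under stated assumptions: `set_conf` is a hypothetical helper, it only handles flat `key: value` lines that start at the beginning of a line, and it does not touch commented-out entries.

```shell
# set_conf FILE KEY VALUE
# Rewrites every line that starts with "KEY:" to "KEY: VALUE", or appends the line if KEY is absent.
set_conf() (
    file=$1 key=$2 value=$3
    tmp=$(mktemp) || exit 1
    awk -v k="$key" -v v="$value" '
        index($0, k ":") == 1 { print k ": " v; done = 1; next }  # replace an existing entry
        { print }                                                   # keep all other lines
        END { if (!done) print k ": " v }                           # append when the key was missing
    ' "$file" > "$tmp" && cat "$tmp" > "$file"
    rc=$?
    rm -f "$tmp"
    exit "$rc"
)
```

On flink-02 this would be called as `set_conf /opt/flink/conf/flink-conf.yaml taskmanager.host 10.0.0.2`, and with 10.0.0.3 on flink-03. Writing back with `cat` keeps the original file's ownership and permissions.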

Start the Flink cluster (run on the master node)

cd /opt/flink/bin && ./start-cluster.sh
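
Once the script returns, a quick way to see whether all three TaskManagers registered is to query the JobManager's REST endpoint `/overview` on port 8081. The sketch below assumes curl is installed. `json_int` is a hypothetical helper that pulls one integer field out of a flat JSON object with sed, which is enough for this response but is not a general JSON parser.

```shell
# json_int KEY
# Reads a flat JSON object on stdin and prints the integer value stored under KEY.
json_int() {
    sed -n 's/.*"'"$1"'":[[:space:]]*\([0-9][0-9]*\).*/\1/p'
}
```

For example, `curl -s http://10.0.0.1:8081/overview | json_int taskmanagers` should print 3 for the cluster described here, and `json_int slots-total` should print 9 (three TaskManagers with three slots each). A smaller number usually means a worker failed to start or cannot reach the JobManager.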

2. Dinky deployment (on flink-01)

- Download the Dinky installation package

cd /opt/ && wget https://github.com/DataLinkDC/dlink/releases/download/v0.7.5/dlink-release-0.7.5.tar.gz

- Extract the archive and create a symlink

tar xf dlink-release-0.7.5.tar.gz && ln -s dlink-release-0.7.5 dinky

- Create the database and user

# Log in to MySQL

mysql -h 10.0.0.1 -uroot -proot@123

# Create the database and grant privileges

mysql> create database dinky;

mysql> grant all privileges on dinky.* to 'dinky'@'%' identified by 'dinky' with grant option;

mysql> flush privileges;
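
The GRANT statement above creates the account implicitly through `identified by`, a form that MySQL 8.0 no longer accepts. The original does not say which MySQL version is in use, so the following is only a sketch for the case where the server is 8.0 or later: it splits the step into CREATE USER followed by GRANT. `dinky_grant_sql` is a hypothetical helper that prints the SQL so it can be reviewed before being piped to the client.

```shell
# dinky_grant_sql USER PASSWORD
# Prints SQL that creates the dinky database and account in two steps (CREATE USER, then GRANT).
dinky_grant_sql() {
    cat <<EOF
CREATE DATABASE IF NOT EXISTS dinky;
CREATE USER IF NOT EXISTS '$1'@'%' IDENTIFIED BY '$2';
GRANT ALL PRIVILEGES ON dinky.* TO '$1'@'%' WITH GRANT OPTION;
FLUSH PRIVILEGES;
EOF
}
```

It could be applied with `dinky_grant_sql dinky dinky | mysql -h 10.0.0.1 -uroot -p`, which prompts for the root password instead of putting it on the command line.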

- Initialize the database

# Log in as the dinky user here

mysql -h 10.0.0.1 -udinky -pdinky

mysql> source /opt/dinky/sql/dinky.sql

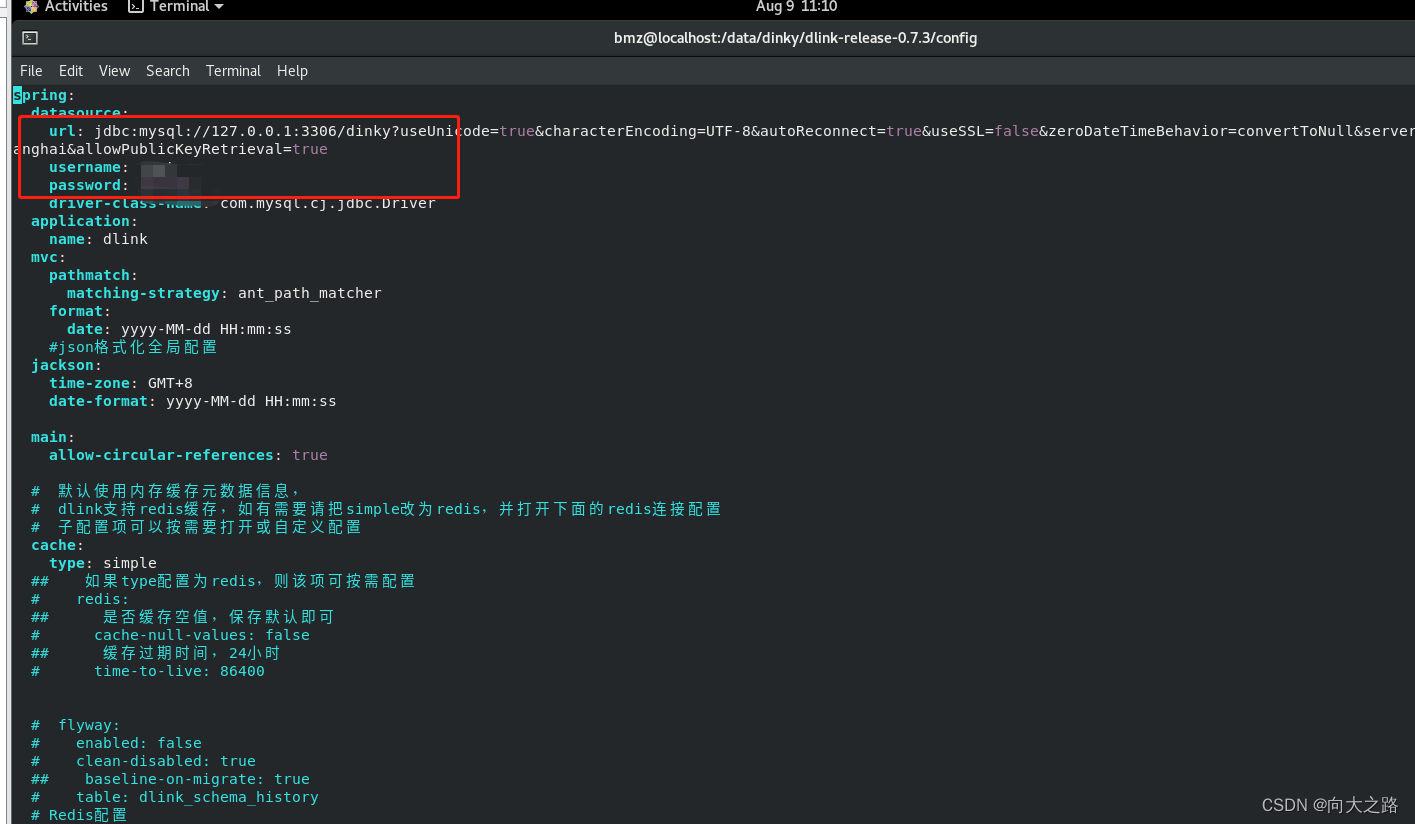

- Edit the configuration file

vim /opt/dinky/config/application.yml

url: jdbc:mysql://${MYSQL_ADDR:10.0.0.1:3306}/${MYSQL_DATABASE:dinky}?useUnicode=true&characterEncoding=UTF-8&autoReconnect=true&useSSL=false&zeroDateTimeBehavior=convertToNull&serverTimezone=Asia/Shanghai&allowPublicKeyRetrieval=true

username: ${MYSQL_USERNAME:dinky}

password: ${MYSQL_PASSWORD:dinky}

- Start Dinky

cd /opt/dinky && sh auto.sh start

- Open the web UI (default username/password: admin/admin)

10.0.0.1:8888
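
The `${MYSQL_ADDR:10.0.0.1:3306}` style entries in application.yml are Spring placeholders: the text after the first colon is a default that applies only when no property or environment variable of that name is defined. The sketch below mimics that resolution in shell to show which database Dinky would connect to. `effective_jdbc_url` is a hypothetical helper, and whether auto.sh passes the environment through to the JVM unchanged is an assumption worth verifying on your installation.

```shell
# effective_jdbc_url
# Prints the JDBC URL prefix that results from the defaults in application.yml.
# ${VAR-default} (no colon) falls back only when VAR is unset, which is the closest
# shell equivalent of Spring's ${VAR:default}.
effective_jdbc_url() {
    printf 'jdbc:mysql://%s/%s\n' "${MYSQL_ADDR-10.0.0.1:3306}" "${MYSQL_DATABASE-dinky}"
}
```

With nothing exported, the function prints `jdbc:mysql://10.0.0.1:3306/dinky`. After `export MYSQL_ADDR=10.0.0.5:3306` (an invented address for illustration) it prints the overridden host, which is the value Dinky would pick up on its next start if the variable reaches the Java process.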

3. Whole-database synchronization

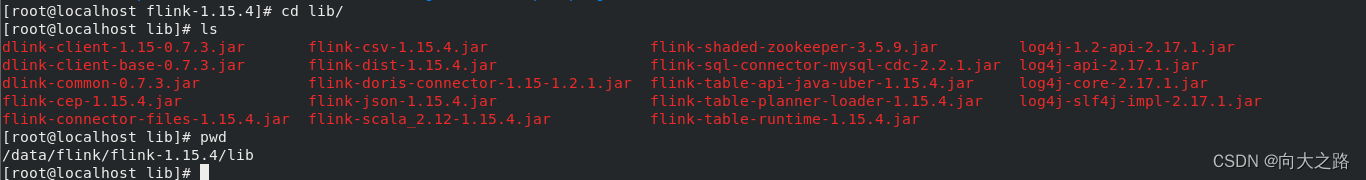

- Copy the Dinky whole-database synchronization dependencies into flink/lib

cp /opt/dinky/lib/dlink-client-base-0.7.5.jar /opt/flink/lib/

cp /opt/dinky/lib/dlink-common-0.7.5.jar /opt/flink/lib/

cp /opt/dinky/plugins/flink1.15/dinky/dlink-client-1.15-0.7.5.jar /opt/flink/lib/

- Download the other dependencies into flink/lib (run the following commands from /opt/flink/lib)

wget https://repo.maven.apache.org/maven2/org/apache/flink/flink-connector-jdbc/1.15.4/flink-connector-jdbc-1.15.4.jar

wget https://repo.maven.apache.org/maven2/org/apache/doris/flink-doris-connector-1.15/1.4.0/flink-doris-connector-1.15-1.4.0.jar

wget https://repo1.maven.org/maven2/com/ververica/flink-sql-connector-mysql-cdc/2.4.2/flink-sql-connector-mysql-cdc-2.4.2.jar

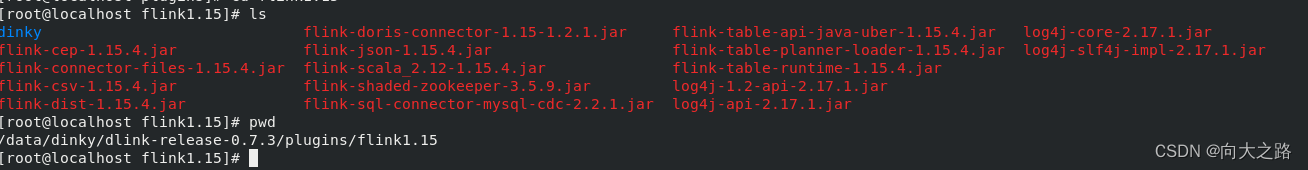

- Copy all JARs under flink/lib into the matching version directory under Dinky's plugins

cd /opt/flink/lib && cp * /opt/dinky/plugins/flink1.15/

- Restart Flink

cd /opt/flink/bin && ./stop-cluster.sh

./start-cluster.sh

- Restart Dinky

cd /opt/dinky && sh auto.sh stop

sh auto.sh start 1.15

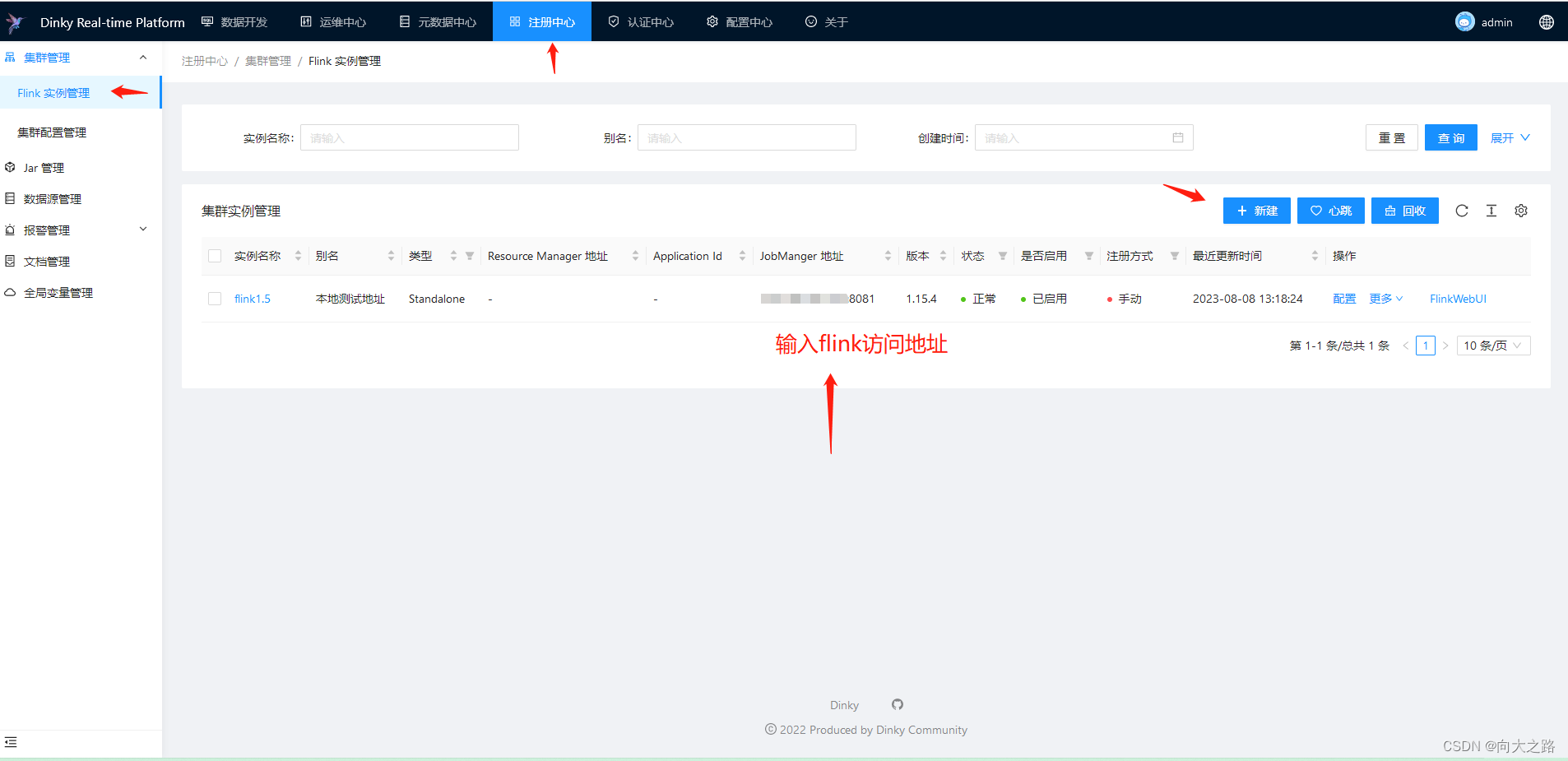
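
If a job later fails with a class-not-found error, a likely cause is that one of the JARs from the steps above never reached a lib directory. The following sketch lists whatever is absent. `missing_jars` is a hypothetical helper that checks for the files by exact name.

```shell
# missing_jars DIR JAR...
# Prints the name of each JAR that is not present in DIR; exit status is non-zero if any is missing.
missing_jars() (
    dir=$1
    shift
    status=0
    for jar in "$@"; do
        if [ ! -f "$dir/$jar" ]; then
            echo "$jar"
            status=1
        fi
    done
    exit "$status"
)
```

For this setup it could be called as `missing_jars /opt/flink/lib dlink-client-base-0.7.5.jar dlink-common-0.7.5.jar dlink-client-1.15-0.7.5.jar flink-connector-jdbc-1.15.4.jar flink-doris-connector-1.15-1.4.0.jar flink-sql-connector-mysql-cdc-2.4.2.jar`, and again with /opt/dinky/plugins/flink1.15 as the directory. No output means all six files are in place.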

Using the web UI

Open 10.0.0.1:8888 (default username/password: admin/admin)

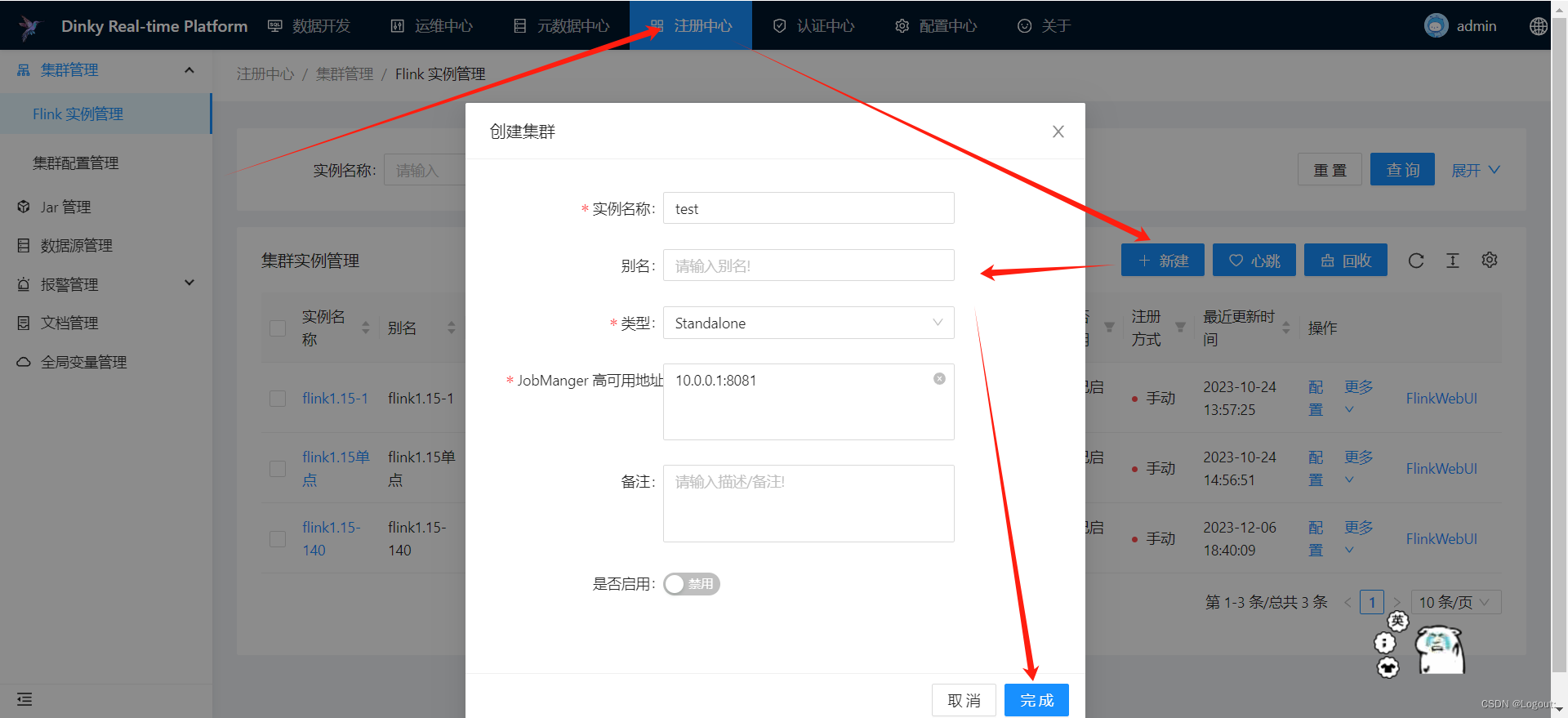

- 添加注册中心

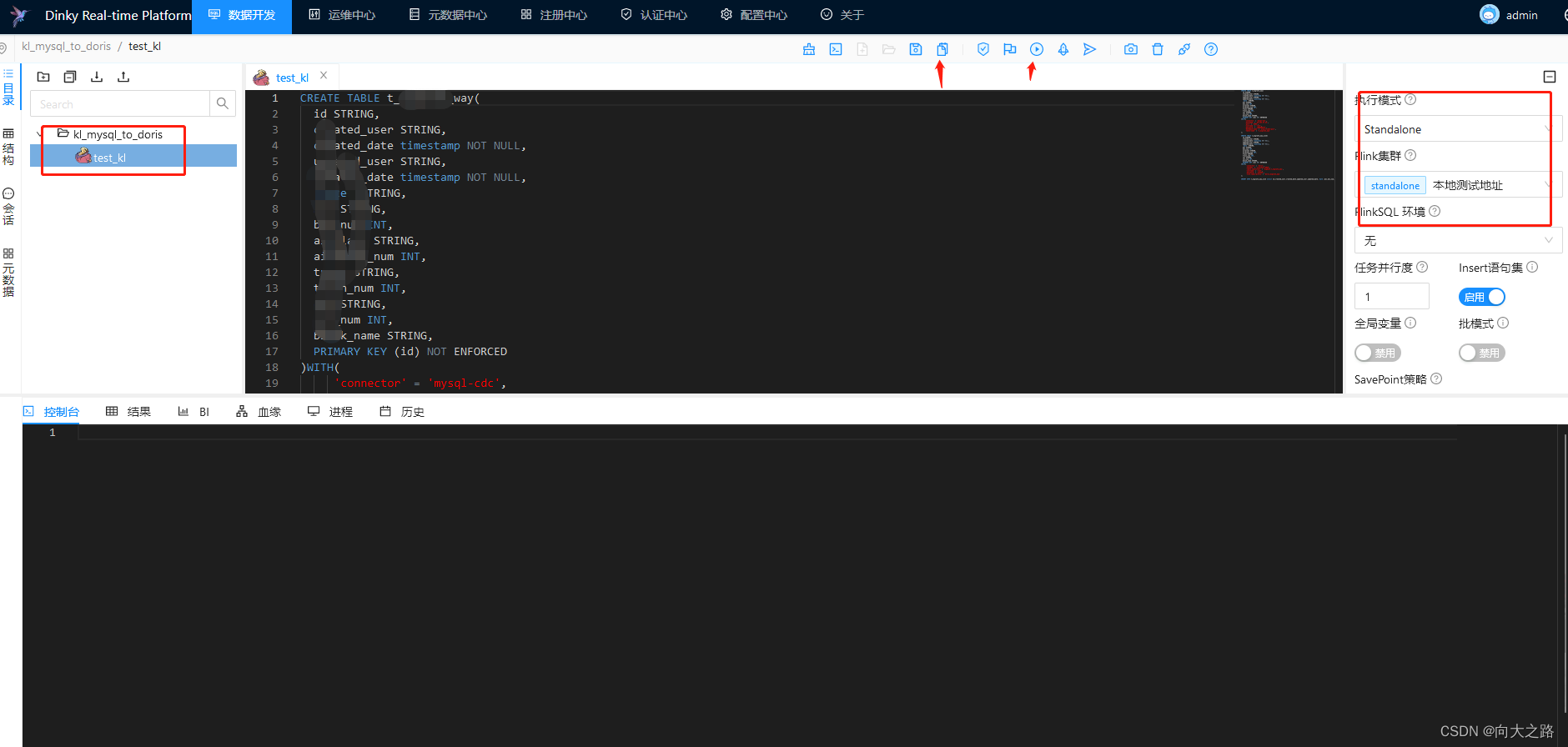

- 创建整库同步作业

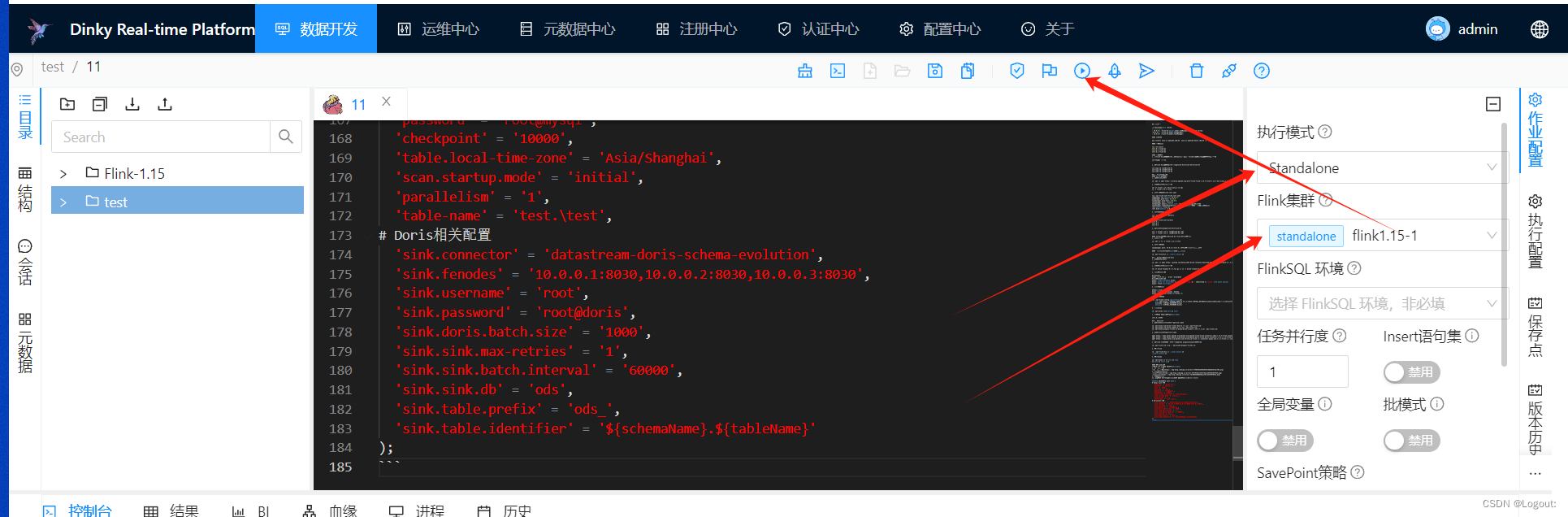

- 编辑整库作业

由于bug问题 要同步到Doris库的表 需要先手动在Doris中创建好

EXECUTE CDCSOURCE test WITH (

-- MySQL settings

'connector' = 'mysql-cdc',

'hostname' = '10.0.0.1',

'port' = '3306',

'username' = 'root',

'password' = 'root@mysql',

'checkpoint' = '10000',

'table.local-time-zone' = 'Asia/Shanghai',

'scan.startup.mode' = 'initial',

'parallelism' = '1',

'table-name' = 'test\.test',

-- Doris settings

'sink.connector' = 'datastream-doris-schema-evolution',

'sink.fenodes' = '10.0.0.1:8030,10.0.0.2:8030,10.0.0.3:8030',

'sink.username' = 'root',

'sink.password' = 'root@doris',

'sink.doris.batch.size' = '1000',

'sink.sink.max-retries' = '1',

'sink.sink.batch.interval' = '60000',

'sink.sink.db' = 'ods',

'sink.table.prefix' = 'ods_',

'sink.table.identifier' = '${schemaName}.${tableName}'

);

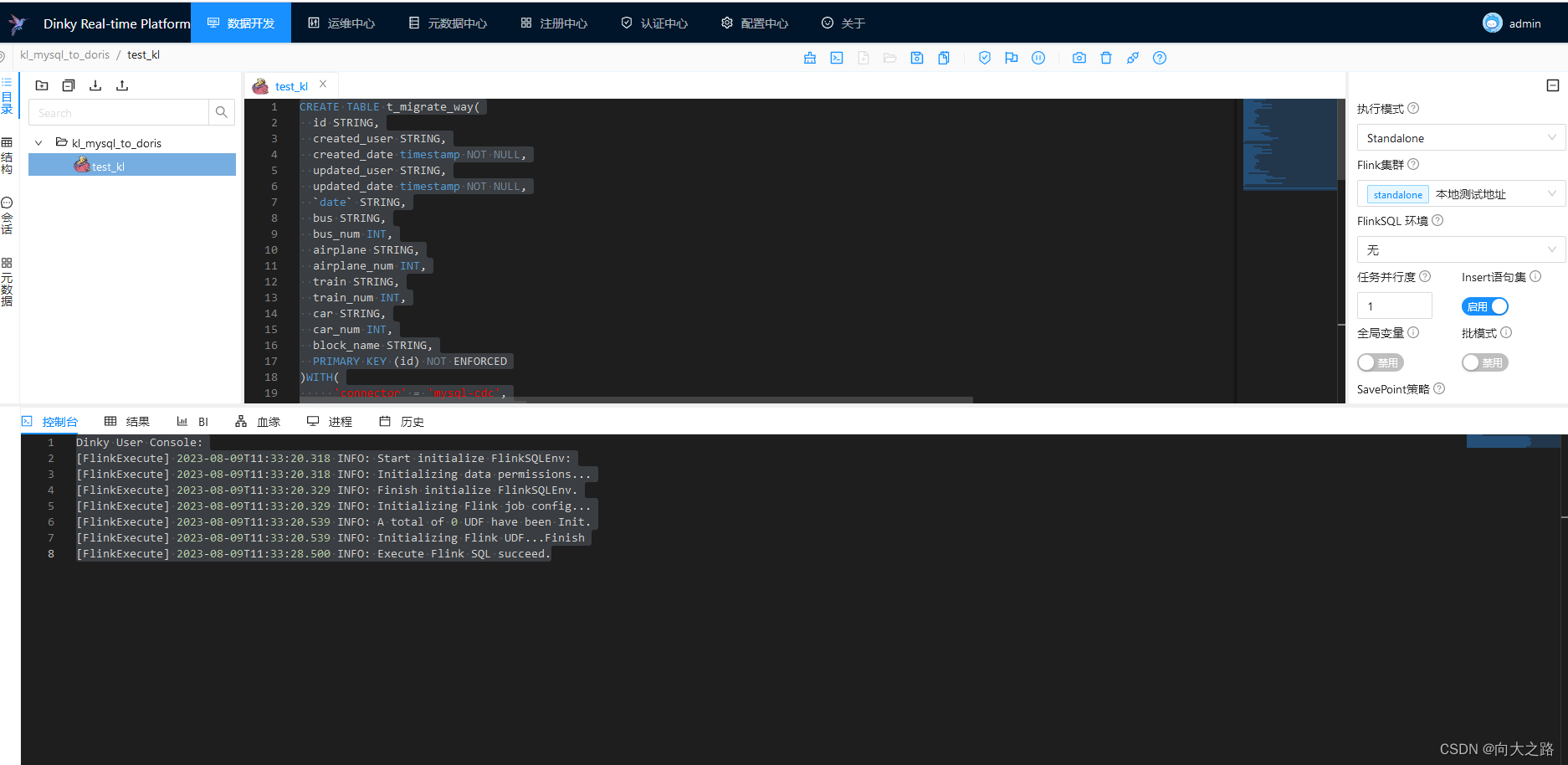

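- Select the execution mode and the cluster, then start the job

I. Download the software

Flink 1.15.1: https://download.csdn.net/download/qq_37247664/88192279

Dinky 0.7.3: https://download.csdn.net/download/qq_37247664/88192354

Flink CDC + Doris connectors: https://download.csdn.net/download/qq_37247664/88192362

II. Install the software

Flink installation: after downloading Flink, extract the archive with tar -zxvf <archive file name>

Put the downloaded Flink CDC and Doris connector JARs into Flink's lib directory

In the Flink YAML file under conf, set rest.bind-address: 0.0.0.0

Start Flink with bin/start-cluster.sh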

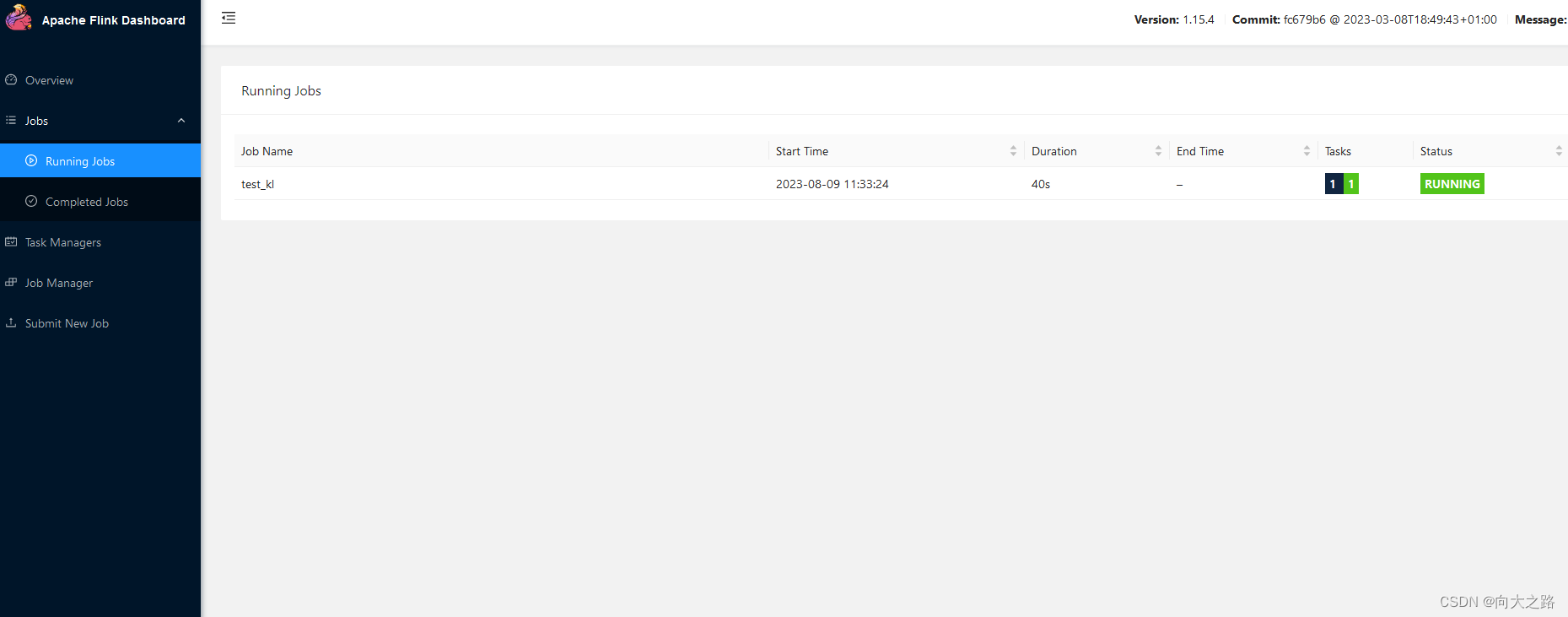

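Flink is now running, and its web page can be reached at ip:8081

Dinky installation: after downloading Dinky, extract the archive with tar -zxvf <archive file name>

Edit application.yml under config; the main change is the database connection

Import the SQL scripts shipped with Dinky into the dinky database.

Copy the JARs from the lib directory of Flink 1.15 into plugins/flink1.15 under Dinky

The three Dinky JARs dlink-common-0.7.3.jar, dlink-client-1.15-0.7.3.jar, and dlink-client-base-0.7.3.jar must be put into Flink's lib directory

Start Dinky with ./auto.sh start 1.15 to use the 1.15 version

Access: ip:8888

III. Create a job with Dinky

When registering the Flink cluster, select standalone

Data development

Test script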

CREATE TABLE t_migrate_way(

id STRING,

created_user STRING,

created_date timestamp NOT NULL,

updated_user STRING,

updated_date timestamp NOT NULL,

`date` STRING,

bus STRING,

bus_num INT,

airplane STRING,

airplane_num INT,

train STRING,

train_num INT,

car STRING,

car_num INT,

block_name STRING,

PRIMARY KEY (id) NOT ENFORCED

)WITH(

'connector' = 'mysql-cdc',

'hostname' = '127.0.0.1',

'port' = '3306',

'username' = 'zc',

'password' = '123456',

'database-name' = '<database name>',

'table-name' = '<table name>'

);

CREATE TABLE t_migrate_way_sink(

id STRING,

created_user STRING,

created_date timestamp NOT NULL,

updated_user STRING,

updated_date timestamp NOT NULL,

`date` STRING,

bus STRING,

bus_num INT,

airplane STRING,

airplane_num INT,

train STRING,

train_num INT,

car STRING,

car_num INT,

block_name STRING,

PRIMARY KEY (id) NOT ENFORCED

)WITH(

'connector' = 'doris',

'fenodes' = 'localhost:8030',

'table.identifier' = 'bigdata.t_migrate_way',

'username' = '<username>',

'password' = '<password>',

'sink.label-prefix' = '<unique label prefix>'

);

INSERT INTO t_migrate_way_sink select id,created_user,created_date,updated_user,updated_date,`date`,bus,bus_num,airplane,airplane_num,train,train_num,car,car_num,block_name from t_migrate_way;

IV. Result

Note that core parameters, such as those of the Flink job, must be configured by yourself.
