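Big Data Environment Setup

1. Docker Installation

1.1 Installing Docker on CentOS

# The image is fairly large, so make sure you have a stable network connection

# --mirror Aliyun tells the install script to use the Aliyun mirror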

curl -fsSL https://get.docker.com | bash -s docker --mirror Aliyun

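1.2 Installing Docker on Ubuntu [recommended]

curl -fsSL https://get.docker.com | bash -s docker --mirror Aliyun

If the install script fails on CentOS, add the Docker repository and install with yum instead (these yum commands do not apply to Ubuntu; yum-config-manager is provided by the yum-utils package)

yum install -y yum-utils

yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

yum install docker-ce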

1.3 Installing Docker on macOS

# Download the installer, then drag and drop to install

https://hub.docker.com/editions/community/docker-ce-desktop-mac/

1.4 Installing Docker on Windows [not recommended]

# Windows 10 Home [reference]

https://docs.docker.com/docker-for-windows/install-windows-home/

# Windows 10 Pro, Enterprise, or Education [reference]

https://docs.docker.com/docker-for-windows/install/

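2. Container Preparation

# Switch to root (enter the root password when prompted) and start the Docker service

su root

systemctl start docker

# On later sessions only, once the container from 2.2 exists, start it again with:

docker start singleNode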

2.1 Pull the Image

docker pull centos:7

2.2 Create and Start the Container

docker run -itd --privileged --name singleNode -h singleNode \
  -p 2222:22 \
  -p 3306:3306 \
  -p 8020:8020 \
  -p 9870:9870 \
  -p 19888:19888 \
  -p 8088:8088 \
  -p 9083:9083 \
  -p 10000:10000 \
  -p 2181:2181 \
  -p 9092:9092 \
  -p 8091:8091 \
  -p 8080:8080 \
  -p 16010:16010 \
  -p 4000:4000 \
  -p 3000:3000 \
  centos:7 /usr/sbin/init
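
The long list of -p flags is easier to maintain when it is generated from a list of ports. The following is a minimal sketch, not part of the original guide; the names PORTS and build_run_args are illustrative. It only prints the command so that it can be reviewed before being run.

```bash
# Ports published with the same number on host and container.
# Port 22 is handled separately because it is remapped to 2222 on the host.
PORTS="3306 8020 9870 19888 8088 9083 10000 2181 9092 8091 8080 16010 4000 3000"

build_run_args() {
  # Emit one docker argument per line.
  printf '%s\n' run -itd --privileged --name singleNode -h singleNode -p 2222:22
  for port in $PORTS; do
    printf '%s\n' -p "${port}:${port}"
  done
  printf '%s\n' centos:7 /usr/sbin/init
}

# Print the full command on one line for review; nothing is executed here.
echo "docker $(build_run_args | tr '\n' ' ')"
```

Adding or removing a service then only means editing the PORTS list.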

# Port mappings (host:container)

2222:22 # SSH

3306:3306 # MySQL

8020:8020 # HDFS RPC

9870:9870 # HDFS web UI

19888:19888 # MapReduce JobHistory web UI

8088:8088 # YARN web UI

9083:9083 # Hive metastore

10000:10000 # HiveServer2

2181:2181 # ZooKeeper

9092:9092 # Kafka

8091:8091 # Flink

2.3 Enter the Container

docker exec -it singleNode /bin/bash

3. Environment Preparation

3.1 Install Required Software

yum clean all

yum -y install unzip bzip2-devel vim

yum install kde-l10n-Chinese -y

yum install glibc-common -y

localedef -c -f UTF-8 -i zh_CN zh_CN.utf8

echo "LANG=zh_CN.UTF-8" >> /etc/locale.conf

echo "export LC_ALL=zh_CN.UTF-8" >> ~/.bashrc

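3.2 Configure Passwordless SSH Login

# Change the root password

passwd root # type the new password twice

# Install the required SSH packages

yum install -y openssh openssh-server openssh-clients openssl openssl-devel

# Generate a key pair

ssh-keygen -t rsa -f ~/.ssh/id_rsa -P ''

# Authorize the public key for passwordless login

cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

# Option 2: use ssh-copy-id instead of the cat command above

# Start the SSH service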

systemctl start sshd
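
To confirm that key-based login works before moving on, a check like the one below can help. It is a sketch under the assumption that the key was generated for root as shown above; the function name check_passwordless_ssh is illustrative.

```bash
check_passwordless_ssh() {
  # $1: host to test, for example localhost or singleNode.
  # BatchMode=yes makes ssh fail instead of prompting for a password,
  # so a zero exit status means key-based login succeeded.
  ssh -o BatchMode=yes -o StrictHostKeyChecking=no -o ConnectTimeout=5 "root@$1" true
}
```

Inside the container, `check_passwordless_ssh localhost && echo "passwordless SSH works"` should print the message without asking for a password.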

3.3 Set the Time Zone

cp /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

3.4 Disable the Firewall

yum -y install firewalld

systemctl stop firewalld

systemctl disable firewalld

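3.5 Time Synchronization, Static IP, and Host Mapping

- Because this course builds the environment with Docker, a static IP and host mapping do not need to be configured

- Because this course sets up a single-node pseudo-distributed cluster, time synchronization can be skipped

- For a fully distributed multi-node cluster on physical machines, all three must be configured

To reach the container over SSH, connect to the host machine's IP address on the host port mapped to the container's port 22 (2222 in this guide)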


4. MySQL Installation

4.1 Upload and Extract the Package

cd /opt/software/

tar xvf MySQL-5.5.40-1.linux2.6.x86_64.rpm-bundle.tar
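
The guide expects the installation packages to already be in /opt/software inside the container. One possible way to get them there, run on the Docker host, is docker cp. This is a sketch under assumptions: the local directory ./packages is hypothetical, and copy_packages is an illustrative name.

```bash
copy_packages() {
  # $1: local directory holding the downloaded archives (hypothetical path)
  # $2: container name (singleNode in this guide)
  # Create the two directories used throughout the guide.
  docker exec "$2" mkdir -p /opt/software /opt/install
  # A source ending in "/." copies the directory's contents, not the directory itself.
  docker cp "$1/." "$2:/opt/software/"
}
```

For example, `copy_packages ./packages singleNode` copies everything in ./packages into /opt/software in the container.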

4.2 Install Required Dependencies

yum -y install libaio perl

4.3 Install the Server and Client

rpm -ivh MySQL-server-5.5.40-1.linux2.6.x86_64.rpm

rpm -ivh MySQL-client-5.5.40-1.linux2.6.x86_64.rpm

4.4 Start and Configure MySQL

Option 1

# Start the service

systemctl start mysql

# Set the MySQL root password

/usr/bin/mysqladmin -u root password 'root'

# Log in to MySQL and set up remote access

mysql -uroot -proot

> update mysql.user set host='%' where host='localhost';

> delete from mysql.user where host<>'%' or user='';

> flush privileges;

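Option 2

# Start the service

systemctl start mysql

# Run the MySQL initialization script

/usr/bin/mysql_secure_installation

# Press Enter once (the current password is empty), then type the new password twice to set it

# Remove anonymous users? [Y/n] (whether to remove the anonymous users): n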

# Disallow root login remotely? [Y/n] (whether to forbid remote root login; answer n to keep remote login allowed): n

# Remove test database and access to it? [Y/n] (whether to remove the test database): n

# Reload privilege tables now? [Y/n] (whether to reload the privilege tables now): y

# Log in to MySQL and set up remote access

mysql -uroot -proot

> update mysql.user set host='%' where host='localhost';

> delete from mysql.user where host<>'%' or user='';

> flush privileges;

5. JDK Installation

5.1 Upload and Extract

tar zxvf /opt/software/jdk-8u171-linux-x64.tar.gz -C /opt/install/

ln -s /opt/install/jdk1.8.0_171 /opt/install/java

5.2 Configure Environment Variables

Add the environment variables to ~/.bashrc

vi ~/.bashrc

-------------------------------------------

export JAVA_HOME=/opt/install/java

export PATH=$JAVA_HOME/bin:$PATH

-------------------------------------------

source ~/.bashrc
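
This guide edits ~/.bashrc by hand several times. If you prefer to script those edits, a small helper that appends a line only when it is not already present keeps the file free of duplicates. This is a sketch; append_once is an illustrative name, not something the original uses.

```bash
append_once() {
  # $1: file to edit, $2: exact line to add.
  # grep -qxF matches the whole line as a literal string, so the line is
  # appended only if an identical one is not already in the file.
  grep -qxF -- "$2" "$1" 2>/dev/null || printf '%s\n' "$2" >> "$1"
}

# Example usage (commented out so that loading this snippet changes nothing):
# append_once ~/.bashrc 'export JAVA_HOME=/opt/install/java'
# append_once ~/.bashrc 'export PATH=$JAVA_HOME/bin:$PATH'
```

Running the same call twice leaves a single copy of the line, so the script can be rerun safely.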

5.3 Check the Version

java -version

6. Hadoop Installation

6.1 Upload and Extract

tar zxvf /opt/software/hadoop-3.2.1.tar.gz -C /opt/install/

ln -s /opt/install/hadoop-3.2.1/ /opt/install/hadoop

6.2 Edit the Configuration

# Change to the configuration directory

cd /opt/install/hadoop/etc/hadoop/

6.2.1 Configure core-site.xml

vi core-site.xml

-------------------------------------------

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://singleNode:8020</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/opt/install/hadoop/data</value>

</property>

<property>

<name>hadoop.proxyuser.root.hosts</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.root.groups</name>

<value>*</value>

</property>

<property>

<name>hadoop.http.staticuser.user</name>

<value>root</value>

</property>

</configuration>

-------------------------------------------

6.2.2 Configure hdfs-site.xml

vi hdfs-site.xml

-------------------------------------------

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>singleNode:9868</value>

</property>

</configuration>

-------------------------------------------

6.2.3 Configure mapred-site.xml

vi mapred-site.xml

-------------------------------------------

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.jobhistory.address</name>

<value>singleNode:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>singleNode:19888</value>

</property>

</configuration>

-------------------------------------------

6.2.4 Configure yarn-site.xml

vi yarn-site.xml

-------------------------------------------

<configuration>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>singleNode</value>

</property>

<property>

<name>yarn.nodemanager.env-whitelist</name>

<value>JAVA_HOME,HADOOP_COMMON_HOME,HADOOP_HDFS_HOME,HADOOP_CONF_DIR,CLASSPATH_PREPEND_DISTCACHE,HADOOP_YARN_HOME,HADOOP_MAPRED_HOME</value>

</property>

<property>

<name>yarn.scheduler.minimum-allocation-mb</name>

<value>512</value>

</property>

<property>

<name>yarn.scheduler.maximum-allocation-mb</name>

<value>4096</value>

</property>

<property>

<name>yarn.nodemanager.resource.memory-mb</name>

<value>4096</value>

</property>

<property>

<name>yarn.nodemanager.pmem-check-enabled</name>

<value>false</value>

</property>

<property>

<name>yarn.nodemanager.vmem-check-enabled</name>

<value>false</value>

</property>

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<property>

<name>yarn.log.server.url</name>

<value>http://${yarn.timeline-service.webapp.address}/applicationhistory/logs</value>

</property>

<property>

<name>yarn.log-aggregation.retain-seconds</name>

<value>604800</value>

</property>

<property>

<name>yarn.timeline-service.enabled</name>

<value>true</value>

</property>

<property>

<name>yarn.timeline-service.hostname</name>

<value>${yarn.resourcemanager.hostname}</value>

</property>

<property>

<name>yarn.timeline-service.http-cross-origin.enabled</name>

<value>true</value>

</property>

<property>

<name>yarn.resourcemanager.system-metrics-publisher.enabled</name>

<value>true</value>

</property>

</configuration>

-------------------------------------------

6.2.5 Configure hadoop-env.sh

vi +55 hadoop-env.sh    # opens the file at line 55, near the JAVA_HOME setting

-------------------------------------------

export JAVA_HOME=/opt/install/java

-------------------------------------------

6.2.6 Configure mapred-env.sh

vi mapred-env.sh

-------------------------------------------

export JAVA_HOME=/opt/install/java

-------------------------------------------

6.2.7 Configure yarn-env.sh

vi yarn-env.sh

-------------------------------------------

export JAVA_HOME=/opt/install/java

-------------------------------------------

6.2.8 Configure workers

vi workers

-------------------------------------------

singleNode

-------------------------------------------

6.3 Add Environment Variables

vi ~/.bashrc

------------------------------------------------

export HADOOP_HOME=/opt/install/hadoop

export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop

export PATH=$HADOOP_HOME/bin:$PATH

------------------------------------------------

source ~/.bashrc
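
Once the hdfs command is on the PATH, the values Hadoop actually resolves can be compared against what was typed into the XML files with hdfs getconf -confKey. This is a minimal sketch for keys from core-site.xml and hdfs-site.xml; check_conf_key is an illustrative helper name.

```bash
check_conf_key() {
  # $1: configuration key, $2: value expected from the files edited above.
  actual=$(hdfs getconf -confKey "$1")
  if [ "$actual" = "$2" ]; then
    echo "OK   $1=$actual"
  else
    echo "DIFF $1=$actual (expected $2)"
    return 1
  fi
}

# Example usage (commented out; requires a configured Hadoop installation):
# check_conf_key fs.defaultFS hdfs://singleNode:8020
# check_conf_key dfs.replication 1
```

A DIFF line usually points to a typo in the XML or to HADOOP_CONF_DIR pointing at a different directory.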

# Edit both of the following files. Important: add the lines near the top of each file, not at the end

vi $HADOOP_HOME/sbin/start-dfs.sh

vi $HADOOP_HOME/sbin/stop-dfs.sh

------------------------------------------------

HDFS_NAMENODE_USER=root

HDFS_DATANODE_USER=root

HDFS_SECONDARYNAMENODE_USER=root

YARN_RESOURCEMANAGER_USER=root

YARN_NODEMANAGER_USER=root

------------------------------------------------

# Edit both of the following files. Important: add the lines near the top of each file, not at the end

vi $HADOOP_HOME/sbin/start-yarn.sh

vi $HADOOP_HOME/sbin/stop-yarn.sh

------------------------------------------------

YARN_RESOURCEMANAGER_USER=root

HADOOP_SECURE_DN_USER=yarn

YARN_NODEMANAGER_USER=root

------------------------------------------------
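
The user variables have to sit near the top of each script. If you would rather not edit the four files by hand, the helper below inserts lines directly after the first line (the shebang) of a file. It is a sketch with an illustrative name, insert_after_first_line, and it is not idempotent: running it twice inserts the lines twice.

```bash
insert_after_first_line() {
  # $1: file to edit; remaining arguments: lines to insert after line 1.
  target=$1
  shift
  tmp=$(mktemp) || return 1
  {
    head -n 1 "$target"      # keep the shebang as the first line
    printf '%s\n' "$@"       # one inserted line per argument
    tail -n +2 "$target"     # rest of the original file
  } > "$tmp"
  # Overwrite in place so the script keeps its original permissions.
  cat "$tmp" > "$target"
  rm -f "$tmp"
}

# Example usage (commented out; requires HADOOP_HOME to be set):
# insert_after_first_line "$HADOOP_HOME/sbin/start-dfs.sh" \
#   HDFS_NAMENODE_USER=root HDFS_DATANODE_USER=root HDFS_SECONDARYNAMENODE_USER=root
```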

6.4 Format HDFS

hdfs namenode -format

6.5 Start the Hadoop Services

# Start HDFS

$HADOOP_HOME/sbin/start-dfs.sh

# Start YARN

$HADOOP_HOME/sbin/start-yarn.sh

# Start the JobHistory server

mapred --daemon start historyserver

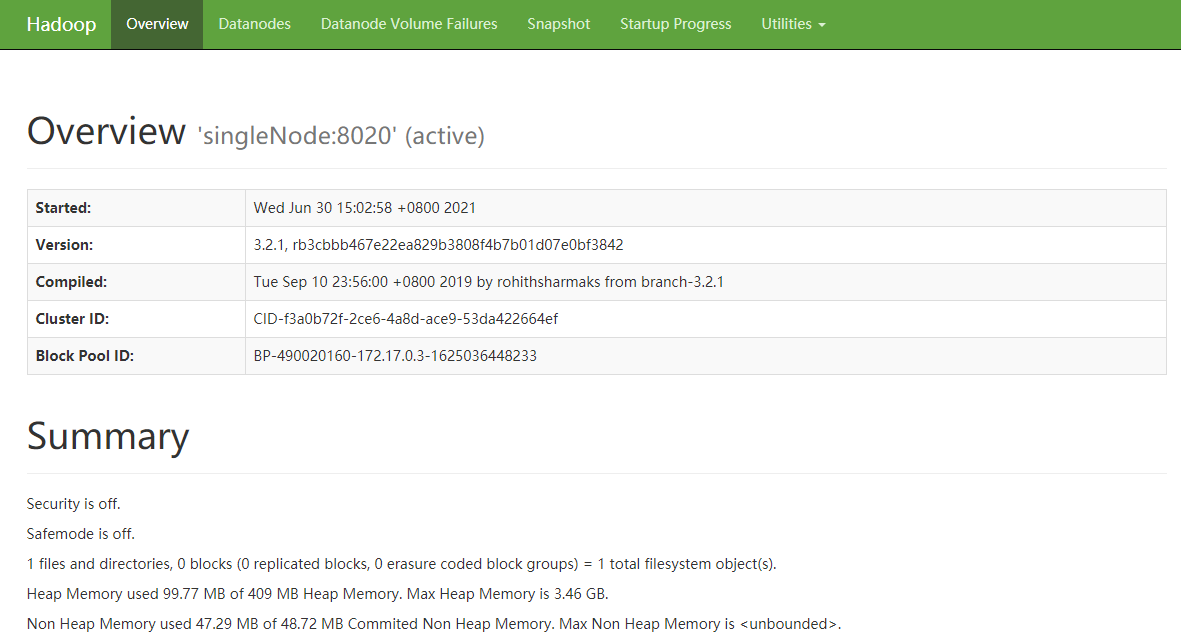

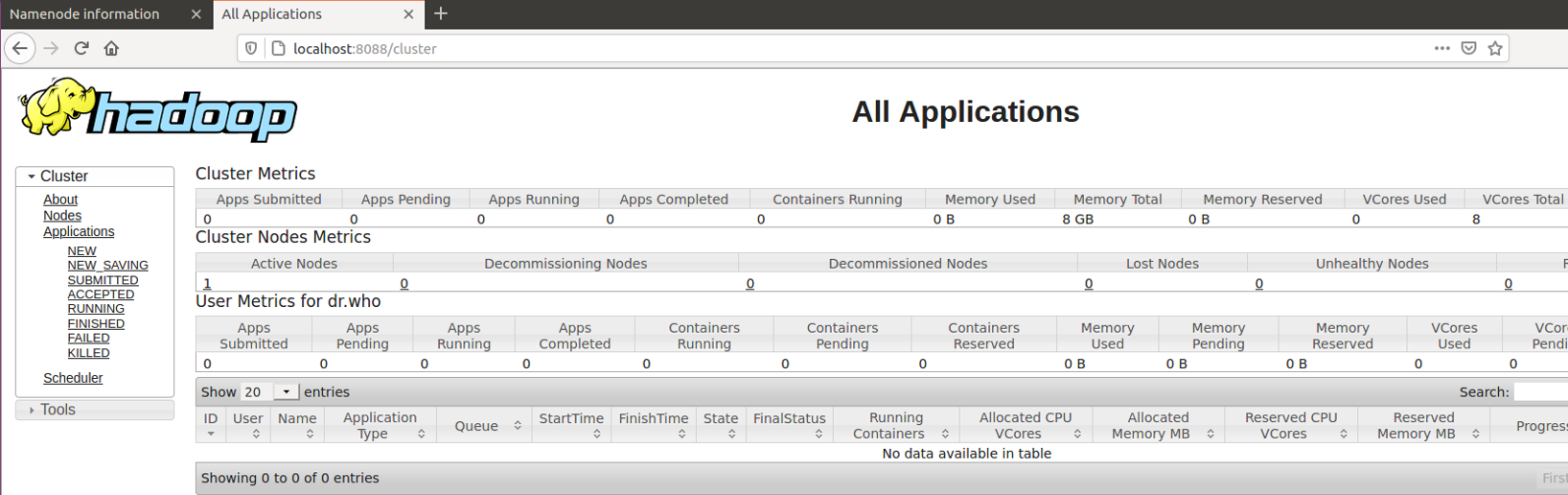

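6.6 Check the Web UIs

Open port 9870 on the host in a browser (HDFS web UI)

Open port 8088 on the host in a browser (YARN web UI)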
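
The same check can be done from the command line with curl. This is a sketch: HOST_IP is an assumption and should be replaced with the address of the Docker host, and web_ui_status is an illustrative name.

```bash
# Address of the machine that publishes the container ports (assumption).
HOST_IP=${HOST_IP:-127.0.0.1}

web_ui_status() {
  # $1: port to query. Prints the HTTP status code returned by the UI,
  # or 000 when no connection could be made.
  curl -s -o /dev/null --max-time 5 -w '%{http_code}' "http://${HOST_IP}:$1/"
}
```

Calling `web_ui_status 9870` and `web_ui_status 8088` should print a 2xx or 3xx code when the UIs are up; 000 suggests the service is not running or the port is not published.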

7. Hive Installation

7.1 Upload and Extract

tar zxvf /opt/software/apache-hive-3.1.2-bin.tar.gz -C /opt/install/

ln -s /opt/install/apache-hive-3.1.2-bin/ /opt/install/hive

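7.2 Edit the Configuration

# Change to the configuration directory

cd /opt/install/hive/conf/

7.2.1 Edit hive-site.xml

cp hive-default.xml.template hive-site.xml

vi hive-site.xml

# !!! Important: delete the original default content first. In vi, dG deletes from the current line to the last line (press gg first to start from line 1)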

-------------------------------------------

<configuration>

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://singleNode:3306/metastore?createDatabaseIfNotExist=true&amp;useUnicode=true&amp;characterEncoding=UTF-8</value>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>root</value>

</property>

<property>

<name>hive.metastore.warehouse.dir</name>

<value>/user/hive/warehouse</value>

</property>

<property>

<name>hive.metastore.schema.verification</name>

<value>false</value>

</property>

<property>

<name>hive.metastore.uris</name>

<value>thrift://singleNode:9083</value>

</property>

<property>

<name>hive.server2.thrift.port</name>

<value>10000</value>

</property>

<property>

<name>hive.server2.thrift.bind.host</name>

<value>singleNode</value>

</property>

<property>

<name>hive.metastore.event.db.notification.api.auth</name>

<value>false</value>

</property>

</configuration>

-------------------------------------------
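
Because hive-site.xml is parsed as XML, a literal & inside a value (for example between JDBC URL parameters) has to be written as &amp;, otherwise Hive fails to load the file. The sketch below lists lines that still contain a raw ampersand; find_raw_ampersands is an illustrative name.

```bash
find_raw_ampersands() {
  # $1: XML file to check.
  # Remove the predefined XML entities and numeric character references,
  # then print (with line numbers) every line that still contains '&'.
  sed -E 's/&(amp|lt|gt|quot|apos|#[0-9]+|#x[0-9A-Fa-f]+);//g' "$1" | grep -n '&'
}
```

Running `find_raw_ampersands /opt/install/hive/conf/hive-site.xml` prints nothing when every ampersand is escaped. The printed lines show the text after entity removal, so use the line numbers to locate them in the file.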

7.2.2 Edit hive-env.sh

cp hive-env.sh.template hive-env.sh

vi hive-env.sh

-------------------------------------------

HADOOP_HOME=/opt/install/hadoop

-------------------------------------------

7.3 Add the Dependency JAR

cp /opt/software/mysql-connector-java-5.1.31.jar /opt/install/hive/lib/

7.4 Add Environment Variables

vi ~/.bashrc

------------------------------------------------

export HIVE_HOME=/opt/install/hive

export PATH=$HIVE_HOME/bin:$PATH

------------------------------------------------

source ~/.bashrc

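7.5 Start the Services

# Initialize the metastore schema

schematool -dbType mysql -initSchema

# Start the metastore service first (HiveServer2 connects to it through hive.metastore.uris)

nohup hive --service metastore &

# Start the HiveServer2 service

nohup hive --service hiveserver2 &

# If you see the error: Exception in thread "main" java.lang.NoSuchMethodError

# it is caused by a JAR conflict. Remove the older guava JAR from Hive, copy in the newer one from Hadoop, then rerun the failed command

rm -rf /opt/install/hive/lib/guava-19.0.jar

cp /opt/install/hadoop/share/hadoop/common/lib/guava-27.0-jre.jar /opt/install/hive/lib/

Test: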

beeline -u jdbc:hive2://singleNode:10000 -n root -p 0000 --color=true

7.6 Check the Processes with jps
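
Running jps should list the Hadoop daemons started in 6.5, plus RunJar entries for the Hive metastore and HiveServer2, which jps reports under that name. The helper below reads jps output and prints the expected names that are missing. It is a sketch: the list of expected names is an assumption based on the services started in this guide, and missing_daemons is an illustrative name.

```bash
missing_daemons() {
  # Reads jps output on stdin ("<pid> <name>" per line) and prints each
  # expected name (given as arguments) that does not appear as a process name.
  jps_output=$(cat)
  for name in "$@"; do
    printf '%s\n' "$jps_output" |
      awk -v n="$name" '$2 == n { found = 1 } END { exit !found }' ||
      printf '%s\n' "$name"
  done
}

# Example usage (commented out; requires running services):
# jps | missing_daemons NameNode DataNode SecondaryNameNode \
#   ResourceManager NodeManager JobHistoryServer RunJar
```

The comparison is on the exact process name, so SecondaryNameNode does not count as NameNode. Empty output means every expected process was found.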

8. Sqoop Installation

8.1 Upload and Extract

tar zxvf /opt/software/sqoop-1.4.7.bin__hadoop-2.6.0.tar.gz -C /opt/install/

ln -s /opt/install/sqoop-1.4.7.bin__hadoop-2.6.0/ /opt/install/sqoop

8.2 Edit sqoop-env.sh

cd /opt/install/sqoop/conf/

cp sqoop-env-template.sh sqoop-env.sh

vi sqoop-env.sh

-------------------------------------------

#Set path to where bin/hadoop is available

export HADOOP_COMMON_HOME=/opt/install/hadoop

#Set path to where hadoop-*-core.jar is available

export HADOOP_MAPRED_HOME=/opt/install/hadoop

#Set the path to where bin/hive is available

export HIVE_HOME=/opt/install/hive

-------------------------------------------

8.3 Add the Dependency JARs

cp /opt/software/mysql-connector-java-5.1.31.jar /opt/install/sqoop/lib/

cp /opt/software/commons-lang-2.6.jar /opt/install/sqoop/lib/

cp /opt/software/java-json.jar /opt/install/sqoop/lib/

8.4 Add Environment Variables

vi ~/.bashrc

------------------------------------------------

export SQOOP_HOME=/opt/install/sqoop

export PATH=$SQOOP_HOME/bin:$PATH

------------------------------------------------

source ~/.bashrc

8.5 Check the Version

sqoop version
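
Beyond printing the version, listing the MySQL databases through Sqoop confirms that the JDBC driver copied in 8.3 is picked up. This is a sketch that assumes the MySQL root password is root, as set in section 4.4; sqoop_list_databases is an illustrative wrapper name.

```bash
sqoop_list_databases() {
  # Connection details follow the MySQL setup earlier in this guide
  # (user root, password root, server on singleNode:3306).
  sqoop list-databases \
    --connect jdbc:mysql://singleNode:3306 \
    --username root \
    --password root
}
```

If the driver and credentials are correct, calling `sqoop_list_databases` prints the database names, including metastore once Hive has been initialized.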

9. Flume Installation

9.1 Upload and Extract

tar zxvf /opt/software/apache-flume-1.9.0-bin.tar.gz -C /opt/install/

cd /opt/install/

ln -s apache-flume-1.9.0-bin/ flume

9.2 Remove the Conflicting Dependency

cd flume/

rm -rf lib/guava-11.0.2.jar

9.3 Add Environment Variables

vi ~/.bashrc

---------------------

export FLUME_HOME=/opt/install/flume

export PATH=$FLUME_HOME/bin:$PATH

---------------------

source ~/.bashrc
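
With all the PATH entries in place, a quick loop can confirm that every tool installed in this guide is reachable from the shell. This is a sketch; missing_commands is an illustrative name.

```bash
missing_commands() {
  # Prints each command name (given as arguments) that is not found on the PATH.
  for cmd in "$@"; do
    command -v "$cmd" >/dev/null 2>&1 || printf '%s\n' "$cmd"
  done
}

# Example usage (commented out):
# missing_commands java hdfs yarn hive sqoop flume-ng
```

Empty output means all commands resolve. Any name that is printed usually means the matching export line is missing from ~/.bashrc or the file has not been sourced yet.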

10. Common Docker Commands

Image operations

Pull an image

docker pull image_name:version

# Example

docker pull centos:7

List images

docker images

Remove an image

docker rmi centos:7

Container operations

Create a container

docker run -it --name test centos:7

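# -i keeps STDIN open (interactive mode)

# -t allocates a pseudo-terminal, usually combined with -i

# -d runs the container in the background (detached)

List containers

docker ps -a

# -a shows all containers, including those that have exited

Enter a container

docker exec -it container_name|container_ID bash

Stop a container

docker stop container_name|container_ID

# Example

docker stop test

Remove a container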

docker rm test
