I1113 20:56:49.405777 23834 net.cpp:42] Initializing net from parameters:

name: "Zeiler_conv5"

input: "data"

input_dim: 1

input_dim: 3

input_dim: 224

input_dim: 224

state {

phase: TEST

}

layer {

name: "conv1"

type: "Convolution"

bottom: "data"

top: "conv1"

param {

lr_mult: 1

}

param {

lr_mult: 2

}

convolution_param {

num_output: 96

pad: 3

kernel_size: 7

stride: 2

weight_filler {

type: "gaussian"

std: 0.01

}

bias_filler {

type: "constant"

value: 0

}

}

}

layer {

name: "relu1"

type: "ReLU"

bottom: "conv1"

top: "conv1"

}

layer {

name: "norm1"

type: "LRN"

bottom: "conv1"

top: "norm1"

lrn_param {

local_size: 3

alpha: 5e-05

beta: 0.75

norm_region: WITHIN_CHANNEL

}

}

layer {

name: "pool1"

type: "Pooling"

bottom: "norm1"

top: "pool1"

pooling_param {

pool: MAX

kernel_size: 3

stride: 2

pad: 1

}

}

layer {

name: "conv2"

type: "Convolution"

bottom: "pool1"

top: "conv2"

param {

lr_mult: 1

}

param {

lr_mult: 2

}

convolution_param {

num_output: 256

pad: 2

kernel_size: 5

stride: 2

weight_filler {

type: "gaussian"

std: 0.01

}

bias_filler {

type: "constant"

value: 1

}

}

}

layer {

name: "relu2"

type: "ReLU"

bottom: "conv2"

top: "conv2"

}

layer {

name: "norm2"

type: "LRN"

bottom: "conv2"

top: "norm2"

lrn_param {

local_size: 3

alpha: 5e-05

beta: 0.75

norm_region: WITHIN_CHANNEL

}

}

layer {

name: "pool2"

type: "Pooling"

bottom: "norm2"

top: "pool2"

pooling_param {

pool: MAX

kernel_size: 3

stride: 2

pad: 1

}

}

layer {

name: "conv3"

type: "Convolution"

bottom: "pool2"

top: "conv3"

param {

lr_mult: 1

}

param {

lr_mult: 2

}

convolution_param {

num_output: 384

pad: 1

kernel_size: 3

stride: 1

weight_filler {

type: "gaussian"

std: 0.01

}

bias_filler {

type: "constant"

value: 0

}

}

}

layer {

name: "relu3"

type: "ReLU"

bottom: "conv3"

top: "conv3"

}

......

I1113 20:56:49.433302 23834 net.cpp:194] relu5 does not need backward computation.

I1113 20:56:49.433305 23834 net.cpp:194] conv5 does not need backward computation.

I1113 20:56:49.433307 23834 net.cpp:194] relu4 does not need backward computation.

I1113 20:56:49.433310 23834 net.cpp:194] conv4 does not need backward computation.

I1113 20:56:49.433312 23834 net.cpp:194] relu3 does not need backward computation.

I1113 20:56:49.433315 23834 net.cpp:194] conv3 does not need backward computation.

I1113 20:56:49.433317 23834 net.cpp:194] pool2 does not need backward computation.

I1113 20:56:49.433320 23834 net.cpp:194] norm2 does not need backward computation.

I1113 20:56:49.433323 23834 net.cpp:194] relu2 does not need backward computation.

I1113 20:56:49.433326 23834 net.cpp:194] conv2 does not need backward computation.

I1113 20:56:49.433328 23834 net.cpp:194] pool1 does not need backward computation.

I1113 20:56:49.433331 23834 net.cpp:194] norm1 does not need backward computation.

I1113 20:56:49.433334 23834 net.cpp:194] relu1 does not need backward computation.

I1113 20:56:49.433336 23834 net.cpp:194] conv1 does not need backward computation.

I1113 20:56:49.433339 23834 net.cpp:235] This network produces output proposal_bbox_pred

I1113 20:56:49.433341 23834 net.cpp:235] This network produces output proposal_cls_prob

I1113 20:56:49.433354 23834 net.cpp:492] Collecting Learning Rate and Weight Decay.

I1113 20:56:49.433359 23834 net.cpp:247] Network initialization done.

I1113 20:56:49.433362 23834 net.cpp:248] Memory required for data: 21358056
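As a quick sanity check on the layer parameters printed in the log above, the spatial size of each blob up to conv3 can be worked out by hand. This is a minimal sketch, assuming Caffe's usual output-size rules (round down for convolution, round up for pooling). The helper names `conv_out` and `pool_out` are hypothetical and are not part of the Faster R-CNN code.

```matlab
% Hypothetical helpers (not from the original code base).
% Convolution rounds down, pooling rounds up.
conv_out = @(in, k, pad, stride) floor((in + 2*pad - k) / stride) + 1;
pool_out = @(in, k, pad, stride) ceil((in + 2*pad - k) / stride) + 1;
% Note: with padding, Caffe also drops a final pooling window that would
% start outside the padded input; that rule does not trigger for these sizes.

s_in    = 224;                          % input_dim from the log
s_conv1 = conv_out(s_in,    7, 3, 2);   % kernel 7, pad 3, stride 2
s_pool1 = pool_out(s_conv1, 3, 1, 2);   % kernel 3, pad 1, stride 2
s_conv2 = conv_out(s_pool1, 5, 2, 2);   % kernel 5, pad 2, stride 2
s_pool2 = pool_out(s_conv2, 3, 1, 2);   % kernel 3, pad 1, stride 2
s_conv3 = conv_out(s_pool2, 3, 1, 1);   % kernel 3, pad 1, stride 1

fprintf('conv1 %d, pool1 %d, conv2 %d, pool2 %d, conv3 %d\n', ...
        s_conv1, s_pool1, s_conv2, s_pool2, s_conv3);
```

ReLU and LRN layers leave the spatial size unchanged, so they are omitted from the chain.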

Error:

Preparing training data...Starting parallel pool (parpool) using the 'local' profile ... connected to 4 workers.

Done.

Preparing validation data...Done.

Error using randperm

K must be less than or equal to N.

Error in proposal_train (line 88)

shuffled_inds_val = shuffled_inds_val(randperm(length(shuffled_inds_val), opts.val_iters));

Error in Faster_RCNN_Train.do_proposal_train (line 7)

model_stage.output_model_file = proposal_train(conf, dataset.imdb_train, dataset.roidb_train, ...

Error in script_faster_rcnn_VOC2007_ZF (line 45)

model.stage1_rpn = Faster_RCNN_Train.do_proposal_train(conf_proposal, dataset, model.stage1_rpn, opts.do_val);

Solution:

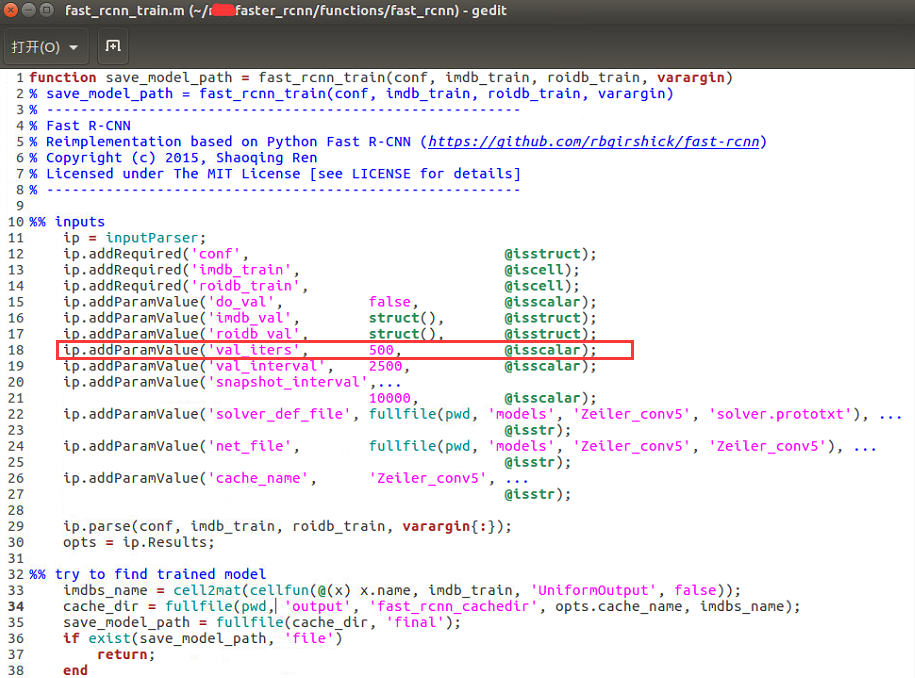

functions/fast_rcnn/fast_rcnn_train.m

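Reduce val_iters to about 1/5 of the number of validation images. randperm(N, K) requires K <= N, so the error means opts.val_iters is larger than the number of validation samples available. The failing call is in proposal_train, which reads the same opts.val_iters option, so the value may need lowering there as well.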
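An alternative to hard-coding a smaller value is to cap the sample count at the number of validation items when randperm is called. The snippet below is only a sketch under stated assumptions: `num_val_images` and the local `val_iters` are hypothetical stand-ins for the real validation set size and for `opts.val_iters`, and the index vector is simplified compared with what proposal_train builds.

```matlab
% Hypothetical stand-in values; in the real code these come from the
% validation roidb and from opts.val_iters.
num_val_images = 120;    % assumed number of validation images
val_iters      = 500;    % requested iterations, larger than the set

shuffled_inds_val = 1:num_val_images;

% randperm(n, k) errors when k > n, so never ask for more than n samples.
k = min(val_iters, length(shuffled_inds_val));
shuffled_inds_val = shuffled_inds_val(randperm(length(shuffled_inds_val), k));

fprintf('Using %d validation samples\n', numel(shuffled_inds_val));
```

With the cap in place, a small validation set simply yields fewer validation iterations instead of raising "K must be less than or equal to N".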
