Hive源码包下载

mirror.bit.edu.cn/apache/spark/

集群环境

- Master 172.16.11.97

- Slave1 172.16.11.98

- Slave2 172.16.11.99

下载软件包

#Master

wget http://mirror.bit.edu.cn/apache/spark/spark-1.6.3/spark-1.6.3-bin-hadoop2.6.tgz

tar zxvf spark-1.6.3-bin-hadoop2.6.tgz

wget https://downloads.lightbend.com/scala/2.12.4/scala-2.12.4.tgz

tar zxvf scala-2.12.4.tgz

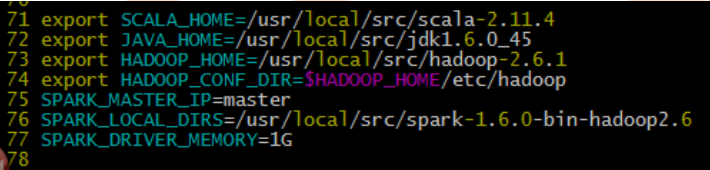

修改Spark配置文件

cd /usr/local/src/spark-1.6.3-bin-hadoop2.6/conf

mv spark-env.sh.template spark-env.sh

vim spark-env.sh

export SCALA_HOME=/usr/local/src/scala-2.12.4

export JAVA_HOME=/usr/local/src/jdk1.8.0_152

export HADOOP_HOME=/usr/local/src/hadoop-2.8.2

export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop

export SPARK_MASTER_IP=master

export SPARK_MASTER_PORT=7077

SPARK_LOCAL_DIRS=/usr/local/src/spark-1.6.3-bin-hadoop2.6

SPARK_DRIVER_MEMORY=1G

mv slaves.templateslaves

vim slaves

slave1

slave2

拷贝安装包

scp -r /usr/local/src/spark-1.6.3-bin-hadoop2.6 root@slave1:/usr/local/src/spark-1.6.3-bin-hadoop2.6

scp -r /usr/local/src/spark-1.6.3-bin-hadoop2.6 root@slave2:/usr/local/src/spark-1.6.3-bin-hadoop2.6

也可以用shell命令for i in {1..2}; do scp -r /bigdata/spark-2.2.0-bin-hadoop2.7/ slave$i:/bigdata; done

scp -r /usr/local/src/scala-2.12.4 root@slave1:/usr/local/src/scala-2.12.4

scp -r /usr/local/src/scala-2.12.4 root@slave2:/usr/local/src/scala-2.12.4

到此为止,Spark集群安装完毕,但是有一个很大的问题,那就是Master节点存在单点故障,要解决此问题,就要借助zookeeper,并且启动至少两个Master节点来实现高可靠,配置方式比较简单:

Spark集群规划:node1,node2是Master;node3,node4,node5是Worker

安装配置zk集群,并启动zk集群

停止spark所有服务,修改配置文件spark-env.sh,在该配置文件中删掉SPARK_MASTER_IP并添加如下配置

exportSPARK_DAEMON_JAVA_OPTS="-Dspark.deploy.recoveryMode=ZOOKEEPER-Dspark.deploy.zookeeper.url=zk1,zk2,zk3-Dspark.deploy.zookeeper.dir=/spark"

1.在node1节点上修改slaves配置文件内容指定worker节点

2.在node1上执行sbin/start-all.sh脚本,然后在node2上执行sbin/start-master.sh启动第二个Master

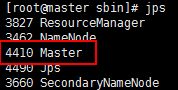

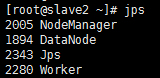

启动集群

cd /usr/local/src/spark-1.6.3-bin-hadoop2.6/sbin

./start-all.sh

网页监控面板

master:8080

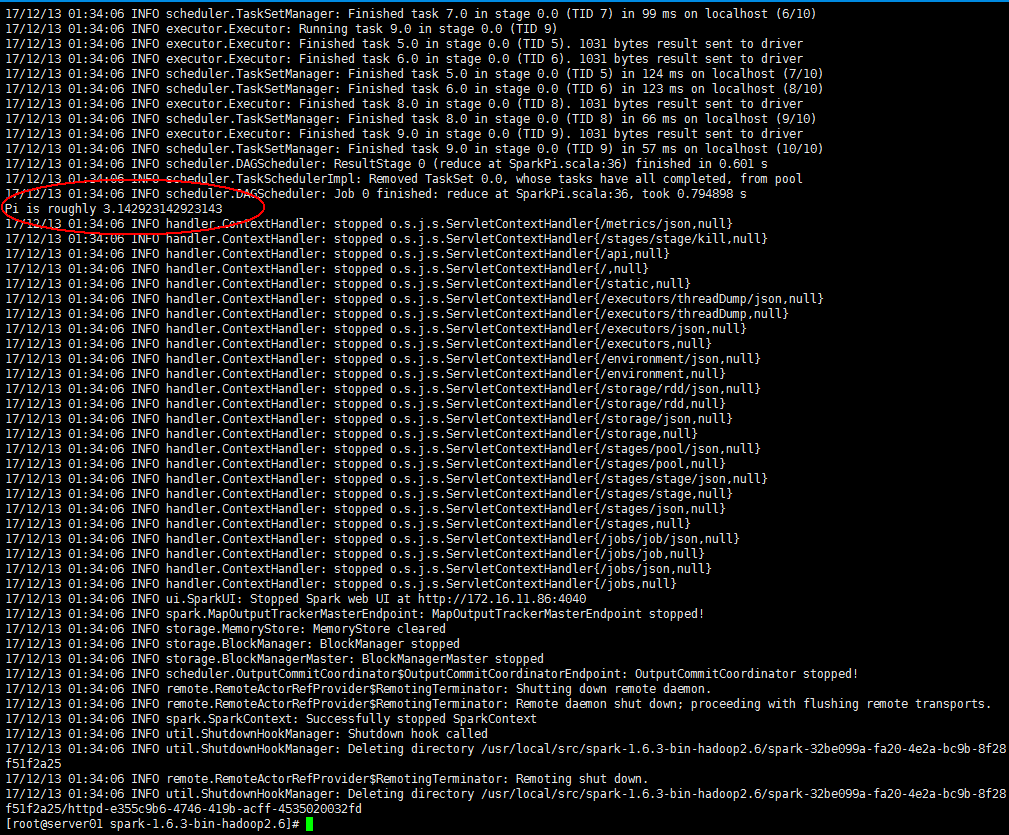

验证

#本地模式

./bin/run-example SparkPi 10 --master local[2]

#集群Standlone

./bin/spark-submit --class org.apache.spark.examples.SparkPi --master spark://master:7077 lib/spark-examples-1.6.3-hadoop2.6.0.jar 100

#集群

Spark on Yarn

./bin/spark-submit --class org.apache.spark.examples.SparkPi --master yarn-cluster lib/spark-examples-1.6.3-hadoop2.6.0.jar 10

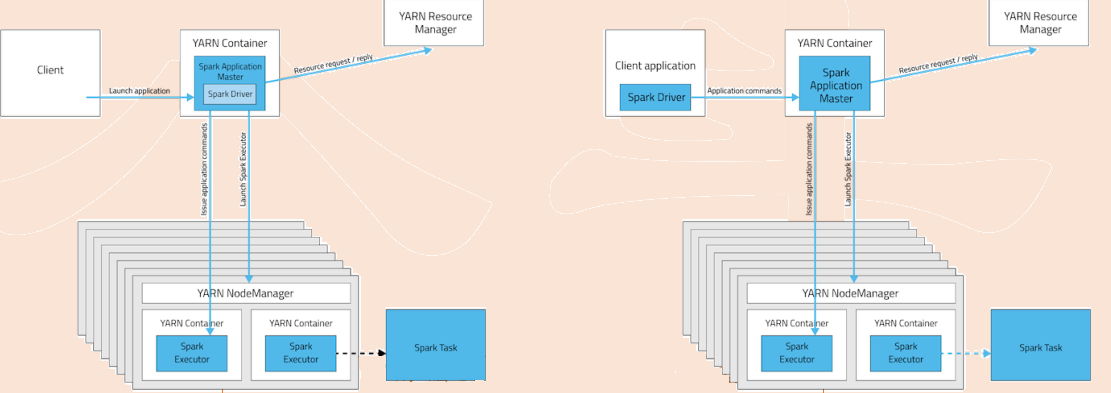

• Spark standalone vs. Spark on Yarn

• Spark standalone:独立模式,类似MapReduce 1.0所采取的模式,完全由内部实现容错性和资源管理

• Spark on Yarn:让Spark运行在一个通用的资源管理系统之上,这样可以与其他计算框架共享资源

• Yarn Client vs. Spark Standlone vs. Yarn Cluster

• Yarn Client:适用于交互与调试

– Driver在任务提交机上执行

– ApplicationMaster只负责向ResourceManager申请executor需要的资源

– 基于yarn时,spark-shell和pyspark必须要使用yarn-client模式

• Yarn Cluster:适用于生产环境

• Yarn Cluster vs. Yarn Client区别:本质是AM进程的区别,cluster模式下,driver运行在AM中,负责向Yarn申请资

源,并监督作业运行状况,当用户提交完作用后,就关掉Client,作业会继续在yarn上运行。然而cluster模式不适

合交互类型的作业。而client模式,AM仅向yarn请求executor,client会和请求的container通信来调度任务,即

client不能离开

Spark的资源管理组件

• Yarn(通用)

– Master/Slave结构

• RM:全局资源管理器,负责系统的资源管理和分配

• NM:每个节点上的资源和任务管理器

• AM:每个应用程序都有一个,负责任务调度和监视,并与RM调度器协商为任务获取资源

• Standalone(Spark自带)

– Master/Slave结构

• Master:类似Yarn中的RM

• Worker:类似Yarn中的NM

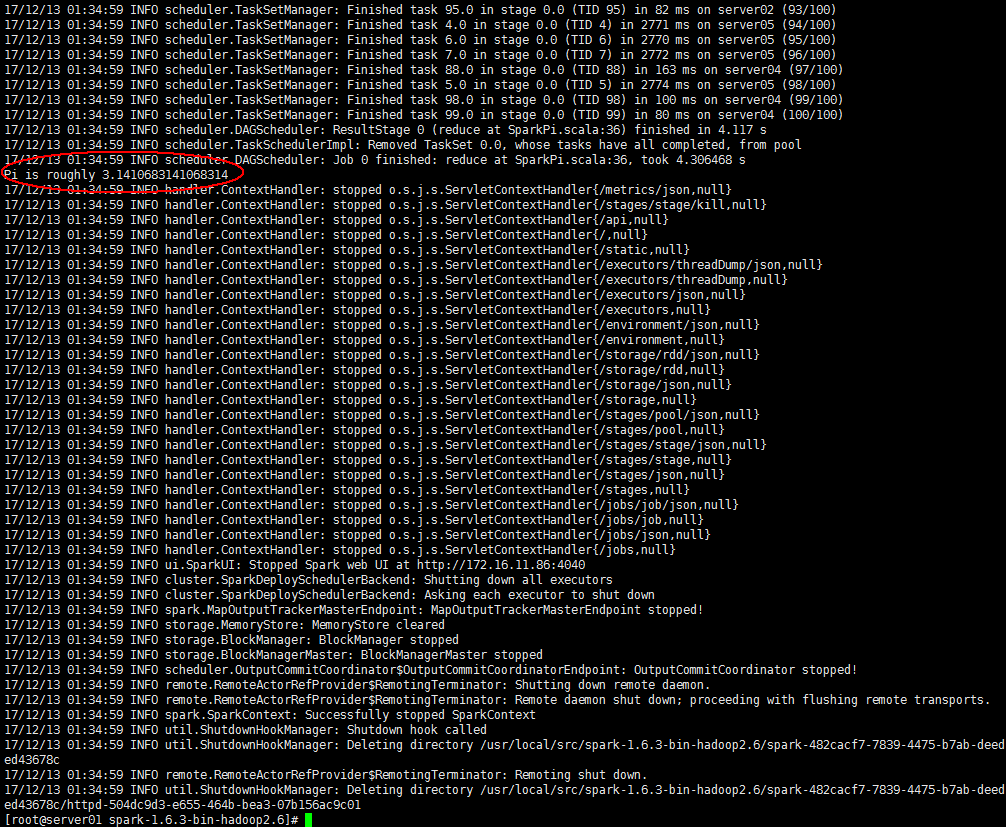

第一个spark程序

/usr/local/spark-1.5.2-bin-hadoop2.6/bin/spark-submit\

--classorg.apache.spark.examples.SparkPi \

--masterspark://node1.itcast.cn:7077 \

--executor-memory 1G\

--total-executor-cores2 \

/usr/local/spark-1.5.2-bin-hadoop2.6/lib/spark-examples-1.5.2-hadoop2.6.0.jar\

100

该算法是利用蒙特·卡罗算法求PI

Spark Shell

spark-shell是Spark自带的交互式Shell程序,方便用户进行交互式编程,用户可以在该命令行下用scala编写spark程序。

启动Spark Shell

/usr/local/spark-1.5.2-bin-hadoop2.6/bin/spark-shell\

--master spark://node1.itcast.cn:7077\

--executor-memory 2g\

--total-executor-cores2

参数说明:

--masterspark://node1.itcast.cn:7077 指定Master的地址

--executor-memory 2g 指定每个worker可用内存为2G

--total-executor-cores 2 指定整个集群使用的cup核数为2个

注意:

如果启动spark shell时没有指定master地址,但是也可以正常启动spark shell和执行spark shell中的程序,其实是启动了spark的local模式,该模式仅在本机启动一个进程,没有与集群建立联系。

Spark Shell中已经默认将SparkContext类初始化为对象sc。用户代码如果需要用到,则直接应用sc即可

spark shell中编写WordCount程序

1.首先启动hdfs

2.向hdfs上传一个文件到hdfs://node1.itcast.cn:9000/words.txt

3.在spark shell中用scala语言编写spark程序

sc.textFile("hdfs://node1.itcast.cn:9000/words.txt").flatMap(_.split(""))

.map((_,1)).reduceByKey(_+_).saveAsTextFile("hdfs://node1.itcast.cn:9000/out")

4.使用hdfs命令查看结果

hdfs dfs -lshdfs://node1.itcast.cn:9000/out/p*

说明:

sc是SparkContext对象,该对象时提交spark程序的入口

textFile(hdfs://node1.itcast.cn:9000/words.txt)是hdfs中读取数据

flatMap(_.split(" "))先map在压平

map((_,1))将单词和1构成元组

reduceByKey(_+_)按照key进行reduce,并将value累加

saveAsTextFile("hdfs://node1.itcast.cn:9000/out")将结果写入到hdfs中

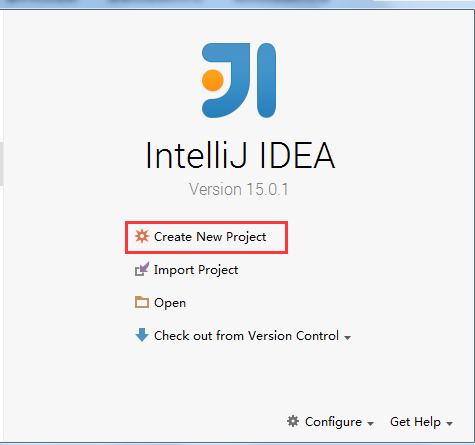

在IDEA中编写WordCount程序

spark shell仅在测试和验证我们的程序时使用的较多,在生产环境中,通常会在IDE中编制程序,然后打成jar包,然后提交到集群,最常用的是创建一个Maven项目,利用Maven来管理jar包的依赖。

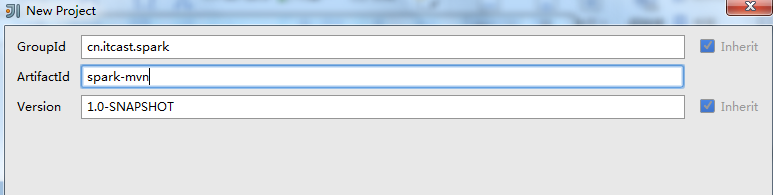

1.创建一个项目

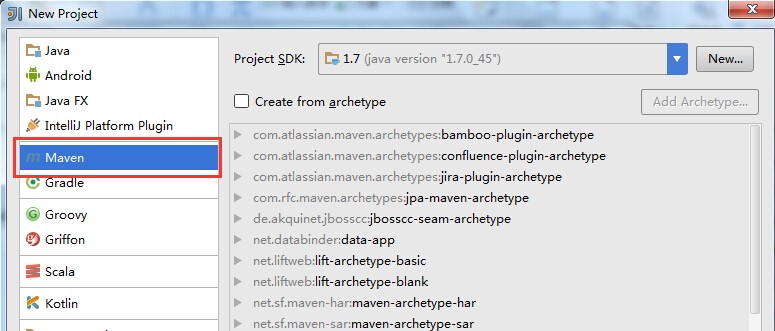

2.选择Maven项目,然后点击next

3.填写maven的GAV,然后点击next

4.填写项目名称,然后点击finish

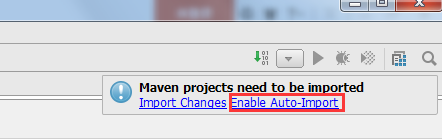

5.创建好maven项目后,点击Enable Auto-Import

6.配置Maven的pom.xml

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>cn.itcast.spark</groupId>

<artifactId>spark-mvn</artifactId>

<version>1.0-SNAPSHOT</version>

<properties>

<maven.compiler.source>1.7</maven.compiler.source>

<maven.compiler.target>1.7</maven.compiler.target>

<encoding>UTF-8</encoding>

<scala.version>2.10.6</scala.version>

<scala.compat.version>2.10</scala.compat.version>

</properties>

<dependencies>

<dependency>

<groupId>org.scala-lang</groupId>

<artifactId>scala-library</artifactId>

<version>${scala.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_2.10</artifactId>

<version>1.5.2</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-streaming_2.10</artifactId>

<version>1.5.2</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>2.6.2</version>

</dependency>

</dependencies>

<build>

<sourceDirectory>src/main/scala</sourceDirectory>

<testSourceDirectory>src/test/scala</testSourceDirectory>

<plugins>

<plugin>

<groupId>net.alchim31.maven</groupId>

<artifactId>scala-maven-plugin</artifactId>

<version>3.2.0</version>

<executions>

<execution>

<goals>

<goal>compile</goal>

<goal>testCompile</goal>

</goals>

<configuration>

<args>

<arg>-make:transitive</arg>

<arg>-dependencyfile</arg>

<arg>${project.build.directory}/.scala_dependencies</arg>

</args>

</configuration>

</execution>

</executions>

</plugin>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-surefire-plugin</artifactId>

<version>2.18.1</version>

<configuration>

<useFile>false</useFile>

<disableXmlReport>true</disableXmlReport>

<includes>

<include>**/*Test.*</include>

<include>**/*Suite.*</include>

</includes>

</configuration>

</plugin>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-shade-plugin</artifactId>

<version>2.3</version>

<executions>

<execution>

<phase>package</phase>

<goals>

<goal>shade</goal>

</goals>

<configuration>

<filters>

<filter>

<artifact>*:*</artifact>

<excludes>

<exclude>META-INF/*.SF</exclude>

<exclude>META-INF/*.DSA</exclude>

<exclude>META-INF/*.RSA</exclude>

</excludes>

</filter>

</filters>

<transformers>

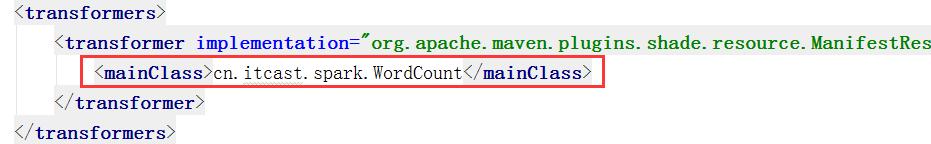

<transformer implementation="org.apache.maven.plugins.shade.resource.ManifestResourceTransformer">

<mainClass>cn.itcast.spark.WordCount</mainClass>

</transformer>

</transformers>

</configuration>

</execution>

</executions>

</plugin>

</plugins>

</build>

</project>

7.将src/main/java和src/test/java分别修改成src/main/scala和src/test/scala,与pom.xml中的配置保持一致

8.新建一个scala class,类型为Object

9.编写spark程序

package cn.itcast.spark

import org.apache.spark.{SparkContext, SparkConf}

object WordCount {

def main(args: Array[String]) {

//创建SparkConf()并设置App名称

val conf = new SparkConf().setAppName("WC")

//创建SparkContext,该对象是提交spark App的入口

val sc = new SparkContext(conf)

//使用sc创建RDD并执行相应的transformation和action

sc.textFile(args(0)).flatMap(_.split(" ")).map((_, 1)).reduceByKey(_+_, 1).sortBy(_._2, false).saveAsTextFile(args(1))

//停止sc,结束该任务

sc.stop()

}

}

10.使用Maven打包:首先修改pom.xml中的main class

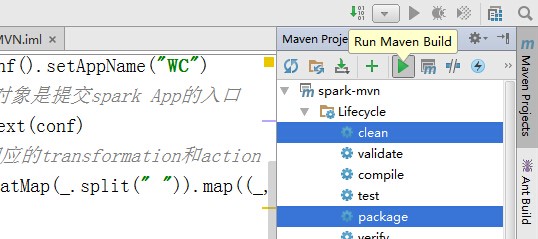

点击idea右侧的Maven Project选项

点击Lifecycle,选择clean和package,然后点击Run Maven Build

11.选择编译成功的jar包,并将该jar上传到Spark集群中的某个节点上

12.首先启动hdfs和Spark集群

启动hdfs

/usr/local/hadoop-2.6.1/sbin/start-dfs.sh

启动spark

/usr/local/spark-1.5.2-bin-hadoop2.6/sbin/start-all.sh

13.使用spark-submit命令提交Spark应用(注意参数的顺序)

/usr/local/spark-1.5.2-bin-hadoop2.6/bin/spark-submit\

--classcn.itcast.spark.WordCount \

--masterspark://node1.itcast.cn:7077 \

--executor-memory 2G\

--total-executor-cores4 \

/root/spark-mvn-1.0-SNAPSHOT.jar\

hdfs://node1.itcast.cn:9000/words.txt\

hdfs://node1.itcast.cn:9000/out

查看程序执行结果

hdfs dfs -cathdfs://node1.itcast.cn:9000/out/part-00000

(hello,6)

(tom,3)

(kitty,2)

(jerry,1)

1418

1418

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?