Introduction

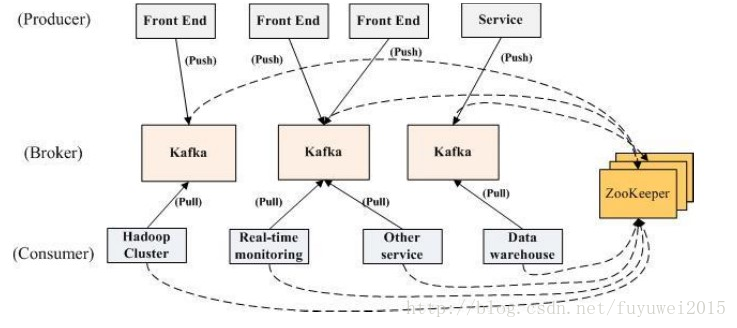

Let's first look at the official Kafka distributed architecture diagram.

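Multiple brokers work together as a cluster, while producers and consumers are deployed inside the business applications and called frequently. The three coordinate requests and message forwarding through ZooKeeper, and together they form a high-performance distributed publish/subscribe messaging system.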
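In practice, what ties each broker into the cluster is its config/server.properties file: broker.id must be unique per broker, and zookeeper.connect tells the broker which ZooKeeper ensemble to register with. The sketch below reads such keys, assuming plain key=value lines (Java .properties files also allow key: value and line continuations, which it does not handle); prop_value is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# prop_value FILE KEY
# Hypothetical helper: print the value of KEY from a simple .properties file.
# Assumption: only "key=value" lines; "key: value" and backslash
# continuations are not supported. Commented lines (#...) never match.
prop_value() {
  local file=$1 key=$2 pattern
  # Escape dots so "broker.id" does not also match "broker_id".
  pattern=$(printf '%s' "$key" | sed 's/\./\\./g')
  # Strip "key =" from matching lines and keep the last match,
  # since a later definition overrides an earlier one.
  sed -n "s/^[[:space:]]*${pattern}[[:space:]]*=[[:space:]]*//p" "$file" | tail -n 1
}
```

For example, with the install directory used later in this article, prop_value /usr/local/kafka/kafka/config/server.properties zookeeper.connect should print localhost:2181 for an unmodified configuration.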

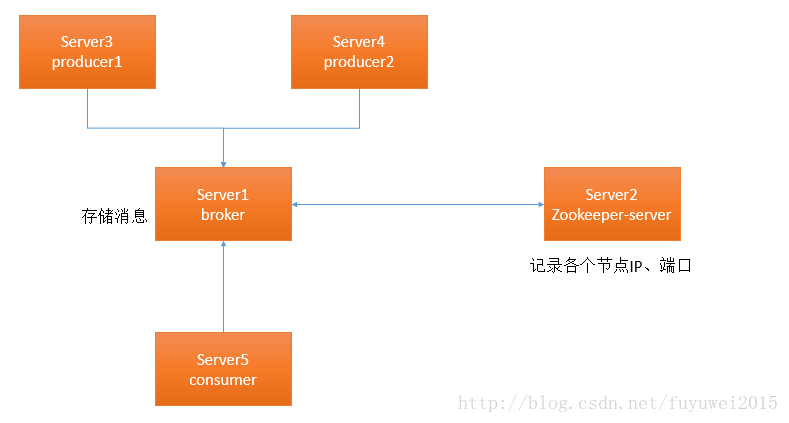

Using a single broker as an example, let's walk through how the whole messaging system starts up.

The system runs in the following order:

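1. Start the ZooKeeper server.

2. Start the Kafka server.

3. When a producer produces data, it first locates a broker through ZooKeeper and then stores the data on that broker. (This is how very early Kafka producers worked; in 0.10.x the producer instead requests cluster metadata from the brokers it is configured with, via bootstrap.servers or the console producer's --broker-list.)

4. When a consumer wants to consume data, it first finds the corresponding broker through ZooKeeper and then consumes from it. (This matches the old consumer, used with --zookeeper; the newer consumer connects to the brokers directly via bootstrap.servers.)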
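The startup order above can be scripted so that Kafka is only launched once ZooKeeper accepts connections. A minimal sketch, assuming it is run from kafka/bin under bash (it relies on bash's /dev/tcp redirection) and that ZooKeeper and Kafka listen on their default ports 2181 and 9092; wait_for_port and start_all are hypothetical names.

```shell
#!/usr/bin/env bash
# wait_for_port HOST PORT TRIES
# Try a TCP connection once per second, up to TRIES times.
# Returns 0 as soon as HOST:PORT accepts a connection, 1 on timeout.
wait_for_port() {
  local host=$1 port=$2 tries=$3 i=0
  while [ "$i" -lt "$tries" ]; do
    # bash opens a TCP socket for /dev/tcp/HOST/PORT; the subshell closes it.
    if (exec 3<>"/dev/tcp/$host/$port") 2>/dev/null; then
      return 0
    fi
    i=$((i + 1))
    sleep 1
  done
  return 1
}

# Hypothetical wrapper: start ZooKeeper, wait for it, then the broker.
# Assumption: default ports 2181 (ZooKeeper) and 9092 (Kafka).
start_all() {
  sh zookeeper-server-start.sh -daemon ../config/zookeeper.properties
  wait_for_port localhost 2181 30 || { echo "ZooKeeper did not start" >&2; return 1; }
  sh kafka-server-start.sh -daemon ../config/server.properties
  wait_for_port localhost 9092 30 || { echo "Kafka did not start" >&2; return 1; }
  echo "ZooKeeper and Kafka are up"
}
```

Unlike the foreground command used later in this article, the broker is started with -daemon here so that the script can continue and check the port.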

Distributed Environment Setup

Single-Node Setup

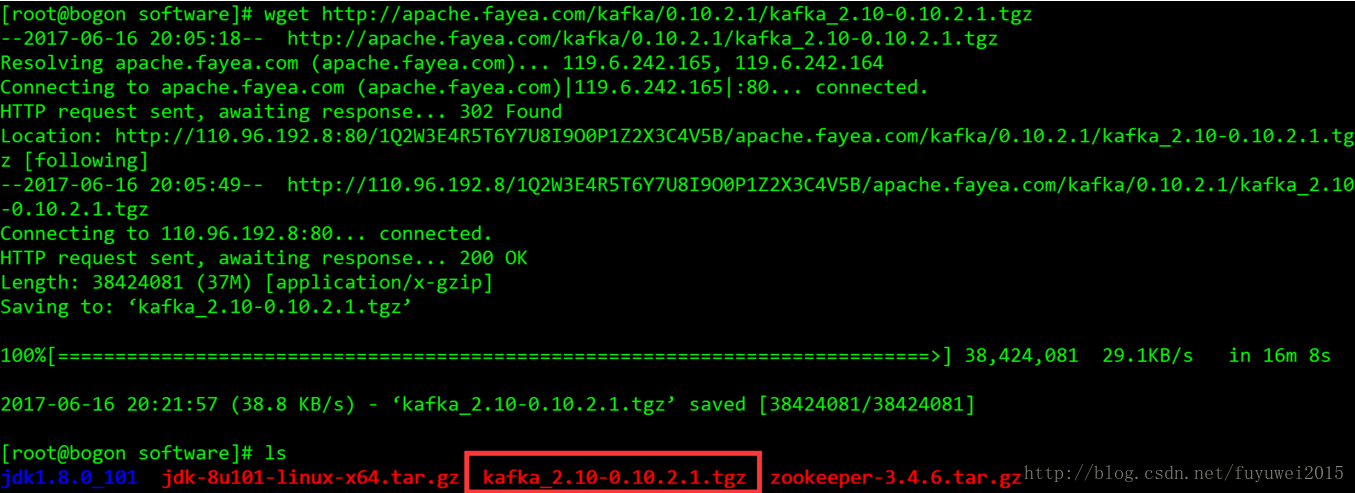

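First, download the latest Kafka package (0.10.2.1 at the time of writing) from the Kafka website or one of its Apache mirrors: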

[root@bogon software]# wget http://apache.fayea.com/kafka/0.10.2.1/kafka_2.10-0.10.2.1.tgz

Then extract Kafka into the installation directory:

[root@bogon software]# mkdir -p /usr/local/kafka

[root@bogon software]# tar -zvxf kafka_2.10-0.10.2.1.tgz -C /usr/local/kafka/
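The archive name encodes two versions: kafka_2.10-0.10.2.1.tgz is Kafka 0.10.2.1 built for Scala 2.10, and the Scala version only matters if you write your own code in Scala. A small sketch that splits the name, assuming the kafka_&lt;scala&gt;-&lt;kafka&gt;.tgz naming used on the Apache download pages; kafka_versions is a hypothetical helper.

```shell
#!/usr/bin/env bash
# kafka_versions ARCHIVE
# Hypothetical helper: print "<scala-version> <kafka-version>" for a name
# like kafka_2.10-0.10.2.1.tgz. Accepts a bare name, a path, or a URL.
kafka_versions() {
  local name=${1##*/}   # drop any directory or URL prefix
  name=${name%.tgz}     # drop the .tgz extension
  name=${name#kafka_}   # drop the "kafka_" prefix -> 2.10-0.10.2.1
  # Scala version is before the first '-', Kafka version after it.
  echo "${name%%-*} ${name#*-}"
}
```

The extracted directory carries the same name without .tgz, which is why it is renamed in the next step.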

Rename the extracted directory to simplify configuration:

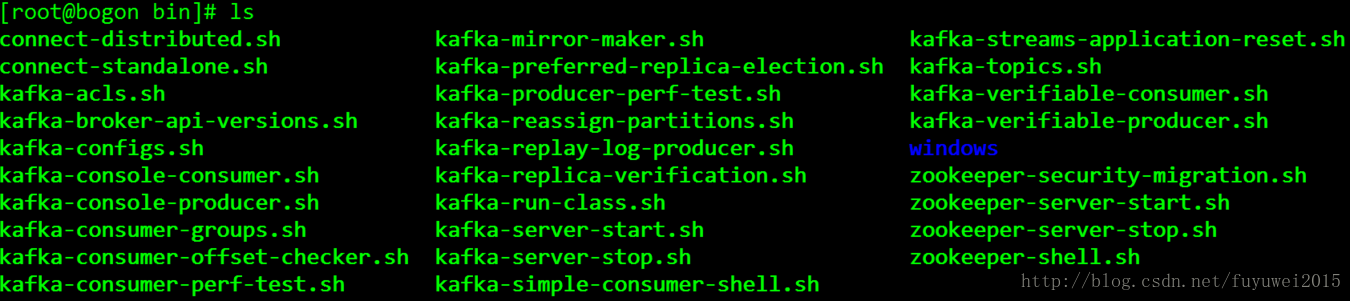

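[root@bogon kafka]# mv kafka_2.10-0.10.2.1 kafka

Enter the kafka/bin directory. It holds the utility scripts for sending and consuming messages, creating and listing topics, and starting and stopping the various services.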

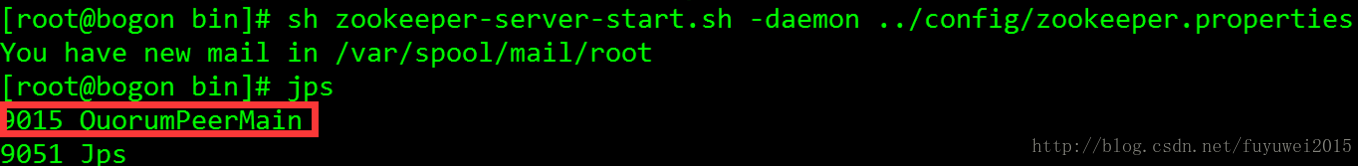
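For orientation, these are the scripts used most often for a quick test once the broker is running; the options follow the 0.10.2 quickstart. A minimal sketch wrapping them, assuming the install path from the steps above (/usr/local/kafka/kafka), ZooKeeper on localhost:2181, a broker on localhost:9092, and a hypothetical DRY_RUN variable that prints each command instead of running it.

```shell
#!/usr/bin/env bash
# Assumed locations; override via environment variables if yours differ.
KAFKA_BIN=${KAFKA_BIN:-/usr/local/kafka/kafka/bin}
ZK=${ZK:-localhost:2181}
BROKER=${BROKER:-localhost:9092}

# Run a command, or only print it when DRY_RUN is set (hypothetical switch).
run() {
  if [ -n "$DRY_RUN" ]; then echo "$*"; else "$@"; fi
}

# Create a topic with one partition and one replica (single-broker setup).
create_topic() { run sh "$KAFKA_BIN/kafka-topics.sh" --create --zookeeper "$ZK" --replication-factor 1 --partitions 1 --topic "$1"; }
# List the topics registered in ZooKeeper.
list_topics()  { run sh "$KAFKA_BIN/kafka-topics.sh" --list --zookeeper "$ZK"; }
# Send lines typed on stdin to the topic.
produce()      { run sh "$KAFKA_BIN/kafka-console-producer.sh" --broker-list "$BROKER" --topic "$1"; }
# Print every message in the topic, starting from the earliest offset.
consume()      { run sh "$KAFKA_BIN/kafka-console-consumer.sh" --bootstrap-server "$BROKER" --topic "$1" --from-beginning; }
```

A typical check: create_topic test, then list_topics, then run produce test in one terminal and consume test in another; lines typed into the producer should appear in the consumer.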

Start ZooKeeper (the copy bundled with Kafka; -daemon runs it in the background):

[root@bogon bin]# sh zookeeper-server-start.sh -daemon ../config/zookeeper.properties

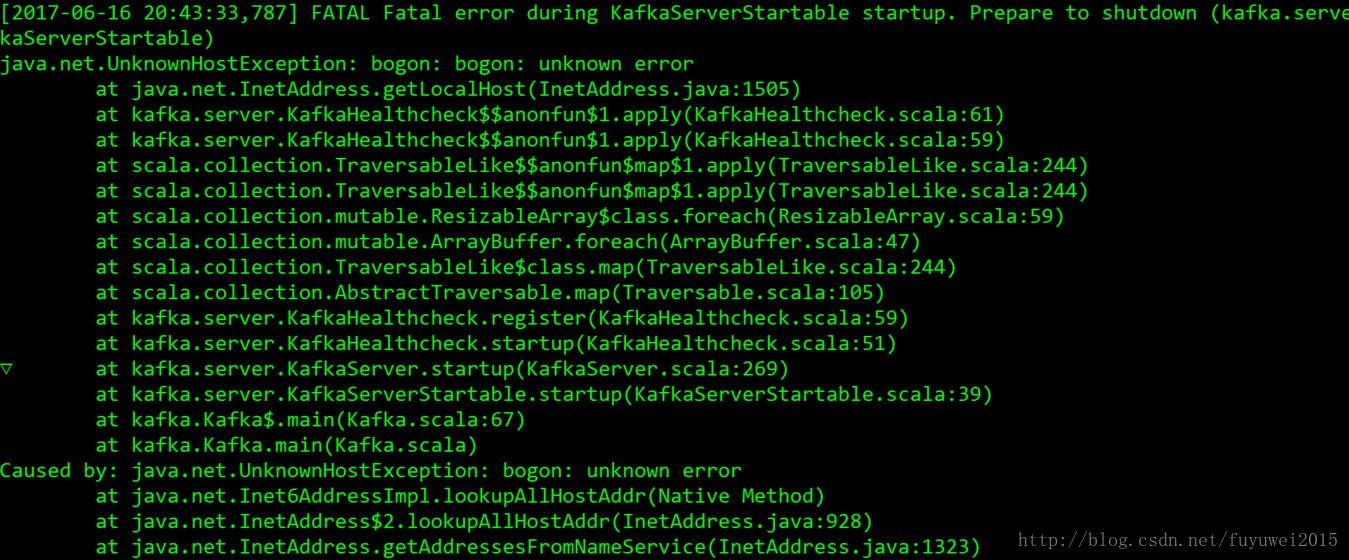
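To confirm that ZooKeeper actually came up, you can send it the ruok four-letter command; a healthy server replies imok. A minimal sketch using bash's /dev/tcp, assuming ZooKeeper on localhost:2181 and that four-letter commands are enabled (they are by default in the ZooKeeper 3.4.x line shipped with Kafka 0.10.x, while newer ZooKeeper releases require enabling them via 4lw.commands.whitelist); zk_ruok is a hypothetical name.

```shell
#!/usr/bin/env bash
# zk_ruok [HOST] [PORT]
# Hypothetical helper: send ZooKeeper's "ruok" command and succeed
# only if the reply is "imok". Assumes four-letter commands are enabled.
zk_ruok() {
  local host=${1:-localhost} port=${2:-2181}
  (
    # Open a bidirectional TCP connection on fd 3 (bash-only /dev/tcp).
    exec 3<>"/dev/tcp/$host/$port" || exit 1
    printf 'ruok' >&3
    # ZooKeeper closes the connection after answering; timeout guards
    # against a non-ZooKeeper service that never closes it.
    [ "$(timeout 5 cat <&3)" = "imok" ]
  ) 2>/dev/null
}
```

Usage: if zk_ruok; then echo "ZooKeeper is up"; fi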

Start the Kafka server:

[root@bogon bin]# sh kafka-server-start.sh ../config/server.properties

It fails with the following error:

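In this case we need to change the hostname, then start a new shell with su so that the prompt picks up the new name: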

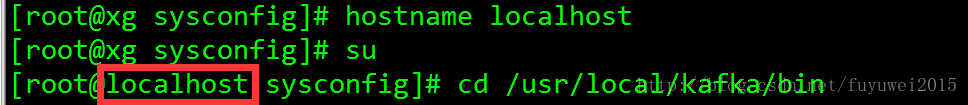

[root@xg sysconfig]# hostname localhost

[root@xg sysconfig]# su
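Assuming the error above is the common java.net.UnknownHostException, which occurs when the machine's hostname (bogon here) cannot be resolved to an address, a quick check before restarting the broker is to ask the system resolver about the name. A minimal sketch using getent; hostname_resolves and check_hostname are hypothetical helper names.

```shell
#!/usr/bin/env bash
# hostname_resolves NAME
# Hypothetical helper: succeed if NAME resolves via the system resolver
# (/etc/hosts, DNS, ... as configured in /etc/nsswitch.conf).
hostname_resolves() {
  getent hosts "$1" >/dev/null 2>&1
}

# Report whether the current machine's hostname resolves.
# Assumption: an unresolvable hostname is what broke the broker startup.
check_hostname() {
  local name
  name=$(hostname)
  if hostname_resolves "$name"; then
    echo "ok: '$name' resolves"
  else
    echo "'$name' does not resolve; add it to /etc/hosts or change the hostname" >&2
    return 1
  fi
}
```

If the check fails, either map the name in /etc/hosts (for example a line 127.0.0.1 bogon) or rename the host as done above. Note that hostname localhost only lasts until the next reboot; on CentOS 7, hostnamectl set-hostname makes the change persistent.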

Run it again:

[root@localhost bin]# sh kafka-server-start.sh ../config/server.properties

[2017-06-16 20:49:10,581] INFO KafkaConfig values:

advertised.host.name = null

advertised.listeners = null

advertised.port = null

authorizer.class.name =

auto.create.topics.enable = true

auto.leader.rebalance.enable = true

background.threads = 10

broker.id = 0

broker.id.generation.enable = true

broker.rack = null

compression.type = producer

connections.max.idle.ms = 600000

controlled.shutdown.enable = true

controlled.shutdown.max.retries = 3

controlled.shutdown.retry.backoff.ms = 5000

controller.socket.timeout.ms = 30000

create.topic.policy.class.name = null

default.replication.factor = 1

delete.topic.enable = false

fetch.purgatory.purge.interval.requests = 1000

group.max.session.timeout.ms = 300000

group.min.session.timeout.ms = 6000

host.name =

inter.broker.listener.name = null

inter.broker.protocol.version = 0.10.2-IV0

leader.imbalance.check.interval.seconds = 300

leader.imbalance.per.broker.percentage = 10

listener.security.protocol.map = SSL:SSL,SASL_PLAINTEXT:SASL_PLAINTEXT,TRACE:TRACE,SASL_SSL:SASL_SSL,PLAINTEXT:PLAINTEXT

listeners = null

log.cleaner.backoff.ms = 15000

log.cleaner.dedupe.buffer.size = 134217728

log.cleaner.delete.retention.ms = 86400000

log.cleaner.enable = true

log.cleaner.io.buffer.load.factor = 0.9

log.cleaner.io.buffer.size = 524288

log.cleaner.io.max.bytes.per.second = 1.7976931348623157E308

log.cleaner.min.cleanable.ratio = 0.5

log.cleaner.min.compaction.lag.ms = 0

log.cleaner.threads = 1

log.cleanup.policy = [delete]

log.dir = /tmp/kafka-logs

log.dirs = /tmp/kafka-logs

log.flush.interval.messages = 9223372036854775807

log.flush.interval.ms = null

log.flush.offset.checkpoint.interval.ms = 60000

log.flush.scheduler.interval.ms = 9223372036854775807

log.index.interval.bytes = 4096

log.index.size.max.bytes = 10485760

log.message.format.version = 0.10.2-IV0

log.message.timestamp.difference.max.ms = 9223372036854775807

log.message.timestamp.type = CreateTime

log.preallocate = false

log.retention.bytes = -1

log.retention.check.interval.ms = 300000

log.retention.hours = 168

log.retention.minutes = null

log.retention.ms = null

log.roll.hours = 168

log
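The KafkaConfig values block is the broker's effective configuration, which makes it the easiest place to confirm which settings actually took effect. For instance, log.dirs = /tmp/kafka-logs means message data is stored under /tmp, which the operating system may clean up, so a longer-lived setup would normally point log.dirs elsewhere in server.properties. A minimal sketch for pulling one setting out of a saved log, assuming the key = value layout shown above; kafka_config_value is a hypothetical helper, and by default the broker writes this log to logs/server.log under the Kafka directory.

```shell
#!/usr/bin/env bash
# kafka_config_value KEY FILE
# Hypothetical helper: print the value logged for KEY in the
# "KafkaConfig values:" block, whose lines look like "broker.id = 0"
# (the value may be empty). Prints one line per logged block, so a log
# covering several restarts yields several values.
kafka_config_value() {
  awk -v k="$1" '
    {
      line = $0
      sub(/^[ \t]+/, "", line)          # drop leading indentation
      n = index(line, " =")             # first " =" separates key and value
      if (n > 0 && substr(line, 1, n - 1) == k) {
        v = substr(line, n + 2)         # text after " ="
        sub(/^ /, "", v)                # drop the single space after "="
        print v
      }
    }' "$2"
}
```

Usage: kafka_config_value log.dirs /usr/local/kafka/kafka/logs/server.log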

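This article explains step by step how to install and configure Kafka on CentOS 7, both as a single node and as a cluster. It covers starting ZooKeeper, configuring multiple brokers, creating and verifying topics, and handling problems that come up during startup, going from downloading the installation package all the way to configuring and testing a multi-machine, multi-broker cluster.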

