先导入所需要的库:

import urllib.request

import re

from requests import RequestException

import requests

import os

模拟UA:

headers = {'content-type': 'application/json',

'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/85.0.4183.83 Safari/537.36'}

req = urllib.request.Request(url=url, headers=headers)

response = urllib.request.urlopen(req)

html = response.read().decode('utf-8')

return html提取信息:

# 题目

pattern = re.compile('<h1 class="main-title">(.*?)</h1>', re.S)

title = pattern.findall(html)[0]

题目,日期,来源,正文一样都可以按这个方法爬取。

数据存储:

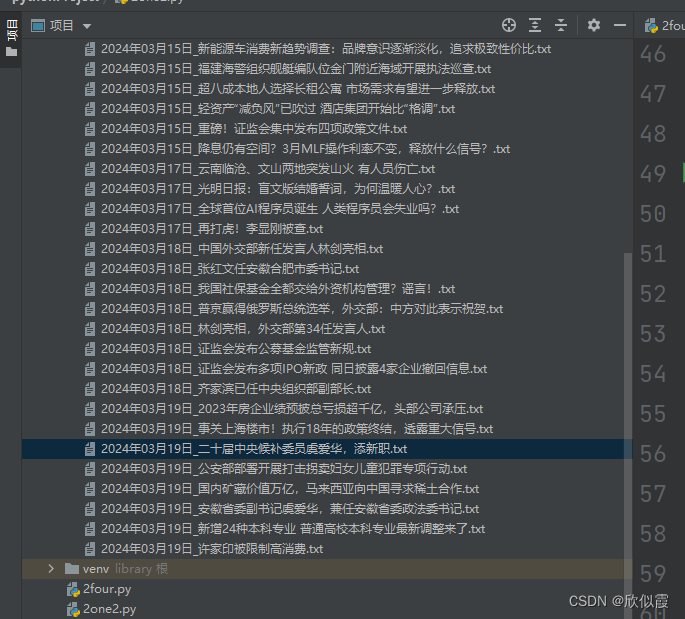

with open('./news/{}.txt'.format(date[:11] + '_' + title), 'w', encoding='utf-8') as f:

f.write(title + '\t' + date + '\t' + source + '\n')

for i in article:

f.write(i.strip())存储文件夹:

if not os.path.exists('news'):

os.mkdir('news')循环爬取:

for i in range(len(links)):

links[i] = links[i].replace('\\', '')

检测异常:

try:

html = get_page(url)

# 解析响应、保存结果

get_parser(html)

count += 1

except:

print(f'爬取中止,已爬取{count}条新闻')

f = 0

break运行结果截图:

如果需要完整代码,关注博主私聊哟!

联系Q:3041893695

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?