Deleting a node from an Oracle RAC cluster

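Overview

Deleting a node is a little more involved than adding one and takes a few more steps. It is essentially the add-node procedure run in reverse.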

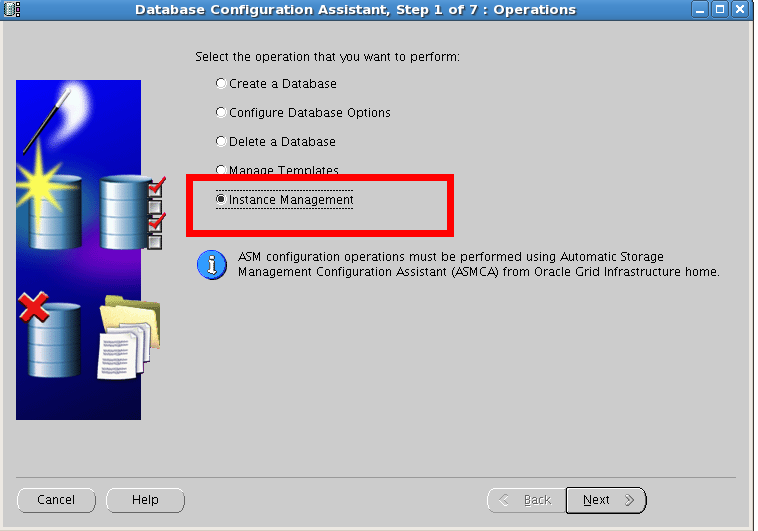

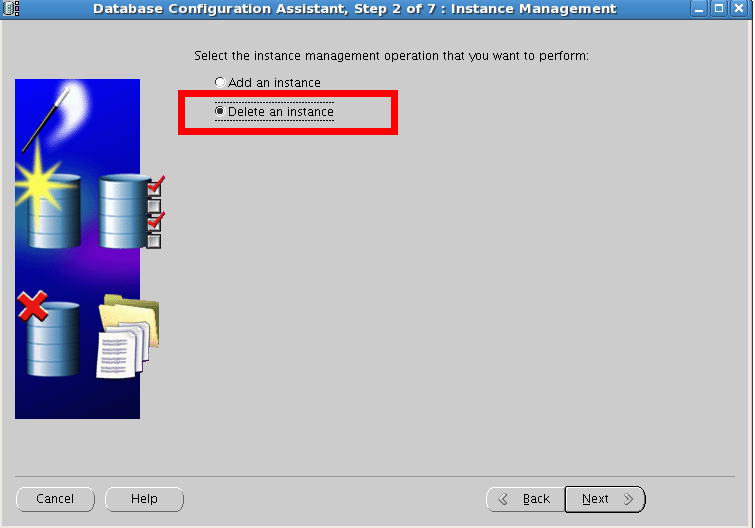

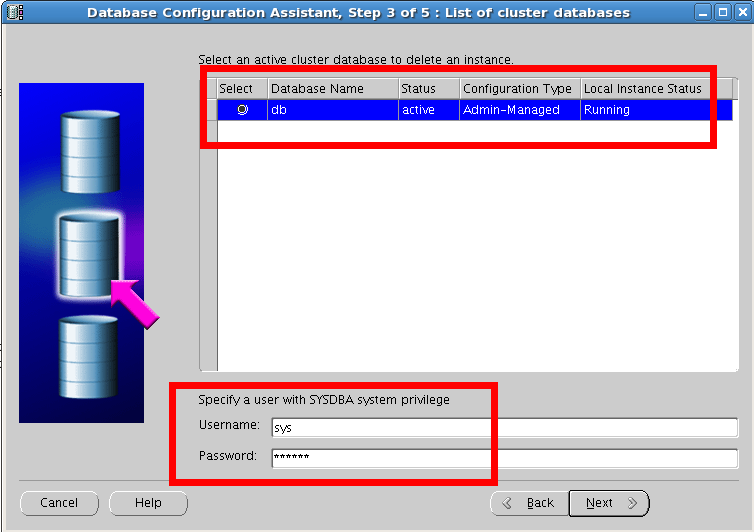

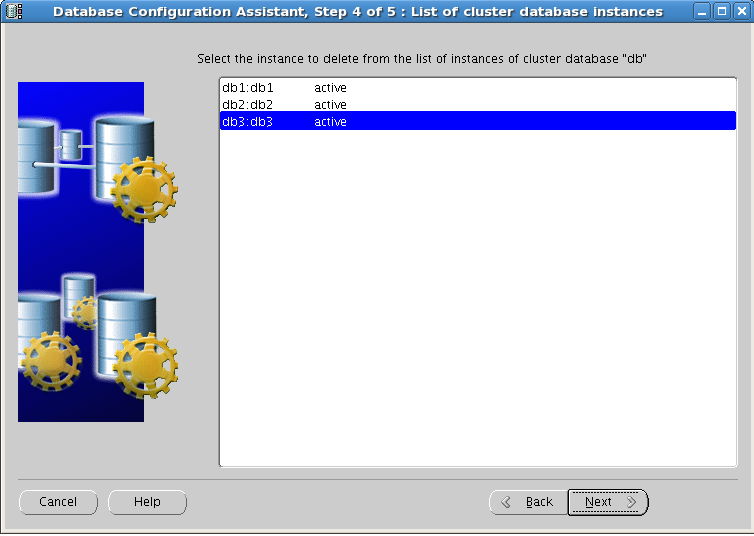

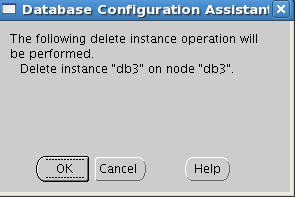

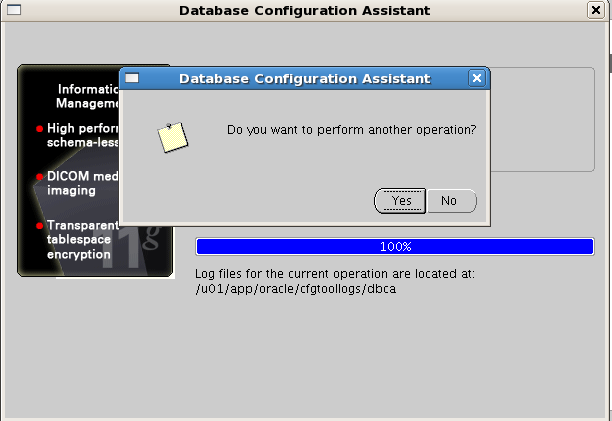

Delete the instance

Use dbca to delete the instance.

| [grid@db1 bin]$ crsctl status res -t
--------------------------------------------------------------------------------
NAME           TARGET  STATE        SERVER                   STATE_DETAILS
--------------------------------------------------------------------------------
Local Resources
--------------------------------------------------------------------------------
ora.DATA.dg
               ONLINE  ONLINE       db1
               ONLINE  ONLINE       db2
               ONLINE  ONLINE       db3
ora.LISTENER.lsnr
               ONLINE  ONLINE       db1
               ONLINE  ONLINE       db2
               ONLINE  ONLINE       db3
ora.asm
               ONLINE  ONLINE       db1                      Started
               ONLINE  ONLINE       db2                      Started
               ONLINE  ONLINE       db3                      Started
ora.eons
               ONLINE  ONLINE       db1
               ONLINE  ONLINE       db2
               ONLINE  ONLINE       db3
ora.gsd
               OFFLINE OFFLINE      db1
               OFFLINE OFFLINE      db2
               OFFLINE OFFLINE      db3
ora.net1.network
               ONLINE  ONLINE       db1
               ONLINE  ONLINE       db2
               ONLINE  ONLINE       db3
ora.ons
               ONLINE  ONLINE       db1
               ONLINE  ONLINE       db2
               ONLINE  ONLINE       db3
--------------------------------------------------------------------------------
Cluster Resources
--------------------------------------------------------------------------------
ora.LISTENER_SCAN1.lsnr
      1        ONLINE  ONLINE       db2
ora.db.db
      1        ONLINE  ONLINE       db1                      Open
      2        ONLINE  ONLINE       db2                      Open
ora.db1.vip
      1        ONLINE  ONLINE       db1
ora.db2.vip
      1        ONLINE  ONLINE       db2
ora.db3.vip
      1        ONLINE  ONLINE       db3
ora.oc4j
      1        OFFLINE OFFLINE
ora.scan1.vip
      1        ONLINE  ONLINE       db2 |

The output shows that ora.db.db no longer has an instance on db3.
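dbca also has a silent mode, which can help when no graphical display is available. The sketch below only prints such a command and does not run dbca. The instance name `db3` and the `sys` user are assumptions (the listing only shows the database resource `ora.db.db`), and the password is left as a placeholder.

```shell
# Dry-run helper: prints a silent-mode dbca command; it does not execute dbca.
# Arguments (all assumed values): node to remove, global database name, instance name.
build_dbca_delete() {
  printf 'dbca -silent -deleteInstance -nodeList %s -gdbName %s -instanceName %s -sysDBAUserName sys -sysDBAPassword "<password>"\n' "$1" "$2" "$3"
}

# Assumed names for this cluster: node db3, database db, instance db3.
build_dbca_delete db3 db db3
```

If this route is used, the printed command would normally be run as the oracle user from a node that stays in the cluster, after checking the names against the real configuration.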

Stop the listener

Stop the listener on node 3.

| [grid@db3 ~]$ srvctl config listener -a
Name: LISTENER
Network: 1, Owner: grid
Home: <CRS home>
  /u01/app/11.2.0/grid on node(s) db3,db2,db1
End points: TCP:1521
[grid@db3 ~]$ srvctl disable listener -l listener -n db3
[grid@db3 ~]$ srvctl stop listener -l listener -n db3 |

Remove the database software and update the inventory (oracle user)

Update the cluster node list on the node being deleted (oracle user).

| [root@db3 ~]# su - oracle
[oracle@db3 ~]$ cd /u01/app/oracle/product/11.2.0/db/oui/bin/
[oracle@db3 bin]$ ./runInstaller -updateNodeList ORACLE_HOME=$ORACLE_HOME "CLUSTER_NODES={db3}" -local
Starting Oracle Universal Installer...

Checking swap space: must be greater than 500 MB. Actual 4627 MB Passed
The inventory pointer is located at /etc/oraInst.loc
The inventory is located at /u01/app/oraInventory
'UpdateNodeList' was successful. |

| [oracle@db3 db]$ cd /u01/app/oracle/product/11.2.0/db/deinstall/
[oracle@db3 deinstall]$ ./deinstall -local
Checking for required files and bootstrapping ...
Please wait ...
Location of logs /u01/app/oraInventory/logs/

############ ORACLE DEINSTALL & DECONFIG TOOL START ############

######################## CHECK OPERATION START ########################
Install check configuration START

Checking for existence of the Oracle home location /u01/app/oracle/product/11.2.0/db
Oracle Home type selected for de-install is: RACDB
Oracle Base selected for de-install is: /u01/app/oracle
Checking for existence of central inventory location /u01/app/oraInventory
Checking for existence of the Oracle Grid Infrastructure home /u01/app/11.2.0/grid
The following nodes are part of this cluster: db3

Install check configuration END

Network Configuration check config START

Network de-configuration trace file location: /u01/app/oraInventory/logs/netdc_check9197824796654866846.log

Network Configuration check config END

Database Check Configuration START

Database de-configuration trace file location: /u01/app/oraInventory/logs/databasedc_check2332725355778202763.log

Database Check Configuration END

Enterprise Manager Configuration Assistant START

EMCA de-configuration trace file location: /u01/app/oraInventory/logs/emcadc_check.log

Enterprise Manager Configuration Assistant END
Oracle Configuration Manager check START
OCM check log file location : /u01/app/oraInventory/logs//ocm_check6825.log
Oracle Configuration Manager check END

######################### CHECK OPERATION END #########################

####################### CHECK OPERATION SUMMARY #######################
Oracle Grid Infrastructure Home is: /u01/app/11.2.0/grid
The cluster node(s) on which the Oracle home exists are: (Please input nodes seperated by ",", eg: node1,node2,...)db3
Since -local option has been specified, the Oracle home will be de-installed only on the local node, 'db3', and the global configuration will be removed.
Oracle Home selected for de-install is: /u01/app/oracle/product/11.2.0/db
Inventory Location where the Oracle home registered is: /u01/app/oraInventory
The option -local will not modify any database configuration for this Oracle home.

No Enterprise Manager configuration to be updated for any database(s)
No Enterprise Manager ASM targets to update
No Enterprise Manager listener targets to migrate
Checking the config status for CCR
Oracle Home exists with CCR directory, but CCR is not configured
CCR check is finished
Do you want to continue (y - yes, n - no)? [n]: y
A log of this session will be written to: '/u01/app/oraInventory/logs/deinstall_deconfig2014-03-06_07-03-28-PM.out'
Any error messages from this session will be written to: '/u01/app/oraInventory/logs/deinstall_deconfig2014-03-06_07-03-28-PM.err'

######################## CLEAN OPERATION START ########################

Enterprise Manager Configuration Assistant START

EMCA de-configuration trace file location: /u01/app/oraInventory/logs/emcadc_clean.log

Updating Enterprise Manager ASM targets (if any)
Updating Enterprise Manager listener targets (if any)
Enterprise Manager Configuration Assistant END
Database de-configuration trace file location: /u01/app/oraInventory/logs/databasedc_clean6091093531264526434.log

Network Configuration clean config START

Network de-configuration trace file location: /u01/app/oraInventory/logs/netdc_clean1217065274837444160.log

De-configuring backup files...
Backup files de-configured successfully.

The network configuration has been cleaned up successfully.

Network Configuration clean config END

Oracle Configuration Manager clean START
OCM clean log file location : /u01/app/oraInventory/logs//ocm_clean6825.log
Oracle Configuration Manager clean END
Oracle Universal Installer clean START

Detach Oracle home '/u01/app/oracle/product/11.2.0/db' from the central inventory on the local node : Done

Delete directory '/u01/app/oracle/product/11.2.0/db' on the local node : Done

Failed to delete the directory '/u01/app/oracle'. The directory is in use.
Delete directory '/u01/app/oracle' on the local node : Failed <<<<

Oracle Universal Installer cleanup completed with errors.

Oracle Universal Installer clean END

Oracle install clean START

Clean install operation removing temporary directory '/tmp/install' on node 'db3'

Oracle install clean END

######################### CLEAN OPERATION END #########################

####################### CLEAN OPERATION SUMMARY #######################
Cleaning the config for CCR
As CCR is not configured, so skipping the cleaning of CCR configuration
CCR clean is finished
Successfully detached Oracle home '/u01/app/oracle/product/11.2.0/db' from the central inventory on the local node.
Successfully deleted directory '/u01/app/oracle/product/11.2.0/db' on the local node.
Failed to delete directory '/u01/app/oracle' on the local node.
Oracle Universal Installer cleanup completed with errors.

Oracle install successfully cleaned up the temporary directories.
#######################################################################

############# ORACLE DEINSTALL & DECONFIG TOOL END #############

|

While the script above runs, the size of the files under ORACLE_HOME can be seen shrinking step by step:

| [root@db3 db]# du -sh
4.2G .
[root@db3 db]# du -sh
1.8G .
[root@db3 db]# du -sh
1.7G .
[root@db3 db]# du -sh
1.7G .
[root@db3 db]# du -sh
4.0K . |

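On any healthy node, stop the NodeApps of the failed node. The first listing shows the state before the stop; in the second, taken after the stop, the ONS and VIP resources of db3 are offline as well.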

| [grid@db1 ~]$ crs_stat -t -v
Name           Type           R/RA   F/FT   Target    State     Host
----------------------------------------------------------------------
ora.DATA.dg    ora....up.type 0/5    0/     ONLINE    ONLINE    db1
ora....ER.lsnr ora....er.type 0/5    0/     ONLINE    ONLINE    db1
ora....N1.lsnr ora....er.type 0/5    0/0    ONLINE    ONLINE    db2
ora.asm        ora.asm.type   0/5    0/     ONLINE    ONLINE    db1
ora.db.db      ora....se.type 0/2    0/1    ONLINE    ONLINE    db1
ora....SM1.asm application    0/5    0/0    ONLINE    ONLINE    db1
ora....B1.lsnr application    0/5    0/0    ONLINE    ONLINE    db1
ora.db1.gsd    application    0/5    0/0    OFFLINE   OFFLINE
ora.db1.ons    application    0/3    0/0    ONLINE    ONLINE    db1
ora.db1.vip    ora....t1.type 0/0    0/0    ONLINE    ONLINE    db1
ora....SM2.asm application    0/5    0/0    ONLINE    ONLINE    db2
ora....B2.lsnr application    0/5    0/0    ONLINE    ONLINE    db2
ora.db2.gsd    application    0/5    0/0    OFFLINE   OFFLINE
ora.db2.ons    application    0/3    0/0    ONLINE    ONLINE    db2
ora.db2.vip    ora....t1.type 0/0    0/0    ONLINE    ONLINE    db2
ora....SM3.asm application    0/5    0/0    ONLINE    ONLINE    db3
ora....B3.lsnr application    0/5    0/0    OFFLINE   OFFLINE
ora.db3.gsd    application    0/5    0/0    OFFLINE   OFFLINE
ora.db3.ons    application    0/3    0/0    ONLINE    ONLINE    db3
ora.db3.vip    ora....t1.type 0/0    0/0    ONLINE    ONLINE    db3
ora.eons       ora.eons.type  0/3    0/     ONLINE    ONLINE    db1
ora.gsd        ora.gsd.type   0/5    0/     OFFLINE   OFFLINE
ora....network ora....rk.type 0/5    0/     ONLINE    ONLINE    db1
ora.oc4j       ora.oc4j.type  0/5    0/0    OFFLINE   OFFLINE
ora.ons        ora.ons.type   0/3    0/     ONLINE    ONLINE    db1
ora.scan1.vip  ora....ip.type 0/0    0/0    ONLINE    ONLINE    db2 |

| [grid@db1 ~]$ srvctl stop nodeapps -n db3 -f
[grid@db1 ~]$ crs_stat -t -v
Name           Type           R/RA   F/FT   Target    State     Host
----------------------------------------------------------------------
ora.DATA.dg    ora....up.type 0/5    0/     ONLINE    ONLINE    db1
ora....ER.lsnr ora....er.type 0/5    0/     ONLINE    ONLINE    db1
ora....N1.lsnr ora....er.type 0/5    0/0    ONLINE    ONLINE    db2
ora.asm        ora.asm.type   0/5    0/     ONLINE    ONLINE    db1
ora.db.db      ora....se.type 0/2    0/1    ONLINE    ONLINE    db1
ora....SM1.asm application    0/5    0/0    ONLINE    ONLINE    db1
ora....B1.lsnr application    0/5    0/0    ONLINE    ONLINE    db1
ora.db1.gsd    application    0/5    0/0    OFFLINE   OFFLINE
ora.db1.ons    application    0/3    0/0    ONLINE    ONLINE    db1
ora.db1.vip    ora....t1.type 0/0    0/0    ONLINE    ONLINE    db1
ora....SM2.asm application    0/5    0/0    ONLINE    ONLINE    db2
ora....B2.lsnr application    0/5    0/0    ONLINE    ONLINE    db2
ora.db2.gsd    application    0/5    0/0    OFFLINE   OFFLINE
ora.db2.ons    application    0/3    0/0    ONLINE    ONLINE    db2
ora.db2.vip    ora....t1.type 0/0    0/0    ONLINE    ONLINE    db2
ora....SM3.asm application    0/5    0/0    ONLINE    ONLINE    db3
ora....B3.lsnr application    0/5    0/0    OFFLINE   OFFLINE
ora.db3.gsd    application    0/5    0/0    OFFLINE   OFFLINE
ora.db3.ons    application    0/3    0/0    OFFLINE   OFFLINE
ora.db3.vip    ora....t1.type 0/0    0/0    OFFLINE   OFFLINE
ora.eons       ora.eons.type  0/3    0/     ONLINE    ONLINE    db1
ora.gsd        ora.gsd.type   0/5    0/     OFFLINE   OFFLINE
ora....network ora....rk.type 0/5    0/     ONLINE    ONLINE    db1
ora.oc4j       ora.oc4j.type  0/5    0/0    OFFLINE   OFFLINE
ora.ons        ora.ons.type   0/3    0/     ONLINE    ONLINE    db1
ora.scan1.vip  ora....ip.type 0/0    0/0    ONLINE    ONLINE    db2 |

At this point, only ASM is still running on node 3.
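Scanning a long listing by eye is error-prone, so a small filter can pull out what is still online on one host. This is a minimal sketch that assumes the column layout shown above (State and Host as the last two fields); `online_on_host` is a hypothetical helper name, and the sample rows are copied from the listing taken after the nodeapps stop.

```shell
# List resources that a crs_stat -t -v listing reports as ONLINE on a given host.
# Reads the listing on stdin; relies on State and Host being the last two columns.
online_on_host() {
  awk -v host="$1" '$NF == host && $(NF-1) == "ONLINE" { print $1 }'
}

# Sample rows for db3, copied from the listing after "srvctl stop nodeapps".
online_on_host db3 <<'EOF'
ora....SM3.asm application    0/5    0/0    ONLINE    ONLINE    db3
ora....B3.lsnr application    0/5    0/0    OFFLINE   OFFLINE
ora.db3.ons    application    0/3    0/0    OFFLINE   OFFLINE
ora.db3.vip    ora....t1.type 0/0    0/0    OFFLINE   OFFLINE
EOF
```

On a live cluster the same function could be fed directly, for example `crs_stat -t -v | online_on_host db3`.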

Update the cluster node list on the healthy nodes (oracle user). Run this on db1 and db2.

| [root@db1 bin]# su - oracle
[oracle@db1 ~]$ echo $ORACLE_HOME
/u01/app/oracle/product/11.2.0/db
[oracle@db1 ~]$ cd /u01/app/oracle/product/11.2.0/db/oui/bin/
[oracle@db1 bin]$ ./runInstaller -updateNodeList ORACLE_HOME=$ORACLE_HOME "CLUSTER_NODES={db1,db2}"
Starting Oracle Universal Installer...

Checking swap space: must be greater than 500 MB. Actual 4457 MB Passed
The inventory pointer is located at /etc/oraInst.loc
The inventory is located at /u01/app/oraInventory
'UpdateNodeList' was successful. |
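The `CLUSTER_NODES` value is simply the list of nodes that remain. On larger clusters, typing it by hand invites mistakes, so the sketch below builds the argument from a full node list minus the node being removed. `cluster_nodes_arg` is a hypothetical helper, not part of any Oracle tool.

```shell
# Build the CLUSTER_NODES argument for runInstaller -updateNodeList.
# First argument: node being removed; remaining arguments: all current nodes.
cluster_nodes_arg() {
  removed="$1"
  shift
  list=""
  for n in "$@"; do
    if [ "$n" != "$removed" ]; then
      # Append with a comma separator unless the list is still empty.
      list="${list:+$list,}$n"
    fi
  done
  printf '"CLUSTER_NODES={%s}"\n' "$list"
}

cluster_nodes_arg db3 db1 db2 db3
```

For the three-node cluster in this walkthrough, the call prints `"CLUSTER_NODES={db1,db2}"`, matching the value used in the transcript.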

Remove Grid Infrastructure

Deconfigure the clusterware on the failed node. Run this on node 3.

| [root@db3 install]# cd /u01/app/11.2.0/grid/crs/install
[root@db3 install]# ./rootcrs.pl -deconfig -force
2014-03-06 19:17:24: Parsing the host name
2014-03-06 19:17:24: Checking for super user privileges
2014-03-06 19:17:24: User has super user privileges
Using configuration parameter file: ./crsconfig_params
VIP exists.:db1
VIP exists.: /db1-vip/192.168.1.163/255.255.255.0/eth0
VIP exists.:db2
VIP exists.: /db2-vip/192.168.1.164/255.255.255.0/eth0
VIP exists.:db3
VIP exists.: /db3-vip/192.168.1.174/255.255.255.0/eth0
GSD exists.
ONS daemon exists. Local port 6100, remote port 6200
eONS daemon exists. Multicast port 19363, multicast IP address 234.95.137.142, listening port 2016
PRKO-2426 : ONS is already stopped on node(s): db3
PRKO-2427 : eONS is already stopped on node(s): db3
PRKO-2425 : VIP is already stopped on node(s): db3
PRKO-2440 : Network resource is already stopped.

ACFS-9200: Supported
CRS-2613: Could not find resource 'ora.registry.acfs'.
CRS-4000: Command Stop failed, or completed with errors.
CRS-2791: Starting shutdown of Oracle High Availability Services-managed resources on 'db3'
CRS-2673: Attempting to stop 'ora.crsd' on 'db3'
CRS-2790: Starting shutdown of Cluster Ready Services-managed resources on 'db3'
CRS-2673: Attempting to stop 'ora.DATA.dg' on 'db3'
CRS-2677: Stop of 'ora.DATA.dg' on 'db3' succeeded
CRS-2673: Attempting to stop 'ora.asm' on 'db3'
CRS-2677: Stop of 'ora.asm' on 'db3' succeeded
CRS-2792: Shutdown of Cluster Ready Services-managed resources on 'db3' has completed
CRS-2677: Stop of 'ora.crsd' on 'db3' succeeded
CRS-2673: Attempting to stop 'ora.cssdmonitor' on 'db3'
CRS-2673: Attempting to stop 'ora.ctssd' on 'db3'
CRS-2673: Attempting to stop 'ora.evmd' on 'db3'
CRS-2673: Attempting to stop 'ora.asm' on 'db3'
CRS-2673: Attempting to stop 'ora.mdnsd' on 'db3'
CRS-2677: Stop of 'ora.cssdmonitor' on 'db3' succeeded
CRS-2677: Stop of 'ora.evmd' on 'db3' succeeded
CRS-2677: Stop of 'ora.mdnsd' on 'db3' succeeded
CRS-2677: Stop of 'ora.ctssd' on 'db3' succeeded
CRS-2677: Stop of 'ora.asm' on 'db3' succeeded
CRS-2673: Attempting to stop 'ora.cssd' on 'db3'
CRS-2677: Stop of 'ora.cssd' on 'db3' succeeded
CRS-2673: Attempting to stop 'ora.gpnpd' on 'db3'
CRS-2673: Attempting to stop 'ora.diskmon' on 'db3'
CRS-2677: Stop of 'ora.gpnpd' on 'db3' succeeded
CRS-2673: Attempting to stop 'ora.gipcd' on 'db3'
CRS-2677: Stop of 'ora.diskmon' on 'db3' succeeded
CRS-2677: Stop of 'ora.gipcd' on 'db3' succeeded
CRS-2793: Shutdown of Oracle High Availability Services-managed resources on 'db3' has completed
CRS-4133: Oracle High Availability Services has been stopped.
Successfully deconfigured Oracle clusterware stack on this node |

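Remove the VIP of the failed node

| If the previous step completed cleanly, the failed node's VIP has already been removed. Verify this on any healthy node with crsctl status res -t (or the older crs_stat -t): |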

| [grid@db2 ~]$ crsctl status res -t
--------------------------------------------------------------------------------
NAME           TARGET  STATE        SERVER                   STATE_DETAILS
--------------------------------------------------------------------------------
Local Resources
--------------------------------------------------------------------------------
ora.DATA.dg
               ONLINE  ONLINE       db1
               ONLINE  ONLINE       db2
ora.LISTENER.lsnr
               ONLINE  ONLINE       db1
               ONLINE  ONLINE       db2
ora.asm
               ONLINE  ONLINE       db1                      Started
               ONLINE  ONLINE       db2                      Started
ora.eons
               ONLINE  ONLINE       db1
               ONLINE  ONLINE       db2
ora.gsd
               OFFLINE OFFLINE      db1
               OFFLINE OFFLINE      db2
ora.net1.network
               ONLINE  ONLINE       db1
               ONLINE  ONLINE       db2
ora.ons
               ONLINE  ONLINE       db1
               ONLINE  ONLINE       db2
--------------------------------------------------------------------------------
Cluster Resources
--------------------------------------------------------------------------------
ora.LISTENER_SCAN1.lsnr
      1        ONLINE  ONLINE       db2
ora.db.db
      1        ONLINE  ONLINE       db1                      Open
      2        ONLINE  ONLINE       db2                      Open
ora.db1.vip
      1        ONLINE  ONLINE       db1
ora.db2.vip
      1        ONLINE  ONLINE       db2
ora.oc4j
      1        OFFLINE OFFLINE
ora.scan1.vip
      1        ONLINE  ONLINE       db2 |

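| If the failed node's VIP resource still exists, run the following (ora.db3.vip is the VIP resource of the node removed in this walkthrough):
srvctl stop vip -i ora.db3.vip -f
srvctl remove vip -i ora.db3.vip -f
crsctl delete resource ora.db3.vip -f |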
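Whether the cleanup is needed can be decided from the status listing itself. The following is a minimal sketch: `resource_listed` is a hypothetical helper that checks whether a resource name appears at the start of any line of a `crsctl status res -t` listing. The sample input is made up to show the "still registered" branch; in the run above the VIP was already gone.

```shell
# Exit 0 when the named resource appears as the first field of any line
# in a "crsctl status res -t" listing read from stdin, otherwise exit 1.
resource_listed() {
  awk -v res="$1" '$1 == res { found = 1 } END { exit !found }'
}

# Hypothetical leftover state: the VIP of db3 is offline but still registered.
if resource_listed ora.db3.vip <<'EOF'
ora.db2.vip
      1        ONLINE  ONLINE       db2
ora.db3.vip
      1        OFFLINE OFFLINE
EOF
then
  echo "VIP still registered: run the cleanup commands above"
else
  echo "VIP already removed"
fi
```

Against a live cluster the check would read `crsctl status res -t | resource_listed ora.db3.vip`.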

Delete the failed node from any healthy node

| [root@db1 ~]# /u01/app/11.2.0/grid/bin/crsctl delete node -n db3
CRS-4661: Node db3 successfully deleted.
[root@db1 ~]# /u01/app/11.2.0/grid/bin/olsnodes -t -s
db1 Active Unpinned
db2 Active Unpinned |
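The `olsnodes -t -s` output is also a convenient source for the node list needed by the later `-updateNodeList` steps. As a rough sketch, assuming the three-column layout shown above (name, status, pin state), the hypothetical helper `active_nodes` joins the active node names with commas.

```shell
# Join the names of all nodes reported as Active by "olsnodes -t -s"
# into a comma-separated list. Reads the olsnodes output on stdin.
active_nodes() {
  awk '$2 == "Active" { printf "%s%s", sep, $1; sep = "," } END { print "" }'
}

# Sample input copied from the olsnodes output above.
active_nodes <<'EOF'
db1 Active Unpinned
db2 Active Unpinned
EOF
```

With the sample input this prints `db1,db2`, which is the value that goes inside `CLUSTER_NODES={...}` on the surviving nodes.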

Update the cluster node list on the node being deleted (grid user)

Run on the node being deleted:

| [root@db3 install]# su - grid
[grid@db3 grid]$ cd /u01/app/11.2.0/grid/oui/bin
[grid@db3 bin]$ ./runInstaller -updateNodeList ORACLE_HOME=$ORACLE_HOME "CLUSTER_NODES={db3}" CRS=true -local
Starting Oracle Universal Installer...

Checking swap space: must be greater than 500 MB. Actual 4900 MB Passed
The inventory pointer is located at /etc/oraInst.loc
The inventory is located at /u01/app/oraInventory
'UpdateNodeList' was successful. |

Remove the clusterware software from the node being deleted

Run on the node being deleted (db3):

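| [grid@db3 bin]$ cd /u01/app/11.2.0/grid/deinstall/
[grid@db3 deinstall]$ ./deinstall -local   ## press Enter to accept the default at each prompt; answer y at the final confirmation, because its default is n and pressing Enter there exits, as in the captured run below
Checking for required files and bootstrapping ...
Please wait ...
Location of logs /tmp/deinstall2014-03-06_07-27-15-PM/logs/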

############ ORACLE DEINSTALL & DECONFIG TOOL START ############

######################## CHECK OPERATION START ########################
Install check configuration START

Checking for existence of the Oracle home location /u01/app/11.2.0/grid
Oracle Home type selected for de-install is: CRS
Oracle Base selected for de-install is: /u01/app/grid
Checking for existence of central inventory location /u01/app/oraInventory
Checking for existence of the Oracle Grid Infrastructure home
The following nodes are part of this cluster: db3

Install check configuration END

Traces log file: /tmp/deinstall2014-03-06_07-27-15-PM/logs//crsdc.log
Enter an address or the name of the virtual IP used on node "db3"[db3-vip] >

The following information can be collected by running ifconfig -a on node "db3"
Enter the IP netmask of Virtual IP "192.168.1.174" on node "db3"[255.255.255.0] >

Enter the network interface name on which the virtual IP address "192.168.1.174" is active >

Enter an address or the name of the virtual IP[] >

Network Configuration check config START

Network de-configuration trace file location: /tmp/deinstall2014-03-06_07-27-15-PM/logs/netdc_check5691065690501268016.log

Specify all RAC listeners that are to be de-configured [LISTENER]:

Network Configuration check config END

Asm Check Configuration START

ASM de-configuration trace file location: /tmp/deinstall2014-03-06_07-27-15-PM/logs/asmcadc_check496595205979151620.log

######################### CHECK OPERATION END #########################

####################### CHECK OPERATION SUMMARY #######################
Oracle Grid Infrastructure Home is:
The cluster node(s) on which the Oracle home exists are: (Please input nodes seperated by ",", eg: node1,node2,...)db3
Since -local option has been specified, the Oracle home will be de-installed only on the local node, 'db3', and the global configuration will be removed.
Oracle Home selected for de-install is: /u01/app/11.2.0/grid
Inventory Location where the Oracle home registered is: /u01/app/oraInventory
Following RAC listener(s) will be de-configured: LISTENER
Option -local will not modify any ASM configuration.
Do you want to continue (y - yes, n - no)? [n]:
Exited from program.

############# ORACLE DEINSTALL & DECONFIG TOOL END #############

|

When the session ends, run the following as root on node 'db3':

| [root@db3 ~]# rm -rf /etc/oraInst.loc
[root@db3 ~]# rm -rf /opt/ORCLfmap |

Update the cluster node list on the healthy nodes (grid user)

Run on all healthy nodes:

| [root@db1 ~]# su - grid
[grid@db1 ~]$ cd /u01/app/11.2.0/grid/oui/bin
[grid@db1 bin]$ ./runInstaller -updateNodeList ORACLE_HOME=$ORACLE_HOME "CLUSTER_NODES={db1,db2}" CRS=true
Starting Oracle Universal Installer...

Checking swap space: must be greater than 500 MB. Actual 4463 MB Passed
The inventory pointer is located at /etc/oraInst.loc
The inventory is located at /u01/app/oraInventory
'UpdateNodeList' was successful. |

Verify that the failed node has been removed

On any healthy node:

| [grid@db1 ~]$ cluvfy stage -post nodedel -n db3

Performing post-checks for node removal

Checking CRS integrity...

CRS integrity check passed

Node removal check passed

Post-check for node removal was successful. |

| [grid@db1 ~]$ crsctl status res -t
--------------------------------------------------------------------------------
NAME           TARGET  STATE        SERVER                   STATE_DETAILS
--------------------------------------------------------------------------------
Local Resources
--------------------------------------------------------------------------------
ora.DATA.dg
               ONLINE  ONLINE       db1
               ONLINE  ONLINE       db2
ora.LISTENER.lsnr
               ONLINE  ONLINE       db1
               ONLINE  ONLINE       db2
ora.asm
               ONLINE  ONLINE       db1                      Started
               ONLINE  ONLINE       db2                      Started
ora.eons
               ONLINE  ONLINE       db1
               ONLINE  ONLINE       db2
ora.gsd
               OFFLINE OFFLINE      db1
               OFFLINE OFFLINE      db2
ora.net1.network
               ONLINE  ONLINE       db1
               ONLINE  ONLINE       db2
ora.ons
               ONLINE  ONLINE       db1
               ONLINE  ONLINE       db2
--------------------------------------------------------------------------------
Cluster Resources
--------------------------------------------------------------------------------
ora.LISTENER_SCAN1.lsnr
      1        ONLINE  ONLINE       db2
ora.db.db
      1        ONLINE  ONLINE       db1                      Open
      2        ONLINE  ONLINE       db2                      Open
ora.db1.vip
      1        ONLINE  ONLINE       db1
ora.db2.vip
      1        ONLINE  ONLINE       db2
ora.oc4j
      1        OFFLINE OFFLINE
ora.scan1.vip
      1        ONLINE  ONLINE       db2 |
