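Introduction to Apache Spark

Apache Spark is an open-source cluster computing framework. Running Spark requires a cluster manager and a distributed storage system. For cluster management, Spark supports standalone mode, Hadoop YARN, and Apache Mesos. For storage, it works with Hadoop Distributed File System (HDFS), MapR File System (MapR-FS), Cassandra, OpenStack Swift, Amazon S3, and other distributed storage systems. Spark itself is written in Scala and runs on the JVM.

Setting Up an Apache Spark Cluster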

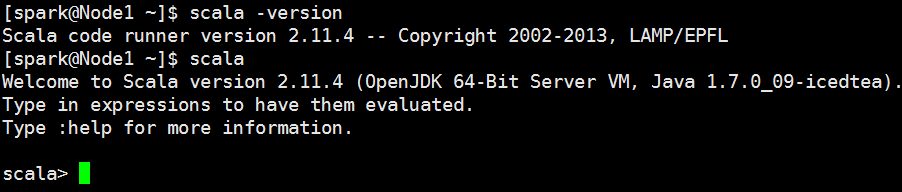

1. Download and install Scala

Scala 2.11.4 download page: http://www.scala-lang.org/download/2.11.4.html

Extract: [spark@Node1 apache_spark]$ tar zxvf scala-2.11.4.tgz

Configure: edit ~/.bash_profile and add the SCALA_HOME environment variable

export SCALA_HOME=/home/spark/apache_spark/scala-2.11.4

PATH=$PATH:$SCALA_HOME/bin

export PATH

Reload the profile with source ~/.bash_profile, then verify that the Scala environment works.
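One way to do that check is sketched below. The helper name check_on_path is hypothetical (it is not part of Scala or Spark); it only reports whether each command resolves on the current PATH, which is what the profile edit above is meant to achieve.

```shell
# Hypothetical helper: report whether each named command resolves on PATH.
# Returns non-zero if at least one command is missing.
check_on_path() {
  status=0
  for cmd in "$@"; do
    if command -v "$cmd" >/dev/null 2>&1; then
      echo "ok: $cmd"
    else
      echo "not found: $cmd"
      status=1
    fi
  done
  return "$status"
}
```

A typical use after reloading the profile would be check_on_path scala scalac, followed by scala -version to print the installed version.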

2. Install the JDK

Download page: http://www.oracle.com/technetwork/cn/java/javase/downloads/java-se-jdk-7-download-432154-zhs.html

Extract: tar zxvf jdk-7-linux-x64.tar.gz
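The directory an archive unpacks to does not have to match the archive's file name; later in this guide JAVA_HOME points at a jdk1.7.0_51 directory. A minimal sketch for finding the top-level directory before setting JAVA_HOME follows. The function name archive_top_dir is hypothetical, and it assumes a gzip-compressed tarball whose entries all share one top-level directory with no leading "./".

```shell
# Hypothetical helper: print the top-level directory name inside a .tar.gz.
# Assumes every entry sits under a single top-level directory.
archive_top_dir() {
  # -t lists entries, -z reads gzip, -f names the archive file.
  tar -tzf "$1" | head -n 1 | cut -d/ -f1
}
```

For example, archive_top_dir jdk-7-linux-x64.tar.gz prints the directory name to use when building the JAVA_HOME path.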

3. Install Spark

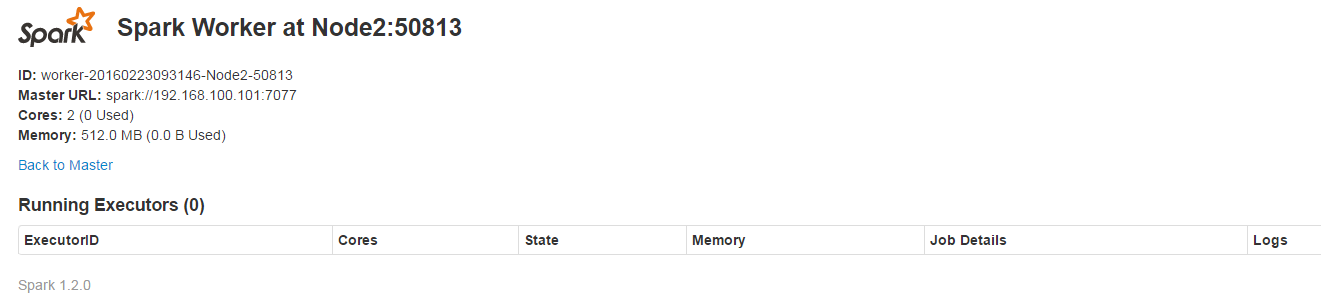

Cluster layout

Node1 192.168.100.101 (Master)

Node2 192.168.100.102 (Worker)

Node3 192.168.100.103 (Worker)

Node4 192.168.100.104 (Worker)

Download Spark

wget http://d3kbcqa49mib13.cloudfront.net/spark-1.2.0-bin-hadoop2.4.tgz

Extract

[spark@Node1 apache_spark]$ tar zxvf spark-1.2.0-bin-hadoop2.4.tgz

Configure environment variables

export SPARK_HOME=/home/spark/apache_spark/spark-1.2.0-bin-hadoop2.4

PATH=$PATH:$SPARK_HOME/bin

Edit the configuration files:

cd /home/spark/apache_spark/spark-1.2.0-bin-hadoop2.4/conf

//1 Edit the spark-env.sh.template file

mv spark-env.sh.template spark-env.sh

vim spark-env.sh

// Add the environment variables to export

export SCALA_HOME=/home/spark/apache_spark/scala-2.11.4

export JAVA_HOME=/home/spark/apache_spark/jdk1.7.0_51

export SPARK_MASTER_IP=192.168.100.101

export SPARK_WORKER_MEMORY=512M

export master=spark://192.168.100.101:7077
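A typo in spark-env.sh is easy to miss until the daemons fail to start. The sketch below is one way to catch unset variables early; check_spark_env is a hypothetical helper, not a Spark tool. It sources the file in a subshell and lists any of the named variables that end up empty. Variables already set in the calling shell count as set, so it is best run from a clean session.

```shell
# Hypothetical helper: source an env file in a subshell and report which of
# the named variables are still empty afterwards.
# Exit status: 0 if all are set, 1 if any is missing, 2 if the file fails to load.
check_spark_env() {
  env_file="$1"
  shift
  (
    . "$env_file" || exit 2
    missing=0
    for name in "$@"; do
      # Indirect lookup of the variable called $name (POSIX-compatible).
      eval "value=\${$name:-}"
      if [ -z "$value" ]; then
        echo "missing: $name"
        missing=1
      fi
    done
    exit "$missing"
  )
}
```

With the file from this guide, a call could look like check_spark_env ./spark-env.sh SCALA_HOME JAVA_HOME SPARK_MASTER_IP SPARK_WORKER_MEMORY.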

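//2 Edit the slaves file

mv slaves.template slaves

vim slaves

// Add the worker nodes

Node2

Node3

Node4

Distribute the files to the worker nodes (passwordless SSH between the nodes is already configured)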

// Distribute the profile

scp ~/.bash_profile Node2:~/.bash_profile

scp ~/.bash_profile Node3:~/.bash_profile

scp ~/.bash_profile Node4:~/.bash_profile
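The per-node copies in this step can also be driven by a loop over the slaves file, so the host list lives in one place. This is a minimal sketch under stated assumptions: worker_hosts, run, and distribute are hypothetical helper names, the slaves file holds one hostname per line with optional # comments, and DRY_RUN defaults to 1 so the commands are only printed unless it is explicitly set to 0.

```shell
# Hypothetical helpers for copying files to every host in a slaves file.

# Print worker hostnames, skipping blank lines and # comments.
worker_hosts() {
  sed -e 's/#.*$//' -e 's/[[:space:]]*$//' -e '/^$/d' "$1"
}

# Print the command when DRY_RUN is 1 (the default), otherwise execute it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$@"
  else
    "$@"
  fi
}

# Copy the profile and the installation directory to each worker.
distribute() {
  for host in $(worker_hosts "$1"); do
    run scp ~/.bash_profile "$host":~/.bash_profile
    run scp -r ~/apache_spark "$host":~/
  done
}
```

Running distribute "$SPARK_HOME/conf/slaves" prints the scp commands for review; DRY_RUN=0 distribute "$SPARK_HOME/conf/slaves" executes them.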

// Distribute the installation files

scp -r ~/apache_spark Node2:~/

scp -r ~/apache_spark Node3:~/

scp -r ~/apache_spark Node4:~/

Start and stop Spark

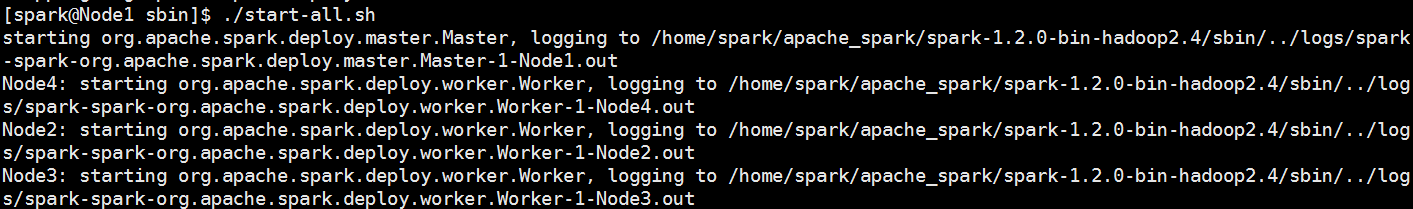

Start Spark

/home/spark/apache_spark/spark-1.2.0-bin-hadoop2.4/sbin/start-all.sh

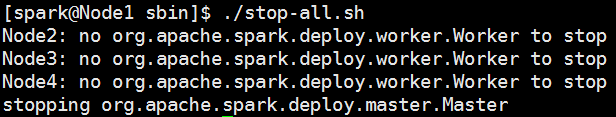
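After start-all.sh returns, one way to confirm the daemons are up is jps, which ships with the JDK and lists running Java processes as "pid name". The standalone master appears as Master and each worker as Worker. The filter below is a small sketch; spark_daemons is a hypothetical name, and it only reads jps-style text from standard input.

```shell
# Hypothetical filter: read "pid name" lines (jps output) on stdin and
# print only the Spark standalone daemons.
spark_daemons() {
  awk '$2 == "Master" || $2 == "Worker" { print $2 }'
}
```

On Node1, jps | spark_daemons should print Master; on a worker node the same pipeline should print Worker. Empty output suggests the daemon did not start, in which case the files under the Spark logs directory are the next place to look.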

Stop Spark

/home/spark/apache_spark/spark-1.2.0-bin-hadoop2.4/sbin/stop-all.sh

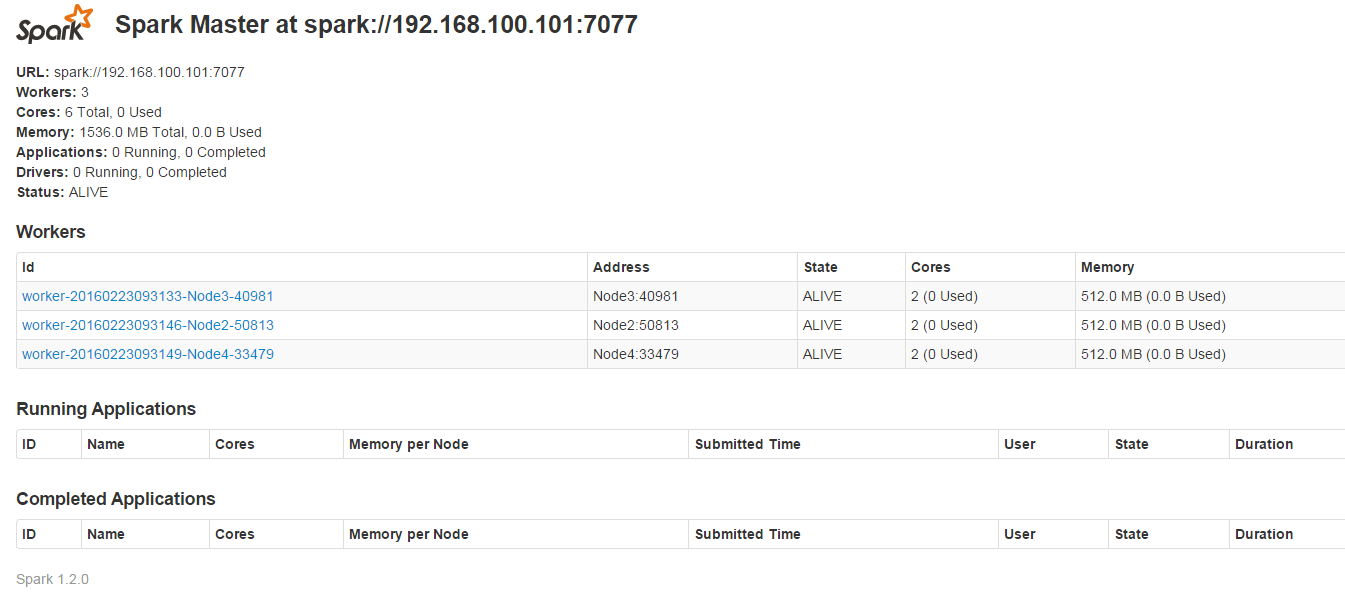

View Spark cluster information
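A standalone master serves a status web page, by default on port 8080, and accepts application connections on port 7077, the port used in the spark-env.sh settings earlier. The helpers below are a sketch that only builds those two URLs; master_ui_url and master_url are hypothetical names, and the ports are Spark's defaults, so adjust them if the cluster was configured differently.

```shell
# Hypothetical helpers that build the two URLs of a standalone master.

# Web UI address; 8080 is the default master web UI port.
master_ui_url() {
  echo "http://$1:${2:-8080}"
}

# Cluster address for applications; 7077 is the default master port.
master_url() {
  echo "spark://$1:${2:-7077}"
}
```

For this cluster, opening the address printed by master_ui_url 192.168.100.101 in a browser shows the registered workers, and "$SPARK_HOME/bin/spark-shell" --master "$(master_url 192.168.100.101)" starts an interactive shell against the cluster.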

Miscellaneous

Set the time zone:

cp /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

ntpdate time.windows.com

hwclock --systohc
