OpenStack Havana, Savanna 0.3

1. Installation

# Install the Savanna RPM packages

rpm -Uvh openstack-savanna-0.3-2.el6.noarch.rpm python-savannaclient-0.3-1.el6.noarch.rpm python-django-savanna-0.3-1.el6.noarch.rpm

# Create the savanna service user with the keystone CLI

keystone user-create --name savanna --pass Passw0rd

keystone user-role-add --user savanna --role admin --tenant service

keystone user-role-add --user savanna --role _member_ --tenant service

# Configure Savanna and the dashboard

echo -e "SAVANNA_URL = 'http://$cloud_controller_ip:8386/v1.1'\nSAVANNA_USE_NEUTRON = True" >> /etc/openstack-dashboard/local_settings

sed -e "s@NC_IP@$network_controller_ip@g" -e "s@CC_IP@$cloud_controller_ip@g" -e "s@XCAT_IP@$xcat_controller_ip@g" <$path/savanna.conf >/etc/savanna/savanna.conf

sed -i "s/'dashboards': ('project', 'admin', 'settings',),/'dashboards': ('project', 'admin', 'settings','router','savanna'),/" /usr/share/openstack-dashboard/openstack_dashboard/settings.py

sed -i "s/'openstack_dashboard',/'savannadashboard',\n    'openstack_dashboard',/" /usr/share/openstack-dashboard/openstack_dashboard/settings.py

service openstack-savanna-api start

chkconfig openstack-savanna-api on

service httpd restart

Then log in to the dashboard: an extra Savanna tab should appear, which indicates that Savanna was installed successfully.
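Rerunning the configuration commands above appends the SAVANNA_URL and SAVANNA_USE_NEUTRON lines to local_settings a second time, and the sed template step silently writes empty addresses into savanna.conf if one of the *_controller_ip variables is unset. A minimal bash sketch that guards against both, assuming the same variable names and NC_IP / CC_IP / XCAT_IP placeholders as above; append_once and render_conf are hypothetical helper names, not part of Savanna or openstack-utils:

```shell
# Hypothetical helpers (bash); not part of Savanna or openstack-utils.

# append_once FILE LINE: append LINE to FILE unless FILE already has that exact
# line, so rerunning the setup does not duplicate settings.
append_once() {
    grep -qxF -- "$2" "$1" 2>/dev/null || printf '%s\n' "$2" >> "$1"
}

# render_conf TEMPLATE OUTPUT: same sed substitution as in the setup commands,
# but refuse to write OUTPUT when a controller IP variable is empty or unset.
render_conf() {
    local template=$1 output=$2 v
    for v in network_controller_ip cloud_controller_ip xcat_controller_ip; do
        if [ -z "${!v:-}" ]; then
            echo "render_conf: \$$v is not set" >&2
            return 1
        fi
    done
    sed -e "s@NC_IP@$network_controller_ip@g" \
        -e "s@CC_IP@$cloud_controller_ip@g" \
        -e "s@XCAT_IP@$xcat_controller_ip@g" \
        "$template" > "$output"
}

# Usage on the cloud controller:
#   append_once /etc/openstack-dashboard/local_settings "SAVANNA_URL = 'http://$cloud_controller_ip:8386/v1.1'"
#   append_once /etc/openstack-dashboard/local_settings "SAVANNA_USE_NEUTRON = True"
#   render_conf "$path/savanna.conf" /etc/savanna/savanna.conf
```

Checking the variables before running sed, rather than inspecting the output afterwards, keeps a half-rendered savanna.conf from ever replacing a working one.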

2. Configure the metadata service

On the cloud controller (CC):

openstack-config --set /etc/nova/nova.conf DEFAULT enabled_apis osapi_compute,metadata

openstack-config --set /etc/nova/nova.conf DEFAULT service_neutron_metadata_proxy true

openstack-config --set /etc/nova/nova.conf DEFAULT neutron_metadata_proxy_shared_secret foo

for service in api objectstore scheduler novncproxy cert console consoleauth conductor; do service openstack-nova-$service restart; done

On the network controller (NC):

openstack-config --set /etc/neutron/dhcp_agent.ini DEFAULT use_namespaces True

openstack-config --set /etc/neutron/dhcp_agent.ini DEFAULT enable_isolated_metadata True

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT nova_metadata_ip $cloud_controller_ip

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT nova_metadata_port 8775

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT metadata_proxy_shared_secret foo

service neutron-metadata-agent start

chkconfig neutron-metadata-agent on

service neutron-dhcp-agent restart
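The metadata proxy only works if neutron_metadata_proxy_shared_secret in nova.conf on the CC matches metadata_proxy_shared_secret in the metadata agent's configuration on the NC (foo in the commands above). A bash sketch that compares the two, assuming copies of both files are available on one machine; ini_get and check_shared_secret are hypothetical helpers, and ini_get only understands simple "key = value" lines such as those openstack-config writes:

```shell
# Hypothetical helpers (bash) for comparing the metadata proxy shared secrets.

# ini_get FILE SECTION KEY: print the value of KEY in [SECTION] of a simple
# "key = value" INI file (no continuation lines, no quoting).
ini_get() {
    awk -F '=' -v section="[$2]" -v key="$3" '
        /^[ \t]*\[/ { in_section = ($0 == section); next }
        in_section {
            k = $1
            gsub(/[ \t]/, "", k)
            if (k == key) {
                v = substr($0, index($0, "=") + 1)
                sub(/^[ \t]+/, "", v)
                sub(/[ \t]+$/, "", v)
                print v
                exit
            }
        }' "$1"
}

# check_shared_secret NOVA_CONF METADATA_AGENT_CONF: succeed only if both files
# carry the same, non-empty shared secret in their [DEFAULT] section.
check_shared_secret() {
    local nova_secret agent_secret
    nova_secret=$(ini_get "$1" DEFAULT neutron_metadata_proxy_shared_secret)
    agent_secret=$(ini_get "$2" DEFAULT metadata_proxy_shared_secret)
    if [ -z "$nova_secret" ] || [ "$nova_secret" != "$agent_secret" ]; then
        echo "shared secret missing or mismatched" >&2
        return 1
    fi
    echo "shared secret matches"
}

# Usage, with copies of both files in the current directory (example names):
#   check_shared_secret ./nova.conf ./metadata_agent.ini
```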

3. Create the network

This environment does not run the l3-agent service, so no router can be created; the dhcp-agent service assigns IP addresses to the VMs.

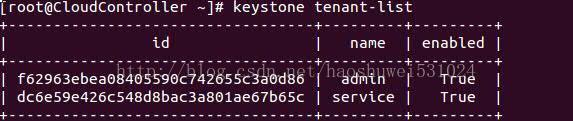

keystone tenant-list

neutron net-create --tenant-id f62963ebea08405590c742655c3a0d86 flatnet --provider:network_type flat --provider:physical_network physnet1

neutron subnet-create --tenant-id f62963ebea08405590c742655c3a0d86 flatnet 10.103.0.0/24 --no-gateway --allocation-pool start=10.103.0.20,end=10.103.0.30

Note: do not give the subnet a gateway; otherwise the VMs will not automatically add a route to 169.254.169.254.
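Copying the 32-character tenant ID by hand from the keystone tenant-list output is error-prone. A bash sketch that extracts it by tenant name, assuming the usual ASCII-table layout with id and name as the first two columns; tenant_id_from_table is a hypothetical helper, and admin in the usage comment is only an example tenant name:

```shell
# Hypothetical helper (bash): read `keystone tenant-list` output on stdin and
# print the id of the tenant whose name is $1. Assumes the ASCII-table layout
# "| id | name | enabled |".
tenant_id_from_table() {
    awk -F '|' -v name="$1" 'NF >= 4 {
        id = $2; n = $3
        gsub(/[ \t]/, "", id)
        gsub(/[ \t]/, "", n)
        if (n == name && id != "id") { print id; exit }
    }'
}

# Usage ("admin" is only an example tenant name):
#   tenant_id=$(keystone tenant-list | tenant_id_from_table admin)
#   neutron net-create --tenant-id "$tenant_id" flatnet \
#       --provider:network_type flat --provider:physical_network physnet1
```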

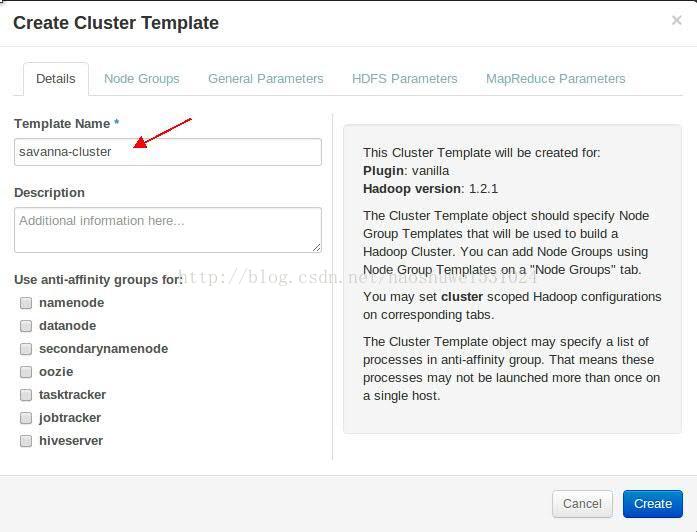
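The allocation pool bounds how many instances the flat network can address: 10.103.0.20 to 10.103.0.30 is 11 addresses, and in a typical Neutron setup the DHCP agent's own port also takes one of them, leaving about 10 for cluster nodes. A small bash sketch of that arithmetic; ip_to_int and pool_size are hypothetical helpers:

```shell
# ip_to_int A.B.C.D: print the IPv4 address as a 32-bit integer.
ip_to_int() {
    local IFS=.
    set -- $1   # split the dotted quad into $1..$4
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# pool_size START END: number of addresses in an inclusive allocation pool.
pool_size() {
    echo $(( $(ip_to_int "$2") - $(ip_to_int "$1") + 1 ))
}

# Usage:
#   pool_size 10.103.0.20 10.103.0.30   # prints 11
```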

4. Create a cluster with the Savanna UI

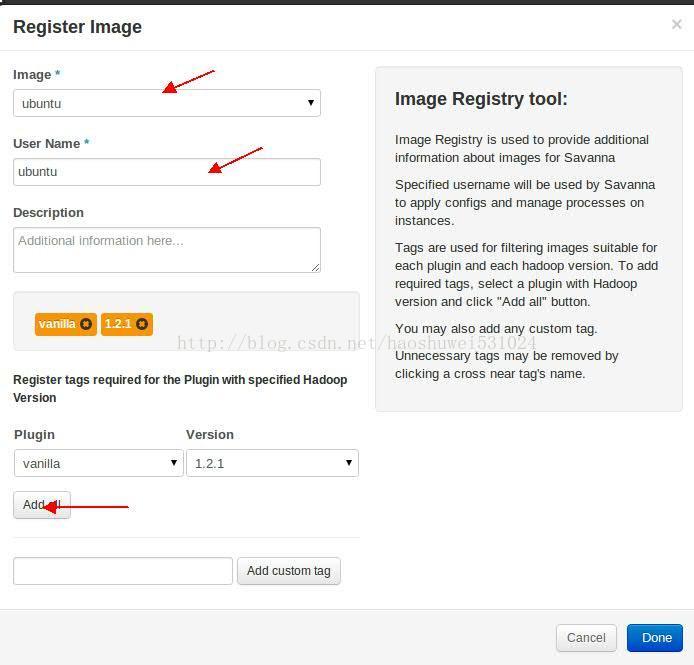

(1) Register the image

First upload the image with the glance image-create command. You must use the prebuilt Ubuntu image Code-Server:/repo/soloman/swift/savanna-0.3-vanilla-1.2.1-ubuntu-13.04.qcow2.
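The upload step can be sketched in bash as follows, assuming the qcow2 file has been copied from Code-Server to the local machine and the OS_* credential variables for the glance client are exported. The default image name and the upload_savanna_image helper are hypothetical choices; --name, --disk-format, --container-format and --file are standard options of the Havana-era glance image-create command:

```shell
# Hypothetical helper (bash); assumes the image file is local and the OS_*
# credential variables for the glance client are exported.
upload_savanna_image() {
    local image_file=$1
    local image_name=${2:-savanna-0.3-vanilla-1.2.1-ubuntu-13.04}
    if [ ! -f "$image_file" ]; then
        echo "image file not found: $image_file" >&2
        return 1
    fi
    # qcow2 disk, no container wrapper; the local file is uploaded to Glance.
    glance image-create --name "$image_name" \
        --disk-format qcow2 --container-format bare \
        --file "$image_file"
}

# Usage:
#   upload_savanna_image ./savanna-0.3-vanilla-1.2.1-ubuntu-13.04.qcow2
```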

Click the Savanna tab, select Image Registry, and click Register Image in the upper-right corner of the page:

The following steps are required:

Select the image to register;

enter the username: ubuntu;

add the tags vanilla and 1.2.1.

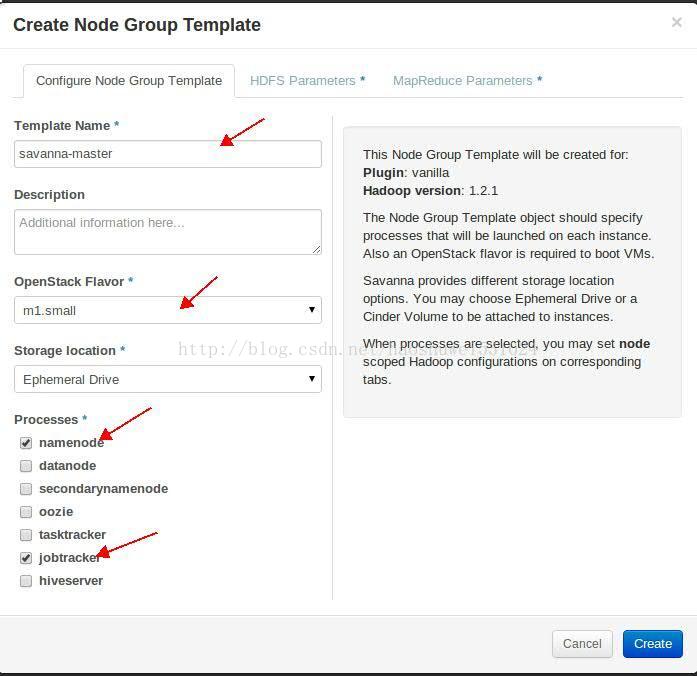

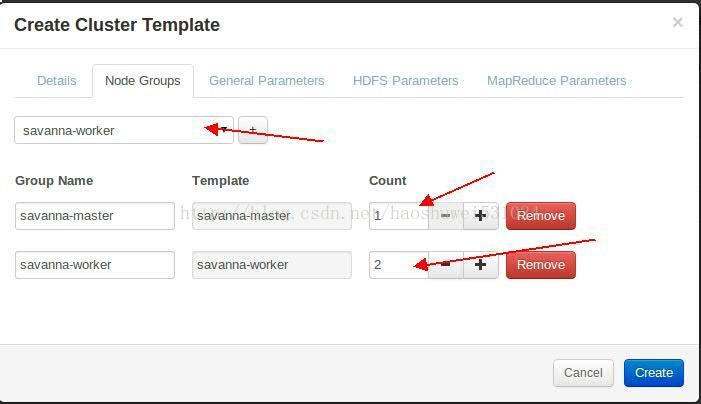

(2) Create Node Group Templates

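Click the Savanna tab, select Node Group Templates, and click Create Templates in the upper-right corner of the page.

A Hadoop cluster consists of a master and workers, so two kinds of templates must be created.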

a. master:

Notes:

The OpenStack flavor must be at least m1.small;

the master node must have namenode and jobtracker checked; to use Swift for EDP, also check oozie.

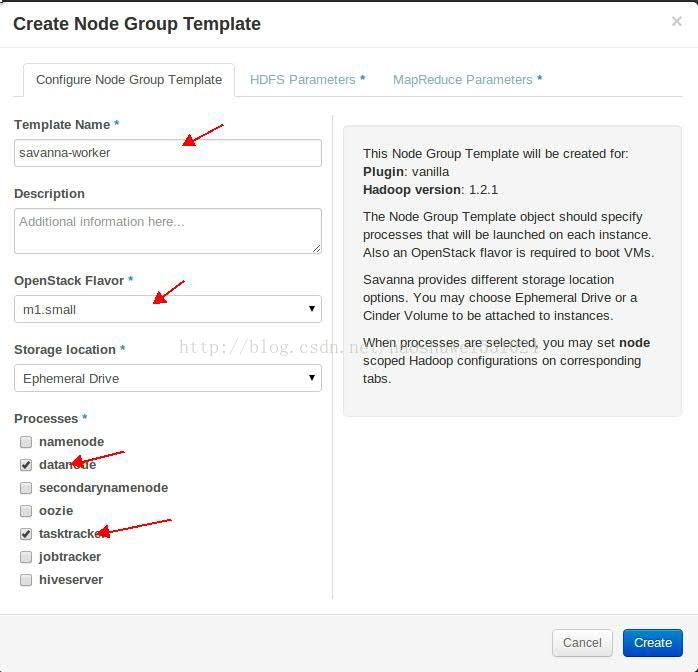

b. worker:

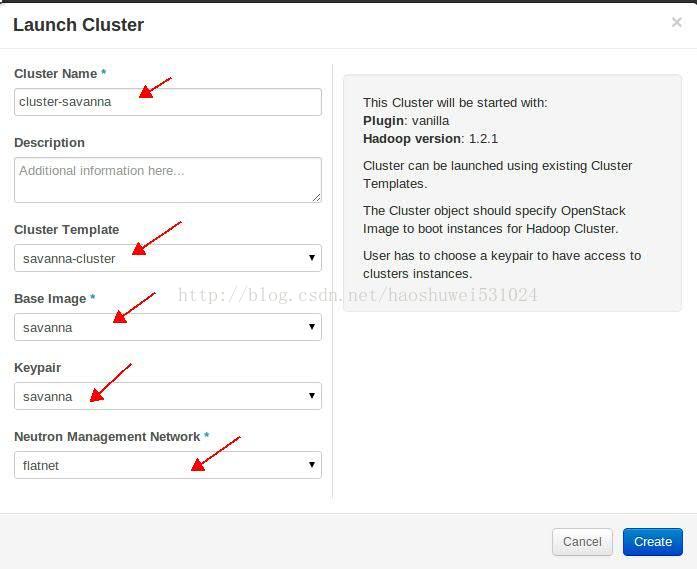

(4) Create the Cluster

Click the Savanna tab, select Cluster, and click Launch Cluster in the upper-right corner of the page:

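Enter a name for the cluster;

select the template created in step (3);

select the image registered in step (1);

select an existing keypair;

select the flat network created earlier;

click Create.

Things to note: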

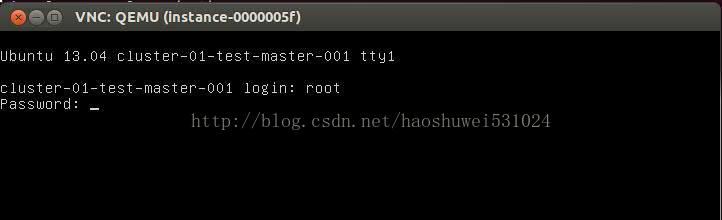

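Because neutron-dhcp-agent in this environment still has problems, restart it (service neutron-dhcp-agent restart) while the instances are being created so that they can obtain IP addresses. You can follow an instance's console log on its compute node.

For example:

tail -n 200 -f /var/lib/nova/instances/29df86f5-3268-4aef-94fb-c220428c0dc3/console.log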

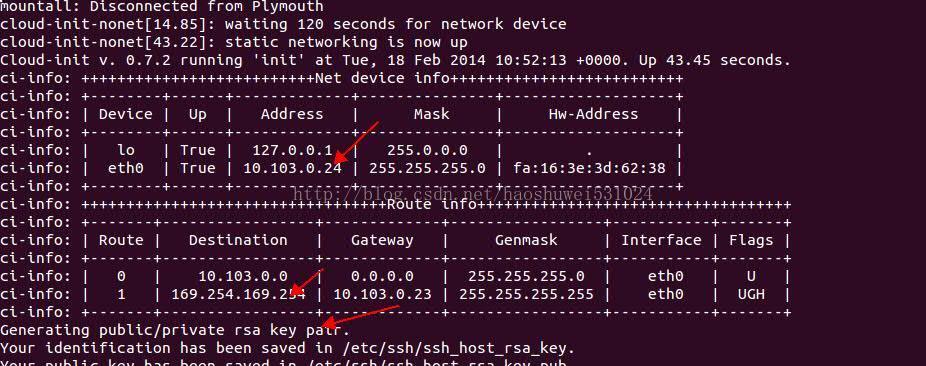

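If the log shows the following, the instance has obtained an IP address and cloud-init has run correctly:

the instance gets an IP address from DHCP;

the instance adds the route to the metadata service, 169.254.169.254;

cloud-init starts generating the SSH files for the ubuntu user.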
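Checking the three signs above can be scripted. A bash sketch, assuming it runs on the compute node (or against a copied console.log); the grep patterns (dhclient's "bound to <ip>", the literal 169.254.169.254 route, and ssh-keygen's "Generating public/private" message) are assumptions about what an Ubuntu 13.04 cloud image typically prints, not strings taken from this environment's log, so adjust them to what your console.log actually shows. check_console_log is a hypothetical helper:

```shell
# Hypothetical helper (bash). The patterns below are ASSUMED console messages;
# edit them to match your image's actual console.log output.
check_console_log() {
    local log=$1 missing=0
    local label pattern
    while IFS='|' read -r label pattern; do
        if grep -Eq "$pattern" "$log"; then
            echo "ok:      $label"
        else
            echo "missing: $label"
            missing=1
        fi
    done <<'EOF'
dhcp lease|bound to [0-9.]+
metadata route|169\.254\.169\.254
ssh key generation|Generating public/private
EOF
    return "$missing"
}

# Usage on the compute node:
#   check_console_log /var/lib/nova/instances/<instance-uuid>/console.log
```

The function returns non-zero if any sign is missing, so it can also be polled in a loop while the cluster is being created.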

Logging in to the VM:
