Installing ES & Kibana with Docker

Elasticsearch installation

Pull a specific version

docker pull elasticsearch:7.4.2

Create directories

Note: these paths must match the host paths mounted when the container is started.

mkdir -p /mydata/elasticsearch/data

mkdir -p /mydata/elasticsearch/config

mkdir -p /mydata/elasticsearch/plugins

Grant permissions

chmod 777 /mydata/elasticsearch/config/

chmod 777 /mydata/elasticsearch/data/

Start the container and mount the host directories

docker run -d --name elasticsearch -p 9200:9200 -p 9300:9300 -e "discovery.type=single-node" -e ES_JAVA_OPTS="-Xms128m -Xmx512m" -v /mydata/elasticsearch/data:/usr/share/elasticsearch/data -v /mydata/elasticsearch/plugins:/usr/share/elasticsearch/plugins elasticsearch:7.4.2

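Parameters:

-d: run the container in the background (detached)

--name: name of the Docker container

-p: map host ports to container ports (9200 is the HTTP/REST port, 9300 the node-to-node transport port)

-e: set environment variables; discovery.type=single-node starts ES as a single-node cluster

ES_JAVA_OPTS: JVM heap size (-Xms initial, -Xmx maximum)

-v: mount a host directory into the container (host path:container path)

The last argument is the image and tag pulled earlier.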

Error:

Caused by: java.nio.file.AccessDeniedException: /usr/share/elasticsearch/data/nodes

The host directory does not give the Elasticsearch process inside the container permission to write.

Fix:

chmod 777 /mydata/elasticsearch/config/

chmod 777 /mydata/elasticsearch/data/

Verify from inside the VM

curl http://localhost:9200

If the node started successfully, it responds with:

{

"name" : "1028c123ceae",

"cluster_name" : "docker-cluster",

"cluster_uuid" : "Wmkul_5uSI-erD8iF7hvow",

"version" : {

"number" : "7.4.2",

"build_flavor" : "default",

"build_type" : "docker",

"build_hash" : "2f90bbf7b93631e52bafb59b3b049cb44ec25e96",

"build_date" : "2019-10-28T20:40:44.881551Z",

"build_snapshot" : false,

"lucene_version" : "8.2.0",

"minimum_wire_compatibility_version" : "6.8.0",

"minimum_index_compatibility_version" : "6.0.0-beta1"

},

"tagline" : "You Know, for Search"

}
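You can also script this check. The following is a minimal sketch that uses only the Python standard library. It assumes ES is reachable at http://localhost:9200 without authentication, as in this Docker setup. parse_root_info and fetch_root_info are hypothetical helper names and are not part of any official client.

```python
import json
import urllib.request


def parse_root_info(body):
    """Pick the fields worth checking out of the JSON returned by GET /."""
    info = json.loads(body)
    return {
        "name": info["name"],
        "cluster_name": info["cluster_name"],
        "version": info["version"]["number"],
        "lucene_version": info["version"]["lucene_version"],
    }


def fetch_root_info(base_url="http://localhost:9200", timeout=5.0):
    """GET the root endpoint; raises urllib.error.URLError if ES is unreachable."""
    with urllib.request.urlopen(base_url, timeout=timeout) as resp:
        return parse_root_info(resp.read().decode("utf-8"))
```

For the image pulled above, fetch_root_info()["version"] should be "7.4.2". When you run the script from the host, use the VM's IP instead of localhost.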

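Verify from the host machine's browser

Open http://VM_IP:9200/ in a browser (replace VM_IP with your VM's IP address). It returns the same JSON.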

Kibana installation

Pull the image

docker pull kibana:7.4.2

Start Kibana and connect it to Elasticsearch (replace VM_IP with your VM's IP address):

docker run --name kibana -e ELASTICSEARCH_HOSTS=http://VM_IP:9200 -p 5601:5601 -d kibana:7.4.2

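Once Kibana has started, its UI is available at:

http://VM_IP:5601

The UI is in English. To switch it to Chinese, edit the configuration inside the Kibana container, because no config directory was mounted: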

1. Enter the container (replace CONTAINER_ID with the ID or name shown by docker ps)

docker exec -it CONTAINER_ID /bin/bash

2. Edit kibana.yml

vi /usr/share/kibana/config/kibana.yml

3. Append this as the last line (note the space after the colon):

i18n.locale: zh-CN

4. Save, then exit the Kibana container

exit

5. Restart the Kibana container

docker restart CONTAINER_ID

6. Open Kibana's Dev Tools page

Installing the IK analyzer

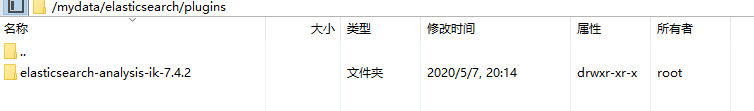

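If the plugins directory was mounted when the container was started (-v /mydata/elasticsearch/plugins:/usr/share/elasticsearch/plugins),

you can download the IK release that matches your ES version on Windows, unzip it, and upload the folder into that mounted directory on the VM. That completes the installation. The IK version must match the ES version exactly.

If you cannot connect to the VM for the upload, enable password login. You can then upload files with Xftp.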

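If no directory was mounted at startup:

1. Enter the ES container and go to its plugins directory

docker exec -it CONTAINER_ID /bin/bash

cd /usr/share/elasticsearch/plugins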

2. Download the package

wget https://github.com/medcl/elasticsearch-analysis-ik/releases/download/v7.4.2/elasticsearch-analysis-ik-7.4.2.zip

Error: wget: command not found

Fix: yum -y install wget

3. Unzip the archive

unzip elasticsearch-analysis-ik-7.4.2.zip

4. Delete the archive

rm -rf *.zip

5. Rename the extracted directory to ik (optional)

mv elasticsearch ik

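To confirm that the analyzer is installed, go to the ES bin directory and list the plugins:

cd /usr/share/elasticsearch/bin

./elasticsearch-plugin list

If the IK plugin's directory appears in the list, the installation succeeded.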

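Verify in Kibana. Restart the ES container first so that the plugin is loaded.

Create an index, then analyze the text 我是中国人 ("I am Chinese") without specifying an analyzer: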

PUT my_index

GET my_index/_analyze

{

"text": "我是中国人"

}

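Result. The token types CN_CHAR and CN_WORD come from IK, and this output is identical to the ik_max_word result below. A request with no analyzer normally falls back to the standard analyzer, which would return one token per Chinese character instead.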

{

"tokens" : [

{

"token" : "我",

"start_offset" : 0,

"end_offset" : 1,

"type" : "CN_CHAR",

"position" : 0

},

{

"token" : "是",

"start_offset" : 1,

"end_offset" : 2,

"type" : "CN_CHAR",

"position" : 1

},

{

"token" : "中国人",

"start_offset" : 2,

"end_offset" : 5,

"type" : "CN_WORD",

"position" : 2

},

{

"token" : "中国",

"start_offset" : 2,

"end_offset" : 4,

"type" : "CN_WORD",

"position" : 3

},

{

"token" : "国人",

"start_offset" : 3,

"end_offset" : 5,

"type" : "CN_WORD",

"position" : 4

}

]

}

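Specify ik_smart

ik_smart produces the coarsest-grained split. For example, 中华人民共和国人民大会堂 (the Great Hall of the People of the People's Republic of China) is split into 中华人民共和国 and 人民大会堂.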

GET my_index/_analyze

{

"analyzer": "ik_smart",

"text": "我是中国人"

}

Result

{

"tokens" : [

{

"token" : "我",

"start_offset" : 0,

"end_offset" : 1,

"type" : "CN_CHAR",

"position" : 0

},

{

"token" : "是",

"start_offset" : 1,

"end_offset" : 2,

"type" : "CN_CHAR",

"position" : 1

},

{

"token" : "中国人",

"start_offset" : 2,

"end_offset" : 5,

"type" : "CN_WORD",

"position" : 2

}

]

}

Specify ik_max_word

ik_max_word produces the finest-grained split. For example, 中华人民共和国人民大会堂 is split into 中华人民共和国, 中华人民, 中华, 华人, 人民共和国, 人民, 共和国, 大会堂, 大会, 会堂 and more.

GET my_index/_analyze

{

"analyzer": "ik_max_word",

"text": "我是中国人"

}

Result

{

"tokens" : [

{

"token" : "我",

"start_offset" : 0,

"end_offset" : 1,

"type" : "CN_CHAR",

"position" : 0

},

{

"token" : "是",

"start_offset" : 1,

"end_offset" : 2,

"type" : "CN_CHAR",

"position" : 1

},

{

"token" : "中国人",

"start_offset" : 2,

"end_offset" : 5,

"type" : "CN_WORD",

"position" : 2

},

{

"token" : "中国",

"start_offset" : 2,

"end_offset" : 4,

"type" : "CN_WORD",

"position" : 3

},

{

"token" : "国人",

"start_offset" : 3,

"end_offset" : 5,

"type" : "CN_WORD",

"position" : 4

}

]

}
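Here is a toy Python model that makes the difference between the two modes concrete. It is not IK's algorithm: IK ships its own large dictionaries and ambiguity rules. This sketch instead uses a tiny hypothetical DICTIONARY, forward maximum matching for the ik_smart-like mode, and "every dictionary word plus leftover characters" for the ik_max_word-like mode. Under these assumptions it reproduces both token lists shown above for 我是中国人.

```python
# Hypothetical mini dictionary; IK's real dictionaries are far larger.
DICTIONARY = {
    "中国人", "中国", "国人",
    "中华人民共和国", "中华人民", "中华", "华人",
    "人民共和国", "人民", "共和国", "人民大会堂", "大会堂", "大会", "会堂",
}
LONGEST = max(len(w) for w in DICTIONARY)


def smart(text):
    """ik_smart-like coarse split: forward maximum matching."""
    tokens, i = [], 0
    while i < len(text):
        # Try the longest candidate first; fall back to a single character.
        for end in range(min(len(text), i + LONGEST), i, -1):
            if text[i:end] in DICTIONARY or end == i + 1:
                tokens.append(text[i:end])
                i = end
                break
    return tokens


def max_word(text):
    """ik_max_word-like fine split: every dictionary word found in the text."""
    found = []  # (start offset, word)
    for start in range(len(text)):
        for end in range(min(len(text), start + LONGEST), start, -1):
            if text[start:end] in DICTIONARY:
                found.append((start, text[start:end]))
    covered = {i for start, w in found for i in range(start, start + len(w))}
    # Characters not covered by any dictionary word become single-char tokens.
    found += [(i, ch) for i, ch in enumerate(text) if i not in covered]
    found.sort(key=lambda t: (t[0], -len(t[1])))
    return [w for _, w in found]
```

With this model, smart("中华人民共和国人民大会堂") gives ['中华人民共和国', '人民大会堂'], and max_word("我是中国人") gives ['我', '是', '中国人', '中国', '国人'].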

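Nginx installation

Serving a remote dictionary

Why use a remote dictionary?

To filter out words that must not appear, and to add newly popular words.

Approach: serve the dictionary file from Nginx as a static resource. IK fetches it over HTTP, so newly added words take effect without restarting either service.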
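How does IK notice a changed file without a restart? According to the IK plugin's README, the remote dictionary URL must return one word per line. The plugin re-requests that URL periodically and reloads the words only when the Last-Modified or ETag response header has changed. Nginx sends both headers for static files, so a plain text file is enough. The sketch below imitates that check. head_validators, needs_reload and parse_words are hypothetical names, not IK code.

```python
import urllib.request


def head_validators(url, timeout=5.0):
    """HEAD the dictionary URL and return its (Last-Modified, ETag) headers."""
    request = urllib.request.Request(url, method="HEAD")
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return resp.headers.get("Last-Modified"), resp.headers.get("ETag")


def needs_reload(previous, current):
    """Reload when either validator differs from the previous poll."""
    prev_modified, prev_etag = previous
    cur_modified, cur_etag = current
    return prev_modified != cur_modified or prev_etag != cur_etag


def parse_words(body):
    """Dictionary format: one word per line; blank lines are skipped."""
    return [line.strip() for line in body.splitlines() if line.strip()]
```

A poller would call head_validators on the fenci.txt URL on a timer. When needs_reload returns true, it would download the file and run parse_words on the body.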

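1. Pull Nginx

docker pull nginx:1.10

2. Start Nginx

docker run -p 80:80 --name nginx -d nginx:1.10

This container is only temporary. It is started just to copy out its default configuration.

3. Copy the configuration

From /mydata, copy the files from the container into the current directory (note: a space, then a dot):

docker container cp nginx:/etc/nginx .

The syntax is docker container cp <container>:<path in container> <path on VM>. It copies files from the container to the VM, and . stands for the current directory.

The copied configuration now appears in the nginx directory: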

drwxr-xr-x. 2 root root 4096 3月 28 2017 conf.d

-rw-r--r--. 1 root root 1007 1月 31 2017 fastcgi_params

-rw-r--r--. 1 root root 2837 1月 31 2017 koi-utf

-rw-r--r--. 1 root root 2223 1月 31 2017 koi-win

-rw-r--r--. 1 root root 3957 1月 31 2017 mime.types

lrwxrwxrwx. 1 root root 22 1月 31 2017 modules -> /usr/lib/nginx/modules

-rw-r--r--. 1 root root 643 1月 31 2017 nginx.conf

-rw-r--r--. 1 root root 636 1月 31 2017 scgi_params

-rw-r--r--. 1 root root 664 1月 31 2017 uwsgi_params

-rw-r--r--. 1 root root 3610 1月 31 2017 win-utf

4. Stop and remove the temporary Nginx container

[root@daihao mydata]# docker stop 5d

5d

[root@daihao mydata]# docker rm 5d

5d

5. Rename the directory: move the copied files to /mydata/nginx/conf

[root@daihao mydata]# ls

elasticsearch nginx

[root@daihao mydata]# mv nginx conf

[root@daihao mydata]# mkdir nginx

[root@daihao mydata]# mv conf nginx/

[root@daihao mydata]# ls

elasticsearch nginx

[root@daihao mydata]# cd nginx/

[root@daihao nginx]# ls

conf

6. Run the container with the directories mounted

docker run -p 80:80 --name nginx -v /mydata/nginx/html:/usr/share/nginx/html -v /mydata/nginx/logs:/var/log/nginx -v /mydata/nginx/conf:/etc/nginx -d nginx:1.10

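7. Test

Create an index.html under /mydata/nginx/html:

vi index.html

Type in any HTML, then open http://VM_IP:80 in a browser.

Next, create the custom dictionary file fenci.txt under html/es. Type in the words that should be recognized, one per line, for example 乔碧罗: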

[root@daihao nginx]# cd html/

[root@daihao html]# ls

index.html

[root@daihao html]# mkdir es

[root@daihao html]# ls

es index.html

[root@daihao html]# cd es

[root@daihao es]# vi fenci.txt

Test: http://192.168.175.130/es/fenci.txt

Edit IKAnalyzer.cfg.xml

[root@daihao es]# cd /mydata/

[root@daihao mydata]# ls

elasticsearch nginx

[root@daihao mydata]# cd elasticsearch/plugins/elasticsearch-analysis-ik-7.4.2/

[root@daihao elasticsearch-analysis-ik-7.4.2]# ls

commons-codec-1.9.jar config httpclient-4.5.2.jar plugin-descriptor.properties

commons-logging-1.2.jar elasticsearch-analysis-ik-7.4.2.jar httpcore-4.4.4.jar plugin-security.policy

[root@daihao elasticsearch-analysis-ik-7.4.2]# cd config/

[root@daihao config]# ls

extra_main.dic extra_single_word_full.dic extra_stopword.dic main.dic quantifier.dic suffix.dic

extra_single_word.dic extra_single_word_low_freq.dic IKAnalyzer.cfg.xml preposition.dic stopword.dic surname.dic

[root@daihao config]# vi IKAnalyzer.cfg.xml

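Set the remote extension dictionary (the remote_ext_dict entry, which is commented out by default) to the URL of the file served by Nginx, for example http://192.168.175.130/es/fenci.txt.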
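The edit itself is not shown above. As a sketch, you can generate the resulting file from Python so that the URL is XML-escaped. This sketch makes several assumptions. It assumes the stock IK 7.4.2 layout: a Java properties XML file in which the remote_ext_dict entry ships commented out. It also assumes the fenci.txt URL tested earlier. build_ik_config, write_ik_config and read_entries are hypothetical helpers. Back up the original file before you overwrite it.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape

# Assumed shape of the stock IK config; the original file's comments are dropped.
TEMPLATE = """<?xml version="1.0" encoding="UTF-8"?>
<!DOCTYPE properties SYSTEM "http://java.sun.com/dtd/properties.dtd">
<properties>
    <comment>IK Analyzer extension config</comment>
    <entry key="ext_dict">{ext_dict}</entry>
    <entry key="ext_stopwords">{ext_stopwords}</entry>
    <entry key="remote_ext_dict">{remote_ext_dict}</entry>
</properties>
"""


def build_ik_config(remote_ext_dict, ext_dict="", ext_stopwords=""):
    """Return the file content with remote_ext_dict set (values XML-escaped)."""
    return TEMPLATE.format(remote_ext_dict=escape(remote_ext_dict),
                           ext_dict=escape(ext_dict),
                           ext_stopwords=escape(ext_stopwords))


def write_ik_config(path, remote_ext_dict):
    """Overwrite the config file at path (UTF-8)."""
    with open(path, "w", encoding="utf-8") as f:
        f.write(build_ik_config(remote_ext_dict))


def read_entries(xml_text):
    """Parse the content back into {key: value} to double-check it."""
    root = ET.fromstring(xml_text.encode("utf-8"))
    return {e.get("key"): e.text or "" for e in root.findall("entry")}
```

Example usage: write_ik_config("/mydata/elasticsearch/plugins/elasticsearch-analysis-ik-7.4.2/config/IKAnalyzer.cfg.xml", "http://192.168.175.130/es/fenci.txt"). Then restart the ES container.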

Restart the ES container

docker restart CONTAINER_ID

Test in Kibana

GET my_index/_analyze

{

"analyzer": "ik_max_word",

"text": "乔碧罗殿下"

}

Result

{

"tokens" : [

{

"token" : "乔碧罗",

"start_offset" : 0,

"end_offset" : 3,

"type" : "CN_WORD",

"position" : 0

},

{

"token" : "殿下",

"start_offset" : 3,

"end_offset" : 5,

"type" : "CN_WORD",

"position" : 1

}

]

}

Success.

For the Linux commands that may be needed during installation, see the author's Linux command notes.

Search

1. List the indices in ES

GET /_cat/indices?v

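Once Kibana is running, ES already contains an index named .kibana.

Meaning of the columns:

| Column | Meaning |
|---|---|
| health | green: all primary and replica shards are allocated; yellow: all primaries are allocated but some replicas are not (normal on a single node); red: at least one primary shard is not allocated |
| status | whether the index is open or closed |
| index | index name |
| uuid | unique ID of the index |
| pri | number of primary shards |
| rep | number of replicas |
| docs.count | number of documents |
| docs.deleted | number of deleted documents not yet purged |
| store.size | total storage used, replicas included |
| pri.store.size | storage used by the primary shards |

Related concepts:

| Term | Meaning |
|---|---|
| cluster | Elasticsearch always runs as a cluster. The cluster as a whole holds one complete set of data, with copies backing each other up. |
| node | one member of the cluster; usually one process is one node |
| shard | a slice of an index. Even on a single node, documents are spread across shards by hashing (on the document ID by default). In 7.x an index gets 1 primary shard by default (5 before 7.0). |
| index | comparable to a database in an RDBMS: logically one store for the user, physically split into shards that may live on several nodes |
| type | comparable to a table in an RDBMS, or rather to a class in object-oriented programming: a collection of JSON documents with the same format (deprecated in 7.x, see below) |
| Document (json) | comparable to a row in an RDBMS, or an object in object-oriented programming |
| field | a field or attribute |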

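2. Create an index

PUT /movie_index

3. Delete an index

Note: ES never modifies or deletes individual documents in place. An updated or deleted document is only marked as deleted, and it is physically removed later when segments are merged.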

DELETE /movie_index

4. Add a document

The original (typed) format:

PUT /index/type/id

That is: the index to store the document in / the type / the document ID.

Note

In early versions of Elasticsearch, every document was stored in an index and assigned a mapping type, which represented the type of entity being indexed. This caused problems, so from Elasticsearch 6.0.0 an index can contain only one mapping type. In 7.0.0 mapping types are deprecated, and in 8.0.0 they are removed completely.

As a result, specifying a type in 7.x produces a warning, not an error.

The warnings:

#! Deprecation: [types removal] Specifying types in document index requests is deprecated, use the typeless endpoints instead (/{index}/_doc/{id}, /{index}/_doc, or /{index}/_create/{id}).

#! Deprecation: [types removal] Specifying types in search requests is deprecated.

In other words, custom types are on their way out. Instead of a type in the path, use the fixed name _doc:

PUT /index/_doc/id

Example:

PUT /movie_index/_doc/1

{

"id": 1,

"name": "红海行动",

"doubanScore": 8.5,

"actorList": [{

"id": 1,

"name": "张译"

},

{

"id": 2,

"name": "海清"

},

{

"id": 3,

"name": "张涵予"

}

]

}

PUT /movie_index/_doc/2

{

"id": 2,

"name": "湄公河事件",

"doubanScore": 8.0,

"actorList": [{

"id": 3,

"name": "张涵予"

}]

}

PUT /movie_index/_doc/3

{

"id": 3,

"name": "红海事件",

"doubanScore": 5.0,

"actorList": [{

"id": 4,

"name": "代号"

}, {

"id": 5,

"name": "刘德华"

}]

}

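If the index does not exist yet, ES creates it automatically and infers the field mapping from the first document (dynamic mapping).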
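You can send the same requests from code. The following is a minimal sketch that uses only Python's standard library. It assumes ES listens on http://localhost:9200 without authentication; replace that with your VM's IP. put_document and send are hypothetical helpers that wrap the typeless PUT /{index}/_doc/{id} endpoint.

```python
import json
import urllib.request

ES_URL = "http://localhost:9200"  # assumption: replace with your VM's address


def put_document(index, doc_id, doc, base_url=ES_URL):
    """Build a PUT /{index}/_doc/{id} request carrying doc as UTF-8 JSON."""
    body = json.dumps(doc, ensure_ascii=False).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/{index}/_doc/{doc_id}",
        data=body,
        headers={"Content-Type": "application/json"},
        method="PUT",
    )


def send(request, timeout=5.0):
    """Execute the request and decode the JSON response."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

For example, send(put_document("movie_index", 2, {"id": 2, "name": "湄公河事件"})) returns the usual index response. Its result field is "created" the first time and "updated" once the ID already exists.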

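5. Get a document by ID

GET movie_index/_doc/1

6. Update: replace the whole document

The request is the same as when creating a document. The stored document is replaced entirely and its _version is incremented.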

PUT /movie_index/_doc/3

{

"id": 3,

"name": "红海事件",

"doubanScore": 5.0,

"actorList": [{

"id": 4,

"name": "代号"

}, {

"id": 5,

"name": "刘德华"

}]

}

7. Update: change specific fields

POST movie_index/_update/3

{

"doc": {

"doubanScore": 7.0

}

}
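Unlike the full replacement in step 6, _update merges the partial doc into the stored _source. Objects are merged key by key, while scalars and arrays are replaced. If nothing changes, ES reports "result": "noop" instead of writing a new version. Below is a simplified local model of that merge, assuming plain JSON-like dicts. merge_partial is a hypothetical name. ES additionally reindexes the whole merged document and bumps _version.

```python
import copy


def merge_partial(source, partial):
    """Return (merged document, changed?) for a partial-update 'doc'."""
    merged = copy.deepcopy(source)
    changed = False
    for key, value in partial.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            # Objects are merged recursively, key by key
            merged[key], sub_changed = merge_partial(merged[key], value)
            changed = changed or sub_changed
        elif key not in merged or merged[key] != value:
            # Scalars and arrays simply replace the old value
            merged[key] = copy.deepcopy(value)
            changed = True
    return merged, changed
```

Applying {"doubanScore": 7.0} to movie 3 changes only that field and leaves actorList untouched. Applying the same partial document a second time reports no change, which corresponds to ES's noop.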

8. Delete a document

DELETE movie_index/_doc/3

9. Search all documents

GET movie_index/_search

Result (the // comments are annotations, not part of the actual response):

{

"took" : 1, // time taken, in milliseconds

"timed_out" : false, // whether the request timed out

"_shards" : {

"total" : 1, // the request went to all 1 shard(s)

"successful" : 1,

"skipped" : 0,

"failed" : 0

},

"hits" : {

"total" : {

"value" : 3, // 3 documents matched

"relation" : "eq"

},

"max_score" : 1.0,

"hits" : [ // the results

{

"_index" : "movie_index",

"_type" : "_doc",

"_id" : "1",

"_score" : 1.0,

"_source" : {

"id" : 1,

"name" : "红海行动",

"doubanScore" : 8.5,

"actorList" : [

{

"id" : 1,

"name" : "张1译"

},

{

"id" : 2,

"name" : "海清"

},

{

"id" : 3,

"name" : "张涵予"

}

]

}

},

{

"_index" : "movie_index",

"_type" : "_doc",

"_id" : "2",

"_score" : 1.0,

"_source" : {

"id" : 2,

"name" : "湄公河事件",

"doubanScore" : 8.0,

"actorList" : [

{

"id" : 3,

"name" : "张涵予"

}

]

}

},

{

"_index" : "movie_index",

"_type" : "_doc",

"_id" : "3",

"_score" : 1.0,

"_source" : {

"id" : 3,

"name" : "红海事件",

"doubanScore" : 5.0,

"actorList" : [

{

"id" : 4,

"name" : "代号"

},

{

"id" : 5,

"name" : "刘德华"

}

]

}

}

]

}

}

10. Query with a condition (match all documents)

GET movie_index/_search

{

"query":{

"match_all": {}

}

}

11. Full-text match query (the query text is analyzed into terms)

GET movie_index/_search

{

"query":{

"match": {"name":"红"}

}

}

Note the relevance score (_score) of each hit.

response

{

"took" : 1,

"timed_out" : false,

"_shards" : {

"total" : 1,

"successful" : 1,

"skipped" : 0,

"failed" : 0

},

"hits" : {

"total" : {

"value" : 2,

"relation" : "eq"

},

"max_score" : 0.48527452,

"hits" : [

{

"_index" : "movie_index",

"_type" : "_doc",

"_id" : "1",

"_score" : 0.48527452,

"_source" : {

"id" : 1,

"name" : "红海行动",

"doubanScore" : 8.5,

"actorList" : [

{

"id" : 1,

"name" : "张1译"

},

{

"id" : 2,

"name" : "海清"

},

{

"id" : 3,

"name" : "张涵予"

}

]

}

},

{

"_index" : "movie_index",

"_type" : "_doc",

"_id" : "3",

"_score" : 0.48527452,

"_source" : {

"id" : 3,

"name" : "红海事件",

"doubanScore" : 5.0,

"actorList" : [

{

"id" : 4,

"name" : "代号"

},

{

"id" : 5,

"name" : "刘德华"

}

]

}

}

]

}

}
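Both hits get exactly the same score. ES 7 uses BM25 as its default similarity (k1 = 1.2, b = 0.75), and you can reproduce the number by hand under one assumption. The assumption is that no mapping was defined for movie_index, so name uses the standard analyzer, which indexes each Chinese character as a separate term. Then 红 occurs once each in 红海行动 and 红海事件 (4 terms each) and is absent from 湄公河事件 (5 terms). bm25 is a hypothetical helper written for this check.

```python
import math


def bm25(tf, doc_len, avg_doc_len, doc_count, doc_freq, k1=1.2, b=0.75):
    """BM25 score of one term in one document (Lucene 8 / ES 7 formula)."""
    idf = math.log(1 + (doc_count - doc_freq + 0.5) / (doc_freq + 0.5))
    length_norm = k1 * (1 - b + b * doc_len / avg_doc_len)
    return (k1 + 1) * idf * tf / (tf + length_norm)


# Assumption: standard analyzer, so each Chinese character is one term
lengths = {name: len(name) for name in ["红海行动", "湄公河事件", "红海事件"]}
avgdl = sum(lengths.values()) / len(lengths)           # 13 / 3
docs_with_term = sum("红" in name for name in lengths)  # 2 of the 3 documents

score = bm25(tf=1, doc_len=lengths["红海行动"], avg_doc_len=avgdl,
             doc_count=len(lengths), doc_freq=docs_with_term)
print(f"{score:.5f}")  # 0.48527
```

The result agrees with the _score of 0.48527452 up to ES's 32-bit float precision. Because the two matching titles have the same length, they score identically.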

12. Match query on a sub-property (actorList.name)

GET movie_index/_search

{

"query":{

"match": {"actorList.name":"张"}

}

}

13. Phrase query

GET movie_index/_search

{

"query":{

"match_phrase": {"name":"红海"}

}

}

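match_phrase still analyzes the query text, but a document matches only when all of the resulting terms appear next to each other and in the same order. So 红海 matches 红海行动 and 红海事件, but it would not match a name that merely contains 红 and 海 apart from each other.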
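You can model the difference between match and match_phrase with term positions. The sketch assumes that name is indexed one character per term (the standard analyzer's behaviour on Chinese) and that match uses its default operator, or. match and match_phrase here are hypothetical stand-ins for the two queries, run over the three sample titles. The query 海红 is a made-up example: it matches under match because both characters occur, but it does not match as a phrase.

```python
NAMES = {1: "红海行动", 2: "湄公河事件", 3: "红海事件"}


def terms(text):
    # Assumption: one term per character; the list index is the term position.
    return [ch for ch in text if not ch.isspace()]


def match(query, names=NAMES):
    """match with operator OR: any query term occurs in the field."""
    wanted = set(terms(query))
    return [doc_id for doc_id, name in names.items() if wanted & set(terms(name))]


def match_phrase(query, names=NAMES):
    """match_phrase: all query terms at consecutive positions, in order."""
    q = terms(query)
    hits = []
    for doc_id, name in names.items():
        t = terms(name)
        if any(t[i:i + len(q)] == q for i in range(len(t) - len(q) + 1)):
            hits.append(doc_id)
    return hits
```

With this model, match("海红") returns documents 1 and 3, while match_phrase("海红") returns nothing.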

14. Fuzzy query (tolerating misspelled terms)

GET movie_index/_search

{

"query":{

"fuzzy": {"name":"rad"}

}

}

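A fuzzy query tolerates typos. When a term does not match exactly, ES also matches terms within a small edit distance. The default fuzziness, AUTO, allows no edits for terms of 1 to 2 characters, one edit for 3 to 5, and two edits for longer terms. This makes the query more expensive.

The test with Chinese text did not work. The likely reason is that fuzzy is a term-level query: the query text is not analyzed, while the standard analyzer indexed every Chinese character as a separate term. After a new index was created with a document whose name was read, the query for rad found it.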
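You can sketch the matching rule behind fuzziness AUTO with an edit distance. The sketch uses the optimal string alignment variant, which counts inserts, deletes, substitutions, and swaps of two adjacent characters, because fuzzy allows transpositions by default. It uses the AUTO thresholds: 0 edits for 1 to 2 characters, 1 for 3 to 5, and 2 beyond that. edit_distance, auto_fuzziness and fuzzy_matches are hypothetical names. ES also applies prefix_length and max_expansions, which this sketch ignores.

```python
def edit_distance(a, b):
    """Optimal string alignment distance between a and b."""
    d = [[0] * (len(b) + 1) for _ in range(len(a) + 1)]
    for i in range(len(a) + 1):
        d[i][0] = i
    for j in range(len(b) + 1):
        d[0][j] = j
    for i in range(1, len(a) + 1):
        for j in range(1, len(b) + 1):
            cost = 0 if a[i - 1] == b[j - 1] else 1
            d[i][j] = min(d[i - 1][j] + 1,         # deletion
                          d[i][j - 1] + 1,         # insertion
                          d[i - 1][j - 1] + cost)  # substitution
            if i > 1 and j > 1 and a[i - 1] == b[j - 2] and a[i - 2] == b[j - 1]:
                d[i][j] = min(d[i][j], d[i - 2][j - 2] + 1)  # transposition
    return d[len(a)][len(b)]


def auto_fuzziness(term):
    """fuzziness AUTO: 0 edits for 1-2 chars, 1 for 3-5, 2 for longer terms."""
    n = len(term)
    return 0 if n <= 2 else 1 if n <= 5 else 2


def fuzzy_matches(query, indexed_terms):
    """Indexed terms within the allowed edit distance of the (unanalyzed) query."""
    allowed = auto_fuzziness(query)
    return [t for t in indexed_terms if edit_distance(query, t) <= allowed]
```

In this model rad is one edit away from read, so it matches. 红海, on the other hand, is looked up as a single two-character term with zero allowed edits, so it finds nothing among the single-character terms 红 and 海.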

15. Filtering: post_filter (applied after the query)

GET movie_index/_search

{

"query":{

"match": {"name":"红"}

},

"post_filter":{

"term": {

"actorList.id": 1

}

}

}

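16. Filtering: bool filter (applied within the query)

(Recommended. Clauses in filter context do not affect scoring and can be cached. post_filter, by contrast, runs only after the query and does not restrict aggregations.)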

GET movie_index/_search

{

"query":{

"bool":{

"filter":[ {"term": { "actorList.id": "1" }},

{"term": { "actorList.id": "3" }}

],

"must":{"match":{"name":"红"}}

}

}

}

17. Filtering: range filter

GET movie_index/_search

{

"query": {

"bool": {

"filter": {

"range": {

"doubanScore": {"gte": 8}

}

}

}

}

}

Range operators:

| Operator | Meaning |
|---|---|
| gt | greater than |
| lt | less than |
| gte | greater than or equal to |
| lte | less than or equal to |

18. Sorting

GET movie_index/_search

{

"query":{

"match": {"name":"红海"}

},

"sort": [

{

"doubanScore": {

"order": "desc"

}

}

]

}

19. Pagination

GET movie_index/_search

{

"query": { "match_all": {} },

"from": 1,

"size": 1

}

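from is the zero-based offset of the first hit, and size is the number of hits to return. So "from": 1, "size": 1 returns only the second hit.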
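Converting a page number into from and size is simple arithmetic. The sketch below assumes 1-based page numbers, and page_params is a hypothetical helper. The check reflects the default index.max_result_window of 10000: from + size may not exceed it, and deeper paging needs search_after or the scroll API instead.

```python
MAX_RESULT_WINDOW = 10_000  # default index.max_result_window


def page_params(page, page_size):
    """Translate a 1-based page number into the from/size request fields."""
    if page < 1 or page_size < 1:
        raise ValueError("page and page_size must be positive")
    params = {"from": (page - 1) * page_size, "size": page_size}
    if params["from"] + page_size > MAX_RESULT_WINDOW:
        raise ValueError("from + size exceeds index.max_result_window")
    return params
```

For example, page_params(2, 1) gives {"from": 1, "size": 1}, which is the request above.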

20. Return only selected fields (_source filtering)

GET movie_index/_search

{

"query": { "match_all": {} },

"_source": ["name", "doubanScore"]

}

21. Highlighting

GET movie_index/_search

{

"query":{

"match": {"name":"红海"}

},

"highlight": {

"fields": {"name":{} }

}

}

The result contains the following. Each matched term is wrapped in its own <em> tags, and with the default analyzer 红 and 海 are separate terms:

"highlight" : {

"name" : [

"<em>红</em><em>海</em>行动"

]

}

22. Compound query

Filter first, then query:

GET movie_index/_search

{

"query": {

"bool": {

"filter": [{

"term": {

"actorList.id": "1"

}

}, {

"term": {

"actorList.id": "3"

}

}],

"must": [{

"match": {

"name": "红海"

}

}]

}

}

}

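Or, with a single terms clause. This is not strictly equivalent: terms matches documents that contain any of the listed values (OR), whereas the two term clauses above must both match (AND). With this sample data, both versions return only 红海行动.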

GET movie_index/_search

{

"query": {

"bool": {

"filter": [{

"terms": {

"actorList.id": [1, 3]

}

}],

"must": [{

"match": {

"name": "红海"

}

}]

}

}

}
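To see why the two versions differ, here is a small in-memory model of bool filter clauses over the three sample movies, keeping only the fields needed here. It makes two assumptions that mirror ES behaviour. First, actorList.id is flattened into one multi-valued field, as ES does for a plain (non-nested) array of objects. Second, string values such as "1" are coerced to numbers for a numeric field. values, clause_matches and bool_filter are hypothetical names.

```python
MOVIES = [
    {"id": 1, "doubanScore": 8.5, "actorList": [{"id": 1}, {"id": 2}, {"id": 3}]},
    {"id": 2, "doubanScore": 8.0, "actorList": [{"id": 3}]},
    {"id": 3, "doubanScore": 5.0, "actorList": [{"id": 4}, {"id": 5}]},
]

OPS = {"gt": lambda v, x: v > x, "gte": lambda v, x: v >= x,
       "lt": lambda v, x: v < x, "lte": lambda v, x: v <= x}


def values(doc, path):
    """All values at a dotted path; arrays of objects are flattened."""
    current = [doc]
    for key in path.split("."):
        nxt = []
        for item in current:
            v = item.get(key) if isinstance(item, dict) else None
            if isinstance(v, list):
                nxt.extend(v)
            elif v is not None:
                nxt.append(v)
        current = nxt
    return current


def clause_matches(doc, clause):
    """Evaluate one term / terms / range clause (numeric fields only)."""
    [(kind, spec)] = clause.items()
    [(field, arg)] = spec.items()
    vals = [float(v) for v in values(doc, field)]
    if kind == "term":
        return float(arg) in vals
    if kind == "terms":
        return any(float(a) in vals for a in arg)  # OR across listed values
    if kind == "range":
        return any(all(OPS[op](v, float(x)) for op, x in arg.items()) for v in vals)
    raise ValueError(f"unsupported clause: {kind}")


def bool_filter(docs, clauses):
    """Every clause in a bool filter list must match (AND); no scoring."""
    return [d["id"] for d in docs if all(clause_matches(d, c) for c in clauses)]
```

In this model the two term clauses keep only movie 1, whereas terms [1, 3] keeps movies 1 and 2. Movie 2 is then removed by the must clause on 红海, which is why both queries happen to return the same hit here.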

23. Aggregations

To aggregate on a text field, enable fielddata in its mapping:

PUT atguigu/_mapping

{

"properties": {

"interests": {

"type": "text",

"fielddata": true

}

}

}

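fielddata: true lets a text field be used for aggregations and sorting, but it loads the field's terms into heap memory. A keyword field, such as the gender.keyword sub-field used below, is usually the better choice.

Advanced search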

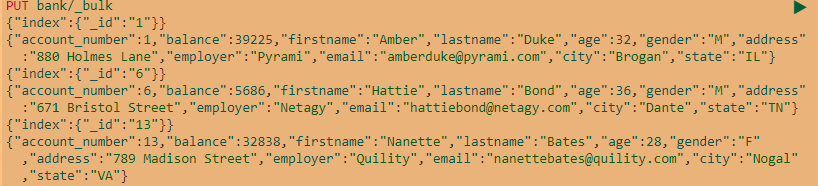

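1. Batch operations with the Bulk API

Format:

{action:{metadata}}\n

{requestbody}\n

action:

create: create the document only if it does not exist yet

update: partially update an existing document

index: create a new document or replace an existing one

delete: delete a document (this action has no request-body line)

metadata: _index, _type, _id (_type is deprecated in 7.x)

Difference between create and index:

If the document already exists, create fails with an error saying that the document already exists, while index succeeds and overwrites it.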
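A bulk body is newline-delimited JSON. Each action has one action/metadata line, followed by a source line for index, create and update, and no source line for delete. The whole body must end with a newline. Below is a minimal Python sketch that builds such a body. bulk_body is a hypothetical helper, and the typeless metadata (no _type) assumes ES 7.x.

```python
import json


def bulk_body(actions):
    """Build an NDJSON _bulk body from (action, metadata, source) triples.

    source is ignored for delete, which has no request-body line; for
    update it must be the partial-update wrapper, e.g. {"doc": {...}}.
    """
    lines = []
    for action, metadata, source in actions:
        lines.append(json.dumps({action: metadata}, ensure_ascii=False))
        if action != "delete":
            lines.append(json.dumps(source, ensure_ascii=False))
    return "\n".join(lines) + "\n"  # the body must end with a newline
```

Send the result with POST /_bulk and the header Content-Type: application/x-ndjson. Each item in the response reports its own status, so one failing action does not abort the others.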

2. Aggregation queries

Format:

NAME: the name you give the aggregation

AGG_TYPE: the aggregation type

"aggs": {

"NAME": {

"AGG_TYPE": {}

}

}

request

# Age distribution of everyone whose address contains mill

GET bank/_search

{

"query": {

"match": {

"address": "mill"

}

},

"aggs": {

"ageAgg": {

"terms": {

"field": "age",

"size": 10

}

}

}

}

response

Two people are 38, one is 28, and one is 32:

{

"took" : 54,

"timed_out" : false,

"_shards" : {

"total" : 1,

"successful" : 1,

"skipped" : 0,

"failed" : 0

},

"hits" : {

...

},

"aggregations" : {

"ageAgg" : {

"doc_count_error_upper_bound" : 0,

"sum_other_doc_count" : 0,

"buckets" : [

{

"key" : 38,

"doc_count" : 2

},

{

"key" : 28,

"doc_count" : 1

},

{

"key" : 32,

"doc_count" : 1

}

]

}

}

}

In addition to the age distribution, compute the average age and the average balance:

request

GET bank/_search

{

"query": {

"match": {

"address": "mill"

}

},

"aggs": {

"ageAgg": {

"terms": {

"field": "age",

"size": 10

}

},

"ageVag":{

"avg": {

"field": "age"

}

},

"balanceAvg":{

"avg": {

"field": "balance"

}

}

}

}

response

"aggregations" : {

"ageAgg" : {

"doc_count_error_upper_bound" : 0,

"sum_other_doc_count" : 0,

"buckets" : [

{

"key" : 38,

"doc_count" : 2

},

{

"key" : 28,

"doc_count" : 1

},

{

"key" : 32,

"doc_count" : 1

}

]

},

"balanceAvg" : {

"value" : 25208.0

},

"ageVag" : {

"value" : 34.0

}

}
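You can mirror what the terms and avg aggregations compute in a few lines of Python. The four matching documents have ages 38, 38, 28 and 32, reconstructed from the bucket counts above, where sum_other_doc_count is 0. The buckets are ordered by doc_count descending with ties broken by ascending key, which is consistent with 28 appearing before 32 above. terms_agg and avg_agg are hypothetical names.

```python
from collections import Counter


def terms_agg(values, size=10):
    """Buckets like a terms aggregation: doc_count desc, ties by key asc."""
    counts = Counter(values)
    ordered = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return [{"key": k, "doc_count": c} for k, c in ordered[:size]]


def avg_agg(values):
    """Like the avg aggregation: value is None when there are no values."""
    return {"value": sum(values) / len(values) if values else None}


ages = [38, 38, 28, 32]  # reconstructed from the buckets above
print(terms_agg(ages))   # [{'key': 38, 'doc_count': 2}, {'key': 28, 'doc_count': 1}, {'key': 32, 'doc_count': 1}]
print(avg_agg(ages))     # {'value': 34.0}
```

The average, 34.0, matches ageVag in the response.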

3. Sub-aggregations

request

# Group by age, and get the average balance of the people in each age group

GET bank/_search

{

"query": {

"match_all": {}

},

"aggs": {

"ageAgg": {

"terms": {

"field": "age",

"size": 100

},

"aggs": {

"ageVag": {

"avg": {

"field": "balance"

}

}

}

}

},

"size":0

}

The inner aggs runs another aggregation inside each bucket of the outer one.

("size": 0 returns no hits, only the aggregation results.)

response

61 people are 31 years old, and their average balance is 28312.918032786885. Only the first bucket is shown:

{

"took" : 5,

"timed_out" : false,

"_shards" : {

"total" : 1,

"successful" : 1,

"skipped" : 0,

"failed" : 0

},

"hits" : {

"total" : {

"value" : 1000,

"relation" : "eq"

},

"max_score" : null,

"hits" : [ ]

},

"aggregations" : {

"ageAgg" : {

"doc_count_error_upper_bound" : 0,

"sum_other_doc_count" : 0,

"buckets" : [

{

"key" : 31,

"doc_count" : 61,

"ageVag" : {

"value" : 28312.918032786885

}

},

...

]

}

}

}

request

# Age distribution, plus for each age group the average balance of M, of F, and of the whole group

GET bank/_search

{

"query": {

"match_all": {}

},

"aggs": {

"ageAgg": {

"terms": {

"field": "age",

"size": 100

},

"aggs": {

"genderAvg": {

"terms": {

"field": "gender.keyword"

},

"aggs": {

"balanceAvg": {

"avg": {

"field": "balance"

}

}

}

},

"ageBalanAvg": {

"avg": {

"field": "balance"

}

}

}

}

},

"size": 0

}

response

{

"aggregations" : {

"ageAgg" : {

"doc_count_error_upper_bound" : 0,

"sum_other_doc_count" : 0,

"buckets" : [

{

"key" : 31,

"doc_count" : 61,

"ageBalanAvg" : {

"value" : 28312.918032786885

},

"genderAvg" : {

"doc_count_error_upper_bound" : 0,

"sum_other_doc_count" : 0,

"buckets" : [

{

"key" : "M",

"doc_count" : 35,

"balanceAvg" : {

"value" : 29565.628571428573

}

},

{

"key" : "F",

"doc_count" : 26,

"balanceAvg" : {

"value" : 26626.576923076922

}

}

]

}

},

...

]

}

}

}
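Nested aggregations work like nested group-bys. Below is a local sketch of the same bucketing. It assumes in-memory account dicts with age, gender and balance keys, and age_gender_balance is a hypothetical name. Buckets are ordered by doc_count descending, then by key. The sketch also explains a consistency check on the output above: each age bucket's overall average is the doc-count-weighted mean of its per-gender averages, (35 × 29565.628571428573 + 26 × 26626.576923076922) / 61 ≈ 28312.918032786885.

```python
from collections import defaultdict


def age_gender_balance(accounts):
    """ageAgg -> genderAvg -> balanceAvg, plus ageBalanAvg per age bucket."""
    by_age = defaultdict(list)
    for acc in accounts:
        by_age[acc["age"]].append(acc)
    result = []
    for age, group in sorted(by_age.items(), key=lambda kv: (-len(kv[1]), kv[0])):
        by_gender = defaultdict(list)
        for acc in group:
            by_gender[acc["gender"]].append(acc["balance"])
        genders = sorted(by_gender.items(), key=lambda kv: (-len(kv[1]), kv[0]))
        result.append({
            "key": age,
            "doc_count": len(group),
            "ageBalanAvg": sum(a["balance"] for a in group) / len(group),
            "genderAvg": [
                {"key": g, "doc_count": len(b), "balanceAvg": sum(b) / len(b)}
                for g, b in genders
            ],
        })
    return result
```

Each inner list corresponds to the genderAvg buckets of one age bucket, and ageBalanAvg is computed over the whole age group rather than averaged from the gender buckets.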
