Upload the archive: put C:\Users\19668\Desktop\2\kafka_2.11-0.11.0.2.tgz

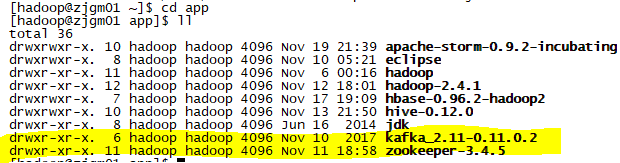

Extract it into the app directory: tar -zxvf kafka_2.11-0.11.0.2.tgz -C app/

cd app/kafka_2.11-0.11.0.2/config/

[hadoop@zjgm01 config]$ vi server.properties

Edit server.properties:

broker.id=0

zookeeper.connect=zjgm01:2181,zjgm02:2181,zjgm03:2181
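broker.id has to differ on every broker, while zookeeper.connect stays the same across the cluster. A rough sketch of how the two settings above would look per node, assuming ids 0, 1 and 2 are handed out in host order (the post itself only shows broker.id=0, and the class name is hypothetical):

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Properties;

// Illustrative sketch only: models the two server.properties settings edited above.
// The ids for zjgm02 and zjgm03 are assumptions; the post only shows broker.id=0.
class BrokerConfigSketch {

    static final String ZK = "zjgm01:2181,zjgm02:2181,zjgm03:2181";

    // Builds the settings for one broker: its own id plus the shared ZooKeeper quorum.
    static Properties forBroker(int brokerId) {
        Properties p = new Properties();
        p.setProperty("broker.id", Integer.toString(brokerId));
        p.setProperty("zookeeper.connect", ZK);
        return p;
    }

    // Assigns ids 0, 1, 2, ... to the hosts in the order given.
    static Map<String, Properties> forCluster(String... hosts) {
        Map<String, Properties> byHost = new LinkedHashMap<>();
        for (int i = 0; i < hosts.length; i++) {
            byHost.put(hosts[i], forBroker(i));
        }
        return byHost;
    }

    public static void main(String[] args) {
        forCluster("zjgm01", "zjgm02", "zjgm03").forEach((host, props) ->
                System.out.println(host + " -> broker.id=" + props.getProperty("broker.id")));
    }
}
```

Running it lists one id per host, which is the value to put into that host's config/server.properties.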

Change to the app directory (/home/hadoop/app) and copy kafka_2.11-0.11.0.2 to the other two nodes:

scp -r kafka_2.11-0.11.0.2 zjgm02:/home/hadoop/app/

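scp -r kafka_2.11-0.11.0.2 zjgm03:/home/hadoop/app/

On zjgm02 and zjgm03, change broker.id in config/server.properties to a value unique to each node (for example 1 and 2); brokers that share an id cannot run in the same cluster.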

[hadoop@zjgm01 kafka_2.11-0.11.0.2]$ pwd

/home/hadoop/app/kafka_2.11-0.11.0.2

Start the broker (run this on each node):

[hadoop@zjgm01 kafka_2.11-0.11.0.2]$ bin/kafka-server-start.sh config/server.properties

Run jps on zjgm01 and zjgm02 to check that a Kafka process is listed:

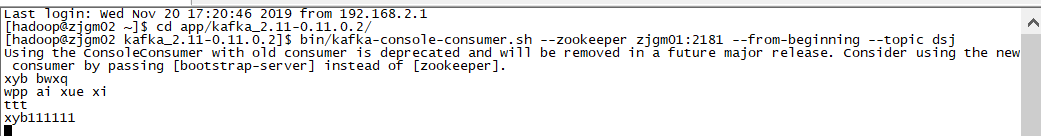
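jps only lists the JVMs on the machine where it is run, so it has to be repeated per node. As a complementary check, one could probe the broker port from a single machine. The sketch below assumes the brokers listen on Kafka's default port 9092 (the same port used with --broker-list later in this post); the class name and the 2000 ms timeout are illustrative choices.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

// Illustrative sketch: checks whether something accepts TCP connections on the broker port.
// It does not prove the listener is Kafka, only that the port is open.
class BrokerPortCheck {

    // Returns true if a TCP connection to host:port succeeds within timeoutMs.
    static boolean isReachable(String host, int port, int timeoutMs) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMs);
            return true;
        } catch (IOException e) {
            // Covers refused connections, timeouts and unresolvable host names.
            return false;
        }
    }

    public static void main(String[] args) {
        for (String host : new String[] {"zjgm01", "zjgm02", "zjgm03"}) {
            System.out.println(host + ":9092 reachable = " + isReachable(host, 9092, 2000));
        }
    }
}
```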

cd app/kafka_2.11-0.11.0.2/

On zjgm01, create the topic and start a console producer:

bin/kafka-topics.sh --create --zookeeper zjgm01:2181 --replication-factor 3 --partitions 1 --topic dsj

bin/kafka-console-producer.sh --broker-list zjgm01:9092 --topic dsj

On zjgm02, start a console consumer:

bin/kafka-console-consumer.sh --zookeeper zjgm01:2181 --from-beginning --topic dsj
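The create command above asks for three replicas of the single partition. Replicas of a partition are placed on different brokers, so topic creation is rejected when fewer brokers are running than the requested replication factor; here all three nodes need to be up. A minimal sketch of that rule (class and method names are hypothetical helpers, not part of Kafka's tooling):

```java
import java.util.Arrays;
import java.util.List;

// Illustrative sketch of the constraint behind --replication-factor.
class ReplicationCheck {

    // True when the requested replication factor can be satisfied by the live brokers.
    static boolean canCreate(List<String> liveBrokers, int replicationFactor) {
        return replicationFactor >= 1 && replicationFactor <= liveBrokers.size();
    }

    public static void main(String[] args) {
        List<String> all = Arrays.asList("zjgm01", "zjgm02", "zjgm03");
        List<String> two = Arrays.asList("zjgm01", "zjgm02");
        System.out.println(canCreate(all, 3)); // prints: true
        System.out.println(canCreate(two, 3)); // prints: false
    }
}
```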

ProducerDemo

package com.zhongruan.kafka;

import java.util.Properties;

import kafka.javaapi.producer.Producer;

import kafka.producer.KeyedMessage;

import kafka.producer.ProducerConfig;

public class ProducerDemo {

public static void main(String[] args) throws InterruptedException {

Properties props=new Properties();

props.put("zk.connect", "zjgm01:2181,zjgm02:2181,zjgm03:2181");

props.put("metadata.broker.list", "zjgm01:9092,zjgm02:9092,zjgm03:9092");

props.put("serializer.class", "kafka.serializer.StringEncoder");

ProducerConfig conf=new ProducerConfig(props);

Producer<String, String> producer = new Producer<>(conf);

/* producer.send(new KeyedMessage<String, String>("dsj", "xyb1111…")); */

// The consumer on zjgm02 then prints the messages: xyb xue bu xi0ci, xyb xue bu xi1ci, ...

for(int i=0;i<10;i++){

Thread.sleep(2000);

producer.send(new KeyedMessage<String, String>("dsj", "xyb xue bu xi" + i + "ci"));

}

// Release the producer's connections once all messages are sent.

producer.close();

}

}
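ProducerDemo uses the older Scala-based producer API (kafka.javaapi.producer.Producer). Kafka 0.11 also ships the newer Java client, org.apache.kafka.clients.producer.KafkaProducer in the kafka-clients artifact, which is configured with bootstrap.servers and separate key and value serializers instead of metadata.broker.list and serializer.class. The sketch below only builds the equivalent settings with java.util, so it runs without the Kafka jars; the class and method names are hypothetical.

```java
import java.util.Properties;

// Illustrative sketch: settings the newer Java producer client would take for the same cluster.
class NewProducerProps {

    static Properties build(String bootstrapServers) {
        Properties props = new Properties();
        // Takes the place of metadata.broker.list in the old API.
        props.put("bootstrap.servers", bootstrapServers);
        // Takes the place of serializer.class; keys and values are configured separately.
        props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        return props;
    }

    // Same payload format as the loop in ProducerDemo.
    static String message(int i) {
        return "xyb xue bu xi" + i + "ci";
    }

    public static void main(String[] args) {
        Properties props = build("zjgm01:9092,zjgm02:9092,zjgm03:9092");
        // With kafka-clients on the classpath, props would be passed to
        // new org.apache.kafka.clients.producer.KafkaProducer<String, String>(props).
        props.forEach((k, v) -> System.out.println(k + "=" + v));
        System.out.println(message(0)); // prints: xyb xue bu xi0ci
    }
}
```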

ConsumerDemo

package com.zhongruan.kafka;

import kafka.consumer.Consumer;

import kafka.consumer.ConsumerConfig;

import kafka.consumer.KafkaStream;

import kafka.javaapi.consumer.ConsumerConnector;

import kafka.message.MessageAndMetadata;

import java.util.HashMap;

import java.util.List;

import java.util.Map;

import java.util.Properties;

public class ConsumerDemo {

public static void main(String[] args) {

Properties props=new Properties();

props.put("zookeeper.connect", "zjgm01:2181,zjgm02:2181,zjgm03:2181");

props.put("group.id","111");

props.put("auto.offset.reset", "smallest");

ConsumerConfig config=new ConsumerConfig(props);

ConsumerConnector consumer = Consumer.createJavaConsumerConnector(config);

Map<String,Integer> topicMap=new HashMap<>();

topicMap.put("dsj",1);

//topicMap.put("xyb",1);

Map<String, List<KafkaStream<byte[], byte[]>>> streamsMap = consumer.createMessageStreams(topicMap);

List<KafkaStream<byte[], byte[]>> streams = streamsMap.get("dsj");

for(KafkaStream<byte[], byte[]> kafkaStream:streams){

new Thread(new Runnable() {

@Override

public void run() {

for(MessageAndMetadata<byte[], byte[]> mm:kafkaStream){

String s = new String(mm.message());

System.out.println(s);

}

}

}).start();

}

}

}
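In ConsumerDemo, new String(mm.message()) decodes the bytes with the JVM's default charset, which can differ between machines. kafka.serializer.StringEncoder on the producer side encodes as UTF-8 unless configured otherwise, so decoding explicitly as UTF-8 is the safer choice. A small sketch of that decoding step (the helper class is hypothetical):

```java
import java.nio.charset.StandardCharsets;

// Illustrative sketch: decode a message payload with an explicit charset
// instead of relying on the platform default.
class PayloadDecoder {

    static String decode(byte[] payload) {
        return new String(payload, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        byte[] raw = "xyb xue bu xi0ci".getBytes(StandardCharsets.UTF_8);
        System.out.println(decode(raw)); // prints: xyb xue bu xi0ci
    }
}
```

In the consumer loop this would replace the new String(mm.message()) call with PayloadDecoder.decode(mm.message()).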
