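Borrowing from Hadoop's build script start-build.sh, I build a Docker container for the compile and use -v to mount both the Hive source directory and the .m2 directory under my home directory into it.

My Dockerfile is as follows: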

FROM centos:7

WORKDIR /root

ADD jdk-8u291-linux-x64.tar.gz /opt/

RUN mv /opt/jdk1.8.0_291 /opt/jdk8

ENV JAVA_HOME /opt/jdk8

ENV CLASSPATH $JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

ENV PATH=$PATH:$JAVA_HOME/bin

RUN yum update -y && \
    yum install -y java-1.8.0-openjdk \
    gcc* \
    make \
    snappy* \
    bzip2* \
    lzo* \
    zlib* \
    openssl* \
    svn \
    ncurses* \
    autoconf \
    automake \
    libtool \
    epel-release \
    *zstd* \
    gcc-c++ \
    bats \
    ShellCheck \
    python3 \
    sudo \
    fuse3 \
    fuse3-devel \
    doxygen \
    git \
    rsync \
    patch \
    vim

######
# Install cmake 3.20.0
######
RUN mkdir -p /opt/cmake && \
    curl -L -s -S \
    https://cmake.org/files/v3.20/cmake-3.20.0-linux-x86_64.tar.gz \
    -o /opt/cmake.tar.gz && \
    tar xzf /opt/cmake.tar.gz --strip-components 1 -C /opt/cmake

ENV CMAKE_HOME /opt/cmake

ENV PATH "${PATH}:/opt/cmake/bin"

######
# Install Google Protobuf 2.5.0
######
RUN mkdir -p /opt/protobuf-src && \
    curl -L -s -S \
    https://github.com/google/protobuf/releases/download/v2.5.0/protobuf-2.5.0.tar.gz \
    -o /opt/protobuf.tar.gz && \
    tar xzf /opt/protobuf.tar.gz --strip-components 1 -C /opt/protobuf-src

RUN cd /opt/protobuf-src && ./configure --prefix=/opt/protobuf && make install

ENV PROTOBUF_HOME /opt/protobuf

ENV PATH "${PATH}:/opt/protobuf/bin"

#RUN curl -L -s https://rpm.nodesource.com/setup_10.x | bash - && \
# RUN yum install -y nodejs && \
# npm config set registry https://registry.npm.taobao.org
# RUN npm install -g n && \
# n lts && PATH="$PATH" && \
# npm install -g bower && \
# npm install -g ember-cli

RUN yum install -y wget && \
    mkdir -p /opt/nodejs && \
    wget -O /opt/nodejs.tar.xz https://npm.taobao.org/mirrors/node/v14.17.5/node-v14.17.5-linux-x64.tar.xz && \
    tar xf /opt/nodejs.tar.xz --strip-components 1 -C /opt/nodejs && \
    ln -s /opt/nodejs/bin/npm /usr/local/bin && \
    ln -s /opt/nodejs/bin/node /usr/local/bin && \
    npm config set registry https://registry.npm.taobao.org && \
    npm install -g bower && \
    npm install -g ember-cli

RUN pip3 install pylint==2.6.0 python-dateutil==2.8.1 -i https://pypi.doubanio.com/simple

RUN mkdir -p /opt/maven && \
    curl -L -s -S \
    https://dlcdn.apache.org/maven/maven-3/3.6.3/binaries/apache-maven-3.6.3-bin.tar.gz \
    -o /opt/maven.tar.gz && \
    tar xzf /opt/maven.tar.gz --strip-components 1 -C /opt/maven

ENV MAVEN_HOME /opt/maven

ENV PATH "${PATH}:/opt/maven/bin"

RUN mkdir -p /opt/isa-l-src \
    && yum install -y automake yasm libtool \
    && curl -L -s -S \
    https://github.com/intel/isa-l/archive/v2.29.0.tar.gz \
    -o /opt/isa-l.tar.gz \
    && tar xzf /opt/isa-l.tar.gz --strip-components 1 -C /opt/isa-l-src \
    && cd /opt/isa-l-src \
    && ./autogen.sh \
    && ./configure \
    && make "-j$(nproc)" \
    && make install \
    && cd /root \
    && rm -rf /opt/isa-l-src

RUN yum clean all

###
# Avoid out of memory errors in builds
###
ENV MAVEN_OPTS -Xms512m -Xmx3072m

###
# Install svn & Forrest (for Apache Hadoop website)
###
RUN mkdir -p /opt/apache-forrest && \
    curl -L -s -S \
    https://archive.apache.org/dist/forrest/0.8/apache-forrest-0.8.tar.gz \
    -o /opt/forrest.tar.gz && \
    tar xzf /opt/forrest.tar.gz --strip-components 1 -C /opt/apache-forrest

RUN echo 'forrest.home=/opt/apache-forrest' > build.properties

ENV FORREST_HOME=/opt/apache-forrest
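
The post does not show the commands used to build the image and start the container, so the following is only a minimal sketch of the -v mounts described at the top. The image tag hive-build:centos7, the /root/hive mount point, and the DRY_RUN switch are illustrative assumptions, not taken from the original setup. It also assumes the JDK tarball named in the ADD instruction sits next to the Dockerfile.

```shell
#!/bin/sh
# Assumed names (not from the original post): image tag and container mount point.
IMAGE="${IMAGE:-hive-build:centos7}"
CONTAINER_SRC="/root/hive"

# Echo the command when DRY_RUN is set, otherwise execute it.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Build the image from the Dockerfile in the current directory.
build_image() {
  run docker build -t "$IMAGE" .
}

# Start an interactive container with the Hive source tree and the host's
# Maven repository mounted, so downloaded artifacts survive between runs.
# $1 is the path of the Hive source directory on the host.
start_container() {
  hive_src="$1"
  run docker run --rm -it \
    -v "$hive_src:$CONTAINER_SRC" \
    -v "$HOME/.m2:/root/.m2" \
    -w "$CONTAINER_SRC" \
    "$IMAGE" bash
}
```

With Docker installed, `build_image && start_container /path/to/hive` (the path is a placeholder) would build the image and open a shell in the mounted source tree. Setting DRY_RUN=1 first only prints the commands.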

Run the build: mvn clean package -Pdist -DskipTests

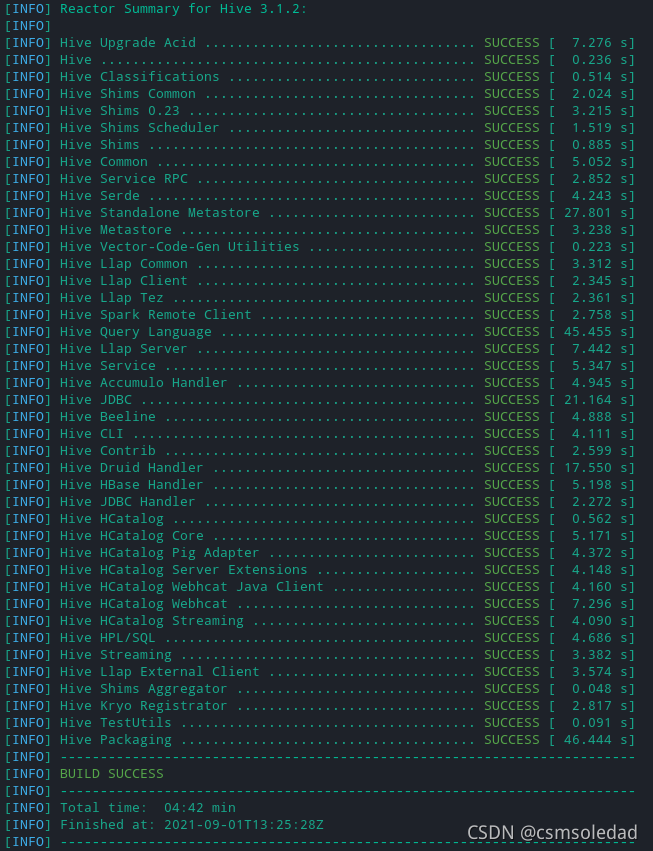

As the screenshot above shows, the build succeeded.
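
After a successful -Pdist build, the binary distribution still has to be located. The sketch below assumes the usual Hive layout, where the packaging module writes apache-hive-<version>-bin.tar.gz into packaging/target. That location is an assumption worth checking against your own build output. The function name find_hive_dist is made up for this example.

```shell
#!/bin/sh
# Print the path of the binary distribution produced by -Pdist, if any.
# Assumes the tarball lands in <source root>/packaging/target.
# $1 is the Hive source root (defaults to the current directory).
find_hive_dist() {
  src_root="${1:-.}"
  find "$src_root/packaging/target" -maxdepth 1 -type f \
    -name 'apache-hive-*-bin.tar.gz' 2>/dev/null
}
```

Running `find_hive_dist .` from the source root prints nothing if the build did not produce a tarball in that directory.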

Hive on Spark support

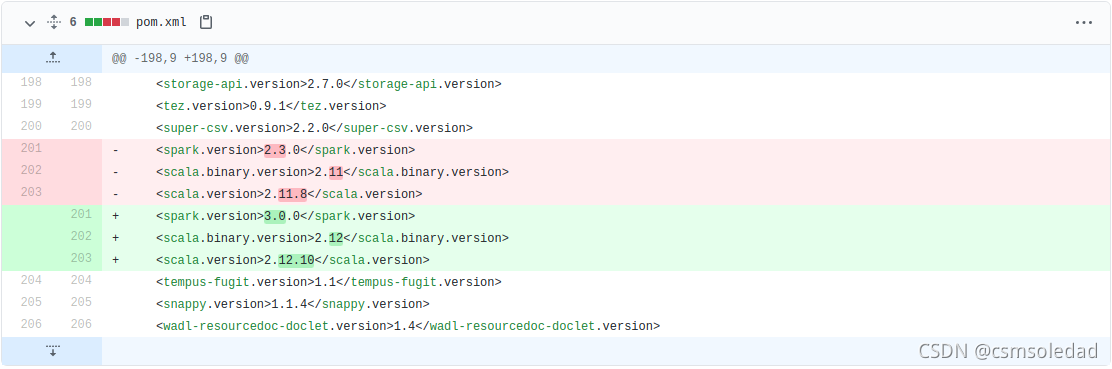

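Hive ships with Spark support, but the bundled Spark version is too old, so the version number in pom.xml under the source root has to be changed.

In my tests, spark-3.0.3 also builds successfully, and changing zookeeper to 3.5.7 and hadoop to 3.1.3 compiles as well.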
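
The version bumps can be made by hand in an editor, as in the screenshots, or scripted. Below is a minimal sketch using sed. The helper name set_pom_property is made up for this example, and the property names spark.version, hadoop.version and zookeeper.version are assumptions that should be checked against the actual pom.xml before relying on it.

```shell
#!/bin/sh
# Replace the value of a single-line <name>value</name> property in a pom.xml.
# $1 = path to pom.xml, $2 = property name, $3 = new value.
# A backup of the original file is kept as <pom>.bak.
set_pom_property() {
  pom="$1"
  name="$2"
  value="$3"
  # Escape dots so that "spark.version" is matched literally.
  name_re="$(printf '%s' "$name" | sed 's/[.]/\\./g')"
  sed -i.bak "s|<$name_re>[^<]*</$name_re>|<$name>$value</$name>|" "$pom"
}
```

For the versions mentioned above, the calls would look like `set_pom_property pom.xml spark.version 3.0.3`, `set_pom_property pom.xml hadoop.version 3.1.3` and `set_pom_property pom.xml zookeeper.version 3.5.7`. The helper only handles properties written on a single line.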

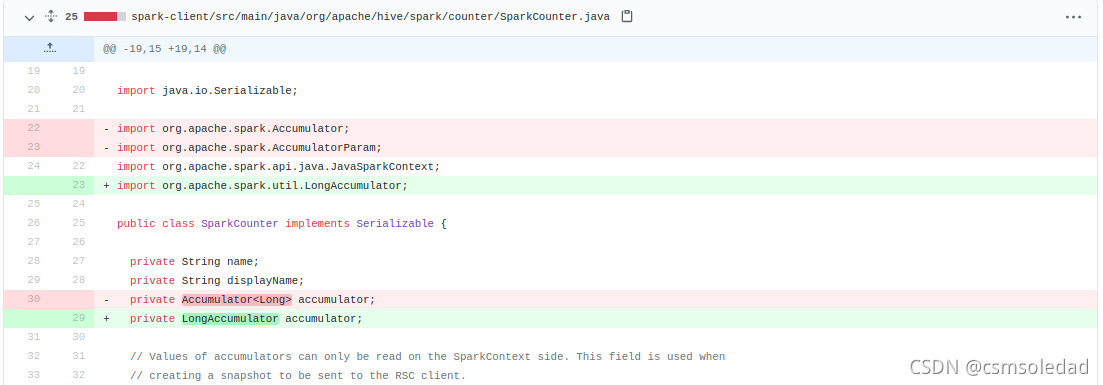

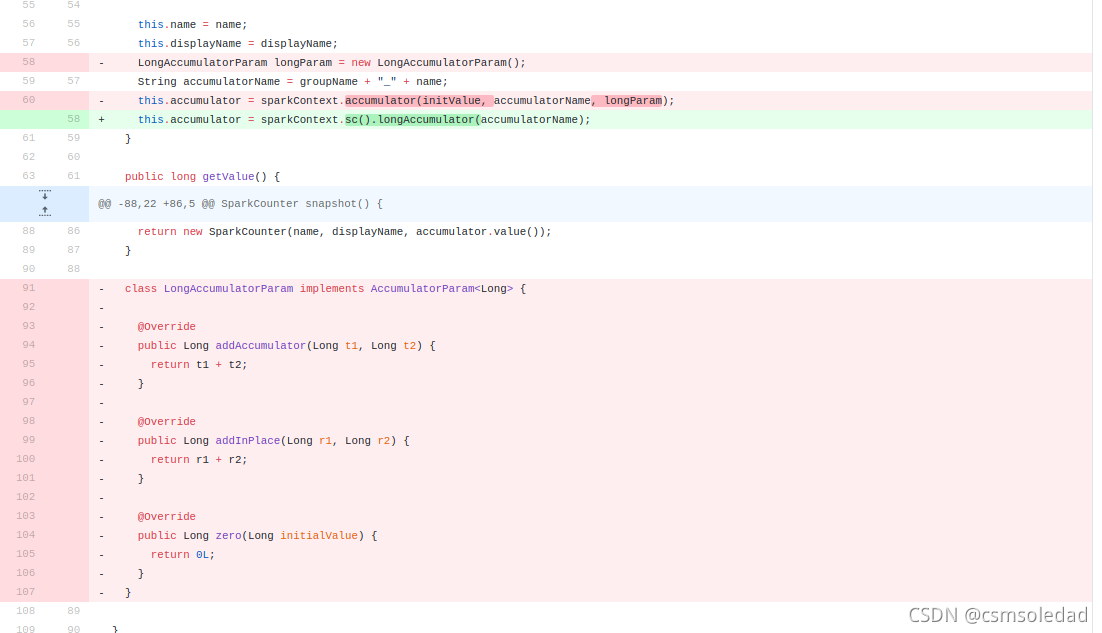

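Because Spark 3 changed substantially, the corresponding Hive source code also has to be modified.

Open the project in IDEA.

For the required changes, see: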

https://github.com/gitlbo/hive/commits/3.1.2

spark-client/src/main/java/org/apache/hive/spark/counter/SparkCounter.java

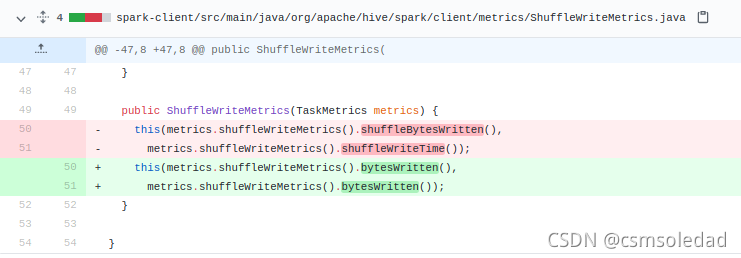

spark-client/src/main/java/org/apache/hive/spark/client/metrics/ShuffleWriteMetrics.java

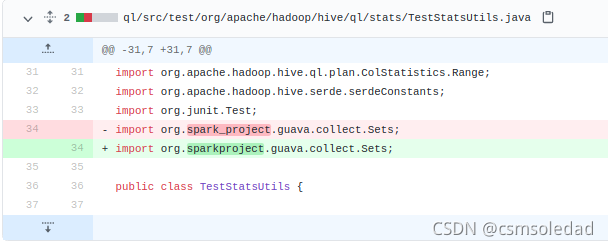

ql/src/test/org/apache/hadoop/hive/ql/stats/TestStatsUtils.java
