爬虫的特征和运行方式

User-Agent:主要用来将我们的爬虫伪装成浏览器。

Cookie:主要用来保存爬虫的登录状态。

连接数:主要用来限制单台机器与服务端的连接数量。

代理IP:主要用来伪装请求地址,提高单机并发数量。

爬虫工作的方式可以归纳为两种:深度优先、广度优先。

深度优先就是一个连接一个连接的向内爬,处理完成后再换一下一个连接,这种方式对于我们来说缺点很明显。

广度优先就是一层一层的处理,非常适合利用多线程并发技术来高效处理,因此我们也用广度优先的抓取方式。

首先我们用Visual Studio 2015创建一个控制台程序,定义一个简单的SimpleCrawler类,里面只包含几个简单的事件:

public class SimpleCrawler

{

public SimpleCrawler() { }

/// <summary>

/// 爬虫启动事件

/// </summary>

public event EventHandler<OnStartEventArgs> OnStart;

/// <summary>

/// 爬虫完成事件

/// </summary>

public event EventHandler<OnCompletedEventArgs> OnCompleted;

/// <summary>

/// 爬虫出错事件

/// </summary>

public event EventHandler<Exception> OnError;

/// <summary>

/// 定义cookie容器

/// </summary>

public CookieContainer CookieContainer { get; set; }

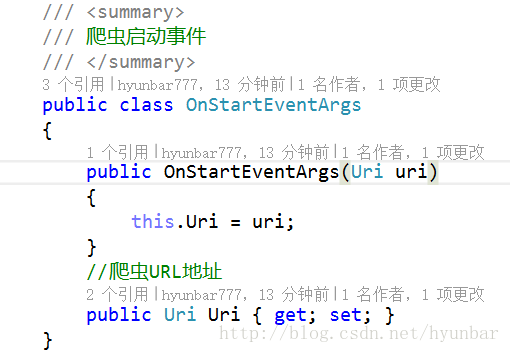

}接着我们创建一个OnStart的事件对象:

然后我们创建一个OnCompleted事件对象:

最后我们再给它增加一个异步方法,通过User-Agent将爬虫伪装成了Chrome浏览器

/// <summary>

/// 异步创建爬虫

/// </summary>

/// <param name="uri"></param>

/// <param name="proxy"></param>

/// <returns></returns>

public async Task<string> Start(Uri uri, WebProxy proxy = null)

{

return await Task.Run(() =>

{

var pageSource = string.Empty;

try

{

if (this.OnStart != null)

this.OnStart(this, new OnStartEventArgs(uri));

Stopwatch watch = new Stopwatch();

watch.Start();

HttpWebRequest request = (HttpWebRequest)WebRequest.Create(uri);

request.Accept = "*/*";

//定义文档类型及编码

request.ContentType = "application/x-www-form-urlencoded";

request.AllowAutoRedirect = false;//禁止自动跳转

request.UserAgent = "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/60.0.3112.113 Safari/537.36";

//定义请求超时事件为5s

request.Timeout = 5000;

//长连接

request.KeepAlive = true;

request.Method = "GET";

//设置代理服务器IP,伪装请求地址

if (proxy != null)

request.Proxy = proxy;

//附加Cookie容器

request.CookieContainer = this.CookieContainer;

//定义最大链接数

request.ServicePoint.ConnectionLimit = int.MaxValue;

//获取请求响应

HttpWebResponse response = (HttpWebResponse)request.GetResponse();

//将Cookie加入容器,保持登录状态

foreach (Cookie cookie in response.Cookies)

this.CookieContainer.Add(cookie);

//获取响应流

Stream stream = response.GetResponseStream();

//以UTF8的方式读取流

StreamReader reader = new StreamReader(stream,Encoding.UTF8);

//获取网站资源

pageSource = reader.ReadToEnd();

watch.Stop();

//获取当前任务线程ID

var threadID = Thread.CurrentThread.ManagedThreadId;

//获取请求执行时间

var milliseconds = watch.ElapsedMilliseconds;

reader.Close();

stream.Close();

request.Abort();

response.Close();

if (this.OnCompleted != null)

this.OnCompleted(this, new OnCompletedEventArgs(uri, threadID, milliseconds, pageSource));

}

catch (Exception ex)

{

if (this.OnError != null)

this.OnError(this, ex);

}

return pageSource;

});

}在控制台里写下爬虫的抓取代码:

using System;

using System.Collections.Generic;

using System.Linq;

using System.Text;

using System.Text.RegularExpressions;

using System.Threading.Tasks;

namespace TestPa

{

class Program

{

static void Main(string[] args)

{

//定义入口URl

var cityUrl = "http://hotels.ctrip.com/citylist";

//定义泛型列表存放城市名称及对应的酒店

var cityList = new List<City>();

//调用自己写的爬虫程序

var cityCrawler = new SimpleCrawler();

cityCrawler.OnStart += (s, e) =>

{

Console.WriteLine("爬虫开始抓取的地址:" + e.Uri.ToString());

};

cityCrawler.OnError += (s, e) =>

{

Console.WriteLine("爬虫抓取出现错误:" + e.Message);

};

cityCrawler.OnCompleted += (s, e) =>

{

var links = Regex.Matches(e.PageSource, @"<a[^>]+href=""*(?<href>/hotel/[^>\s]+)""\s*[^>]*>(?<text>(?!.*img).*?)</a>", RegexOptions.IgnoreCase);

foreach(Match match in links)

{

var city = new City

{

CityName = match.Groups["text"].Value,

Uri = new Uri("http://hotels.ctrip.com" + match.Groups["href"].Value)

};

if (!cityList.Contains(city))

cityList.Add(city);

Console.WriteLine(city.CityName + "||" + city.Uri);

}

Console.WriteLine(e.PageSource);

Console.WriteLine("**********************************");

Console.WriteLine("爬虫抓取完成");

Console.WriteLine("耗时:" + e.Milliseconds + " 毫秒");

Console.WriteLine("线程:" + e.ThreadID);

Console.WriteLine("地址:" + e.Uri.ToString());

};

cityCrawler.Start(new Uri(cityUrl)).Wait();

Console.ReadKey();

}

}

public class City

{

public string CityName { get; set; }

public Uri Uri { get; set; }

}

}

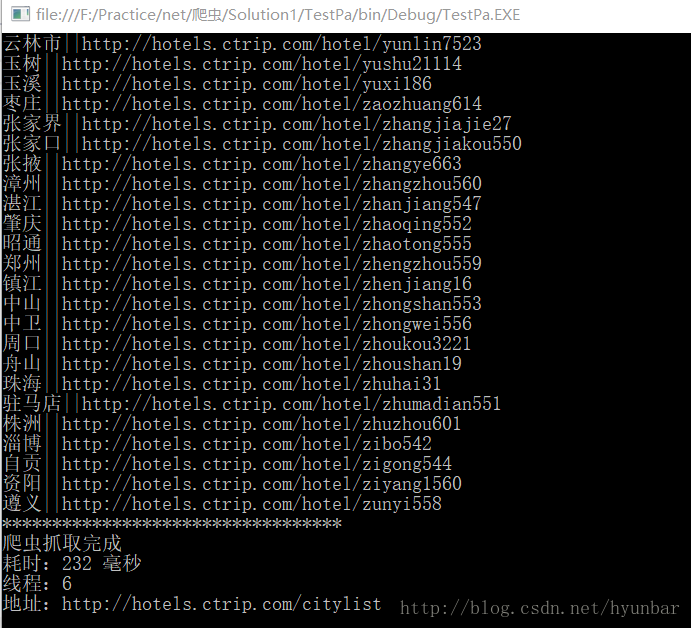

运行结果:

请看大神原文地址

基于.NET更高端智能化的网络爬虫

1590

1590

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?