Environment preparation: four servers in total, all running CentOS 7 with JDK 8 installed.

Server 1: 192.168.1.38 s201

Server 2: 192.168.1.39 s202

Server 3: 192.168.1.40 s203

Server 4: 192.168.1.41 s204

1. Change the hostname of 192.168.1.38 to s201

vi /etc/hostname

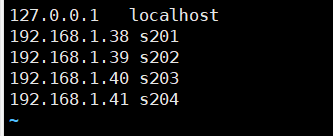

2. Edit the hosts file

vi /etc/hosts

127.0.0.1 localhost

192.168.1.38 s201

192.168.1.39 s202

192.168.1.40 s203

192.168.1.41 s204
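The same entries can be appended by a small script instead of editing the file by hand. The following is a minimal sketch, not part of the original setup: `add_host_entry` and `add_cluster_hosts` are hypothetical helper names, and the file to edit is passed as an argument so the script can be tried on a copy first.

```shell
# Append "ip name" to a hosts-style file unless the name already
# appears in it as a whole word.
add_host_entry() {
  local file="$1" ip="$2" name="$3"
  if ! grep -qw -- "$name" "$file" 2>/dev/null; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

# Register the four cluster nodes used in this article.
add_cluster_hosts() {
  local file="$1"
  add_host_entry "$file" 192.168.1.38 s201
  add_host_entry "$file" 192.168.1.39 s202
  add_host_entry "$file" 192.168.1.40 s203
  add_host_entry "$file" 192.168.1.41 s204
}

# Usage (as root, on every node): add_cluster_hosts /etc/hosts
```

Because existing names are skipped, running the function a second time should not duplicate entries.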

3. Enable the guest shared folder. (Optional)

4. Edit the hostname and IP address files

Change the hostname to s202

vi /etc/hostname

Change the IP address: vi /etc/sysconfig/network-scripts/ifcfg-ens33

IPADDR=192.168.1.39

5. Restart the network service

service network restart

6. Edit /etc/resolv.conf: vi /etc/resolv.conf

nameserver 192.168.1.1

7. Repeat steps 3 ~ 6 for the remaining hosts, using the hostname and IP address that belong to each one.
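Since the IP address edit is repeated on each host, it can be scripted. This is only a sketch under assumptions: `set_ipaddr` is a hypothetical helper, it expects an ifcfg-style file with one `KEY=value` per line, and it takes the file path as an argument so it can be tested on a copy before touching the real interface file.

```shell
# Set IPADDR in an ifcfg-style file, replacing the existing line
# or appending one if none is present.
set_ipaddr() {
  local file="$1" ip="$2"
  if grep -q '^IPADDR=' "$file"; then
    # Write to a scratch file, then copy the content back so the
    # original file keeps its owner and permissions.
    sed "s/^IPADDR=.*/IPADDR=$ip/" "$file" > "$file.new" &&
      cat "$file.new" > "$file" &&
      rm -f "$file.new"
  else
    printf 'IPADDR=%s\n' "$ip" >> "$file"
  fi
}

# Usage (as root, on the host that becomes s203):
#   set_ipaddr /etc/sysconfig/network-scripts/ifcfg-ens33 192.168.1.40
#   service network restart
```

On CentOS 7 the hostname can also be changed without an editor through `hostnamectl set-hostname s203`, which should have the same effect as editing /etc/hostname.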

8. Set up SSH for the fully distributed hosts

1. Delete /home/centos/.ssh/* on all hosts

2. Generate a key pair on s201

ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa

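3. Copy s201's public key file id_rsa.pub to s201 itself and to s202 ~ s204 as authorized_keys. Run the commands from ~/.ssh on s201, where id_rsa.pub was generated.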

scp id_rsa.pub centos@s201:/home/centos/.ssh/authorized_keys

scp id_rsa.pub centos@s202:/home/centos/.ssh/authorized_keys

scp id_rsa.pub centos@s203:/home/centos/.ssh/authorized_keys

scp id_rsa.pub centos@s204:/home/centos/.ssh/authorized_keys
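As an alternative to overwriting authorized_keys with scp, OpenSSH ships `ssh-copy-id`, which appends the key and sets the file permissions. The sketch below swaps in that tool and is not the method used above. `push_key` and `verify_login` are hypothetical helper names, and the script only prints the commands unless `RUN` is set to an empty string.

```shell
# Dry run by default: commands are printed, not executed.
# Export RUN="" before sourcing this file to really run them.
RUN="${RUN-echo}"
HOSTS="s201 s202 s203 s204"

# Install s201's public key for user centos on every host.
push_key() {
  local h
  for h in $HOSTS; do
    $RUN ssh-copy-id -i "$HOME/.ssh/id_rsa.pub" "centos@$h"
  done
}

# Check that a login works without a password prompt.
# BatchMode=yes makes ssh fail instead of asking for a password.
verify_login() {
  local h
  for h in $HOSTS; do
    $RUN ssh -o BatchMode=yes "centos@$h" hostname
  done
}
```

If `verify_login` prints each hostname without asking for a password, start-dfs.sh and start-yarn.sh should be able to reach every node from s201.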

9. Configure fully distributed mode (${hadoop_home}/etc/hadoop/)

[core-site.xml]

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://s201/</value>

</property>

</configuration>

[hdfs-site.xml]

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

</configuration>

[mapred-site.xml] unchanged

[yarn-site.xml]

<?xml version="1.0"?>

<configuration>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>s201</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

</configuration>
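Before distributing the files, it can help to read the values back and confirm there are no typos. The function below is a rough sketch: `get_property` is a hypothetical helper, and it assumes each `<name>` and `<value>` element sits on a single line, as in the files above. It is not a general XML parser.

```shell
# Print the <value> that follows a given <name> in a Hadoop *-site.xml file.
get_property() {
  local file="$1" key="$2"
  awk -v key="$key" '
    # Remember the most recent property name.
    /<name>/  { n = $0; gsub(/.*<name>|<\/name>.*/, "", n) }
    # Print the value when it belongs to the requested name.
    /<value>/ { v = $0; gsub(/.*<value>|<\/value>.*/, "", v)
                if (n == key) print v }
  ' "$file"
}

# Usage:
#   get_property /soft/hadoop/etc/hadoop/core-site.xml fs.defaultFS
#   get_property /soft/hadoop/etc/hadoop/hdfs-site.xml dfs.replication
```

On a node where Hadoop is already on the PATH, `hdfs getconf -confKey fs.defaultFS` reports the value Hadoop itself resolves, which is the more authoritative check.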

Edit the [slaves] file: vi /soft/hadoop/etc/hadoop/slaves

s202

s203

s204

Edit [hadoop-env.sh]

export JAVA_HOME=/usr/local/jdk1.8.0_181

Distribute the configuration to the other servers

cd /soft/hadoop/etc/

scp -r full centos@s202:/soft/hadoop/etc/

scp -r full centos@s203:/soft/hadoop/etc/

scp -r full centos@s204:/soft/hadoop/etc/

Delete the symbolic links

cd /soft/hadoop/etc

rm hadoop

ssh s202 rm /soft/hadoop/etc/hadoop

ssh s203 rm /soft/hadoop/etc/hadoop

ssh s204 rm /soft/hadoop/etc/hadoop

Create the symbolic links

cd /soft/hadoop/etc/

ln -s full hadoop

ssh s202 ln -s /soft/hadoop/etc/full /soft/hadoop/etc/hadoop

ssh s203 ln -s /soft/hadoop/etc/full /soft/hadoop/etc/hadoop

ssh s204 ln -s /soft/hadoop/etc/full /soft/hadoop/etc/hadoop
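The delete and create steps can be combined, because GNU `ln` can replace an existing symlink in place with `-sfn`. The following is a sketch under that assumption. `switch_conf` is a hypothetical helper that takes the etc directory and the name of the configuration directory to activate.

```shell
# Point <etc_dir>/hadoop at <etc_dir>/<name>, replacing an existing symlink.
switch_conf() {
  local etc_dir="$1" name="$2"
  if [ ! -d "$etc_dir/$name" ]; then
    echo "no such configuration directory: $etc_dir/$name" >&2
    return 1
  fi
  # Refuse to touch a real directory named "hadoop".
  if [ -e "$etc_dir/hadoop" ] && [ ! -L "$etc_dir/hadoop" ]; then
    echo "$etc_dir/hadoop exists and is not a symlink" >&2
    return 1
  fi
  # -s: symbolic link, -f: replace the old link,
  # -n: treat the old link itself as the target, not the directory behind it.
  ln -sfn "$name" "$etc_dir/hadoop"
}

# Usage on s201: switch_conf /soft/hadoop/etc full
```

The link is relative (`hadoop -> full`), matching `ln -s full hadoop` in the steps above.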

Delete the temporary directory files

cd /tmp

rm -rf hadoop-centos

ssh s202 rm -rf /tmp/hadoop-centos

ssh s203 rm -rf /tmp/hadoop-centos

ssh s204 rm -rf /tmp/hadoop-centos

Delete the Hadoop logs

cd /soft/hadoop/logs

rm -rf *

ssh s202 'rm -rf /soft/hadoop/logs/*'

ssh s203 'rm -rf /soft/hadoop/logs/*'

ssh s204 'rm -rf /soft/hadoop/logs/*'
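The per-host ssh commands in this step repeat the same pattern for s202, s203 and s204, so a loop can stand in for them. This is a minimal sketch: `run_on_workers` is a hypothetical helper, the worker list is taken from the slaves file above, and by default the commands are only printed.

```shell
# Dry run by default: commands are printed, not executed.
# Export RUN="" before sourcing this file to really run them.
RUN="${RUN-echo}"
WORKERS="s202 s203 s204"

# Run the given command on every worker node over ssh.
run_on_workers() {
  local h
  for h in $WORKERS; do
    $RUN ssh "$h" "$@"
  done
}

# Examples mirroring the cleanup steps:
#   run_on_workers rm -rf /tmp/hadoop-centos
#   run_on_workers 'rm -rf /soft/hadoop/logs/*'
```

The glob in the second example is quoted so that `*` is expanded on the remote host rather than by the local shell.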

10. Format the file system

hadoop namenode -format

11. Start the Hadoop processes

start-all.sh, or start-dfs.sh followed by start-yarn.sh

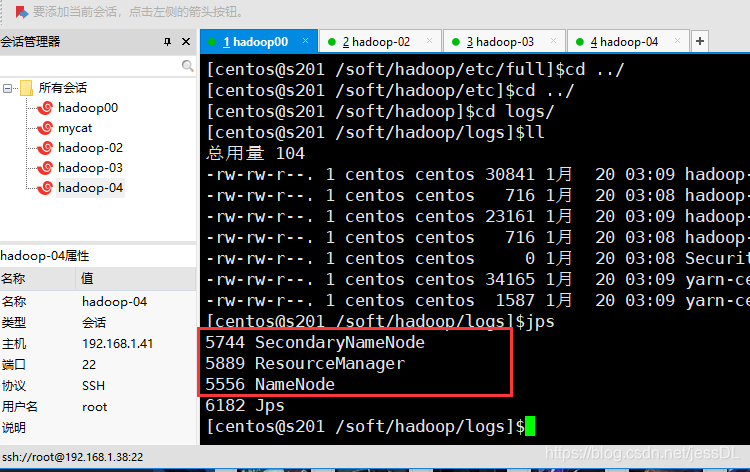

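Check that the processes started successfully

s201 should have three processes (NameNode, SecondaryNameNode, ResourceManager)

s202, s203 and s204 should each have two processes (DataNode, NodeManager)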
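The process check can be automated by comparing `jps` output against the daemons expected on each node. With the configuration above, s201 should run NameNode, SecondaryNameNode and ResourceManager, and each worker should run DataNode and NodeManager. The sketch below assumes `jps` prints one `<pid> <ClassName>` pair per line. `missing_daemons` is a hypothetical helper.

```shell
# Read jps output on stdin and print the expected daemons that are absent.
missing_daemons() {
  local out d missing=""
  out="$(cat)"
  for d in "$@"; do
    # Compare the class name exactly so that NameNode does not
    # match SecondaryNameNode.
    if ! printf '%s\n' "$out" |
        awk -v d="$d" '$2 == d { found = 1 } END { exit !found }'; then
      missing="$missing $d"
    fi
  done
  echo "${missing# }"
}

# Usage:
#   jps | missing_daemons NameNode SecondaryNameNode ResourceManager
#   ssh s202 jps | missing_daemons DataNode NodeManager
```

An empty result means every expected daemon was found. The remote form assumes `jps` is on the PATH of a non-interactive ssh session, which may require the full JDK path on some setups.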

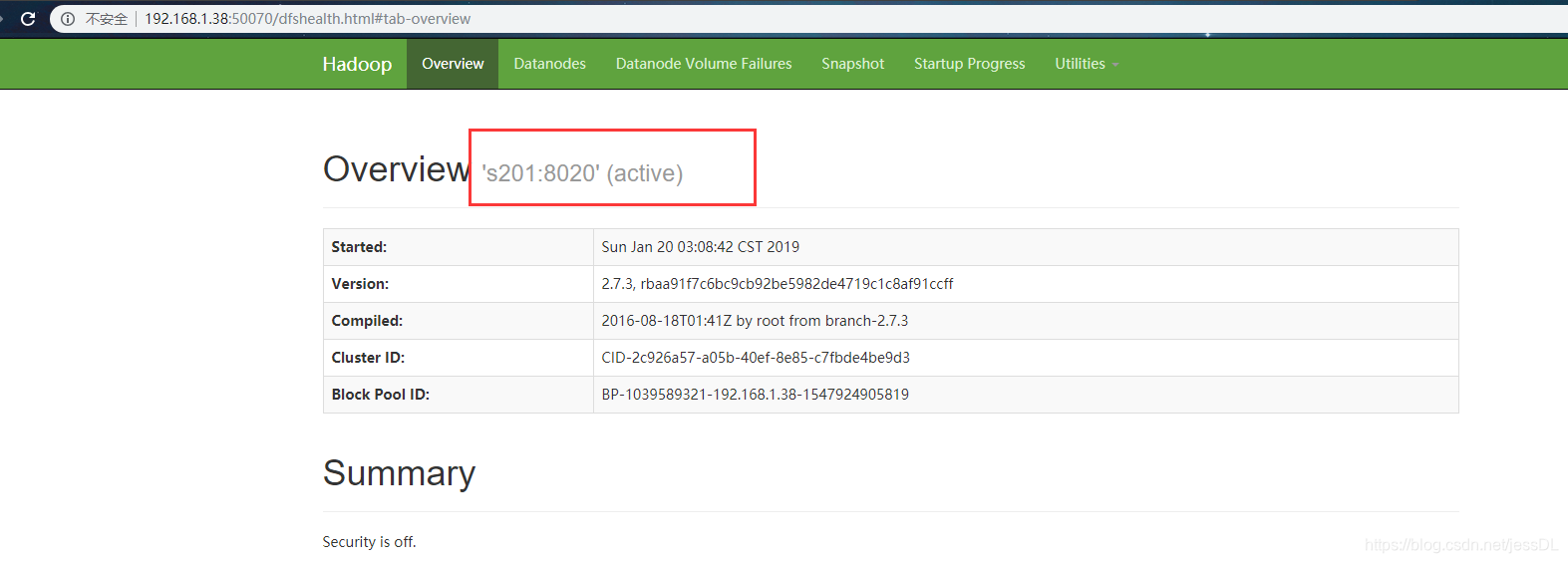

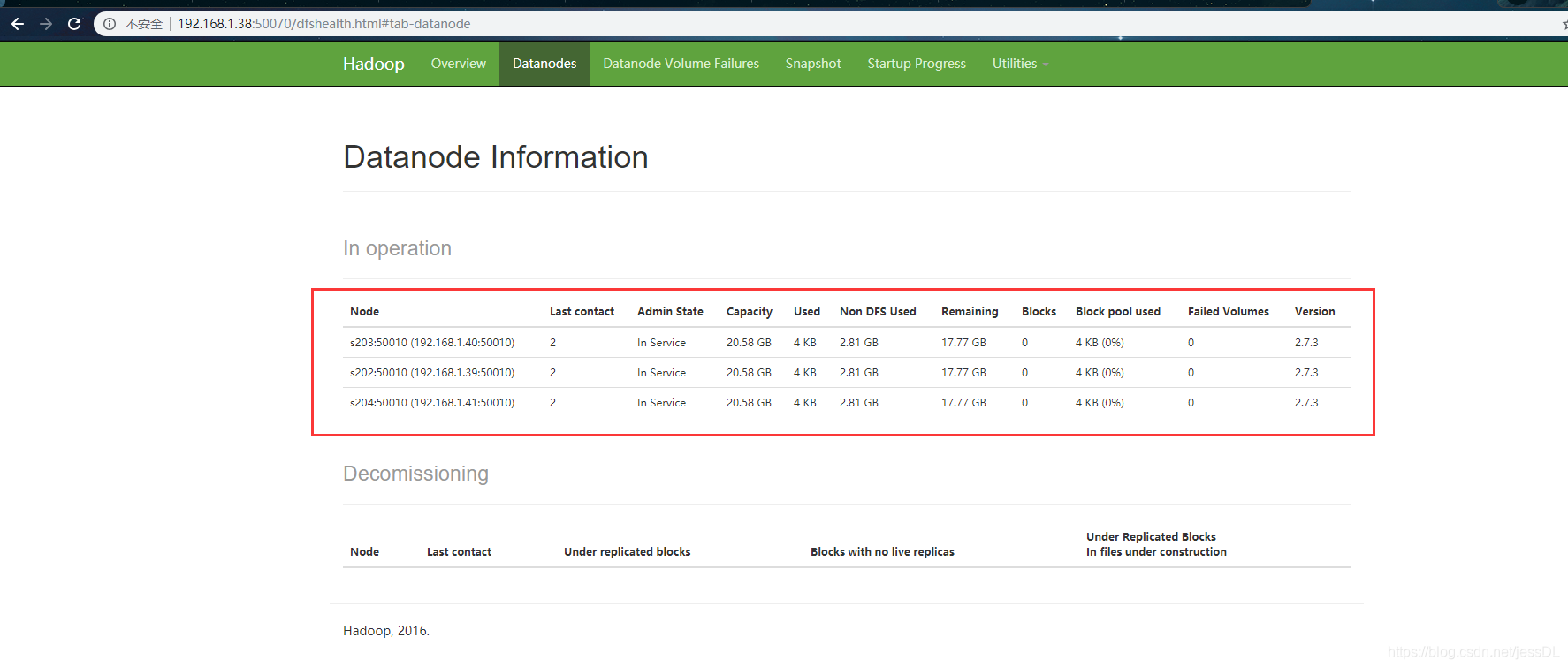

Then check the Hadoop web UI.

If these pages appear, the fully distributed cluster has started successfully.
