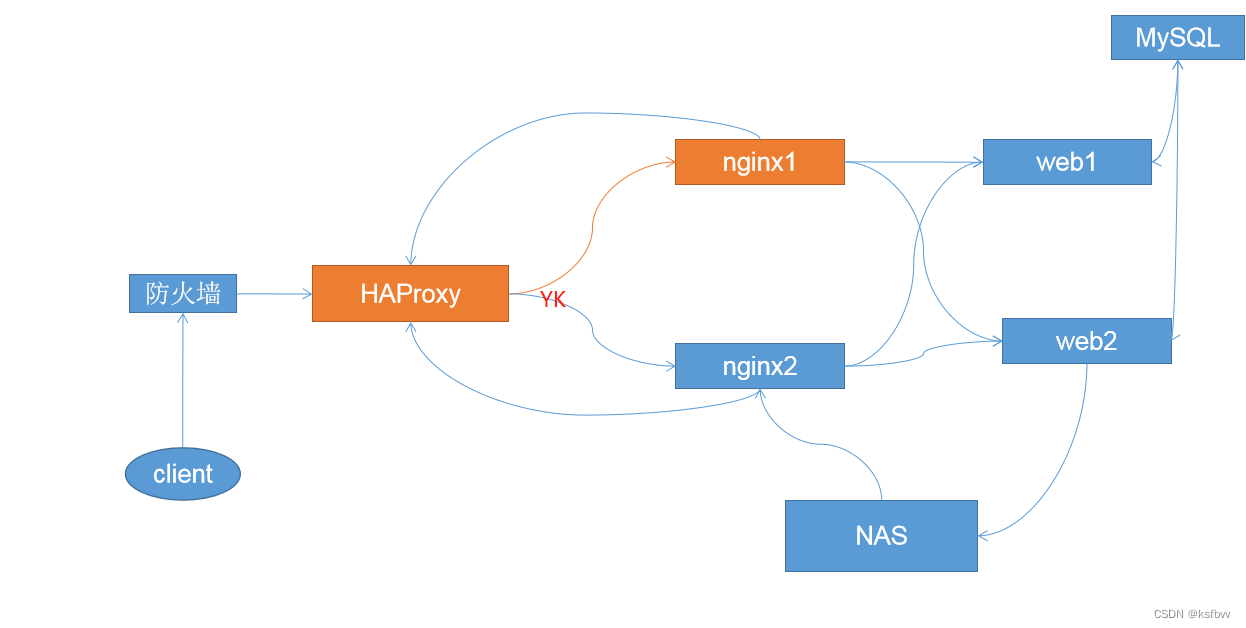

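Similarities and differences between nginx and HAProxy

Differences:

◆ nginx can work at layer 4 and layer 7. HAProxy also supports both (mode tcp and mode http) and in setups like this one is typically used at layer 4. As a dedicated load balancer HAProxy generally outperforms nginx, so where resources allow, run HAProxy on its own for layer 4;

◆ They are positioned differently: HAProxy is a load balancer, while nginx is primarily a web server;

◆ Their configuration syntax differs: every nginx directive must end with a semicolon, while HAProxy needs none. Follow the convention of one keyword followed by its value per line;

◆ nginx directives resemble a programming language and are easy to extend: it supports scripting such as js/perl, and you can write your own modules and compile them in. HAProxy has fewer extension options, but supports ACLs;

◆ nginx runs in multi-process mode, each worker can also use threads, and workers can be pinned to CPU cores (worker_cpu_affinity). HAProxy is multi-threaded, performs well even as a single process, and can also run multi-process.

◆ Taking backend servers out for an upgrade is simpler with HAProxy: it can be scripted at runtime with no restart. With nginx you have to edit the configuration file and then reload.

Similarities:

◆ Both offer similar functionality and support similar protocols (HTTP and TCP; nginx additionally proxies UDP through its stream module);

◆ Both are written in C;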

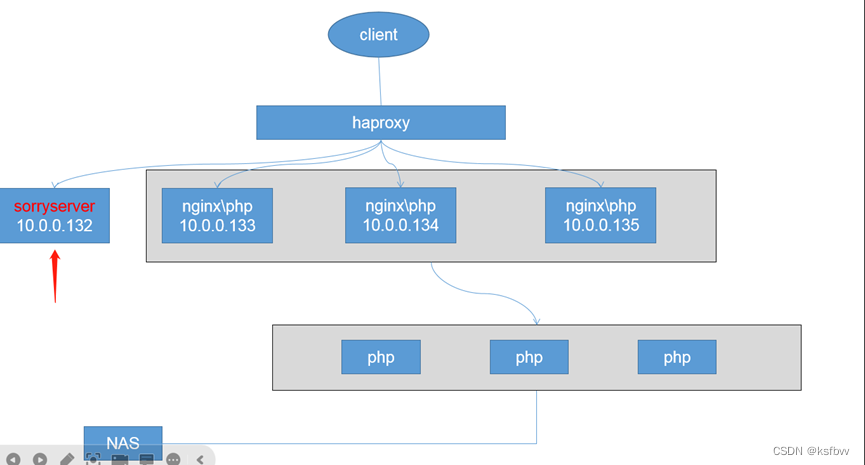

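Implementing layer-4 client address passthrough with HAProxy, plus cookie-based session persistence

First compile and install HAProxy (omitted here); only the build dependencies are listed

yum install gcc gcc-c++ glibc glibc-devel pcre pcre-devel openssl openssl-devel systemd-devel net-tools vim iotop bc zip unzip zlib-devel lrzsz tree screen lsof tcpdump wget ntpdate -y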

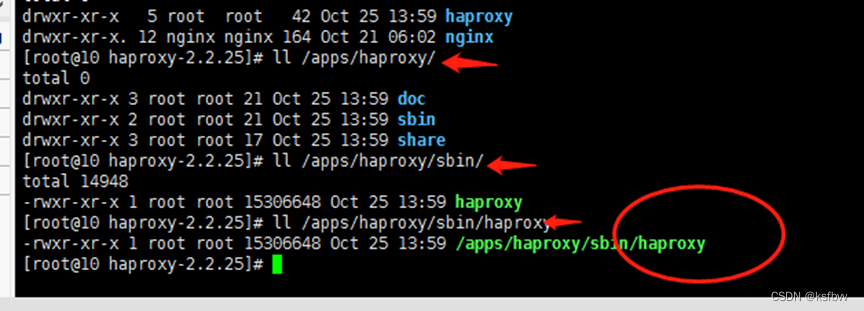

Install path:

Verify the version

[root@10 haproxy-2.2.25]# /apps/haproxy/sbin/haproxy -v

haproxy.service unit file

vim /usr/lib/systemd/system/haproxy.service

[Unit]

Description=HAProxy Load Balancer

After=syslog.target network.target

[Service]

ExecStartPre=/apps/haproxy/sbin/haproxy -f /etc/haproxy/haproxy.cfg -c -q

ExecStart=/apps/haproxy/sbin/haproxy -Ws -f /etc/haproxy/haproxy.cfg -p /var/lib/haproxy/haproxy.pid

ExecReload=/bin/kill -USR2 $MAINPID

[Install]

WantedBy=multi-user.target


Contents of the haproxy.cfg configuration file

global

maxconn 100000

chroot /apps/haproxy

stats socket /var/lib/haproxy/haproxy.sock mode 600 level admin

uid 99

gid 99

daemon

#nbproc 4

#cpu-map 1 0

#cpu-map 2 1

#cpu-map 3 2

#cpu-map 4 3

pidfile /var/lib/haproxy/haproxy.pid

log 127.0.0.1 local3 info

defaults

option http-keep-alive

option forwardfor

maxconn 100000

mode http

timeout connect 300000ms

timeout client 300000ms

timeout server 300000ms

listen stats

mode http

bind 0.0.0.0:9999

stats enable

log global

stats uri /haproxy-status

stats auth haadmin:qlw2e3r4ys

listen web_port

bind 10.0.0.133:80

mode http

log global

server web1 127.0.0.1:8080 check inter 3000 fall 2 rise 5


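Restarting HAProxy may fail with an error like: [ALERT] 146/132210 (3443) : Starting frontend Redis: cannot bind socket [0.0.0.0:6379]

Fix (taken from the web): allow binding to non-local addresses with the kernel parameter below, and stop any web service such as Apache or nginx that already occupies the port

vim /etc/sysctl.conf # edit the kernel parameters

net.ipv4.ip_nonlocal_bind = 1 # add this line if it is not present

sysctl -p # apply the change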

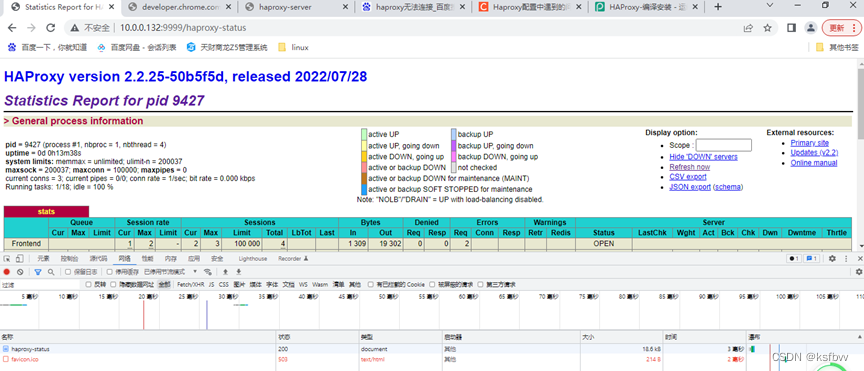

Restart and access port 9999

http://10.0.0.132:9999/haproxy-status

Log in with account haadmin and password qlw2e3r4ys. Result:

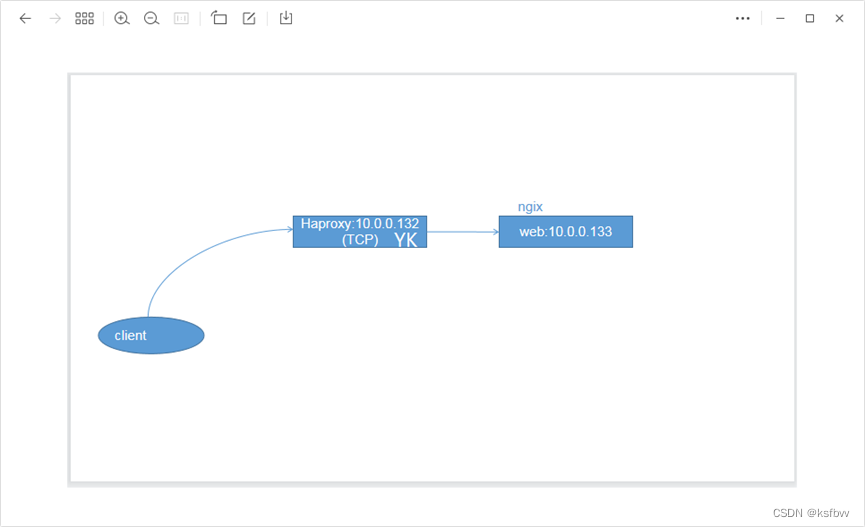

Layer-4 address passthrough

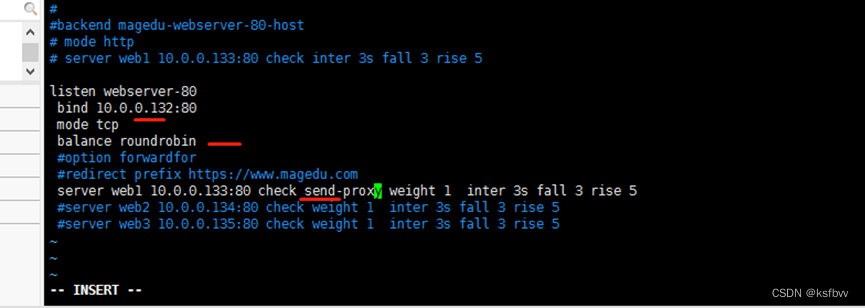

Configuration on 10.0.0.132:
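The configuration itself appears only as a screenshot, so the fragment below is a minimal sketch of what a layer-4 passthrough listener could look like, not the author's exact file. It assumes the listener name web_port from the earlier haproxy.cfg and the backend 10.0.0.133:80. The key part is `send-proxy` on the server line, which makes HAProxy prepend a PROXY protocol header that nginx can read once it listens with `proxy_protocol`.

```
listen web_port
    bind 10.0.0.132:80
    mode tcp                     # layer 4: HAProxy does not parse HTTP here
    log global
    # send-proxy passes the real client address to the backend via the PROXY protocol
    server web1 10.0.0.133:80 send-proxy check inter 3000 fall 2 rise 5
```

With `send-proxy` enabled, the backend must expect the PROXY header on that port, otherwise ordinary requests to it will fail.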

Restart HAProxy: systemctl restart haproxy

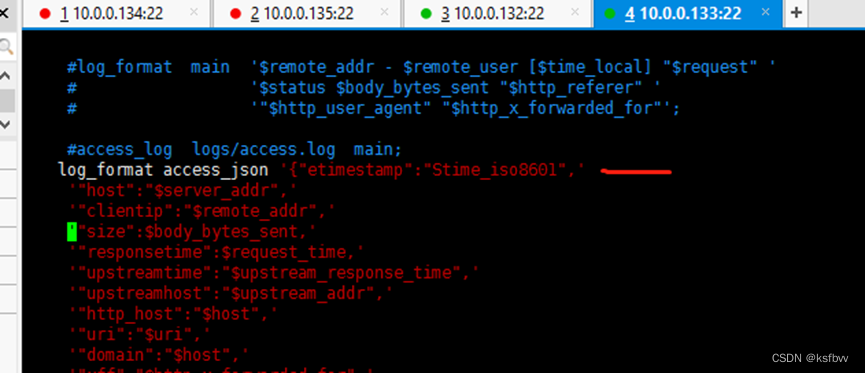

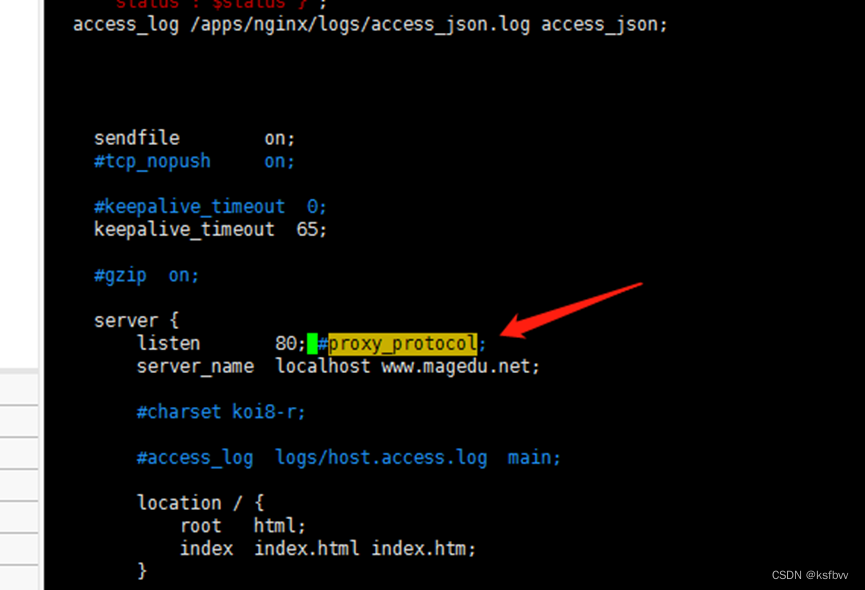

nginx.conf settings on web server 10.0.0.133:

vim /apps/nginx/conf/nginx.conf

log_format access_json '{"@timestamp":"$time_iso8601",'

'"host":"$server_addr",'

'"clientip":"$remote_addr",'

'"size":$body_bytes_sent,'

'"responsetime":$request_time,'

'"upstreamtime":"$upstream_response_time",'

'"upstreamhost":"$upstream_addr",'

'"http_host":"$host",'

'"uri":"$uri",'

'"domain":"$host",'

'"xff":"$http_x_forwarded_for",'

'"referer":"$http_referer",'

'"tcp_xff":"$proxy_protocol_addr",'

'"http_user_agent":"$http_user_agent",'

'"status":"$status"}';

access_log /apps/nginx/logs/access_json.log access_json;

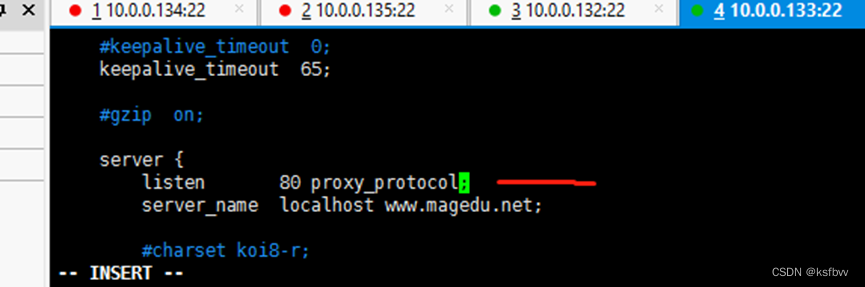

server{

listen 80 proxy_protocol;

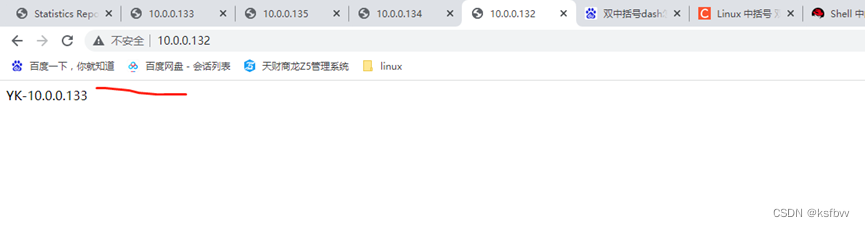

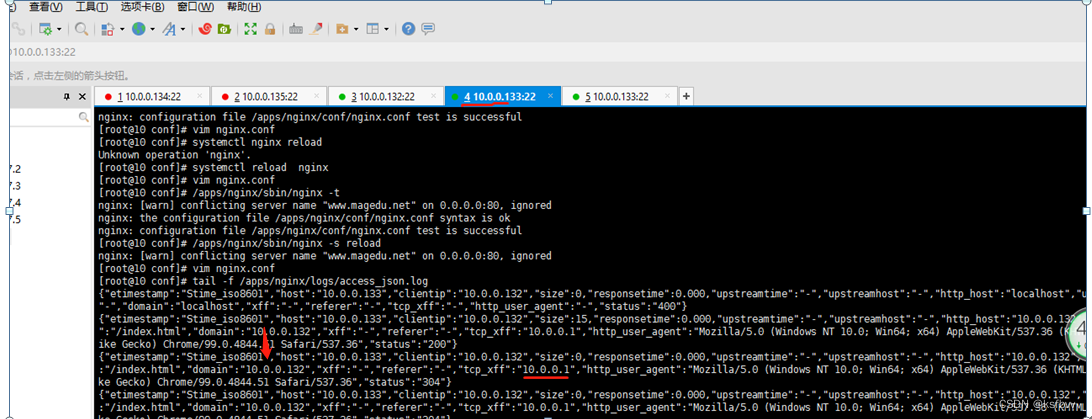

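Access the HAProxy server address in a browser

Reload the web server

[root@10 conf]# /apps/nginx/sbin/nginx -s reload

Check the web server log

tail -f /apps/nginx/logs/access_json.log

The web server now records the real client address (the tcp_xff field).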

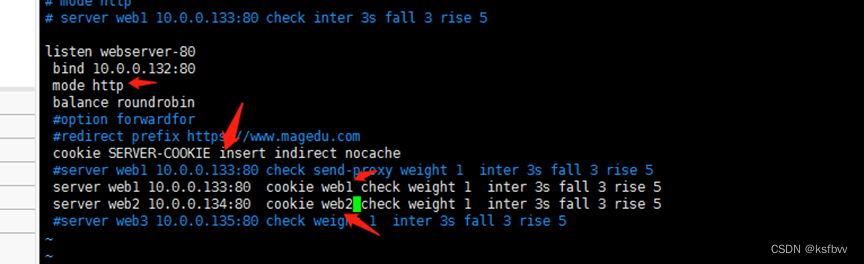

Cookie-based session persistence

Configuration on the HAProxy server (132):

vim /etc/haproxy/haproxy.cfg
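The edited section is not shown in the text, so here is a hedged sketch of a cookie-persistence listener. The cookie name WEBSRV and the per-server values web1/web2 are assumptions chosen for illustration; the backends 10.0.0.133 and 10.0.0.134 are the web servers used elsewhere in this post.

```
listen web_port
    bind 10.0.0.132:80
    mode http                    # cookie persistence needs HTTP mode
    log global
    balance roundrobin
    # insert a cookie named WEBSRV; its value identifies the chosen server
    cookie WEBSRV insert nocache indirect
    server web1 10.0.0.133:80 cookie web1 check inter 3000 fall 2 rise 5
    server web2 10.0.0.134:80 cookie web2 check inter 3000 fall 2 rise 5
```

A client that presents `WEBSRV=web1` keeps being routed to web1 for as long as that server is up and the cookie is kept.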

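On web server 133, comment out the settings added above so that nginx can be accessed normally again

Restart HAProxy, access 132 and inspect the cookie: requests keep going to 133. Unless the cookie is deleted, requests from this client continue to be sent to the same target host.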

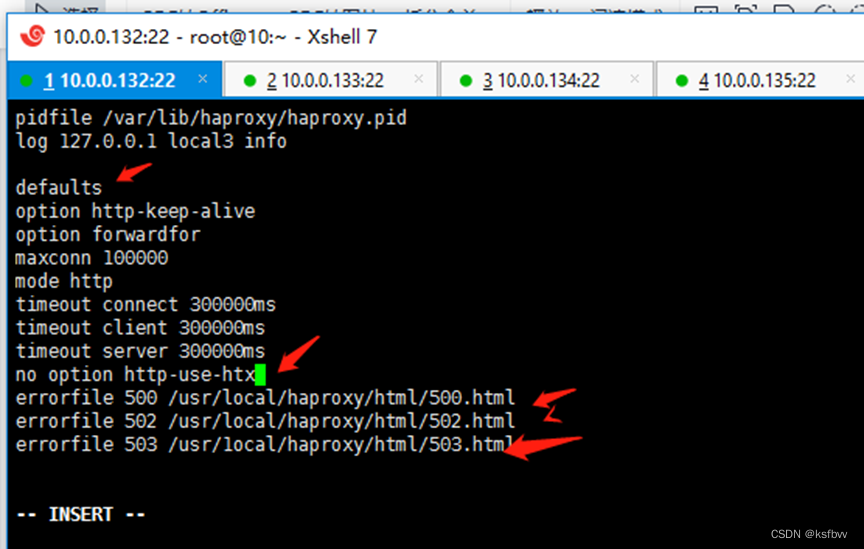

Lab: custom error pages and HTTPS

Edit the haproxy.cfg configuration file and insert the following in the defaults section:

errorfile 500 /usr/local/haproxy/html/500.html

errorfile 502 /usr/local/haproxy/html/502.html

errorfile 503 /usr/local/haproxy/html/503.html


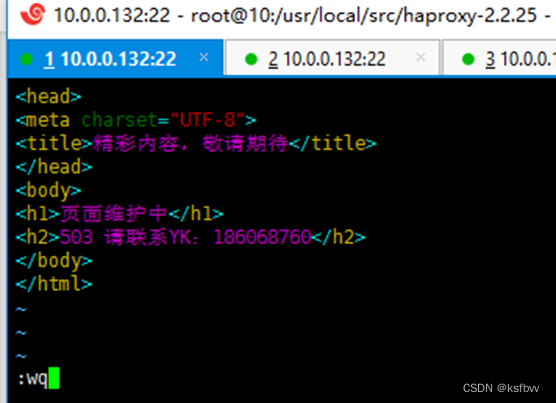

Create the error page file:

vim /usr/local/haproxy/html/503.html

File contents:

HTTP/1.1 503 Service Unavailable

Content-Type:text/html;charset=utf-8

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<title>Coming soon</title>

</head>

<body>

<h1>Page under maintenance</h1>

<h2>503 Please contact YK: 186068760</h2>

</body>

</html>

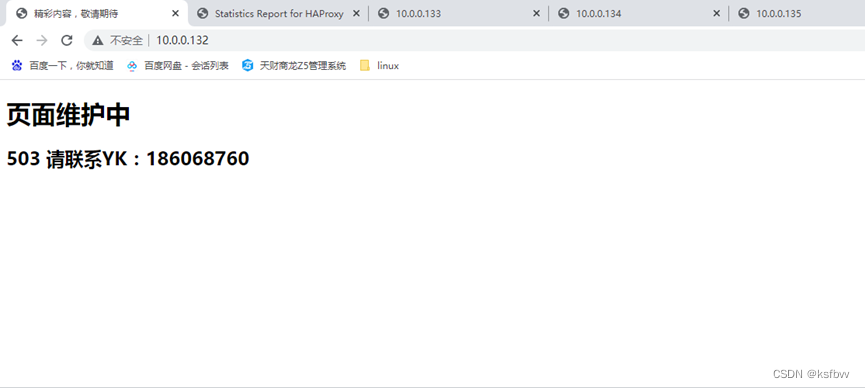

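Access the sorry-server address

OK. Errors during upgrades can all be pointed at this page; usually it is served by a dedicated error server with a public address.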

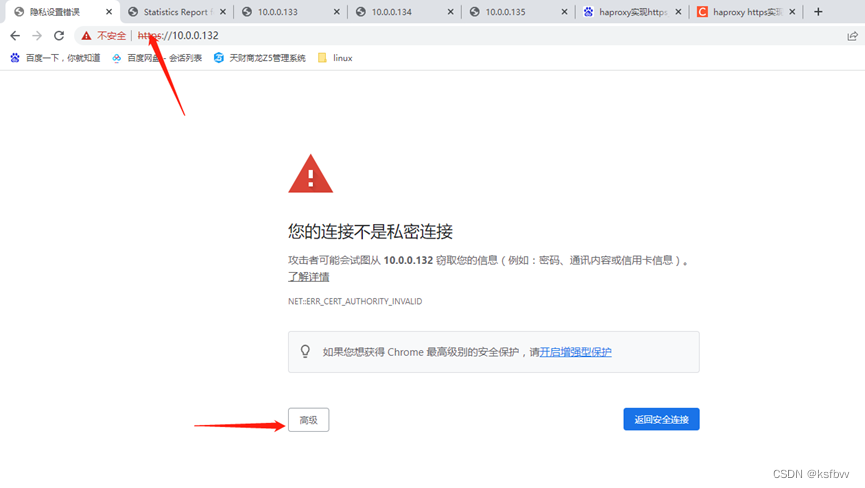

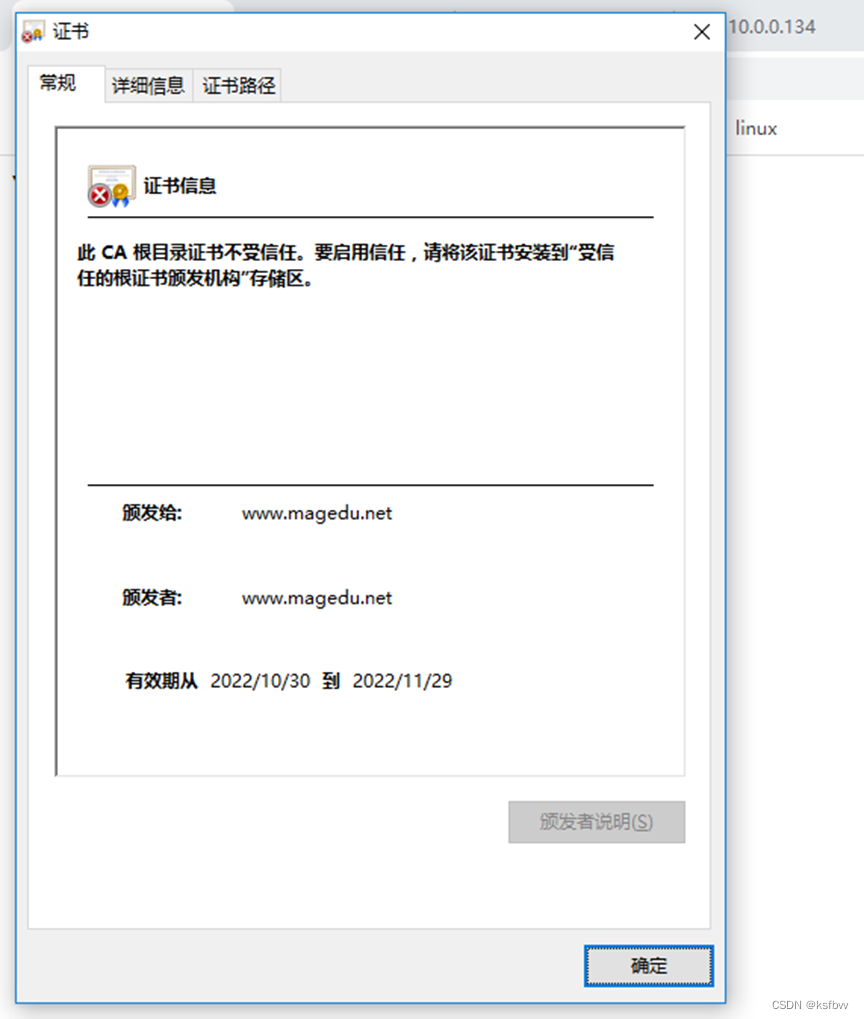

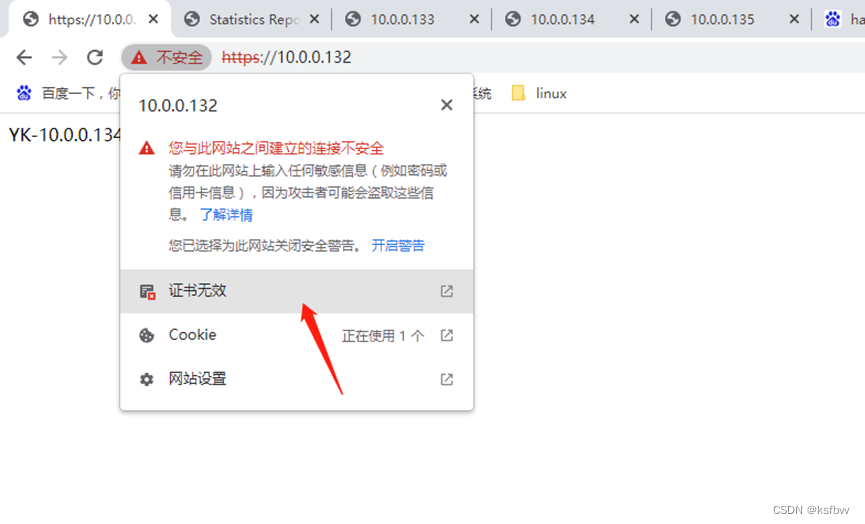

HTTPS with HAProxy:

Create the certificate:

[root@10 usr]# cd ..

[root@10 /]# cd /apps/

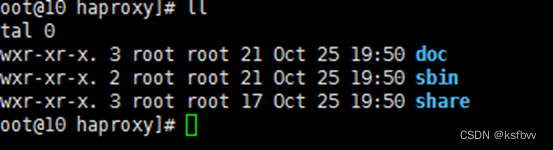

[root@10 apps]# ll

total 0

drwxr-xr-x. 5 root root 42 Oct 25 19:50 haproxy

[root@10 apps]# cd haproxy/

[root@10 haproxy]# ll

[root@10 haproxy]# mkdir certs

[root@10 haproxy]# cd certs/

[root@10 certs]# openssl genrsa -out haproxy.key 2048

[root@10 certs]# openssl req -new -x509 -key haproxy.key -out haproxy.crt -subj "/CN=www.magedu.net"

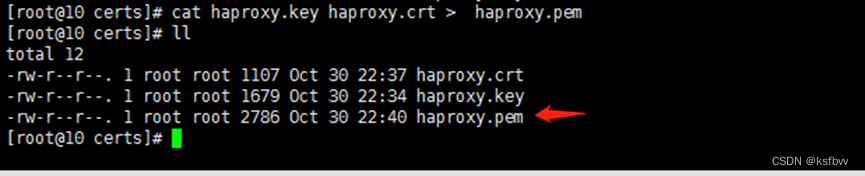

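[root@10 certs]# cat haproxy.key haproxy.crt > haproxy.pem # combine key and certificate into one PEM file

[root@10 certs]# openssl x509 -in haproxy.pem -noout -text # view the certificate's issuance details

[root@10 certs]# chmod 600 haproxy.* # tighten permissions

Start nginx on web servers 133 and 134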

[root@10 ~]# systemctl start nginx

[root@10 ~]# systemctl status nginx

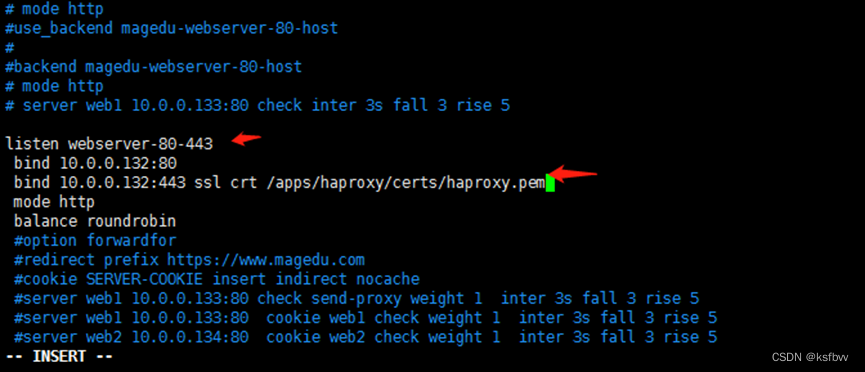

Add the HTTPS listener:
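The listener definition is missing from the text. The fragment below is a sketch under these assumptions: the combined PEM created above lives at /apps/haproxy/certs/haproxy.pem, HAProxy was built with OpenSSL support, and the listener name web_https is made up for illustration.

```
listen web_https
    bind 10.0.0.132:80
    bind 10.0.0.132:443 ssl crt /apps/haproxy/certs/haproxy.pem
    mode http
    log global
    # send plain-HTTP clients to HTTPS
    redirect scheme https if !{ ssl_fc }
    # tell the backends that the original request was encrypted
    http-request set-header X-Forwarded-Proto https if { ssl_fc }
    server web1 10.0.0.133:80 check inter 3000 fall 2 rise 5
    server web2 10.0.0.134:80 check inter 3000 fall 2 rise 5
```

TLS terminates on HAProxy in this layout, and traffic to the nginx backends stays plain HTTP.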

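keepalived: unicast, non-preemptive, multi-host high-availability IP, with email notification on failover.

keepalived unicast, non-preemptive, multi-host high-availability IP

Which machine serves traffic depends on where the VIP currently sits (the master), which is ultimately decided by priority

Install keepalived

yum install keepalived -y

systemctl start keepalived # start the service

keepalived failed to start the first time; starting it once more worked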

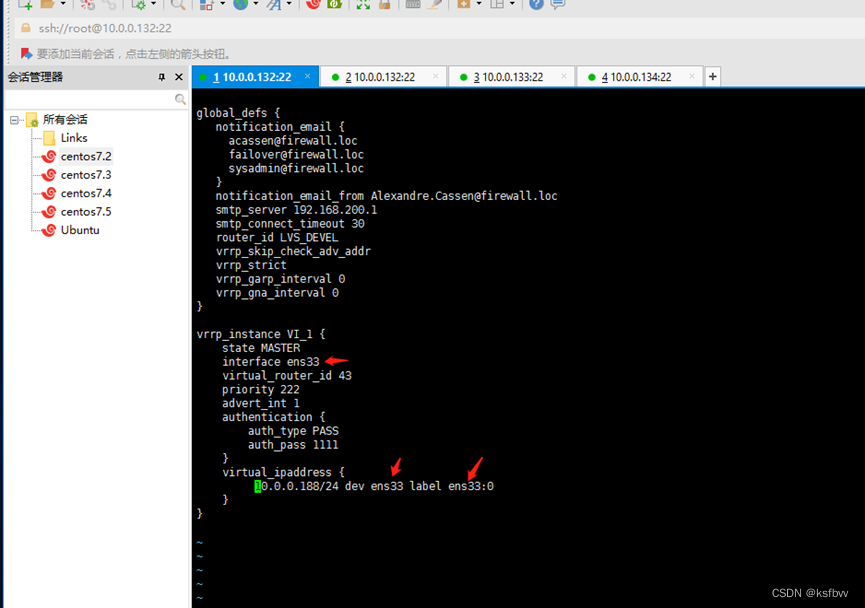

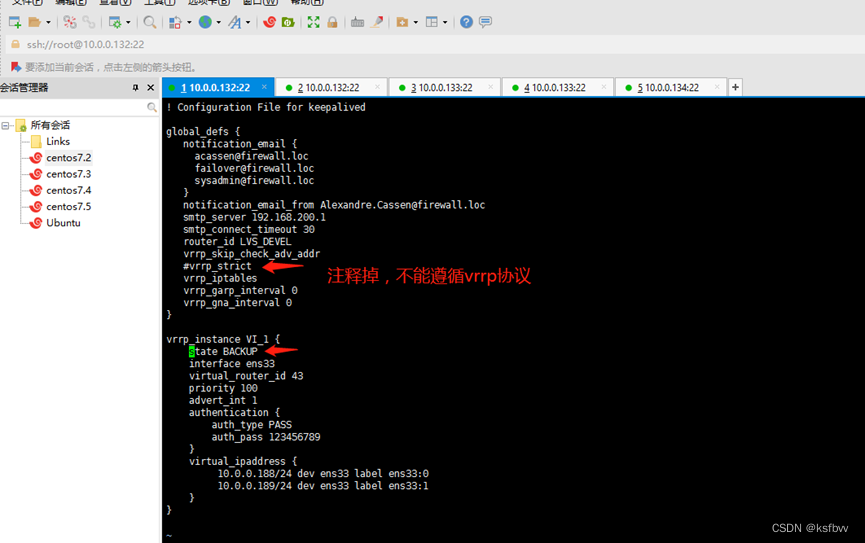

Non-preemptive setup: keepalived configuration on machines 132 and 133:

vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

notification_email_from Alexandre.Cassen@firewall.loc

smtp_server 192.168.200.1

smtp_connect_timeout 30

router_id LVS_DEVEL

vrrp_skip_check_adv_addr

vrrp_strict

vrrp_garp_interval 0

vrrp_gna_interval 0

}

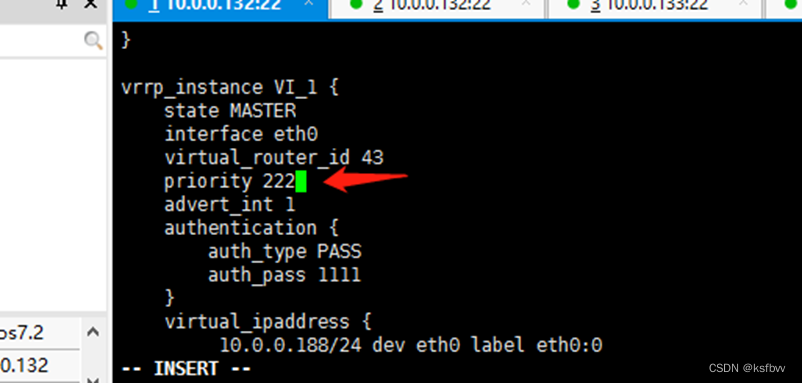

vrrp_instance VI_1 {

state MASTER

interface ens33

virtual_router_id 43

priority 222

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

10.0.0.188/24 dev ens33 label ens33:0

10.0.0.189/24 dev ens33 label ens33:1

}

}

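After restarting, an error is reported: Cant find interface eth0 for vrrp_instance VI_1 !!!

Fix: interface names differ between systems. Look up the name of your own interface (ens33 here) and bind the VIP to a sub-interface label on it.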

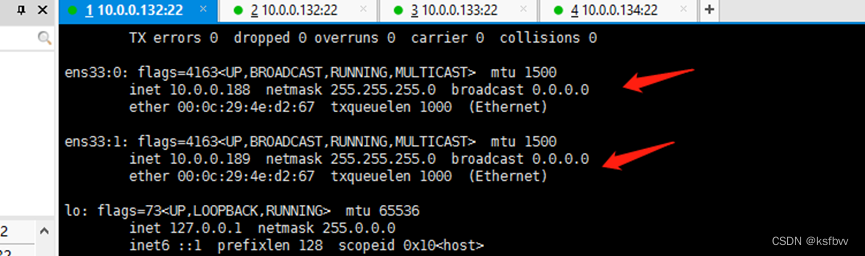

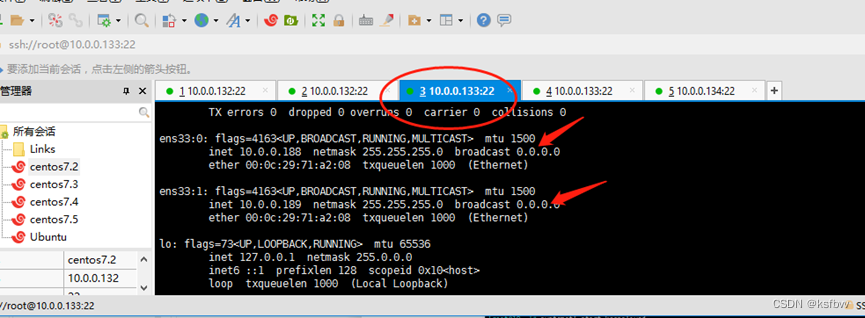

Restart keepalived; the VIP is now bound on machine 132

Priority setting (0-255)

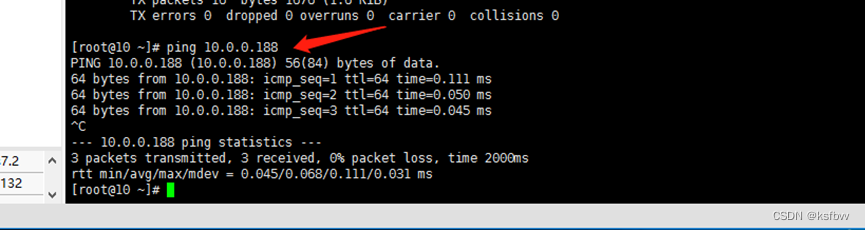

Test the VIP:

#### Remove the VRRP restriction (vrrp_strict):

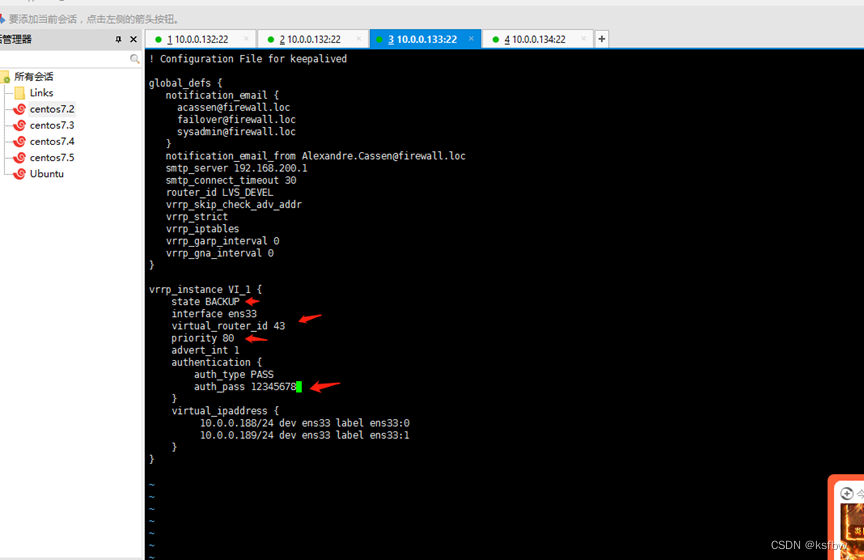

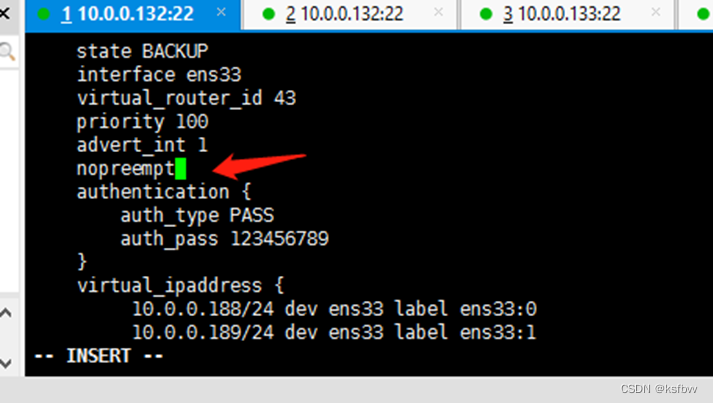

Configuration on machine 133:

#### On the higher-priority node: nopreempt # disables VIP preemption; requires state BACKUP on every server; on CentOS 7.9 add nopreempt on both master and backup
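Because the actual settings are only visible in the screenshots, the following is a sketch of a unicast, non-preemptive instance for machine 132, reusing the interface, router ID, and VIP from the configuration above. The peer priority mentioned in the comment is an assumption. Note that `vrrp_strict` in global_defs does not allow unicast peers, so it has to be commented out for this to take effect.

```
vrrp_instance VI_1 {
    state BACKUP                 # both nodes start as BACKUP when nopreempt is used
    nopreempt                    # do not take the VIP back after recovering
    interface ens33
    virtual_router_id 43
    priority 222                 # assumed: give the peer (133) a lower value
    advert_int 1
    unicast_src_ip 10.0.0.132    # this node's address
    unicast_peer {
        10.0.0.133               # the other node; swap the two addresses on 133
    }
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        10.0.0.188/24 dev ens33 label ens33:0
    }
}
```

On 133 the same block applies with `unicast_src_ip` and `unicast_peer` swapped.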

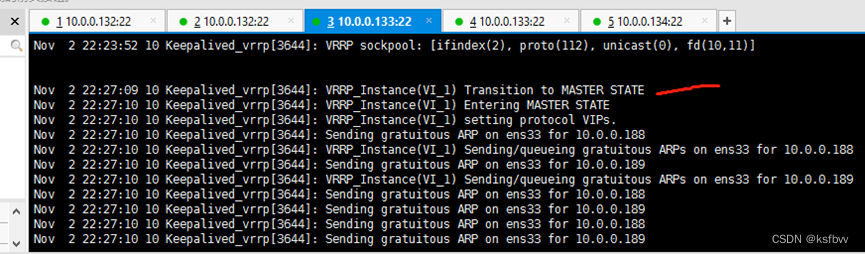

Restart the service, stop 132, and watch the log on 133

The VIP migrates to 133:

Restart keepalived on 132 and see whether it takes the VIP back.

It does not preempt. Done.

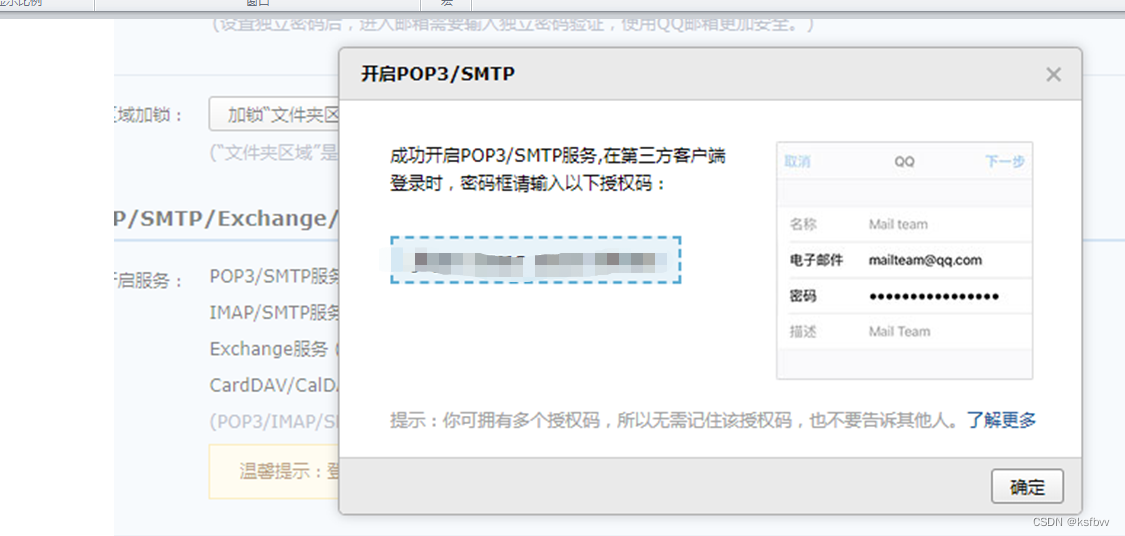

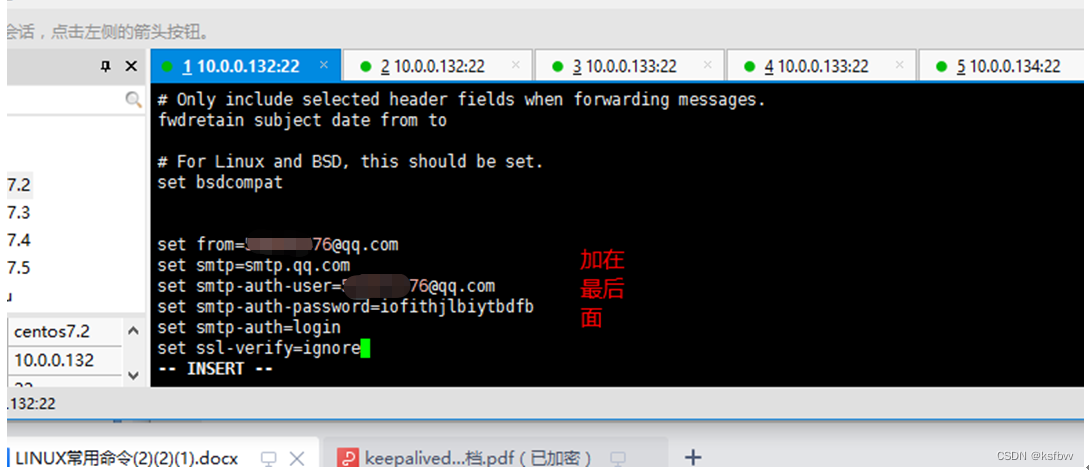

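Email notification:

yum install mailx -y

vim /etc/mail.rc

Enable the SMTP service in the mailbox settings:

Record the mailbox authorization code

Settings to add to /etc/mail.rc: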

set from=XXXXX@qq.com

set smtp=smtp.qq.com

set smtp-auth-user=XXXXXX@qq.com

set smtp-auth-password=iofithjlbiytbdfb

set smtp-auth=login

set ssl-verify=ignore

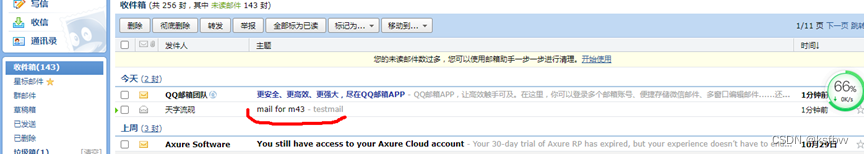

Send a test mail

echo "testmail" | mail -s "mail for m43" XXXXX@qq.com

Success

Configure the notification script

[root@10 etc]# cd keepalived/

[root@10 keepalived]# vim keepalived_notify.sh

#!/bin/bash

contact='568656476@qq.com'

notify(){

mailsubject="$(hostname) to be $1, VIP moved"

mailbody="$(date +'%F %T'):vrrp transition,$(hostname) changed to be $1"

echo "$mailbody" | mail -s "$mailsubject" "$contact"

}

case $1 in

master)

notify master

;;

backup)

notify backup

;;

fault)

notify fault

;;

*)

echo "Usage: $(basename "$0") {master|backup|fault}"

exit 1

;;

esac

Test the script

bash keepalived_notify.sh master

OK

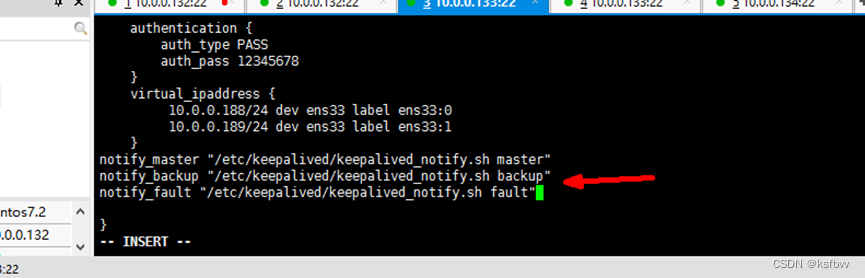

Call the script from keepalived

[root@10 keepalived]# vim keepalived.conf

notify_master "/etc/keepalived/keepalived_notify.sh master"

notify_backup "/etc/keepalived/keepalived_notify.sh backup"

notify_fault "/etc/keepalived/keepalived_notify.sh fault"

chmod a+x /etc/keepalived/keepalived_notify.sh # make the script executable

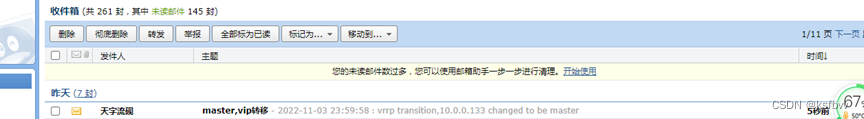

Stop keepalived on 132 to force a master switch, then check the mailbox: it works

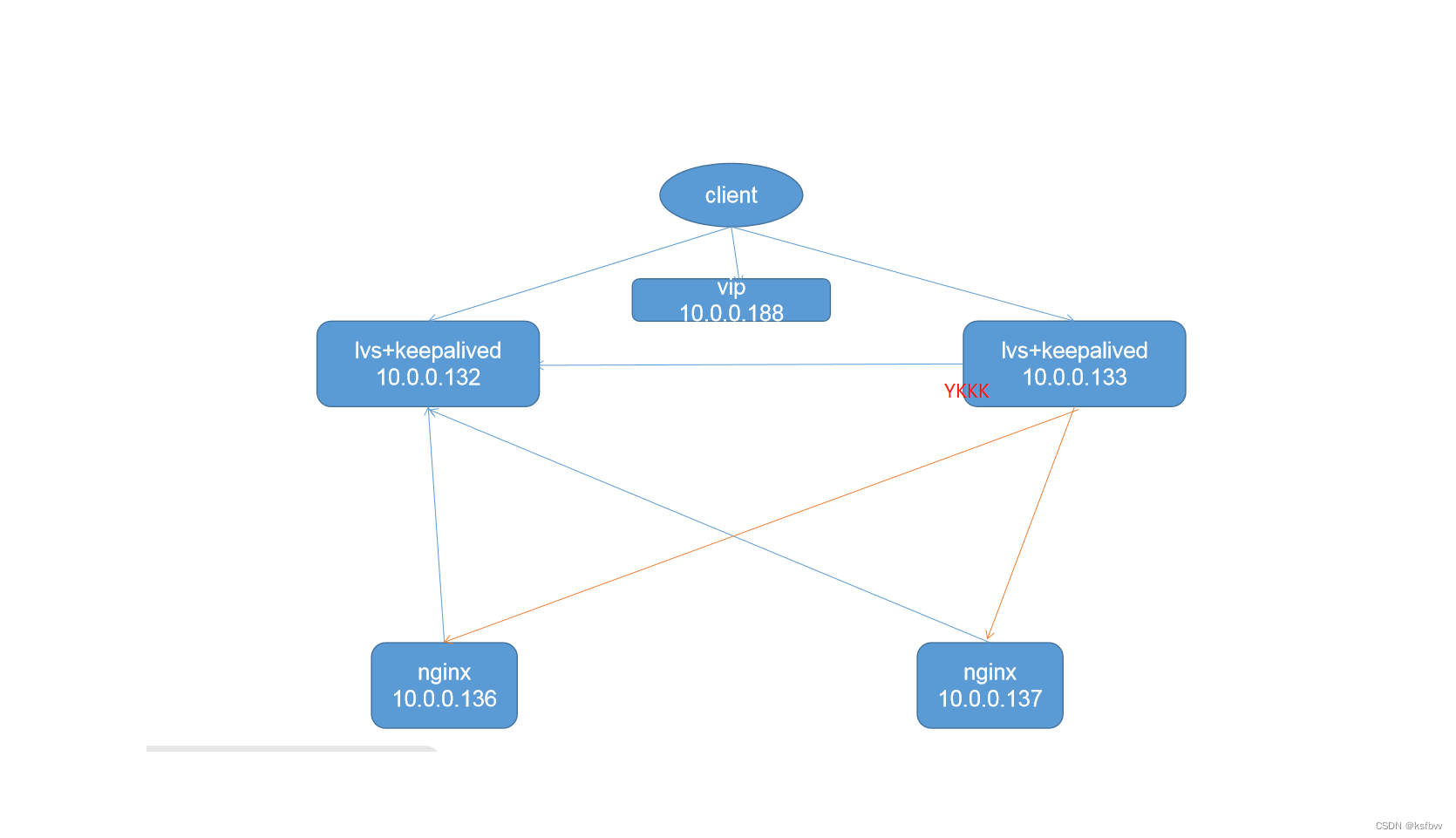

LVS + keepalived high-availability configuration

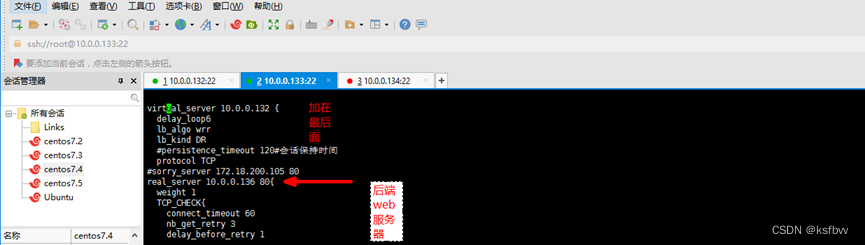

Configuration on machine 132:

vim /etc/keepalived/keepalived.conf

virtual_server 10.0.0.188 80 {

delay_loop 6

lb_algo wrr

lb_kind DR

#persistence_timeout 120 # session persistence time

protocol TCP

#sorry_server 172.18.200.105 80

real_server 10.0.0.136 80 {

weight 1

TCP_CHECK {

connect_timeout 60

nb_get_retry 3

delay_before_retry 1

connect_port 80

}

}

real_server 10.0.0.137 80 {

weight 1

TCP_CHECK {

connect_timeout 60

nb_get_retry 3

delay_before_retry 1

connect_port 80

}

}

}

重启keep

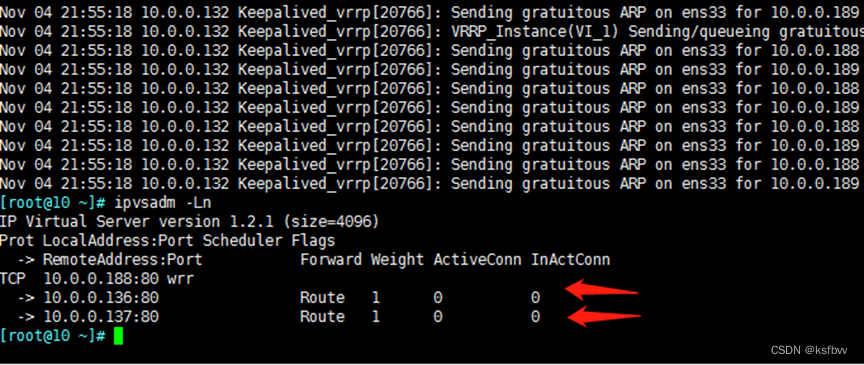

安装ipvsadm

yum install ipvsadm

ipvsadm -Ln #查看问服务器挂载成功

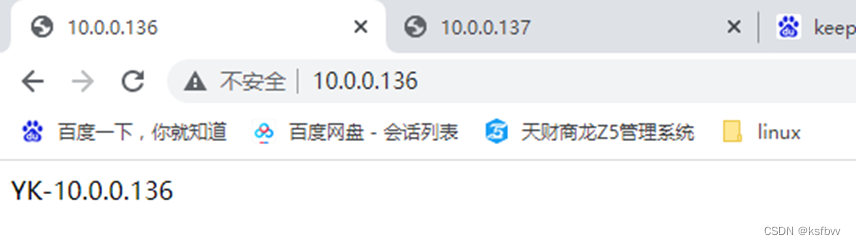

配置好后端环境:nginx并测试

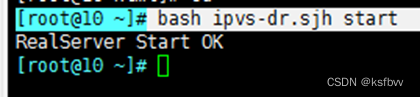

后端服务器绑定VIP:脚本:136上:

[root@10 html]# vim ipvs-dr.sjh

[root@10 html]# bash ipvs-dr.sjh start

#!/bin/bash

# LVS DR mode initialization script

#Zhang yk:2017-08-18

LVS_VIP=10.0.0.188

source /etc/rc.d/init.d/functions

case "$1" in

start)

/sbin/ifconfig lo:0 $LVS_VIP netmask 255.255.255.255 broadcast $LVS_VIP

/sbin/route add -host $LVS_VIP dev lo:0

echo "1" >/proc/sys/net/ipv4/conf/lo/arp_ignore

echo "2" >/proc/sys/net/ipv4/conf/lo/arp_announce

echo "1" >/proc/sys/net/ipv4/conf/all/arp_ignore

echo "2" >/proc/sys/net/ipv4/conf/all/arp_announce

sysctl -p >/dev/null 2>&1

echo "RealServer Start OK"

;;

stop)

/sbin/ifconfig lo:0 down

/sbin/route del $LVS_VIP >/dev/null 2>&1

echo "0" >/proc/sys/net/ipv4/conf/lo/arp_ignore

echo "0" >/proc/sys/net/ipv4/conf/lo/arp_announce

echo "0" >/proc/sys/net/ipv4/conf/all/arp_ignore

echo "0" >/proc/sys/net/ipv4/conf/all/arp_announce

echo "RealServer Stopped"

;;

*)

echo "Usage: $0 {start|stop}"

exit 1

esac

exit 0

Copy it to the neighboring web server 137

[root@10 html]# scp ipvs-dr.sjh 10.0.0.137:/root/

Run it on both machines:

[root@10 ~]# bash ipvs-dr.sjh start

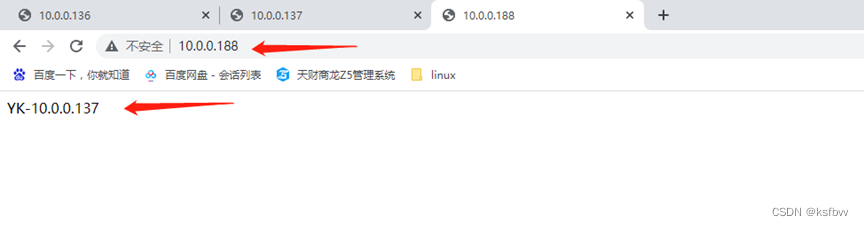

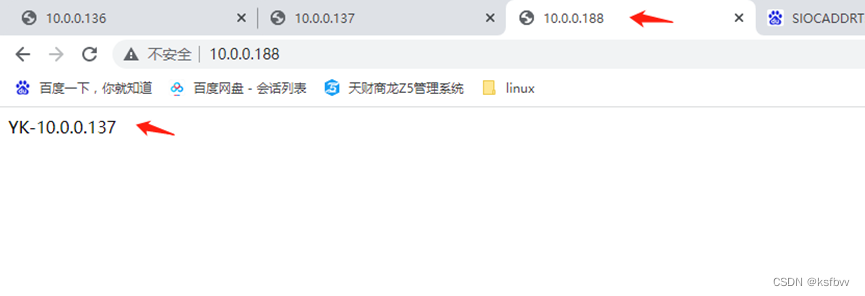

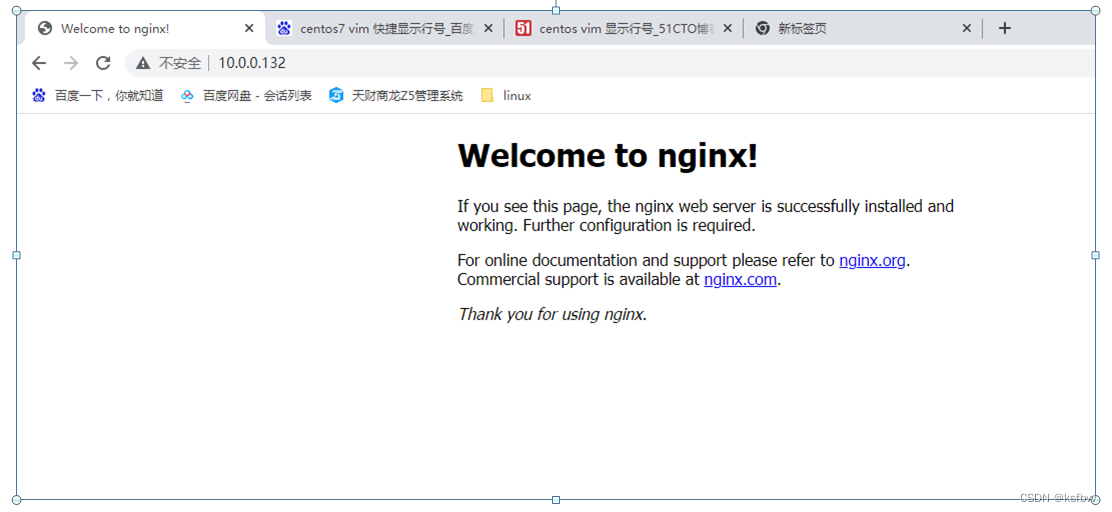

The backend nginx servers are reachable through the VIP: success. With machine 132 stopped, 188 is still reachable.

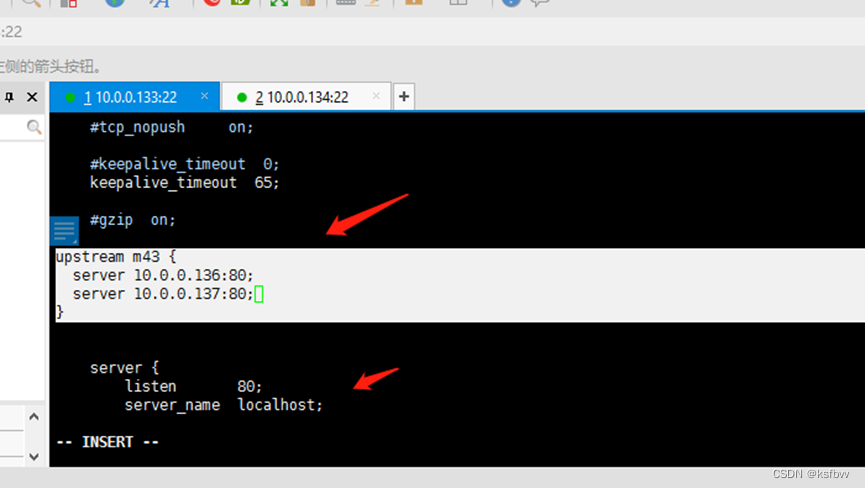

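LVS + nginx high-availability configuration

Environment preparation

Use 133 and 134, since nginx is already installed on them. Remove the LVS configuration from 133 and prepare the nginx environment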

[root@10 /]# cd usr/local/src

[root@10 src]# cd nginx-1.18.0/

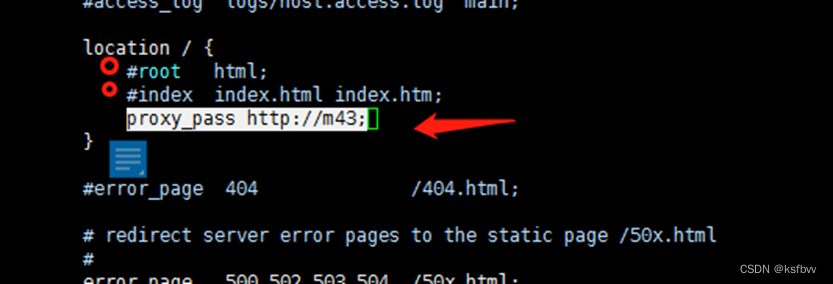

[root@10 nginx-1.18.0]# vim /apps/nginx/conf/nginx.conf

upstream m43 {

server 10.0.0.136:80;

server 10.0.0.137:80;

}

proxy_pass http://m43;

Health-check script:

vrrp_script chk_nginx {

script "/etc/keepalived/chk_nginx.sh"

interval 1

weight -80

fall 3

rise 5

timeout 2

}

track_script {

chk_nginx

}

Run the script

yum install psmisc -y

cat /etc/keepalived/chk_nginx.sh

chmod a+x /etc/keepalived/chk_nginx.sh

bash chk_nginx.sh
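The body of chk_nginx.sh is not shown above. A minimal sketch of what such a check might contain follows, assuming it only needs to report whether an nginx process exists; the function name `check_proc` is hypothetical. It relies on `killall` from the psmisc package installed above.

```shell
#!/bin/bash
# Hypothetical /etc/keepalived/chk_nginx.sh; the original script body is not shown.

# Succeeds when a process with the given name exists.
# "killall -0" sends no signal, it only tests for existence.
check_proc() {
    killall -0 "$1" >/dev/null 2>&1
}

# Run the check only when the file is executed under its own name, so the
# function can also be sourced and tried out separately.
# keepalived reads the exit status: 0 = healthy, non-zero = failed.
case "$0" in
    *chk_nginx.sh) check_proc nginx ;;
esac
```

After `fall` consecutive failures keepalived applies the `weight -80` penalty to the node's priority, which is what lets the peer take over the VIP.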

Access the backend servers:

With machine 132 shut down, the service is still reachable.
