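In the previous article, "Installing Kubernetes with yum on CentOS 7.2", I set up a Kubernetes environment on three servers. This article puts Pods, Replication Controllers (RC), and Services into practice. For the core Kubernetes concepts, the article "Understand Kubernetes Core Concepts in Ten Minutes" explains them thoroughly and illustrates them with animated GIFs, so I will not repeat them here.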

1. Pod

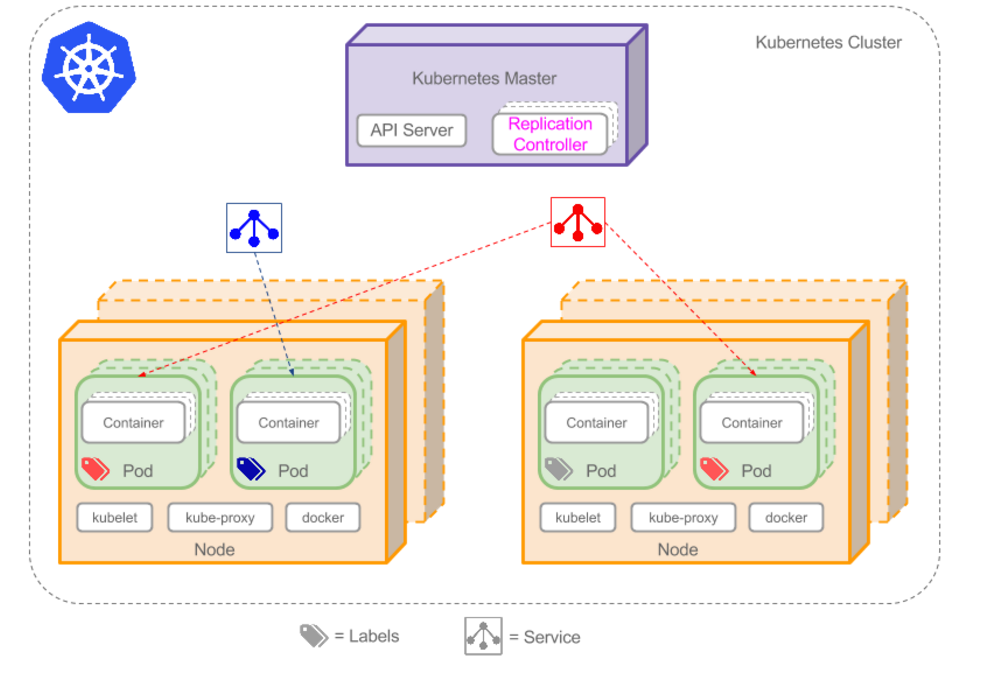

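The Pod is the most basic unit of operation in k8s. It contains one or more closely related containers, much like peas in a pod. In a containerized environment, a Pod can be regarded as an application-level "logical host". The containers in a Pod are usually tightly coupled. Pods are created, started, and destroyed on Nodes.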

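Why does k8s wrap containers in another layer, the Pod? One important reason is that communication between Docker containers is constrained by Docker's networking. In the Docker world, a container needs a link to reach a service (port) offered by another container, and maintaining links among a large number of containers is heavy work. By grouping multiple containers into one virtual "host", a Pod lets them communicate with each other simply through localhost.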

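The application containers in a Pod share the same set of resources:

(1) PID namespace: different applications in the Pod can see each other's process IDs (in current Kubernetes releases this requires shareProcessNamespace: true in the Pod spec).

(2) Network namespace: the containers in the Pod share the same IP address and port range.

(3) IPC namespace: the containers in the Pod can communicate using System V IPC or POSIX message queues.

(4) UTS namespace: the containers in the Pod share one hostname.

(5) Volumes (shared storage volumes): each container in the Pod can access the Volumes defined at the Pod level.

Running multiple instances of the same application inside one k8s Pod is not recommended. In other words, do not run two or more copies of the same image in one Pod: they easily cause port conflicts, and all containers in a Pod run on the same Node anyway.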
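As a minimal sketch of the shared network namespace described above, the following script writes a two-container Pod manifest in which one container reaches the other over localhost. The Pod name shared-net-demo, the file name, the container names, and the public nginx and busybox images are assumptions for illustration; they are not part of the setup used in this article.

```shell
# Write a hypothetical two-container Pod manifest (all names and images are illustrative).
cat > shared-net-demo.yaml <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: shared-net-demo
spec:
  containers:
    - name: web            # serves HTTP on port 80 inside the Pod
      image: nginx
      ports:
        - containerPort: 80
    - name: probe          # reaches "web" through localhost, no link needed
      image: busybox
      command: ["sh", "-c", "while true; do wget -q -O /dev/null http://localhost:80 && echo reachable; sleep 5; done"]
EOF
```

After kubectl create -f shared-net-demo.yaml, and once the Pod is Running, kubectl logs shared-net-demo -c probe should show the probe container reaching the web container through localhost.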

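1.1 Defining a Pod

A Pod is defined in a YAML or JSON configuration file. For the parameters that can appear in the YAML or JSON, see the official documentation at http://kubernetes.io/docs/user-guide/pods/multi-container/

Below is a Pod defined in YAML, in a file named php-pod.yaml. Its kind is Pod, and its spec mainly contains the definitions of the containers; multiple containers can be defined. The file is placed on the master.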

    apiVersion: v1
    kind: Pod
    metadata:
      name: php-test
      labels:
        name: php-test
    spec:
      containers:
        - name: php-test
          image: 192.168.174.131:5000/php-base:1.0
          env:
            - name: ENV_TEST_1
              value: env_test_1
            - name: ENV_TEST_2
              value: env_test_2
          ports:
            - containerPort: 80
              hostPort: 80

1.2 Creating the Pod with kubectl create

    [root@localhost k8s]# kubectl create -f ./php-pod.yaml
    Error from server: error when creating "./php-pod.yaml": pods "php-test" is forbidden: no API token found for service account default/default, retry after the token is automatically created and added to the service account
    [root@localhost k8s]#

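As a workaround on this test cluster, edit KUBE_ADMISSION_CONTROL in /etc/kubernetes/apiserver to remove ServiceAccount, then restart the API server and create the Pod again.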
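The edit described above can also be scripted. The helper below is a sketch, not part of the original procedure: the function name remove_service_account is hypothetical, and it assumes that ServiceAccount is preceded by a comma in the list, as it is in the file shown in this article.

```shell
# remove_service_account FILE: drop ServiceAccount from the admission-control list.
# Only the active KUBE_ADMISSION_CONTROL= line is changed; commented lines are left alone.
# A backup of the original file is kept as FILE.bak.
remove_service_account() {
  sed -i.bak '/^KUBE_ADMISSION_CONTROL=/s/,ServiceAccount//' "$1"
}
```

It would be used as remove_service_account /etc/kubernetes/apiserver, followed by systemctl restart kube-apiserver.service.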

    [root@localhost k8s]# vi /etc/kubernetes/apiserver

    # default admission control policies
    #KUBE_ADMISSION_CONTROL="--admission-control=NamespaceLifecycle,NamespaceExists,LimitRanger,SecurityContextDeny,ServiceAccount,ResourceQuota"
    KUBE_ADMISSION_CONTROL="--admission-control=NamespaceLifecycle,NamespaceExists,LimitRanger,SecurityContextDeny,ResourceQuota"

    [root@localhost k8s]# systemctl restart kube-apiserver.service

    [root@localhost k8s]# kubectl create -f ./php-pod.yaml
    pod "php-test" created

1.3 Finding which node the Pod was created on

    [root@localhost k8s]# kubectl get pods
    NAME       READY     STATUS    RESTARTS   AGE
    php-test   1/1       Running   0          3m
    [root@localhost k8s]# kubectl get pod php-test -o wide
    NAME       READY     STATUS    RESTARTS   AGE       NODE
    php-test   1/1       Running   0          3m        192.168.174.130
    [root@localhost k8s]#

On that node, docker ps -a lists the Pod's containers:

    [root@localhost ~]# docker ps -a
    CONTAINER ID  IMAGE                              COMMAND                 CREATED             STATUS             PORTS               NAMES
    9ca9a8d1bde1  192.168.174.131:5000/php-base:1.0  "/bin/sh -c '/usr/loc"  About a minute ago  Up About a minute                      k8s_php-test.ac88419d_php-test_default_173c9f54-a71c-11e6-a280-000c29066541_fab25c8c
    bec792435916  kubernetes/pause                   "/pause"                About a minute ago  Up About a minute  0.0.0.0:80->80/tcp  k8s_POD.b28ffa81_php-test_default_173c9f54-a71c-11e6-a280-000c29066541_e1c8ba7b
    [root@localhost ~]#

The php-base image includes an info.php page. Opening http://192.168.174.130/info.php in a browser shows that the container works, so the Pod is fine.
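The node lookup above can also be done without reading the table by eye. This is a small sketch that assumes a kubectl release supporting -o jsonpath; the function names pod_node and pod_ip are hypothetical helpers, not kubectl commands.

```shell
# pod_node NAME: print the node that the Pod was scheduled to.
pod_node() {
  kubectl get pod "$1" -o jsonpath='{.spec.nodeName}'
}

# pod_ip NAME: print the Pod's own IP address.
pod_ip() {
  kubectl get pod "$1" -o jsonpath='{.status.podIP}'
}
```

For the Pod in this article, pod_node php-test would be expected to print the node address that kubectl get pod php-test -o wide shows in the NODE column.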

To view the Pod's details, run kubectl describe pod php-test:

    [root@localhost k8s]# kubectl describe pod php-test
    Name:           php-test
    Namespace:      default
    Node:           192.168.174.130/192.168.174.130
    Start Time:     Thu, 10 Nov 2016 16:02:47 +0800
    Labels:         name=php-test
    Status:         Running
    IP:             172.17.42.2
    Controllers:    <none>
    Containers:
      php-test:
        Container ID:       docker://9ca9a8d1bde1e13da2e7ab47fc05331eb6a8c2b6566662576b742f98e2ec9609
        Image:              192.168.174.131:5000/php-base:1.0
        Image ID:           docker://sha256:104c7334b9624b054994856318e54b6d1de94c9747ab9f73cf25ae5c240a4de2
        Port:               80/TCP
        QoS Tier:
          cpu:              BestEffort
          memory:           BestEffort
        State:              Running
          Started:          Thu, 10 Nov 2016 16:03:04 +0800
        Ready:              True
        Restart Count:      0
        Environment Variables:
          ENV_TEST_1:       env_test_1
          ENV_TEST_2:       env_test_2
    Conditions:
      Type      Status
      Ready     True
    No volumes.
    Events:
      FirstSeen  LastSeen  Count  From                       SubobjectPath              Type     Reason             Message
      ---------  --------  -----  ----                       -------------              ----     ------             -------
      14m        14m       1      {default-scheduler }                                  Normal   Scheduled          Successfully assigned php-test to 192.168.174.130
      14m        14m       2      {kubelet 192.168.174.130}                             Warning  MissingClusterDNS  kubelet does not have ClusterDNS IP configured and cannot create Pod using "ClusterFirst" policy. Falling back to DNSDefault policy.
      14m        14m       1      {kubelet 192.168.174.130}  spec.containers{php-test}  Normal   Pulled             Container image "192.168.174.131:5000/php-base:1.0" already present on machine
      14m        14m       1      {kubelet 192.168.174.130}  spec.containers{php-test}  Normal   Created            Created container with docker id 9ca9a8d1bde1
      14m        14m       1      {kubelet 192.168.174.130}  spec.containers{php-test}  Normal   Started            Started container with docker id 9ca9a8d1bde1
    [root@localhost k8s]#

1.4 Testing stability

(1) On the node, stop the containers with docker stop $(docker ps -a -q). k8s automatically creates new containers.

    [root@localhost ~]# docker stop $(docker ps -a -q)
    9ca9a8d1bde1
    bec792435916
    [root@localhost ~]# docker ps -a
    CONTAINER ID  IMAGE                              COMMAND                 CREATED         STATUS                     PORTS               NAMES
    19aba2fc5300  192.168.174.131:5000/php-base:1.0  "/bin/sh -c '/usr/loc"  2 seconds ago   Up 1 seconds                                   k8s_php-test.ac88419d_php-test_default_173c9f54-a71c-11e6-a280-000c29066541_e32e07e1
    514903617a80  kubernetes/pause                   "/pause"                3 seconds ago   Up 2 seconds               0.0.0.0:80->80/tcp  k8s_POD.b28ffa81_php-test_default_173c9f54-a71c-11e6-a280-000c29066541_cac9bd60
    9ca9a8d1bde1  192.168.174.131:5000/php-base:1.0  "/bin/sh -c '/usr/loc"  19 minutes ago  Exited (137) 2 seconds ago                     k8s_php-test.ac88419d_php-test_default_173c9f54-a71c-11e6-a280-000c29066541_fab25c8c
    bec792435916  kubernetes/pause                   "/pause"                19 minutes ago  Exited (2) 2 seconds ago                       k8s_POD.b28ffa81_php-test_default_173c9f54-a71c-11e6-a280-000c29066541_e1c8ba7b
    [root@localhost ~]#

(2) Shut down the node server (the whole system)

Shut down only the node that hosts the php-test Pod, not every node. Then query the Pod from the master: the php-test Pod can no longer be retrieved.

    [root@localhost k8s]# kubectl get pods
    NAME       READY     STATUS        RESTARTS   AGE
    php-test   1/1       Terminating   1          25m
    [root@localhost k8s]# kubectl get pod php-test -o wide
    Error from server: pods "php-test" not found
    [root@localhost k8s]#

After the node is restarted, docker ps -a shows no containers at all, and kubectl get pods on the master shows no Pods either. So if a node goes down, its Pod is destroyed and is not recreated automatically on another node. An RC solves this problem; see the RC section below.

1.5 Deleting a Pod

kubectl delete pod NAME, for example kubectl delete pod php-test

2. RC (Replication Controller)

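A Replication Controller ensures that the specified number of Pod replicas is running in the Kubernetes cluster at all times. If there are fewer replicas than specified, the Replication Controller starts new Pods; if there are more, it kills the extras to keep the count constant. The Replication Controller creates Pods from a predefined Pod template. Once a Pod is created, it has no further link to the template: you can modify the template without affecting Pods that already exist, and you can also directly update Pods that were created through the Replication Controller. The Replication Controller is associated with the Pods it created through a label selector, so changing a Pod's labels so that they no longer match the selector removes that Pod from the controller's management.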
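The label-selector relationship described above can be explored with two small helpers. This is a sketch: rc_pods and detach_pod are hypothetical function names wrapping kubectl get pods -l and kubectl label --overwrite, and the label value php-debug in the usage note is made up for illustration.

```shell
# rc_pods SELECTOR: list the Pods whose labels match a selector such as name=php-test-pod.
rc_pods() {
  kubectl get pods -l "$1" -o wide
}

# detach_pod POD KEY=VALUE: overwrite a label so the Pod no longer matches the selector.
# The Pod keeps running; the RC is expected to start a replacement to restore the count.
detach_pod() {
  kubectl label pod "$1" "$2" --overwrite
}
```

With the RC defined in section 2.1, whose selector is name=php-test-pod, rc_pods name=php-test-pod lists its Pods, and detach_pod followed by one of those Pod names and name=php-debug takes that Pod out of the RC's control.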

2.1 Defining a ReplicationController

On the master, create the file php-controller.yaml. To avoid port conflicts when the RC places several of its Pods on the same node, the file does not specify hostPort. replicas sets the number of Pods.

    apiVersion: v1
    kind: ReplicationController
    metadata:
      name: php-controller
      labels:
        name: php-controller
    spec:
      replicas: 2
      selector:
        name: php-test-pod
      template:
        metadata:
          labels:
            name: php-test-pod
        spec:
          containers:
            - name: php-test
              image: 192.168.174.131:5000/php-base:1.0
              env:
                - name: ENV_TEST_1
                  value: env_test_1
                - name: ENV_TEST_2
                  value: env_test_2
              ports:
                - containerPort: 80

2.2 Creating the RC

    [root@localhost k8s]# kubectl create -f php-controller.yaml
    replicationcontroller "php-controller" created

2.3 Querying

    [root@localhost k8s]# kubectl get rc
    NAME             DESIRED   CURRENT   AGE
    php-controller   2         2         1m
    [root@localhost k8s]# kubectl get rc php-controller
    NAME             DESIRED   CURRENT   AGE
    php-controller   2         2         3m
    [root@localhost k8s]# kubectl describe rc php-controller
    Name:           php-controller
    Namespace:      default
    Image(s):       192.168.174.131:5000/php-base:1.0
    Selector:       name=php-test-pod
    Labels:         name=php-controller
    Replicas:       2 current / 2 desired
    Pods Status:    2 Running / 0 Waiting / 0 Succeeded / 0 Failed
    No volumes.
    Events:
      FirstSeen  LastSeen  Count  From                       SubobjectPath  Type    Reason            Message
      ---------  --------  -----  ----                       -------------  ----    ------            -------
      3m         3m        1      {replication-controller }                 Normal  SuccessfulCreate  Created pod: php-controller-8x5wp
      3m         3m        1      {replication-controller }                 Normal  SuccessfulCreate  Created pod: php-controller-ynzl7
    [root@localhost k8s]#

    [root@localhost k8s]# kubectl get pods -o wide
    NAME                   READY     STATUS    RESTARTS   AGE       NODE
    php-controller-8x5wp   1/1       Running   0          5m        192.168.174.131
    php-controller-ynzl7   1/1       Running   0          5m        192.168.174.130
    [root@localhost k8s]#

Pods were created on both node servers, 131 and 130.

2.4 Changing the number of replicas

In the file, the number of Pod replicas is controlled by replicas. Kubernetes also lets you change the number of Pods dynamically with the kubectl scale command.

(1) Change replicas to 3

    [root@localhost k8s]# kubectl scale rc php-controller --replicas=3
    replicationcontroller "php-controller" scaled
    [root@localhost k8s]# kubectl get pods -o wide
    NAME                   READY     STATUS    RESTARTS   AGE       NODE
    php-controller-0gkhx   1/1       Running   0          10s       192.168.174.131
    php-controller-8x5wp   1/1       Running   0          11m       192.168.174.131
    php-controller-ynzl7   1/1       Running   0          11m       192.168.174.130
    [root@localhost k8s]#

One more Pod has appeared on the 131 server.

(2) Change replicas to 1

    [root@localhost k8s]# kubectl scale rc php-controller --replicas=1
    replicationcontroller "php-controller" scaled
    [root@localhost k8s]# kubectl get pods -o wide
    NAME                   READY     STATUS        RESTARTS   AGE       NODE
    php-controller-0gkhx   1/1       Terminating   0          2m        192.168.174.131
    php-controller-8x5wp   1/1       Running       0          12m       192.168.174.131
    php-controller-ynzl7   1/1       Terminating   0          12m       192.168.174.130

Two of the Pods are now in the Terminating state.

(3) Deleting with docker rm

After a container is removed with docker rm, a new container is started automatically a short while later, the same behavior as in the Pod test above.
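The scaling steps in (1) and (2) can be made a little safer with the --current-replicas precondition of kubectl scale, which applies the change only when the RC currently has the expected size. This is a sketch; scale_rc is a hypothetical wrapper name.

```shell
# scale_rc NAME FROM TO: scale the RC to TO replicas only if it currently has FROM replicas.
scale_rc() {
  kubectl scale rc "$1" --current-replicas="$2" --replicas="$3"
}
```

For example, scale_rc php-controller 2 3 corresponds to step (1) above, and it should refuse to act if someone else has already changed the replica count.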

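2.5 Deleting

Setting replicas=0 deletes all Pods under the RC. kubectl also provides the stop and delete commands, which delete an RC together with all the Pods it controls in one step. Note that deleting only the RC object itself, without cascading, does not affect Pods that were already created.

kubectl delete rc rcName --cascade=false deletes the RC and leaves its Pods untouched (without that flag, kubectl cascades the deletion to the Pods by default).

kubectl delete -f rcfile (for example, [root@localhost k8s]# kubectl delete -f php-controller.yaml) deletes the RC and all Pods under it.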

3. Service

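Although each Pod is assigned its own IP address, that address disappears when the Pod is destroyed. This raises a question: if a group of Pods forms a cluster to provide a service, how do clients access them?

The Kubernetes Service is the core concept that solves this problem.

A Service can be seen as the external access interface to a group of Pods that provide the same service. Which Pods a Service applies to is defined by a Label Selector.

Take the example above again: the php-test Pod runs as 2 replicas. The two Pods are indistinguishable to a front-end program, so the front end does not care which back-end replica serves it. And when the back-end php-test Pods change (for example, the replica count changes, or a node goes down and the Pod is recreated on another node), the front end does not need to track those changes. The Service is the abstraction that provides this decoupling.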

3.1 Defining a Service

The RC from the example above and all of its Pods have been deleted. A Service and an RC can be created in either order; however, if a Pod is created before the Service, some of the Service's information (for example, the environment variables that Kubernetes injects for existing Services) is not written into that Pod.

    [root@localhost k8s]# ls
    php-controller.yaml  php-pod.yaml  php-service.yaml
    [root@localhost k8s]# kubectl get rc
    [root@localhost k8s]# kubectl get pods
    [root@localhost k8s]# kubectl create -f php-controller.yaml
    replicationcontroller "php-controller" created
    [root@localhost k8s]# kubectl get rc
    NAME             DESIRED   CURRENT   AGE
    php-controller   2         2         11s
    [root@localhost k8s]# kubectl get pods -o wide
    NAME                   READY     STATUS    RESTARTS   AGE       NODE
    php-controller-cntom   1/1       Running   0          28s       192.168.174.131
    php-controller-kn55k   1/1       Running   0          28s       192.168.174.130
    [root@localhost k8s]#

php-service.yaml

    apiVersion: v1
    kind: Service
    metadata:
      name: php-service
      labels:
        name: php-service
    spec:
      ports:
        - port: 8081
          targetPort: 80
          protocol: TCP
      selector:
        name: php-test-pod

Create the Service and query it:

    [root@localhost k8s]# kubectl create -f php-service.yaml
    service "php-service" created
    [root@localhost k8s]# kubectl get service
    NAME          CLUSTER-IP       EXTERNAL-IP   PORT(S)    AGE
    kubernetes    10.254.0.1       <none>        443/TCP    6d
    php-service   10.254.165.216   <none>        8081/TCP   29s
    [root@localhost k8s]# kubectl get services
    NAME          CLUSTER-IP       EXTERNAL-IP   PORT(S)    AGE
    kubernetes    10.254.0.1       <none>        443/TCP    6d
    php-service   10.254.165.216   <none>        8081/TCP   1m
    [root@localhost k8s]# kubectl get endpoints
    NAME          ENDPOINTS                       AGE
    kubernetes    192.168.174.128:6443            6d
    php-service   172.17.42.2:80,172.17.65.3:80   1m
    [root@localhost k8s]#

kubectl get endpoints shows the container addresses of the two Pods that php-service tracks: 172.17.42.2:80 and 172.17.65.3:80. These addresses are reachable only from the internal network (on the nodes, where flannel is installed).

    [root@localhost k8s]# kubectl get service
    NAME          CLUSTER-IP       EXTERNAL-IP   PORT(S)    AGE
    kubernetes    10.254.0.1       <none>        443/TCP    6d
    php-service   10.254.165.216   <none>        8081/TCP   17m
    [root@localhost k8s]#

3.2 Pod IP addresses and the Service's Cluster IP address

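The IP of php-service is 10.254.165.216. This is the Service's Cluster IP address, a virtual IP address inside the k8s system that is assigned dynamically by the system. A Pod's IP address, in contrast, is assigned by the Docker daemon from the address range of the docker0 bridge. Compared with Pod IP addresses, a Service's Cluster IP is stable: the Service receives an IP address when it is created, and that address does not change until the Service is destroyed. Pods have a shorter life cycle in a k8s cluster; they may be destroyed and recreated by the ReplicationController, and a newly created Pod is assigned a new IP address.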
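One way to observe the difference between the stable Cluster IP and the changing Pod IPs is to print both before and after a Pod is recreated. The helpers below are a sketch that assumes a kubectl release supporting -o jsonpath; service_cluster_ip and service_endpoint_ips are hypothetical function names.

```shell
# service_cluster_ip NAME: print the Service's stable virtual IP.
service_cluster_ip() {
  kubectl get service "$1" -o jsonpath='{.spec.clusterIP}'
}

# service_endpoint_ips NAME: print the Pod IPs currently behind the Service.
service_endpoint_ips() {
  kubectl get endpoints "$1" -o jsonpath='{.subsets[*].addresses[*].ip}'
}
```

Running both for php-service, deleting one of the php-controller Pods, and running them again should show the same Cluster IP but a different set of endpoint IPs once the RC has created the replacement Pod.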

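3.3 Accessing a Service from outside

The IP that a Service receives from the Cluster IP range is reachable only from inside the cluster, so other Pods can access it without trouble. But if the Service is a front-end service meant to serve clients outside the cluster, we need to give it a publicly reachable address.

k8s supports two Service types for exposing a service externally: NodePort and LoadBalancer.

3.3.1 NodePort

When a Service is defined with spec.type=NodePort and a value for spec.ports.nodePort, the system opens a real host port on every node in the k8s cluster. Any client that can reach a Node can then access the internal Service through that port.

php-nodePort-service.yaml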

    apiVersion: v1
    kind: Service
    metadata:
      name: php-nodeport-service
      labels:
        name: php-nodeport-service
    spec:
      type: NodePort
      ports:
        - port: 8081
          targetPort: 80
          protocol: TCP
          nodePort: 30001
      selector:
        name: php-test-pod

Delete the earlier php-service, then create the NodePort Service. The first attempt below was made with metadata.name set to php-nodePort-service and was rejected because of the uppercase letter; after the name was changed to php-nodeport-service, as in the file above, creation succeeded.

    [root@localhost k8s]# kubectl delete service php-service
    service "php-service" deleted
    [root@localhost k8s]# kubectl create -f php-nodePort-service.yaml
    The Service "php-nodePort-service" is invalid.
    metadata.name: Invalid value: "php-nodePort-service": must be a DNS 952 label (at most 24 characters, matching regex [a-z]([-a-z0-9]*[a-z0-9])?): e.g. "my-name"
    [root@localhost k8s]# kubectl create -f php-nodePort-service.yaml
    You have exposed your service on an external port on all nodes in your
    cluster. If you want to expose this service to the external internet, you may
    need to set up firewall rules for the service port(s) (tcp:30001) to serve traffic.
    See http://releases.k8s.io/release-1.2/docs/user-guide/services-firewalls.md for more details.
    service "php-nodeport-service" created
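Once the NodePort Service exists, the port it opened and the resulting per-node URLs can be derived on the command line. This is a sketch: service_node_port and node_port_urls are hypothetical helper names, and the jsonpath query assumes the Service has a single port entry, as in the file above.

```shell
# service_node_port NAME: print the node port of the Service's first port entry.
service_node_port() {
  kubectl get service "$1" -o jsonpath='{.spec.ports[0].nodePort}'
}

# node_port_urls PORT URL_PATH NODE...: print one URL per node for the given port.
node_port_urls() {
  port=$1
  url_path=$2
  shift 2
  for node in "$@"; do
    echo "http://${node}:${port}${url_path}"
  done
}
```

For the cluster in this article, node_port_urls "$(service_node_port php-nodeport-service)" /info.php 192.168.174.130 192.168.174.131 prints one URL per node, each of which can then be opened in a browser or fetched with curl.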

    [root@localhost k8s]# kubectl get pods -o wide
    NAME                   READY     STATUS    RESTARTS   AGE       NODE
    php-controller-2bvdq   1/1       Running   0          21m       192.168.174.130
    php-controller-42muy   1/1       Running   0          21m       192.168.174.131

We can now access the service at http://192.168.174.130:30001/info.php or http://192.168.174.131:30001/info.php.

3.3.2 LoadBalancer

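If the cloud provider supports an external load balancer, the Service can be defined with spec.type=LoadBalancer, together with the load balancer's IP address. This type also requires the Service's nodePort and clusterIP to be specified.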

    apiVersion: v1
    kind: Service
    metadata:
      name: php-loadbalancer-service
      labels:
        name: php-loadbalancer-service
    spec:
      type: LoadBalancer
      clusterIP: 10.254.165.216
      selector:
        name: php-test-pod
      ports:
        - port: 8081
          targetPort: 80
          protocol: TCP
          nodePort: 30001
    status:
      loadBalancer:
        ingress:
          - ip: 192.168.174.127   # note: this is the load balancer's IP address

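The 192.168.174.127 set in status.loadBalancer.ingress.ip is the IP address of the load balancer supplied by the cloud provider. Requests to the Service are then forwarded by the load balancer to the back-end Pods; how the load is distributed depends on the cloud provider's load balancer implementation.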
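To read back the load balancer address discussed above from a running cluster, the status field can be queried directly. This is a sketch assuming a kubectl release that supports -o jsonpath; service_lb_ip is a hypothetical helper name, and it prints nothing until the provider has filled in the status.

```shell
# service_lb_ip NAME: print the first load balancer IP reported in the Service's status.
service_lb_ip() {
  kubectl get service "$1" -o jsonpath='{.status.loadBalancer.ingress[0].ip}'
}
```

For the Service above, the call would be service_lb_ip php-loadbalancer-service.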

3.4 Port definitions

When a Pod exposes more than one port, give each port a name so that the Endpoints are not ambiguous.

      selector:
        name: php-test-pod
      ports:
        - name: p1
          port: 8081
          targetPort: 80
          protocol: TCP
        - name: p2
          port: 8082
          targetPort: 22
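A related option is to let targetPort refer to a container port by name instead of by number. The script below writes such a manifest as a sketch. The Service name php-multiport-service and the container port names http and ssh are assumptions: the Pod template in this article does not name its ports, so it would first need matching name entries under ports for this to resolve.

```shell
# Write a hypothetical multi-port Service whose targetPort values are container port names.
cat > php-multiport-service.yaml <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: php-multiport-service
spec:
  selector:
    name: php-test-pod
  ports:
    - name: p1
      port: 8081
      targetPort: http   # resolved against the container port named "http"
      protocol: TCP
    - name: p2
      port: 8082
      targetPort: ssh    # resolved against the container port named "ssh"
      protocol: TCP
EOF
```

With named target ports, the container port numbers can change in the Pod template without the Service definition having to change.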
