Our command is: `bin/nutch crawl url -dir data` (here `url` is the directory of seed URLs and `data` is the crawl output directory).

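Execution first enters the `main` method of Crawl.java:

1. `NutchConfiguration.create()` loads the two configuration files nutch-default.xml and nutch-site.xml into `conf`.

2. The `run` method is then called: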

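```java
public static void main(String[] args) throws Exception {
  // Loads nutch-default.xml and nutch-site.xml into a Hadoop Configuration
  Configuration conf = NutchConfiguration.create();
  // ToolRunner strips the generic Hadoop options, then calls Crawl.run() with the rest
  int res = ToolRunner.run(conf, new Crawl(), args);
  System.exit(res);
}
```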
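The loading order matters: when the same property appears in both files, the value from nutch-site.xml wins, because Hadoop's `Configuration` gives resources added later precedence over earlier ones. The sketch below is a hypothetical model of that rule using `java.util.Properties`; the class and method names are invented for illustration, the property values are examples only, and it ignores Hadoop features such as `<final>` properties and `${var}` expansion.

```java
import java.util.Properties;

// Hypothetical sketch: models "later resource overrides earlier" with
// java.util.Properties. This is NOT Hadoop's Configuration API.
class LayeredConfigSketch {

    // Build a lookup in which site values shadow default values.
    static Properties layer(Properties defaults, Properties site) {
        Properties merged = new Properties();
        merged.putAll(defaults); // loaded first, like nutch-default.xml
        merged.putAll(site);     // loaded second, like nutch-site.xml, so it wins
        return merged;
    }

    // Same shape as getConf().getInt(name, fallback) used in run().
    static int getInt(Properties conf, String name, int fallback) {
        String v = conf.getProperty(name);
        return v == null ? fallback : Integer.parseInt(v.trim());
    }

    public static void main(String[] args) {
        Properties defaults = new Properties();
        defaults.setProperty("fetcher.threads.fetch", "10"); // example value
        defaults.setProperty("http.agent.name", "");         // example value

        Properties site = new Properties();
        site.setProperty("http.agent.name", "my-crawler");  // hypothetical override

        Properties conf = layer(defaults, site);
        System.out.println(getInt(conf, "fetcher.threads.fetch", 1)); // 10
        System.out.println(conf.getProperty("http.agent.name"));     // my-crawler
    }
}
```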

```java
@Override
public int run(String[] args) throws Exception {
  if (args.length < 1) {
    System.out.println("Usage: Crawl <urlDir> -solr <solrURL> [-dir d] [-threads n] [-depth i] [-topN N]");
    return -1;
  }
  // Defaults, overridden by the command-line options parsed below
  Path rootUrlDir = null;
  Path dir = new Path("crawl-" + getDate());
  int threads = getConf().getInt("fetcher.threads.fetch", 10);
  int depth = 5;
  long topN = Long.MAX_VALUE;
  String solrUrl = null;

  for (int i = 0; i < args.length; i++) {
    if ("-dir".equals(args[i])) {
      dir = new Path(args[i + 1]);
      i++;
    } else if ("-threads".equals(args[i])) {
      threads = Integer.parseInt(args[i + 1]);
      i++;
    } else if ("-depth".equals(args[i])) {
      depth = Integer.parseInt(args[i + 1]);
      i++;
    } else if ("-topN".equals(args[i])) {
      topN = Long.parseLong(args[i + 1]); // topN is a long
      i++;
    } else if ("-solr".equals(args[i])) {
      solrUrl = args[i + 1];
      i++;
    } else if (args[i] != null) {
      // Any other argument is taken as the seed URL directory
      rootUrlDir = new Path(args[i]);
    }
  }

  JobConf job = new NutchJob(getConf());
  if (solrUrl == null) {
    LOG.warn("solrUrl is not set, indexing will be skipped...");
  } else {
    // for simplicity assume that SOLR is used
    // and pass its URL via conf
    getConf().set("solr.server.url", solrUrl);
  }
  FileSystem fs = FileSystem.get(job);

  if (LOG.isInfoEnabled()) {
    LOG.info("crawl started in: " + dir);
    LOG.info("rootUrlDir = " + rootUrlDir);
    LOG.info("threads = " + threads);
    LOG.info("depth = " + depth);
    LOG.info("solrUrl=" + solrUrl);
    if (topN != Long.MAX_VALUE)
      LOG.info("topN = " + topN);
  }
  // ... run() continues below
```
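To see what the option loop produces for our command, here is a hypothetical standalone copy of it (the class `CrawlArgsSketch` is invented; `String` replaces Hadoop's `Path`, and the default directory is passed in instead of being computed from `getDate()`). For `url -dir data`, the seed directory is `url`, the crawl goes into `data`, `threads` keeps the value of `fetcher.threads.fetch` (10 if unset), `depth` stays 5 and `topN` stays unlimited. Because `-solr` is absent, `solrUrl` stays null and `run()` logs that indexing will be skipped.

```java
// Hypothetical sketch: a standalone copy of the option loop in Crawl.run(),
// with Hadoop's Path replaced by String so it runs without Nutch.
class CrawlArgsSketch {
    String rootUrlDir = null;
    String dir;
    int threads;
    int depth = 5;
    long topN = Long.MAX_VALUE;
    String solrUrl = null;

    static CrawlArgsSketch parse(String[] args, int defaultThreads, String defaultDir) {
        CrawlArgsSketch a = new CrawlArgsSketch();
        a.dir = defaultDir;         // run() uses "crawl-" + getDate()
        a.threads = defaultThreads; // run() reads fetcher.threads.fetch, fallback 10
        for (int i = 0; i < args.length; i++) {
            if ("-dir".equals(args[i])) {
                a.dir = args[++i];  // same as args[i + 1]; i++
            } else if ("-threads".equals(args[i])) {
                a.threads = Integer.parseInt(args[++i]);
            } else if ("-depth".equals(args[i])) {
                a.depth = Integer.parseInt(args[++i]);
            } else if ("-topN".equals(args[i])) {
                a.topN = Long.parseLong(args[++i]);
            } else if ("-solr".equals(args[i])) {
                a.solrUrl = args[++i];
            } else if (args[i] != null) {
                a.rootUrlDir = args[i]; // any non-option token is the seed directory
            }
        }
        return a;
    }

    public static void main(String[] args) {
        // "crawl-placeholder" is a made-up stand-in for "crawl-" + getDate()
        CrawlArgsSketch a = parse(new String[] {"url", "-dir", "data"}, 10, "crawl-placeholder");
        System.out.println(a.rootUrlDir + " " + a.dir + " " + a.threads + " " + a.depth);
    }
}
```

Running `main` prints `url data 10 5`. Like the original, a trailing option without a value (for example a final `-dir`) fails with an `ArrayIndexOutOfBoundsException`.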

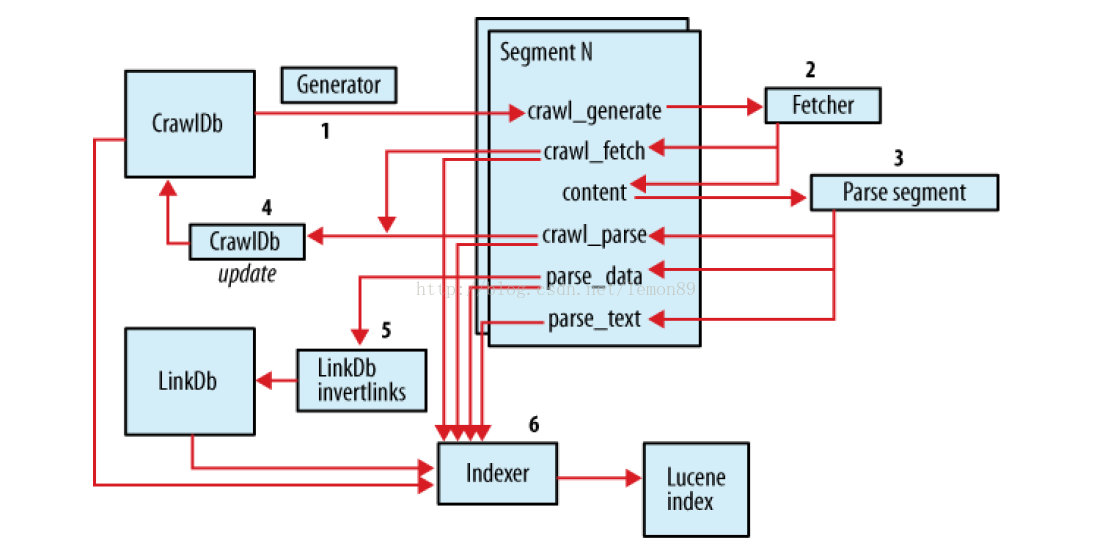

```java
  // Sub-directories of the crawl root
  Path crawlDb = new Path(dir + "/crawldb");
  Path linkDb = new Path(dir + "/linkdb");
  Path segments = new Path(dir + "/segments");
  Path indexes = new Path(dir + "/indexes");
  Path index = new Path(dir + "/index");
  Path tmpDir = job.getLocalPath("crawl" + Path.SEPARATOR + getDate());
```
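With `-dir data`, these string concatenations resolve to the paths printed by the sketch below. It uses plain strings instead of `org.apache.hadoop.fs.Path`, and the class name is hypothetical. Of these, `crawldb` is what `inject` initializes and `update` rewrites, and `segments` is where `generate` writes each new segment.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical sketch: the sub-directories run() derives from the crawl root.
// Plain strings stand in for org.apache.hadoop.fs.Path.
class CrawlLayoutSketch {
    static Map<String, String> layout(String dir) {
        Map<String, String> m = new LinkedHashMap<>(); // keeps insertion order
        for (String name : new String[] {"crawldb", "linkdb", "segments", "indexes", "index"}) {
            m.put(name, dir + "/" + name); // same as new Path(dir + "/crawldb"), etc.
        }
        return m;
    }

    public static void main(String[] args) {
        // Prints crawldb -> data/crawldb, linkdb -> data/linkdb, ... one per line
        layout("data").forEach((k, v) -> System.out.println(k + " -> " + v));
    }
}
```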

```java
  Injector injector = new Injector(getConf());
  Generator generator = new Generator(getConf());
  Fetcher fetcher = new Fetcher(getConf());
  ParseSegment parseSegment = new ParseSegment(getConf());
  CrawlDb crawlDbTool = new CrawlDb(getConf());
  LinkDb linkDbTool = new LinkDb(getConf());

  // initialize crawlDb
  injector.inject(crawlDb, rootUrlDir);

  // inject, fetch and update are not explained in detail here; to be continued...
  int i;
  for (i = 0; i < depth; i++) { // generate a new segment each round
    Path[] segs = generator.generate(crawlDb, segments, -1, topN,
        System.currentTimeMillis());
    if (segs == null) {
      LOG.info("Stopping at depth=" + i + " - no more URLs to fetch.");
      break;
    }
    fetcher.fetch(segs[0], threads); // fetch it
    if (!Fetcher.isParsing(job)) {
      parseSegment.parse(segs[0]); // parse it, if needed
    }
    crawlDbTool.update(crawlDb, segs, true, true); // update crawldb
  }
```
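Stripped of Nutch types, the loop runs for at most `depth` rounds. Each round generates a segment, fetches it, parses it only if the fetcher did not already parse (`Fetcher.isParsing(job)` is false), and updates the crawldb. It stops early when `generate` returns nothing. The sketch below models only that control flow, using a hypothetical `Steps` interface (not part of Nutch) so it can run with stubs. It passes a single segment name where the real code passes `Path[] segs`.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch of the control flow in Crawl.run(). The Steps
// interface is invented for this example and is NOT part of Nutch.
class CrawlLoopSketch {
    interface Steps {
        String generate(long topN); // segment name, or null when no URLs are due
        void fetch(String segment);
        void parse(String segment);
        void update(String segment);
    }

    // Returns the number of completed rounds, like the loop variable i in run().
    static int crawl(Steps steps, int depth, long topN, boolean fetcherParses) {
        int i;
        for (i = 0; i < depth; i++) {
            String seg = steps.generate(topN);
            if (seg == null) {
                break; // corresponds to "Stopping at depth=i - no more URLs to fetch."
            }
            steps.fetch(seg);
            if (!fetcherParses) {
                steps.parse(seg); // separate parse pass only if the fetcher did not parse
            }
            steps.update(seg);
        }
        return i;
    }

    public static void main(String[] args) {
        List<String> log = new ArrayList<>();
        Steps stub = new Steps() {
            int rounds = 0; // this stub only has URLs for two rounds
            public String generate(long topN) { return rounds < 2 ? "seg" + (rounds++) : null; }
            public void fetch(String s) { log.add("fetch " + s); }
            public void parse(String s) { log.add("parse " + s); }
            public void update(String s) { log.add("update " + s); }
        };
        int done = crawl(stub, 5, Long.MAX_VALUE, false);
        System.out.println(done + " " + log);
    }
}
```

With `depth` 5 but a stub that runs out of URLs after two rounds, `main` prints `2 [fetch seg0, parse seg0, update seg0, fetch seg1, parse seg1, update seg1]`, mirroring how the real crawl can stop before reaching `depth`.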
