withScope, added in a recent Spark release (1.4), is the mechanism behind DAG visualization in the Spark UI (DAG visualization on SparkUI).

以前的sparkUI中只有stage的执行情况,也就是说我们不可以看到上个RDD到下个RDD的具体信息。于是为了在

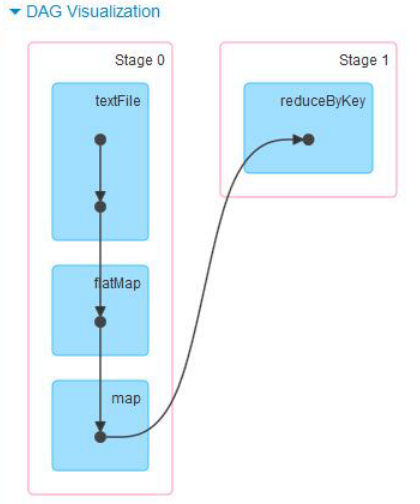

sparkUI中能展示更多的信息。所以把所有创建的RDD的方法都包裹起来,同时用RDDOperationScope 记录 RDD 的操作历史和关联,就能达成目标。下面就是一张WordCount的DAG visualization on SparkUI
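
A toy model makes the wrapping idea concrete. The sketch below is plain Scala with no Spark dependency and is not Spark's implementation: `ToyContext` and `ToyRDD` are hypothetical, the RDD class names are only illustrative labels, a single `var` stands in for the SparkContext local property, and the scope is a bare method name instead of a JSON-serialized RDDOperationScope. Each RDD-creating method is wrapped in `withScope`, which names the scope after its caller by walking the stack trace, and each `ToyRDD` records the scope active when it is constructed, roughly as Spark's `RDD.scope` field does.

```scala
// Toy model (hypothetical, no Spark needed): every "RDD" records the scope
// that was active when it was constructed.
object ToyContext {
  // Stand-in for sc.getLocalProperty / sc.setLocalProperty (single thread assumed).
  var currentScope: Option[String] = None

  // Run `body` inside a scope named after the method that called withScope.
  def withScope[T](body: => T): T = {
    val ourMethodName = "withScope"
    val callerName = Thread.currentThread.getStackTrace
      .dropWhile(_.getMethodName != ourMethodName) // skip frames above withScope
      .find(_.getMethodName != ourMethodName)      // first frame below it is the caller
      .map(_.getMethodName)
      .getOrElse("N/A")
    val old = currentScope
    currentScope = Some(callerName)
    try body
    finally { currentScope = old } // always restore the previous scope
  }
}

// Each ToyRDD captures the current scope in its constructor.
class ToyRDD(val name: String, val parent: Option[ToyRDD]) {
  val scope: Option[String] = ToyContext.currentScope

  def flatMap(): ToyRDD = ToyContext.withScope { new ToyRDD("MapPartitionsRDD", Some(this)) }
  def map(): ToyRDD = ToyContext.withScope { new ToyRDD("MapPartitionsRDD", Some(this)) }
  def reduceByKey(): ToyRDD = ToyContext.withScope { new ToyRDD("ShuffledRDD", Some(this)) }

  // Walk back to the source and list (RDD label, scope name) pairs, oldest first.
  def lineage: List[(String, String)] =
    parent.map(_.lineage).getOrElse(Nil) :+ (name -> scope.getOrElse("none"))
}

object ToyRDD {
  def textFile(): ToyRDD = ToyContext.withScope { new ToyRDD("HadoopRDD", None) }
}
```

For a WordCount-shaped chain, `ToyRDD.textFile().flatMap().map().reduceByKey().lineage` returns `List((HadoopRDD,textFile), (MapPartitionsRDD,flatMap), (MapPartitionsRDD,map), (ShuffledRDD,reduceByKey))`: each node is labeled with the operation that created it, which is the kind of information the DAG view uses to group RDDs.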

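RDDOperationScope is what records these relationships. Its source code is shown below; note that the current scope is kept in the SparkContext's local properties as a JSON string, written with `toJson` and read back with `fromJson`: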

```scala
package org.apache.spark.rdd

import java.util.concurrent.atomic.AtomicInteger

import com.fasterxml.jackson.databind.ObjectMapper
import com.fasterxml.jackson.module.scala.DefaultScalaModule

// Spark 1.x import path; since Spark 2.0, Logging lives in org.apache.spark.internal
import org.apache.spark.{Logging, SparkContext}

// (The RDDOperationScope class itself, which defines toJson, is omitted from this excerpt.)

/**
 * A collection of utility methods to construct a hierarchical representation of RDD scopes.
 * An RDD scope tracks the series of operations that created a given RDD.
 */
private[spark] object RDDOperationScope extends Logging {
  private val jsonMapper = new ObjectMapper().registerModule(DefaultScalaModule)
  private val scopeCounter = new AtomicInteger(0)

  // Deserialize a scope that was stored as JSON in a SparkContext local property
  def fromJson(s: String): RDDOperationScope = {
    jsonMapper.readValue(s, classOf[RDDOperationScope])
  }

  // Return a globally unique scope ID
  def nextScopeId(): Int = scopeCounter.getAndIncrement

  /**
   * Execute the given body such that all RDDs created in this body will have the same scope.
   * The name of the scope will be the first method name in the stack trace that is not the
   * same as this method's.
   *
   * Note: Return statements are NOT allowed in body.
   */
  private[spark] def withScope[T](
      sc: SparkContext,
      allowNesting: Boolean = false)(body: => T): T = {
    // Name the scope after the first method in the stack trace that is not withScope
    // (e.g. "map" when called from RDD.map)
    val ourMethodName = "withScope"
    val callerMethodName = Thread.currentThread.getStackTrace()
      .dropWhile(_.getMethodName != ourMethodName)
      .find(_.getMethodName != ourMethodName)
      .map(_.getMethodName)
      .getOrElse {
        // Log a warning just in case, but this should almost certainly never happen
        logWarning("No valid method name for this RDD operation scope!")
        "N/A"
      }
    withScope[T](sc, callerMethodName, allowNesting, ignoreParent = false)(body)
  }

  /**
   * Execute the given body such that all RDDs created in this body will have the same scope.
   *
   * If nesting is allowed, any subsequent calls to this method in the given body will instantiate
   * child scopes that are nested within our scope. Otherwise, these calls will take no effect.
   *
   * Additionally, the caller of this method may optionally ignore the configurations and scopes
   * set by the higher level caller. In this case, this method will ignore the parent caller's
   * intention to disallow nesting, and the new scope instantiated will not have a parent. This
   * is useful for scoping physical operations in Spark SQL, for instance.
   *
   * Note: Return statements are NOT allowed in body.
   */
  private[spark] def withScope[T](
      sc: SparkContext,
      name: String,
      allowNesting: Boolean,
      ignoreParent: Boolean)(body: => T): T = {
    // Save the old scope so it can be restored later
    val scopeKey = SparkContext.RDD_SCOPE_KEY
    val noOverrideKey = SparkContext.RDD_SCOPE_NO_OVERRIDE_KEY
    val oldScopeJson = sc.getLocalProperty(scopeKey)
    val oldScope = Option(oldScopeJson).map(RDDOperationScope.fromJson)
    val oldNoOverride = sc.getLocalProperty(noOverrideKey)
    try {
      if (ignoreParent) {
        // ignoreParent = true: ignore all settings and scopes of the parent caller
        // and start afresh with our own root scope
        sc.setLocalProperty(scopeKey, new RDDOperationScope(name).toJson)
      } else if (sc.getLocalProperty(noOverrideKey) == null) {
        // Otherwise, set the scope only if the higher level caller allows us to do so
        sc.setLocalProperty(scopeKey, new RDDOperationScope(name, oldScope).toJson)
      }
      // Optionally prevent calls inside body from overriding our scope
      if (!allowNesting) {
        sc.setLocalProperty(noOverrideKey, "true")
      }
      body
    } finally {
      // Restore all the state modified above before returning
      sc.setLocalProperty(scopeKey, oldScopeJson)
      sc.setLocalProperty(noOverrideKey, oldNoOverride)
    }
  }
}
```
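
The interplay of `allowNesting`, `ignoreParent` and the no-override flag is easier to follow in isolation. The sketch below is a simplified, dependency-free model of the four-argument `withScope`, not Spark's code: `ToyScope` and `ScopeModel` are hypothetical names, two `var`s stand in for the SparkContext local properties (with `None` playing the role of `null`), and the scope is kept as an object instead of being serialized to JSON. IDs come from an `AtomicInteger`, as in `nextScopeId`.

```scala
import java.util.concurrent.atomic.AtomicInteger

// Hypothetical stand-in for RDDOperationScope: a name, an optional parent and a unique id.
case class ToyScope(name: String, parent: Option[ToyScope] = None, id: Int = ScopeModel.nextScopeId()) {
  // Path from the root scope down to this one, e.g. "outer_0/inner_1".
  def path: String = parent.map(_.path + "/").getOrElse("") + s"${name}_$id"
}

object ScopeModel {
  private val scopeCounter = new AtomicInteger(0)

  // Globally unique scope IDs, as in RDDOperationScope.nextScopeId.
  def nextScopeId(): Int = scopeCounter.getAndIncrement()

  // Stand-ins for the RDD_SCOPE_KEY and RDD_SCOPE_NO_OVERRIDE_KEY local properties.
  var scopeProp: Option[ToyScope] = None
  var noOverrideProp: Option[String] = None

  def withScope[T](name: String, allowNesting: Boolean, ignoreParent: Boolean)(body: => T): T = {
    // Save the old state so it can be restored afterwards.
    val oldScope = scopeProp
    val oldNoOverride = noOverrideProp
    try {
      if (ignoreParent) {
        // Start a new root scope, ignoring the parent's scope and no-override flag.
        scopeProp = Some(ToyScope(name))
      } else if (noOverrideProp.isEmpty) {
        // Otherwise nest under the parent scope, but only if the caller allows it.
        scopeProp = Some(ToyScope(name, oldScope))
      }
      // Optionally stop calls inside `body` from overriding this scope.
      if (!allowNesting) noOverrideProp = Some("true")
      body
    } finally {
      // Restore whatever the caller had set.
      scopeProp = oldScope
      noOverrideProp = oldNoOverride
    }
  }

  // Path of the currently active scope, or "none".
  def currentPath: String = scopeProp.map(_.path).getOrElse("none")
}
```

Starting from a fresh counter: calling `withScope("inner", false, false) { currentPath }` inside `withScope("outer", allowNesting = true, ignoreParent = false)` returns `outer_0/inner_1`, a child scope. Repeating this with `allowNesting = false` on the outer call makes the inner call a no-op, so it returns `outer_2`. With `ignoreParent = true` on both calls, the inner one starts a new root scope and returns `inner_4`. In every case both properties are back to `None` afterwards, which is what the `finally` block guarantees.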
