This section analyzes how the client sends data, and how the server registers a service and processes the data it receives.

```cpp
#define LOG_TAG "CalculateService"
//#define LOG_NDEBUG 0

#include <fcntl.h>
#include <stdlib.h>
#include <sys/prctl.h>
#include <sys/wait.h>
#include <binder/IPCThreadState.h>
#include <binder/ProcessState.h>
#include <binder/IServiceManager.h>
#include <cutils/properties.h>
#include <utils/Log.h>

#include "ICalculateService.h"

using namespace android;

/* ./test_client <n> */
int main(int argc, char **argv)
{
    int cnt;

    if (argc < 2) {
        ALOGI("Usage:\n");
        ALOGI("%s <n>\n", argv[0]);
        return -1;
    }

    /* getService */
    /* open the driver and mmap it */
    sp<ProcessState> proc(ProcessState::self());

    /* obtain a BpServiceManager */
    sp<IServiceManager> sm = defaultServiceManager();

    sp<IBinder> binder = sm->getService(String16("calculate"));
    if (binder == 0) {
        ALOGI("can't get calculate service\n");
        return -1;
    }

    /* service is in fact a BpCalculateService pointer */
    sp<ICalculateService> service = interface_cast<ICalculateService>(binder);

    /* polymorphism: this calls the proxy's reduceone(), which takes an int */
    cnt = service->reduceone(atoi(argv[1]));
    ALOGI("client call reduceone, result = %d", cnt);

    return 0;
}
```

From the previous sections we know that `service` is actually a pointer to a BpCalculateService proxy object, so the call goes to:

```cpp
int reduceone(int n)
{
    /* build and send the request */
    Parcel data, reply;

    data.writeInt32(0);   /* header word (0), written before the argument */
    data.writeInt32(n);   /* the argument */

    remote()->transact(SVR_CMD_REDUCE_ONE, data, &reply);

    return reply.readInt32();
}
```

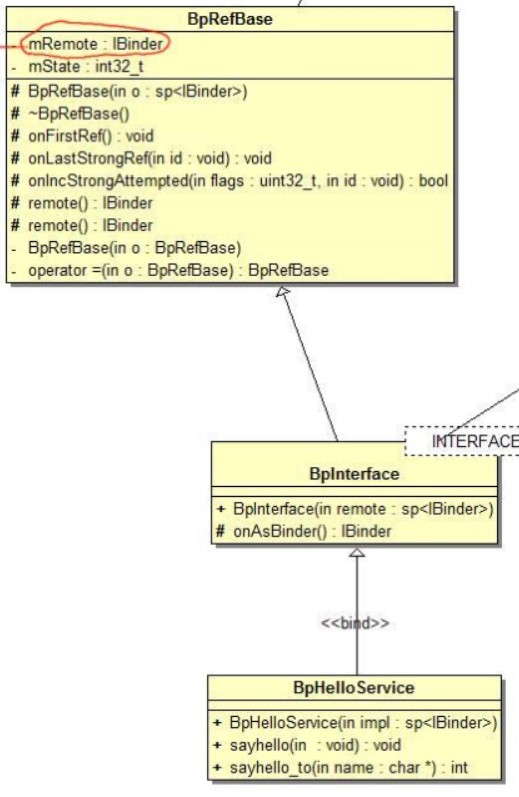
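The sketch below makes the ordering contract of `reduceone()` concrete. It is minimal and self-contained: `Parcel` is replaced by a hypothetical `ToyParcel`, which is a list of int32 values and not the real byte-buffer class, and `remote()->transact()` is replaced by a direct function call. The server handler is also hypothetical. This section does not show the server's `onTransact()`, so the sketch assumes that `reduceone(n)` returns `n - 1` and that the leading `0` is a header word the server skips.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical stand-in for android::Parcel (NOT the real class): a flat list
// of int32 values, written in order and read back in the same order.
class ToyParcel {
public:
    void writeInt32(int32_t v) { buf_.push_back(v); }
    // Reads the next value; returns 0 past the end (a simplification).
    int32_t readInt32() { return pos_ < buf_.size() ? buf_[pos_++] : 0; }
    void setDataPosition(std::size_t p) { pos_ = p; }

private:
    std::vector<int32_t> buf_;
    std::size_t pos_ = 0;
};

// Hypothetical server-side handler. Assumption: reduceone(n) returns n - 1,
// and the leading 0 written by the client is a header word to skip.
void toyHandleReduceOne(ToyParcel& data, ToyParcel& reply) {
    data.readInt32();              // header word (0)
    int32_t n = data.readInt32();  // the argument
    reply.writeInt32(n - 1);
}

// Client side shaped like BpCalculateService::reduceone(), except that it
// calls the handler directly instead of going through remote()->transact().
int32_t toyReduceOne(int32_t n) {
    ToyParcel data, reply;
    data.writeInt32(0);
    data.writeInt32(n);
    toyHandleReduceOne(data, reply);
    reply.setDataPosition(0);
    return reply.readInt32();
}
```

The only point here is that both sides must agree on the order of writes and reads. Everything the driver does in between is left out.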

`remote()` is implemented in BpRefBase, the parent class of BpXXXService:

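```cpp
inline IBinder* remote() { return mRemote; }
```

As analyzed in the previous section, `mRemote` points to a BpBinder object. That BpBinder was constructed from a handle, namely the handle of the corresponding service, so `remote()->transact()` lands in `BpBinder::transact()`: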

```cpp
status_t BpBinder::transact(
    uint32_t code, const Parcel& data, Parcel* reply, uint32_t flags)
{
    // Once a binder has died, it will never come back to life.
    if (mAlive) {
        status_t status = IPCThreadState::self()->transact(
            mHandle, code, data, reply, flags);
        if (status == DEAD_OBJECT) mAlive = 0;
        return status;
    }

    return DEAD_OBJECT;
}
```
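The `remote()` → `BpBinder::transact()` path can be modeled without Android headers. The sketch is hypothetical. `ToyBpRefBase` and `ToyBpBinder` only mimic the shape of the real classes, the transport callback stands in for `IPCThreadState::self()->transact()`, and the status values are arbitrary. It shows two ideas from the code above:

* The proxy only carries a handle.
* Once a call reports a dead object, the proxy stops trying.

```cpp
#include <cassert>
#include <cstdint>
#include <functional>
#include <utility>

// Status values are arbitrary for this sketch (not libbinder's values).
constexpr int kNoError = 0;
constexpr int kDeadObject = -1;

// Hypothetical transport standing in for IPCThreadState::self()->transact().
using Transport = std::function<int(int32_t handle, uint32_t code)>;

// Like BpBinder, this proxy knows nothing about the service except its handle.
class ToyBpBinder {
public:
    ToyBpBinder(int32_t handle, Transport t)
        : handle_(handle), transport_(std::move(t)) {}

    int transact(uint32_t code) {
        // Once the remote side is reported dead, never call it again.
        if (alive_) {
            int status = transport_(handle_, code);
            if (status == kDeadObject) alive_ = false;
            return status;
        }
        return kDeadObject;
    }
    bool alive() const { return alive_; }

private:
    int32_t handle_;
    Transport transport_;
    bool alive_ = true;
};

// Like BpRefBase: the interface proxy just holds the BpBinder as mRemote.
class ToyBpRefBase {
public:
    explicit ToyBpRefBase(ToyBpBinder* remote) : remote_(remote) {}
    ToyBpBinder* remote() { return remote_; }

private:
    ToyBpBinder* remote_;
};
```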

```cpp
status_t IPCThreadState::transact(int32_t handle,
                                  uint32_t code, const Parcel& data,
                                  Parcel* reply, uint32_t flags)
{
    status_t err = data.errorCheck();

    flags |= TF_ACCEPT_FDS;

    if (err == NO_ERROR) {
        LOG_ONEWAY(">>>> SEND from pid %d uid %d %s", getpid(), getuid(),
            (flags & TF_ONE_WAY) == 0 ? "READ REPLY" : "ONE WAY");
        err = writeTransactionData(BC_TRANSACTION, flags, handle, code, data, NULL);
    }

    if ((flags & TF_ONE_WAY) == 0) {
        if (reply) {
            err = waitForResponse(reply);
        } else {
            Parcel fakeReply;
            err = waitForResponse(&fakeReply);
        }
    } else {
        err = waitForResponse(NULL, NULL);
    }

    return err;
}
```
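The branch at the end of `IPCThreadState::transact()` decides what the caller waits for. Below is a minimal sketch of just that decision. It assumes a local constant that mirrors `TF_ONE_WAY`, which is 0x01 in the kernel's binder UAPI header. `WaitMode` and `chooseWait` are hypothetical names.

```cpp
#include <cassert>
#include <cstdint>

// Local mirror of TF_ONE_WAY from the binder UAPI header (transaction_flags).
constexpr uint32_t kTfOneWay = 0x01;

enum class WaitMode { ForReply, FakeReply, NoReply };

// Two-way calls wait for the reply, into the caller's Parcel if one was
// given or into a throwaway Parcel otherwise; one-way calls pass no reply.
WaitMode chooseWait(uint32_t flags, bool hasReply) {
    if ((flags & kTfOneWay) == 0)
        return hasReply ? WaitMode::ForReply : WaitMode::FakeReply;
    return WaitMode::NoReply;
}
```

`NoReply` corresponds to `waitForResponse(NULL, NULL)`. In libbinder that call returns once the driver reports `BR_TRANSACTION_COMPLETE`, rather than waiting for a `BR_REPLY`.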

```cpp
status_t IPCThreadState::waitForResponse(Parcel *reply, status_t *acquireResult)
{
    int32_t cmd;
    int32_t err;

    while (1) {
        if ((err=talkWithDriver()) < NO_ERROR) break;
        ...
    }
}
```

```cpp
status_t IPCThreadState::talkWithDriver(bool doReceive)
{
    binder_write_read bwr;

    // Is the read buffer empty?
    const bool needRead = mIn.dataPosition() >= mIn.dataSize();

    const size_t outAvail = (!doReceive || needRead) ? mOut.dataSize() : 0;

    bwr.write_size = outAvail;
    bwr.write_buffer = (uintptr_t)mOut.data();

    // This is what we'll read.
    if (doReceive && needRead) {
        bwr.read_size = mIn.dataCapacity();
        bwr.read_buffer = (uintptr_t)mIn.data();
    } else {
        bwr.read_size = 0;
        bwr.read_buffer = 0;
    }

    // Return immediately if there is nothing to do.
    if ((bwr.write_size == 0) && (bwr.read_size == 0)) return NO_ERROR;

    bwr.write_consumed = 0;
    bwr.read_consumed = 0;
    status_t err;
    do {
        /* key point: mProcess->mDriverFD */
        if (ioctl(mProcess->mDriverFD, BINDER_WRITE_READ, &bwr) >= 0)
            err = NO_ERROR;
        else
            err = -errno;
    } while (err == -EINTR);

    if (err >= NO_ERROR) {
        if (bwr.write_consumed > 0) {
            if (bwr.write_consumed < mOut.dataSize())
                mOut.remove(0, bwr.write_consumed);
            else
                mOut.setDataSize(0);
        }
        if (bwr.read_consumed > 0) {
            mIn.setDataSize(bwr.read_consumed);
            mIn.setDataPosition(0);
        }

        return NO_ERROR;
    }

    return err;
}
```

`ioctl(mProcess->mDriverFD, BINDER_WRITE_READ, &bwr)`
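The buffer bookkeeping in `talkWithDriver()` is the subtle part. It only asks the driver for new input once `mIn` has been fully consumed. Afterwards, it drops whatever part of `mOut` the driver reports as consumed. The sketch below models just that arithmetic. `ToyBwr`, `planIo` and `consumeOut` are hypothetical, and a `std::string` stands in for the `mOut` Parcel.

```cpp
#include <cassert>
#include <cstddef>
#include <string>

// Hypothetical model of the size fields talkWithDriver() fills in.
struct ToyBwr {
    std::size_t write_size = 0;
    std::size_t read_size = 0;
};

// Mirrors the sizing logic: read only when mIn is fully consumed; when
// receiving with unread input still pending, send nothing either.
ToyBwr planIo(std::size_t outSize, std::size_t inPos, std::size_t inSize,
              std::size_t inCapacity, bool doReceive) {
    ToyBwr bwr;
    const bool needRead = inPos >= inSize;
    bwr.write_size = (!doReceive || needRead) ? outSize : 0;
    bwr.read_size = (doReceive && needRead) ? inCapacity : 0;
    return bwr;
}

// Mirrors the post-ioctl step: remove the bytes the driver consumed from mOut.
void consumeOut(std::string& out, std::size_t writeConsumed) {
    if (writeConsumed == 0) return;
    if (writeConsumed < out.size())
        out.erase(0, writeConsumed);
    else
        out.clear();
}
```

When `planIo` returns zero for both sizes, the real function returns `NO_ERROR` without calling `ioctl()` at all.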

This is plain C again. `IPCThreadState::mProcess->mDriverFD` is the file descriptor of the Binder driver, so let's see where it comes from.

At the very beginning of the client program there is this line:

```cpp
/* open the driver and mmap it */
sp<ProcessState> proc(ProcessState::self());
```

```cpp
sp<ProcessState> ProcessState::self()
{
    Mutex::Autolock _l(gProcessMutex);
    if (gProcess != NULL) {
        return gProcess;
    }
    gProcess = new ProcessState;
    return gProcess;
}
```
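`ProcessState::self()` is a per-process singleton that is created lazily under a lock. That is why the `mDriverFD` opened in its constructor is shared by every thread of the process. Below is a standard C++ sketch of the same pattern, with `std::mutex` and `std::shared_ptr` standing in for Android's `Mutex` and `sp<>`. `ToyProcessState` is hypothetical.

```cpp
#include <cassert>
#include <memory>
#include <mutex>

// Hypothetical stand-in for ProcessState: one instance per process,
// created on first use under a lock, like ProcessState::self().
class ToyProcessState {
public:
    static std::shared_ptr<ToyProcessState> self() {
        std::lock_guard<std::mutex> lock(mutex_);
        if (instance_) return instance_;
        instance_ = std::shared_ptr<ToyProcessState>(new ToyProcessState());
        ++constructed_;
        return instance_;
    }
    static int constructed() { return constructed_; }

private:
    ToyProcessState() = default;  // the real constructor opens /dev/binder here
    static std::mutex mutex_;
    static std::shared_ptr<ToyProcessState> instance_;
    static int constructed_;
};

std::mutex ToyProcessState::mutex_;
std::shared_ptr<ToyProcessState> ToyProcessState::instance_;
int ToyProcessState::constructed_ = 0;
```

In modern C++, a function-local `static` would give the same once-only construction. The explicit lock is kept here to match the structure of the original.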

```cpp
ProcessState::ProcessState()
    : mDriverFD(open_driver())
    , mVMStart(MAP_FAILED)
    , mManagesContexts(false)
    , mBinderContextCheckFunc(NULL)
    , mBinderContextUserData(NULL)
    , mThreadPoolStarted(false)
    , mThreadPoolSeq(1)
{
    if (mDriverFD >= 0) {
        // XXX Ideally, there should be a specific define for whether we
        // have mmap (or whether we could possibly have the kernel module
        // available).
#if !defined(HAVE_WIN32_IPC)
        // mmap the binder, providing a chunk of virtual address space to receive transactions.
        mVMStart = mmap(0, BINDER_VM_SIZE, PROT_READ, MAP_PRIVATE | MAP_NORESERVE, mDriverFD, 0);
        if (mVMStart == MAP_FAILED) {
            // *sigh*
            ALOGE("Using /dev/binder failed: unable to mmap transaction memory.\n");
            close(mDriverFD);
            mDriverFD = -1;
        }
#else
        mDriverFD = -1;
#endif
    }

    LOG_ALWAYS_FATAL_IF(mDriverFD < 0, "Binder driver could not be opened. Terminating.");
}
```

The key is the member initializer `mDriverFD(open_driver())`:

```cpp
static int open_driver()
{
    int fd = open("/dev/binder", O_RDWR);
    if (fd >= 0) {
        fcntl(fd, F_SETFD, FD_CLOEXEC);
        int vers = 0;
        status_t result = ioctl(fd, BINDER_VERSION, &vers);
        // (checks on result/vers are omitted in this excerpt)

        size_t maxThreads = 15;
        result = ioctl(fd, BINDER_SET_MAX_THREADS, &maxThreads);
    } else {
        ALOGW("Opening '/dev/binder' failed: %s\n", strerror(errno));
    }
    return fd;
}
```

In short: `mDriverFD = open("/dev/binder", O_RDWR);`
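The sequence in `open_driver()` and the `ProcessState` constructor can be tried on an ordinary file, because `/dev/binder` is normally only available on Android. The sequence is:

1. Open the file.
2. Set `FD_CLOEXEC`.
3. `mmap()` it.
4. Close the fd if the mapping fails.

The sketch below is a hypothetical Linux/POSIX helper (`openAndMap`, `ToyMapping`), not libbinder code. It skips the binder-specific `BINDER_VERSION` and `BINDER_SET_MAX_THREADS` ioctls.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdlib>
#include <fcntl.h>
#include <sys/mman.h>
#include <unistd.h>

// Hypothetical result type: fd == -1 means "driver unusable", as in ProcessState.
struct ToyMapping {
    int fd = -1;
    void* addr = MAP_FAILED;
};

// Mirrors open_driver() + the mmap in ProcessState's constructor, for any path:
// open read-write, mark close-on-exec, map `size` bytes read-only and private.
// On mmap failure the fd is closed and reset to -1, as the original does.
ToyMapping openAndMap(const char* path, std::size_t size) {
    ToyMapping m;
    m.fd = open(path, O_RDWR);
    if (m.fd < 0) return m;
    fcntl(m.fd, F_SETFD, FD_CLOEXEC);
    m.addr = mmap(nullptr, size, PROT_READ, MAP_PRIVATE | MAP_NORESERVE, m.fd, 0);
    if (m.addr == MAP_FAILED) {
        close(m.fd);
        m.fd = -1;
    }
    return m;
}
```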

Now it's time to look at the server side:

```cpp
int main(void)
{
    /* addService */

    /* while (1) { read data, parse it, call the service function } */

    /* open the driver and mmap it */
    sp<ProcessState> proc(ProcessState::self());

    /* obtain a BpServiceManager */
    sp<IServiceManager> sm = defaultServiceManager();

    sm->addService(String16("calculate"), new BnCalculateService());

    /* main loop */
    ProcessState::self()->startThreadPool();
    IPCThreadState::self()->joinThreadPool();

    return 0;
}
```

First, the addService path. `sm` is actually a BpServiceManager, so this calls:

```cpp
virtual status_t addService(const String16& name, const sp<IBinder>& service,
        bool allowIsolated)
{
    Parcel data, reply;
    data.writeInterfaceToken(IServiceManager::getInterfaceDescriptor());
    data.writeString16(name);
    data.writeStrongBinder(service);
    data.writeInt32(allowIsolated ? 1 : 0);
    status_t err = remote()->transact(ADD_SERVICE_TRANSACTION, data, &reply);
    return err == NO_ERROR ? reply.readExceptionCode() : err;
}
```

The interesting part is `writeStrongBinder()`, which follows this call chain:

```cpp
data.writeStrongBinder(service);                     // service = new BnCalculateService()
  flatten_binder(ProcessState::self(), val, this);   // val = service
    flat_binder_object obj;                           // parameter binder = val = service
    IBinder *local = binder->localBinder();           // == this, the BnCalculateService object
    obj.type = BINDER_TYPE_BINDER;
    obj.binder = reinterpret_cast<uintptr_t>(local->getWeakRefs());
    obj.cookie = reinterpret_cast<uintptr_t>(local);  // the BnCalculateService pointer
```

So the pointer to the BnCalculateService object is stored in `obj.cookie`.
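The key trick is that a pointer in the server's address space travels as an opaque integer. Below is a minimal sketch of what `flatten_binder()` fills in for a local object. The `ToyFlatBinderObject` and `ToyBBinder` types are hypothetical, and the type constant is a placeholder rather than the kernel's `BINDER_TYPE_BINDER` value.

```cpp
#include <cassert>
#include <cstdint>

// Placeholder tag, not the kernel's BINDER_TYPE_BINDER value.
constexpr uint32_t kToyTypeBinder = 1;

// Toy mirror of the three flat_binder_object fields set for a local binder.
struct ToyFlatBinderObject {
    uint32_t type = 0;
    uintptr_t binder = 0;  // real code: local->getWeakRefs()
    uintptr_t cookie = 0;  // real code: the BBinder* itself
};

// Hypothetical stand-ins for BBinder and BnCalculateService.
struct ToyBBinder {
    virtual ~ToyBBinder() = default;
};
struct ToyCalculateService : ToyBBinder {};

// Flatten a local object: its address becomes an integer in `cookie`.
ToyFlatBinderObject flattenLocal(ToyBBinder* local, uintptr_t weakRefs) {
    ToyFlatBinderObject obj;
    obj.type = kToyTypeBinder;
    obj.binder = weakRefs;
    obj.cookie = reinterpret_cast<uintptr_t>(local);
    return obj;
}
```

Only the server process can turn `cookie` back into an object, and the client never dereferences it. On the receiving side, `executeCommand()` reads the value back as `tr.cookie` from the `BR_TRANSACTION` data.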

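This also goes through `remote()->transact()`, exactly as when the client sends data, so there is nothing new to analyze there. Let's look instead at how the server processes the data it receives: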

```cpp
void IPCThreadState::joinThreadPool(bool isMain)
{
    mOut.writeInt32(isMain ? BC_ENTER_LOOPER : BC_REGISTER_LOOPER);

    set_sched_policy(mMyThreadId, SP_FOREGROUND);

    status_t result;
    do {
        processPendingDerefs();

        // now get the next command to be processed, waiting if necessary
        result = getAndExecuteCommand();

    } while (result != -ECONNREFUSED && result != -EBADF);

    mOut.writeInt32(BC_EXIT_LOOPER);
    talkWithDriver(false);
}
```
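The loop in `joinThreadPool()` only exits on two specific errors. Any other result, including other errors, sends it back for the next command. Below is a hypothetical model in which each queued integer is the return value of one `getAndExecuteCommand()` call. An empty queue is treated as `-EBADF` so that the loop always terminates.

```cpp
#include <cassert>
#include <cerrno>
#include <deque>

// Hypothetical model of the joinThreadPool() loop condition. Each entry in
// `results` is what one getAndExecuteCommand() call would return.
// Returns how many commands were fetched before the loop exited.
int runLooper(std::deque<int> results) {
    int handled = 0;
    int result;
    do {
        result = results.empty() ? -EBADF : results.front();
        if (!results.empty()) results.pop_front();
        ++handled;
    } while (result != -ECONNREFUSED && result != -EBADF);
    return handled;
}
```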

```cpp
status_t IPCThreadState::executeCommand(int32_t cmd)
{
    BBinder* obj;
    RefBase::weakref_type* refs;
    status_t result = NO_ERROR;

    switch (cmd) {
    // (other BR_* cases are omitted in this excerpt)
    case BR_TRANSACTION:
        {
            binder_transaction_data tr;
            result = mIn.read(&tr, sizeof(tr));
            ALOG_ASSERT(result == NO_ERROR,
                "Not enough command data for brTRANSACTION");
            if (result != NO_ERROR) break;

            Parcel buffer;
            buffer.ipcSetDataReference(
                reinterpret_cast<const uint8_t*>(tr.data.ptr.buffer),
                tr.data_size,
                reinterpret_cast<const binder_size_t*>(tr.data.ptr.offsets),
                tr.offsets_size/sizeof(binder_size_t), freeBuffer, this);

            const pid_t origPid = mCallingPid;
            const uid_t origUid = mCallingUid;
            const int32_t origStrictModePolicy = mStrictModePolicy;
            const int32_t origTransactionBinderFlags = mLastTransactionBinderFlags;

            mCallingPid = tr.sender_pid;
            mCallingUid = tr.sender_euid;
            mLastTransactionBinderFlags = tr.flags;

            int curPrio = getpriority(PRIO_PROCESS, mMyThreadId);
            if (gDisableBackgroundScheduling) {
                if (curPrio > ANDROID_PRIORITY_NORMAL) {
                    setpriority(PRIO_PROCESS, mMyThreadId, ANDROID_PRIORITY_NORMAL);
                }
            } else {
                if (curPrio >= ANDROID_PRIORITY_BACKGROUND) {
                    set_sched_policy(mMyThreadId, SP_BACKGROUND);
                }
            }

            //ALOGI(">>>> TRANSACT from pid %d uid %d\n", mCallingPid, mCallingUid);

            Parcel reply;
            status_t error;

            /* key point: tr.cookie */
            if (tr.target.ptr) {
                sp<BBinder> b((BBinder*)tr.cookie);
                error = b->transact(tr.code, buffer, &reply, tr.flags);
            } else {
                error = the_context_object->transact(tr.code, buffer, &reply, tr.flags);
            }

            if ((tr.flags & TF_ONE_WAY) == 0) {
                LOG_ONEWAY("Sending reply to %d!", mCallingPid);
                if (error < NO_ERROR) reply.setError(error);
                sendReply(reply, 0);
            } else {
                LOG_ONEWAY("NOT sending reply to %d!", mCallingPid);
            }

            mCallingPid = origPid;
            mCallingUid = origUid;
            mStrictModePolicy = origStrictModePolicy;
            mLastTransactionBinderFlags = origTransactionBinderFlags;
        }
        break;
    }

    return result;
}
```

```cpp
sp<BBinder> b((BBinder*)tr.cookie);
error = b->transact(tr.code, buffer, &reply, tr.flags);
```

`b` therefore points to the BnCalculateService object that was registered earlier.
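Besides the cookie dispatch, `executeCommand()` installs the sender's pid/uid (`mCallingPid`, `mCallingUid`) for the duration of the transaction and restores the previous values afterwards. The same save/restore can be written as an RAII guard. This is a hypothetical restructuring (`ScopedCallingIdentity`, `ToyThreadState`), not how libbinder writes it. The nested use in the test shows why restoring, rather than zeroing, matters. Presumably the reason is that a thread can handle a nested incoming transaction while it is itself waiting on an outgoing call.

```cpp
#include <cassert>
#include <sys/types.h>

// Hypothetical per-thread state mirroring the fields executeCommand() swaps.
struct ToyThreadState {
    pid_t callingPid;
    uid_t callingUid;
};

// RAII form of the save/restore around one transaction: install the
// sender's identity on construction, put the previous one back on exit.
class ScopedCallingIdentity {
public:
    ScopedCallingIdentity(ToyThreadState& s, pid_t pid, uid_t uid)
        : s_(s), origPid_(s.callingPid), origUid_(s.callingUid) {
        s_.callingPid = pid;
        s_.callingUid = uid;
    }
    ~ScopedCallingIdentity() {
        s_.callingPid = origPid_;
        s_.callingUid = origUid_;
    }
    ScopedCallingIdentity(const ScopedCallingIdentity&) = delete;
    ScopedCallingIdentity& operator=(const ScopedCallingIdentity&) = delete;

private:
    ToyThreadState& s_;
    pid_t origPid_;
    uid_t origUid_;
};
```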

```cpp
status_t BBinder::transact(
    uint32_t code, const Parcel& data, Parcel* reply, uint32_t flags)
{
    data.setDataPosition(0);

    status_t err = NO_ERROR;
    switch (code) {
        case PING_TRANSACTION:
            reply->writeInt32(pingBinder());
            break;
        default:
            err = onTransact(code, data, reply, flags);
            break;
    }

    if (reply != NULL) {
        reply->setDataPosition(0);
    }

    return err;
}
```

This is where `BnCalculateService::onTransact()` finally gets called.
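The sketch below puts the whole server-side dispatch chain in one self-contained unit: the cookie becomes a `BBinder*`, which goes through `BBinder::transact()` to the virtual `onTransact()`. All names are hypothetical. The code values are placeholders; the real `PING_TRANSACTION` constant is different. `reduceone(n) == n - 1` is an assumption, because this section does not show the body of `BnCalculateService::onTransact()`.

```cpp
#include <cassert>
#include <cstdint>

// Placeholder transaction codes for this sketch only.
constexpr uint32_t kToyPing = 0xFFFF;
constexpr uint32_t kToyReduceOne = 1;

// Hypothetical BBinder analogue: transact() answers the generic ping
// itself and forwards every other code to the virtual onTransact().
class ToyBBinder {
public:
    virtual ~ToyBBinder() = default;
    int transact(uint32_t code, int arg, int* reply) {
        switch (code) {
        case kToyPing:
            *reply = 0;  // "alive"
            return 0;
        default:
            return onTransact(code, arg, reply);
        }
    }

protected:
    virtual int onTransact(uint32_t code, int arg, int* reply) = 0;
};

// Hypothetical BnCalculateService analogue (assumes reduceone(n) == n - 1).
class ToyCalculateService : public ToyBBinder {
protected:
    int onTransact(uint32_t code, int arg, int* reply) override {
        if (code != kToyReduceOne) return -1;  // unknown code
        *reply = arg - 1;
        return 0;
    }
};

// As in executeCommand(): cast the cookie back to the registered BBinder.
int dispatchByCookie(uintptr_t cookie, uint32_t code, int arg, int* reply) {
    ToyBBinder* b = reinterpret_cast<ToyBBinder*>(cookie);
    return b->transact(code, arg, reply);
}
```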

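Summary:

1. All data is sent through `remote()->transact()`, which uses the binder fd held by ProcessState and hands the data to the driver with `ioctl()`.

2. Registering a service essentially means passing a pointer to a BnXXXService object in as `flat_binder_object.cookie`.

3. When the service processes data, it takes the cookie back out, which is the BnXXXService pointer, and calls that object's `onTransact()`.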

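This article analyzed how the Binder mechanism works in Android, following the full path from the client sending data to the server processing it, including the interaction with the Binder driver, inter-process communication, and the service registration flow.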
