Book information for any single book category

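Reference site: Douban Books (豆瓣读书网)

Crawler logic: [paginated URL collection] → [data collection]

This time the logic is split into two steps, each wrapped in its own function: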

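Function 1: get_urls(n) → [paginated URL collection]

- n: number of pages
- Returns: a list of paginated page URLs

Function 2: get_data(ui, d_h, d_c) → [data collection]

- ui: URL of the page holding the data
- d_h: request headers (User-Agent)
- d_c: cookies
- Returns: a list of records, each stored as a dict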

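Fields to collect

For each book, collect the content outlined in the first red box.

Setting the login cookies

This is the standard way to handle it. Write it a few times and you will know it by heart: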

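```python
# cookies: the raw "Cookie" header string copied from the browser
cookies_lst = cookies.split("; ")
dic_cookies = {}
for i in cookies_lst:
    # split at the first "=" only; some cookie values contain "=" themselves
    key, value = i.split("=", 1)
    dic_cookies[key] = value
```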
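Cookie values can themselves contain `=`. In the cookie string used later in this post, `__utmz` and `__gads` both do. Splitting only at the first `=` keeps such values whole.

Below is a minimal sketch of this as a standalone helper. The name `parse_cookie_header` is hypothetical, and the input is assumed to be a browser cookie string in `name=value; name=value` form. Repeated names keep the last value, just like the loop above. That string contains two `__utmz` entries, for example.

```python
def parse_cookie_header(raw):
    """Turn a browser 'Cookie' string into a dict usable as requests' cookies=.

    Hypothetical helper: assumes pairs are separated by "; ".
    """
    cookies = {}
    for pair in raw.split("; "):
        if not pair:
            continue  # tolerate an empty string or a trailing "; "
        # partition() splits at the FIRST "=" only, so values such as
        # "ID=...:T=..." or "...utmcsr=baidu|..." are kept intact
        name, _, value = pair.partition("=")
        cookies[name] = value  # a repeated name overwrites the earlier value
    return cookies


sample = 'll="108296"; __utmz=30149280.1579162528.9.8.utmcsr=baidu|utmccn=(organic)'
print(parse_cookie_header(sample))
```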

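Note: use exception handling (`try…except…`).

Requirement: collect 200 records (10 pages from [paginated URL collection], 20 records per page), save the result as a DataFrame, and do basic data cleaning on these fields:

- rating (评分)
- page count (页数)
- number of ratings (评价数量)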

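The difficulty in this scrape

The fields in the detail section are not fully consistent from book to book, so they are hard to standardize. This is similar to requirement ② in the previous post: there is no uniform format.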
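One way to cope with the inconsistent detail line is to read its fields from the end rather than the start, on the assumption that the trailing parts are more regular than the author/translator part.

The sketch below assumes the line has the form `author[/translator…]/publisher/date/price`. The function name `split_pub` and its English keys are hypothetical. Books whose line deviates from that pattern will have fields misassigned, so treat the result as a first pass to check, not a clean parse.

```python
def split_pub(pub):
    """Heuristically split a Douban detail ('pub') line into named parts.

    Assumption: 'author[/translator...]/publisher/date/price', so the last
    three parts are taken as publisher, date and price; everything before
    them is treated as authors/translators.
    """
    parts = [p.strip() for p in pub.split("/")]
    result = {"authors": "", "publisher": "", "date": "", "price": ""}
    if len(parts) >= 4:
        result["authors"] = "/".join(parts[:-3])
        result["publisher"], result["date"], result["price"] = parts[-3:]
    else:
        # too few parts to place reliably: keep the raw line for manual review
        result["authors"] = pub.strip()
    return result


print(split_pub("[美]作者/译者/出版社/2012-1/39.80元"))
# {'authors': '[美]作者/译者', 'publisher': '出版社', 'date': '2012-1', 'price': '39.80元'}
```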

Preparation and the first function

This is identical to the code in Logic 1, so it is given directly:

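```python
import requests
from bs4 import BeautifulSoup
import pandas as pd

def get_url(n):
    '''
    [Paginated URL collection] function
    n: number of pages
    Returns: a list of paginated page URLs
    '''
    lst = []
    for i in range(n):  # use the n parameter instead of a hard-coded 10
        # %E7%94%B5%E5%BD%B1 is the URL-encoded tag "电影"; each page holds 20 books
        ui = "https://book.douban.com/tag/%E7%94%B5%E5%BD%B1?start={}&type=T".format(i*20)
        #print(ui)
        lst.append(ui)
    return lst
```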
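The title promises any book category, but the URL above hard-codes the 电影 tag. `%E7%94%B5%E5%BD%B1` is that tag's percent-encoded UTF-8 form.

Below is a sketch that takes the tag as a parameter. It assumes every tag page follows the same `https://book.douban.com/tag/<tag>?start=<offset>&type=T` pattern as above. The `tag` and `per_page` parameters are additions for illustration, and the name follows the `get_urls(n)` spec from the start of the post. With 20 books per page, 200 records need `n = 10`, i.e. offsets 0, 20, …, 180.

```python
from urllib.parse import quote

def get_urls(n, tag="电影", per_page=20):
    """Build the first n paginated URLs for one Douban Books tag.

    Assumes the tag-page URL pattern used in this post; `tag` and
    `per_page` are illustrative parameters, not part of the original.
    """
    base = "https://book.douban.com/tag/{}?start={}&type=T"
    # quote() percent-encodes the tag's UTF-8 bytes, e.g. 电影 -> %E7%94%B5%E5%BD%B1
    return [base.format(quote(tag), i * per_page) for i in range(n)]


urls = get_urls(10)
print(len(urls))   # 10
print(urls[0])     # https://book.douban.com/tag/%E7%94%B5%E5%BD%B1?start=0&type=T
print(get_urls(1, tag="小说")[0])  # same pattern for another tag
```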

Parsing the page

The headers and cookies were already set up earlier and can be reused directly:

```python
dic_heders = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/75.0.3770.100 Safari/537.36'
}

dic_cookies = {}
# Cookie header copied from a logged-in browser session (replace with your own)
cookies = 'll="108296"; bid=b9z-Z1JF8wQ; _vwo_uuid_v2=DDF408830197B90007427EFEAB67DF985|b500ed9e7e3b5f6efec01c709b7000c3; douban-fav-remind=1; __yadk_uid=2D7qqvQghjfgVOD0jdPUlybUNa2MBZbz; gr_user_id=5943e535-83de-4105-b840-65b7a0cc92e1; dbcl2="150296873:VHh1cXhumGU"; push_noty_num=0; push_doumail_num=0; __utmv=30149280.15029; __gads=ID=dcc053bfe97d2b3c:T=1579101910:S=ALNI_Ma5JEn6w7PLu-iTttZOFRZbG4sHCw; ct=y; Hm_lvt_cfafef0aa0076ffb1a7838fd772f844d=1579102240; __utmz=81379588.1579138975.5.5.utmcsr=baidu|utmccn=(organic)|utmcmd=organic; __utmz=30149280.1579162528.9.8.utmcsr=baidu|utmccn=(organic)|utmcmd=organic; ck=csBn; _pk_ref.100001.3ac3=%5B%22%22%2C%22%22%2C1581081161%2C%22https%3A%2F%2Fwww.baidu.com%2Flink%3Furl%3DNq2xYeTOYsYNs1a4LeFRmxqwD_0zDOBN253fDrX-5wRdwrQqUpYGFSmifESD4TLN%26wd%3D%26eqid%3De7868ab7001090b7000000035e1fbf95%22%5D; _pk_ses.100001.3ac3=*; __utma=30149280.195590675.1570957615.1581050101.1581081161.16; __utmc=30149280; __utma=81379588.834351582.1571800818.1581050101.1581081161.12; __utmc=81379588; ap_v=0,6.0; gr_session_id_22c937bbd8ebd703f2d8e9445f7dfd03=b6a046c7-15eb-4e77-bc26-3a7af29e68b1; gr_cs1_b6a046c7-15eb-4e77-bc26-3a7af29e68b1=user_id%3A1; gr_session_id_22c937bbd8ebd703f2d8e9445f7dfd03_b6a046c7-15eb-4e77-bc26-3a7af29e68b1=true; _pk_id.100001.3ac3=6ec264aefc5132a2.1571800818.12.1581082472.1581050101.; __utmb=30149280.7.10.1581081161; __utmb=81379588.7.10.1581081161'

cookies_lst = cookies.split("; ")
for i in cookies_lst:
    # split at the first "=" only, so values such as __utmz stay intact
    key, value = i.split("=", 1)
    dic_cookies[key] = value
```

Request the page. All book entries sit under one `<ul>` tag (class `subject-list`).

Write code that extracts a single book's data as a trial run:

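```python
u1 = get_url(10)[0]  # first page URL
ri = requests.get(u1, headers=dic_heders, cookies=dic_cookies)
soup_i = BeautifulSoup(ri.text, 'lxml')  # the 'lxml' parser requires the lxml package
ul = soup_i.find('ul', class_='subject-list')  # the list holding all books on the page
lis = ul.find_all('li')  # one <li> per book
```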

Extract the tag contents and store them in a dict:

```python
dic = {}
# title
dic['标题'] = lis[0].find("div", class_='info').h2.text.replace(" ","").replace("\n","")
# rating
dic['评分'] = lis[0].find("span", class_='rating_nums').text.replace("(","")
# number of ratings
dic['评价人数'] = lis[0].find("span", class_='pl').text.replace(" ","").replace("\n","").replace("(","").replace(")","")
# short description
dic['简介'] = lis[0].find("p").text.replace("\n",",")
# other details (author / publisher / date / price line)
dic['其他'] = lis[0].find("div", class_="pub").text.replace(" ","").replace("\n","")
print(dic)
```

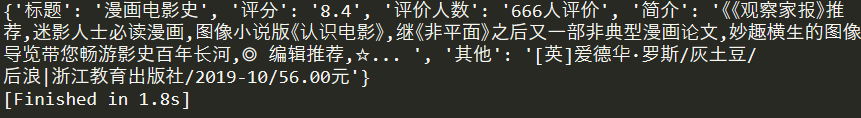

The output is:

Once the trial run works, loop over every entry and store the records in a list:

```python
lst = []
for li in lis:  # one <li> per book
    dic = {}
    dic['标题'] = li.find("div", class_='info').h2.text.replace(" ","").replace("\n","")
    dic['评分'] = li.find("span", class_='rating_nums').text.replace("(","")
    dic['评价人数'] = li.find("span", class_='pl').text.replace(" ","").replace("\n","").replace("(","").replace(")","")
    dic['简介'] = li.find("p").text.replace("\n",",")
    dic['其他'] = li.find("div", class_="pub").text.replace(" ","").replace("\n","")
    lst.append(dic)
print(lst)
```

The output is:
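The chained `.replace(" ","").replace("\n","")` calls repeat on almost every field, so they could be folded into one helper.

Below is a minimal sketch, where the name `squash` is hypothetical. It is slightly stricter than the original chain: `str.split()` with no argument also removes tabs, carriage returns and full-width spaces (U+3000).

```python
def squash(text):
    """Remove all whitespace from a scraped text fragment.

    Roughly .replace(" ", "").replace("\n", ""), but str.split() with no
    argument also drops tabs, carriage returns and full-width spaces.
    """
    return "".join(text.split())


print(squash("\n   7.9\n   (1234人评价)\n "))  # 7.9(1234人评价)
```

Inside the loop this would read, for example, `dic['标题'] = squash(li.find("div", class_='info').h2.text)`.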

Wrapping the second function

The trial run works, so wrap the process into the target function:

```python
def get_data(ui, d_h, d_c):
    '''
    [Data collection]
    ui: URL of the page holding the data
    d_h: request headers (User-Agent)
    d_c: cookies
    Returns: a list of records, each stored as a dict
    '''
    ri = requests.get(ui, headers=d_h, cookies=d_c)
    soup_i = BeautifulSoup(ri.text, 'lxml')
    ul = soup_i.find('ul', class_='subject-list')
    lis = ul.find_all('li')
    lst = []
    for li in lis:
        dic = {}
        dic['标题'] = li.find("div", class_='info').h2.text.replace(" ","").replace("\n","")
        dic['评分'] = li.find("span", class_='rating_nums').text.replace("(","")
        dic['评价人数'] = li.find("span", class_='pl').text.replace(" ","").replace("\n","").replace("(","").replace(")","")
        dic['简介'] = li.find("p").text.replace("\n",",")
        dic['其他'] = li.find("div", class_="pub").text.replace(" ","").replace("\n","")
        lst.append(dic)
    return lst

print(get_data(u1, dic_heders, dic_cookies))
```

The output matches the result above. Next, loop over all pages with exception handling:

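```python
urllst_1 = get_url(10)  # the 10 page URLs
datalst = []    # collected records
errorlst = []   # URLs of pages that failed
for u in urllst_1:
    try:
        datalst.extend(get_data(u, dic_heders, dic_cookies))
        print("Collected {} records so far".format(len(datalst)))
    except Exception:
        errorlst.append(u)
        print("Failed to access page, URL:", u)
```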

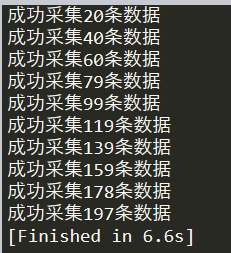

The output is:

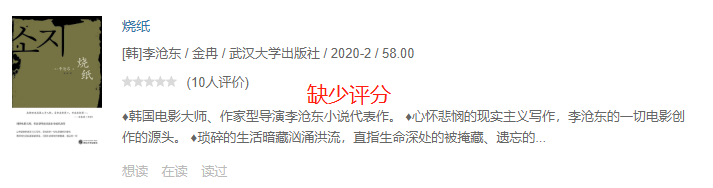

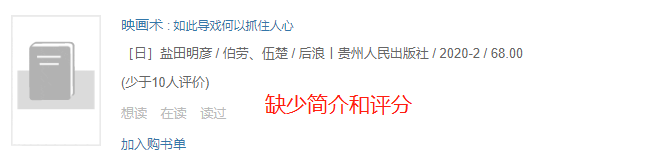

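Analyzing the errors shows that they come from element lookups. `find()` returns `None` when an element is missing, and reading `.text` from `None` raises an `AttributeError`.

Inspecting the failing pages shows that books with fewer than 10 ratings have no score, and some do not even have a description (see the screenshots). The fix has two parts: grab the score and the number of ratings together instead of separately, and wrap the description lookup in exception handling. Then check the result.

The modified code fetches the score and the number of ratings as a single field (评价) and splits them during data cleaning. For books without a description, it handles the failure directly with try/except: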

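```python
# Inside the `for li in lis:` loop of get_data():
# dic['评分'] = li.find("span", class_='rating_nums').text.replace("(","")
# dic['评价人数'] = li.find("span", class_='pl').text.replace(" ","").replace("\n","").replace("(","").replace(")","")

# score and number of ratings as one string, split later during cleaning
dic['评价'] = li.find("div", class_='star clearfix').text.replace(" ","").replace("\n","")
try:
    dic['简介'] = li.find("p").text.replace("\n",",")
except AttributeError:
    # no <p> tag: find() returned None; `continue` skips this book entirely
    continue
```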
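Note that `continue` drops the whole book, so its title, rating and publication line are lost too, which works against the 200-record target. If you would rather keep such books with an empty description, a small guard can replace the `try`/`continue`.

Below is a minimal sketch. The helper name `text_or_default` is hypothetical, and `FakeTag` only stands in for a BeautifulSoup tag so the example runs on its own.

```python
def text_or_default(node, default=""):
    """Return node.text, or `default` when a find() call returned None."""
    return default if node is None else node.text


class FakeTag:
    """Stand-in for a bs4 Tag in this demo: only the .text attribute is used."""
    text = "line1\nline2"


print(text_or_default(FakeTag()).replace("\n", ","))  # line1,line2
print(repr(text_or_default(None)))                     # ''
```

In the loop this would read `dic['简介'] = text_or_default(li.find("p")).replace("\n", ",")`.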

Rerunning the code gives:

Note: the site's content changes over time, so the scraper needs periodic maintenance. Each case has to be analyzed on its own.
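Since the page structure can change, it helps if the page loop records *why* a page failed instead of hiding the error behind a bare `except`.

Below is a sketch under these assumptions:

- `collect` is a hypothetical name.
- `fetch` is any callable that returns the records of one page, for example `lambda u: get_data(u, dic_heders, dic_cookies)`.
- The one-second `delay` between requests is an assumed politeness setting, not a documented Douban limit.

The demo uses a fake `fetch`, so it runs without network access.

```python
import time

def collect(urls, fetch, delay=1.0):
    """Call fetch(url) for every page URL and keep going when a page fails.

    Returns (records, errors), where errors holds (url, repr(exception)).
    """
    data, errors = [], []
    for i, url in enumerate(urls):
        if i:
            time.sleep(delay)  # pause between requests, not before the first one
        try:
            data.extend(fetch(url))
        except Exception as exc:
            # keep the cause so broken selectors are easy to spot later
            errors.append((url, repr(exc)))
    return data, errors


def fake_fetch(url):
    """Demo stand-in for get_data(): page 'p2' fails like a missing tag would."""
    if url == "p2":
        raise AttributeError("'NoneType' object has no attribute 'text'")
    return [{"url": url}, {"url": url}]


data, errors = collect(["p1", "p2", "p3"], fake_fetch, delay=0)
print(len(data))                # 4
print([u for u, _ in errors])   # ['p2']
```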

Data cleaning

Convert the data to a DataFrame. The data now needs interactive processing, which is clumsy in Sublime, so this part is run in Spyder.

```python
df = pd.DataFrame(datalst)
print(df)
```

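The output is:

First, handle the 评价 field. The listing below is sorted by 评价, which in effect sorts by score:

Few records are missing a score, and those books have few ratings anyway, so they are simply dropped. Every 评价 value without a score starts with "(". Keeping only the rows whose first character is not "(" therefore removes them: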

```python
df = df[df['评价'].str[0] != "("]
print(df)
```

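Output:

Then process the score (评分) and number-of-ratings (评价人数) fields, and extract the price. Not every book lists its page count, so the price is extracted instead: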

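```python
df = pd.DataFrame(datalst)
# drop books without a score (their 评价 text starts with "(");
# .copy() avoids pandas' SettingWithCopyWarning on the assignments below
df = df[df['评价'].str[0] != '('].copy()
# "score(count人评价)" -> score as float, count as int
df['评分'] = df['评价'].str.split("(").str[0].astype("float")
df['评价人数'] = df['评价'].str.split("(").str[1].str.split("人").str[0].astype('int')
del df['评价']
# the price is the last "/"-separated part of the 其他 line
df['价格'] = df['其他'].str.split('/').str[-1]
df.to_excel("豆瓣数据爬取.xlsx", index=False)  # writing .xlsx requires openpyxl
```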

The output is:
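The split chain above relies on every remaining 评价 value looking like `7.9(1234人评价)`. If one does not, `astype` raises an error and the whole conversion fails. A regex-based alternative returns `None` for anything unexpected instead.

Below is a minimal sketch. The names `parse_rating` and `parse_price` are hypothetical, and the `人评价` suffix is assumed from the format the split chain above expects. `parse_price` keeps only the first number, so the currency (元, USD and so on) is discarded.

```python
import re

# "7.9(1234人评价)": score, then the rating count in parentheses
RATING_RE = re.compile(r"^(\d+(?:\.\d+)?)\((\d+)人评价\)$")
PRICE_RE = re.compile(r"\d+(?:\.\d+)?")

def parse_rating(text):
    """Return (score, count), or None for strings such as '(少于10人评价)'."""
    m = RATING_RE.match(text)
    return (float(m.group(1)), int(m.group(2))) if m else None

def parse_price(text):
    """Return the first number in a price string as a float, or None."""
    m = PRICE_RE.search(text)
    return float(m.group()) if m else None


print(parse_rating("7.9(1234人评价)"))  # (7.9, 1234)
print(parse_rating("(少于10人评价)"))    # None
print(parse_price("39.80元"))           # 39.8
```

With pandas this could be applied as `df['评价'].map(parse_rating)`. Rows that map to `None` are the ones without a usable score.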

Full code and output

```python
import requests
from bs4 import BeautifulSoup
import pandas as pd

def get_url(n):
    '''
    [Paginated URL collection] function
    n: number of pages
    Returns: a list of paginated page URLs
    '''
    lst = []
    for i in range(n):
        ui = "https://book.douban.com/tag/%E7%94%B5%E5%BD%B1?start={}&type=T".format(i*20)
        #print(ui)
        lst.append(ui)
    return lst

def get_data(ui, d_h, d_c):
    '''
    [Data collection]
    ui: URL of the page holding the data
    d_h: request headers (User-Agent)
    d_c: cookies
    Returns: a list of records, each stored as a dict
    '''
    ri = requests.get(ui, headers=d_h, cookies=d_c)
    soup_i = BeautifulSoup(ri.text, 'lxml')  # requires the lxml package
    ul = soup_i.find('ul', class_='subject-list')
    lis = ul.find_all('li')
    lst = []
    for li in lis:
        dic = {}
        dic['标题'] = li.find("div", class_='info').h2.text.replace(" ","").replace("\n","")
        # dic['评分'] = li.find("span", class_='rating_nums').text.replace("(","")
        # dic['评价人数'] = li.find("span", class_='pl').text.replace(" ","").replace("\n","").replace("(","").replace(")","")
        # score and number of ratings together, split during cleaning
        dic['评价'] = li.find("div", class_='star clearfix').text.replace(" ","").replace("\n","")
        try:
            dic['简介'] = li.find("p").text.replace("\n",",")
        except AttributeError:
            continue  # no description: skip this book
        dic['其他'] = li.find("div", class_="pub").text.replace(" ","").replace("\n","")
        lst.append(dic)
    return lst

if __name__ == "__main__":
    urllst_1 = get_url(10)
    u1 = urllst_1[0]
    #print(u1)
    dic_heders = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/75.0.3770.100 Safari/537.36'
    }
    dic_cookies = {}
    cookies = 'll="108296"; bid=b9z-Z1JF8wQ; _vwo_uuid_v2=DDF408830197B90007427EFEAB67DF985|b500ed9e7e3b5f6efec01c709b7000c3; douban-fav-remind=1; __yadk_uid=2D7qqvQghjfgVOD0jdPUlybUNa2MBZbz; gr_user_id=5943e535-83de-4105-b840-65b7a0cc92e1; dbcl2="150296873:VHh1cXhumGU"; push_noty_num=0; push_doumail_num=0; __utmv=30149280.15029; __gads=ID=dcc053bfe97d2b3c:T=1579101910:S=ALNI_Ma5JEn6w7PLu-iTttZOFRZbG4sHCw; ct=y; Hm_lvt_cfafef0aa0076ffb1a7838fd772f844d=1579102240; __utmz=81379588.1579138975.5.5.utmcsr=baidu|utmccn=(organic)|utmcmd=organic; __utmz=30149280.1579162528.9.8.utmcsr=baidu|utmccn=(organic)|utmcmd=organic; ck=csBn; _pk_ref.100001.3ac3=%5B%22%22%2C%22%22%2C1581081161%2C%22https%3A%2F%2Fwww.baidu.com%2Flink%3Furl%3DNq2xYeTOYsYNs1a4LeFRmxqwD_0zDOBN253fDrX-5wRdwrQqUpYGFSmifESD4TLN%26wd%3D%26eqid%3De7868ab7001090b7000000035e1fbf95%22%5D; _pk_ses.100001.3ac3=*; __utma=30149280.195590675.1570957615.1581050101.1581081161.16; __utmc=30149280; __utma=81379588.834351582.1571800818.1581050101.1581081161.12; __utmc=81379588; ap_v=0,6.0; gr_session_id_22c937bbd8ebd703f2d8e9445f7dfd03=b6a046c7-15eb-4e77-bc26-3a7af29e68b1; gr_cs1_b6a046c7-15eb-4e77-bc26-3a7af29e68b1=user_id%3A1; gr_session_id_22c937bbd8ebd703f2d8e9445f7dfd03_b6a046c7-15eb-4e77-bc26-3a7af29e68b1=true; _pk_id.100001.3ac3=6ec264aefc5132a2.1571800818.12.1581082472.1581050101.; __utmb=30149280.7.10.1581081161; __utmb=81379588.7.10.1581081161'
    cookies_lst = cookies.split("; ")
    for i in cookies_lst:
        key, value = i.split("=", 1)  # split at the first "=" only
        dic_cookies[key] = value

    datalst = []
    errorlst = []
    for u in urllst_1:
        try:
            datalst.extend(get_data(u, dic_heders, dic_cookies))
            print("Collected {} records so far".format(len(datalst)))
        except Exception:
            errorlst.append(u)
            print("Data collection failed, URL:", u)

    df = pd.DataFrame(datalst)
    df = df[df['评价'].str[0] != '('].copy()  # drop books without a score
    df['评分'] = df['评价'].str.split("(").str[0].astype("float")
    df['评价人数'] = df['评价'].str.split("(").str[1].str.split("人").str[0].astype('int')
    del df['评价']
    df['价格'] = df['其他'].str.split('/').str[-1]
    df.to_excel("豆瓣数据爬取.xlsx", index=False)  # requires openpyxl
```

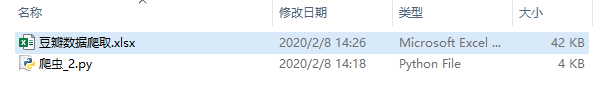

The data in the Excel file:
