Log Collection

GrayLog

Environment Setup

Configuration File

docker-compose.yaml

    version: '3'
    services:
      mongo:
        image: mongo:4.2
        networks:
          - graylog
      elasticsearch:
        image: docker.elastic.co/elasticsearch/elasticsearch-oss:7.10.2
        environment:
          - http.host=0.0.0.0
          - transport.host=localhost
          - network.host=0.0.0.0
          - "ES_JAVA_OPTS=-Dlog4j2.formatMsgNoLookups=true -Xms512m -Xmx512m"
        ulimits:
          memlock:
            soft: -1
            hard: -1
        deploy:
          resources:
            limits:
              memory: 1g
        ports:
          - 9200:9200
          - 9300:9300
        networks:
          - graylog
      graylog:
        image: graylog/graylog:4.2
        environment:
          - GRAYLOG_PASSWORD_SECRET=somepasswordpepper
          - GRAYLOG_ROOT_PASSWORD_SHA2=8c6976e5b5410415bde908bd4dee15dfb167a9c873fc4bb8a81f6f2ab448a918
          # Note: replace localhost with your server's IP
          - GRAYLOG_HTTP_EXTERNAL_URI=http://localhost:9090/
        entrypoint: /usr/bin/tini -- wait-for-it elasticsearch:9200 -- /docker-entrypoint.sh
        networks:
          - graylog
        depends_on:
          - mongo
          - elasticsearch
        ports:
          - 9090:9000
          - 1514:1514
          - 1514:1514/udp
          - 12201:12201
          - 12201:12201/udp
    networks:
      graylog:
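GRAYLOG_ROOT_PASSWORD_SHA2 holds the SHA-256 hash of the admin password, and the value in the file above is the hash of the password admin. To use a different password, the hash can be generated with a few lines of Python. This is a minimal sketch; the function name root_password_sha2 is made up for illustration.

```python
import hashlib


def root_password_sha2(password: str) -> str:
    """Return the hex SHA-256 digest of a password, the form GRAYLOG_ROOT_PASSWORD_SHA2 expects."""
    return hashlib.sha256(password.encode("utf-8")).hexdigest()


if __name__ == "__main__":
    # "admin" reproduces the hash used in the compose file.
    print(root_password_sha2("admin"))
```

Paste the printed value into the compose file and recreate the graylog container for the new password to take effect.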

Run

    docker-compose up -d

Access

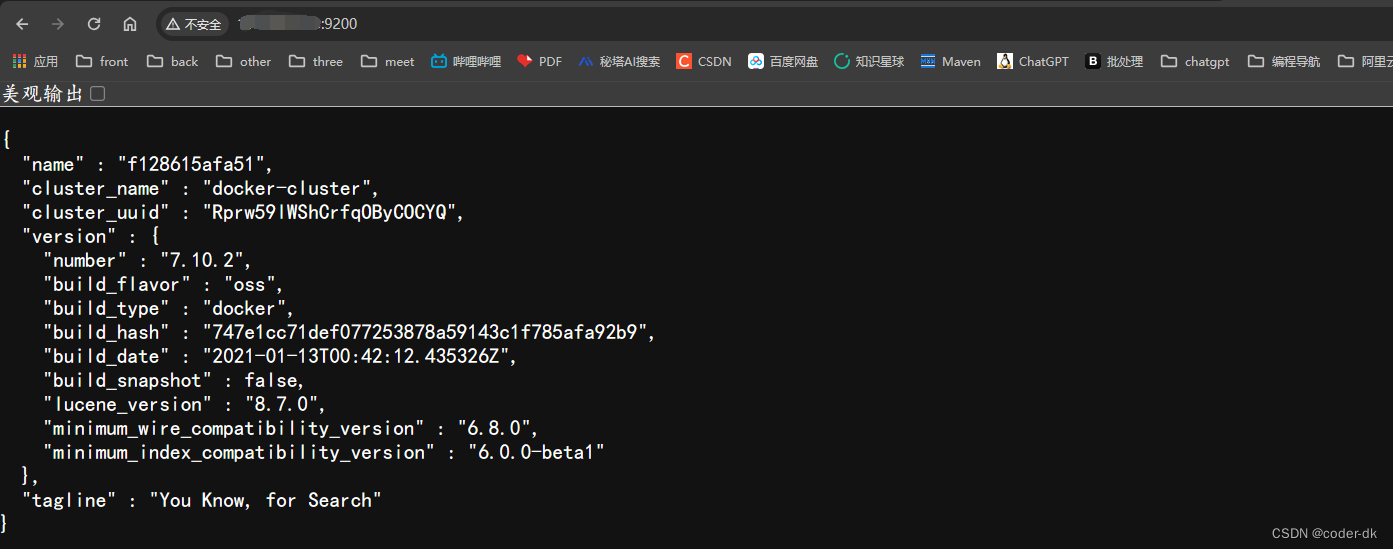

elasticsearch: http://ip:9200

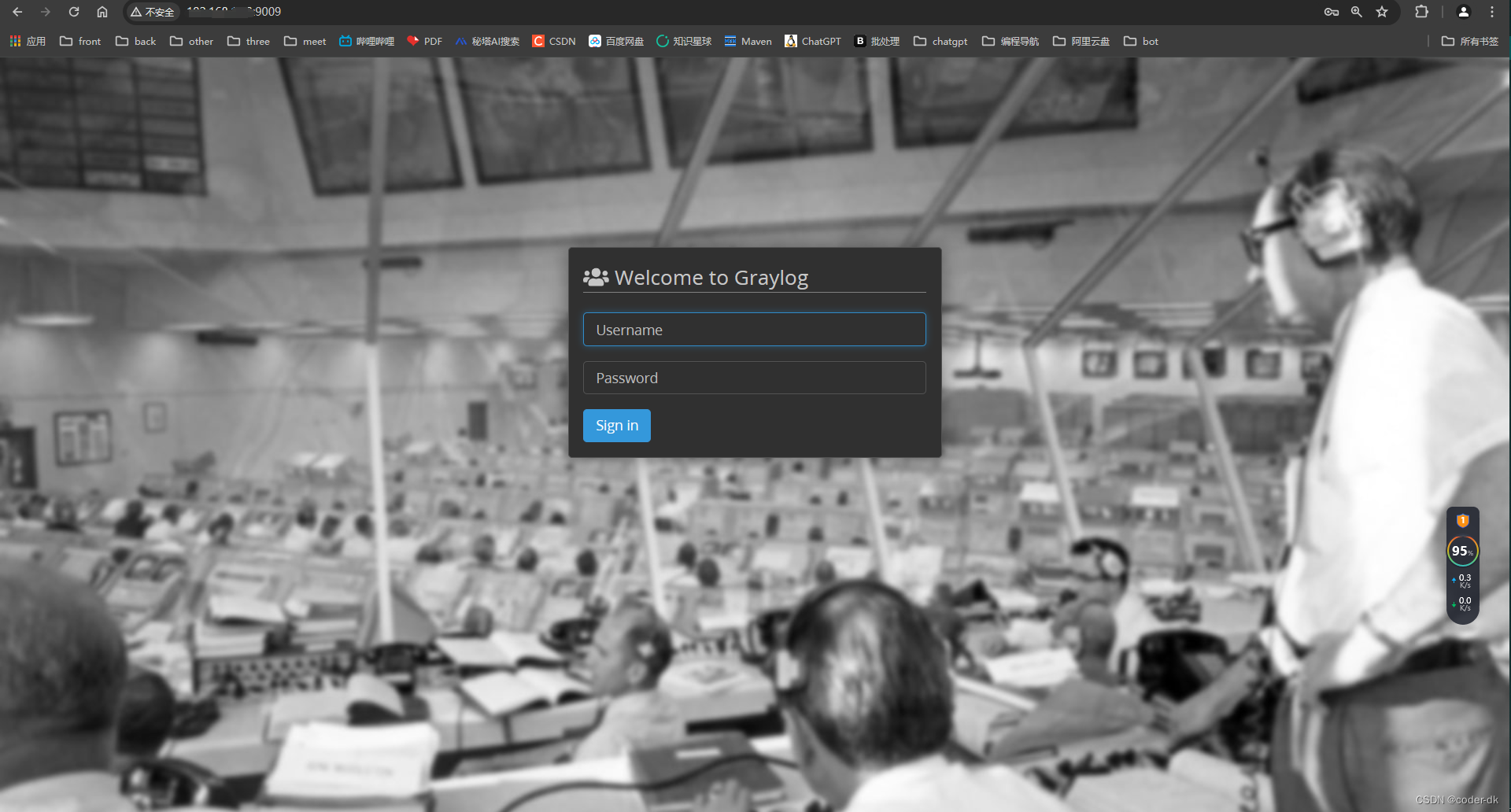

graylog: http://ip:9090 (host port 9090 is mapped to Graylog's port 9000 in the compose file)

Username/password: admin/admin

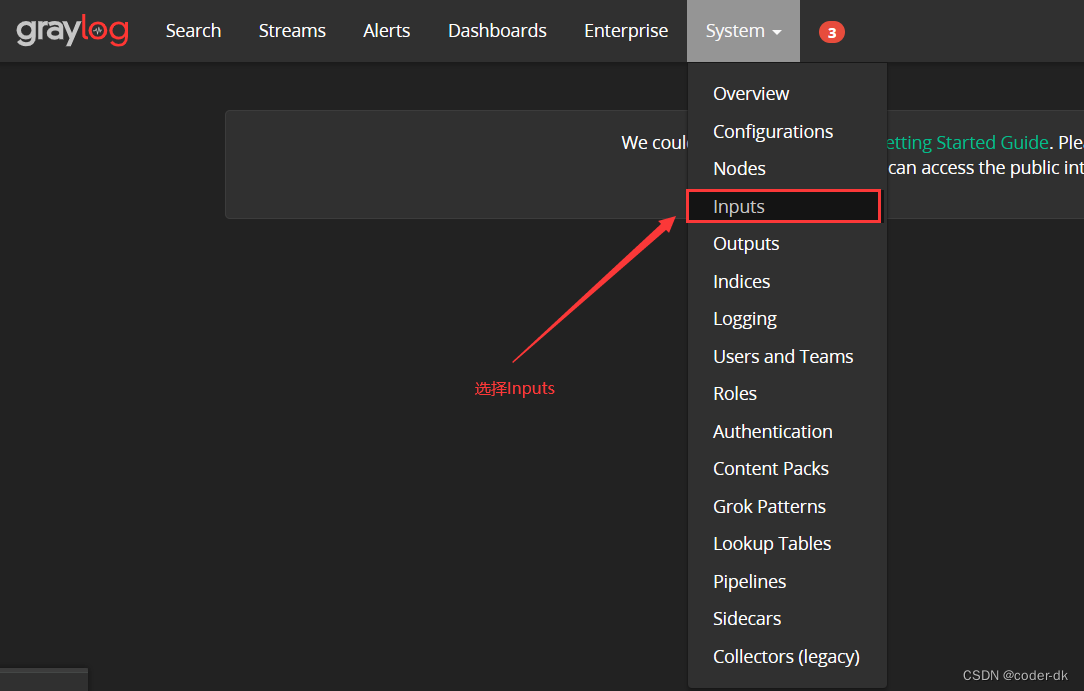

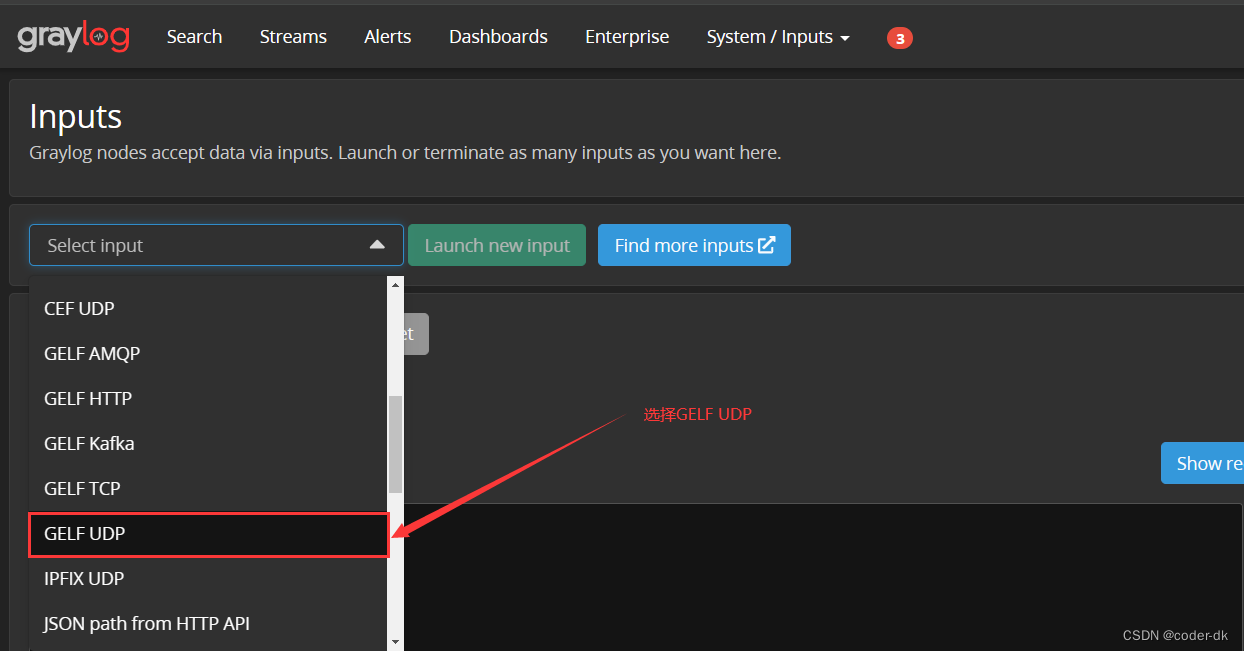

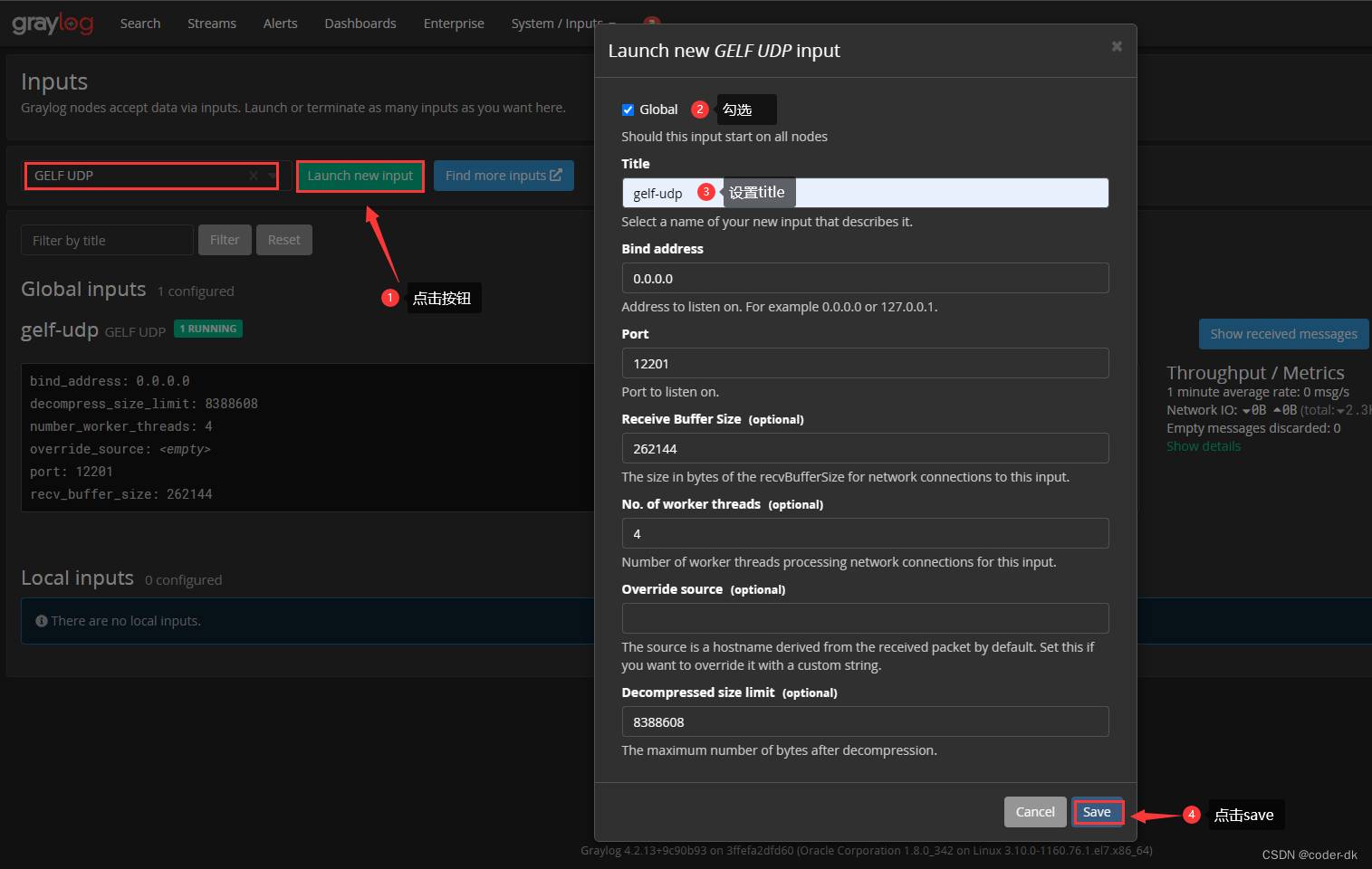

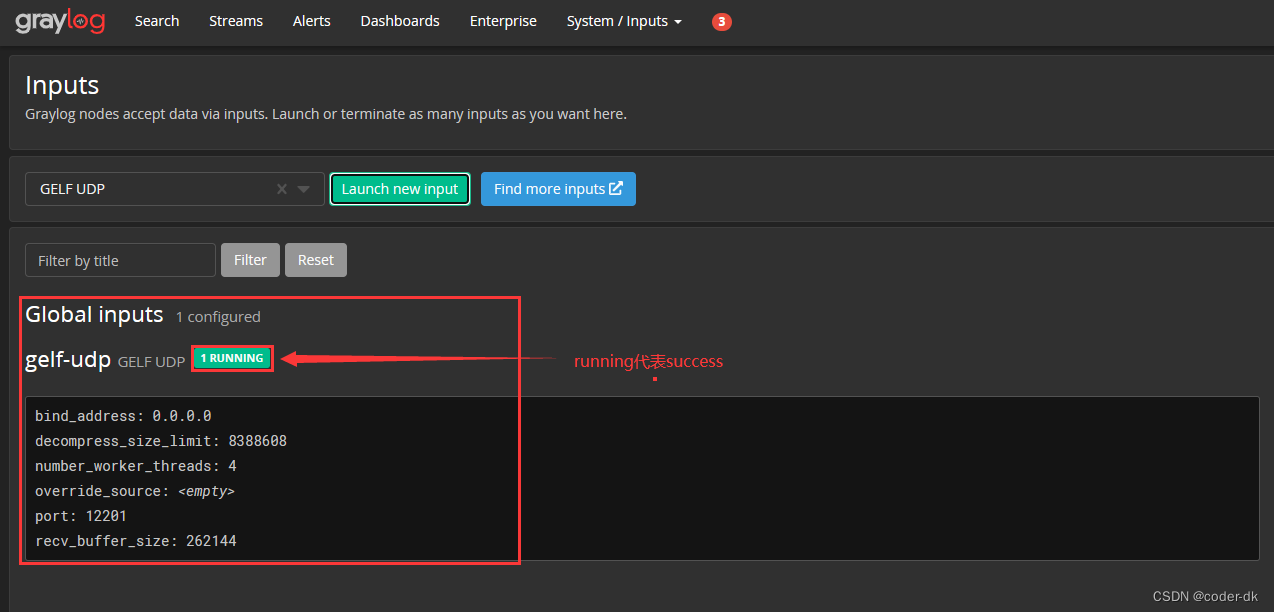

Create a UDP Input

- Select Inputs

- Select GELF UDP

- Set the title

- Check the status
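Once the input shows as running, it can be checked without the Spring Boot application by sending a single GELF message by hand. The sketch below builds an uncompressed GELF 1.1 JSON payload and sends it as one UDP datagram. The function names, the default host value test-client, and the extra field are illustrative assumptions, not part of the setup above.

```python
import json
import socket
import time


def build_gelf_payload(short_message: str, host: str = "test-client", **extra) -> bytes:
    """Build an uncompressed GELF 1.1 message as UTF-8 encoded JSON."""
    message = {
        "version": "1.1",
        "host": host,
        "short_message": short_message,
        "timestamp": time.time(),
        "level": 6,  # syslog severity 6 = informational
    }
    # GELF requires additional fields to carry an underscore prefix.
    for key, value in extra.items():
        message["_" + key] = value
    return json.dumps(message).encode("utf-8")


def send_gelf_udp(payload: bytes, graylog_host: str, port: int = 12201) -> None:
    """Send one GELF datagram to a GELF UDP input."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(payload, (graylog_host, port))
```

For example, send_gelf_udp(build_gelf_payload("hello graylog", app_name="austin"), "ip") with ip replaced by the Graylog server's address should make a message appear in the Graylog search view. UDP gives no delivery confirmation, so a missing message usually points to a firewall or port-mapping problem.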

Integrating SpringBoot with GrayLog

Add the Dependency

pom.xml

    <dependency>
        <groupId>de.siegmar</groupId>
        <artifactId>logback-gelf</artifactId>
        <version>3.0.0</version>
    </dependency>

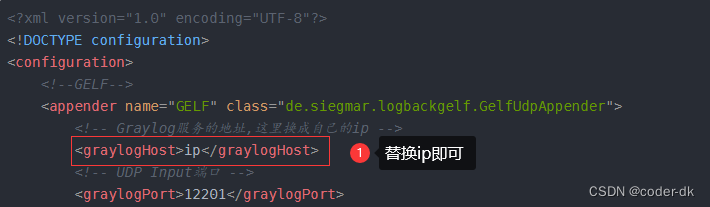

Configure logback-spring.xml

    <?xml version="1.0" encoding="UTF-8"?>
    <!DOCTYPE configuration>
    <configuration>
        <!--GELF-->
        <appender name="GELF" class="de.siegmar.logbackgelf.GelfUdpAppender">
            <!-- Address of the Graylog server; replace with your own IP -->
            <graylogHost>ip</graylogHost>
            <!-- Port of the UDP input -->
            <graylogPort>12201</graylogPort>
            <!-- Maximum GELF chunk size in bytes; 508 is the recommended minimum and 65467 is the maximum -->
            <maxChunkSize>508</maxChunkSize>
            <!-- Whether to use compression -->
            <useCompression>true</useCompression>
            <encoder class="de.siegmar.logbackgelf.GelfEncoder">
                <!-- Whether to send the raw log message -->
                <includeRawMessage>false</includeRawMessage>
                <includeMarker>true</includeMarker>
                <includeMdcData>true</includeMdcData>
                <includeCallerData>false</includeCallerData>
                <includeRootCauseData>false</includeRootCauseData>
                <!-- Whether to send the log level name; otherwise the level is sent as a number -->
                <includeLevelName>true</includeLevelName>
                <shortPatternLayout class="ch.qos.logback.classic.PatternLayout">
                    <pattern>%m%nopex</pattern>
                </shortPatternLayout>
                <fullPatternLayout class="ch.qos.logback.classic.PatternLayout">
                    <pattern>%d - [%thread] %-5level %logger{35} - %msg%n</pattern>
                </fullPatternLayout>
                <!-- Application (service) name; the staticField tag adds fixed custom fields to every log message -->
                <staticField>app_name:austin</staticField>
            </encoder>
        </appender>
        <include resource="org/springframework/boot/logging/logback/base.xml"/>
        <root level="INFO">
            <appender-ref ref="GELF" />
            <appender-ref ref="CONSOLE" />
        </root>
    </configuration>

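In the file above, the only value you need to change is ip: replace it with the address of your own Graylog server.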
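The maxChunkSize setting matters because a GELF message that does not fit into one UDP datagram is split into chunks. According to the GELF specification, each chunk starts with a 12-byte header: the magic bytes 0x1e 0x0f, an 8-byte message ID, a 1-byte sequence number, and a 1-byte sequence count. A message may use at most 128 chunks. The following is a rough sketch of that scheme, not the logback-gelf implementation. It assumes the size limit includes the header, which this article does not confirm for the library.

```python
import os
from typing import List

GELF_CHUNK_MAGIC = b"\x1e\x0f"
HEADER_LENGTH = 12  # 2 magic bytes + 8-byte message id + sequence number + sequence count
MAX_CHUNKS = 128


def chunk_gelf_message(message: bytes, max_chunk_size: int = 508) -> List[bytes]:
    """Split a GELF message into datagrams of at most max_chunk_size bytes."""
    if len(message) <= max_chunk_size:
        return [message]  # small messages are sent as-is, without a chunk header

    # Assumption: the configured limit counts header plus payload.
    payload_size = max_chunk_size - HEADER_LENGTH
    if payload_size <= 0:
        raise ValueError("max_chunk_size must be larger than the 12-byte header")

    parts = [message[i:i + payload_size] for i in range(0, len(message), payload_size)]
    if len(parts) > MAX_CHUNKS:
        raise ValueError("message needs more than 128 chunks")

    # Every chunk carries the same id so the receiver can reassemble the message.
    message_id = os.urandom(8)
    return [
        GELF_CHUNK_MAGIC + message_id + bytes([seq, len(parts)]) + part
        for seq, part in enumerate(parts)
    ]
```

Under these assumptions, a chunk size of 508 leaves 496 payload bytes per chunk, so one message is capped at 128 × 496 = 63,488 bytes after compression. Long stack traces can exceed that, which is one reason to raise maxChunkSize on networks that tolerate larger datagrams.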

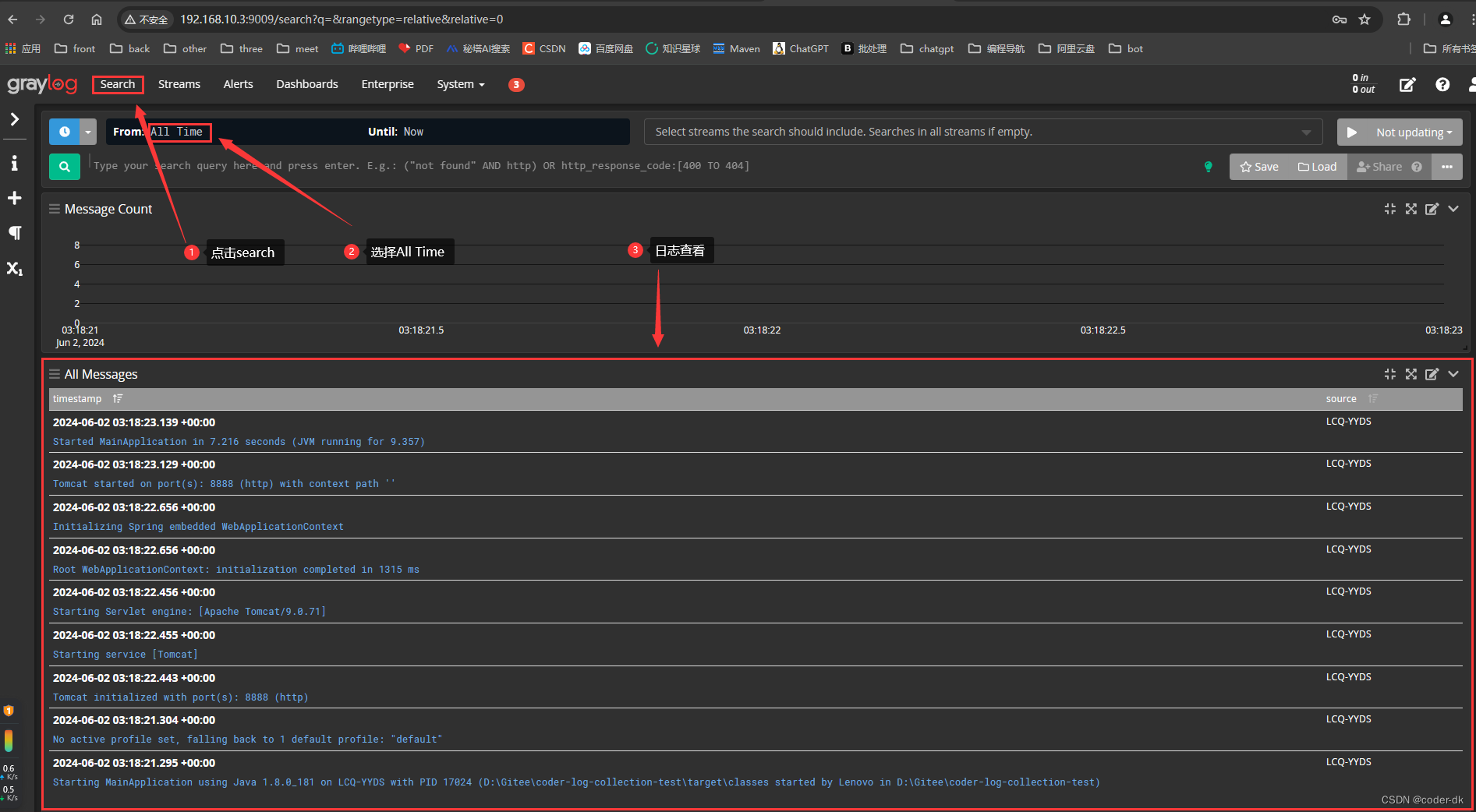

Start the project and check the logs

ELK

Environment Setup

Integrating SpringBoot with ELK

logstash.conf Configuration

    input {
      tcp {
        port => 5000
      }
    }

    filter {
      grok {
        ## keep_empty_captures => false,
        match => {
          "message" => "%{GREEDYDATA:app_name} %{TIMESTAMP_ISO8601:log_time} %{GREEDYDATA:log_thread} %{LOGLEVEL:log_level} %{GREEDYDATA:log_class} - %{GREEDYDATA:log_content} %{IP:log_ip}"
        }
      }
    }

    ## Add your filters / logstash plugins configuration here

    output {
      elasticsearch {
        hosts => "elasticsearch:9200"
        user => "logstash_internal"
        password => "${LOGSTASH_INTERNAL_PASSWORD}"
      }
    }
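The grok pattern expects each log line to be laid out as app name, ISO 8601 timestamp, thread, level, class, a literal " - ", the message content, and finally an IP address. To check a log format against that layout before wiring up Logstash, the pattern can be approximated with a regular expression. In this sketch the sub-patterns are simplified stand-ins for the real grok definitions, and the sample line in the usage note is hypothetical, since the application's log pattern is not shown here.

```python
import re

# Simplified stand-ins for the grok patterns; the real grok definitions accept more variants.
LOG_LINE = re.compile(
    r"(?P<app_name>.*) "
    r"(?P<log_time>\d{4}-\d{2}-\d{2}[T ]\d{2}:\d{2}:\d{2}(?:[.,]\d+)?) "
    r"(?P<log_thread>.*) "
    r"(?P<log_level>TRACE|DEBUG|INFO|WARN|ERROR|FATAL) "
    r"(?P<log_class>.*) - "
    r"(?P<log_content>.*) "
    r"(?P<log_ip>\d{1,3}(?:\.\d{1,3}){3})"
)


def parse_log_line(line: str) -> dict:
    """Return the captured fields, or an empty dict if the line does not match."""
    match = LOG_LINE.search(line)
    return match.groupdict() if match else {}
```

Calling parse_log_line("austin 2024-06-05 14:00:00.123 [main] INFO com.example.Demo - 收到消息 192.168.1.10") yields the same field names that the KQL queries in the next section rely on: app_name, log_time, log_thread, log_level, log_class, log_content, and log_ip. A line that lacks the trailing IP address does not match, and in Logstash such events are tagged with _grokparsefailure.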

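Common KQL Queries

Content starting with 收到 ("received")

    log_content : 收到*

Later than a given time

    log_time > "2024-06-05 14:00:00"

A specific day and hour

    log_time : *2024-06-05 16*

Fuzzy (substring) match

    log_content : *大华*

Fields that are not empty

    app_name : *

Query by IP

    log_ip : 192.169.11.45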
