Principle

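The basic idea of a Residual Network is skip propagation. It starts from a conventional CNN, which serves as the main path of the Residual Network, and adds a new propagation channel, the shortcut (skip connection), as shown in the figure below: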
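Written as equations, the two kinds of block described below compute the following. This is an illustrative summary in the notation of the code later in this post: $\mathcal{F}$ stands for the stacked CONV/BN/ReLU layers of the main path, and $W_s$ for the 1×1, stride-$s$ convolution applied on the shortcut.

$$
Y = \mathrm{ReLU}\big(\mathcal{F}(X) + X\big) \qquad \text{(shape unchanged: identity shortcut)}
$$

$$
Y = \mathrm{ReLU}\big(\mathcal{F}(X) + \mathrm{BN}(W_s * X)\big) \qquad \text{(shape changed: projection shortcut)}
$$

In both cases the shortcut term must have exactly the same shape as $\mathcal{F}(X)$, because the two are added element-wise.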

- Batch Normalization (BN) layers can be inserted in between to speed up training (optional).

- How many layers a shortcut skips is your choice; in general, the deeper the network, the more layers each shortcut skips.

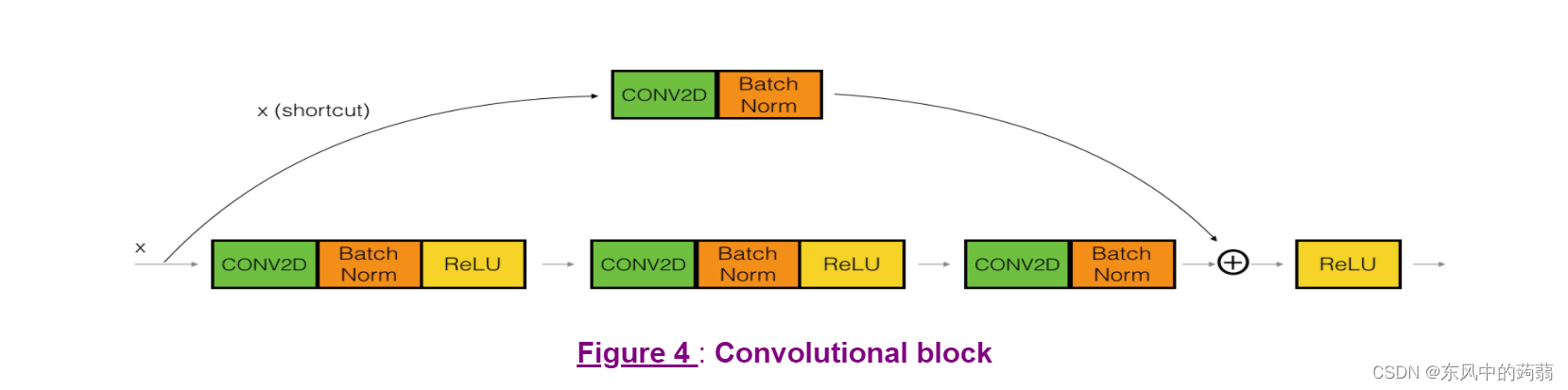

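- There are two kinds of shortcut. In the first, $X$ keeps its shape after passing through these layers, so it can be added directly. In the second, the shape of $X$ changes after several CONV layers (as in the first two images), so $X$ itself must be transformed by a CONV layer before the addition (the third image).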

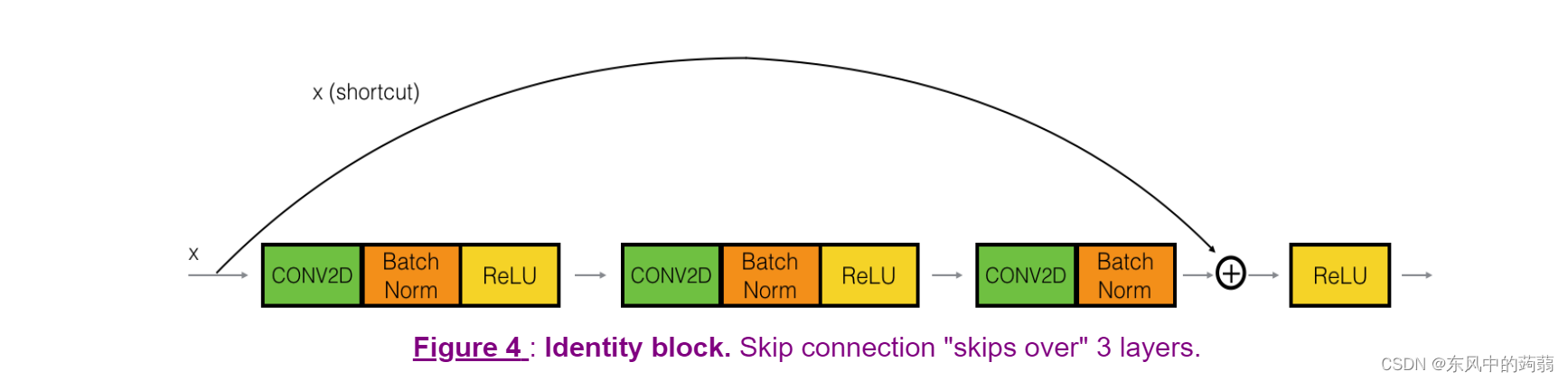

- We wrap the layers spanned by one shortcut into a block. If the block's input and output have the same shape (the unchanged-shape case above), it is called an Identity Block; if the shape changes, it is called a Convolutional Block.
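To make the two shortcut types concrete, here is a small pure-Python sketch (no Keras; the nested-list layout and every function name are illustrative assumptions). It adds the shortcut directly when shapes match, and otherwise first applies a 1×1, stride-s projection, the same kind of layer the `convolutional_block` code later in this post puts on its shortcut path. Batch normalization is left out to keep it short.

```python
# Pure-Python sketch of the two shortcut types (illustration only, not Keras).
# An "image" is a nested list x[h][w][c] (one example, no batch axis).
# All function names are hypothetical; BatchNormalization is omitted.

def shape(x):
    """Return (H, W, C) of a nested-list image."""
    return (len(x), len(x[0]), len(x[0][0]))


def conv1x1(x, weights, stride=1):
    """1x1 convolution, 'valid' padding, no bias.

    weights[c_in][c_out]. Each output pixel is a linear mix of the input
    channels, taken at every stride-th row and column, so
    H_out = ceil(H / stride) and C_out = len(weights[0]).
    """
    H, W, C = shape(x)
    c_out = len(weights[0])
    return [[[sum(x[i][j][c] * weights[c][k] for c in range(C))
              for k in range(c_out)]
             for j in range(0, W, stride)]
            for i in range(0, H, stride)]


def add(a, b):
    """Element-wise sum, like Keras Add(): only defined for equal shapes."""
    if shape(a) != shape(b):
        raise ValueError(f"shape mismatch: {shape(a)} vs {shape(b)}")
    return [[[p + q for p, q in zip(pa, pb)] for pa, pb in zip(ra, rb)]
            for ra, rb in zip(a, b)]


def relu(x):
    return [[[max(0.0, v) for v in px] for px in row] for row in x]


def residual_output(main_out, x, proj_weights=None, stride=1):
    """ReLU(main_out + shortcut(x)).

    Identity shortcut when the shapes already match; otherwise x is
    projected with a strided 1x1 convolution before the addition.
    """
    if shape(main_out) == shape(x):
        shortcut = x                                  # identity shortcut
    else:
        shortcut = conv1x1(x, proj_weights, stride)   # projection shortcut
    return relu(add(main_out, shortcut))


# Usage: a 4x4x2 input whose every pixel is [1.0, 2.0]
x = [[[1.0, 2.0] for _ in range(4)] for _ in range(4)]

# Case 1: the main path kept the shape, so x is added directly
same = [[[0.5, -3.0] for _ in range(4)] for _ in range(4)]
y1 = residual_output(same, x)
print(shape(y1), y1[0][0])   # (4, 4, 2) [1.5, 0.0]

# Case 2: the main path halved H, W and produced 3 channels, so x is projected
small = [[[0.0, 0.0, -10.0] for _ in range(2)] for _ in range(2)]
w = [[1.0, 0.0, 1.0],
     [0.0, 1.0, 1.0]]        # maps 2 input channels to 3
y2 = residual_output(small, x, proj_weights=w, stride=2)
print(shape(y2), y2[1][1])   # (2, 2, 3) [1.0, 2.0, 0.0]
```

Taking every `stride`-th pixel and mixing its channels is what a 1×1 convolution with 'valid' padding does, which is why a single such layer can fix both the spatial size and the channel count of the shortcut.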

Identity Block

```python
# Imports used by the code in this post (TensorFlow 2.x Keras API)
from tensorflow.keras.layers import (Input, Add, Dense, Activation, ZeroPadding2D, BatchNormalization,
                                     Flatten, Conv2D, AveragePooling2D, MaxPooling2D)
from tensorflow.keras.models import Model
from tensorflow.keras.initializers import random_uniform, glorot_uniform


def identity_block(X, f, filters, training=True, initializer=random_uniform):
    """
    Implementation of the identity block as defined in Figure 4

    Arguments:
    X -- input tensor of shape (m, n_H_prev, n_W_prev, n_C_prev)
    f -- integer, specifying the shape of the middle CONV's window for the main path
    filters -- python list of integers, defining the number of filters in the CONV layers of the main path
    training -- True: Behave in training mode
                False: Behave in inference mode
    initializer -- to set up the initial weights of a layer. Equals to random uniform initializer

    Returns:
    X -- output of the identity block, tensor of shape (m, n_H, n_W, n_C)
    """
    # Retrieve Filters
    F1, F2, F3 = filters

    # Save the input value. You'll need this later to add back to the main path.
    X_shortcut = X

    # First component of main path: 1x1 conv
    X = Conv2D(filters = F1, kernel_size = 1, strides = (1,1), padding = 'valid', kernel_initializer = initializer(seed=0))(X)
    X = BatchNormalization(axis = 3)(X, training = training)  # axis=3 is the channels axis of NHWC input
    X = Activation('relu')(X)

    # Second component of main path: f x f conv, 'same' padding keeps n_H and n_W
    X = Conv2D(filters=F2, kernel_size=f, strides=(1,1), padding='same', kernel_initializer=initializer(seed=0))(X)
    X = BatchNormalization(axis=3)(X, training=training)
    X = Activation('relu')(X)

    # Third component of main path: 1x1 conv to F3 channels (F3 must equal n_C_prev for the addition)
    X = Conv2D(filters=F3, kernel_size=1, strides=(1,1), padding='valid', kernel_initializer=initializer(seed=0))(X)
    X = BatchNormalization(axis=3)(X, training=training)

    # Final step: Add shortcut value to main path, and pass it through a RELU activation
    X = Add()([X, X_shortcut])
    X = Activation('relu')(X)

    return X
```
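A quick way to sanity-check `identity_block` without building a model is to work out its output shape and parameter count by hand. The plain-Python sketch below does that; the helper names are hypothetical, and it assumes the Keras defaults used above: `Conv2D` has a bias per filter, and `BatchNormalization` keeps four values per channel (gamma and beta, plus the moving mean and variance that Keras reports as non-trainable).

```python
# Hypothetical helpers (not part of Keras): shape and parameter count of identity_block.

def conv_params(k, c_in, c_out):
    """k x k Conv2D: weights plus one bias per filter (use_bias=True is the Keras default)."""
    return k * k * c_in * c_out + c_out


def bn_params(c):
    """BatchNormalization: gamma, beta, moving mean and moving variance per channel."""
    return 4 * c


def identity_block_summary(input_shape, f, filters):
    """Return (output_shape, total_params) of identity_block, ignoring the batch axis m."""
    n_H, n_W, n_C_prev = input_shape
    F1, F2, F3 = filters
    # All three convs use stride 1 ('valid' 1x1 and 'same' f x f), so n_H and n_W are kept;
    # for Add() to work, only the channel count still has to match.
    if F3 != n_C_prev:
        raise ValueError(f"identity block needs F3 == n_C_prev, got {F3} != {n_C_prev}")
    total = (conv_params(1, n_C_prev, F1) + bn_params(F1)
             + conv_params(f, F1, F2) + bn_params(F2)
             + conv_params(1, F2, F3) + bn_params(F3))
    return (n_H, n_W, F3), total


# Example: filters [64, 64, 256] on a 15x15x256 input
print(identity_block_summary((15, 15, 256), 3, [64, 64, 256]))   # ((15, 15, 256), 71552)
```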

Convolutional Block

```python
def convolutional_block(X, f, filters, s = 2, training=True, initializer=glorot_uniform):
    """
    Implementation of the convolutional block as defined in Figure 4

    Arguments:
    X -- input tensor of shape (m, n_H_prev, n_W_prev, n_C_prev)
    f -- integer, specifying the shape of the middle CONV's window for the main path
    filters -- python list of integers, defining the number of filters in the CONV layers of the main path
    s -- Integer, specifying the stride to be used
    training -- True: Behave in training mode
                False: Behave in inference mode
    initializer -- to set up the initial weights of a layer. Equals to Glorot uniform initializer,
                   also called Xavier uniform initializer.

    Returns:
    X -- output of the convolutional block, tensor of shape (m, n_H, n_W, n_C)
    """
    # Retrieve Filters
    F1, F2, F3 = filters

    # Save the input value
    X_shortcut = X

    ##### MAIN PATH #####
    # First component of main path: 1x1 conv with stride s (downsamples n_H and n_W)
    X = Conv2D(filters = F1, kernel_size = 1, strides = (s, s), padding='valid', kernel_initializer = initializer(seed=0))(X)
    X = BatchNormalization(axis = 3)(X, training=training)
    X = Activation('relu')(X)

    # Second component of main path
    X = Conv2D(filters=F2, kernel_size=f, strides=(1,1), padding='same', kernel_initializer=initializer(seed=0))(X)
    X = BatchNormalization(axis=3)(X, training=training)
    X = Activation('relu')(X)

    # Third component of main path
    X = Conv2D(filters=F3, kernel_size=1, strides=(1,1), padding='valid', kernel_initializer=initializer(seed=0))(X)
    X = BatchNormalization(axis=3)(X, training=training)

    ##### SHORTCUT PATH #####
    # 1x1 conv with the same stride s and F3 filters, so the shortcut matches the main path's shape
    X_shortcut = Conv2D(filters=F3, kernel_size=1, strides=(s,s), padding='valid', kernel_initializer=initializer(seed=0))(X_shortcut)
    X_shortcut = BatchNormalization(axis=3)(X_shortcut, training=training)

    # Final step: Add shortcut value to main path (Use this order [X, X_shortcut]), and pass it through a RELU activation
    X = Add()([X, X_shortcut])
    X = Activation('relu')(X)

    return X
```
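The same bookkeeping for `convolutional_block` shows why the shortcut needs its own 1×1 convolution: the first main-path conv changes both the spatial size (stride `s`) and, through `F3`, the channel count, so the raw input can no longer be added. This is a plain-Python sketch with hypothetical helper names, assuming the Keras defaults already used in the code (bias in every `Conv2D`, four values per channel in `BatchNormalization`).

```python
# Hypothetical helpers (not part of Keras): shape and parameter count of convolutional_block.

def conv_params(k, c_in, c_out):
    """k x k Conv2D: weights plus one bias per filter (Keras default use_bias=True)."""
    return k * k * c_in * c_out + c_out


def bn_params(c):
    """BatchNormalization: gamma, beta, moving mean and moving variance per channel."""
    return 4 * c


def conv_out(n, k, s, padding):
    """Output length along one spatial axis of a Keras conv layer."""
    if padding == "valid":
        return (n - k) // s + 1
    if padding == "same":
        return -(-n // s)          # ceil(n / s)
    raise ValueError(padding)


def convolutional_block_summary(input_shape, f, filters, s=2):
    """Return (output_shape, total_params) of convolutional_block, ignoring the batch axis m."""
    n_H, n_W, n_C_prev = input_shape
    F1, F2, F3 = filters
    # Main path: 1x1 stride s 'valid' -> f x f stride 1 'same' -> 1x1 stride 1 'valid'
    h, w = conv_out(n_H, 1, s, "valid"), conv_out(n_W, 1, s, "valid")
    h, w = conv_out(h, f, 1, "same"), conv_out(w, f, 1, "same")
    h, w = conv_out(h, 1, 1, "valid"), conv_out(w, 1, 1, "valid")
    # Shortcut path: a single 1x1 stride s 'valid' conv with F3 filters
    hs, ws = conv_out(n_H, 1, s, "valid"), conv_out(n_W, 1, s, "valid")
    if (h, w) != (hs, ws):
        raise ValueError("main path and shortcut have different spatial sizes")
    main = (conv_params(1, n_C_prev, F1) + bn_params(F1)
            + conv_params(f, F1, F2) + bn_params(F2)
            + conv_params(1, F2, F3) + bn_params(F3))
    shortcut = conv_params(1, n_C_prev, F3) + bn_params(F3)
    return (h, w, F3), main + shortcut


print(convolutional_block_summary((15, 15, 64), 3, [64, 64, 256], s=1))     # ((15, 15, 256), 76928)
print(convolutional_block_summary((15, 15, 256), 3, [128, 128, 512], s=2))  # ((8, 8, 512), 383232)
```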

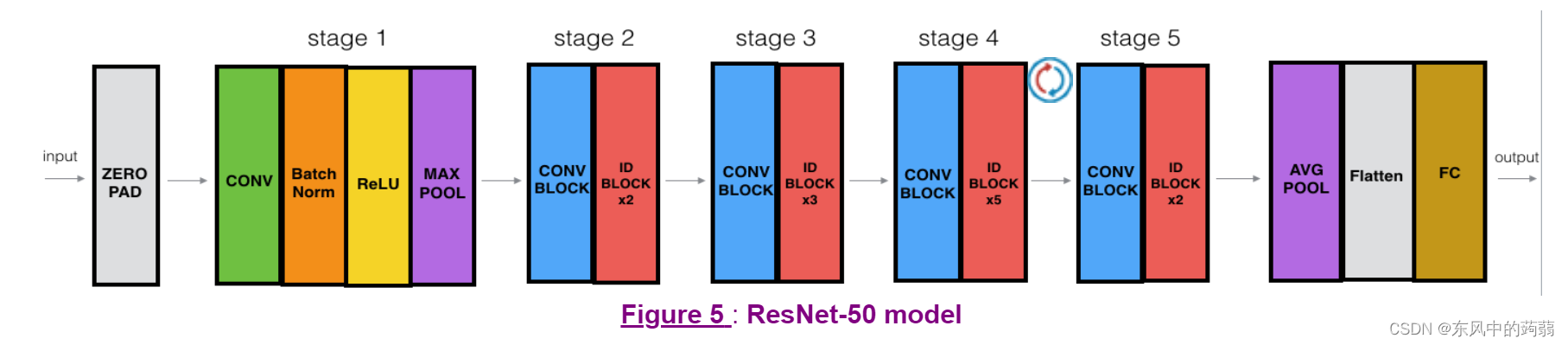

ResNet50 (50 layers)

```python
def ResNet50(input_shape = (64, 64, 3), classes = 6):
    """
    Stage-wise implementation of the architecture of the popular ResNet50:
    CONV2D -> BATCHNORM -> RELU -> MAXPOOL -> CONVBLOCK -> IDBLOCK*2 -> CONVBLOCK -> IDBLOCK*3
    -> CONVBLOCK -> IDBLOCK*5 -> CONVBLOCK -> IDBLOCK*2 -> AVGPOOL -> FLATTEN -> DENSE

    Arguments:
    input_shape -- shape of the images of the dataset
    classes -- integer, number of classes

    Returns:
    model -- a Model() instance in Keras
    """
    # Define the input as a tensor with shape input_shape
    X_input = Input(input_shape)

    # Zero-Padding
    X = ZeroPadding2D((3, 3))(X_input)

    # Stage 1
    X = Conv2D(64, (7, 7), strides = (2, 2), kernel_initializer = glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis = 3)(X)
    X = Activation('relu')(X)
    X = MaxPooling2D((3, 3), strides=(2, 2))(X)

    # Stage 2
    X = convolutional_block(X, f = 3, filters = [64, 64, 256], s = 1)
    X = identity_block(X, 3, [64, 64, 256])
    X = identity_block(X, 3, [64, 64, 256])

    # Stage 3
    X = convolutional_block(X, f=3, s=2, filters=[128, 128, 512])
    X = identity_block(X, 3, [128, 128, 512])
    X = identity_block(X, 3, [128, 128, 512])
    X = identity_block(X, 3, [128, 128, 512])

    # Stage 4
    X = convolutional_block(X, f=3, s=2, filters=[256, 256, 1024])
    X = identity_block(X, 3, [256, 256, 1024])
    X = identity_block(X, 3, [256, 256, 1024])
    X = identity_block(X, 3, [256, 256, 1024])
    X = identity_block(X, 3, [256, 256, 1024])
    X = identity_block(X, 3, [256, 256, 1024])

    # Stage 5
    X = convolutional_block(X, f=3, s=2, filters=[512, 512, 2048])
    X = identity_block(X, 3, [512, 512, 2048])
    X = identity_block(X, 3, [512, 512, 2048])

    # AVGPOOL
    X = AveragePooling2D(pool_size=(2, 2))(X)

    # Output layer
    X = Flatten()(X)
    X = Dense(classes, activation='softmax', kernel_initializer = glorot_uniform(seed=0))(X)

    # Create model
    model = Model(inputs = X_input, outputs = X)

    return model
```
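Tracing the spatial size through the stages explains two details of `ResNet50`: why `AveragePooling2D(pool_size=(2, 2))` is enough to reach 1×1 for a 64×64×3 input, and where the "50" comes from (1 stage-1 convolution, plus 3 convolutions in each of the 3 + 4 + 6 + 3 = 16 blocks, plus 1 Dense layer; by the usual convention the 1×1 shortcut projections are not counted). The sketch is plain Python with hypothetical names, and assumes the layer settings in the code above: 'valid' padding by default, and pooling strides defaulting to the pool size when not given.

```python
# Hypothetical shape trace of the ResNet50 above (plain Python, no Keras).

def conv_out(n, k, s):
    """Output length of a 'valid' conv/pool window of size k and stride s."""
    return (n - k) // s + 1


def resnet50_trace(input_shape=(64, 64, 3), classes=6):
    h, w, c = input_shape
    trace = []
    h, w = h + 6, w + 6                                   # ZeroPadding2D((3, 3))
    trace.append(("zero_pad", (h, w, c)))
    h, w, c = conv_out(h, 7, 2), conv_out(w, 7, 2), 64    # Conv2D 7x7, stride 2
    trace.append(("stage1_conv", (h, w, c)))
    h, w = conv_out(h, 3, 2), conv_out(w, 3, 2)           # MaxPooling2D 3x3, stride 2
    trace.append(("stage1_maxpool", (h, w, c)))

    # (stride of the convolutional block, F3, number of blocks in the stage)
    stages = [(1, 256, 3), (2, 512, 4), (2, 1024, 6), (2, 2048, 3)]
    weighted = 1                                          # the stage-1 conv
    for i, (s, f3, n_blocks) in enumerate(stages, start=2):
        # Only the first 1x1 conv (stride s) changes n_H, n_W; F3 sets the channels
        h, w, c = conv_out(h, 1, s), conv_out(w, 1, s), f3
        weighted += 3 * n_blocks                          # 3 main-path convs per block
        trace.append((f"stage{i}", (h, w, c)))

    h, w = conv_out(h, 2, 2), conv_out(w, 2, 2)           # AveragePooling2D((2, 2))
    trace.append(("avgpool", (h, w, c)))
    trace.append(("flatten", (h * w * c,)))
    trace.append(("dense", (classes,)))
    weighted += 1                                         # the Dense layer
    return trace, weighted


trace, n_layers = resnet50_trace()
for name, shp in trace:
    print(f"{name:15s} {shp}")
print("weighted layers:", n_layers)   # 50
```

For the default input this gives 15×15×64 after max pooling, then 15×15×256, 8×8×512, 4×4×1024 and 2×2×2048 at the ends of stages 2 to 5, so the final 2×2 average pool leaves a 1×1×2048 tensor that flattens to 2048 features.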

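```python
# Build the model, then train and evaluate it.
# X_train, Y_train, X_test, Y_test are assumed to be loaded and preprocessed already,
# with labels one-hot encoded (categorical_crossentropy expects one-hot targets).
model = ResNet50(input_shape = (64, 64, 3), classes = 6)
model.compile(optimizer='adam', loss='categorical_crossentropy', metrics=['accuracy'])
model.fit(X_train, Y_train, epochs = 10, batch_size = 32)

preds = model.evaluate(X_test, Y_test)  # [loss, accuracy]
print ("Loss = " + str(preds[0]))
print ("Test Accuracy = " + str(preds[1]))
```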
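`model.evaluate` returns the loss followed by the compiled metrics, which is why `preds[0]` is the loss and `preds[1]` the accuracy. To make those two numbers concrete, here is a pure-Python sketch of what they measure for softmax outputs and one-hot labels. It is an approximation, not Keras's implementation: the function names are hypothetical, the clipping constant `eps=1e-7` is an assumption modeled on the usual Keras default, and with this loss Keras interprets `'accuracy'` as categorical (argmax) accuracy.

```python
import math


def softmax(logits):
    """Numerically stable softmax of one example's logits (what the final Dense layer applies)."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def categorical_crossentropy(y_true, y_pred, eps=1e-7):
    """Mean over examples of -sum_k y_k * log(p_k), with p clipped to [eps, 1 - eps]."""
    losses = [-sum(t * math.log(min(max(p, eps), 1 - eps)) for t, p in zip(ts, ps))
              for ts, ps in zip(y_true, y_pred)]
    return sum(losses) / len(losses)


def categorical_accuracy(y_true, y_pred):
    """Fraction of examples whose predicted argmax equals the one-hot label's argmax."""
    hits = sum(ts.index(max(ts)) == ps.index(max(ps)) for ts, ps in zip(y_true, y_pred))
    return hits / len(y_true)


# Two examples, 3 classes (the ResNet50 above uses classes=6)
Y = [[0, 1, 0],
     [1, 0, 0]]
P = [softmax([0.0, 2.0, 0.0]),   # argmax is class 1, label is class 1 -> correct
     [0.3, 0.6, 0.1]]            # argmax is class 1, label is class 0 -> wrong
print("Loss =", categorical_crossentropy(Y, P))
print("Accuracy =", categorical_accuracy(Y, P))   # 0.5
```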
