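Artificial Intelligence, Assignment 5: Convolution, Pooling, Activation

Assignment link

- For-loop version: implementing convolution, pooling, and activation by hand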

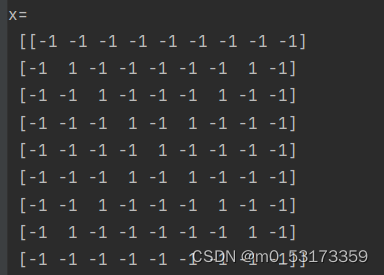

import numpy as np

# 9x9 input image: an "X" drawn with +1 on a background of -1
x = np.array([[-1, -1, -1, -1, -1, -1, -1, -1, -1],
              [-1, 1, -1, -1, -1, -1, -1, 1, -1],
              [-1, -1, 1, -1, -1, -1, 1, -1, -1],
              [-1, -1, -1, 1, -1, 1, -1, -1, -1],
              [-1, -1, -1, -1, 1, -1, -1, -1, -1],
              [-1, -1, -1, 1, -1, 1, -1, -1, -1],
              [-1, -1, 1, -1, -1, -1, 1, -1, -1],
              [-1, 1, -1, -1, -1, -1, -1, 1, -1],
              [-1, -1, -1, -1, -1, -1, -1, -1, -1]])
print("x=\n", x)

# Three 3x3 kernels: main diagonal, X shape, and anti-diagonal
Kernel = [[0 for i in range(0, 3)] for j in range(0, 3)]
Kernel[0] = np.array([[1, -1, -1],
                      [-1, 1, -1],
                      [-1, -1, 1]])
Kernel[1] = np.array([[1, -1, 1],
                      [-1, 1, -1],
                      [1, -1, 1]])
Kernel[2] = np.array([[-1, -1, 1],
                      [-1, 1, -1],
                      [1, -1, -1]])

# A 9x9 input and a 3x3 kernel with stride 1 give a 7x7 feature map
stride = 1
feature_map_h = 7
feature_map_w = 7
feature_map = [0 for i in range(0, 3)]

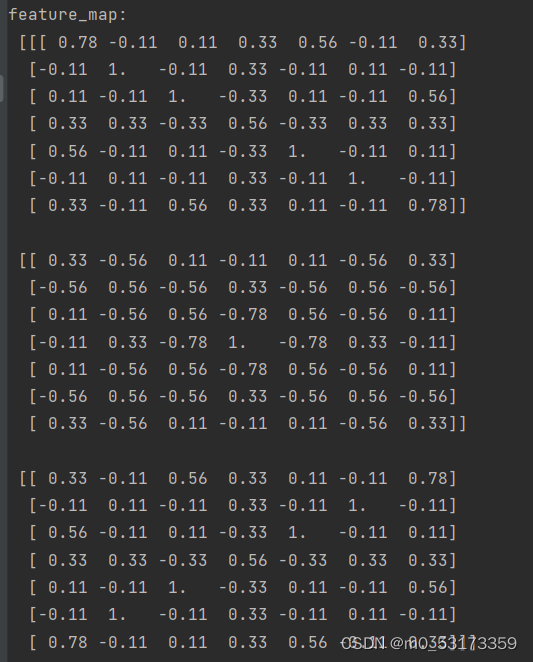

# Allocate one 7x7 feature map per kernel
for i in range(0, 3):
    feature_map[i] = np.zeros((feature_map_h, feature_map_w))

# Slide a 3x3 window over the input; each output value is the sum of the
# element-wise products of window and kernel, divided by 9
for h in range(feature_map_h):
    for w in range(feature_map_w):
        v_start = h * stride
        v_end = v_start + 3
        h_start = w * stride
        h_end = h_start + 3
        window = x[v_start:v_end, h_start:h_end]
        for i in range(0, 3):
            feature_map[i][h, w] = np.divide(np.sum(np.multiply(window, Kernel[i][:, :])), 9)

print("feature_map:\n", np.around(feature_map, decimals=2))
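The hard-coded `feature_map_h = 7` and `feature_map_w = 7` are consistent with the usual output-size formula for a convolution. A minimal sketch of that formula, where `conv_output_size` is a hypothetical helper name introduced only for illustration:

```python
def conv_output_size(input_size, kernel_size, stride=1, padding=0):
    """Output length along one axis: floor((n + 2p - k) / s) + 1."""
    return (input_size + 2 * padding - kernel_size) // stride + 1

# 9x9 input, 3x3 kernel, stride 1, no padding -> 7x7 feature map
print(conv_output_size(9, 3, stride=1))
```

The same helper gives 4 for an 8-wide input with a 2-wide window and stride 2, which is the size the pooling step works with after padding.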

# Max pooling: 2x2 window, stride 2. The 7x7 map is zero-padded to 8x8, so the output is 4x4
pooling_stride = 2
pooling_h = 4
pooling_w = 4

# Pad one row at the bottom and one column on the right with zeros
feature_map_pad_0 = [0 for i in range(0, 3)]
for i in range(0, 3):
    feature_map_pad_0[i] = np.pad(feature_map[i], ((0, 1), (0, 1)), 'constant', constant_values=(0, 0))

pooling = [0 for i in range(0, 3)]
for i in range(0, 3):
    pooling[i] = np.zeros((pooling_h, pooling_w))

# Take the maximum of each 2x2 window
for h in range(pooling_h):
    for w in range(pooling_w):
        v_start = h * pooling_stride
        v_end = v_start + 2
        h_start = w * pooling_stride
        h_end = h_start + 2
        for i in range(0, 3):
            pooling[i][h, w] = np.max(feature_map_pad_0[i][v_start:v_end, h_start:h_end])

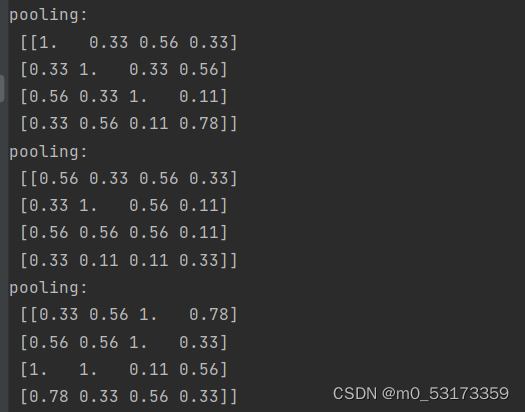

print("pooling:\n", np.around(pooling[0], decimals=2))

print("pooling:\n", np.around(pooling[1], decimals=2))

print("pooling:\n", np.around(pooling[2], decimals=2))
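The pooling loop can also be written without explicit loops. The sketch below is one possible vectorized form, not part of the assignment: it pads odd sides with zeros and then reshapes the map into 2x2 blocks. The function name `max_pool_2x2_zero_pad` and the `demo` array are assumptions made for illustration.

```python
import numpy as np

def max_pool_2x2_zero_pad(fm):
    """2x2 max pooling with stride 2; odd sides are zero-padded at the bottom/right."""
    h, w = fm.shape
    padded = np.pad(fm, ((0, h % 2), (0, w % 2)), 'constant', constant_values=0)
    ph, pw = padded.shape
    # View the map as (ph/2, 2, pw/2, 2) blocks and take the max inside each 2x2 block
    return padded.reshape(ph // 2, 2, pw // 2, 2).max(axis=(1, 3))

# Stand-in 7x7 map with hypothetical values (not the assignment's feature map)
demo = np.arange(49, dtype=float).reshape(7, 7) - 24
pooled = max_pool_2x2_zero_pad(demo)
print(pooled.shape)
```

One consequence of zero padding worth keeping in mind: an edge window whose real values are all negative pools to 0, because the padded zero is the largest element in that window.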

# ReLU written as (|x| + x) / 2, which equals max(x, 0)
def relu(x):
    return (abs(x) + x) / 2

relu_map_h = 7
relu_map_w = 7
relu_map = [0 for i in range(0, 3)]
for i in range(0, 3):
    relu_map[i] = np.zeros((relu_map_h, relu_map_w))

# Apply ReLU to each feature map
for i in range(0, 3):
    relu_map[i] = relu(feature_map[i])

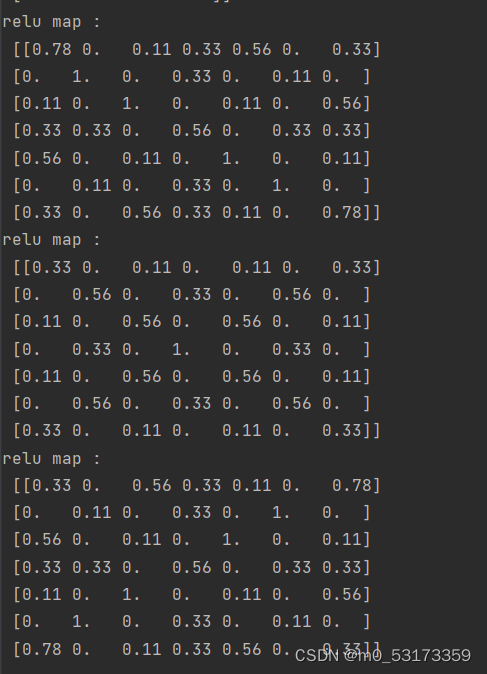

print("relu map :\n",np.around(relu_map[0], decimals=2))

print("relu map :\n",np.around(relu_map[1], decimals=2))

print("relu map :\n",np.around(relu_map[2], decimals=2))
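The `relu` function above uses the identity (|x| + x) / 2 = max(x, 0). A small sketch that checks this against `np.maximum` on a few sample values; the name `relu_abs` and the sample array are chosen here for illustration only:

```python
import numpy as np

def relu_abs(x):
    # Same formula as the hand-written relu(): (|x| + x) / 2
    return (abs(x) + x) / 2

values = np.array([-1.0, -0.33, 0.0, 0.11, 1.0])
print(relu_abs(values))
print(np.maximum(values, 0))
```

Both lines should print the same numbers; `np.maximum(x, 0)` is the more common way to write ReLU in NumPy.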

- PyTorch version: calling library functions for convolution, pooling, and activation

import numpy as np

import torch

import torch.nn as nn

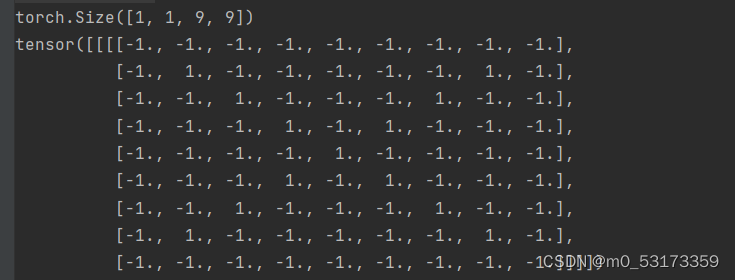

x = torch.tensor([[[[-1, -1, -1, -1, -1, -1, -1, -1, -1],
                    [-1, 1, -1, -1, -1, -1, -1, 1, -1],
                    [-1, -1, 1, -1, -1, -1, 1, -1, -1],
                    [-1, -1, -1, 1, -1, 1, -1, -1, -1],
                    [-1, -1, -1, -1, 1, -1, -1, -1, -1],
                    [-1, -1, -1, 1, -1, 1, -1, -1, -1],
                    [-1, -1, 1, -1, -1, -1, 1, -1, -1],
                    [-1, 1, -1, -1, -1, -1, -1, 1, -1],
                    [-1, -1, -1, -1, -1, -1, -1, -1, -1]]]], dtype=torch.float)

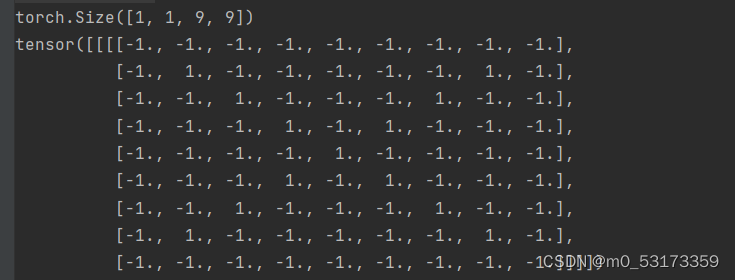

print(x.shape)
print(x)

print("--------------- Convolution ---------------")
# bias=False: by default nn.Conv2d adds a randomly initialized bias to every output value
conv1 = nn.Conv2d(1, 1, (3, 3), 1, bias=False)
conv1.weight.data = torch.Tensor([[[[1, -1, -1],
                                    [-1, 1, -1],
                                    [-1, -1, 1]]]])

conv2 = nn.Conv2d(1, 1, (3, 3), 1, bias=False)
conv2.weight.data = torch.Tensor([[[[1, -1, 1],
                                    [-1, 1, -1],
                                    [1, -1, 1]]]])

conv3 = nn.Conv2d(1, 1, (3, 3), 1, bias=False)
conv3.weight.data = torch.Tensor([[[[-1, -1, 1],
                                    [-1, 1, -1],
                                    [1, -1, -1]]]])

feature_map1 = conv1(x)
feature_map2 = conv2(x)
feature_map3 = conv3(x)

# Divide by 9 (the number of kernel elements) to match the for-loop version
print(feature_map1 / 9)
print(feature_map2 / 9)
print(feature_map3 / 9)

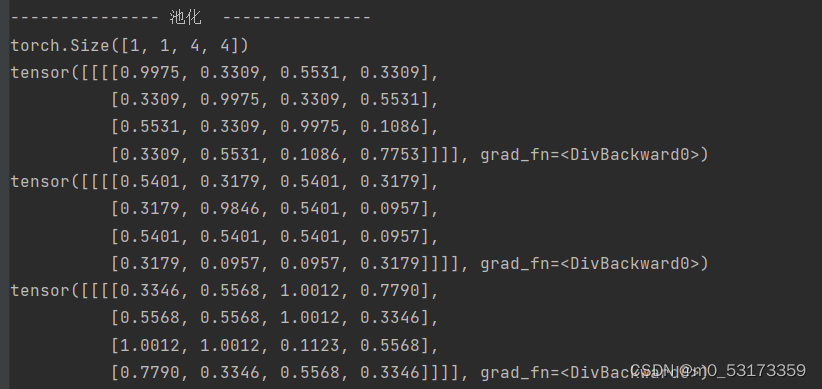

print("--------------- Pooling ---------------")

max_pool = nn.MaxPool2d(2, padding=0, stride=2)

zeroPad = nn.ZeroPad2d(padding=(0, 1, 0, 1))

feature_map_pad_0_1 = zeroPad(feature_map1)

feature_pool_1 = max_pool(feature_map_pad_0_1)

feature_map_pad_0_2 = zeroPad(feature_map2)

feature_pool_2 = max_pool(feature_map_pad_0_2)

feature_map_pad_0_3 = zeroPad(feature_map3)

feature_pool_3 = max_pool(feature_map_pad_0_3)

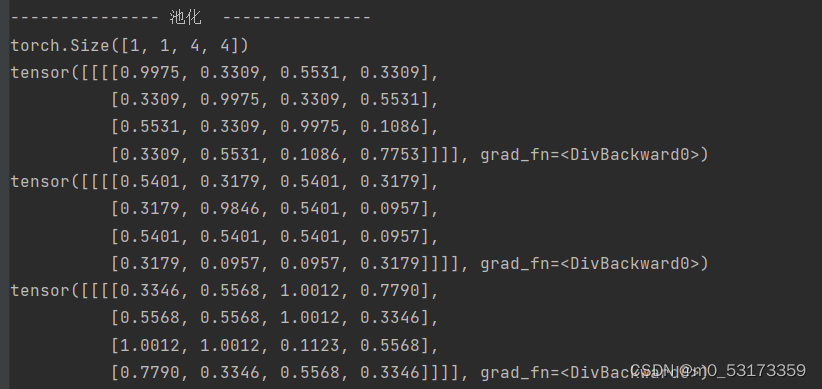

print(feature_pool_1.size())

print(feature_pool_1 / 9)

print(feature_pool_2 / 9)

print(feature_pool_3 / 9)

print("--------------- Activation ---------------")

activation_function = nn.ReLU()

feature_relu1 = activation_function(feature_map1)

feature_relu2 = activation_function(feature_map2)

feature_relu3 = activation_function(feature_map3)

print(feature_relu1 / 9)

print(feature_relu2 / 9)

print(feature_relu3 / 9)
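The three single-channel layers can also be expressed as one convolution with three output channels. The sketch below is an alternative formulation under stated assumptions, not the assignment's required form: it uses `torch.nn.functional.conv2d` with no bias term, and the names `img`, `x_demo`, `kernels`, and `feature_maps` are introduced here for illustration. The input is rebuilt inside the block so that it runs on its own.

```python
import torch
import torch.nn.functional as F

# Rebuild the 9x9 "X" image: +1 on both diagonals (rows 1..7), -1 elsewhere
img = -torch.ones(9, 9)
for k in range(1, 8):
    img[k, k] = 1.0
    img[k, 8 - k] = 1.0
x_demo = img.reshape(1, 1, 9, 9)  # (batch, channels, height, width)

# Stack the three 3x3 kernels as three output channels: shape (3, 1, 3, 3)
kernels = torch.tensor([
    [[1, -1, -1], [-1, 1, -1], [-1, -1, 1]],
    [[1, -1, 1], [-1, 1, -1], [1, -1, 1]],
    [[-1, -1, 1], [-1, 1, -1], [1, -1, -1]],
], dtype=torch.float).unsqueeze(1)

# conv2d computes cross-correlation (no kernel flip), like the for-loop version
feature_maps = F.conv2d(x_demo, kernels, bias=None, stride=1) / 9
print(feature_maps.shape)  # torch.Size([1, 3, 7, 7])
```

Channel `i` of `feature_maps` corresponds to kernel `i`, so `feature_maps[0, 0]` plays the role of `feature_map1 / 9`.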

- Results

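import matplotlib.pyplot as plt

print(x.shape)
print(x)

# Show the input tensor as a grayscale image
img = x.data.squeeze().numpy()
plt.imshow(img, cmap='gray')
plt.title('Original image')
plt.show()

print("--------------- Convolution ---------------")
# bias=False: by default nn.Conv2d adds a randomly initialized bias to every output value
conv1 = nn.Conv2d(1, 1, (3, 3), 1, bias=False)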

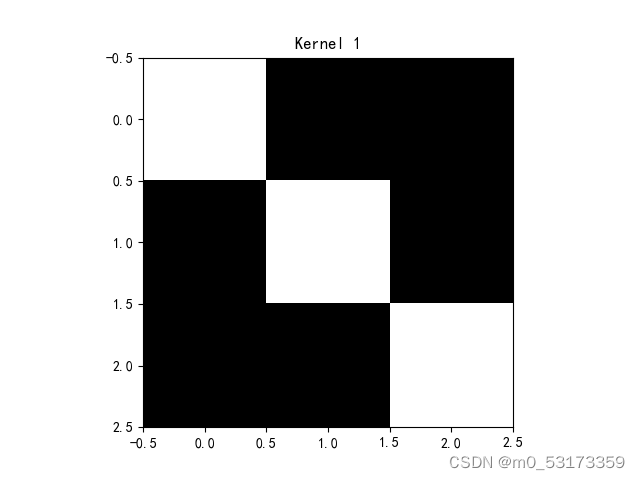

conv1.weight.data = torch.Tensor([[[[1, -1, -1],
                                    [-1, 1, -1],
                                    [-1, -1, 1]]]])
img = conv1.weight.data.squeeze().numpy()
plt.imshow(img, cmap='gray')
plt.title('Kernel 1')
plt.show()

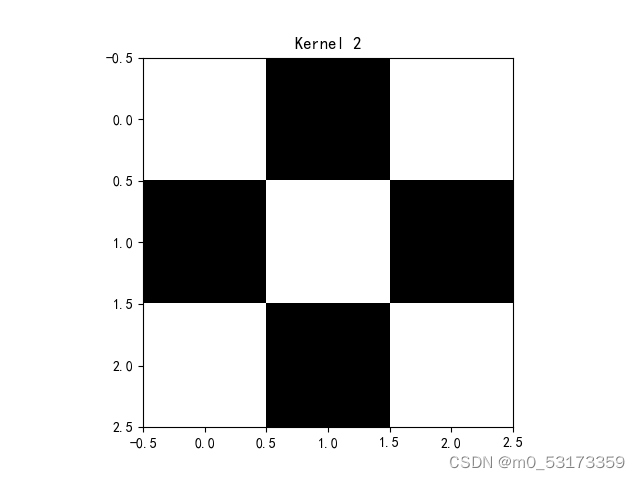

conv2 = nn.Conv2d(1, 1, (3, 3), 1, bias=False)
conv2.weight.data = torch.Tensor([[[[1, -1, 1],
                                    [-1, 1, -1],
                                    [1, -1, 1]]]])
img = conv2.weight.data.squeeze().numpy()
plt.imshow(img, cmap='gray')
plt.title('Kernel 2')
plt.show()

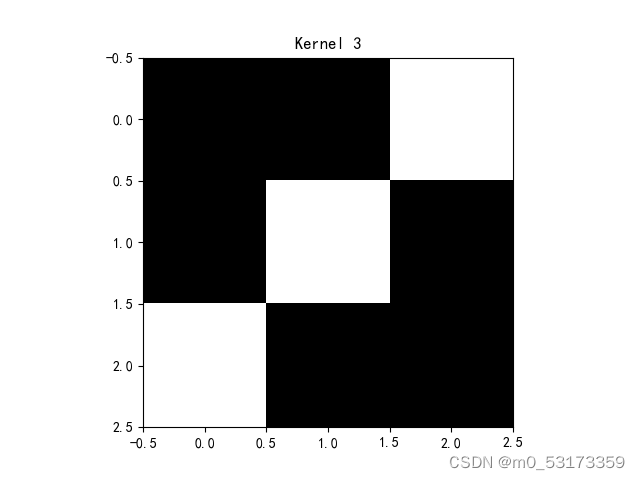

conv3 = nn.Conv2d(1, 1, (3, 3), 1, bias=False)
conv3.weight.data = torch.Tensor([[[[-1, -1, 1],
                                    [-1, 1, -1],
                                    [1, -1, -1]]]])
img = conv3.weight.data.squeeze().numpy()
plt.imshow(img, cmap='gray')
plt.title('Kernel 3')
plt.show()

feature_map1 = conv1(x)
feature_map2 = conv2(x)
feature_map3 = conv3(x)

print(feature_map1 / 9)
print(feature_map2 / 9)
print(feature_map3 / 9)

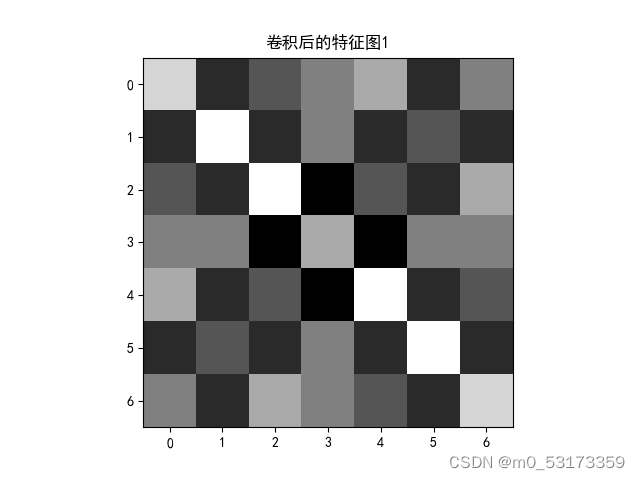

img = feature_map1.data.squeeze().numpy()
plt.imshow(img, cmap='gray')
plt.title('Feature map 1 after convolution')
plt.show()

print("--------------- Pooling ---------------")

max_pool = nn.MaxPool2d(2, padding=0, stride=2)

zeroPad = nn.ZeroPad2d(padding=(0, 1, 0, 1))

feature_map_pad_0_1 = zeroPad(feature_map1)

feature_pool_1 = max_pool(feature_map_pad_0_1)

feature_map_pad_0_2 = zeroPad(feature_map2)

feature_pool_2 = max_pool(feature_map_pad_0_2)

feature_map_pad_0_3 = zeroPad(feature_map3)

feature_pool_3 = max_pool(feature_map_pad_0_3)

print(feature_pool_1.size())

print(feature_pool_1 / 9)

print(feature_pool_2 / 9)

print(feature_pool_3 / 9)

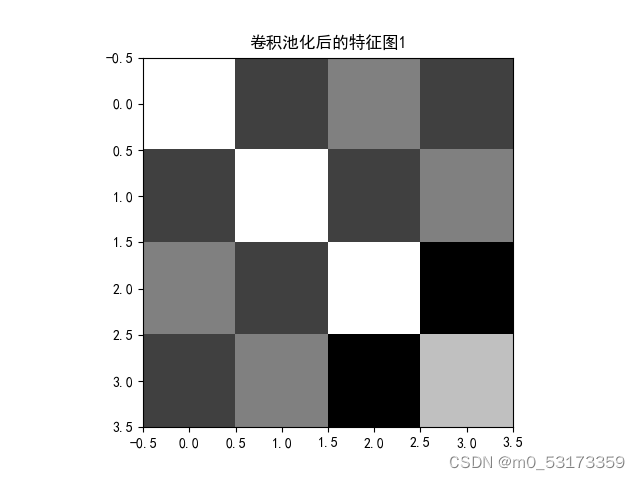

img = feature_pool_1.data.squeeze().numpy()
plt.imshow(img, cmap='gray')
plt.title('Feature map 1 after convolution and pooling')
plt.show()

print("--------------- Activation ---------------")

activation_function = nn.ReLU()

feature_relu1 = activation_function(feature_map1)

feature_relu2 = activation_function(feature_map2)

feature_relu3 = activation_function(feature_map3)

print(feature_relu1 / 9)

print(feature_relu2 / 9)

print(feature_relu3 / 9)

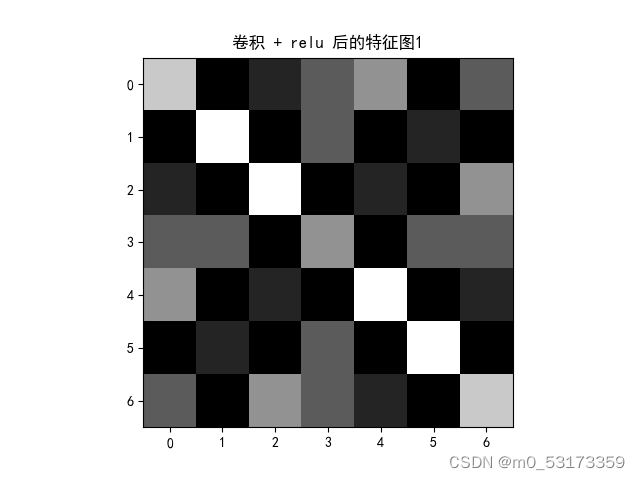

img = feature_relu1.data.squeeze().numpy()
plt.imshow(img, cmap='gray')
plt.title('Feature map 1 after convolution + ReLU')
plt.show()
