- or raise IgnoreRequest: process_exception() methods of

installed downloader middleware will be called

return None

def process_response(self, request, response, spider):

Called with the response returned from the downloader.

Must either;

- return a Response object

- return a Request object

- or raise IgnoreRequest

return response

def process_exception(self, request, exception, spider):

Called when a download handler or a process_request()

(from other downloader middleware) raises an exception.

Must either:

- return None: continue processing this exception

- return a Response object: stops process_exception() chain

- return a Request object: stops process_exception() chain

pass

def spider_opened(self, spider):

spider.logger.info(‘Spider opened: %s’ % spider.name)

settings.py

-- coding: utf-8 --

Scrapy settings for zol2 project

For simplicity, this file contains only settings considered important or

commonly used. You can find more settings consulting the documentation:

https://doc.scrapy.org/en/latest/topics/settings.html

https://doc.scrapy.org/en/latest/topics/downloader-middleware.html

https://doc.scrapy.org/en/latest/topics/spider-middleware.html

BOT_NAME = ‘zol2’

SPIDER_MODULES = [‘zol2.spiders’]

NEWSPIDER_MODULE = ‘zol2.spiders’

Crawl responsibly by identifying yourself (and your website) on the user-agent

USER_AGENT = ‘Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/73.0.3683.75 Safari/537.36’

Obey robots.txt rules

ROBOTSTXT_OBEY = True

Configure maximum concurrent requests performed by Scrapy (default: 16)

#CONCURRENT_REQUESTS = 32

Configure a delay for requests for the same website (default: 0)

See https://doc.scrapy.org/en/latest/topics/settings.html#download-delay

See also autothrottle settings and docs

DOWNLOAD_DELAY = 0.5

The download delay setting will honor only one of:

#CONCURRENT_REQUESTS_PER_DOMAIN = 16

#CONCURRENT_REQUESTS_PER_IP = 16

Disable cookies (enabled by default)

#COOKIES_ENABLED = False

Disable Telnet Console (enabled by default)

#TELNETCONSOLE_ENABLED = False

Override the default request headers:

#DEFAULT_REQUEST_HEADERS = {

‘Accept’: ‘text/html,application/xhtml+xml,application/xml;q=0.9,/;q=0.8’,

‘Accept-Language’: ‘en’,

#}

Enable or disable spider middlewares

See https://doc.scrapy.org/en/latest/topics/spider-middleware.html

#SPIDER_MIDDLEWARES = {

‘zol2.middlewares.Zol2SpiderMiddleware’: 543,

#}

Enable or disable downloader middlewares

See https://doc.scrapy.org/en/latest/topics/downloader-middleware.html

#DOWNLOADER_MIDDLEWARES = {

‘zol2.middlewares.Zol2DownloaderMiddleware’: 543,

#}

Enable or disable extensions

See https://doc.scrapy.org/en/latest/topics/extensions.html

#EXTENSIONS = {

‘scrapy.extensions.telnet.TelnetConsole’: None,

#}

Configure item pipelines

See https://doc.scrapy.org/en/latest/topics/item-pipeline.html

ITEM_PIPELINES = {

‘zol2.pipelines.Zol2Pipeline’: 300,

}

IMAGES_STORE = “/home/pyvip/env_spider/zol2/zol2/images”

Enable and configure the AutoThrottle extension (disabled by default)

See https://doc.scrapy.org/en/latest/topics/autothrottle.html

#AUTOTHROTTLE_ENABLED = True

The initial download delay

#AUTOTHROTTLE_START_DELAY = 5

The maximum download delay to be set in case of high latencies

#AUTOTHROTTLE_MAX_DELAY = 60

The average number of requests Scrapy should be sending in parallel to

each remote server

#AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0

Enable showing throttling stats for every response received:

#AUTOTHROTTLE_DEBUG = False

Enable and configure HTTP caching (disabled by default)

See https://doc.scrapy.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings

#HTTPCACHE_ENABLED = True

#HTTPCACHE_EXPIRATION_SECS = 0

#HTTPCACHE_DIR = ‘httpcache’

#HTTPCACHE_IGNORE_HTTP_CODES = []

#HTTPCACHE_STORAGE = ‘scrapy.extensions.httpcache.FilesystemCacheStorage’

pazol2.py

-- coding: utf-8 --

import scrapy

from scrapy.linkextractors import LinkExtractor

from scrapy.spiders import CrawlSpider, Rule

from zol2.items import Zol2Item

class Pazol2Spider(CrawlSpider):

name = ‘pazol2’

allowed_domains = [‘desk.zol.com.cn’]

start_urls = [‘http://desk.zol.com.cn/fengjing/1920x1080/’]

front_url = “http://desk.zol.com.cn”

num = 1

rules = (

1.解决翻页

Rule(LinkExtractor(allow=r’/fengjing/1920x1080/[0-1]?[0-9]?.html’), callback=‘parse_album’, follow=True),

2.进入各个图库的每一张图片页

Rule(LinkExtractor(allow=r’/bizhi/\d+\d+\d+.html’, restrict_xpaths=(“//div[@class=‘main’]/ul[@class=‘pic-list2 clearfix’]/li”, “//div[@class=‘photo-list-box’]”)), follow=True),

3.点击各个图片1920*1080按钮,获得html

Rule(LinkExtractor(allow=r’/showpic/1920x1080_\d+_\d+.html’), callback=‘get_img’, follow=True),

如果你也是看准了Python,想自学Python,在这里为大家准备了丰厚的免费学习大礼包,带大家一起学习,给大家剖析Python兼职、就业行情前景的这些事儿。

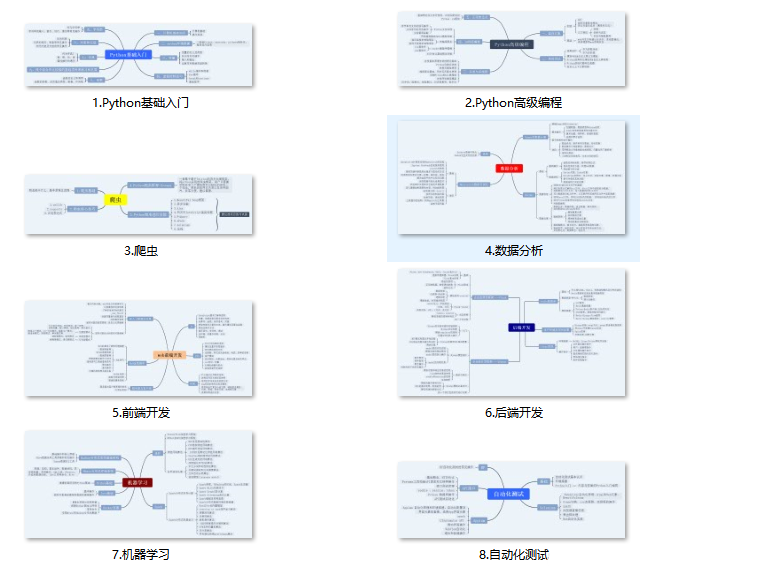

一、Python所有方向的学习路线

Python所有方向路线就是把Python常用的技术点做整理,形成各个领域的知识点汇总,它的用处就在于,你可以按照上面的知识点去找对应的学习资源,保证自己学得较为全面。

二、学习软件

工欲善其必先利其器。学习Python常用的开发软件都在这里了,给大家节省了很多时间。

三、全套PDF电子书

书籍的好处就在于权威和体系健全,刚开始学习的时候你可以只看视频或者听某个人讲课,但等你学完之后,你觉得你掌握了,这时候建议还是得去看一下书籍,看权威技术书籍也是每个程序员必经之路。

四、入门学习视频

我们在看视频学习的时候,不能光动眼动脑不动手,比较科学的学习方法是在理解之后运用它们,这时候练手项目就很适合了。

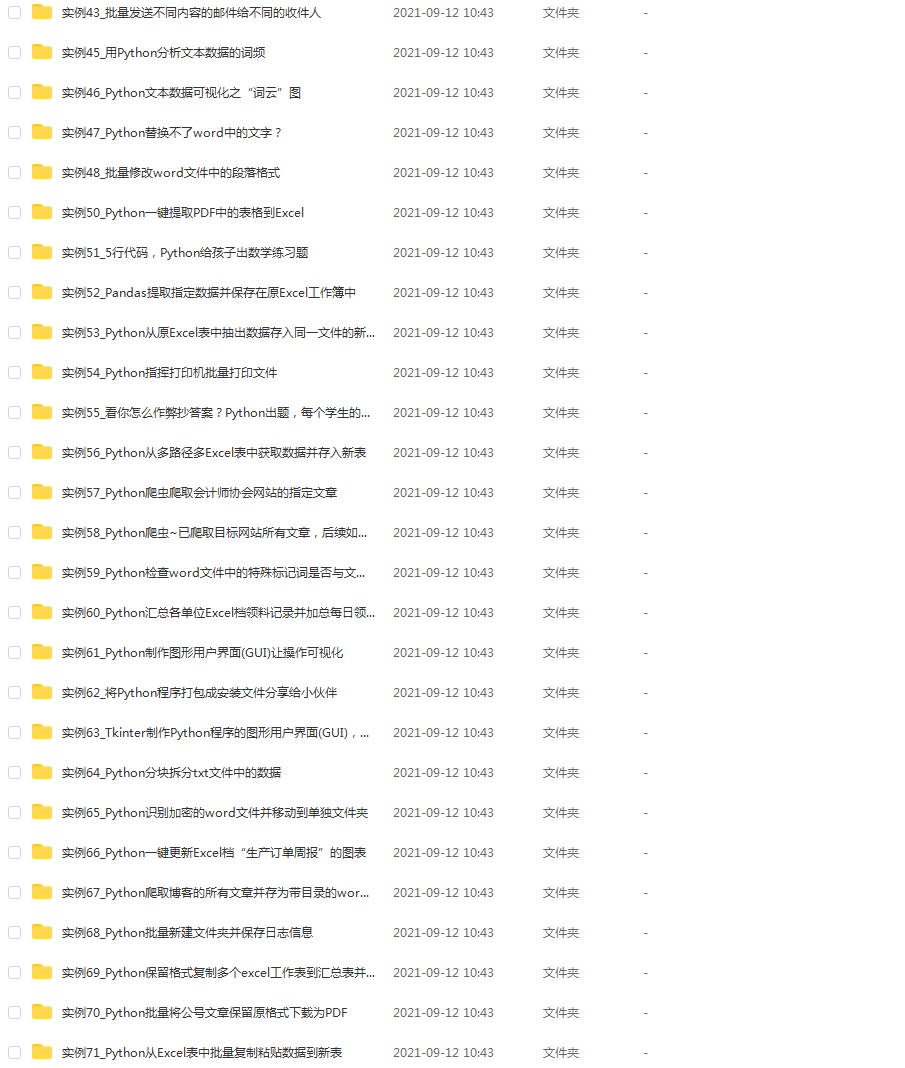

四、实战案例

光学理论是没用的,要学会跟着一起敲,要动手实操,才能将自己的所学运用到实际当中去,这时候可以搞点实战案例来学习。

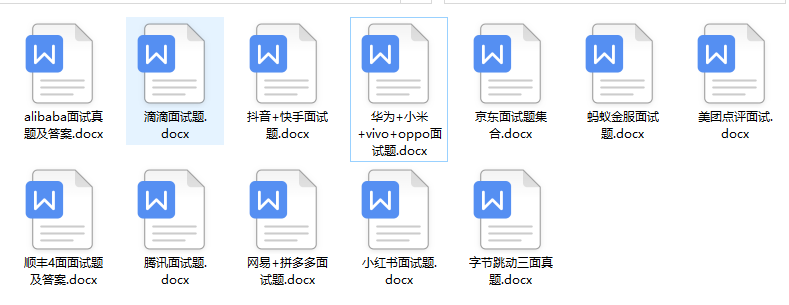

五、面试资料

我们学习Python必然是为了找到高薪的工作,下面这些面试题是来自阿里、腾讯、字节等一线互联网大厂最新的面试资料,并且有阿里大佬给出了权威的解答,刷完这一套面试资料相信大家都能找到满意的工作。

成为一个Python程序员专家或许需要花费数年时间,但是打下坚实的基础只要几周就可以,如果你按照我提供的学习路线以及资料有意识地去实践,你就有很大可能成功!

最后祝你好运!!!

小编13年上海交大毕业,曾经在小公司待过,也去过华为、OPPO等大厂,18年进入阿里一直到现在。

深知大多数初中级Python工程师,想要提升技能,往往是自己摸索成长或者是报班学习,但自己不成体系的自学效果低效又漫长,而且极易碰到天花板技术停滞不前!

因此收集整理了一份《2024年Python爬虫全套学习资料》送给大家,初衷也很简单,就是希望能够帮助到想自学提升又不知道该从何学起的朋友,同时减轻大家的负担。

由于文件比较大,这里只是将部分目录截图出来,每个节点里面都包含大厂面经、学习笔记、源码讲义、实战项目、讲解视频

如果你觉得这些内容对你有帮助,可以添加下面V无偿领取!(备注:python)

海交大毕业,曾经在小公司待过,也去过华为、OPPO等大厂,18年进入阿里一直到现在。**

深知大多数初中级Python工程师,想要提升技能,往往是自己摸索成长或者是报班学习,但自己不成体系的自学效果低效又漫长,而且极易碰到天花板技术停滞不前!

因此收集整理了一份《2024年Python爬虫全套学习资料》送给大家,初衷也很简单,就是希望能够帮助到想自学提升又不知道该从何学起的朋友,同时减轻大家的负担。

由于文件比较大,这里只是将部分目录截图出来,每个节点里面都包含大厂面经、学习笔记、源码讲义、实战项目、讲解视频

如果你觉得这些内容对你有帮助,可以添加下面V无偿领取!(备注:python)

[外链图片转存中…(img-5Pb9QEM1-1711211141193)]

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?