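There are a great many learning resources online these days, some free and some paid. When I get hold of a fairly complete set, I don't rush into the first lesson. I first assess whether the set is worth studying, and sometimes ask more senior students for their opinion. If it passes, I draw up a study plan for it, which mainly consists of a roadmap and a progress tracker.

Here is a set of free video material I picked up. The quality is decent, and you can follow along with it.

Learning material is everywhere online, but if what you learn does not form a coherent system, and you only scratch the surface of problems without digging deeper, real technical growth is hard to achieve.

One person can move fast, but a group goes further. Whether you are a veteran already working in IT or a newcomer interested in the field, you are welcome to join our community (technical discussion, learning resources, workplace venting, referrals to major companies, interview coaching) so we can learn and grow together.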

    import math

    import numpy as np
    import pandas as pd

    # Import helper functions
    from mlfromscratch.utils import train_test_split, accuracy_score, Plot


    # Decision stump used as weak classifier in this impl. of Adaboost
    class DecisionStump():
        def __init__(self):
            # Determines if sample shall be classified as -1 or 1 given threshold
            self.polarity = 1
            # The index of the feature used to make classification
            self.feature_index = None
            # The threshold value that the feature should be measured against
            self.threshold = None
            # Value indicative of the classifier's accuracy
            self.alpha = None


    class Adaboost():
        """Boosting method that uses a number of weak classifiers in
        ensemble to make a strong classifier. This implementation uses decision
        stumps, which is a one level Decision Tree.

        Parameters:
        n_clf: int
            The number of weak classifiers that will be used.
        """
        def __init__(self, n_clf=5):
            self.n_clf = n_clf

        def fit(self, X, y):
            n_samples, n_features = np.shape(X)

            # Initialize weights to 1/N
            w = np.full(n_samples, (1 / n_samples))

            self.clfs = []
            # Iterate through classifiers
            for _ in range(self.n_clf):
                clf = DecisionStump()
                # Minimum error given for using a certain feature value threshold
                # for predicting sample label
                min_error = float('inf')
                # Iterate through every unique feature value and see what value
                # makes the best threshold for predicting y
                for feature_i in range(n_features):
                    feature_values = np.expand_dims(X[:, feature_i], axis=1)
                    unique_values = np.unique(feature_values)
                    # Try every unique feature value as threshold
                    for threshold in unique_values:
                        p = 1
                        # Set all predictions to '1' initially
                        prediction = np.ones(np.shape(y))
                        # Label the samples whose values are below threshold as '-1'
                        prediction[X[:, feature_i] < threshold] = -1
                        # Error = sum of weights of misclassified samples
                        error = sum(w[y != prediction])

                        # If the error is over 50% we flip the polarity so that samples that
                        # were classified as -1 are classified as 1, and vice versa
                        # E.g error = 0.8 => (1 - error) = 0.2
                        if error > 0.5:
                            error = 1 - error
                            p = -1

                        # If this threshold resulted in the smallest error we save the
                        # configuration
                        if error < min_error:
                            clf.polarity = p
                            clf.threshold = threshold
                            clf.feature_index = feature_i
                            min_error = error

                # Calculate the alpha which is used to update the sample weights,
                # Alpha is also an approximation of this classifier's proficiency
                clf.alpha = 0.5 * math.log((1.0 - min_error) / (min_error + 1e-10))
                # Set all predictions to '1' initially
                predictions = np.ones(np.shape(y))
                # The indexes where the sample values are below threshold
                negative_idx = (clf.polarity * X[:, clf.feature_index] < clf.polarity * clf.threshold)
                # Label those as '-1'
                predictions[negative_idx] = -1
                # Calculate new weights
                # Misclassified samples get larger weights and correctly classified samples smaller
                w *= np.exp(-clf.alpha * y * predictions)
                # Normalize to one
                w /= np.sum(w)

                # Save classifier
                self.clfs.append(clf)

        def predict(self, X):
            n_samples = np.shape(X)[0]
            y_pred = np.zeros((n_samples, 1))
            # For each classifier => label the samples
            for clf in self.clfs:
                # Set all predictions to '1' initially
                predictions = np.ones(np.shape(y_pred))
                # The indexes where the sample values are below threshold
                negative_idx = (clf.polarity * X[:, clf.feature_index] < clf.polarity * clf.threshold)
                # Label those as '-1'
                predictions[negative_idx] = -1
                # Add predictions weighted by the classifiers alpha
                # NOTE: the source listing is cut off right after the comment above.
                # The two statements below are an assumed completion: accumulate the
                # alpha-weighted votes, then return the sign of the total.
                y_pred += clf.alpha * predictions

            return np.sign(y_pred).flatten()
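To make the reweighting step in `fit` easier to follow, here is a minimal sketch of a single boosting round in plain Python, with no NumPy. The function name `adaboost_round`, the `eps` parameter, and the four-sample toy data are all made up for illustration and are not part of the listing above. It assumes labels in {-1, +1} and weights that already sum to one.

```python
import math


def adaboost_round(weights, y_true, y_pred, eps=1e-10):
    """One AdaBoost reweighting step for labels in {-1, +1} (illustrative helper)."""
    # Weighted error: total weight of the misclassified samples.
    error = sum(w for w, t, p in zip(weights, y_true, y_pred) if t != p)
    # Classifier weight alpha; eps guards against division by zero when error == 0.
    alpha = 0.5 * math.log((1.0 - error) / (error + eps))
    # t * p is -1 for a mistake and +1 for a hit, so mistakes are scaled up
    # by exp(alpha) and hits are scaled down by exp(-alpha).
    scaled = [w * math.exp(-alpha * t * p) for w, t, p in zip(weights, y_true, y_pred)]
    # Normalize so the weights sum to one again.
    total = sum(scaled)
    return alpha, [w / total for w in scaled]


# Toy data (assumed): four samples, uniform weights, one mistake on the second sample.
y_true = [1, 1, -1, -1]
y_pred = [1, -1, -1, -1]
weights = [0.25, 0.25, 0.25, 0.25]

alpha, new_weights = adaboost_round(weights, y_true, y_pred)
print(round(alpha, 4), [round(w, 4) for w in new_weights])
```

With a weighted error of 0.25, alpha comes out close to 0.5 * ln(3), and after normalization the single misclassified sample carries about half of the total weight. That is what forces the next stump to focus on it.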

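Learning Python well pays off whether you want a job or a side income, but learning it properly still takes a study plan. To finish, here is a complete set of Python learning materials, which I hope helps anyone who wants to learn Python.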

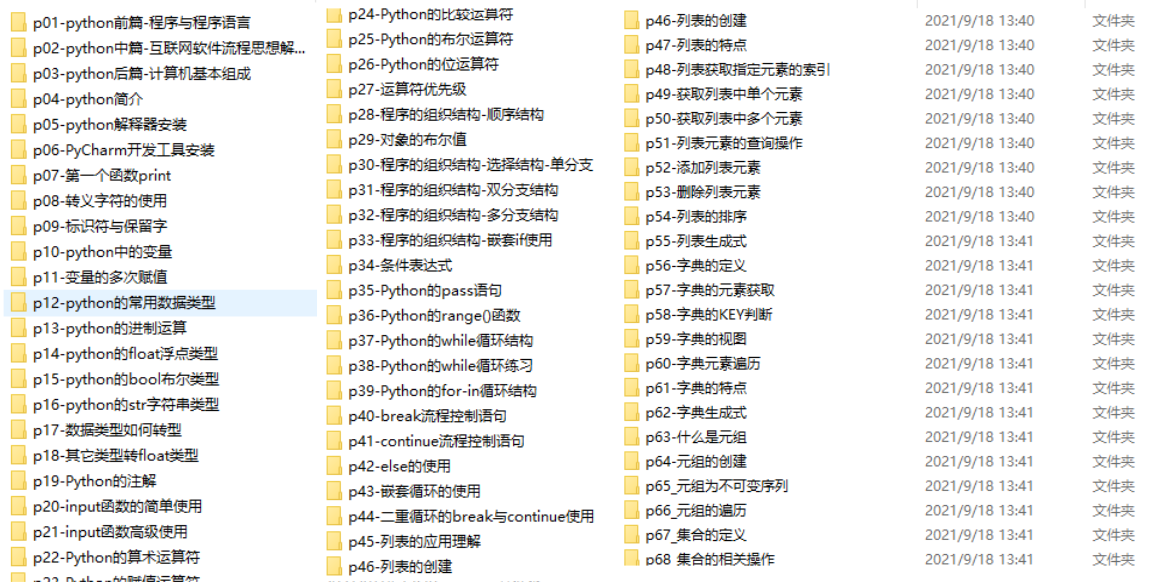

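1. Learning roadmaps for every Python direction

The roadmaps organize the commonly used Python technical topics into a summary of knowledge points for each field. Their value is that you can look up learning resources for each knowledge point listed, which helps you cover the material fairly thoroughly.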

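2. Learning software

To do a good job, one must first sharpen one's tools. The development software commonly used for learning Python is collected here, which saves a lot of time.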

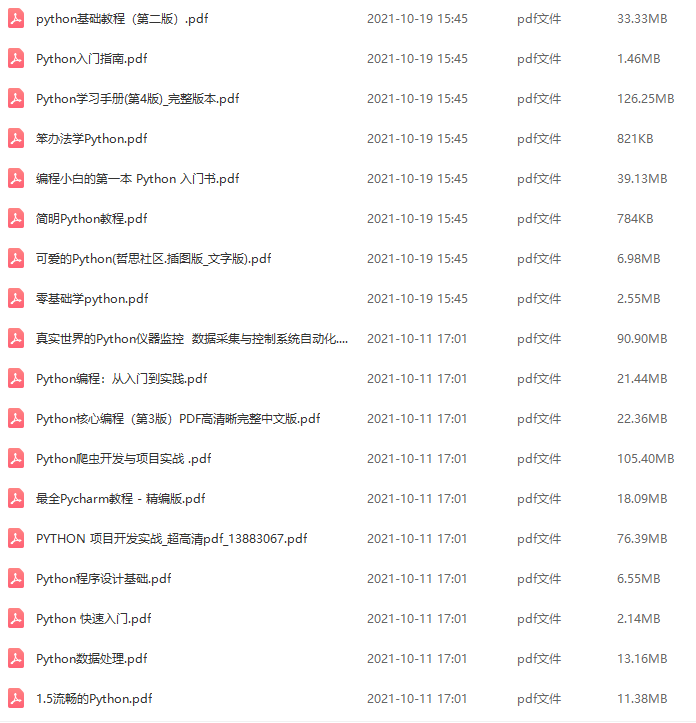

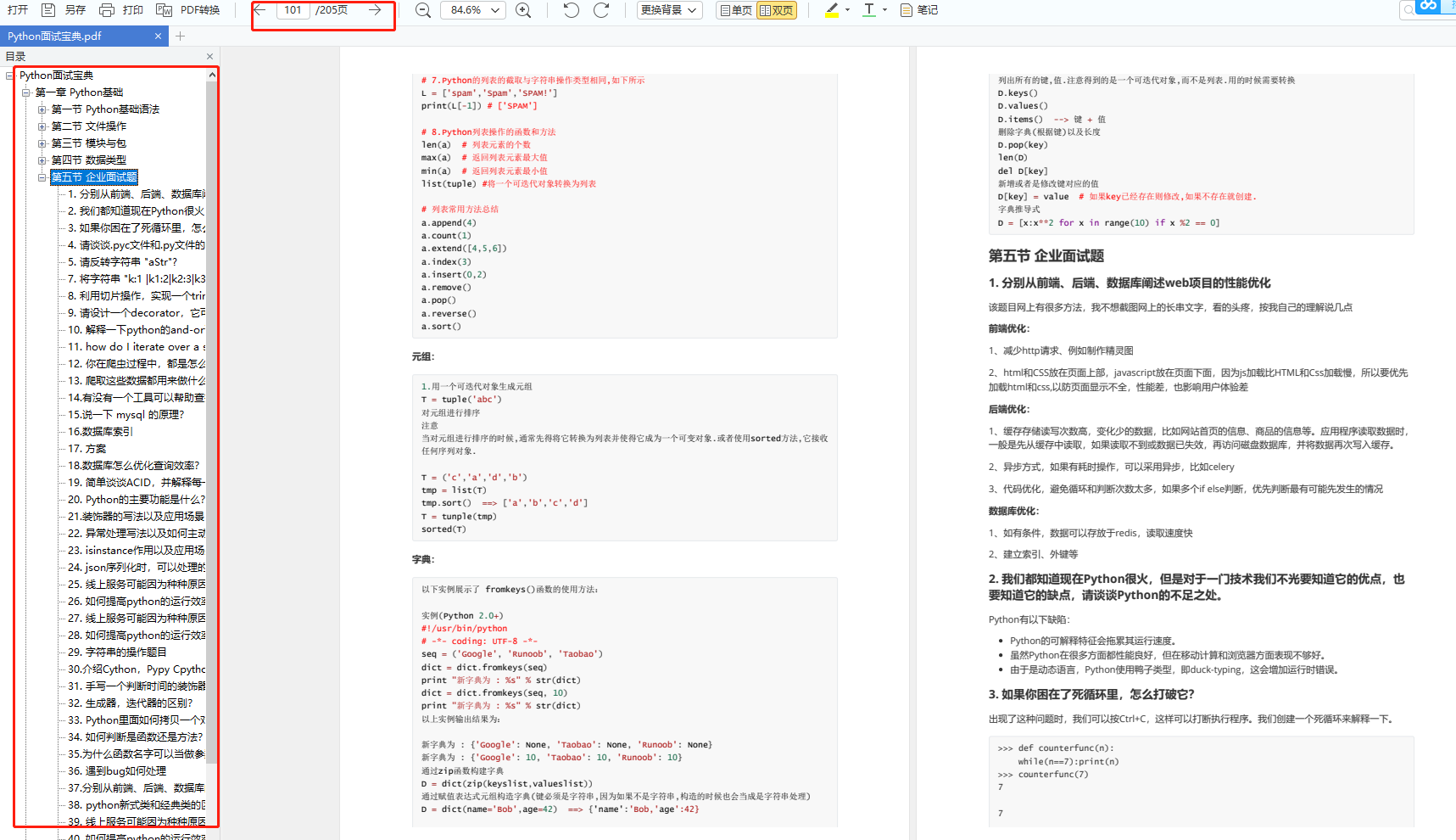

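3. Complete set of PDF e-books

The advantage of books is that they are authoritative and systematic. When you start out, you can get by with videos or someone's lectures alone. Once you have finished and feel you have mastered the material, it is still worth going back to the books. Reading authoritative technical books is a path every programmer goes through.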

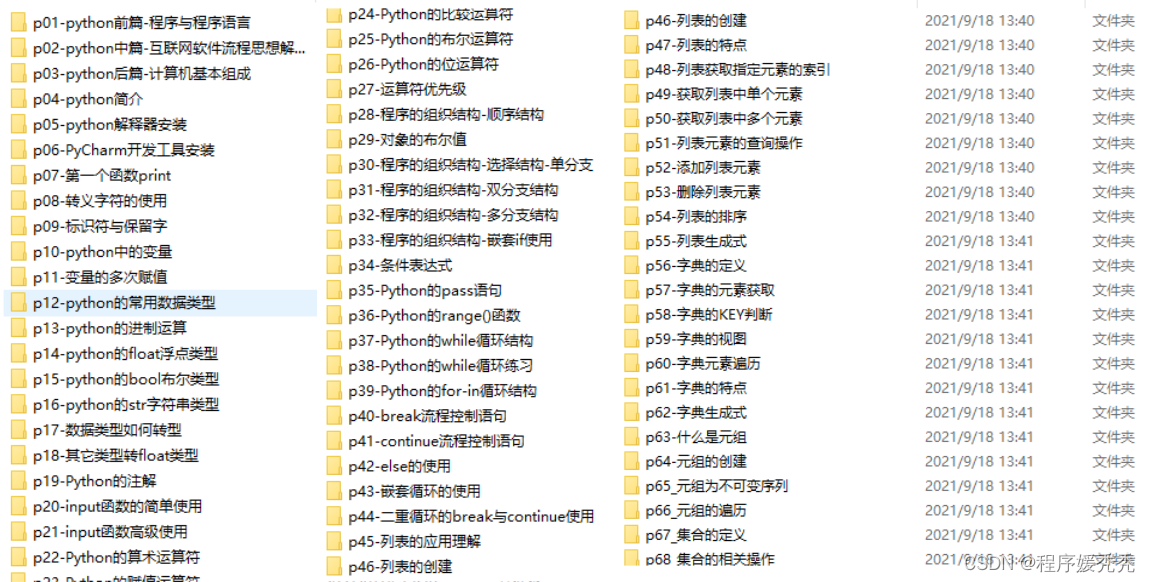

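4. Introductory videos

When learning from videos, you cannot just use your eyes and brain without using your hands. A more effective approach is to apply what you have understood, and practice projects are well suited to that.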

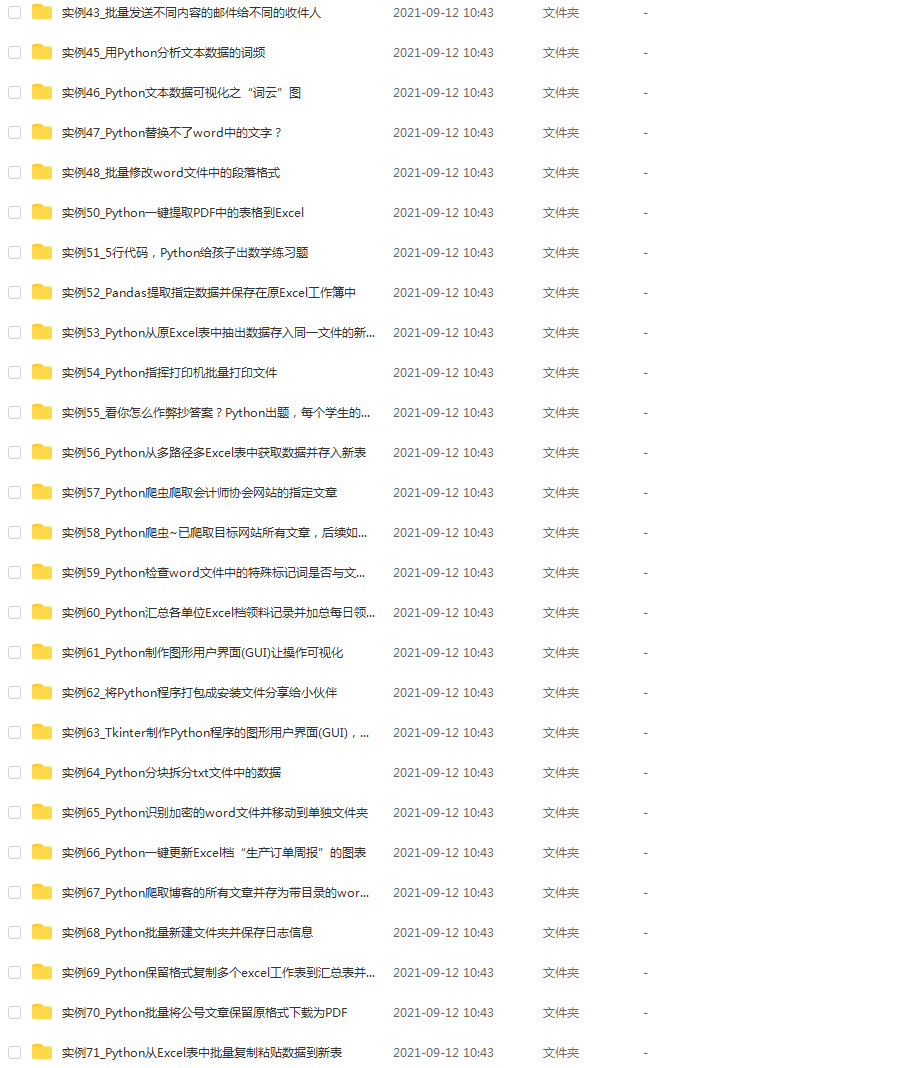

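5. Hands-on case studies

Theory alone is not enough. You need to type the code along with the material and practice hands-on before you can apply what you have learned in real work, and hands-on case studies are a good way to do that.

6. Interview materials

Many of us learn Python with a well-paid job in mind. The interview questions below are recent material from top-tier internet companies such as Alibaba, Tencent, and ByteDance, with answers written by a senior Alibaba engineer. Working through the set should leave you better prepared to find a job you are happy with.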

