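In my previous lab post I mentioned that my computer is not compatible with a local DPC++ compiler, so I could only work on DevCloud. DevCloud has no GPU device, however, and the matrix multiplication example needs both a CPU and a GPU, so I had to borrow my roommate's computer to complete this lab.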

1. Measuring the matrix multiplication time on the CPU and on the GPU

The code:

#include <chrono>

#include <cmath>    // fabs

#include <cstdio>   // printf

#include <cstdlib>  // rand, RAND_MAX

#include <iostream>

#include <CL/sycl.hpp>

#define random_float() (rand() / double(RAND_MAX))

using namespace std;

using namespace sycl;

// return execution time

double gpu_kernel(float *A, float *B, float *C, int M, int N, int K, int block_size, sycl::queue &q) {

// define the workgroup size and mapping

auto grid_rows = (M + block_size - 1) / block_size * block_size;

auto grid_cols = (N + block_size - 1) / block_size * block_size;

auto local_ndrange = range<2>(block_size, block_size);

auto global_ndrange = range<2>(grid_rows, grid_cols);

double duration = 0.0f;

auto e = q.submit([&](sycl::handler &h) {

h.parallel_for<class k_name_t>(

sycl::nd_range<2>(global_ndrange, local_ndrange), [=](sycl::nd_item<2> index) {

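int row = index.get_global_id(0);

int col = index.get_global_id(1);

// the global range is rounded up to a multiple of block_size, so skip padded work-items

if (row >= M || col >= N) return;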

float sum = 0.0f;

for (int i = 0; i < K; i++) {

sum += A[row * K + i] * B[i * N + col];

}

C[row * N + col] = sum;

});

});

e.wait();

// profiling timestamps are in nanoseconds; convert to milliseconds

duration += (e.get_profiling_info<info::event_profiling::command_end>() -

e.get_profiling_info<info::event_profiling::command_start>()) /1000.0f/1000.0f;

return(duration);

}

// return execution time

double cpu_kernel(float *cA, float *cB, float *cC, int M, int N, int K) {

double duration = 0.0;

std::chrono::high_resolution_clock::time_point s, e;

// Single Thread Computation in CPU

s = std::chrono::high_resolution_clock::now();

for(int i = 0; i < M; i++) {

for(int j = 0; j < N; j++) {

float sum = 0.0f;

for(int k = 0; k < K; k++) {

sum += cA[i * K + k] * cB[k * N + j];

}

cC[i * N + j] = sum;

}

}

e = std::chrono::high_resolution_clock::now();

duration = std::chrono::duration<float, std::milli>(e - s).count();

return(duration);

}

int verify(float *cpu_res, float *gpu_res, int length){

int err = 0;

for(int i = 0; i < length; i++) {

if( fabs(cpu_res[i] - gpu_res[i]) > 1e-3) {

err++;

printf("\n%lf, %lf", cpu_res[i], gpu_res[i]);

}

}

return(err);

}

int gemm(const int M,

const int N,

const int K,

const int block_size,

const int iterations,

sycl::queue &q) {

cout << "Problem size: c(" << M << "," << N << ") ="

<< " a(" << M << "," << K << ") *"

<< " b(" << K << "," << N << ")\n";

auto A = malloc_shared<float>(M * K, q);

auto B = malloc_shared<float>(K * N, q);

auto C = malloc_shared<float>(M * N, q);

auto C_host = malloc_host<float>(M * N, q);

// init the A/B/C

for(int i=0; i < M * K; i++) {

A[i] = random_float();

}

for(int i=0; i < K * N; i++) {

B[i] = random_float();

}

for(int i=0; i < M * N; i++) {

C[i] = 0.0f;

C_host[i] = 0.0f;

}

double flopsPerMatrixMul

= 2.0 * static_cast<double>(M) * static_cast<double>(N) * static_cast<double>(K);

double duration_gpu = 0.0f;

double duration_cpu = 0.0f;

// GPU computation and timer

int warmup = 10;

for (int run = 0; run < iterations + warmup; run++) {

float duration = gpu_kernel(A, B, C, M, N, K, block_size, q);

if(run >= warmup) duration_gpu += duration;

}

duration_gpu = duration_gpu / iterations;

// CPU computation and timer

warmup = 2;

for(int run = 0; run < iterations/2 + warmup; run++) {

float duration = cpu_kernel(A, B, C_host, M, N, K);

if(run >= warmup) duration_cpu += duration;

}

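// average over the iterations/2 timed runs (x / iterations / 2 would divide by 2 * iterations)

duration_cpu = duration_cpu / (iterations / 2);

// Compare the results of CPU and GPU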

int errCode = 0;

errCode = verify(C_host, C, M*N);

if(errCode > 0) printf("\nThere are %d errors\n", errCode);

printf("\nPerformance Flops = %lf, \n"

"GPU Computation Time = %lf (ms); \n"

"CPU Computation Time = %lf (ms); \n",

flopsPerMatrixMul, duration_gpu, duration_cpu);

free(A, q);

free(B, q);

free(C, q);

free(C_host, q);

return(errCode);

}

int main() {

auto propList = cl::sycl::property_list {cl::sycl::property::queue::enable_profiling()};

queue my_gpu_queue( cl::sycl::gpu_selector_v , propList);

int errCode = gemm(2000, 2000, 2000, 4, 10, my_gpu_queue);

return(errCode);

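}

The problem size is set to M = N = K = 2000.

Result of the local run:

The GPU execution time is about 1409 ms and the CPU execution time is about 6540 ms, so the GPU is roughly 4.6 times faster than the CPU.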

2. Changing the number of rows and columns separately and measuring CPU and GPU execution time

The code:

#include <chrono>

#include <cmath>    // fabs

#include <cstdio>   // printf

#include <cstdlib>  // rand, RAND_MAX

#include <iostream>

#include <CL/sycl.hpp>

#define random_float() (rand() / double(RAND_MAX))

using namespace std;

using namespace sycl;

#define tileY 2

#define tileX 2

// return execution time

double gpu_kernel(float *A, float *B, float *C,

int M, int N, int K,

int BLOCK, sycl::queue &q) {

// define the workgroup size and mapping

auto grid_rows = M / tileY;

auto grid_cols = N / tileX;

auto local_ndrange = range<2>(BLOCK, BLOCK);

auto global_ndrange = range<2>(grid_rows, grid_cols);

double duration = 0.0f;

auto e = q.submit([&](sycl::handler &h) {

h.parallel_for<class k_name_t>(

sycl::nd_range<2>(global_ndrange, local_ndrange), [=](sycl::nd_item<2> index) {

int row = tileY * index.get_global_id(0);

int col = tileX * index.get_global_id(1);

float sum[tileY][tileX] = {0.0f};

float subA[tileY] = {0.0f};

float subB[tileX] = {0.0f};

// core computation

// iterate over the shared dimension K; A is M x K, so its row stride is K

for (int k = 0; k < K; k++) {

// read data to register

for(int m = 0; m < tileY; m++) {

subA[m] = A[(row + m) * K + k];

}

for(int p = 0; p < tileX; p++) {

subB[p] = B[k * N + p + col];

}

for (int m = 0; m < tileY; m++) {

for (int p = 0; p < tileX; p++) {

sum[m][p] += subA[m] * subB[p];

}

}

} //end of K

// write results back

for (int m = 0; m < tileY; m++) {

for (int p = 0; p < tileX; p++) {

C[(row + m) * N + col + p] = sum[m][p];

}

}

});

});

e.wait();

// profiling timestamps are in nanoseconds; convert to milliseconds

duration += (e.get_profiling_info<info::event_profiling::command_end>() -

e.get_profiling_info<info::event_profiling::command_start>()) /1000.0f/1000.0f;

return(duration);

}

// return execution time

double cpu_kernel(float *cA, float *cB, float *cC, int M, int N, int K) {

double duration = 0.0;

std::chrono::high_resolution_clock::time_point s, e;

// Single Thread Computation in CPU

s = std::chrono::high_resolution_clock::now();

for(int i = 0; i < M; i++) {

for(int j = 0; j < N; j++) {

float sum = 0.0f;

for(int k = 0; k < K; k++) {

sum += cA[i * K + k] * cB[k * N + j];

}

cC[i * N + j] = sum;

}

}

e = std::chrono::high_resolution_clock::now();

duration = std::chrono::duration<float, std::milli>(e - s).count();

return(duration);

}

int verify(float *cpu_res, float *gpu_res, int length){

int err = 0;

for(int i = 0; i < length; i++) {

if( fabs(cpu_res[i] - gpu_res[i]) > 1e-3) {

err++;

printf("\n%lf, %lf", cpu_res[i], gpu_res[i]);

}

}

return(err);

}

int gemm(const int M,

const int N,

const int K,

const int block_size,

const int iterations,

sycl::queue &q) {

cout << "Problem size: c(" << M << "," << N << ") ="

<< " a(" << M << "," << K << ") *"

<< " b(" << K << "," << N << ")\n";

auto A = malloc_shared<float>(M * K, q);

auto B = malloc_shared<float>(K * N, q);

auto C = malloc_shared<float>(M * N, q);

auto C_host = malloc_host<float>(M * N, q);

// init the A/B/C

for(int i=0; i < M * K; i++) {

A[i] = random_float();

}

for(int i=0; i < K * N; i++) {

B[i] = random_float();

}

for(int i=0; i < M * N; i++) {

C[i] = 0.0f;

C_host[i] = 0.0f;

}

double flopsPerMatrixMul

= 2.0 * static_cast<double>(M) * static_cast<double>(N) * static_cast<double>(K);

double duration_gpu = 0.0f;

double duration_cpu = 0.0f;

// GPU computation and timer

int warmup = 10;

for (int run = 0; run < iterations + warmup; run++) {

float duration = gpu_kernel(A, B, C, M, N, K, block_size, q);

if(run >= warmup) duration_gpu += duration;

}

duration_gpu = duration_gpu / iterations;

// CPU computation and timer

warmup = 2;

for(int run = 0; run < iterations/2 + warmup; run++) {

float duration = cpu_kernel(A, B, C_host, M, N, K);

if(run >= warmup) duration_cpu += duration;

}

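// average over the iterations/2 timed runs (x / iterations / 2 would divide by 2 * iterations)

duration_cpu = duration_cpu / (iterations / 2);

// Compare the results of CPU and GPU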

int errCode = 0;

errCode = verify(C_host, C, M*N);

if(errCode > 0) printf("\nThere are %d errors\n", errCode);

printf("\nGEMM size M = %d, N = %d, K = %d", M, N, K);

printf("\nWork-Group size = %d * %d, tile_X = %d, tile_Y = %d", block_size, block_size, tileX, tileY);

printf("\nPerformance Flops = %lf, \n"

"GPU Computation Time = %lf (ms); \n"

"CPU Computation Time = %lf (ms); \n",

flopsPerMatrixMul, duration_gpu, duration_cpu);

free(A, q);

free(B, q);

free(C, q);

free(C_host, q);

return(errCode);

}

int main() {

auto propList = cl::sycl::property_list {cl::sycl::property::queue::enable_profiling()};

queue my_gpu_queue( cl::sycl::gpu_selector_v , propList);

int errCode = gemm(512, 512, 512, /* GEMM size, M, N, K */

4, /* workgroup size */

10, /* repeat time */

my_gpu_queue);

return(errCode);

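}

Here, changing tileX and tileY changes the number of rows and columns: each work-item computes a tileY x tileX block of C, so the global range becomes (M / tileY) x (N / tileX).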
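The way tileY and tileX reshape the work can be emulated on the host without a SYCL device. The following is a minimal sketch in plain C++, not the kernel itself. The two outer loops stand in for the global range, and the names `matmul_naive`, `matmul_tiled`, `tiled_work_items`, `TILE_Y` and `TILE_X` are assumptions made for illustration. It assumes M and N are exact multiples of the tile sizes, and it indexes A with K as the row stride, which only matters when K differs from N.

```cpp
#include <cstddef>
#include <vector>

constexpr int TILE_Y = 2;  // rows of C per work-item (tileY in the listing)
constexpr int TILE_X = 4;  // columns of C per work-item (tileX in the listing)

// Reference: one output element per (i, j) pair.
std::vector<float> matmul_naive(const std::vector<float>& A,
                                const std::vector<float>& B,
                                int M, int N, int K) {
    std::vector<float> C(static_cast<std::size_t>(M) * N, 0.0f);
    for (int i = 0; i < M; ++i) {
        for (int j = 0; j < N; ++j) {
            float sum = 0.0f;
            for (int k = 0; k < K; ++k) sum += A[i * K + k] * B[k * N + j];
            C[i * N + j] = sum;
        }
    }
    return C;
}

// Number of work-items a tiled launch would need (assumes exact divisibility).
int tiled_work_items(int M, int N) {
    return (M / TILE_Y) * (N / TILE_X);
}

// Host emulation of the tiled kernel: each (gy, gx) pair plays the role of
// one work-item and produces a TILE_Y x TILE_X block of C.
std::vector<float> matmul_tiled(const std::vector<float>& A,
                                const std::vector<float>& B,
                                int M, int N, int K) {
    std::vector<float> C(static_cast<std::size_t>(M) * N, 0.0f);
    for (int gy = 0; gy < M / TILE_Y; ++gy) {
        for (int gx = 0; gx < N / TILE_X; ++gx) {
            const int row = TILE_Y * gy;
            const int col = TILE_X * gx;
            float sum[TILE_Y][TILE_X] = {};
            for (int k = 0; k < K; ++k) {
                // Load one column slice of A and one row slice of B once,
                // then reuse them for every element of the block.
                float subA[TILE_Y];
                float subB[TILE_X];
                for (int m = 0; m < TILE_Y; ++m) subA[m] = A[(row + m) * K + k];
                for (int p = 0; p < TILE_X; ++p) subB[p] = B[k * N + col + p];
                for (int m = 0; m < TILE_Y; ++m)
                    for (int p = 0; p < TILE_X; ++p)
                        sum[m][p] += subA[m] * subB[p];
            }
            for (int m = 0; m < TILE_Y; ++m)
                for (int p = 0; p < TILE_X; ++p)
                    C[(row + m) * N + col + p] = sum[m][p];
        }
    }
    return C;
}
```

Per step of the k loop, a block loads TILE_Y + TILE_X values and performs TILE_Y * TILE_X multiply-adds, so larger tiles reuse each loaded value more often while the number of work-items shrinks.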

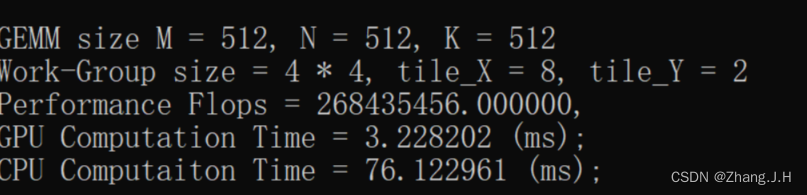

Results:

tileX=2,tileY=2

tileX=2,tileY=4

tileX=2,tileY=8

tileX=4,tileY=2

tileX=8,tileY=2

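Analysis:

1. Across the tileX and tileY settings, the CPU execution time stays essentially unchanged. This is expected, since the CPU reference loop does not use tileX or tileY at all.

2. With tileX fixed, increasing tileY changes the GPU execution time only slightly, so tileY has little effect on it. With tileY fixed, increasing tileX changes the GPU execution time considerably, so tileX has a strong effect on it.

3. Once tileX and tileY reach 8 or more, the GPU and CPU timings are very likely to blow up and the run reports errors. Two sets of erroneous data are listed below: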
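When a tile setting misbehaves, one thing worth ruling out first is the launch shape. The tiled kernel computes the global range as M / tileY by N / tileX with integer division, and a SYCL `nd_range` requires each global dimension to be a multiple of the corresponding work-group dimension. The helper below is a hypothetical pre-flight check in plain C++ (`check_launch` is not part of the listing). It does not claim to explain the errors reported here: for M = N = 512, a work-group size of 4 and tiles of 8, every check passes, so the cause would have to be sought elsewhere.

```cpp
#include <string>

// Returns an empty string when the configuration fits the tiled kernel's
// assumptions, otherwise a short description of the first problem found.
std::string check_launch(int M, int N, int tileY, int tileX, int block) {
    if (tileY <= 0 || tileX <= 0 || block <= 0)
        return "tile and work-group sizes must be positive";
    // M / tileY truncates, so leftover rows would never be computed.
    if (M % tileY != 0) return "M is not a multiple of tileY";
    if (N % tileX != 0) return "N is not a multiple of tileX";
    // nd_range: global size must be divisible by local size per dimension.
    if ((M / tileY) % block != 0)
        return "global rows (M / tileY) not divisible by work-group size";
    if ((N / tileX) % block != 0)
        return "global columns (N / tileX) not divisible by work-group size";
    return "";
}
```

For example, `check_launch(512, 512, 256, 2, 4)` reports a problem because 512 / 256 = 2 rows cannot be split into work-groups of 4.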

