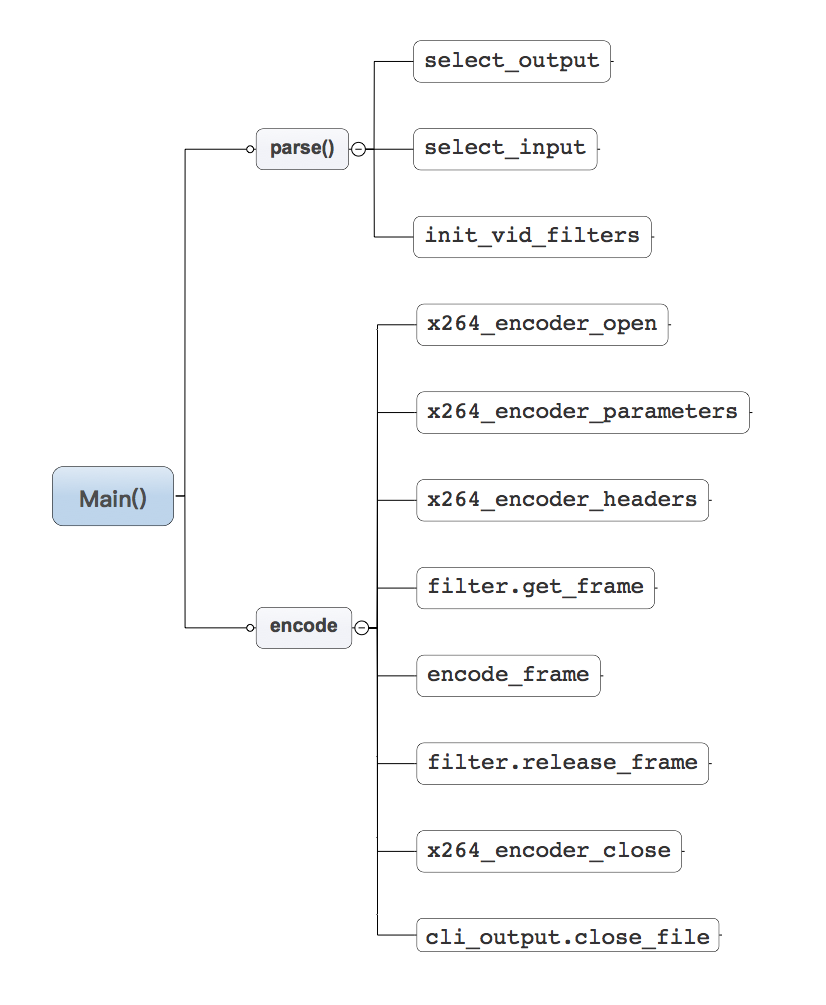

Code structure diagram:

Figure 1. x264 function call graph

The x264 command-line entry point: main()

int main( int argc, char **argv )

{

x264_param_t param;

cli_opt_t opt = {0};

int ret = 0;

FAIL_IF_ERROR( x264_threading_init(), "unable to initialize threading\n" );

#ifdef _WIN32

FAIL_IF_ERROR( !get_argv_utf8( &argc, &argv ), "unable to convert command line to UTF-8\n" );

GetConsoleTitleW( org_console_title, CONSOLE_TITLE_SIZE );

_setmode( _fileno( stdin ), _O_BINARY );

_setmode( _fileno( stdout ), _O_BINARY );

_setmode( _fileno( stderr ), _O_BINARY );

#endif

/* Parse command line */

if( parse( argc, argv, &param, &opt ) < 0 )

ret = -1;

#ifdef _WIN32

/* Restore title; it can be changed by input modules */

SetConsoleTitleW( org_console_title );

#endif

/* Control-C handler */

signal( SIGINT, sigint_handler );

if( !ret )

/* the actual encoding */

ret = encode( &param, &opt );

/* clean up handles */

if( filter.free )

filter.free( opt.hin );

else if( opt.hin )

cli_input.close_file( opt.hin );

if( opt.hout )

cli_output.close_file( opt.hout, 0, 0 );

if( opt.tcfile_out )

fclose( opt.tcfile_out );

if( opt.qpfile )

fclose( opt.qpfile );

#ifdef _WIN32

SetConsoleTitleW( org_console_title );

free( argv );

#endif

return ret;

}

As the code above shows, main() essentially calls two functions, parse() and encode(). Two structures passed to them matter most: x264_param_t and cli_opt_t.

Parameter setup: parse()

typedef struct {

int b_progress;

int i_seek;

hnd_t hin;

hnd_t hout;

FILE *qpfile;

FILE *tcfile_out;

double timebase_convert_multiplier;

int i_pulldown;

} cli_opt_t;

typedef struct x264_param_t

{

/* CPU flags */

unsigned int cpu; /* CPU capability flags (e.g. ARMv7-A, x86 ...); detected at runtime, and the encoder uses them to select accelerated code paths */

int i_threads; /* encode multiple frames in parallel */

int i_lookahead_threads; /* multiple threads for lookahead analysis */

int b_sliced_threads; /* Whether to use slice-based threading. Besides slice-based threading there is also frame-based threading */

int b_deterministic; /* whether to allow non-deterministic optimizations when threaded */

int b_cpu_independent; /* force canonical behavior rather than cpu-dependent optimal algorithms */

int i_sync_lookahead; /* threaded lookahead buffer */

/* Video Properties */

int i_width;

int i_height;

int i_csp; /* CSP of encoded bitstream. CSP stands for colorspace */

int i_level_idc; /* H.264 level indicator (level_idc), e.g. 31 means level 3.1 */

int i_frame_total; /* number of frames to encode if known, else 0 */

/* NAL HRD

* Uses Buffering and Picture Timing SEIs to signal HRD

* The HRD in H.264 was not designed with VFR in mind.

* It is therefore not recommended to use NAL HRD with VFR.

* Furthermore, reconfiguring the VBV (via x264_encoder_reconfig)

* will currently generate invalid HRD. */

int i_nal_hrd;

struct

{

/* they will be reduced to be 0 < x <= 65535 and prime */

int i_sar_height;

int i_sar_width; /* sample aspect ratio (SAR), given as width:height */

int i_overscan; /* 0=undef, 1=no overscan, 2=overscan */

/* see h264 annex E for the values of the following */

int i_vidformat;

int b_fullrange;

int i_colorprim;

int i_transfer;

int i_colmatrix;

int i_chroma_loc; /* both top & bottom */

} vui;

/* Bitstream parameters */

int i_frame_reference; /* Maximum number of reference frames */

int i_dpb_size; /* Force a DPB size larger than that implied by B-frames and reference frames.

* Useful in combination with interactive error resilience. */

int i_keyint_max; /* Force an IDR keyframe at this interval */

int i_keyint_min; /* Scenecuts closer together than this are coded as I, not IDR. */

int i_scenecut_threshold; /* how aggressively to insert extra I frames */

int b_intra_refresh; /* Whether or not to use periodic intra refresh instead of IDR frames. */

int i_bframe; /* how many b-frame between 2 references pictures */

int i_bframe_adaptive;

int i_bframe_bias;

int i_bframe_pyramid; /* Keep some B-frames as references: 0=off, 1=strict hierarchical, 2=normal */

int b_open_gop;

int b_bluray_compat;

int i_avcintra_class;

int b_deblocking_filter;

int i_deblocking_filter_alphac0; /* [-6, 6] -6 light filter, 6 strong */

int i_deblocking_filter_beta; /* [-6, 6] idem */

int b_cabac;

int i_cabac_init_idc;

int b_interlaced;

int b_constrained_intra;

int i_cqm_preset;

char *psz_cqm_file; /* filename (in UTF-8) of CQM file, JM format */

uint8_t cqm_4iy[16]; /* used only if i_cqm_preset == X264_CQM_CUSTOM */

uint8_t cqm_4py[16];

uint8_t cqm_4ic[16];

uint8_t cqm_4pc[16];

uint8_t cqm_8iy[64];

uint8_t cqm_8py[64];

uint8_t cqm_8ic[64];

uint8_t cqm_8pc[64];

/* Log */

void (*pf_log)( void *, int i_level, const char *psz, va_list );

void *p_log_private;

int i_log_level;

int b_full_recon; /* fully reconstruct frames, even when not necessary for encoding. Implied by psz_dump_yuv */

char *psz_dump_yuv; /* filename (in UTF-8) for reconstructed frames */

/* Encoder analyser parameters */

struct

{

unsigned int intra; /* intra partitions */

unsigned int inter; /* inter partitions */

int b_transform_8x8;

int i_weighted_pred; /* weighting for P-frames */

int b_weighted_bipred; /* implicit weighting for B-frames */

int i_direct_mv_pred; /* spatial vs temporal mv prediction */

int i_chroma_qp_offset;

int i_me_method; /* motion estimation algorithm to use (X264_ME_*) */

int i_me_range; /* integer pixel motion estimation search range (from predicted mv) */

int i_mv_range; /* maximum length of a mv (in pixels). -1 = auto, based on level */

int i_mv_range_thread; /* minimum space between threads. -1 = auto, based on number of threads. */

int i_subpel_refine; /* subpixel motion estimation quality */

int b_chroma_me; /* chroma ME for subpel and mode decision in P-frames */

int b_mixed_references; /* allow each mb partition to have its own reference number */

int i_trellis; /* trellis RD quantization */

int b_fast_pskip; /* early SKIP detection on P-frames */

int b_dct_decimate; /* transform coefficient thresholding on P-frames */

int i_noise_reduction; /* adaptive pseudo-deadzone */

float f_psy_rd; /* Psy RD strength */

float f_psy_trellis; /* Psy trellis strength */

int b_psy; /* Toggle all psy optimizations */

int b_mb_info; /* Use input mb_info data in x264_picture_t */

int b_mb_info_update; /* Update the values in mb_info according to the results of encoding. */

/* the deadzone size that will be used in luma quantization */

int i_luma_deadzone[2]; /* {inter, intra} */

int b_psnr; /* compute and print PSNR stats */

int b_ssim; /* compute and print SSIM stats */

} analyse;

/* Rate control parameters */

struct

{

int i_rc_method; /* X264_RC_* */

int i_qp_constant; /* 0 to (51 + 6*(x264_bit_depth-8)). 0=lossless */

int i_qp_min; /* min allowed QP value */

int i_qp_max; /* max allowed QP value */

int i_qp_step; /* max QP step between frames */

int i_bitrate;

float f_rf_constant; /* 1pass VBR, nominal QP */

float f_rf_constant_max; /* In CRF mode, maximum CRF as caused by VBV */

float f_rate_tolerance;

int i_vbv_max_bitrate;

int i_vbv_buffer_size;

float f_vbv_buffer_init; /* <=1: fraction of buffer_size. >1: kbit */

float f_ip_factor;

float f_pb_factor;

/* VBV filler: force CBR VBV and use filler bytes to ensure hard-CBR.

* Implied by NAL-HRD CBR. */

int b_filler;

int i_aq_mode; /* psy adaptive QP. (X264_AQ_*) */

float f_aq_strength;

int b_mb_tree; /* Macroblock-tree ratecontrol. */

int i_lookahead;

/* 2pass */

int b_stat_write; /* Enable stat writing in psz_stat_out */

char *psz_stat_out; /* output filename (in UTF-8) of the 2pass stats file */

int b_stat_read; /* Read stat from psz_stat_in and use it */

char *psz_stat_in; /* input filename (in UTF-8) of the 2pass stats file */

/* 2pass params (same as ffmpeg ones) */

float f_qcompress; /* 0.0 => cbr, 1.0 => constant qp */

float f_qblur; /* temporally blur quants */

float f_complexity_blur; /* temporally blur complexity */

x264_zone_t *zones; /* ratecontrol overrides */

int i_zones; /* number of zone_t's */

char *psz_zones; /* alternate method of specifying zones */

} rc;

/* Cropping Rectangle parameters: added to those implicitly defined by

non-mod16 video resolutions. */

struct

{

unsigned int i_left;

unsigned int i_top;

unsigned int i_right;

unsigned int i_bottom;

} crop_rect;

/* frame packing arrangement flag */

int i_frame_packing;

/* Muxing parameters */

int b_aud; /* generate access unit delimiters */

int b_repeat_headers; /* put SPS/PPS before each keyframe */

int b_annexb; /* if set, place start codes (4 bytes) before NAL units,

* otherwise place size (4 bytes) before NAL units. */

int i_sps_id; /* SPS and PPS id number */

int b_vfr_input; /* VFR input. If 1, use timebase and timestamps for ratecontrol purposes.

* If 0, use fps only. */

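int b_pulldown; /* use explicitly set timebase for CFR */

uint32_t i_fps_num; /* numerator of the frame rate; my guess is that a fraction is used because repeated floating-point arithmetic would accumulate error */

uint32_t i_fps_den; /* denominator of the frame rate */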

uint32_t i_timebase_num; /* Timebase numerator */

uint32_t i_timebase_den; /* Timebase denominator */

int b_tff;

/* Pulldown:

* The correct pic_struct must be passed with each input frame.

* The input timebase should be the timebase corresponding to the output framerate. This should be constant.

* e.g. for 3:2 pulldown timebase should be 1001/30000

* The PTS passed with each frame must be the PTS of the frame after pulldown is applied.

* Frame doubling and tripling require b_vfr_input set to zero (see H.264 Table D-1)

*

* Pulldown changes are not clearly defined in H.264. Therefore, it is the calling app's responsibility to manage this.

*/

int b_pic_struct;

/* Fake Interlaced.

*

* Used only when b_interlaced=0. Setting this flag makes it possible to flag the stream as PAFF interlaced yet

* encode all frames progressively. It is useful for encoding 25p and 30p Blu-Ray streams.

*/

int b_fake_interlaced;

/* Don't optimize header parameters based on video content, e.g. ensure that splitting an input video, compressing

* each part, and stitching them back together will result in identical SPS/PPS. This is necessary for stitching

* with container formats that don't allow multiple SPS/PPS. */

int b_stitchable;

int b_opencl; /* use OpenCL when available */

int i_opencl_device; /* specify count of GPU devices to skip, for CLI users */

void *opencl_device_id; /* pass explicit cl_device_id as void*, for API users */

char *psz_clbin_file; /* filename (in UTF-8) of the compiled OpenCL kernel cache file */

/* Slicing parameters */

int i_slice_max_size; /* Max size per slice in bytes; includes estimated NAL overhead. */

int i_slice_max_mbs; /* Max number of MBs per slice; overrides i_slice_count. */

int i_slice_min_mbs; /* Min number of MBs per slice */

int i_slice_count; /* Number of slices per frame: forces rectangular slices. */

int i_slice_count_max; /* Absolute cap on slices per frame; stops applying slice-max-size

* and slice-max-mbs if this is reached. */

/* Optional callback for freeing this x264_param_t when it is done being used.

* Only used when the x264_param_t sits in memory for an indefinite period of time,

* i.e. when an x264_param_t is passed to x264_t in an x264_picture_t or in zones.

* Not used when x264_encoder_reconfig is called directly. */

void (*param_free)( void* );

/* Optional low-level callback for low-latency encoding. Called for each output NAL unit

* immediately after the NAL unit is finished encoding. This allows the calling application

* to begin processing video data (e.g. by sending packets over a network) before the frame

* is done encoding.

*

* This callback MUST do the following in order to work correctly:

* 1) Have available an output buffer of at least size nal->i_payload*3/2 + 5 + 64.

* 2) Call x264_nal_encode( h, dst, nal ), where dst is the output buffer.

* After these steps, the content of nal is valid and can be used in the same way as if

* the NAL unit were output by x264_encoder_encode.

*

* This does not need to be synchronous with the encoding process: the data pointed to

* by nal (both before and after x264_nal_encode) will remain valid until the next

* x264_encoder_encode call. The callback must be re-entrant.

*

* This callback does not work with frame-based threads; threads must be disabled

* or sliced-threads enabled. This callback also does not work as one would expect

* with HRD -- since the buffering period SEI cannot be calculated until the frame

* is finished encoding, it will not be sent via this callback.

*

* Note also that the NALs are not necessarily returned in order when sliced threads is

* enabled. Accordingly, the variable i_first_mb and i_last_mb are available in

* x264_nal_t to help the calling application reorder the slices if necessary.

*

* When this callback is enabled, x264_encoder_encode does not return valid NALs;

* the calling application is expected to acquire all output NALs through the callback.

*

* It is generally sensible to combine this callback with a use of slice-max-mbs or

* slice-max-size.

*

* The opaque pointer is the opaque pointer from the input frame associated with this

* NAL unit. This helps distinguish between nalu_process calls from different sources,

* e.g. if doing multiple encodes in one process.

*/

void (*nalu_process) ( x264_t *h, x264_nal_t *nal, void *opaque );

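} x264_param_t;

From the definition of x264_param_t we can roughly infer which features x264 offers. The parse() function fills in its fields; for usage, see the previous article, h264 source code analysis [0].

That completes the x264 parameter setup. I won't go through how parse() handles each option, since it is mostly string parsing, but two functions it calls deserve attention: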
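One detail of the struct worth illustrating is the frame rate stored as the fraction i_fps_num / i_fps_den. The sketch below shows why integers are convenient here: NTSC-style rates such as 30000/1001 have no exact decimal form, but timestamps derived from the fraction stay exact. The helper names frame_time_us and ticks_per_frame are hypothetical and not part of x264; the second one only mirrors the "ticks/frame" formula that encode() uses later.

```c
#include <stdint.h>

/* Hypothetical helper (not an x264 function): start time of frame n in
 * microseconds for a frame rate of fps_num/fps_den, using integers only. */
static int64_t frame_time_us( int64_t n, uint32_t fps_num, uint32_t fps_den )
{
    return n * fps_den * 1000000 / fps_num;
}

/* Hypothetical helper mirroring the formula in encode():
 * ticks/frame = ticks/second / frames/second. */
static int64_t ticks_per_frame( uint32_t timebase_num, uint32_t timebase_den,
                                uint32_t fps_num, uint32_t fps_den )
{
    return (int64_t)timebase_den * fps_den / timebase_num / fps_num;
}
```

For example, 30000 frames at 30000/1001 fps last exactly 1001 seconds, which the integer version reproduces without rounding drift.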

select_output()

static int select_output( const char *muxer, char *filename, x264_param_t *param )

{

/* get the file extension */

const char *ext = get_filename_extension( filename );

if( !strcmp( filename, "-" ) || strcasecmp( muxer, "auto" ) )

ext = muxer;

if( !strcasecmp( ext, "mp4" ) )

{

#if HAVE_GPAC || HAVE_LSMASH

cli_output = mp4_output;

param->b_annexb = 0;

param->b_repeat_headers = 0;

if( param->i_nal_hrd == X264_NAL_HRD_CBR )

{

x264_cli_log( "x264", X264_LOG_WARNING, "cbr nal-hrd is not compatible with mp4\n" );

param->i_nal_hrd = X264_NAL_HRD_VBR;

}

#else

x264_cli_log( "x264", X264_LOG_ERROR, "not compiled with MP4 output support\n" );

return -1;

#endif

}

else if( !strcasecmp( ext, "mkv" ) )

{

cli_output = mkv_output;

param->b_annexb = 0;

param->b_repeat_headers = 0;

}

else if( !strcasecmp( ext, "flv" ) )

{

cli_output = flv_output;

param->b_annexb = 0;

param->b_repeat_headers = 0;

}

else

cli_output = raw_output;

return 0;

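}

The output container can be mp4, flv, or mkv; any other extension falls back to raw output, which writes the encoded NAL units to the file unchanged (a raw H.264 byte stream). Depending on the type, cli_output is set to mp4_output, flv_output, mkv_output, or raw_output.

Here I'll look at the simplest case, the raw_output type: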
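The selection rule in select_output() can be sketched in isolation. This is a simplified stand-in, not the x264 code: pick_muxer, file_extension, and equals_nocase are hypothetical names, the function returns a muxer name instead of assigning cli_output, and file_extension assumes an empty string is acceptable when the name has no dot.

```c
#include <ctype.h>
#include <string.h>

/* Case-insensitive equality; a portable stand-in for !strcasecmp(). */
static int equals_nocase( const char *a, const char *b )
{
    while( *a && *b && tolower( (unsigned char)*a ) == tolower( (unsigned char)*b ) )
        a++, b++;
    return *a == *b;
}

/* Text after the last '.', or "" when there is none (an assumption of
 * this sketch, not necessarily what get_filename_extension() does). */
static const char *file_extension( const char *filename )
{
    const char *dot = strrchr( filename, '.' );
    return dot ? dot + 1 : "";
}

/* Returns the name of the muxer that the rule would select. */
static const char *pick_muxer( const char *muxer, const char *filename )
{
    const char *ext = file_extension( filename );
    /* writing to stdout, or an explicit muxer, overrides the extension */
    if( !strcmp( filename, "-" ) || !equals_nocase( muxer, "auto" ) )
        ext = muxer;
    if( equals_nocase( ext, "mp4" ) ) return "mp4";
    if( equals_nocase( ext, "mkv" ) ) return "mkv";
    if( equals_nocase( ext, "flv" ) ) return "flv";
    return "raw";   /* everything else is written as a raw stream */
}
```

With this rule, pick_muxer( "auto", "out.mkv" ) selects mkv, while an unknown extension such as "out.264" or output to "-" ends up as raw.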

static int open_file( char *psz_filename, hnd_t *p_handle, cli_output_opt_t *opt )

{

if( !strcmp( psz_filename, "-" ) )

*p_handle = stdout;

else if( !(*p_handle = x264_fopen( psz_filename, "w+b" )) )

return -1;

return 0;

}

static int set_param( hnd_t handle, x264_param_t *p_param )

{

return 0;

}

static int write_headers( hnd_t handle, x264_nal_t *p_nal )

{

int size = p_nal[0].i_payload + p_nal[1].i_payload + p_nal[2].i_payload;

if( fwrite( p_nal[0].p_payload, size, 1, (FILE*)handle ) )

return size;

return -1;

}

static int write_frame( hnd_t handle, uint8_t *p_nalu, int i_size, x264_picture_t *p_picture )

{

if( fwrite( p_nalu, i_size, 1, (FILE*)handle ) )

return i_size;

return -1;

}

static int close_file( hnd_t handle, int64_t largest_pts, int64_t second_largest_pts )

{

if( !handle || handle == stdout )

return 0;

return fclose( (FILE*)handle );

}

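const cli_output_t raw_output = { open_file, set_param, write_headers, write_frame, close_file };

The raw_output code is simple: it is just a thin wrapper around file operations. The other output types live in the output/ directory of the source tree, if you are curious.

select_input()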
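The pattern behind cli_output_t is a table of function pointers: the caller only talks to the table, so a different container can be swapped in without touching the caller. Below is a minimal sketch of that idea with a reduced, hypothetical interface (sink_t, raw_sink, and write_all are illustrative names, not x264 types).

```c
#include <stdio.h>

/* A reduced, hypothetical version of an output interface. */
typedef struct
{
    int (*open_file)( const char *name, FILE **handle );
    int (*write_frame)( FILE *handle, const unsigned char *data, int size );
    int (*close_file)( FILE *handle );
} sink_t;

static int raw_open( const char *name, FILE **handle )
{
    *handle = fopen( name, "wb" );
    return *handle ? 0 : -1;
}

static int raw_write( FILE *handle, const unsigned char *data, int size )
{
    /* fwrite returns the number of complete items written: 1 on success */
    return fwrite( data, (size_t)size, 1, handle ) ? size : -1;
}

static int raw_close( FILE *handle )
{
    return handle ? fclose( handle ) : 0;
}

static const sink_t raw_sink = { raw_open, raw_write, raw_close };

/* The caller goes through the table only. Returns bytes written or -1. */
static int write_all( const sink_t *sink, const char *name,
                      const unsigned char *data, int size )
{
    FILE *f;
    if( sink->open_file( name, &f ) )
        return -1;
    int written = sink->write_frame( f, data, size );
    if( sink->close_file( f ) )
        return -1;
    return written;
}
```

Adding another format then means defining three more functions and one more table, which is roughly how the mp4, mkv, and flv outputs sit next to raw_output.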

static int select_input( const char *demuxer, char *used_demuxer, char *filename,hnd_t *p_handle, video_info_t *info, cli_input_opt_t *opt )

{

int b_auto = !strcasecmp( demuxer, "auto" );

const char *ext = b_auto ? get_filename_extension( filename ) : "";

int b_regular = strcmp( filename, "-" );

if( !b_regular && b_auto )

ext = "raw";

b_regular = b_regular && x264_is_regular_file_path( filename );

if( b_regular )

{

FILE *f = x264_fopen( filename, "r" );

if( f )

{

b_regular = x264_is_regular_file( f );

fclose( f );

}

}

const char *module = b_auto ? ext : demuxer;

if( !strcasecmp( module, "avs" ) || !strcasecmp( ext, "d2v" ) || !strcasecmp( ext, "dga" ) )

{

#if HAVE_AVS

cli_input = avs_input;

module = "avs";

#else

x264_cli_log( "x264", X264_LOG_ERROR, "not compiled with AVS input support\n" );

return -1;

#endif

}

else if( !strcasecmp( module, "y4m" ) )

cli_input = y4m_input;

else if( !strcasecmp( module, "raw" ) || !strcasecmp( ext, "yuv" ) )

cli_input = raw_input;

else

{

#if HAVE_FFMS

if( b_regular && (b_auto || !strcasecmp( demuxer, "ffms" )) &&

!ffms_input.open_file( filename, p_handle, info, opt ) )

{

module = "ffms";

b_auto = 0;

cli_input = ffms_input;

}

#endif

#if HAVE_LAVF

if( (b_auto || !strcasecmp( demuxer, "lavf" )) &&

!lavf_input.open_file( filename, p_handle, info, opt ) )

{

module = "lavf";

b_auto = 0;

cli_input = lavf_input;

}

#endif

#if HAVE_AVS

if( b_regular && (b_auto || !strcasecmp( demuxer, "avs" )) &&

!avs_input.open_file( filename, p_handle, info, opt ) )

{

module = "avs";

b_auto = 0;

cli_input = avs_input;

}

#endif

if( b_auto && !raw_input.open_file( filename, p_handle, info, opt ) )

{

module = "raw";

b_auto = 0;

cli_input = raw_input;

}

FAIL_IF_ERROR( !(*p_handle), "could not open input file `%s' via any method!\n", filename );

}

strcpy( used_demuxer, module );

return 0;

}

Input selection works on the same principle as output selection.

The encoding driver: encode()
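One difference from the output side is the fallback branch of select_input(): when the demuxer is not decided by name, each candidate is asked to open the file in turn, and the first one that succeeds wins. The sketch below shows only that probing idea. It is a toy under stated assumptions: demuxer_t, probe_order, and probe_input are hypothetical, and the toy openers "recognize" a file purely by its suffix, which real demuxers do not.

```c
#include <stddef.h>
#include <string.h>

typedef struct
{
    const char *name;
    int (*open_file)( const char *filename );   /* 0 on success */
} demuxer_t;

static int ends_with( const char *s, const char *suffix )
{
    size_t n = strlen( s ), m = strlen( suffix );
    return n >= m && !strcmp( s + n - m, suffix );
}

/* Toy openers standing in for real demuxers. */
static int open_mkv( const char *f ) { return ends_with( f, ".mkv" ) ? 0 : -1; }
static int open_mp4( const char *f ) { return ends_with( f, ".mp4" ) ? 0 : -1; }
static int open_raw( const char *f ) { (void)f; return 0; } /* accepts anything */

static const demuxer_t probe_order[] = {
    { "mkv", open_mkv }, { "mp4", open_mp4 }, { "raw", open_raw },
};

/* Try each demuxer in order; the first that opens the file wins. When
 * forced is not "auto", only the demuxer with that name is tried.
 * Returns the chosen name, or NULL if nothing could open the file. */
static const char *probe_input( const char *forced, const char *filename )
{
    int b_auto = !strcmp( forced, "auto" );
    for( size_t i = 0; i < sizeof(probe_order) / sizeof(probe_order[0]); i++ )
    {
        const demuxer_t *d = &probe_order[i];
        if( (b_auto || !strcmp( forced, d->name )) && !d->open_file( filename ) )
            return d->name;
    }
    return NULL;
}
```

Placing the most permissive opener last matters: in this toy, raw accepts any file, so it must only be reached after the stricter candidates have declined.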

static int encode( x264_param_t *param, cli_opt_t *opt )

{

x264_t *h = NULL;

x264_picture_t pic;

cli_pic_t cli_pic;

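const cli_pulldown_t *pulldown = NULL; // shut up gcc (the NULL initializer only silences a "may be used uninitialized" warning)

int i_frame = 0; /* index of the input frame currently being encoded */

int i_frame_output = 0; /* number of frames output so far */

int64_t i_end, i_previous = 0, i_start = 0; /* encode end time, time of the previous progress update, and encode start time */

int64_t i_file = 0; /* size of the encoded output so far */

int i_frame_size; /* size of each frame after encoding */

int64_t last_dts = 0; /* DTS of the most recently output frame */

int64_t prev_dts = 0; /* DTS of the frame output before that */

int64_t first_dts = 0; /* DTS of the first output frame */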

# define MAX_PTS_WARNING 3 /* arbitrary */

int pts_warning_cnt = 0;

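int64_t largest_pts = -1; /* PTS values increase monotonically, so this is the PTS of the most recent frame */

int64_t second_largest_pts = -1; /* PTS of the frame before the most recent one */

int64_t ticks_per_frame;

double duration; /* duration of the encoded video, in seconds */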

double pulldown_pts = 0;

int retval = 0;

opt->b_progress &= param->i_log_level < X264_LOG_DEBUG;

/* set up pulldown */

if( opt->i_pulldown && !param->b_vfr_input )

{

param->b_pulldown = 1;

param->b_pic_struct = 1;

pulldown = &pulldown_values[opt->i_pulldown];

param->i_timebase_num = param->i_fps_den;

FAIL_IF_ERROR2( fmod( param->i_fps_num * pulldown->fps_factor, 1 ),

"unsupported framerate for chosen pulldown\n" );

param->i_timebase_den = param->i_fps_num * pulldown->fps_factor;

}

h = x264_encoder_open( param );

FAIL_IF_ERROR2( !h, "x264_encoder_open failed\n" );

x264_encoder_parameters( h, param );

FAIL_IF_ERROR2( cli_output.set_param( opt->hout, param ), "can't set outfile param\n" );

i_start = x264_mdate();

/* ticks/frame = ticks/second / frames/second */

ticks_per_frame = (int64_t)param->i_timebase_den * param->i_fps_den / param->i_timebase_num / param->i_fps_num;

FAIL_IF_ERROR2( ticks_per_frame < 1 && !param->b_vfr_input, "ticks_per_frame invalid: %"PRId64"\n", ticks_per_frame );

ticks_per_frame = X264_MAX( ticks_per_frame, 1 );

/* If headers are not repeated before every keyframe, write them once here at the start; the actual writing is done by cli_output.write_headers. */

if( !param->b_repeat_headers )

{

// Write SPS/PPS/SEI

x264_nal_t *headers;

int i_nal;

FAIL_IF_ERROR2( x264_encoder_headers( h, &headers, &i_nal ) < 0, "x264_encoder_headers failed\n" );

FAIL_IF_ERROR2( (i_file = cli_output.write_headers( opt->hout, headers )) < 0, "error writing headers to output file\n" );

}

if( opt->tcfile_out )

fprintf( opt->tcfile_out, "# timecode format v2\n" );

/* Encode frames */

for( ; !b_ctrl_c && (i_frame < param->i_frame_total || !param->i_frame_total); i_frame++ )

{

/* fetch the frame data to encode and store it in cli_pic */

if( filter.get_frame( opt->hin, &cli_pic, i_frame + opt->i_seek ) )

break;

x264_picture_init( &pic );

convert_cli_to_lib_pic( &pic, &cli_pic );

/* set the presentation timestamp (PTS) */

if( !param->b_vfr_input )

pic.i_pts = i_frame;

if( opt->i_pulldown && !param->b_vfr_input )

{

pic.i_pic_struct = pulldown->pattern[ i_frame % pulldown->mod ];

/* set the presentation timestamp (PTS) */

pic.i_pts = (int64_t)( pulldown_pts + 0.5 );

pulldown_pts += pulldown_frame_duration[pic.i_pic_struct];

}

else if( opt->timebase_convert_multiplier )

pic.i_pts = (int64_t)( pic.i_pts * opt->timebase_convert_multiplier + 0.5 );

if( pic.i_pts <= largest_pts )

{

if( cli_log_level >= X264_LOG_DEBUG || pts_warning_cnt < MAX_PTS_WARNING )

x264_cli_log( "x264", X264_LOG_WARNING, "non-strictly-monotonic pts at frame %d (%"PRId64" <= %"PRId64")\n",

i_frame, pic.i_pts, largest_pts );

else if( pts_warning_cnt == MAX_PTS_WARNING )

x264_cli_log( "x264", X264_LOG_WARNING, "too many nonmonotonic pts warnings, suppressing further ones\n" );

pts_warning_cnt++;

pic.i_pts = largest_pts + ticks_per_frame;

}

second_largest_pts = largest_pts;

largest_pts = pic.i_pts;

if( opt->tcfile_out )

fprintf( opt->tcfile_out, "%.6f\n", pic.i_pts * ((double)param->i_timebase_num / param->i_timebase_den) * 1e3 );

if( opt->qpfile )

parse_qpfile( opt, &pic, i_frame + opt->i_seek );

prev_dts = last_dts;

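/* Encode. The DTS is returned through last_dts and the size of the encoded data is returned as i_frame_size. Here pic carries input data, unlike the "flush delayed frames" loop below, which passes NULL */

i_frame_size = encode_frame( h, opt->hout, &pic, &last_dts );

/* i_frame_size may be 0: when B-frames are in use the encoder has to buffer later frames before it can emit one, which is also why the delayed frames must be flushed at the end */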

if( i_frame_size < 0 )

{

b_ctrl_c = 1; /* lie to exit the loop */

retval = -1;

}

else if( i_frame_size )

{

i_file += i_frame_size;

i_frame_output++;

if( i_frame_output == 1 )

/* if this is the first frame output, initialize all the DTS trackers */

first_dts = prev_dts = last_dts;

}

if( filter.release_frame( opt->hin, &cli_pic, i_frame + opt->i_seek ) )

break;

/* update status line (up to 1000 times per input file) */

if( opt->b_progress && i_frame_output )

i_previous = print_status( i_start, i_previous, i_frame_output, param->i_frame_total, i_file, param, 2 * last_dts - prev_dts - first_dts );

}

/* Flush delayed frames */

while( !b_ctrl_c && x264_encoder_delayed_frames( h ) )

{

prev_dts = last_dts;

/* as mentioned above, the third argument (the input picture) is NULL here */

i_frame_size = encode_frame( h, opt->hout, NULL, &last_dts );

if( i_frame_size < 0 )

{

b_ctrl_c = 1; /* lie to exit the loop */

retval = -1;

}

else if( i_frame_size )

{

i_file += i_frame_size;

i_frame_output++;

if( i_frame_output == 1 )

first_dts = prev_dts = last_dts;

}

if( opt->b_progress && i_frame_output )

i_previous = print_status( i_start, i_previous, i_frame_output, param->i_frame_total, i_file, param, 2 * last_dts - prev_dts - first_dts );

}

fail:

if( pts_warning_cnt >= MAX_PTS_WARNING && cli_log_level < X264_LOG_DEBUG )

x264_cli_log( "x264", X264_LOG_WARNING, "%d suppressed nonmonotonic pts warnings\n", pts_warning_cnt-MAX_PTS_WARNING );

/* duration algorithm fails when only 1 frame is output */

if( i_frame_output == 1 )

duration = (double)param->i_fps_den / param->i_fps_num;

else if( b_ctrl_c )

duration = (double)(2 * last_dts - prev_dts - first_dts) * param->i_timebase_num / param->i_timebase_den;

else

duration = (double)(2 * largest_pts - second_largest_pts) * param->i_timebase_num / param->i_timebase_den;

i_end = x264_mdate();

/* Erase progress indicator before printing encoding stats. */

if( opt->b_progress )

fprintf( stderr, " \r" );

if( h )

x264_encoder_close( h );

fprintf( stderr, "\n" );

if( b_ctrl_c )

fprintf( stderr, "aborted at input frame %d, output frame %d\n", opt->i_seek + i_frame, i_frame_output );

cli_output.close_file( opt->hout, largest_pts, second_largest_pts );

opt->hout = NULL;

if( i_frame_output > 0 )

{

double fps = (double)i_frame_output * (double)1000000 /

(double)( i_end - i_start );

fprintf( stderr, "encoded %d frames, %.2f fps, %.2f kb/s\n", i_frame_output, fps,

(double) i_file * 8 / ( 1000 * duration ) );

}

return retval;

}
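Two small pieces of bookkeeping in encode() are easy to miss: the fix-up of non-monotonic PTS values, and the duration estimate 2 * largest_pts - second_largest_pts. The sketch below isolates both. The pts_tracker_t type and its functions are hypothetical names for illustration; the arithmetic follows the listing above.

```c
#include <stdint.h>

typedef struct
{
    int64_t largest_pts;
    int64_t second_largest_pts;
} pts_tracker_t;

static void pts_tracker_init( pts_tracker_t *t )
{
    t->largest_pts = t->second_largest_pts = -1;
}

/* Returns the PTS to use for this frame: the input value if it is larger
 * than everything seen so far, otherwise one frame past the largest. */
static int64_t pts_tracker_push( pts_tracker_t *t, int64_t pts, int64_t ticks_per_frame )
{
    if( pts <= t->largest_pts )
        pts = t->largest_pts + ticks_per_frame;
    t->second_largest_pts = t->largest_pts;
    t->largest_pts = pts;
    return pts;
}

/* Duration in seconds. The last PTS only marks where the last frame
 * starts, so its length is estimated as (largest - second_largest) and
 * added on top: largest + (largest - second_largest). */
static double pts_tracker_duration( const pts_tracker_t *t,
                                    uint32_t timebase_num, uint32_t timebase_den )
{
    return (double)(2 * t->largest_pts - t->second_largest_pts)
           * timebase_num / timebase_den;
}
```

For three frames with PTS 0, 1, 2 and a timebase of 1/25, the estimate is (2*2 - 1) / 25 = 0.12 seconds, i.e. three frame durations rather than the two that largest_pts alone would suggest.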

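I have commented the key parts of encode() above. It relies on these key functions:

filter.get_frame(); // fetch the next frame to encode; this one is interesting and is discussed below

encode_frame( h, opt->hout, &pic, &last_dts ); /* encode; note that last_dts is an output parameter that receives the DTS of the encoded frame */

filter.release_frame() // release the frame

get_frame()

The filter is set up in parse(), which calls init_vid_filters() to initialize the filter chain.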

static int init_vid_filters( char *sequence, hnd_t *handle, video_info_t *info, x264_param_t *param, int output_csp )

{

/* Register all filters, which in practice links them into a linked list */

x264_register_vid_filters();

/* initialize baseline filters */

if( x264_init_vid_filter( "source", handle, &filter, info, param, NULL ) ) /* wrap demuxer into a filter */

return -1;

if( x264_init_vid_filter( "resize", handle, &filter, info, param, "normcsp" ) ) /* normalize csps to be of a known/supported format */

return -1;

if( x264_init_vid_filter( "fix_vfr_pts", handle, &filter, info, param, NULL ) ) /* fix vfr pts */

return -1;

/* parse filter chain */

for( char *p = sequence; p && *p; )

{

int tok_len = strcspn( p, "/" );

int p_len = strlen( p );

p[tok_len] = 0;

int name_len = strcspn( p, ":" );

p[name_len] = 0;

name_len += name_len != tok_len;

if( x264_init_vid_filter( p, handle, &filter, info, param, p + name_len ) )

return -1;

p += X264_MIN( tok_len+1, p_len );

}

/* force end result resolution */

if( !param->i_width && !param->i_height )

{

param->i_height = info->height;

param->i_width = info->width;

}

/* force the output csp to what the user specified (or the default) */

param->i_csp = info->csp;

int csp = info->csp & X264_CSP_MASK;

if( output_csp == X264_CSP_I420 && (csp < X264_CSP_I420 || csp >= X264_CSP_I422) )

param->i_csp = X264_CSP_I420;

else if( output_csp == X264_CSP_I422 && (csp < X264_CSP_I422 || csp >= X264_CSP_I444) )

param->i_csp = X264_CSP_I422;

else if( output_csp == X264_CSP_I444 && (csp < X264_CSP_I444 || csp >= X264_CSP_BGR) )

param->i_csp = X264_CSP_I444;

else if( output_csp == X264_CSP_RGB && (csp < X264_CSP_BGR || csp > X264_CSP_RGB) )

param->i_csp = X264_CSP_RGB;

param->i_csp |= info->csp & X264_CSP_HIGH_DEPTH;

/* if the output range is not forced, assign it to the input one now */

if( param->vui.b_fullrange == RANGE_AUTO )

param->vui.b_fullrange = info->fullrange;

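/* Now that the output resolution and colorspace are settled, initialize the resize filter once more to guard against the current resolution or colorspace being unsupported */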

if( x264_init_vid_filter( "resize", handle, &filter, info, param, NULL ) )

return -1;

char args[20];

sprintf( args, "bit_depth=%d", x264_bit_depth );

if( x264_init_vid_filter( "depth", handle, &filter, info, param, args ) )

return -1;

return 0;

}

Now let's look at get_frame(), taking the resize filter as an example of how it is written:

static int get_frame( hnd_t handle, cli_pic_t *output, int frame )

{

resizer_hnd_t *h = handle;

if( h->prev_filter.get_frame( h->prev_hnd, output, frame ) )

return -1;

if( h->variable_input && check_resizer( h, output ) )

return -1;

h->working = 1;

if( h->pre_swap_chroma )

XCHG( uint8_t*, output->img.plane[1], output->img.plane[2] );

if( h->ctx )

{

sws_scale( h->ctx, (const uint8_t* const*)output->img.plane, output->img.stride,0, output->img.height, h->buffer.img.plane, h->buffer.img.stride );

output->img = h->buffer.img; /* copy img data */

}

else

output->img.csp = h->dst_csp;

if( h->post_swap_chroma )

XCHG( uint8_t*, output->img.plane[1], output->img.plane[2] );

return 0;

}

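Note the call h->prev_filter.get_frame( h->prev_hnd, output, frame ): it invokes the get_frame of the previous filter. What about the other filters? Looking through the filters that the source supports, every one except the source filter also calls its prev_filter's get_frame. The reason is easy to see: the source filter is the first filter in the chain, so it has no prev_filter. It wraps the read_frame method of cli_input, so its job is demuxing.

In other words, a single call to get_frame walks through the get_frame of every filter in the chain, and each filter does its own work along the way. The resize filter above, for example, calls sws_scale( h->ctx, (const uint8_t* const*)output->img.plane, output->img.stride,0, output->img.height, h->buffer.img.plane, h->buffer.img.stride ); from FFmpeg's libswscale to do the scaling. Taking the idea a step further, if you wanted to add something like a watermark, you could write a custom filter and hook it into this chain.

encode_frame()

Next comes the most important part, the encoding itself; everything so far has been preparation for it.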
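The chaining described above can be reduced to a few lines. The following is a simplified sketch, not the x264 filter interface: filter_t here is a hypothetical struct that stores the previous filter directly, the "frame" is just a byte buffer, and the invert filter stands in for any custom step such as a watermark.

```c
#include <stddef.h>
#include <stdint.h>

typedef struct filter_t filter_t;
struct filter_t
{
    int (*get_frame)( filter_t *self, uint8_t *pixels, size_t n, int frame );
    filter_t *prev;     /* NULL for the source filter */
};

/* Source filter: head of the chain, so it produces the data itself
 * (in this toy, a simple pattern instead of demuxed video). */
static int source_get_frame( filter_t *self, uint8_t *pixels, size_t n, int frame )
{
    (void)self;
    for( size_t i = 0; i < n; i++ )
        pixels[i] = (uint8_t)(frame + i);
    return 0;
}

/* A custom filter: first pull the frame from the previous filter,
 * then do its own work on it (here, invert every sample). */
static int invert_get_frame( filter_t *self, uint8_t *pixels, size_t n, int frame )
{
    if( self->prev->get_frame( self->prev, pixels, n, frame ) )
        return -1;
    for( size_t i = 0; i < n; i++ )
        pixels[i] = (uint8_t)(255 - pixels[i]);
    return 0;
}
```

Building a chain is then a matter of linking structs, e.g. filter_t source = { source_get_frame, NULL }; filter_t invert = { invert_get_frame, &source };. Calling get_frame on the last filter pulls the frame through every stage, which is the behavior the paragraph above describes.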

static int encode_frame( x264_t *h, hnd_t hout, x264_picture_t *pic, int64_t *last_dts )

{

x264_picture_t pic_out;

x264_nal_t *nal;

int i_nal;

int i_frame_size = 0;

/* encode */

i_frame_size = x264_encoder_encode( h, &nal, &i_nal, pic, &pic_out );

FAIL_IF_ERROR( i_frame_size < 0, "x264_encoder_encode failed\n" );

if( i_frame_size )

{

/* write the encoded data to the file */

i_frame_size = cli_output.write_frame( hout, nal[0].p_payload, i_frame_size, &pic_out );

*last_dts = pic_out.i_dts;

}

return i_frame_size;

}

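encode_frame() calls the actual encoding API, x264_encoder_encode(), and then writes the encoded data to the file through cli_output's write_frame method.

Summary

Everything covered so far is preparation; we have not yet entered the actual encoding process. Even so, the amount of code is already considerable. Let me just say: reading the fucking source code is really boring!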
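Because encode_frame() can return 0 while the encoder is still buffering, the caller needs the two-phase loop seen in encode(): feed all input, then keep calling with NULL until nothing is delayed. The toy below imitates that contract without libx264. Everything in it is an assumption for illustration: toy_encoder_t, the fixed TOY_DELAY of two frames, and the return value of 1 per output frame (the real call returns a size in bytes).

```c
#include <stddef.h>

#define TOY_DELAY 2   /* assumed buffering depth of the toy encoder */

typedef struct
{
    int queued;       /* frames accepted but not yet output */
} toy_encoder_t;

/* Returns 1 if a frame was output, 0 if the encoder is still buffering.
 * A NULL frame means "no more input: flush one buffered frame". */
static int toy_encode( toy_encoder_t *enc, const int *frame )
{
    if( frame )
    {
        enc->queued++;
        if( enc->queued <= TOY_DELAY )
            return 0;             /* still filling the buffer */
    }
    else if( !enc->queued )
        return 0;                 /* nothing left to flush */
    enc->queued--;
    return 1;
}

static int toy_delayed_frames( const toy_encoder_t *enc )
{
    return enc->queued;
}

/* Drive the toy the way encode() drives x264: input loop, then flush.
 * Returns the total number of frames output. */
static int encode_all( int n_frames, int *out_during_input )
{
    toy_encoder_t enc = { 0 };
    int output = 0;
    for( int i = 0; i < n_frames; i++ )
        output += toy_encode( &enc, &i );
    *out_during_input = output;
    while( toy_delayed_frames( &enc ) )
        output += toy_encode( &enc, NULL );
    return output;
}
```

With five input frames, only three come out during the input loop; the remaining two appear during the flush. Skipping the flush loop would silently drop them.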
