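1. Prepare the required software

1.1 hadoop-2.6.0-cdh5.7.0-src.tar.gz

1.2 jdk-7u80-linux-x64.tar.gz (the JDK must be version 1.7; with 1.8 the build failed as soon as compilation started)

1.3 apache-maven-3.3.9-bin.tar.gz (version 3.0 or later is recommended)

1.4 protobuf-2.5.0.tar.gz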

2. Build Hadoop

First, download the packages listed above to the server.

2.1 Install dependencies

```bash
[root@hadoop001 ~]# yum install -y gcc gcc-c++ make cmake svn ncurses-devel
[root@hadoop001 ~]# yum install -y openssl openssl-devel svn ncurses-devel zlib-devel libtool
[root@hadoop001 ~]# yum install -y snappy snappy-devel bzip2 bzip2-devel lzo lzo-devel lzop autoconf automake
```
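The `yum` commands above install both command-line tools and `-devel` header packages. Before starting a long build, a quick check that the tools actually ended up on `PATH` can save time. This is a sketch: `missing_tools` is a hypothetical helper, and it only checks commands, so library packages such as `zlib-devel` or `snappy-devel` still have to be checked separately (for example with `rpm -q`).

```bash
#!/usr/bin/env bash
# Hypothetical helper: print every command name given as an argument that
# is NOT found on PATH. Returns 0 if all are present, 1 otherwise.
missing_tools() {
  local tool status=0
  for tool in "$@"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      echo "$tool"
      status=1
    fi
  done
  return "$status"
}
```

For example, `missing_tools gcc g++ make cmake autoconf automake libtool svn` prints nothing and returns 0 when all of these commands are installed.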

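2.2 Install the JDK

```bash
[root@hadoop001 ~]# mkdir /usr/java/
[root@hadoop001 ~]# cd /usr/java/
```

Upload or download the JDK archive into /usr/java/ and extract it. After extracting, check the owner and group of the extracted files and correct them (for example with `chown`) if necessary.

Then configure the environment variables in /etc/profile by adding:

```bash
export JAVA_HOME=/usr/java/jdk1.7.0_80
export PATH=$JAVA_HOME/bin/:$PATH
```

Reload the profile and verify the installation:

```bash
[root@hadoop001 java]# source /etc/profile
[root@hadoop001 jdk1.7.0_80]# java -version
java version "1.7.0_80"
Java(TM) SE Runtime Environment (build 1.7.0_80-b15)
Java HotSpot(TM) 64-Bit Server VM (build 24.80-b11, mixed mode)
```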
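Because the build is sensitive to the JDK version, it can help to fail fast when the wrong `java` comes first on `PATH`. The sketch below uses two hypothetical helpers, `java_version_from` and `is_java_17`, that parse the `java -version` banner shown above. Note that `java -version` writes to stderr, so it has to be redirected when piping.

```bash
#!/usr/bin/env bash
# Hypothetical helper: read `java -version` output on stdin and print the
# quoted version string, e.g. 1.7.0_80.
java_version_from() {
  sed -n 's/.*version "\([^"]*\)".*/\1/p' | head -n 1
}

# Hypothetical helper: succeed only when the version string is a 1.7.x release.
is_java_17() {
  case "$1" in
    1.7.*) return 0 ;;
    *) return 1 ;;
  esac
}
```

Usage: `v=$(java -version 2>&1 | java_version_from); is_java_17 "$v" || echo "expected JDK 1.7, found $v"`.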

2.3 Install and configure Maven

```bash
[root@hadoop001 java]# su - hadoop
[hadoop@hadoop001 ~]$ mkdir -p soft maven_repo source app
[hadoop@hadoop001 ~]$ cd soft/
[hadoop@hadoop001 soft]$ tar -zxvf ~/soft/apache-maven-3.3.9-bin.tar.gz -C ~/app/
[hadoop@hadoop001 soft]$ vi ~/.bash_profile
```

Add or modify the following lines. MAVEN_OPTS sets the memory available to Maven; if it is too small, the build can fail.

```bash
export MAVEN_HOME=/home/hadoop/app/apache-maven-3.3.9
export MAVEN_OPTS="-Xms1024m -Xmx1024m"
export PATH=$MAVEN_HOME/bin:$PATH
```

Reload the profile and check that `mvn` is found:

```bash
[hadoop@hadoop001 soft]$ source ~/.bash_profile
[hadoop@hadoop001 soft]$ which mvn
~/app/apache-maven-3.3.9/bin/mvn
```
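The Maven version can be checked the same way, from the first line of `mvn -v`, which for this setup starts with `Apache Maven 3.3.9`. A minimal sketch, assuming that output format; `maven_version_from` and `maven_at_least_3` are hypothetical helper names:

```bash
#!/usr/bin/env bash
# Hypothetical helper: read `mvn -v` output on stdin and print the version
# from the "Apache Maven X.Y.Z (...)" line.
maven_version_from() {
  awk '/^Apache Maven / { print $3; exit }'
}

# Hypothetical helper: succeed when the major version number is 3 or higher.
maven_at_least_3() {
  local major=${1%%.*}
  [ -n "$major" ] && [ "$major" -ge 3 ] 2>/dev/null
}
```

Usage: `maven_at_least_3 "$(mvn -v | maven_version_from)" && echo "Maven OK, MAVEN_OPTS=$MAVEN_OPTS"`.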

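Edit the Maven configuration file, and be very careful while doing so:

$MAVEN_HOME/conf/settings.xml (here /home/hadoop/app/apache-maven-3.3.9/conf/settings.xml)

Add the following mirror inside the `<mirrors>` element:

```xml
<mirror>
  <id>nexus-aliyun</id>
  <mirrorOf>central</mirrorOf>
  <name>Nexus aliyun</name>
  <url>http://maven.aliyun.com/nexus/content/groups/public</url>
</mirror>
```

Note: be careful when editing settings.xml. If the file is malformed, the build fails as soon as it starts. In addition, the Aliyun mirror does not host some of the Maven artifacts the build needs; these can be found at https://repository.cloudera.com/artifactory/cloudera-repos/.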
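Since a malformed settings.xml breaks the build immediately, one alternative to hand-editing is generating a minimal file. This sketch is an assumption, not the procedure used above: the hypothetical `write_settings` function backs up any existing file, writes a settings.xml whose only customization is the Aliyun mirror inside `<mirrors>`, and, if `xmllint` (from libxml2) happens to be installed, checks that the result is well-formed. Other customizations in an existing file are not carried over.

```bash
#!/usr/bin/env bash
# Hypothetical helper: write a minimal settings.xml containing only the
# Aliyun mirror. $1 is the destination; an existing file is saved as $1.bak.
write_settings() {
  local dest=$1
  if [ -f "$dest" ]; then
    cp "$dest" "$dest.bak"
  fi
  cat > "$dest" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<settings xmlns="http://maven.apache.org/SETTINGS/1.0.0"
          xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
          xsi:schemaLocation="http://maven.apache.org/SETTINGS/1.0.0 http://maven.apache.org/xsd/settings-1.0.0.xsd">
  <mirrors>
    <mirror>
      <id>nexus-aliyun</id>
      <mirrorOf>central</mirrorOf>
      <name>Nexus aliyun</name>
      <url>http://maven.aliyun.com/nexus/content/groups/public</url>
    </mirror>
  </mirrors>
</settings>
EOF
  # Optional well-formedness check, only if xmllint is available.
  if command -v xmllint >/dev/null 2>&1; then
    xmllint --noout "$dest"
  fi
}
```

Usage: `write_settings "$MAVEN_HOME/conf/settings.xml"`.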

2.4 Install protobuf

```bash
[hadoop@hadoop001 soft]$ tar -zxvf protobuf-2.5.0.tar.gz -C ~/app/
[hadoop@hadoop001 soft]$ cd ~/app/protobuf-2.5.0
[hadoop@hadoop001 protobuf-2.5.0]$ ./configure --prefix=/home/hadoop/app/protobuf-2.5.0
[hadoop@hadoop001 protobuf-2.5.0]$ make
[hadoop@hadoop001 protobuf-2.5.0]$ make install
[hadoop@hadoop001 protobuf-2.5.0]$ vi ~/.bash_profile
```

Add the following lines to ~/.bash_profile:

```bash
export PROTOBUF_HOME=/home/hadoop/app/protobuf-2.5.0
export PATH=$PROTOBUF_HOME/bin:$PATH
```

Reload the profile and check the version:

```bash
[hadoop@hadoop001 protobuf-2.5.0]$ source ~/.bash_profile
[hadoop@hadoop001 protobuf-2.5.0]$ protoc --version
libprotoc 2.5.0
```
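Hadoop 2.x is built against protobuf 2.5.0, so a `protoc` with a different version earlier on `PATH` (for example one installed by the system package manager) would be expected to make the build fail. A one-line check on the `protoc --version` output, as a sketch with the hypothetical helper name `protoc_is_250`:

```bash
#!/usr/bin/env bash
# Hypothetical helper: read `protoc --version` output (e.g. "libprotoc 2.5.0")
# on stdin and succeed only if the version is exactly 2.5.0.
protoc_is_250() {
  [ "$(awk '{ print $2; exit }')" = "2.5.0" ]
}
```

Usage: `protoc --version | protoc_is_250 || echo "wrong protoc: $(command -v protoc)"`.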

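2.5 Compile Hadoop

```bash
[hadoop@hadoop001 ~]$ tar -zxvf ~/soft/hadoop-2.6.0-cdh5.7.0-src.tar.gz -C ~/source/
[hadoop@hadoop001 ~]$ cd ~/source/hadoop-2.6.0-cdh5.7.0
[hadoop@hadoop001 hadoop-2.6.0-cdh5.7.0]$ mvn clean package -Pdist,native -DskipTests -Dtar
# -Pdist,native : activate the dist profile (build the binary distribution) and the native profile (compile the Hadoop native libraries)
# -DskipTests   : skip the tests
# -Dtar         : package the distribution as a tar.gz archive
```

The build starts now. It takes a long time, so be patient.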
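Because the build runs for a long time, it is handy to keep the full Maven output in a file for later troubleshooting. The wrapper below is a sketch: `build_hadoop` is a hypothetical helper that runs exactly the `mvn` command above through `tee` and returns Maven's own exit status. The `MVN` variable is an assumption added only so the function can be dry-run (for example with `MVN=echo`).

```bash
#!/usr/bin/env bash
# Hypothetical helper: run the Hadoop build in source dir $1 and save the
# combined output to log file $2. MVN overrides the mvn command (dry runs).
build_hadoop() {
  local src=$1 log=$2
  # Make a relative log path absolute before changing directory.
  case $log in
    /*) ;;
    *) log=$PWD/$log ;;
  esac
  (
    cd "$src" || exit 1
    # Exit with mvn's status (PIPESTATUS[0]) rather than tee's.
    "${MVN:-mvn}" clean package -Pdist,native -DskipTests -Dtar 2>&1 | tee "$log"
    exit "${PIPESTATUS[0]}"
  )
}
```

Usage: `build_hadoop ~/source/hadoop-2.6.0-cdh5.7.0 ~/hadoop-build.log`. If the SSH session might drop during the build, running it inside `screen` or `tmux` is a reasonable choice.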

3. Problems encountered during the build

3.1 Problem 1

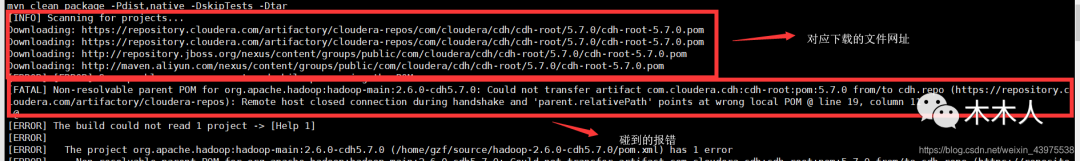

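By default, Maven stores downloaded artifacts in ~/.m2/repository. The exact location of each file follows the path in its download URL (the screenshot above shows an example of such a download URL).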
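The mapping from a dependency to its file follows Maven's standard repository layout: the groupId's dots become directories, followed by the artifactId, the version, and a file named `artifactId-version.extension`. The same relative path is used under `~/.m2/repository` and under the remote repository URL. A sketch of that mapping, with `artifact_path` as a hypothetical helper:

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the repository-relative path for
# groupId ($1), artifactId ($2), version ($3) and file extension ($4).
artifact_path() {
  local group=$1 artifact=$2 version=$3 ext=$4
  local group_dir
  # org.mortbay.jetty -> org/mortbay/jetty
  group_dir=$(printf '%s' "$group" | tr . /)
  printf '%s/%s/%s/%s-%s.%s\n' "$group_dir" "$artifact" "$version" "$artifact" "$version" "$ext"
}
```

For example, to fetch a missing POM from the Cloudera repository into the local repository by hand: `p=$(artifact_path org.mortbay.jetty jetty-util 6.1.26.cloudera.4 pom); curl -fL --create-dirs -o ~/.m2/repository/$p https://repository.cloudera.com/artifactory/cloudera-repos/$p`. For a jar dependency, the `.jar` usually has to be fetched as well as the `.pom`.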

3.2 Problem 2

The error below means that a dependency could not be downloaded, so the build cannot continue. Look up the download URL of the corresponding file under the repository path described above and download it manually:

```
Failed to execute goal on project hadoop-auth: Could not resolve dependencies for project org.apache.hadoop:hadoop-auth:jar:2.6.0-cdh5.7.0: Failed to collect dependencies at org.mortbay.jetty:jetty-util:jar:6.1.26.cloudera.4: Failed to read artifact descriptor for org.mortbay.jetty:jetty-util:jar:6.1.26.cloudera.4: Could not transfer artifact org.mortbay.jetty:jetty-util:pom:6.1.26.cloudera.4 from/to cdh.repo (https://repository.cloudera.com/artifactory/cloudera-repos): Remote host closed connection during handshake: SSL peer shut down incorrectly
```
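When several artifacts fail, reading the coordinates out of each error line by hand gets tedious. The sketch below (hypothetical `parse_transfer_error`) extracts the groupId, artifactId, type, version and repository URL from a `Could not transfer artifact ... from/to ... (...)` message like the one above. It assumes the coordinates have no classifier, i.e. exactly four colon-separated parts.

```bash
#!/usr/bin/env bash
# Hypothetical helper: read Maven error lines on stdin and, for each
# "Could not transfer artifact G:A:TYPE:V from/to ID (URL)" message, print
# "groupId artifactId type version repositoryURL". Classifiers unsupported.
parse_transfer_error() {
  sed -n 's/.*Could not transfer artifact \([^:]*\):\([^:]*\):\([^:]*\):\([^ ]*\) from\/to [^ ]* (\([^)]*\)).*/\1 \2 \3 \4 \5/p'
}
```

Usage, on a saved build log: `grep 'Could not transfer artifact' build.log | parse_transfer_error`.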

3.3 Problem 3

apache-tomcat-6.0.44.tar.gz could not be found. You can either run the build on a server in Hong Kong, or download Tomcat yourself and upload it to the corresponding directories. For hadoop-2.6.0-cdh5.7.0, the Tomcat archive is stored in:

```
~/source/hadoop-2.6.0-cdh5.7.0/hadoop-common-project/hadoop-kms/downloads
~/source/hadoop-2.6.0-cdh5.7.0/hadoop-hdfs-project/hadoop-hdfs-httpfs/downloads/
```
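Copying the Tomcat tarball into both directories can be scripted. The sketch below uses a hypothetical `place_tomcat` helper; it assumes the tarball was downloaded manually, and it runs `gzip -t` on it first, since a truncated download could otherwise fail later in the build.

```bash
#!/usr/bin/env bash
# Hypothetical helper: copy a manually downloaded Tomcat tarball ($1) into
# the two downloads/ directories of the Hadoop source tree ($2).
place_tomcat() {
  local tarball=$1 src=$2 dir
  if [ ! -f "$tarball" ] || ! gzip -t "$tarball" 2>/dev/null; then
    echo "missing or corrupt tarball: $tarball" >&2
    return 1
  fi
  for dir in \
    "$src/hadoop-common-project/hadoop-kms/downloads" \
    "$src/hadoop-hdfs-project/hadoop-hdfs-httpfs/downloads"; do
    mkdir -p "$dir" && cp "$tarball" "$dir/" || return 1
  done
}
```

Usage: `place_tomcat ~/soft/apache-tomcat-6.0.44.tar.gz ~/source/hadoop-2.6.0-cdh5.7.0`.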

Build successful

If the output contains BUILD SUCCESS, the build has succeeded:

```
[INFO] Apache Hadoop Scheduler Load Simulator ............. SUCCESS [ 13.592 s]
[INFO] Apache Hadoop Tools Dist ........................... SUCCESS [ 12.042 s]
[INFO] Apache Hadoop Tools ................................ SUCCESS [ 0.094 s]
[INFO] Apache Hadoop Distribution ......................... SUCCESS [01:49 min]
[INFO] ------------------------------------------------------------------------
[INFO] BUILD SUCCESS
[INFO] ------------------------------------------------------------------------
[INFO] Total time: 37:39 min
[INFO] Finished at: 2019-04-07T16:48:42+08:00
[INFO] Final Memory: 200M/989M
[INFO] ------------------------------------------------------------------------
```
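If the build output was saved to a file, the result can be checked without scrolling through it. A sketch with the hypothetical helper `check_build_log`, assuming the Maven 3.3.9 reactor summary format shown above, where a failed module's line ends with `FAILURE [ <time>]`:

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the reactor summary lines of failed modules in
# Maven log $1, and succeed only if the log contains "[INFO] BUILD SUCCESS".
check_build_log() {
  local log=$1
  # e.g. "[INFO] Apache Hadoop Auth ....... FAILURE [  2.100 s]"
  grep -E '^\[INFO\] .* FAILURE \[' "$log"
  # Overall result of the build.
  grep -q '^\[INFO\] BUILD SUCCESS' "$log"
}
```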

Location of the tar package produced by the build:

```bash
[hadoop@hadoop001 repository]$ cd ~/source/hadoop-2.6.0-cdh5.7.0/hadoop-dist/target/
[hadoop@hadoop001 target]$ ll
total 563948
drwxrwxr-x 2 hadoop hadoop 4096 Jul 10 15:33 antrun
drwxrwxr-x 3 hadoop hadoop 4096 Jul 10 15:33 classes
-rw-rw-r-- 1 hadoop hadoop 1995 Jul 10 15:33 dist-layout-stitching.sh
-rw-rw-r-- 1 hadoop hadoop 687 Jul 10 15:33 dist-tar-stitching.sh
drwxrwxr-x 9 hadoop hadoop 4096 Jul 10 15:33 hadoop-2.6.0-cdh5.7.0
-rw-rw-r-- 1 hadoop hadoop 191842644 Jul 10 15:33 hadoop-2.6.0-cdh5.7.0.tar.gz
-rw-rw-r-- 1 hadoop hadoop 7313 Jul 10 15:33 hadoop-dist-2.6.0-cdh5.7.0.jar
-rw-rw-r-- 1 hadoop hadoop 385572232 Jul 10 15:33 hadoop-dist-2.6.0-cdh5.7.0-javadoc.jar
-rw-rw-r-- 1 hadoop hadoop 4852 Jul 10 15:33 hadoop-dist-2.6.0-cdh5.7.0-sources.jar
-rw-rw-r-- 1 hadoop hadoop 4852 Jul 10 15:33 hadoop-dist-2.6.0-cdh5.7.0-test-sources.jar
drwxrwxr-x 2 hadoop hadoop 4096 Jul 10 15:33 javadoc-bundle-options
drwxrwxr-x 2 hadoop hadoop 4096 Jul 10 15:33 maven-archiver
drwxrwxr-x 3 hadoop hadoop 4096 Jul 10 15:33 maven-shared-archive-resources
drwxrwxr-x 3 hadoop hadoop 4096 Jul 10 15:33 test-classes
drwxrwxr-x 2 hadoop hadoop 4096 Jul 10 15:33 test-dir
```
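To confirm that the native libraries compiled by `-Pnative` actually made it into `hadoop-2.6.0-cdh5.7.0.tar.gz`, the archive listing can be searched for `libhadoop.so` under `lib/native/`. A sketch with the hypothetical helper name `has_native_libs`:

```bash
#!/usr/bin/env bash
# Hypothetical helper: succeed if tarball $1 contains lib/native/libhadoop.so*
has_native_libs() {
  tar -tzf "$1" | grep -q '/lib/native/libhadoop\.so'
}
```

Usage: `has_native_libs ~/source/hadoop-2.6.0-cdh5.7.0/hadoop-dist/target/hadoop-2.6.0-cdh5.7.0.tar.gz && echo "native libs included"`.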

After the build, check whether the compiled Hadoop supports the various compression formats. Run the command from the unpacked distribution directory hadoop-dist/target/hadoop-2.6.0-cdh5.7.0:

```bash
[hadoop@hadoop001 hadoop-2.6.0-cdh5.7.0]$ bin/hadoop checknative
19/07/10 18:01:51 INFO bzip2.Bzip2Factory: Successfully loaded & initialized native-bzip2 library system-native
19/07/10 18:01:51 INFO zlib.ZlibFactory: Successfully loaded & initialized native-zlib library
Native library checking:
hadoop: true /home/gzf/source/hadoop-2.6.0-cdh5.7.0/hadoop-dist/target/hadoop-2.6.0-cdh5.7.0/lib/native/libhadoop.so.1.0.0
zlib: true /lib64/libz.so.1
snappy: true /usr/lib64/libsnappy.so.1
lz4: true revision:99
bzip2: true /lib64/libbz2.so.1
openssl: true /usr/lib64/libcrypto.so
```
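On a machine where some libraries are missing, the corresponding `checknative` lines show `false` instead of `true`. The sketch below (hypothetical `native_missing`) lists those library names, assuming the `name: true|false ...` line format shown above; other lines, such as the INFO log messages, are ignored.

```bash
#!/usr/bin/env bash
# Hypothetical helper: read `hadoop checknative` output on stdin, print the
# name of every library reported as "false", and return 1 if any was found.
native_missing() {
  awk '$1 ~ /:$/ && $2 == "false" { sub(/:$/, "", $1); print $1; bad = 1 }
       END { exit (bad ? 1 : 0) }'
}
```

Usage: `bin/hadoop checknative 2>&1 | native_missing || echo "some native libraries are missing"`.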
