Before setting up Hadoop high availability (HA), set up ZooKeeper first:

Follow the official documentation to install and deploy it:

http://zookeeper.apache.org/doc/r3.4.5/zookeeperAdmin.html#sc_systemReq

1. vim /etc/hosts

Add:

192.168.12.51 cm01

192.168.6.92 cm02

192.168.9.54 cm03
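The same entries can be added without opening an editor. The following is a minimal sketch, not part of the original steps: `add_host_entry` is a hypothetical helper that appends a mapping only when the hostname is not already present, so it can be re-run safely on every node.

```shell
# Append "ip name" to a hosts file unless that name is already mapped.
# $1 = hosts file, $2 = IP address, $3 = hostname
add_host_entry() {
  if ! grep -qw "$3" "$1" 2>/dev/null; then
    echo "$2 $3" >> "$1"
  fi
}

# Usage (run as root on every node):
#   add_host_entry /etc/hosts 192.168.12.51 cm01
#   add_host_entry /etc/hosts 192.168.6.92  cm02
#   add_host_entry /etc/hosts 192.168.9.54  cm03
```

Checking before appending keeps /etc/hosts free of duplicate lines when the script is run more than once.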

2. vim /etc/hostname and change it to the corresponding hostname

On each machine, run hostname cm0i (i=1,2,3) so that the new hostname takes effect immediately

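3. Configure passwordless SSH

Generate a key pair once, then copy the public key to each host:

ssh-keygen

ssh-copy-id root@[hostname]

For this cluster:

ssh-copy-id root@192.168.12.51

ssh-copy-id root@192.168.6.92

ssh-copy-id root@192.168.9.54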
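With more than a few hosts, the ssh-copy-id calls are easier to run as a loop. This is a sketch under stated assumptions: the host list reuses the names from /etc/hosts, and `DRY_RUN` is a hypothetical switch (on by default here) that prints the commands instead of running them.

```shell
# Hostnames from the /etc/hosts entries in step 1.
HOSTS="cm01 cm02 cm03"

# Print (DRY_RUN=1, the default in this sketch) or run ssh-copy-id per host.
copy_keys() {
  for host in $HOSTS; do
    if [ "${DRY_RUN:-1}" = "1" ]; then
      echo "ssh-copy-id root@${host}"
    else
      ssh-copy-id "root@${host}"
    fi
  done
}

copy_keys
```

Run it with `DRY_RUN=0` once the printed commands look right. Each node that needs to reach the others (for example, both NameNode hosts for sshfence) has to repeat this with its own key.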

4. Install Hadoop

wget http://archive.cloudera.com/cdh5/cdh/5/hadoop-2.6.0-cdh5.8.3.tar.gz

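Extract it into the target directory (tar -C requires the directory to exist, so create it first):

mkdir -p /usr/local/hadoop

tar -zxvf hadoop-2.6.0-cdh5.8.3.tar.gz -C /usr/local/hadoop

Edit the configuration files and create the directories they reference: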

core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://ha</value>

</property>

<property>

<name>ha.zookeeper.quorum</name>

<value>cm01:2181,cm02:2181,cm03:2181</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/var/lib/hadoop/log</value>

</property>

</configuration>

hdfs-site.xml

<property>

<name>dfs.namenode.name.dir</name>

<value>/var/lib/hadoop/hdfs</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>/var/lib/hadoop/data</value>

</property>

<!-- Set the HDFS nameservice to ha; this must match the value in core-site.xml -->

<property>

<name>dfs.nameservices</name>

<value>ha</value>

</property>

<!-- The ha nameservice has two NameNodes: nn1 and nn2 -->

<property>

<name>dfs.ha.namenodes.ha</name>

<value>nn1,nn2</value>

</property>

<!-- RPC address of nn1 -->

<property>

<name>dfs.namenode.rpc-address.ha.nn1</name>

<value>cm01:9000</value>

</property>

<!-- HTTP address of nn1 -->

<property>

<name>dfs.namenode.http-address.ha.nn1</name>

<value>cm01:50070</value>

</property>

<!-- RPC address of nn2 -->

<property>

<name>dfs.namenode.rpc-address.ha.nn2</name>

<value>cm02:9000</value>

</property>

<!-- HTTP address of nn2 -->

<property>

<name>dfs.namenode.http-address.ha.nn2</name>

<value>cm02:50070</value>

</property>

<!-- Location on the JournalNodes where the NameNodes' shared edit log (metadata) is stored -->

<property>

<name>dfs.namenode.shared.edits.dir</name>

<value>qjournal://cm01:8485;cm02:8485;cm03:8485/ha</value>

</property>

<!-- Local disk directory where each JournalNode stores its data -->

<property>

<name>dfs.journalnode.edits.dir</name>

<value>/usr/local/hadoop/data</value>

</property>

<!-- Enable automatic failover when a NameNode fails -->

<property>

<name>dfs.ha.automatic-failover.enabled</name>

<value>true</value>

</property>

<!-- Implementation used for automatic failover (the client failover proxy provider) -->

<property>

<name>dfs.client.failover.proxy.provider.ha</name>

<value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>

</property>

<property>

<name>ha.zookeeper.quorum</name>

<value>cm01:2181,cm02:2181,cm03:2181</value>

</property>

<!-- Fencing methods; separate multiple methods with newlines, one method per line -->

<property>

<name>dfs.ha.fencing.methods</name>

<value>

sshfence

shell(/bin/true)

</value>

</property>

<!-- The sshfence method requires passwordless SSH -->

<property>

<name>dfs.ha.fencing.ssh.private-key-files</name>

<value>/root/.ssh/id_rsa</value>

</property>

<!-- Connect timeout for the sshfence method, in milliseconds -->

<property>

<name>dfs.ha.fencing.ssh.connect-timeout</name>

<value>30000</value>

</property>
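The local directories named in core-site.xml and hdfs-site.xml above have to exist on the nodes that use them. A minimal sketch for creating them in one pass follows; the paths are copied from the configuration, while `make_hadoop_dirs` and its optional prefix argument are hypothetical conveniences for trying the script in a scratch directory first.

```shell
# Paths copied from hadoop.tmp.dir, dfs.namenode.name.dir,
# dfs.datanode.data.dir and dfs.journalnode.edits.dir above.
HADOOP_DIRS="/var/lib/hadoop/log /var/lib/hadoop/hdfs /var/lib/hadoop/data /usr/local/hadoop/data"

# $1 = optional prefix (hypothetical, for a trial run outside the real paths)
make_hadoop_dirs() {
  prefix="${1:-}"
  for d in $HADOOP_DIRS; do
    mkdir -p "${prefix}${d}"
  done
}

# Usage (as root on each node):
#   make_hadoop_dirs
```

mkdir -p is idempotent, so running this on a node where some of the directories already exist does no harm.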

yarn-site.xml

<!-- Enable ResourceManager high availability -->

<property>

<name>yarn.resourcemanager.ha.enabled</name>

<value>true</value>

</property>

<!-- Enable automatic failover -->

<property>

<name>yarn.resourcemanager.ha.automatic-failover.enabled</name>

<value>true</value>

</property>

<property>

<name>yarn.resourcemanager.ha.rm-ids</name>

<value>rm1,rm2</value>

</property>

<!-- Address of each RM (hostname only, no port) -->

<property>

<name>yarn.resourcemanager.hostname.rm1</name>

<value>cm01</value>

</property>

<property>

<name>yarn.resourcemanager.hostname.rm2</name>

<value>cm02</value>

</property>

<!-- Set this to rm1 on the first NameNode host and to rm2 on the second -->

<property>

<name>yarn.resourcemanager.ha.id</name>

<value>rm1</value>

</property>

<!-- Address of the ZooKeeper cluster -->

<property>

<name>yarn.resourcemanager.zk-address</name>

<value>cm01:2181,cm02:2181,cm03:2181</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

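5. Troubleshooting summary:

Problem 1:

start-yarn.sh

fails with:

localhost: Error: JAVA_HOME is not set and could not be found.

Export JAVA_HOME in hadoop-env.sh:

export JAVA_HOME=/usr/java/jdk1.8

JAVA_HOME is already set in the system environment, so why does it have to be set again? I am leaving this question open for now. A likely explanation is that the start scripts launch the daemons over ssh in non-interactive shells, which do not load the login profile where the variable was exported.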

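Problem 2:

The active ResourceManager started,

but the standby failed on startup with the following exception:

Error starting ResourceManager

org.apache.hadoop.yarn.webapp.WebAppException: Error starting http server

The cause was that yarn.resourcemanager.ha.id had not been changed on the second machine. Set it to rm2 for the second ResourceManager. The stale setting was:

yarn.resourcemanager.ha.id

rm1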
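Because the only per-host difference in yarn-site.xml is this one value, it is worth checking on each machine before starting the daemons. The sketch below is an illustration, not an official tool: `get_prop` is a hypothetical helper that prints the single-line value following a given property name, and it does not handle multi-line values such as dfs.ha.fencing.methods.

```shell
# Print the <value> that follows <name>NAME</name> in a Hadoop XML config.
# $1 = config file, $2 = property name
get_prop() {
  awk -v name="$2" '
    index($0, "<name>" name "</name>") { found = 1 }
    found && match($0, /<value>[^<]*<\/value>/) {
      # Strip the 7-character <value> and 8-character </value> tags.
      print substr($0, RSTART + 7, RLENGTH - 15)
      exit
    }
  ' "$1"
}

# Usage, assuming HADOOP_CONF_DIR points at the config directory:
#   get_prop "$HADOOP_CONF_DIR/yarn-site.xml" yarn.resourcemanager.ha.id
```

On cm01 this should print rm1 and on cm02 it should print rm2; if both print the same ID, the standby ResourceManager will fail as described above.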

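Problem 3: The page at activeResourceManagerIp:8088 reported 24 GB of memory and 24 vcores, but the three machines actually have 16 GB and 8 vcores.

The following settings need to be added to yarn-site.xml:

<!-- Minimum memory the RM allocates to a container: 1024 MB -->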

<property>

<name>yarn.scheduler.minimum-allocation-mb</name>

<value>1024</value>

</property>

<!-- Increment by which the RM grows a container's memory allocation -->

<property>

<name>yarn.scheduler.increment-allocation-mb</name>

<value>512</value>

</property>

<!-- Maximum memory the RM allocates to a container: 2048 MB -->

<property>

<name>yarn.scheduler.maximum-allocation-mb</name>

<value>2048</value>

</property>

<!-- Vcores the RM allocates to a container (minimum, increment, maximum) -->

<property>

<name>yarn.scheduler.minimum-allocation-vcores</name>

<value>1</value>

</property>

<property>

<name>yarn.scheduler.increment-allocation-vcores</name>

<value>1</value>

</property>

<property>

<name>yarn.scheduler.maximum-allocation-vcores</name>

<value>2</value>

</property>

<!-- Memory available to a single NodeManager -->

<property>

<name>yarn.nodemanager.resource.memory-mb</name>

<value>3072</value>

</property>

<!-- Vcores available to a single NodeManager -->

<property>

<name>yarn.nodemanager.resource.cpu-vcores</name>

<value>2</value>

</property>

<!-- Virtual-to-physical memory ratio; 2.1 is the default, listed here only to show that the setting exists -->

<property>

<name>yarn.nodemanager.vmem-pmem-ratio</name>

<value>2.1</value>

</property>
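The 24 GB and 24 vcores shown in the UI are consistent with three NodeManagers each advertising the stock defaults of yarn.nodemanager.resource.memory-mb (8192) and yarn.nodemanager.resource.cpu-vcores (8), since a NodeManager reports what it is configured with rather than what the hardware has. The arithmetic can be sketched as below; the node count of 3 is an assumption that cm01, cm02 and cm03 each run a NodeManager.

```shell
# Cluster total = number of NodeManagers x per-node setting.
# $1 = node count, $2 = per-node value
cluster_total() {
  echo $(( $1 * $2 ))
}

NODES=3   # assumption: one NodeManager on each of cm01, cm02, cm03

echo "defaults: $(cluster_total "$NODES" 8192) MB, $(cluster_total "$NODES" 8) vcores"
echo "tuned:    $(cluster_total "$NODES" 3072) MB, $(cluster_total "$NODES" 2) vcores"
# prints:
# defaults: 24576 MB, 24 vcores
# tuned:    9216 MB, 6 vcores
```

After the restart, the UI should therefore report roughly 9 GB and 6 vcores for the whole cluster with the values configured above.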

Add the following to mapred-site.xml:

<property>

<name>mapreduce.map.memory.mb</name>

<value>1024</value>

</property>

<property>

<name>mapreduce.map.cpu.vcores</name>

<value>1</value>

</property>

<property>

<name>mapreduce.reduce.memory.mb</name>

<value>2048</value>

</property>

<property>

<name>mapreduce.reduce.cpu.vcores</name>

<value>1</value>

</property>

Stop all NodeManagers and ResourceManagers, update the configuration, and restart:

yarn-daemon.sh stop resourcemanager

yarn-daemon.sh stop nodemanager

On the active RM:

start-yarn.sh

On the standby RM:

yarn-daemon.sh start resourcemanager

yarn-daemon.sh start nodemanager
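After the restart, it helps to confirm which ResourceManager is active before looking at the UI. A minimal sketch using the `yarn rmadmin -getServiceState` command follows; the IDs rm1 and rm2 come from yarn.resourcemanager.ha.rm-ids above, and `check_rm_states` is a hypothetical wrapper name.

```shell
# Print the HA state reported for each ResourceManager ID.
check_rm_states() {
  for id in rm1 rm2; do
    echo "$id: $(yarn rmadmin -getServiceState "$id" 2>&1)"
  done
}

# Only query the cluster when the yarn CLI is on PATH.
if command -v yarn >/dev/null 2>&1; then
  check_rm_states
fi
```

One ID should report active and the other standby. The NameNodes can be checked the same way with `hdfs haadmin -getServiceState nn1` and `nn2`.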

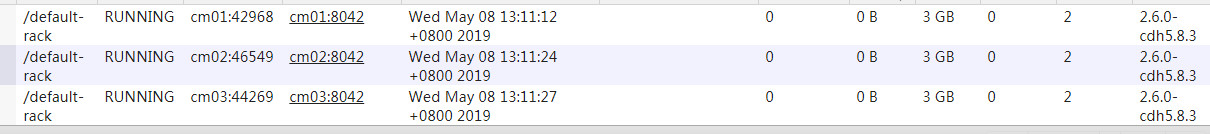

UI查看资源情况:

References:

Official CDH Hadoop documentation:

http://archive.cloudera.com/cdh5/cdh/5/hadoop-2.6.0-cdh5.8.3/

A CSDN blog post that sets up ZooKeeper, highly available Hadoop, and HBase in detail:

https://blog.csdn.net/qq_40784783/article/details/79115526

Articles on YARN resource configuration:

https://blog.csdn.net/suifeng3051/article/details/48135521#commentsedit

https://blog.csdn.net/dxl342/article/details/52840455
