I. Printing a Hadoop file system file to standard output via a URLStreamHandler

The program reads the contents of hdfs://localhost/user/hadoop/quangle.txt and writes them to standard output.
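The program relies on a JDK mechanism: java.net.URL only understands a scheme such as hdfs once a URLStreamHandlerFactory that knows the scheme has been registered. The following is a minimal sketch of that mechanism using only the JDK. The `demo` scheme and the class names `DemoUrlHandler` and `DemoFactory` are made up for illustration, and this is not how Hadoop's FsUrlStreamHandlerFactory is implemented internally.

```java
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.net.URL;
import java.net.URLConnection;
import java.net.URLStreamHandler;
import java.net.URLStreamHandlerFactory;
import java.nio.charset.StandardCharsets;

// Hypothetical demo class: registers a handler for a made-up "demo" scheme,
// much as a Hadoop program registers one for "hdfs".
class DemoUrlHandler {

    // Factory that knows only the "demo" scheme. Returning null lets the JVM
    // fall back to its built-in handlers (http, file, ...).
    static class DemoFactory implements URLStreamHandlerFactory {
        @Override
        public URLStreamHandler createURLStreamHandler(String protocol) {
            if (!"demo".equals(protocol)) {
                return null;
            }
            return new URLStreamHandler() {
                @Override
                protected URLConnection openConnection(URL target) {
                    return new URLConnection(target) {
                        @Override
                        public void connect() {
                            // Nothing to connect to: the data is generated in memory.
                        }

                        @Override
                        public InputStream getInputStream() {
                            String body = "contents of " + target.getPath();
                            return new ByteArrayInputStream(body.getBytes(StandardCharsets.UTF_8));
                        }
                    };
                }
            };
        }
    }

    static {
        // Allowed at most once per JVM; a second call throws java.lang.Error.
        URL.setURLStreamHandlerFactory(new DemoFactory());
    }

    // Opens the URL through the registered handler and returns its content as text.
    static String read(String spec) throws IOException {
        try (InputStream in = new URL(spec).openStream()) {
            return new String(in.readAllBytes(), StandardCharsets.UTF_8); // Java 9+
        }
    }

    public static void main(String[] args) throws IOException {
        // prints: contents of /user/hadoop/quangle.txt
        System.out.println(read("demo://localhost/user/hadoop/quangle.txt"));
    }
}
```

Because the factory can be set only once per JVM, it is registered in a static initializer. The same restriction means this approach may not work if another part of the application has already installed its own factory.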

1. Source code:

    import java.io.InputStream;
    import java.net.URL;

    import org.apache.hadoop.fs.FsUrlStreamHandlerFactory;
    import org.apache.hadoop.io.IOUtils;

    public class URLCat {

        static {
            // Teach java.net.URL the hdfs:// scheme (can be set only once per JVM).
            URL.setURLStreamHandlerFactory(new FsUrlStreamHandlerFactory());
        }

        public static void main(String[] args) throws Exception {
            InputStream in = null;
            try {
                // args[0] is the URL of the file to print.
                in = new URL(args[0]).openStream();
                // Copy with a 4096-byte buffer; false leaves System.out open afterwards.
                IOUtils.copyBytes(in, System.out, 4096, false);
            } catch (Exception e) {
                e.printStackTrace();
            } finally {
                IOUtils.closeStream(in); // close the input stream
            }
        }
    }

2. Compile and run

    javac URLCat.java                                          # compile the .java source
    jar -cvf URLCat.jar ./URLCat*.class                        # package the classes into a jar
    hadoop URLCat hdfs://localhost/user/hadoop/quangle.txt     # run the program

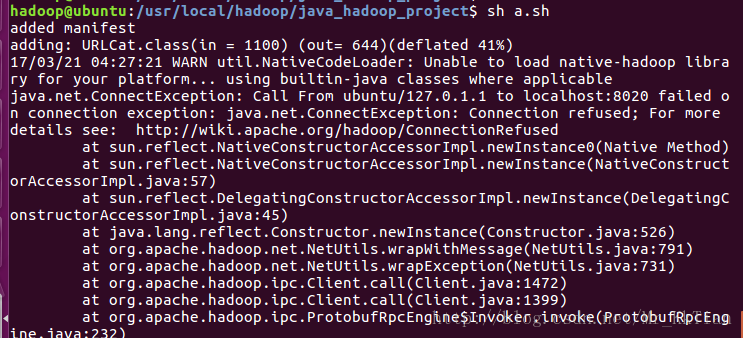

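Running these steps produced the following error (the jar is built successfully; the failure happens when the program runs):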

added manifest

adding: URLCat.class(in = 1100) (out= 644)(deflated 41%)

17/03/21 04:27:21 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

java.net.ConnectException: Call From ubuntu/127.0.1.1 to localhost:8020 failed on connection exception: java.net.ConnectException: Connection refused; For more details see: http://wiki.apache.org/hadoop/ConnectionRefused

Solution:

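The URL passed to the program does not name a port, so, as the error message shows, the client tried localhost:8020, while the NameNode was configured to listen on port 9000. In etc/core-site.xml, change the value of fs.default.name (the older name of fs.defaultFS) from hdfs://localhost:9000 to hdfs://localhost:8020. Only the port number changes, from 9000 to 8020. Restart the cluster and the program runs.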
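The port mismatch can be checked with plain JDK code. The sketch below is not part of Hadoop: `HdfsPortCheck` and `ASSUMED_DEFAULT_PORT` are hypothetical names, and the value 8020 is simply the port taken from the error message above. It shows that a URL without an explicit port reports -1, which is the case where a client has to fall back to a default.

```java
import java.net.URI;

// Hypothetical helper, not part of Hadoop: reports which port an hdfs:// URI asks for.
class HdfsPortCheck {

    // Assumption: 8020 is the port the client fell back to in the error above.
    static final int ASSUMED_DEFAULT_PORT = 8020;

    // Returns the explicit port of the URI, or the assumed default when none is given.
    static int effectivePort(String spec) {
        int port = URI.create(spec).getPort(); // -1 when the URI names no port
        return port == -1 ? ASSUMED_DEFAULT_PORT : port;
    }

    public static void main(String[] args) {
        // prints 8020: no port in the URL, so the default applies
        System.out.println(effectivePort("hdfs://localhost/user/hadoop/quangle.txt"));
        // prints 9000: the port is taken from the URL itself
        System.out.println(effectivePort("hdfs://localhost:9000/user/hadoop/quangle.txt"));
    }
}
```

This suggests a possible alternative to editing core-site.xml: keep the NameNode on port 9000 and pass hdfs://localhost:9000/user/hadoop/quangle.txt to URLCat instead. That variant was not tried in this post.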
