不过问题也随之而已,其一是给的集群其实就是一远程登录账户,而且没有root权限;其二集群共10个节点,但是共享存储的。本人这方面知识少,找了一个星期资料,才知道是NFS的。

后来找到了一个帖子:《Hadoop - 將Hadoop建構在NFS以及NIF》,受到启发.

不过给的权限确实小,并无root权限,即能操作的目录仅在/home/my_user_name/目录下,幸好后来本人得知NFS下/tmp目录也是可以用的. 所以在这种艰苦的环境下,只能将用/tmp了.

其缺点就是:服务器的重启,/tmp会清空,从而Hadoop集群里的数据将丢失,好在本人只是做点research,不碍事!

下面讲下配置吧!

- Hadoop version: hadoop-1.0.1

- Java version: jdk1.6.0_12

0. 设置Java路径, 因为是NFS,而且本人权限只是user, 所以只能修改$HOME/.bash_profile

- export JAVA_HOME=/usr/java/jdk1.6.0_12

- export JRE_HOME=/usr/java/jdk1.6.0_12/jre

- export CLASSPATH=.:$JAVA_HOME/lib:$JAVA_HOME/jre/lib

- export PATH=$JAVA_HOME/bin:$JAVA_HOME/jre/bin:$PATH

然后使其生效:

- source $HOME/.bash_profile

1. 编辑 conf/hadoop-env.sh, 添加JAVA_HOME

- export JAVA_HOME=/usr/java/jdk1.6.0_12/

- export HADOOP_LOG_DIR=/tmp/hadoop_username_log_dir

2. 将 src/core/core-default.xml, src/hdfs/hdfs-default.xml, src/mapred/mapred-default.xml 复制到 conf下,并重命名为 core-site.xml, hdfs-site.xml, mapred-site.xml, 并编辑这三文件.

- <!--core-site.xml-->

- <property>

- <name>fs.default.name</name>

- <value>hdfs://head:9000</value>

- <description>The name of the default file system. A URI whose

- scheme and authority determine the FileSystem implementation. The

- uri's scheme determines the config property (fs.SCHEME.impl) naming

- the FileSystem implementation class. The uri's authority is used to

- determine the host, port, etc. for a filesystem.</description>

- </property>

- <property>

- <name>hadoop.tmp.dir</name>

- <value>/tmp/hadoop-1.0.1_${user.name}</value>

- <description>A base for other temporary directories.</description>

- </property>

- <!--hdfs-site.xml-->

- <property>

- <name>dfs.replication</name>

- <value>3</value>

- <description>Default block replication.

- The actual number of replications can be specified when the file is created.

- The default is used if replication is not specified in create time.

- </description>

- </property>

- <!--mapred-site.xml-->

- <property>

- <name>mapred.job.tracker</name>

- <value>head:9001</value>

- <description>The host and port that the MapReduce job tracker runs

- at. If "local", then jobs are run in-process as a single map

- and reduce task.

- </description>

- </property>

3. 这个是最重要的,我们需要再次编辑 conf/hadoop-env.sh,放在第一步编辑是完全可以的,这里放在最后一步,是为了强调!!就是修改HADOOP_LOG_DIR

- export HADOOP_LOG_DIR=/tmp/hadoop-1.0.1_log_dir

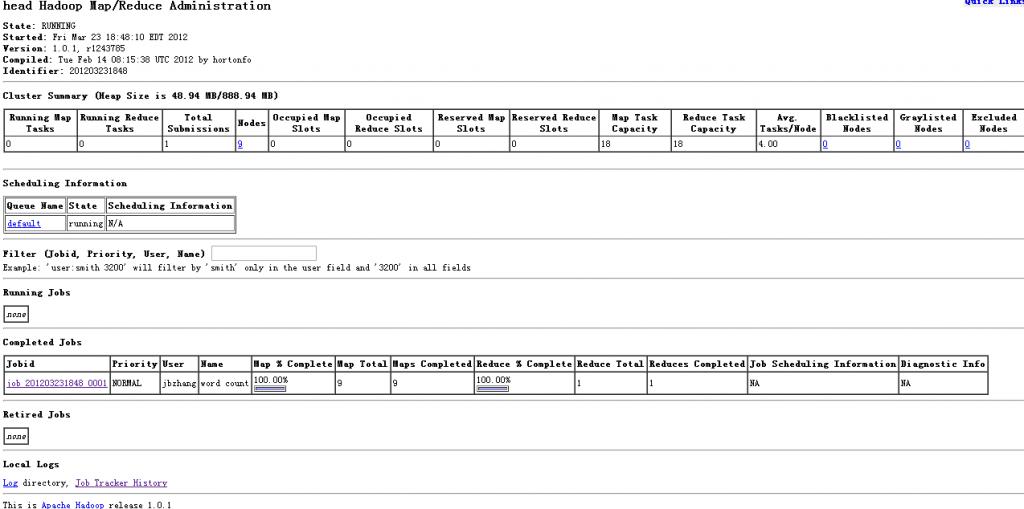

4. 测试正常运行

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?