Deploying HBase 1.1.x on Hadoop 2.6.0 (a distributed cluster on 3 virtual machines)

Download

Configure environment variables in /etc/profile

#hbase

export HBASE_HOME=/usr/local/soft/hbase-1.1.5

export PATH=$PATH:$HBASE_HOME/bin
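If the setup is re-run, /etc/profile can end up with duplicate export lines. A minimal sketch of adding the two lines above only once; the helper names append_once and add_hbase_env are hypothetical, not part of HBase:

```shell
# Append a line to a file only if that exact line is not already there,
# so re-running the setup does not duplicate the exports.
append_once() {
  local line="$1" file="$2"
  grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Add the two HBase exports from this post to a profile file.
# The target file is a parameter so the sketch can be tried on a scratch copy first.
add_hbase_env() {
  local profile="$1" hbase_home="$2"
  append_once "export HBASE_HOME=${hbase_home}" "$profile"
  append_once 'export PATH=$PATH:$HBASE_HOME/bin' "$profile"
}

# Example (as root):
#   add_hbase_env /etc/profile /usr/local/soft/hbase-1.1.5
#   source /etc/profile && echo "$HBASE_HOME"
```

After sourcing the profile, echo "$HBASE_HOME" should print the install path.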

Configure $HBASE_HOME/conf/hbase-env.sh

export JAVA_HOME=/usr/lib/jvm/java-7-openjdk-amd64

export HBASE_MANAGES_ZK=true

Configure $HBASE_HOME/conf/hbase-site.xml

<configuration>

<property>

<name>hbase.rootdir</name>

<value>hdfs://192.168.0.11:9000/hbase</value>

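<description>hbase.rootdir is the directory shared by the RegionServers, where HBase persists its data. By default it is written under /tmp, so if this setting is left unchanged the data can be lost after a restart. It is normally set to an HDFS directory: for example, if the NameNode runs on host namenode.example.org on port 9000, set it to hdfs://namenode.example.org:9000/hbase

</description>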

</property>

<property>

<name>hbase.cluster.distributed</name>

<value>true</value>

<description>This property sets the HBase deployment mode: false means standalone or pseudo-distributed mode, true means fully distributed mode.

</description>

</property>

</configuration>
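One thing the file above does not set is hbase.zookeeper.quorum, which defaults to localhost. With HBASE_MANAGES_ZK=true, ZooKeeper then runs only on the master, so region servers on the other two machines may not find it. A hedged sketch that generates the same file plus that one extra property; the function name write_hbase_site is hypothetical, and the quorum property is my addition, not part of the original configuration:

```shell
# Write a minimal hbase-site.xml. Arguments:
#   $1 output file, $2 HDFS root dir URI, $3 comma-separated ZooKeeper hosts
write_hbase_site() {
  local out="$1" rootdir="$2" quorum="$3"
  cat > "$out" <<EOF
<configuration>
  <property>
    <name>hbase.rootdir</name>
    <value>${rootdir}</value>
  </property>
  <property>
    <name>hbase.cluster.distributed</name>
    <value>true</value>
  </property>
  <!-- Assumption: not in the original post. Tells clients and region servers where ZooKeeper runs. -->
  <property>
    <name>hbase.zookeeper.quorum</name>
    <value>${quorum}</value>
  </property>
</configuration>
EOF
}

# Example:
#   write_hbase_site "$HBASE_HOME/conf/hbase-site.xml" hdfs://192.168.0.11:9000/hbase 192.168.0.11
```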

Configure $HBASE_HOME/conf/regionservers

Delete the only existing entry, localhost, and list the three nodes instead:

192.168.0.11

192.168.0.12

192.168.0.13
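The same file can be produced from the command line rather than edited by hand. A small sketch, assuming the three addresses above; write_regionservers is a hypothetical helper name:

```shell
# Write one host per line to a regionservers file, replacing its old content
# (including the default "localhost" entry).
write_regionservers() {
  local out="$1"; shift
  printf '%s\n' "$@" > "$out"
}

# Example:
#   write_regionservers "$HBASE_HOME/conf/regionservers" 192.168.0.11 192.168.0.12 192.168.0.13
```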

Copy Hadoop's hdfs-site.xml and core-site.xml into $HBASE_HOME/conf

cp /usr/local/soft/hadoop-2.6.0/etc/hadoop/hdfs-site.xml /usr/local/soft/hbase-1.1.5/conf

cp /usr/local/soft/hadoop-2.6.0/etc/hadoop/core-site.xml /usr/local/soft/hbase-1.1.5/conf

Copy HBase to the other nodes

# scp /etc/profile root@192.168.0.12:/etc/profile (in my case this form did not overwrite the remote file)

scp /etc/profile root@192.168.0.12:/etc/

# scp /etc/profile root@192.168.0.13:/etc/profile (in my case this form did not overwrite the remote file)

scp /etc/profile root@192.168.0.13:/etc/

scp -r /usr/local/soft/hbase-1.1.5 root@192.168.0.12:/usr/local/soft/

scp -r /usr/local/soft/hbase-1.1.5 root@192.168.0.13:/usr/local/soft/
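With more than two worker nodes, typing each scp by hand gets tedious. A sketch that only prints the commands for a list of hosts, so they can be reviewed before running; print_copy_cmds is a hypothetical helper, and it assumes the same directory layout and root login on every node, as above:

```shell
# Print (do not run) the scp commands that copy /etc/profile and the HBase
# directory to each host given as an argument.
print_copy_cmds() {
  local hbase_dir="$1"; shift
  local host
  for host in "$@"; do
    echo "scp /etc/profile root@${host}:/etc/"
    echo "scp -r ${hbase_dir} root@${host}:$(dirname "$hbase_dir")/"
  done
}

# Example: review the output, then pipe it to a shell to execute it.
#   print_copy_cmds /usr/local/soft/hbase-1.1.5 192.168.0.12 192.168.0.13
#   print_copy_cmds /usr/local/soft/hbase-1.1.5 192.168.0.12 192.168.0.13 | sh
```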

Fixing the startup warnings under JDK 8

With JDK 8 or later, startup prints the following warnings:

Java HotSpot(TM) 64-Bit Server VM warning: ignoring option PermSize=128m; support was removed in 8.0

Java HotSpot(TM) 64-Bit Server VM warning: ignoring option MaxPermSize=128m; support was removed in 8.0

Fix: on every node, edit $HBASE_HOME/conf/hbase-env.sh (for example with vi) and comment out the following lines by putting a # at the start of each:

# Configure PermSize. Only needed in JDK7. You can safely remove it for JDK8+

export HBASE_MASTER_OPTS="$HBASE_MASTER_OPTS -XX:PermSize=128m -XX:MaxPermSize=128m -XX:ReservedCodeCacheSize=256m"

export HBASE_REGIONSERVER_OPTS="$HBASE_REGIONSERVER_OPTS -XX:PermSize=128m -XX:MaxPermSize=128m -XX:ReservedCodeCacheSize=256m"
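Instead of editing by hand on every node, the two export lines can be commented out with sed. A minimal sketch, assuming the lines look exactly like the ones above; comment_out_permsize is a hypothetical helper name:

```shell
# Prefix "# " to the HBASE_*_OPTS export lines that still pass PermSize flags.
# Lines that are already commented out do not match, so re-running is harmless.
comment_out_permsize() {
  local file="$1" tmp
  tmp="$(mktemp)" || return 1
  if sed '/^export HBASE_.*_OPTS=.*PermSize/s/^/# /' "$file" > "$tmp"; then
    # cat into the original file keeps its owner and permissions.
    cat "$tmp" > "$file"
  fi
  rm -f "$tmp"
}

# Example (run on every node):
#   comment_out_permsize "$HBASE_HOME/conf/hbase-env.sh"
```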

Start

# Master node

[root@master conf]# start-hbase.sh

localhost: starting zookeeper, logging to /usr/local/soft/hbase-1.1.5/bin/../logs/hbase-root-zookeeper-master.out

starting master, logging to /usr/local/soft/hbase-1.1.5/bin/../logs/hbase-root-master-master.out

192.168.0.13: starting regionserver, logging to /usr/local/soft/hbase-1.1.5/bin/../logs/hbase-root-regionserver-node2.out

192.168.0.12: starting regionserver, logging to /usr/local/soft/hbase-1.1.5/bin/../logs/hbase-root-regionserver-node1.out

192.168.0.11: starting regionserver, logging to /usr/local/soft/hbase-1.1.5/bin/../logs/hbase-root-regionserver-master.out

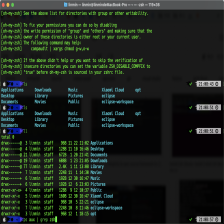

[root@master conf]# jps

74322 HQuorumPeer

74388 HMaster

74518 HRegionServer

2903 ResourceManager

60745 Worker

2586 NameNode

2762 SecondaryNameNode

74797 Jps

61117 SparkSubmit

60653 Master

# Each worker node

[root@node1 conf]# jps

79111 DataNode

111161 Jps

79209 NodeManager

111116 HRegionServer

104094 Worker
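Because Spark's Master and Worker also show up in this jps output, a plain substring search for "Master" would match both Master and HMaster. A small sketch that checks the process name exactly; has_daemon is a hypothetical helper that reads jps output on stdin:

```shell
# Succeeds if the jps output read from stdin lists a process whose name
# (second column) is exactly the given one.
has_daemon() {
  awk -v name="$1" '$2 == name { found = 1 } END { exit found ? 0 : 1 }'
}

# Example on a worker node:
#   jps | has_daemon HRegionServer && echo "region server is up"
```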

Verify

[root@master conf]# hbase shell

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/usr/local/soft/hbase-1.1.5/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/usr/local/soft/hadoop-2.6.0/share/hadoop/common/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]

2019-12-05 01:53:56,821 WARN [main] util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

HBase Shell; enter 'help<RETURN>' for list of supported commands.

Type "exit<RETURN>" to leave the HBase Shell

Version 1.1.5, r239b80456118175b340b2e562a5568b5c744252e, Sun May 8 20:29:26 PDT 2016

hbase(main):001:0>

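You can exit with Ctrl+C, or by typing exit.

Stop

If the cluster has many nodes, you can also start backup HMasters on some of the other nodes.

Start a backup HMaster

bin/hbase-daemon.sh start master

Note: to start a single regionserver on its own, use a similar command

bin/hbase-daemon.sh start regionserver

If backup HMasters were started

To stop HBase, first stop the HMaster on the other nodes

On each of those nodes: bin/hbase-daemon.sh stop master

Then stop everything else

bin/stop-hbase.sh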

# Master node

[root@master conf]# stop-hbase.sh

stopping hbase....................

localhost: stopping zookeeper.

[root@master conf]# jps

2903 ResourceManager

60745 Worker

75288 Jps

2586 NameNode

2762 SecondaryNameNode

61117 SparkSubmit

60653 Master

# Other worker nodes

[root@node1 conf]# jps

79111 DataNode

79209 NodeManager

111290 Jps

104094 Worker
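The stop order described above (backup HMasters first, then everything else) can be written down as a dry-run plan. A sketch that only prints the commands; print_stop_plan is a hypothetical helper, and running the remote step over ssh as root with the same HBASE_HOME on every host is my assumption:

```shell
# Print (do not run) the stop sequence: backup masters first, then the rest.
# Assumptions: passwordless ssh as root and the same HBASE_HOME on every host.
print_stop_plan() {
  local hbase_home="$1"; shift
  local host
  for host in "$@"; do
    echo "ssh root@${host} ${hbase_home}/bin/hbase-daemon.sh stop master"
  done
  echo "${hbase_home}/bin/stop-hbase.sh"
}

# Example, with a backup HMaster on 192.168.0.12:
#   print_stop_plan /usr/local/soft/hbase-1.1.5 192.168.0.12
```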

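Reference notes (can be skipped)

Configure JAVA_HOME, HBASE_CLASSPATH, and HBASE_MANAGES_ZK.

HBASE_CLASSPATH is set to the conf directory under the local Hadoop installation (i.e. /usr/local/hadoop/conf). I have not configured it here for now, because Hadoop 2.6.0 has no such directory (its configuration files live under etc/hadoop), and other people's 2.6.0 setups did not configure it either.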

export JAVA_HOME=/usr/lib/jvm/java-7-openjdk-amd64

export HBASE_CLASSPATH=/usr/local/hadoop/conf

export HBASE_MANAGES_ZK=true

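The tutorial from Professor Ziyu's lab covers only the standalone setup and never configures the worker nodes.

So I also consulted other tutorials: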

http://c.biancheng.net/view/6515.html

https://www.cnblogs.com/leffss/p/9184171.html
