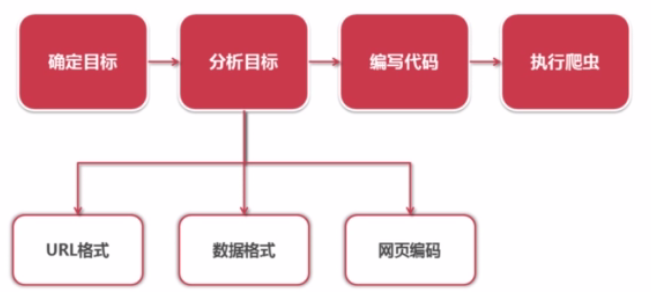

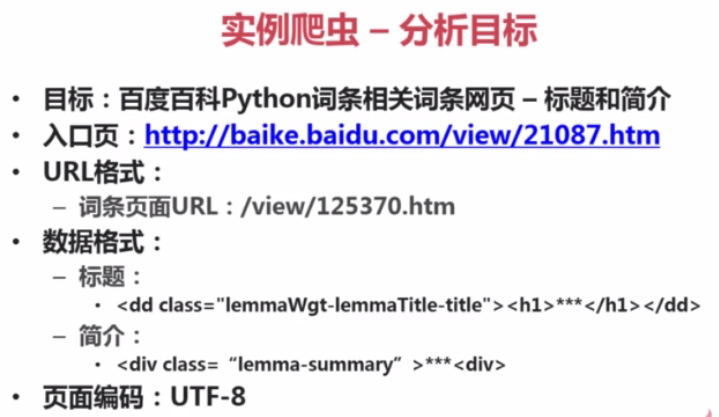

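I. Analysis target

If your target page differs from the one shown above, adjust the code yourself: the site may change its data format and related details at any time.

II. Debugging the program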

spider_main.py

#!/usr/bin/python
# -*- coding: UTF-8 -*-

import url_manager
import html_downloader
import html_outputer
import html_parser


class SpiderMain(object):
    # Create the four collaborators. UrlManager, HtmlDownloader, HtmlParser
    # and HtmlOutputer are defined in the modules listed further down.
    def __init__(self):
        self.urls = url_manager.UrlManager()                # URL manager
        self.downloader = html_downloader.HtmlDownloader()  # downloader
        self.parser = html_parser.HtmlParser()              # parser
        self.outputer = html_outputer.HtmlOutputer()        # outputter

    def craw(self, root_url):
        count = 1
        # Seed the URL manager with root_url
        self.urls.add_new_url(root_url)
        # Keep going while the URL manager still holds uncrawled URLs
        while self.urls.has_new_url():
            try:
                new_url = self.urls.get_new_url()
                print 'craw %d : %s' % (count, new_url)
                # Download the page behind this URL
                html_cont = self.downloader.download(new_url)
                # Parse the downloaded page
                new_urls, new_data = self.parser.parse(new_url, html_cont)
                # Queue the extracted URLs and hand the data to the outputter
                self.urls.add_new_urls(new_urls)
                self.outputer.collect_data(new_data)
                # Stop after 1000 successfully crawled pages
                if count == 1000:
                    break
                count = count + 1
            except Exception as e:
                # Report the reason instead of silently swallowing every error
                print 'craw failed: %s' % e
        self.outputer.output_html()


if __name__ == "__main__":
    # Replace this URL with the entry page you actually want to crawl
    root_url = "http://baike.baidu.com/link?url=92QjmNSCiMatDE-Sb65QLtad37q_bmvugik4DziB3VSQ7VeceqRseYIuCyy78_eg_wYbvbe9d7P7ZvgbW1swUaBLXiChLXEplFzOpoN3EEkTlr5UALue9WdbjyAqJEPS"
    obj_spider = SpiderMain()
    obj_spider.craw(root_url)  # start the crawler
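The control flow of `craw` can be tried out without touching the network. The following is a minimal sketch only, written for Python 3 rather than the Python 2 used in this post; `crawl`, `fake_fetch`, `fake_parse` and `FAKE_SITE` are hypothetical names invented for the illustration, and the page cap here counts attempted pages, which differs slightly from the success counter in `craw`.

```python
def crawl(root_url, fetch, parse, max_pages=1000):
    """Visit pages reachable from root_url, at most max_pages attempts."""
    pending = {root_url}   # URLs waiting to be crawled
    visited = set()        # URLs already attempted
    collected = []         # data returned by parse()
    while pending and len(visited) < max_pages:
        url = pending.pop()
        visited.add(url)
        try:
            html = fetch(url)
            new_urls, data = parse(url, html)
        except Exception as exc:
            # One bad page should not stop the whole crawl
            print('craw failed: %s (%r)' % (url, exc))
            continue
        pending.update(u for u in new_urls if u not in visited)
        collected.append(data)
    return collected


# Hypothetical in-memory "site": page name -> outgoing links
FAKE_SITE = {'a': ['b', 'c'], 'b': ['a'], 'c': ['missing']}


def fake_fetch(url):
    return FAKE_SITE[url]  # a KeyError stands in for a failed download


def fake_parse(url, links):
    return set(links), {'url': url}


results = crawl('a', fake_fetch, fake_parse)
limited = crawl('a', fake_fetch, fake_parse, max_pages=2)
```

Passing the downloader and parser in as functions is a choice made for this sketch so that the loop can be exercised with stand-ins; the class in this post wires in the real objects instead.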

-------------------------------------------------------------------

url_manager.py (URL manager)

#!/usr/bin/env python2
# -*- coding: UTF-8 -*-


class UrlManager(object):
    def __init__(self):
        self.new_urls = set()
        self.old_urls = set()

    def add_new_url(self, url):
        if url is None:
            return
        if url not in self.new_urls and url not in self.old_urls:
            self.new_urls.add(url)

    def add_new_urls(self, urls):
        if urls is None or len(urls) == 0:
            return
        for url in urls:
            self.add_new_url(url)

    def has_new_url(self):
        return len(self.new_urls) != 0

    def get_new_url(self):
        new_url = self.new_urls.pop()
        self.old_urls.add(new_url)
        return new_url
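The two sets are what keep the crawler from visiting a page twice: a URL is queued only if it is in neither `new_urls` nor `old_urls`. Here is a small sketch of that behaviour, assuming Python 3 (the class body itself runs unchanged on Python 2). The `drain` helper and the `example.com` URLs are made up for the demonstration.

```python
class UrlManager(object):
    """Compact copy of the class above so this sketch runs on its own."""

    def __init__(self):
        self.new_urls = set()  # URLs waiting to be crawled
        self.old_urls = set()  # URLs already handed out

    def add_new_url(self, url):
        if url is None:
            return
        if url not in self.new_urls and url not in self.old_urls:
            self.new_urls.add(url)

    def has_new_url(self):
        return len(self.new_urls) != 0

    def get_new_url(self):
        new_url = self.new_urls.pop()
        self.old_urls.add(new_url)
        return new_url


def drain(manager):
    """Hypothetical helper: pop every pending URL and return them as a list."""
    popped = []
    while manager.has_new_url():
        popped.append(manager.get_new_url())
    return popped


manager = UrlManager()
manager.add_new_url("http://example.com/view/1.htm")
manager.add_new_url("http://example.com/view/1.htm")  # duplicate, ignored
manager.add_new_url("http://example.com/view/2.htm")
first_pass = drain(manager)

# A URL that was already handed out is not queued again
manager.add_new_url("http://example.com/view/1.htm")
second_pass = drain(manager)
```

Because `set.pop()` removes an arbitrary element, the crawl order is not guaranteed; only the "each URL at most once" property is.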

-----------------------------------------------------------------------

html_downloader.py (HTML downloader)

#!/usr/bin/python
# -*- coding: UTF-8 -*-

import urllib2


class HtmlDownloader(object):
    def download(self, url):
        if url is None:
            return None
        response = urllib2.urlopen(url)
        # Anything other than HTTP 200 is treated as a failed download
        if response.getcode() != 200:
            return None
        return response.read()

-----------------------------------------------------------------

html_parser.py (HTML parser)

#!/usr/bin/python
# -*- coding: UTF-8 -*-

import urlparse
import re
from bs4 import BeautifulSoup


class HtmlParser(object):
    def parse(self, page_url, html_cont):
        # Return an empty pair so the caller can still unpack two values
        if page_url is None or html_cont is None:
            return set(), None
        soup = BeautifulSoup(html_cont, 'html.parser', from_encoding='utf-8')
        new_urls = self._get_new_urls(page_url, soup)
        new_data = self._get_new_data(page_url, soup)
        return new_urls, new_data

    def _get_new_urls(self, page_url, soup):
        new_urls = set()
        # Entry pages look like /view/<digits>.htm; a raw string keeps \d and \. intact
        links = soup.find_all('a', href=re.compile(r"/view/\d+\.htm"))
        for link in links:
            new_url = link['href']
            # Turn the relative href into an absolute URL
            new_full_url = urlparse.urljoin(page_url, new_url)
            new_urls.add(new_full_url)
        return new_urls

    def _get_new_data(self, page_url, soup):
        res_data = {}
        # url
        res_data['url'] = page_url
        # <dd class="lemmaWgt-lemmaTitle-title"><h1>Python</h1>
        title_node = soup.find('dd', class_="lemmaWgt-lemmaTitle-title").find("h1")
        res_data['title'] = title_node.get_text()
        # <div class="lemma-summary">...</div>
        summary_node = soup.find('div', class_="lemma-summary")
        res_data['summary'] = summary_node.get_text()
        return res_data
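The link-collection step in `_get_new_urls` comes down to two operations: filter hrefs with the `/view/<digits>.htm` pattern, then resolve each one against the current page with `urljoin`. Below is a rough sketch of just that step, assuming Python 3, where `urljoin` lives in `urllib.parse`. To stay free of third-party packages it scans the raw HTML with a regex instead of using BeautifulSoup, so it is a simplification and not the parser above. `extract_links` and `sample_html` are hypothetical.

```python
import re
from urllib.parse import urljoin  # Python 3 location of urlparse.urljoin

# Simplification: only matches double-quoted hrefs that are exactly /view/<digits>.htm
LINK_PATTERN = re.compile(r'href="(/view/\d+\.htm)"')


def extract_links(page_url, html_text):
    """Return absolute URLs for every /view/<digits>.htm link in html_text."""
    return {urljoin(page_url, href) for href in LINK_PATTERN.findall(html_text)}


sample_html = (
    '<a href="/view/123.htm">Python</a>'
    '<a href="/view/123.htm">Python again</a>'
    '<a href="/item/other">not an entry page</a>'
    '<a href="/view/456.htm">Guido</a>'
)
links = extract_links("http://baike.baidu.com/view/21087.htm", sample_html)
```

Collecting into a set removes the repeated `/view/123.htm` link before it ever reaches the URL manager.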

--------------------------------------------------------

html_outputer.py (HTML outputter)

#!/usr/bin/python
# -*- coding: UTF-8 -*-


class HtmlOutputer(object):
    def __init__(self):
        self.datas = []

    def collect_data(self, data):
        if data is None:
            return
        self.datas.append(data)

    def output_html(self):
        with open('output.html', 'w') as fout:
            fout.write("<html>")
            fout.write("<body>")
            fout.write("<table>")
            for data in self.datas:
                fout.write("<tr>")
                fout.write("<td>%s</td>" % data['url'])
                fout.write("<td>%s</td>" % data['title'].encode('utf-8'))
                fout.write("<td>%s</td>" % data['summary'].encode('utf-8'))
                fout.write("</tr>")
            fout.write("</table>")
            fout.write("</body>")
            fout.write("</html>")
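The outputter writes the scraped text straight into the table cells. If a title or summary happens to contain `<` or `&`, the resulting page can break, and without a charset declaration some browsers may guess the wrong encoding for the UTF-8 text. One possible variant is sketched below, assuming Python 3 and its standard `html.escape`; `render_table` is a hypothetical function, not part of the code above.

```python
from html import escape


def render_table(rows):
    """Build the same table as output_html, with cells escaped and a charset tag."""
    parts = ['<html>', '<head><meta charset="utf-8"></head>', '<body>', '<table>']
    for row in rows:
        parts.append('<tr>')
        for key in ('url', 'title', 'summary'):
            # escape() replaces &, <, > and quotes with HTML entities
            parts.append('<td>%s</td>' % escape(row[key]))
        parts.append('</tr>')
    parts.extend(['</table>', '</body>', '</html>'])
    return ''.join(parts)


page = render_table([
    {'url': 'http://example.com/view/1.htm', 'title': 'A < B', 'summary': 'x & y'},
])
```

Returning a string rather than writing the file directly keeps the sketch easy to check; the caller would write `page` to `output.html` with `encoding='utf-8'`.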
