1. Overview

- Use Flume to collect log files into HDFS

- jdk1.7.0_67

- apache-flume-1.6.0-bin

2. Installation

- Download and extract the archive
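The download-and-extract step might look like the sketch below. The archive URL and the /opt install directory are assumptions, not part of the original setup; adjust both to your own mirror and layout.

```shell
# Assumed values: adjust to your environment.
FLUME_VERSION="1.6.0"
FLUME_TARBALL="apache-flume-${FLUME_VERSION}-bin.tar.gz"
# Assumption: the Apache archive serves old releases under this path.
FLUME_URL="https://archive.apache.org/dist/flume/${FLUME_VERSION}/${FLUME_TARBALL}"
INSTALL_DIR="/opt"   # hypothetical install location, may require sudo to write

download_and_extract_flume() {
  # Fetch the tarball into the current directory, then unpack it into INSTALL_DIR.
  curl -fLO "$FLUME_URL" &&
  tar -xzf "$FLUME_TARBALL" -C "$INSTALL_DIR"
}
```

Calling `download_and_extract_flume` should leave an apache-flume-1.6.0-bin directory under `INSTALL_DIR`; the remaining steps are run from inside it.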

- Create flume.conf in the conf directory (the file name can be anything you like)

a1.sources = r1

a1.sinks = k1

a1.channels = c1

# Describe/configure the source

a1.sources.r1.type = exec

a1.sources.r1.command = tail -F /data/logs/nginx/access.log

a1.sources.r1.channels = c1

# Describe the sink

a1.sinks.k1.type = hdfs

a1.sinks.k1.channel = c1

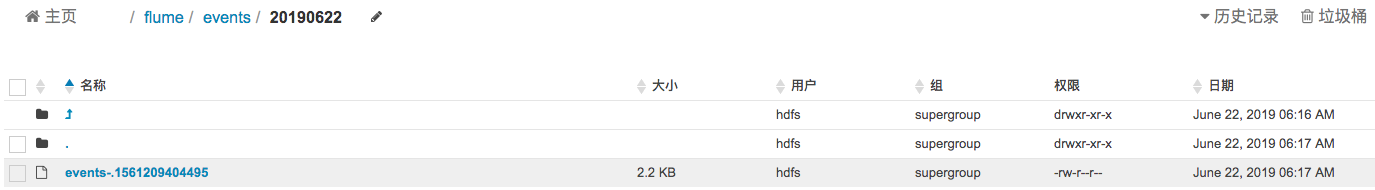

a1.sinks.k1.hdfs.path = hdfs://ip:8020/flume/events/%y%m%d

a1.sinks.k1.hdfs.fileType = DataStream

a1.sinks.k1.hdfs.writeFormat = Text

# File name prefix

a1.sinks.k1.hdfs.filePrefix = events-

# Seconds to wait before rolling to a new file; the default is 30. 0 disables time-based rolling

a1.sinks.k1.hdfs.rollInterval = 0

# Roll to a new file once the current one reaches 128 MB (134217728 bytes)

a1.sinks.k1.hdfs.rollSize = 134217728

# 0 disables rolling based on the number of events

a1.sinks.k1.hdfs.rollCount = 0

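# If nothing is written for 60 seconds, close the stream and drop the .tmp suffix. When new content arrives later, a new .tmp file is opened to receive it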

a1.sinks.k1.hdfs.idleTimeout=60

a1.sinks.k1.hdfs.useLocalTimeStamp = true

# Use a channel which buffers events in memory

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

a1.sources.r1.channels = c1

a1.sinks.k1.channel = c1

- Run it. My cluster is CM + CDH, and installing HDFS created the default hdfs user, so I start the agent as that user

sudo -u hdfs ./bin/flume-ng agent --conf conf --conf-file ./conf/flume.conf --name a1 -Dflume.root.logger=INFO,console &
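Once the agent is running, one way to check that events are landing is to list today's output directory. This is a sketch that assumes the `hdfs` CLI is on the PATH and that the /flume/events base path from the config above is unchanged; the helper names are made up for illustration. Because `useLocalTimeStamp = true`, the `%y%m%d` escape is resolved from the agent host's clock, which `date +%y%m%d` mirrors (two-digit year, month, day).

```shell
# Build the directory that hdfs.path resolves to for today's date.
flume_today_dir() {
  printf '/flume/events/%s\n' "$(date +%y%m%d)"
}

# List today's files as the hdfs user. A file still being written
# carries a .tmp suffix until it is rolled or closed by idleTimeout.
list_today_events() {
  sudo -u hdfs hdfs dfs -ls "$(flume_today_dir)"
}
```

Run `list_today_events` on the agent host after a few requests have hit nginx; with the settings above, a file named like events-*.tmp should lose its suffix about 60 seconds after writes stop.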
