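It has been almost a year since I first started following argo and then using it. For small algorithm workflows, argo is simple and efficient. This article introduces argo and takes a quick tour of the code behind it (writing a good argo manifest requires knowing the underlying data structures). If you are only looking for a usage tutorial, go straight to the documentation on GitHub and check the issues when you run into problems; that is the best approach.

Prerequisites

Proficiency with k8s and golang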

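Algorithm workflows

Before discussing algorithm workflows, let's look at workflows in general. Put simply, a workflow is the sequence of steps needed to get something done.

The task shown in the figure below is a typical workflow.

In general, a workflow is a directed acyclic graph (DAG).

An algorithm workflow is also a DAG in which each node is a step. The workflows I have worked with range from ones that finish in a single step to ones made up of many steps. Each step has its own inputs and outputs, and together the steps form a complete algorithm pipeline.

An algorithm workflow consists of one or more algorithm steps, but for it to run the way you expect, it needs a scheduling service that makes sure the steps execute in the right order.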
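To make "executing steps in the right order" concrete, here is a minimal Go sketch that derives one valid execution order from step dependencies using Kahn's topological sort. It is only an illustration of the DAG idea, not how argo or airflow schedule internally; the function name `topoOrder` and the step names are hypothetical.

```go
package main

import (
	"errors"
	"fmt"
	"sort"
)

// topoOrder returns one valid execution order for the steps in deps, where
// deps[step] lists the steps that must finish before step can start.
// It returns an error if the dependencies contain a cycle.
func topoOrder(deps map[string][]string) ([]string, error) {
	indegree := make(map[string]int)
	children := make(map[string][]string)
	for step, parents := range deps {
		if _, ok := indegree[step]; !ok {
			indegree[step] = 0
		}
		for _, p := range parents {
			if _, ok := indegree[p]; !ok {
				indegree[p] = 0
			}
			indegree[step]++
			children[p] = append(children[p], step)
		}
	}

	// Start with every step that has no unfinished dependency.
	var ready []string
	for step, n := range indegree {
		if n == 0 {
			ready = append(ready, step)
		}
	}
	sort.Strings(ready) // map iteration is random; sort for a stable result

	var order []string
	for len(ready) > 0 {
		step := ready[0]
		ready = ready[1:]
		order = append(order, step)

		var unlocked []string
		for _, c := range children[step] {
			indegree[c]--
			if indegree[c] == 0 {
				unlocked = append(unlocked, c)
			}
		}
		sort.Strings(unlocked)
		ready = append(ready, unlocked...)
	}

	// Steps left over can never start, which means the graph has a cycle.
	if len(order) != len(indegree) {
		return nil, errors.New("dependency cycle detected")
	}
	return order, nil
}

func main() {
	order, err := topoOrder(map[string][]string{
		"preprocess": {},
		"train":      {"preprocess"},
		"evaluate":   {"train"},
	})
	fmt.Println(order, err) // [preprocess train evaluate] <nil>
}
```

A cycle makes the function return an error, which is the "acyclic" part of DAG: a workflow with a cycle has no order in which it could run.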

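For scheduling algorithm workflows, the community currently has two notable open-source projects: airflow and argo.

airflow

Link: airflow

A workflow engine incubated at Apache. It can be deployed on bare metal or in a k8s cluster.

Pros

- Configuration as code (written in Python)

- Ships with a UI, which makes it easy to track runs

Cons

- The API exposes relatively little functionality, so integration is difficult

- Limited support for k8s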

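argo

A workflow engine written in golang and built on k8s CRDs. The community is very active, and users include Google, IBM, and Nvidia. Because argo is built on k8s, it is clearly a better fit than airflow for teams that already have a k8s stack.

The discussion below focuses on version 2.3.

argo through two features

These are the two features I use most often.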

DAG or Steps based declaration of workflows

github.com/argoproj/argo/examples/dag-diamond-steps.yaml

    # The following workflow executes a diamond workflow
    #
    #   A
    #  / \
    # B   C
    #  \ /
    #   D
    apiVersion: argoproj.io/v1alpha1
    kind: Workflow
    metadata:
      generateName: dag-diamond-
    spec:
      entrypoint: diamond
      templates:
      - name: diamond
        dag:
          tasks:
          - name: A
            template: echo
            arguments:
              parameters: [{name: message, value: A}]
          - name: B
            dependencies: [A]
            template: echo
            arguments:
              parameters: [{name: message, value: B}]
          - name: C
            dependencies: [A]
            template: echo
            arguments:
              parameters: [{name: message, value: C}]
          - name: D
            dependencies: [B, C]
            template: echo
            arguments:
              parameters: [{name: message, value: D}]
      - name: echo
        inputs:
          parameters:
          - name: message
        container:
          image: alpine:3.7
          command: [echo, "{{inputs.parameters.message}}"]

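This is a diamond-shaped DAG workflow:

Stage 1: A runs.

Stage 2: B and C run in parallel.

Stage 3: D is triggered only after both B and C have succeeded. (Note: argo currently supports only AND semantics for dependencies, not OR. If D should be triggered as soon as either B or C finishes, that requires custom development.)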
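The three stages follow directly from the AND rule: a task becomes runnable only once every dependency has finished. The Go sketch below groups tasks into such stages. It is a simplified model for illustration, not the controller's actual algorithm, and the function name `stages` is hypothetical.

```go
package main

import (
	"fmt"
	"sort"
)

// stages groups DAG tasks into waves. A task joins a wave only after every
// one of its dependencies has finished in an earlier wave (logical AND).
func stages(deps map[string][]string) [][]string {
	done := make(map[string]bool)
	var result [][]string
	for len(done) < len(deps) {
		var wave []string
		for task, parents := range deps {
			if done[task] {
				continue
			}
			ready := true
			for _, p := range parents {
				if !done[p] {
					ready = false
					break
				}
			}
			if ready {
				wave = append(wave, task)
			}
		}
		if len(wave) == 0 {
			break // remaining tasks can never become ready (cycle or unknown dependency)
		}
		sort.Strings(wave)
		// Mark the wave as finished only after it has been fully collected,
		// so tasks in the same wave never depend on each other.
		for _, task := range wave {
			done[task] = true
		}
		result = append(result, wave)
	}
	return result
}

func main() {
	diamond := map[string][]string{
		"A": nil,
		"B": {"A"},
		"C": {"A"},
		"D": {"B", "C"},
	}
	fmt.Println(stages(diamond)) // [[A] [B C] [D]]
}
```

Supporting OR would mean changing the inner readiness check so that one finished parent is enough, which is the kind of custom development mentioned above.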

Workflow data structure

The data structure behind the Workflow manifest is defined at https://github.com/argoproj/argo/blob/release-2.3/pkg/apis/workflow/v1alpha1/types.go#L58

Note: all paths below are relative to the 2.3 release.

    type Workflow struct {
        metav1.TypeMeta   `json:",inline"`
        metav1.ObjectMeta `json:"metadata"`
        Spec              WorkflowSpec   `json:"spec"`
        Status            WorkflowStatus `json:"status"`
    }

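This is argo's top-level data structure. TypeMeta and ObjectMeta use the standard structures, Spec is the data the user fills in, and Status is the data the workflow controller updates. Thanks to k8s openapi, it is a very elegant design.

Next is WorkflowSpec. Only the more important fields are shown here; otherwise it would take up too much space.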

    // WorkflowSpec is the specification of a Workflow.
    type WorkflowSpec struct {
        // Templates is a list of workflow templates used in a workflow
        Templates []Template `json:"templates"`
        // Entrypoint is a template reference to the starting point of the workflow
        Entrypoint string `json:"entrypoint"`
        // Arguments contain the parameters and artifacts sent to the workflow entrypoint
        // Parameters are referencable globally using the 'workflow' variable prefix.
        // e.g. {{workflow.parameters.myparam}}
        Arguments Arguments `json:"arguments,omitempty"`
        // ServiceAccountName is the name of the ServiceAccount to run all pods of the workflow as.
        ServiceAccountName string `json:"serviceAccountName,omitempty"`
        ...
    }

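Templates: defines the various templates. Because the design is declarative, the manifest gives direct, useful information when troubleshooting in production. After a workflow finishes, it is a good idea to persist it so that problems can be investigated later.

Entrypoint: the name of the template where the DAG starts.

Arguments: the parameters and artifacts passed to the workflow; the parameters are visible to all steps.

ImagePullSecrets: if the kubelet has no default registry credentials configured, set ImagePullSecrets so that docker images can be pulled.

ServiceAccountName: argo submit lets you specify a serviceaccount. If none is given, the default sa in the namespace is used. In practice the default sa is rarely used; a sa with higher privileges is supplied instead.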
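To see how these fields map onto a manifest, here is a small Go sketch that decodes the JSON form of a spec into simplified mirror structs and checks that the entrypoint names an existing template. The types below are hypothetical, trimmed-down copies of the fields quoted above (the real ones in types.go carry many more fields), and the service account name `workflow-runner` is made up for the example.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// Simplified, hypothetical mirrors of the fields quoted above. They are not
// the real argo types and exist only for this illustration.
type Parameter struct {
	Name  string `json:"name"`
	Value string `json:"value,omitempty"`
}

type Arguments struct {
	Parameters []Parameter `json:"parameters,omitempty"`
}

type Template struct {
	Name string `json:"name"`
}

type WorkflowSpec struct {
	Templates          []Template `json:"templates"`
	Entrypoint         string     `json:"entrypoint"`
	Arguments          Arguments  `json:"arguments,omitempty"`
	ServiceAccountName string     `json:"serviceAccountName,omitempty"`
}

// parseSpec decodes the JSON form of a manifest's spec section.
func parseSpec(data []byte) (WorkflowSpec, error) {
	var spec WorkflowSpec
	err := json.Unmarshal(data, &spec)
	return spec, err
}

// entrypointExists reports whether spec.Entrypoint names one of spec.Templates.
func entrypointExists(spec WorkflowSpec) bool {
	for _, t := range spec.Templates {
		if t.Name == spec.Entrypoint {
			return true
		}
	}
	return false
}

func main() {
	raw := []byte(`{
		"entrypoint": "diamond",
		"serviceAccountName": "workflow-runner",
		"arguments": {"parameters": [{"name": "message", "value": "hello"}]},
		"templates": [{"name": "diamond"}, {"name": "echo"}]
	}`)
	spec, err := parseSpec(raw)
	if err != nil {
		panic(err)
	}
	fmt.Println(spec.Entrypoint, entrypointExists(spec), spec.ServiceAccountName)
}
```

Reading the struct tags this way is usually the fastest route to knowing which keys a manifest accepts and which are optional (`omitempty`).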

Next, let's look at Template.

    // Template is a reusable and composable unit of execution in a workflow
    type Template struct {
        // Name is the name of the template
        Name string `json:"name"`
        // Inputs describe what inputs parameters and artifacts are supplied to this template
        Inputs Inputs `json:"inputs,omitempty"`
        // Outputs describe the parameters and artifacts that this template produces
        Outputs Outputs `json:"outputs,omitempty"`
        // NodeSelector is a selector to schedule this step of the workflow to be
        // run on the selected node(s). Overrides the selector set at the workflow level.
        NodeSelector map[string]string `json:"nodeSelector,omitempty"`
        // Affinity sets the pod's scheduling constraints
        // Overrides the affinity set at the workflow level (if any)
        Affinity *apiv1.Affinity `json:"affinity,omitempty"`
        // Metdata sets the pods's metadata, i.e. annotations and labels
        Metadata Metadata `json:"metadata,omitempty"`
        // Deamon will allow a workflow to proceed to the next step so long as the container reaches readiness
        Daemon *bool `json:"daemon,omitempty"`
        // Steps define a series of sequential/parallel workflow steps
        Steps [][]WorkflowStep `json:"steps,omitempty"`
        // Container is the main container image to run in the pod
        Container *apiv1.Container `json:"container,omitempty"`
        // Script runs a portion of code against an interpreter
        Script *ScriptTemplate `json:"script,omitempty"`
        // Resource template subtype which can run k8s resources
        Resource *ResourceTemplate `json:"resource,omitempty"`
        // DAG template subtype which runs a DAG
        DAG *DAGTemplate `json:"dag,omitempty"`
        ...
    }

Worth mentioning is the Container field, which uses the k8s API structure. If you need to set ImagePullPolicy, this is where to do it.
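The Template struct offers several alternative bodies (steps, container, script, resource, dag). The sketch below shows one way to think about them, under the assumption that a template should declare exactly one body. The types and the `bodyKind` helper are hypothetical stand-ins written for this article, not argo's own validation code.

```go
package main

import "fmt"

// Hypothetical, trimmed-down stand-ins for the real types. Only the fields
// needed for this illustration are kept.
type container struct {
	Image           string
	ImagePullPolicy string
}

type template struct {
	Name      string
	Steps     [][]string // step names only, for brevity
	Container *container
	Script    *string
	Resource  *string
	DAG       *[]string
}

// bodyKind reports which body a template declares. It returns an error
// unless exactly one of steps/container/script/resource/dag is set.
func bodyKind(t template) (string, error) {
	var kinds []string
	if len(t.Steps) > 0 {
		kinds = append(kinds, "steps")
	}
	if t.Container != nil {
		kinds = append(kinds, "container")
	}
	if t.Script != nil {
		kinds = append(kinds, "script")
	}
	if t.Resource != nil {
		kinds = append(kinds, "resource")
	}
	if t.DAG != nil {
		kinds = append(kinds, "dag")
	}
	if len(kinds) != 1 {
		return "", fmt.Errorf("template %q declares %d bodies, want exactly 1", t.Name, len(kinds))
	}
	return kinds[0], nil
}

func main() {
	echo := template{
		Name:      "echo",
		Container: &container{Image: "alpine:3.7", ImagePullPolicy: "Always"},
	}
	kind, err := bodyKind(echo)
	fmt.Println(kind, err) // container <nil>
}
```

Pointer fields with `omitempty` are what make this pattern work in the real struct: an unset body is simply nil and disappears from the serialized manifest.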

Artifact support -S3

This example relies on the global artifact configuration. To run it, the artifact repository credentials must be configured in the controller configmap.

github.com/argoproj/argo/examples/artifact-passing.yaml

    # This example demonstrates the ability to pass artifacts
    # from one step to the next.
    apiVersion: argoproj.io/v1alpha1
    kind: Workflow
    metadata:
      generateName: artifact-passing-
    spec:
      entrypoint: artifact-example
      templates:
      - name: artifact-example
        steps:
        - - name: generate-artifact
            template: whalesay
        - - name: consume-artifact
            template: print-message
            arguments:
              artifacts:
              - name: message
                from: "{{steps.generate-artifact.outputs.artifacts.hello-art}}"
      - name: whalesay
        container:
          image: docker/whalesay:latest
          command: [sh, -c]
          args: ["sleep 1; cowsay hello world | tee /tmp/hello_world.txt"]
        outputs:
          artifacts:
          - name: hello-art
            path: /tmp/hello_world.txt
      - name: print-message
        inputs:
          artifacts:
          - name: message
            path: /tmp/message
        container:
          image: alpine:latest
          command: [sh, -c]
          args: ["cat /tmp/message"]

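Stage 1: the generate-artifact step runs and creates a file

cowsay hello world | tee /tmp/hello_world.txt

Stage 2: the consume-artifact step (template print-message) takes the file created in stage 1 and cats it

cat /tmp/message

This is a simple example of passing an artifact. To see how it is implemented, read the section "argo's scheduling unit: the pod".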

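Architecture

The design is fairly clean and simple: two Informers, each watching a different resource. The interaction between a pod and its wf (workflow) relies mainly on the two containers that run argoexec, which are covered in detail below.

The three argo images used in my local test environment: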

argoproj/workflow-controller v2.3.0 d0f63f453544 8 months ago 37.5 MB

argoproj/argoexec v2.3.0 c5ceebf7886f 8 months ago 286 MB

argoproj/argoui v2.3.0 64f7e1f854a6 10 months ago 183 MB

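The ui image is of limited use. It is fine to try out, but it probably won't be used in production, because teams generally don't adopt a third-party ui like this; it depends on the team's front-end stack.

So there are really two images that matter:

- argoexec: the image run by the init and wait containers of each pod

- workflow-controller: watches the CRD

Deploying argo is fairly simple: few images are involved, and the code is easy to trace.

Pros

- Provides artifact storage; artifacts currently support s3, hdfs, and other backends

- Provides an argo client, so building on top of it is relatively easy

Cons

- Scheduling is done per pod, which gets awkward when training large models (dozens of nodes)

argo's scheduling unit: the pod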

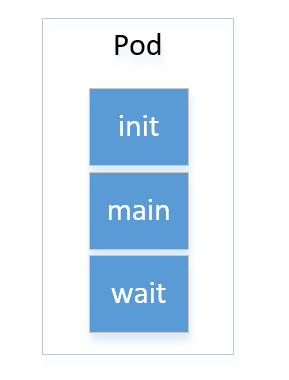

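Each step of a workflow corresponds to one pod. The Template supplied by the user contains everything needed to start a container.

Passing parameters between steps is fairly simple: they are injected with gotpl when the pod is created. Injecting files is a bit harder. Either tooling in the form of an sdk is baked in when the image is built, or the files are copied in dynamically. argo copies files in dynamically.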
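As a rough picture of parameter injection, the Go sketch below replaces `{{inputs.parameters.NAME}}` placeholders in a command line before the container spec is built. It uses a plain regular expression and is only an approximation for illustration; the `substitute` helper is hypothetical, and argo's real template handling covers many more variable kinds.

```go
package main

import (
	"fmt"
	"regexp"
)

// placeholder matches references such as {{inputs.parameters.message}}.
var placeholder = regexp.MustCompile(`\{\{\s*inputs\.parameters\.([A-Za-z0-9_-]+)\s*\}\}`)

// substitute replaces each known placeholder in s with its value from params.
// Unknown placeholders are left untouched so that the problem stays visible.
func substitute(s string, params map[string]string) string {
	return placeholder.ReplaceAllStringFunc(s, func(m string) string {
		name := placeholder.FindStringSubmatch(m)[1]
		if v, ok := params[name]; ok {
			return v
		}
		return m
	})
}

func main() {
	command := []string{"echo", "{{inputs.parameters.message}}"}
	params := map[string]string{"message": "A"}
	for i, arg := range command {
		command[i] = substitute(arg, params)
	}
	fmt.Println(command) // [echo A]
}
```

Because the values are known at pod creation time, no extra machinery is needed inside the container, which is why parameters are the easy case and files are the hard one.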

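An algorithm usually has inputs as well as outputs. argo's approach is to add two helper containers to the pod, an init container and a wait sidecar, both running the argoexec image. The argoexec entry point is at argoproj/argo/cmd/argoexec/main.go:16

With these two helper containers, handling input and output files becomes fairly simple: a Volume is visible to every container in the pod, so it only has to be mounted at the right paths (details below). This keeps the user's container as untouched as possible. The pod ends up with three containers.

main container: the container where the business logic actually runs

Now let's look at what the two argo helper containers do.

init container:

Its main job is to prepare the artifacts.

The entry point is at github.com/argoproj/argo/cmd/argoexec/commands/init.go:34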

    func loadArtifacts() error {
        wfExecutor := initExecutor()
        defer wfExecutor.HandleError()
        defer stats.LogStats()
        // Download input artifacts
        err := wfExecutor.StageFiles()
        if err != nil {
            wfExecutor.AddError(err)
            return err
        }
        err = wfExecutor.LoadArtifacts()
        if err != nil {
            wfExecutor.AddError(err)
            return err
        }
        return nil
    }

StageFiles fetches the script/resource. This belongs to another core argo feature; trace the code if you are interested.

LoadArtifacts downloads the input artifacts. It is implemented at

github.com/argoproj/argo/workflow/executor/executor.go:124

    // LoadArtifacts loads artifacts from location to a container path
    func (we *WorkflowExecutor) LoadArtifacts() error {
        log.Infof("Start loading input artifacts...")
        for _, art := range we.Template.Inputs.Artifacts {
            log.Infof("Downloading artifact: %s", art.Name)
            if !art.HasLocation() {
                if art.Optional {
                    log.Warnf("Ignoring optional artifact '%s' which was not supplied", art.Name)
                    continue
                } else {
                    return errors.New("required artifact %s not supplied", art.Name)
                }
            }
            artDriver, err := we.InitDriver(art)
            if err != nil {
                return err
            }
            // Determine the file path of where to load the artifact
            if art.Path == "" {
                return errors.InternalErrorf("Artifact %s did not specify a path", art.Name)
            }
            var artPath string
            mnt := common.FindOverlappingVolume(&we.Template, art.Path)
            if mnt == nil {
                artPath = path.Join(common.ExecutorArtifactBaseDir, art.Name)
            } else {
                // If we get here, it means the input artifact path overlaps with an user specified
                // volumeMount in the container. Because we also implement input artifacts as volume
                // mounts, we need to load the artifact into the user specified volume mount,
                // as opposed to the `input-artifacts` volume that is an implementation detail
                // unbeknownst to the user.
                log.Infof("Specified artifact path %s overlaps with volume mount at %s. Extracting to volume mount", art.Path, mnt.MountPath)
                artPath = path.Join(common.ExecutorMainFilesystemDir, art.Path)
            }
            // The artifact is downloaded to a temporary location, after which we determine if
            // the file is a tarball or not. If it is, it is first extracted then renamed to
            // the desired location. If not, it is simply renamed to the location.
            tempArtPath := artPath + ".tmp"
            err = artDriver.Load(&art, tempArtPath)
            if err != nil {
                return err
            }
            if isTarball(tempArtPath) {
                err = untar(tempArtPath, artPath)
                _ = os.Remove(tempArtPath)
            } else {
                err = os.Rename(tempArtPath, artPath)
            }
            if err != nil {
                return err
            }
            log.Infof("Successfully download file: %s", artPath)
            if art.Mode != nil {
                err = os.Chmod(artPath, os.FileMode(*art.Mode))
                if err != nil {
                    return errors.InternalWrapError(err)
                }
            }
        }
        return nil
    }

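InitDriver: initializes the artifact driver; s3, hdfs, git, http, and others are currently supported.

Once the files have been downloaded, how do they reach the container? Take the artifact-passing example again. It has two steps, and therefore two pods. The second step consumes the output artifact of the first and also has the usual wait container, so it is enough to look at the second step; the first is similar.

This is the output of argo submit with --watch: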

STEP PODNAME DURATION MESSAGE

✔ artifact-passing-bgdjp

├---✔ generate-artifact artifact-passing-bgdjp-2525271161 9s

└---✔ consume-artifact artifact-passing-bgdjp-2453013743 16s

Running describe on artifact-passing-bgdjp-2453013743 gives:

Name: artifact-passing-bgdjp-2453013743

Namespace: argo

Priority: 0

Node: c-pc/192.168.52.128

Start Time: Sat, 15 Feb 2020 23:42:57 +0800

Labels: workflows.argoproj.io/completed=true

workflows.argoproj.io/workflow=artifact-passing-bgdjp

Annotations: cni.projectcalico.org/podIP: 100.64.201.217/32

workflows.argoproj.io/node-name: artifact-passing-bgdjp[1].consume-artifact

workflows.argoproj.io/template:

{"name":"print-message","inputs":{"artifacts":[{"name":"message","path":"/tmp/message","s3":{"endpoint":"argo-artifacts-minio.default:9000...

Status: Succeeded

IP: 100.64.201.217

IPs:

IP: 100.64.201.217

Controlled By: Workflow/artifact-passing-bgdjp

Init Containers:

init:

Container ID: docker://b646cd069376014db11b36bc14ff3b5a3ad95be1f0c7b974e3892f002915ce21

Image: argoproj/argoexec:v2.3.0

Image ID: docker-pullable://argoproj/argoexec@sha256:85132fc2c8bc373fca885df17637d5d35682a23de8d1390668a5e1c149f2f187

Port: <none>

Host Port: <none>

Command:

argoexec

init

State: Terminated

Reason: Completed

Exit Code: 0

Started: Sat, 15 Feb 2020 23:42:58 +0800

Finished: Sat, 15 Feb 2020 23:42:58 +0800

Ready: True

Restart Count: 0

Environment:

ARGO_POD_NAME: artifact-passing-bgdjp-2453013743 (v1:metadata.name)

Mounts:

/argo/inputs/artifacts from input-artifacts (rw)

/argo/podmetadata from podmetadata (rw)

/argo/secret/argo-artifacts-minio from argo-artifacts-minio (ro)

/var/run/secrets/kubernetes.io/serviceaccount from argo-admin-account-token-xbr9t (ro)

Containers:

wait:

Container ID: docker://d3b40cf3b2ee407e5508d1d528ca853e59cc09f42bcb20562a121b3343f29665

Image: argoproj/argoexec:v2.3.0

Image ID: docker-pullable://argoproj/argoexec@sha256:85132fc2c8bc373fca885df17637d5d35682a23de8d1390668a5e1c149f2f187

Port: <none>

Host Port: <none>

Command:

argoexec

wait

State: Terminated

Reason: Completed

Exit Code: 0

Started: Sat, 15 Feb 2020 23:42:59 +0800

Finished: Sat, 15 Feb 2020 23:43:13 +0800

Ready: False

Restart Count: 0

Environment:

ARGO_POD_NAME: artifact-passing-bgdjp-2453013743 (v1:metadata.name)

Mounts:

/argo/podmetadata from podmetadata (rw)

/argo/secret/argo-artifacts-minio from argo-artifacts-minio (ro)

/mainctrfs/tmp/message from input-artifacts (rw,path="message")

/var/run/docker.sock from docker-sock (ro)

/var/run/secrets/kubernetes.io/serviceaccount from argo-admin-account-token-xbr9t (ro)

main:

Container ID: docker://a2feca1462a0336c5501ce2a5e1e55486d8bf35e4da4a661442081788c848d4c

Image: alpine:latest

Image ID: docker-pullable://alpine@sha256:ab00606a42621fb68f2ed6ad3c88be54397f981a7b70a79db3d1172b11c4367d

Port: <none>

Host Port: <none>

Command:

sh

-c

Args:

cat /tmp/message

State: Terminated

Reason: Completed

Exit Code: 0

Started: Sat, 15 Feb 2020 23:43:13 +0800

Finished: Sat, 15 Feb 2020 23:43:13 +0800

Ready: False

Restart Count: 0

Environment: <none>

Mounts:

/tmp/message from input-artifacts (rw,path="message")

/var/run/secrets/kubernetes.io/serviceaccount from argo-admin-account-token-xbr9t (ro)

Conditions:

Type Status

Initialized True

Ready False

ContainersReady False

PodScheduled True

Volumes:

podmetadata:

Type: DownwardAPI (a volume populated by information about the pod)

Items:

metadata.annotations -> annotations

docker-sock:

Type: HostPath (bare host directory volume)

Path: /var/run/docker.sock

HostPathType: Socket

argo-artifacts-minio:

Type: Secret (a volume populated by a Secret)

SecretName: argo-artifacts-minio

Optional: false

input-artifacts:

Type: EmptyDir (a temporary directory that shares a pod's lifetime)

Medium:

SizeLimit: <unset>

argo-admin-account-token-xbr9t:

Type: Secret (a volume populated by a Secret)

SecretName: argo-admin-account-token-xbr9t

Optional: false

QoS Class: BestEffort

Node-Selectors: <none>

Tolerations: node.kubernetes.io/not-ready:NoExecute for 300s

node.kubernetes.io/unreachable:NoExecute for 300s

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled <unknown> default-scheduler Successfully assigned argo/artifact-passing-bgdjp-2453013743 to c-pc

Normal Pulled 18h kubelet, c-pc Container image "argoproj/argoexec:v2.3.0" already present on machine

Normal Created 18h kubelet, c-pc Created container init

Normal Started 18h kubelet, c-pc Started container init

Normal Pulled 18h kubelet, c-pc Container image "argoproj/argoexec:v2.3.0" already present on machine

Normal Created 18h kubelet, c-pc Created container wait

Normal Started 18h kubelet, c-pc Started container wait

Normal Pulling 18h kubelet, c-pc Pulling image "alpine:latest"

Normal Pulled 18h kubelet, c-pc Successfully pulled image "alpine:latest"

Normal Created 18h kubelet, c-pc Created container main

Normal Started 18h kubelet, c-pc Started container main

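With real pod information in hand, the pod design is easy to see. There are three containers in total:

Init Containers: a single init container

Containers: two containers, main and wait

First, the pod's volumes

Volumes:

podmetadata: the pod's metadata, used to associate the pod with its step

docker-sock: mounts the host's docker socket into the container, a setup often loosely called docker in docker (see the docker documentation for this usage). In brief, docker consists of a server and a client. Only the client side is mapped into the container; the server is still the host's docker server (dockerd). With the host's docker socket mounted, the container can perform precise operations on other containers.

argo-artifacts-minio: the minio credentials secret created earlier, containing the access key and secret key

input-artifacts: holds the first step's output, which is the second step's input

argo-admin-account-token-xbr9t: the k8s token. wait needs to talk to the api server, so the token has to be mounted.

Let's take a closer look at input-artifacts: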

input-artifacts:

Type: EmptyDir (a temporary directory that shares a pod's lifetime)

Medium:

SizeLimit: <unset>

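Because it is an EmptyDir, its path on the host doesn't matter; the directory has the same lifetime as the pod.

The same volume can be mounted at a different path in each container. From the describe output, the mount paths are: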

init: /argo/inputs/artifacts

main: /tmp/message

wait: /mainctrfs/tmp/message

With this in mind, the LoadArtifacts code is mostly self-explanatory:

artPath = path.Join(common.ExecutorArtifactBaseDir, art.Name)

The constant ExecutorArtifactBaseDir has the value /argo/inputs/artifacts
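The path decision inside LoadArtifacts can be condensed into a few lines. The Go sketch below is a simplified re-implementation written for this article: `overlaps` is a hypothetical stand-in for common.FindOverlappingVolume that only compares path prefixes, and the value `/mainctrfs` for the main filesystem directory is an assumption inferred from the wait container's mount path in the describe output.

```go
package main

import (
	"fmt"
	"path"
	"strings"
)

const (
	artifactBaseDir   = "/argo/inputs/artifacts" // value quoted above for ExecutorArtifactBaseDir
	mainFilesystemDir = "/mainctrfs"             // assumption, inferred from the wait container's mounts
)

// overlaps reports whether artifactPath equals mountPath or lies beneath it.
func overlaps(mountPath, artifactPath string) bool {
	mountPath = path.Clean(mountPath)
	artifactPath = path.Clean(artifactPath)
	if mountPath == "/" {
		return true
	}
	return artifactPath == mountPath || strings.HasPrefix(artifactPath, mountPath+"/")
}

// stagingPath picks where an input artifact is written before the main
// container starts. userMounts lists the mount paths the user declared.
func stagingPath(name, artifactPath string, userMounts []string) string {
	for _, m := range userMounts {
		if overlaps(m, artifactPath) {
			// The artifact must land inside the user's own volume.
			return path.Join(mainFilesystemDir, artifactPath)
		}
	}
	// Default case: write into the shared input-artifacts volume.
	return path.Join(artifactBaseDir, name)
}

func main() {
	fmt.Println(stagingPath("message", "/tmp/message", nil))
	// /argo/inputs/artifacts/message
	fmt.Println(stagingPath("message", "/data/in/message", []string{"/data"}))
	// /mainctrfs/data/in/message
}
```

In the artifact-passing pod there is no user volume, so the first case applies: init writes to /argo/inputs/artifacts/message, and the main container sees the same file at /tmp/message through the shared volume.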

wait container:

On one hand it watches for the main process to finish so that the workflow can move on; on the other, it saves the output artifacts.

TODO

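Some practical experience

- To protect k8s resources and security, the namespace install is enough when deploying argo. Besides, the k8s administrator is unlikely to give you cluster scope permissions anyway.

- By default, the s3 artifact credentials can be configured in the global configmap, which means a single tenant's bucket is used. To isolate the artifacts of different workflows, the credentials have to be specified in the workflow itself. Since the workflow template then carries sensitive information, pay attention to security.

- When you first start using argo, remember that ImagePullPolicy can be set explicitly. Otherwise the default IfNotPresent policy applies, and you may find that your updated image does not take effect.

- If your algorithm scripts need a large number of pods running in parallel, argo may not be a good fit, and you would have to fall back on an in-house workflow engine. As said at the beginning, argo suits small workflows best.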
